Machine learning -- PyTorch (1)

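Before I started on this, I didn't understand any of it. I felt pretty hopeless, and since I hadn't written a line of code while home for the winter break, it felt like I had forgotten everything.

I spent three days, on and off, learning PyTorch and finished the lab assignment. Hahaha, I'm the best.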

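Basic tutorials

Bilibili: Morvan Python (莫烦python), PyTorch series (莫烦-pytorch)

Zhihu: 深度炼丹 ("deep-learning alchemy"), PyTorch tutorial

The code I worked through below comes from the Zhihu tutorial.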

Code example 1

```python
import torch
import numpy as np

# torch.Tensor(5, 4) allocates a 5x4 tensor WITHOUT initializing it,
# so the values are whatever happened to be in memory
a = torch.Tensor(5, 4)
# print(a)
# tensor([[1.3634e+10, 4.5915e-41, 0.0000e+00, 0.0000e+00],
#         [1.1785e-42, 0.0000e+00, 1.3092e+01, 8.3658e-43],
#         [1.7265e+10, 4.5915e-41, 1.7265e+10, 4.5915e-41],
#         [1.3092e+01, 8.3658e-43, 1.7265e+10, 4.5915e-41],
#         [0.0000e+00, 0.0000e+00, 4.3440e-44, 0.0000e+00]])

# torch.rand fills the tensor with uniform random numbers in [0, 1)
b = torch.rand(5, 4)
# print(b)
# tensor([[0.2416, 0.9073, 0.3216, 0.4597],
#         [0.6102, 0.7182, 0.2020, 0.2389],
#         [0.2832, 0.3412, 0.1779, 0.5283],
#         [0.2052, 0.5030, 0.2734, 0.1610],
#         [0.8396, 0.0220, 0.4063, 0.7871]])

print(b.size())

c = np.ones((5, 4))
print(c)

# PyTorch tensor -> NumPy array (for a CPU tensor, d shares memory with b)
d = b.numpy()
print(d)

# NumPy array -> PyTorch tensor (f shares memory with e)
e = np.array([[3, 4], [3, 6]])
f = torch.from_numpy(e)
print(f)

# True if a CUDA-capable GPU can be used
print(torch.cuda.is_available())

# requires_grad: whether to compute gradients for this tensor (default False).
# The old Variable wrapper has been deprecated since PyTorch 0.4; a plain
# tensor created with requires_grad=True does the same job.
x = torch.tensor([3.0], requires_grad=True)
y = torch.tensor([5.0], requires_grad=True)
z = 2 * x + y + 4

# Differentiate z with respect to x and y
z.backward()

# Print dz/dx and dz/dy
print('dz/dx: {}'.format(x.grad))
print('dz/dy: {}'.format(y.grad))
```
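`z.backward()` stores dz/dx and dz/dy in `x.grad` and `y.grad`. For z = 2x + y + 4 these partial derivatives are the constants 2 and 1, which is what the run output shows. A central finite-difference check is a quick, framework-free way to confirm what autograd should return. The sketch below is plain Python, and `numerical_grad` is a hypothetical helper written for illustration, not a PyTorch function.

```python
def z_fn(x, y):
    # the same function as in the autograd example: z = 2x + y + 4
    return 2 * x + y + 4


def numerical_grad(f, x, y, h=1e-6):
    # central differences: (f(v + h) - f(v - h)) / (2h), one input at a time
    dz_dx = (f(x + h, y) - f(x - h, y)) / (2 * h)
    dz_dy = (f(x, y + h) - f(x, y - h)) / (2 * h)
    return dz_dx, dz_dy


# evaluate at the same point as the PyTorch example, x = 3 and y = 5
dz_dx, dz_dy = numerical_grad(z_fn, 3.0, 5.0)
print('dz/dx ~ {:.4f}, dz/dy ~ {:.4f}'.format(dz_dx, dz_dy))
```

The same check works for nonlinear functions, where the gradient depends on the point: with z = x**2, the estimate at x = 3 comes out close to 6.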

# (pytorch2) C:\Users\lenovo>python E:\1desktop\now\2019春季-安全系统实验\实验3\练习1.py

# torch.Size([5, 4])

# [[ 1. 1. 1. 1.]

# [ 1. 1. 1. 1.]

# [ 1. 1. 1. 1.]

# [ 1. 1. 1. 1.]

# [ 1. 1. 1. 1.]]

# [[ 0.51665479 0.12178713 0.29267973 0.86160815]

# [ 0.44409192 0.03003657 0.43051815 0.76561362]

# [ 0.56820017 0.67228419 0.69501537 0.99156016]

# [ 0.58648318 0.63945204 0.79995954 0.95069563]

# [ 0.8768664 0.05610359 0.17375535 0.31167436]]

# tensor([[3, 4],

# [3, 6]], dtype=torch.int32)

# False

# dz/dx: tensor([2.])

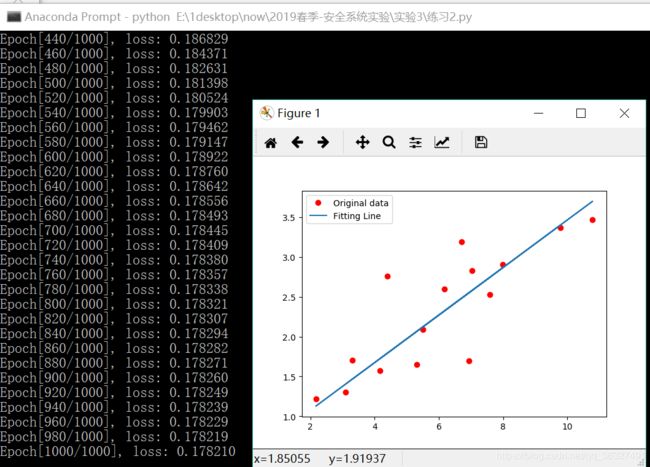

# dz/dy: tensor([1.])代码实例2---线性回归

```python
import torch
from torch import nn, optim
import numpy as np
import matplotlib.pyplot as plt

x_train = np.array([[3.3], [4.4], [5.5], [6.71], [6.93], [4.168],
                    [9.779], [6.182], [7.59], [2.167], [7.042],
                    [10.791], [5.313], [7.997], [3.1]], dtype=np.float32)

y_train = np.array([[1.7], [2.76], [2.09], [3.19], [1.694], [1.573],
                    [3.366], [2.596], [2.53], [1.221], [2.827],
                    [3.465], [1.65], [2.904], [1.3]], dtype=np.float32)

# Convert the NumPy arrays to tensors
x_train = torch.from_numpy(x_train)
y_train = torch.from_numpy(y_train)


# Linear Regression Model
class LinearRegression(nn.Module):
    def __init__(self):
        super(LinearRegression, self).__init__()
        # nn.Linear computes y = w*x + b. Both arguments are 1: the input x
        # is 1-dimensional and so is the output y. Change them to whatever
        # input/output sizes you need; anyone who has used another framework
        # will find this familiar.
        self.linear = nn.Linear(1, 1)

    def forward(self, x):
        out = self.linear(x)
        return out


model = LinearRegression()

# Define the loss and the optimizer
criterion = nn.MSELoss()
optimizer = optim.SGD(model.parameters(), lr=1e-4)

# Start training
num_epochs = 1000
for epoch in range(num_epochs):
    # Every step uses all 15 points; wrapping them in Variable is no longer needed
    inputs = x_train
    target = y_train

    # forward
    out = model(inputs)
    loss = criterion(out, target)

    # backward
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

    if (epoch + 1) % 20 == 0:
        print('Epoch[{}/{}], loss: {:.6f}'
              .format(epoch + 1, num_epochs, loss.item()))

# Test the model
model.eval()
with torch.no_grad():  # no gradients are needed for prediction
    predict = model(x_train)
predict = predict.numpy()

plt.plot(x_train.numpy(), y_train.numpy(), 'ro', label='Original data')
plt.plot(x_train.numpy(), predict, label='Fitting Line')
# Show the legend
plt.legend()
plt.show()

# Save the model's parameters (weight and bias)
torch.save(model.state_dict(), './linear.pth')
```
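For this one-feature model, the training loop can be reproduced by hand, which makes `loss.backward()` and `optimizer.step()` less magical. With the default reduction='mean', MSELoss is L = (1/N) * sum((w*x + b - y)^2), so dL/dw = (2/N) * sum((w*x + b - y) * x) and dL/db = (2/N) * sum(w*x + b - y); SGD without momentum subtracts lr times each gradient, and because every step uses all 15 points it is really full-batch gradient descent. Since this is ordinary least squares, the minimizer also has a closed form, w = cov(x, y) / var(x) and b = mean(y) - w * mean(x), a useful reference for the line the model is moving toward. The sketch below is plain Python on the same data; the helper names and the starting point w = b = 0 are assumptions for illustration (nn.Linear starts from random values), and with lr=1e-4 and 1000 epochs the gradient-descent result may still be noticeably different from the closed-form one.

```python
# The same 15 training points as in the PyTorch example
xs = [3.3, 4.4, 5.5, 6.71, 6.93, 4.168, 9.779, 6.182, 7.59, 2.167,
      7.042, 10.791, 5.313, 7.997, 3.1]
ys = [1.7, 2.76, 2.09, 3.19, 1.694, 1.573, 3.366, 2.596, 2.53, 1.221,
      2.827, 3.465, 1.65, 2.904, 1.3]


def mse(w, b):
    # mean squared error, as nn.MSELoss() computes with reduction='mean'
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def gd_step(w, b, lr):
    # analytic gradients of the mean squared error w.r.t. w and b
    n = len(xs)
    residuals = [w * x + b - y for x, y in zip(xs, ys)]
    grad_w = 2.0 / n * sum(r * x for r, x in zip(residuals, xs))
    grad_b = 2.0 / n * sum(residuals)
    # plain gradient-descent update (no momentum) on the full batch
    return w - lr * grad_w, b - lr * grad_b


def least_squares_fit():
    # closed-form ordinary least squares for y = w*x + b
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    w = cov_xy / var_x
    return w, mean_y - w * mean_x


w, b = 0.0, 0.0  # hypothetical start; nn.Linear initializes randomly
for epoch in range(1000):
    w, b = gd_step(w, b, lr=1e-4)

w_ls, b_ls = least_squares_fit()
print('gradient descent: w = {:.4f}, b = {:.4f}, loss = {:.6f}'.format(w, b, mse(w, b)))
print('closed form:      w = {:.4f}, b = {:.4f}, loss = {:.6f}'.format(w_ls, b_ls, mse(w_ls, b_ls)))
```

After training the PyTorch model, `model.linear.weight.item()` and `model.linear.bias.item()` give its learned w and b for comparison.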

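Code example 3: logistic regression, training on the MNIST handwritten digits

Pasting the output of every run is too much hassle, so I'll skip it; the code does run, though.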
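A quick plain-Python check of how the batch_size = 32 used in the script below splits MNIST's 60,000 training and 10,000 test images, and how often its `if i % 300 == 0` progress line fires. It assumes DataLoader's default drop_last=False, which keeps a smaller final batch.

```python
import math

batch_size = 32
n_train, n_test = 60000, 10000  # sizes of the MNIST training and test sets

# DataLoader keeps an incomplete final batch by default (drop_last=False)
train_batches = math.ceil(n_train / batch_size)
test_batches = math.ceil(n_test / batch_size)
last_test_batch = n_test - (test_batches - 1) * batch_size

# enumerate(train_loader, 1) numbers batches from 1, and progress is
# printed whenever i % 300 == 0
progress_prints = train_batches // 300

print(train_batches, test_batches, last_test_batch, progress_prints)
# prints: 1875 313 16 6
```

Because 60,000 is a multiple of 32, every training batch is full, so dividing `running_loss` by `batch_size * i` in the progress line is exact; only the test loader ends with a 16-image batch.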

```python
import time

import torch
from torch import nn, optim
from torch.utils.data import DataLoader
from torchvision import transforms
from torchvision import datasets

# Hyperparameters
batch_size = 32
learning_rate = 1e-3
num_epoches = 100

# Download the MNIST handwritten-digit training set (and load the test set)
train_dataset = datasets.MNIST(
    root='./data', train=True, transform=transforms.ToTensor(), download=True)
test_dataset = datasets.MNIST(
    root='./data', train=False, transform=transforms.ToTensor())

train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)


# Define the logistic regression model
class Logstic_Regression(nn.Module):
    def __init__(self, in_dim, n_class):
        super(Logstic_Regression, self).__init__()
        self.logstic = nn.Linear(in_dim, n_class)

    def forward(self, x):
        # Return raw class scores (logits); nn.CrossEntropyLoss applies
        # log-softmax itself, so no softmax is needed here
        out = self.logstic(x)
        return out


model = Logstic_Regression(28 * 28, 10)  # images are 28x28; 10 digit classes
use_gpu = torch.cuda.is_available()  # use GPU acceleration if available
if use_gpu:
    model = model.cuda()

# Define the loss and the optimizer
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=learning_rate)

# Train for num_epoches (100) epochs
for epoch in range(num_epoches):
    print('*' * 10)  # separator
    print('epoch {}'.format(epoch + 1))
    since = time.time()
    running_loss = 0.0
    running_acc = 0.0
    model.train()  # eval() is set at the end of every epoch, so switch back
    for i, data in enumerate(train_loader, 1):
        img, label = data
        # Flatten each 1x28x28 image into a 784-dim vector for nn.Linear
        img = img.view(img.size(0), -1)
        if use_gpu:
            img = img.cuda()
            label = label.cuda()

        # Forward pass
        out = model(img)
        loss = criterion(out, label)
        running_loss += loss.item() * label.size(0)
        _, pred = torch.max(out, 1)
        num_correct = (pred == label).sum()
        running_acc += num_correct.item()

        # Backward pass
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        if i % 300 == 0:
            print('[{}/{}] Loss: {:.6f}, Acc: {:.6f}'.format(
                epoch + 1, num_epoches, running_loss / (batch_size * i),
                running_acc / (batch_size * i)))

    print('Finish {} epoch, Loss: {:.6f}, Acc: {:.6f}'.format(
        epoch + 1, running_loss / len(train_dataset),
        running_acc / len(train_dataset)))

    # Evaluate on the test set. Variable(..., volatile=True) no longer has
    # any effect; torch.no_grad() is what turns off gradient tracking now.
    model.eval()
    eval_loss = 0.
    eval_acc = 0.
    with torch.no_grad():
        for data in test_loader:
            img, label = data
            img = img.view(img.size(0), -1)
            if use_gpu:
                img = img.cuda()
                label = label.cuda()
            out = model(img)
            loss = criterion(out, label)
            eval_loss += loss.item() * label.size(0)
            _, pred = torch.max(out, 1)
            num_correct = (pred == label).sum()
            eval_acc += num_correct.item()
    print('Test Loss: {:.6f}, Acc: {:.6f}'.format(
        eval_loss / len(test_dataset), eval_acc / len(test_dataset)))
    print('Time:{:.1f} s'.format(time.time() - since))
    print()

# Save the model
torch.save(model.state_dict(), './logstic.pth')
```
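The model's forward() returns raw scores (logits) with no softmax because nn.CrossEntropyLoss applies log-softmax itself and then takes the negative log-likelihood of the true class: for logits z and label c, loss = -(z_c - log(sum_j exp(z_j))), averaged over the batch by default. Below is a plain-Python sketch of that formula; the function names are illustrative, and subtracting the largest logit before exponentiating is the usual numerical-stability trick.

```python
import math


def cross_entropy(logits, target):
    # log-softmax of the target class, computed stably:
    # z_c - log(sum_j exp(z_j)) with the max logit factored out
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return -(logits[target] - log_sum_exp)


def batch_cross_entropy(batch_logits, targets):
    # mean over the batch, like the default reduction='mean'
    losses = [cross_entropy(z, t) for z, t in zip(batch_logits, targets)]
    return sum(losses) / len(losses)


# equal scores for all 10 classes: loss is log(10), about 2.3026
print(cross_entropy([0.0] * 10, 3))
# a confident, correct prediction: loss close to 0
print(cross_entropy([10.0] + [0.0] * 9, 0))
```

With all ten logits equal the loss is exactly log(10) ≈ 2.30, so a freshly initialized model, whose logits start out small, usually reports a first Loss in that neighborhood.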