An introductory framework practice project: scraping Douban short comments on 《我不是药神》 (Dying to Survive) with Scrapy + MongoDB

First, here is the resulting word cloud:

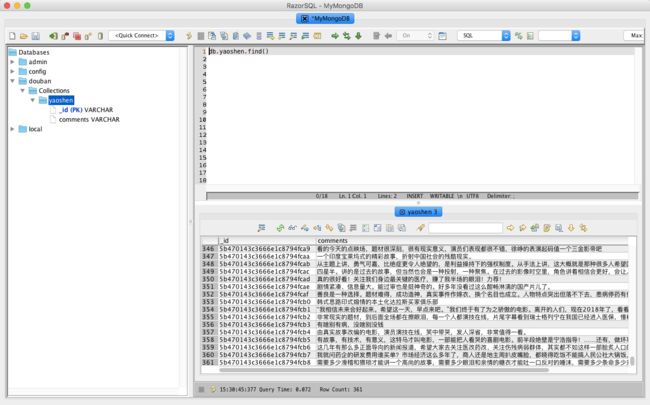

Screenshot of the data stored in the database:

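Douban's short-comment pages are fairly simple and contain no dynamically loaded content, so we go straight to the code below. One thing to note: the first few pages of short comments can be viewed without logging in, but the later pages require a login, so we need to add our own cookie to the requests.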

Project code

Items.py

    import scrapy


    class DbmoivesItem(scrapy.Item):
        comments = scrapy.Field()  # Only the comment text is scraped, so one field is enough

Spider.py

    # -*- coding: utf-8 -*-
    import scrapy
    from lxml import etree
    from scrapy import Request

    from ..items import DbmoivesItem


    class DbspiderSpider(scrapy.Spider):
        '''
        我不是药神 (Dying to Survive)
        '''
        name = 'DBspider'
        allowed_domains = ['douban.com']
        start_urls = ['http://douban.com/']

        def start_requests(self):
            templateurl = 'https://movie.douban.com/subject/26752088/comments?start={}&limit=20&sort=new_score&status=P'
            # Build the paginated URLs; each page holds 20 comments
            for i in range(9091):
                url = templateurl.format(str(i * 20))
                yield Request(url=url, callback=self.parse)

        # Parse the fetched page and extract the content we need
        def parse(self, response):
            selector = etree.HTML(response.text)
            item = DbmoivesItem()
            item['comments'] = selector.xpath('//div[@class="comment"]/p/text()')
            yield item
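To make the pagination in `start_requests` easier to see, here is a minimal standalone sketch of how the `start` offset grows by 20 per page. The helper name `build_comment_urls` and its `pages` and `page_size` parameters are hypothetical and exist only for illustration; the URL template is the one used in the spider.

```python
# URL template taken from the spider; {} is filled with the comment offset.
TEMPLATE_URL = (
    "https://movie.douban.com/subject/26752088/comments"
    "?start={}&limit=20&sort=new_score&status=P"
)


def build_comment_urls(pages, page_size=20):
    """Hypothetical helper: return one URL per page.

    Page i starts at comment offset i * page_size.
    """
    return [TEMPLATE_URL.format(i * page_size) for i in range(pages)]


urls = build_comment_urls(3)
for url in urls:
    print(url)
```

Running this for a handful of pages before launching the full crawl is a cheap way to check that the offsets look right.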

Pipelines.py

    import pymongo


    class MongoPipeline(object):
        collection = 'yaoshen'  # Collection that holds the 《我不是药神》 comments

        # Store the database connection settings
        def __init__(self, mongo_url, mongo_db):
            self.mongo_url = mongo_url
            self.mongo_db = mongo_db

        # Read the database configuration from settings.py
        @classmethod
        def from_crawler(cls, crawler):
            return cls(
                mongo_url=crawler.settings.get('MONGO_URL'),
                mongo_db=crawler.settings.get('MONGO_DB')
            )

        # Connect to the database when the spider starts
        def open_spider(self, spider):
            self.client = pymongo.MongoClient(self.mongo_url)
            self.db = self.client[self.mongo_db]

        # Close the database connection when the spider stops
        def close_spider(self, spider):
            self.client.close()

        # Write the scraped comments to the database
        def process_item(self, item, spider):
            table = self.db[self.collection]
            for com in item['comments']:
                data = dict()
                data['comments'] = com.strip().replace('\n', '')
                table.insert_one(data)
            return item
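The cleanup step inside `process_item` can be tried out without a running MongoDB. The sketch below mirrors the pipeline's `strip().replace('\n', '')` logic in plain functions. The names `clean_comment` and `to_documents` are hypothetical, and the `skip_empty` option is an assumption added here: the original pipeline inserts every string, including ones that are empty after cleaning.

```python
def clean_comment(raw):
    """Mirror the pipeline: trim outer whitespace, then drop embedded newlines."""
    return raw.strip().replace("\n", "")


def to_documents(comments, skip_empty=True):
    """Hypothetical helper: turn raw comment strings into MongoDB-style dicts.

    skip_empty is an addition for illustration; the original pipeline
    does not filter out strings that are empty after cleaning.
    """
    docs = []
    for raw in comments:
        text = clean_comment(raw)
        if skip_empty and not text:
            continue
        docs.append({"comments": text})
    return docs


docs = to_documents(["  good\nmovie \n", "   "])
print(docs)
```

Each resulting dict has the same shape as the `data` dict that the pipeline passes to `insert_one`.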

Middlewares.py

    import random

    from scrapy.downloadermiddlewares.useragent import UserAgentMiddleware


    class MyUseragentMiddleware(UserAgentMiddleware):
        '''
        Set the User-Agent
        '''
        def __init__(self, user_agent):
            self.user_agent = user_agent

        # Read the User-Agent list from settings.py
        @classmethod
        def from_crawler(cls, crawler):
            return cls(
                user_agent=crawler.settings.get('USER_AGENTS')
            )

        # Pick a random User-Agent for each request so the crawler is less likely to be banned
        def process_request(self, request, spider):
            agent = random.choice(self.user_agent)
            request.headers['User-Agent'] = agent
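The rotation performed in `process_request` is just `random.choice` over a list of strings. A minimal sketch outside Scrapy, assuming a shortened two-entry list and a hypothetical helper named `pick_user_agent`, with a plain dict standing in for `request.headers`:

```python
import random

# Shortened list for illustration; the real list lives in settings.py.
USER_AGENTS = [
    "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:16.0) Gecko/20100101 Firefox/16.0",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",
]


def pick_user_agent(agents, rng=random):
    """Hypothetical helper: return one randomly chosen User-Agent string."""
    return rng.choice(agents)


# A plain dict stands in for request.headers here.
headers = {}
headers["User-Agent"] = pick_user_agent(USER_AGENTS)
print(headers["User-Agent"])
```

Passing a seeded `random.Random` instance as `rng` makes the choice reproducible, which can help when debugging.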

Settings.py

    BOT_NAME = 'DBmoives'

    SPIDER_MODULES = ['DBmoives.spiders']
    NEWSPIDER_MODULE = 'DBmoives.spiders'

    # Do not obey robots.txt
    ROBOTSTXT_OBEY = False

    # Delay between requests, in seconds
    DOWNLOAD_DELAY = 3

    # Database configuration
    MONGO_URL = 'mongodb://localhost:27017'
    MONGO_DB = 'douban'

    # Disable Scrapy's built-in cookie handling
    COOKIES_ENABLED = False

    DEFAULT_REQUEST_HEADERS = {
        'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,image/apng,*/*;q=0.8',
        'Accept-Language': 'zh-CN,zh;q=0.9',
        'Cookie': "your own cookies"
    }

    # Enable the downloader middleware
    DOWNLOADER_MIDDLEWARES = {
        'DBmoives.middlewares.MyUseragentMiddleware': 400,
    }

    # Enable the item pipeline
    ITEM_PIPELINES = {
        'DBmoives.pipelines.MongoPipeline': 300,
    }

    # User-Agent pool
    USER_AGENTS = [
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0; Acoo Browser; SLCC1; .NET CLR 2.0.50727; Media Center PC 5.0; .NET CLR 3.0.04506)",
        "Mozilla/4.0 (compatible; MSIE 7.0; AOL 9.5; AOLBuild 4337.35; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",
        "Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)",
        "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",
        "Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",
        "Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",
        "Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",
        "Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",
        "Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",
        "Mozilla/5.0 (X11; U; Linux i686; en-US; rv:1.9.0.8) Gecko Fedora/1.9.0.8-1.fc10 Kazehakase/0.5.6",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",
        "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/535.20 (KHTML, like Gecko) Chrome/19.0.1036.7 Safari/535.20",
        "Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.11 TaoBrowser/2.0 Safari/536.11",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.71 Safari/537.1 LBBROWSER",
        "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; LBBROWSER)",
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E; LBBROWSER)",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.84 Safari/535.11 LBBROWSER",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",
        "Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; QQBrowser/7.0.3698.400)",
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; Trident/4.0; SV1; QQDownload 732; .NET4.0C; .NET4.0E; 360SE)",
        "Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",
        "Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",
        "Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",
        "Mozilla/5.0 (iPad; U; CPU OS 4_2_1 like Mac OS X; zh-cn) AppleWebKit/533.17.9 (KHTML, like Gecko) Version/5.0.2 Mobile/8C148 Safari/6533.18.5",
        "Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:2.0b13pre) Gecko/20110307 Firefox/4.0b13pre",
        "Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:16.0) Gecko/20100101 Firefox/16.0",
        "Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.11 (KHTML, like Gecko) Chrome/23.0.1271.64 Safari/537.11",
        "Mozilla/5.0 (X11; U; Linux x86_64; zh-CN; rv:1.9.2.10) Gecko/20100922 Ubuntu/10.10 (maverick) Firefox/3.6.10",
        "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",
    ]

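Note:

Set COOKIES_ENABLED to False. You may find it odd that we use a cookie yet have to set this to False. The reason is that we put the cookie directly in the request headers, while Scrapy by default has its own middleware for handling cookies. When the setting is True, the two interfere with each other and the request fails; you can try this yourself. What if you insist on setting it to True: is there no way around it? There is, but we will not go into that today; it can be explained separately later.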
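For readers who do want to keep COOKIES_ENABLED set to True, one possible route (not necessarily the one the author has in mind) is to hand Scrapy the cookies as a dict through the `cookies` argument of `Request`, so that its cookie middleware manages them instead of fighting a raw `Cookie` header. The sketch below only covers turning a cookie string copied from the browser into such a dict. The function name `parse_cookie_header` is hypothetical, and the cookie names and values are placeholders, not real Douban cookies.

```python
def parse_cookie_header(raw):
    """Hypothetical helper: split 'k1=v1; k2=v2' into {'k1': 'v1', 'k2': 'v2'}.

    Only the first '=' in each pair separates name from value, so values
    that themselves contain '=' are kept intact.
    """
    cookies = {}
    for pair in raw.split(";"):
        name, sep, value = pair.strip().partition("=")
        if sep and name:
            cookies[name] = value
    return cookies


# Placeholder cookie string; replace it with the one copied from your own browser.
cookies = parse_cookie_header("name_a=abc123; name_b=xyz=; name_c=42")
print(cookies)
```

In the spider, the dict would then be passed as `Request(url=url, cookies=cookies, callback=self.parse)`, and the `Cookie` entry would be dropped from DEFAULT_REQUEST_HEADERS.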

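We scrape the comment data here so that it can be used for later analysis.

You can download the code above and the corresponding comment data from GitHub.

GitHub repository: https://github.com/cnkai/comment.git

Disclaimer: this article is for learning and discussion purposes only.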

Reference: http://www.cnblogs.com/cnkai/p/7418330.html
