Notes on an Exception Raised While Configuring Canal to Monitor a Database

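Both Canal and MySQL are installed in Docker containers.

Problem to solve: after Canal was set up to monitor MySQL, it did not pick up any of the operations performed on the database.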

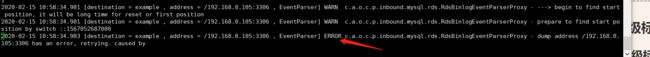

I suspected that Canal was misconfigured, and its log did show an exception:

2020-02-15 10:58:24.506 [destination = example , address = /192.168.0.105:3306 , EventParser] ERROR com.alibaba.otter.canal.common.alarm.LogAlarmHandler - destination:example[com.alibaba.otter.canal.parse.exception.CanalParseException: command : 'show master status' has an error! pls check. you need (at least one of) the SUPER,REPLICATION CLIENT privilege(s) for this operation]

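Before this, I had already set up MySQL and Canal's two configuration files.

I could not resolve the problem on my own, so I read many articles online. They all said that the username and password Canal uses had not been set up properly in MySQL. The user I had created has both its username and its password set to canal: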

*************************** 5. row ***************************

host: %

user: canal

select_priv: Y

insert_priv: Y

update_priv: Y

delete_priv: Y

5 rows in set (0.00 sec)
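The excerpt above lists only the basic DML privileges for canal, while the error message asks for SUPER or REPLICATION CLIENT. Canal's QuickStart grants SELECT, REPLICATION SLAVE and REPLICATION CLIENT to its account. As a minimal sketch, the helper below takes one row of mysql.user as a map, reports which of the corresponding columns are not Y, and builds the matching GRANT statement. The class and method names are hypothetical (they are not part of Canal), and the column names Select_priv, Repl_slave_priv and Repl_client_priv are assumed from the mysql.user table.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical helper, not part of Canal: checks a mysql.user row for the privileges Canal needs. */
final class CanalPrivilegeCheck {

    // mysql.user columns behind SELECT, REPLICATION SLAVE and REPLICATION CLIENT (assumed names).
    static final List<String> REQUIRED =
            List.of("select_priv", "repl_slave_priv", "repl_client_priv");

    /** Returns the required privilege columns that are absent or not set to 'Y'. */
    static List<String> missing(Map<String, String> userRow) {
        // Column names are compared case-insensitively (Select_priv vs select_priv).
        Map<String, String> row = new TreeMap<>(String.CASE_INSENSITIVE_ORDER);
        row.putAll(userRow);
        List<String> result = new ArrayList<>();
        for (String column : REQUIRED) {
            if (!"Y".equalsIgnoreCase(row.get(column))) {
                result.add(column);
            }
        }
        return result;
    }

    /** Builds the GRANT statement to be run by an administrative MySQL user. */
    static String grantStatement(String user, String host) {
        return String.format(
                "GRANT SELECT, REPLICATION SLAVE, REPLICATION CLIENT ON *.* TO '%s'@'%s'",
                user, host);
    }

    public static void main(String[] args) {
        // Only the columns visible in the excerpt; the replication columns are not shown there.
        Map<String, String> row = Map.of(
                "select_priv", "Y", "insert_priv", "Y",
                "update_priv", "Y", "delete_priv", "Y");
        System.out.println("missing: " + missing(row));
        System.out.println(grantStatement("canal", "%"));
    }
}
```

If the list of missing columns is not empty, running the generated statement followed by FLUSH PRIVILEGES as an administrative user should give the canal account what `show master status` requires.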

I also edited the MySQL configuration file to turn on the binary log:

[mysqld]

skip-grant-tables

[mysqld]

log-bin=mysql-bin # enable the binary log

binlog-format=ROW # use the ROW binlog format

server_id=1 # required for MySQL replication; must not be the same as canal's slaveId

Remember to restart MySQL after changing the configuration: docker restart mysql
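To double-check the [mysqld] settings above before restarting, a small validator can help. The following is only a sketch under stated assumptions: the class name and messages are hypothetical, the parser is deliberately simplified (it ignores quoting and include directives), and the slaveId passed in is whatever value Canal ends up using.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical helper: checks my.cnf text for the binlog settings Canal relies on. */
final class BinlogConfigCheck {

    /** Parses "key=value" lines, skipping section headers and stripping '#' comments. */
    static Map<String, String> parse(String cnf) {
        Map<String, String> options = new LinkedHashMap<>();
        for (String raw : cnf.split("\\R")) {
            int hash = raw.indexOf('#');
            String line = (hash >= 0 ? raw.substring(0, hash) : raw).trim();
            if (line.isEmpty() || line.startsWith("[")) {
                continue;
            }
            int eq = line.indexOf('=');
            String key = (eq >= 0 ? line.substring(0, eq) : line).trim();
            String value = eq >= 0 ? line.substring(eq + 1).trim() : "";
            // MySQL accepts '-' and '_' interchangeably in option names.
            options.put(key.replace('-', '_'), value);
        }
        return options;
    }

    /** Returns a human-readable list of problems; empty when the settings look fine. */
    static List<String> problems(Map<String, String> options, long canalSlaveId) {
        List<String> problems = new ArrayList<>();
        if (!options.containsKey("log_bin")) {
            problems.add("log-bin is not set");
        }
        if (!"ROW".equalsIgnoreCase(options.get("binlog_format"))) {
            problems.add("binlog-format is not ROW");
        }
        String serverId = options.get("server_id");
        if (serverId == null) {
            problems.add("server_id is not set");
        } else if (Long.parseLong(serverId) == canalSlaveId) {
            problems.add("server_id equals canal's slaveId");
        }
        return problems;
    }

    public static void main(String[] args) {
        String cnf = "[mysqld]\nlog-bin=mysql-bin\nbinlog-format=ROW\nserver_id=1\n";
        // 1234 is an arbitrary example slaveId, not a value taken from this setup.
        System.out.println(problems(parse(cnf), 1234));
    }
}
```

The same check could be made against a live server by comparing the output of SHOW VARIABLES for log_bin, binlog_format and server_id.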

Next, check Canal's configuration:

canal.properties

[root@f3ff70f9ae8d canal-server]# vi conf/canal.properties

#################################################

######### common argument #############

#################################################

#canal.manager.jdbc.url=jdbc:mysql://127.0.0.1:3306/canal_manager?useUnicode=true&characterEncoding=UTF-8

#canal.manager.jdbc.username=root

#canal.manager.jdbc.password=121212

canal.id = 1001

canal.ip =

canal.port = 11111

canal.metrics.pull.port = 11112

canal.zkServers =

# flush data to zk

canal.zookeeper.flush.period = 1000

canal.withoutNetty = false

# tcp, kafka, RocketMQ

canal.serverMode = tcp

# flush meta cursor/parse position to file

canal.file.data.dir = ${canal.conf.dir}

canal.file.flush.period = 1000

## memory store RingBuffer size, should be Math.pow(2,n)

canal.instance.memory.buffer.size = 16384

## memory store RingBuffer used memory unit size , default 1kb

canal.instance.memory.buffer.memunit = 1024

## meory store gets mode used MEMSIZE or ITEMSIZE

canal.instance.memory.batch.mode = MEMSIZE

canal.instance.memory.rawEntry = true

## detecing config

canal.instance.detecting.enable = false

#canal.instance.detecting.sql = insert into retl.xdual values(1,now()) on duplicate key update x=now()

canal.instance.detecting.sql = select 1

canal.instance.detecting.interval.time = 3

canal.instance.detecting.retry.threshold = 3

canal.instance.detecting.heartbeatHaEnable = false

# support maximum transaction size, more than the size of the transaction will be cut into multiple transactions delivery

canal.instance.transaction.size = 1024

# mysql fallback connected to new master should fallback times

canal.instance.fallbackIntervalInSeconds = 60

# network config

canal.instance.network.receiveBufferSize = 16384

canal.instance.network.sendBufferSize = 16384

canal.instance.network.soTimeout = 30

# binlog filter config

canal.instance.filter.druid.ddl = true

canal.instance.filter.query.dcl = false

canal.instance.filter.query.dml = false

canal.instance.filter.query.ddl = false

canal.instance.filter.table.error = false

canal.instance.filter.rows = false

canal.instance.filter.transaction.entry = false

# binlog format/image check

canal.instance.binlog.format = ROW,STATEMENT,MIXED

canal.instance.binlog.image = FULL,MINIMAL,NOBLOB

# binlog ddl isolation

canal.instance.get.ddl.isolation = false

# parallel parser config

canal.instance.parser.parallel = true

## concurrent thread number, default 60% available processors, suggest not to exceed Runtime.getRuntime().availableProcessors()

#canal.instance.parser.parallelThreadSize = 16

## disruptor ringbuffer size, must be power of 2

canal.instance.parser.parallelBufferSize = 256

# table meta tsdb info

canal.instance.tsdb.enable = true

canal.instance.tsdb.dir = ${canal.file.data.dir:../conf}/${canal.instance.destination:}

canal.instance.tsdb.url = jdbc:h2:${canal.instance.tsdb.dir}/h2;CACHE_SIZE=1000;MODE=MYSQL;

canal.instance.tsdb.dbUsername = canal

canal.instance.tsdb.dbPassword = canal

# dump snapshot interval, default 24 hour

canal.instance.tsdb.snapshot.interval = 24

# purge snapshot expire , default 360 hour(15 days)

canal.instance.tsdb.snapshot.expire = 360

# aliyun ak/sk , support rds/mq

canal.aliyun.accessKey =

canal.aliyun.secretKey =

#################################################

######### destinations #############

#################################################

Configuration of the instance.properties file:

canal.instance.dbUsername=root

canal.instance.dbPassword=123456

The username and password above were canal at first; in the end I changed them to the account I had originally created.

[root@f3ff70f9ae8d canal-server]# vi conf/example/instance.properties

#################################################

## mysql serverId , v1.0.26+ will autoGen

canal.instance.mysql.slaveId=0

# enable gtid use true/false

canal.instance.gtidon=false

# position info

canal.instance.master.address=192.168.0.105:3306

canal.instance.master.journal.name=

canal.instance.master.position=

canal.instance.master.timestamp=

canal.instance.master.gtid=

# rds oss binlog

canal.instance.rds.accesskey=

canal.instance.rds.secretkey=

canal.instance.rds.instanceId=

# table meta tsdb info

canal.instance.tsdb.enable=true

#canal.instance.tsdb.url=jdbc:mysql://192.168.0.105:3306/canal_tsdb

#canal.instance.tsdb.dbUsername=canal

#canal.instance.tsdb.dbPassword=canal

#canal.instance.standby.address =

#canal.instance.standby.journal.name =

#canal.instance.standby.position =

#canal.instance.standby.timestamp =

#canal.instance.standby.gtid=

# username/password

canal.instance.dbUsername=root

canal.instance.dbPassword=123456

canal.instance.connectionCharset = UTF-8

# enable druid Decrypt database password

canal.instance.enableDruid=false

#canal.instance.pwdPublicKey=MFwwDQYJKoZIhvcNAQEBBQADSwAwSAJBALK4BUxdDltRRE5/zXpVEVPUgunvscYFtEip3pmLlhrWpacX7y7GCMo2/JM6LeHmiiNdH1FWgGCpUfircSwlWKUCAwEAAQ==

# table regex: .* matches every database, \\..* matches every table

# monitor all tables in all databases

canal.instance.filter.regex=.*\\..*

# table black regex

canal.instance.filter.black.regex=

# mq config: for external listeners, the instance name must be specified

canal.mq.topic=example

# dynamic topic route by schema or table regex

#canal.mq.dynamicTopic=mytest1.user,mytest2\\..*,.*\\..*

canal.mq.partition=0

# hash partition config

#canal.mq.partitionsNum=3

#canal.mq.partitionHash=test.table:id^name,.*\\..*

#################################################
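The doubled backslash in canal.instance.filter.regex is easy to misread: the properties format consumes one level of escaping, so the pattern that remains is `.*\..*`, that is, any schema name, a literal dot, then any table name. The sketch below illustrates this with java.util.Properties and java.util.regex only. It is an illustration of the escaping, not Canal's own filter implementation, which also accepts several comma-separated patterns. The class name and the table name shop.tb_order are made up for the example.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.Properties;
import java.util.regex.Pattern;

/** Hypothetical demo of how the filter regex is unescaped and what it matches. */
final class FilterRegexDemo {

    /** Loads properties text and returns the unescaped value stored under key. */
    static String load(String propertiesText, String key) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(propertiesText));
        } catch (IOException e) {
            // Not expected for an in-memory reader.
            throw new IllegalStateException(e);
        }
        return props.getProperty(key);
    }

    /** Returns true when the whole "schema.table" name matches the pattern. */
    static boolean matches(String regex, String schemaDotTable) {
        return Pattern.matches(regex, schemaDotTable);
    }

    public static void main(String[] args) {
        // The file contains two backslashes; in Java source they are written as four.
        String regex = load("canal.instance.filter.regex=.*\\\\..*",
                "canal.instance.filter.regex");
        System.out.println("effective pattern: " + regex);
        System.out.println("shop.tb_order matches: " + matches(regex, "shop.tb_order"));
    }
}
```

Narrowing the filter to a single schema follows the same rule, for example `shop\\..*` in the properties file for every table of a schema named shop.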

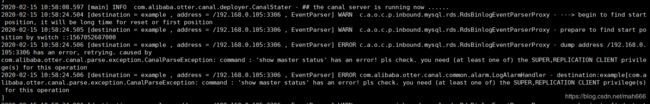

Finally, restart Canal.

Stop it first: bin/stop.sh

Then start it again: bin/startup.sh

View the log: tail -f logs/example/example.log

2020-02-15 10:58:34.901 [destination = example , address = /192.168.0.105:3306 , EventParser] WARN c.a.o.c.p.inbound.mysql.rds.RdsBinlogEventParserProxy - ---> begin to find start position, it will be long time for reset or first position

2020-02-15 10:58:34.901 [destination = example , address = /192.168.0.105:3306 , EventParser] WARN c.a.o.c.p.inbound.mysql.rds.RdsBinlogEventParserProxy - prepare to find start position by switch ::1567052687000

2020-02-15 10:58:34.903 [destination = example , address = /192.168.0.105:3306 , EventParser] ERROR c.a.o.c.p.inbound.mysql.rds.RdsBinlogEventParserProxy - dump address /192.168.0.105:3306 has an error, retrying. caused by

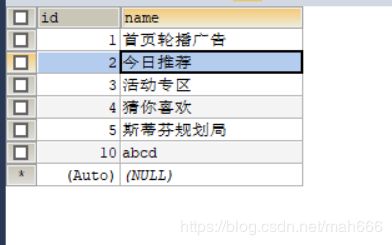

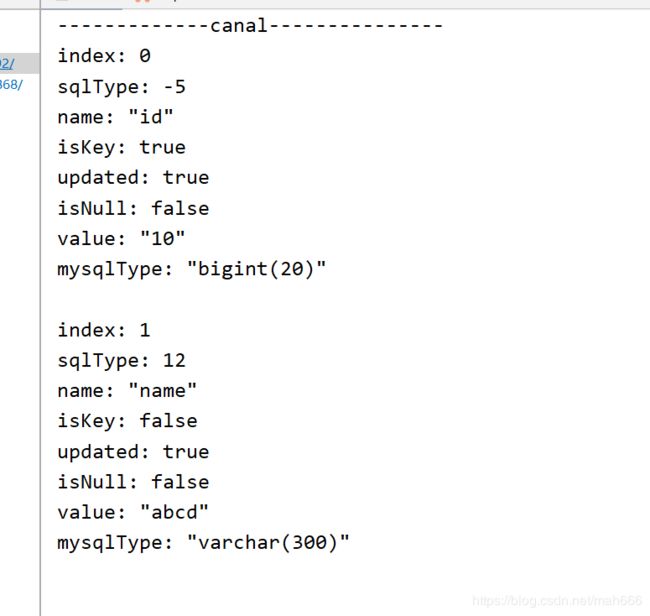

Now add a record to the database and check what is printed to the console:

For reference, here is the listener code:

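import java.util.List;

import com.alibaba.otter.canal.protocol.CanalEntry;
// @CanalEventListener and @InsertListenPoint come from the Canal Spring Boot starter
// in use; import them from that starter's annotation package.

/**
 * @Author MH
 * @Date 2020/2/14 21:25
 * Listens for MySQL data changes.
 */
@CanalEventListener
public class CanalDataEventListener {

    /**
     * Called when a row is inserted (@InsertListenPoint listens for inserts).
     *
     * @param eventType type of the current operation, for example an insert
     * @param rowData   the changed row; for an insert only the data after the change
     *                  exists, available through rowData.getAfterColumnsList()
     */
    @InsertListenPoint
    public void onEventInsert(CanalEntry.EventType eventType, CanalEntry.RowData rowData) {
        System.out.println("-------------canal---------------");
        // Typed list instead of a raw List: each element is one column of the new row.
        List<CanalEntry.Column> afterColumnsList = rowData.getAfterColumnsList();
        afterColumnsList.forEach(System.out::println);
    }
}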