PyTorch Implementation: Logistic Regression with Loss and Accuracy Visualization

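import matplotlib.pyplot as plt
import numpy as np
import torch
from torch import nn
from torch.autograd import Variable  # deprecated since PyTorch 0.4: tensors support autograd directly
import visdom

# Connect to the Visdom server and create one line window each for loss and accuracy.
# Each window starts with a single placeholder point at 0.
viz = visdom.Visdom(env='train')
loss_win = viz.line(Y=np.zeros(1))
acc_win = viz.line(Y=np.zeros(1))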

# Read the data from data.txt (each line holds three comma-separated values: two features and a label)
with open('data.txt', 'r') as f:
    data_list = f.readlines()

data_list = [i.split('\n')[0] for i in data_list]
data_list = [i.split(',') for i in data_list]
data = [(float(i[0]), float(i[1]), float(i[2])) for i in data_list]
x_data = np.array([(float(i[0]), float(i[1])) for i in data_list])
y_data = np.array([[float(i[2])] for i in data_list])

# Split the samples by class label so each class can be plotted in its own color
x0 = list(filter(lambda x: x[-1] == 0.0, data))
x1 = list(filter(lambda x: x[-1] == 1.0, data))
plot_x0_0 = [i[0] for i in x0]
plot_x0_1 = [i[1] for i in x0]
plot_x1_0 = [i[0] for i in x1]
plot_x1_1 = [i[1] for i in x1]
#plt.plot(plot_x0_0, plot_x0_1, 'ro', label='x_0')
#plt.plot(plot_x1_0, plot_x1_1, 'bo', label='x_1')
#plt.show()

# Prepare the training data
x = torch.from_numpy(x_data).float()
#y = torch.from_numpy(y_data)
y = torch.FloatTensor(y_data)
#print(x.size())
#print(y)

# Define the model
class logisticRegression(nn.Module):
    def __init__(self):
        super().__init__()
        self.line = nn.Linear(2, 1)  # two input features, one output
        self.smd = nn.Sigmoid()      # maps the linear output to a probability

    def forward(self, x):
        x = self.line(x)
        return self.smd(x)

logistic_model = logisticRegression()
criterion = nn.BCELoss()
optimizer = torch.optim.SGD(logistic_model.parameters(), lr=1e-3)
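For reference, `nn.BCELoss` with its default mean reduction averages the per-sample binary cross-entropy. The sketch below reproduces that formula in plain Python. The function name `bce_loss` is illustrative and not part of the original script, and the sketch assumes every predicted probability lies strictly between 0 and 1.

```python
import math

def bce_loss(probs, targets):
    """Mean binary cross-entropy over paired probabilities and 0/1 targets.

    Assumes 0 < p < 1 for every probability (illustrative helper only).
    """
    total = 0.0
    for p, t in zip(probs, targets):
        # Per-sample loss: -[t * log(p) + (1 - t) * log(1 - p)]
        total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
    return total / len(probs)

# A confident, correct prediction yields a small loss; 0.5 everywhere yields log(2).
print(bce_loss([0.9, 0.2], [1.0, 0.0]))
print(bce_loss([0.5, 0.5], [1.0, 0.0]))
```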

for epoch in range(80000):

x = Variable(x)

y = Variable(y)

#print('****')

#print(x)

#==========forward=========

out = logistic_model(x)

loss = criterion(out,y)

print_loss = loss.item()

#判断输出结果大于0.5就等于1,小于0.5就等于0

#通过这个来计算模型分类的准确率

mask = out.ge(0.5).float()

correct = (mask == y).sum()

acc = correct.item()/x.size(0)

#==========backward========

optimizer.zero_grad()

loss.backward()

optimizer.step()

if(epoch + 1) % 100 == 0:

print('*'*10)

print('epoch{}'.format(epoch+1))

print('loss is {:.4f}'.format(print_loss))

print('acc is {:.4f}'.format(acc))

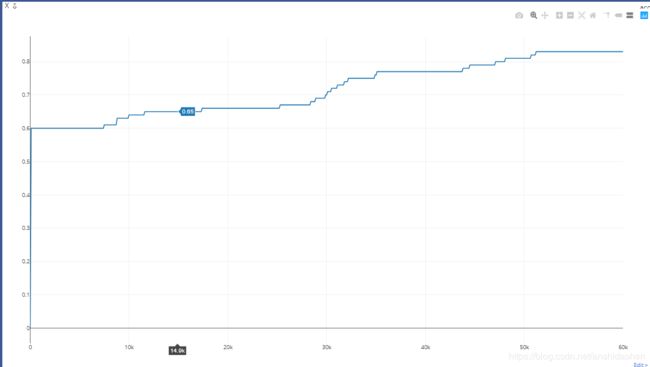

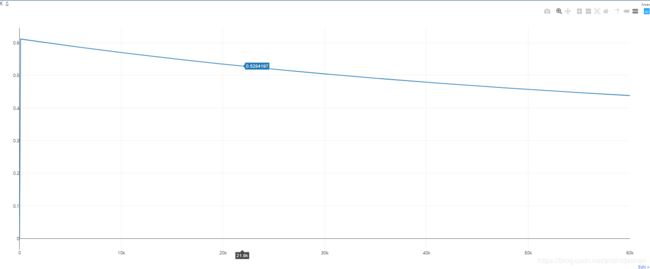

viz.line(Y=np.array([print_loss]), X=np.array([epoch+1]), update='append', opts={'title':'loss'}, win=loss_win)

viz.line(Y=np.array([acc]), X=np.array([epoch+1]), update='append', opts={'title':'acc'}, win=acc_win)
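The accuracy step in the training loop can be illustrated without tensors. Below is a minimal sketch in plain Python; the helper name `accuracy` is hypothetical and simply mirrors `out.ge(0.5)` followed by the comparison with `y`.

```python
def accuracy(probs, labels, threshold=0.5):
    """Fraction of samples whose thresholded probability matches the 0/1 label."""
    # Probabilities >= threshold become class 1.0, everything else class 0.0
    preds = [1.0 if p >= threshold else 0.0 for p in probs]
    correct = sum(1 for p, t in zip(preds, labels) if p == t)
    return correct / len(labels)

# Predictions become [1, 0, 1, 0]; three of the four match the labels.
print(accuracy([0.9, 0.4, 0.5, 0.1], [1.0, 1.0, 1.0, 0.0]))  # 0.75
```

Note that a probability of exactly 0.5 counts as class 1, matching the `ge` (greater than or equal) comparison in the loop.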

weight = logistic_model.line.weight.data[0]

w0, w1 = weight[0], weight[1]

b = logistic_model.line.bias.data[0]

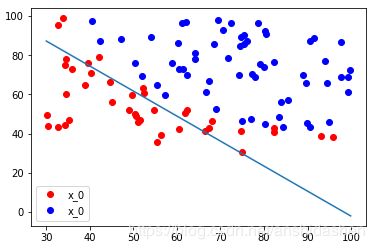

plt.plot(plot_x0_0,plot_x0_1,'ro',label='x_0')

plt.plot(plot_x1_0,plot_x1_1,'bo',label='x_0')

plt.legend(loc = 'best')

plot_x = torch.from_numpy(np.arange(30, 100, 0.1))

plot_y = (-w0 * plot_x - b) / w1

plt.plot(plot_x, plot_y)

plt.show()
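The plotted line is the set of points where the model outputs exactly 0.5, that is, where w0 * x0 + w1 * x1 + b = 0. Solving for x1 gives the expression used for `plot_y`. The sketch below checks this in plain Python; the helper names `predict_proba` and `boundary_x1` and the weight values are made up for illustration and are not the trained parameters.

```python
import math

def predict_proba(w0, w1, b, x0, x1):
    """Sigmoid of the linear score, as computed by the model's forward pass."""
    return 1.0 / (1.0 + math.exp(-(w0 * x0 + w1 * x1 + b)))

def boundary_x1(w0, w1, b, x0):
    """Second coordinate on the decision boundary for a given first coordinate."""
    return (-w0 * x0 - b) / w1

# Made-up parameters, chosen only so the arithmetic is easy to follow
w0, w1, b = 0.5, 0.25, -40.0
x0 = 50.0
x1 = boundary_x1(w0, w1, b, x0)

# A point on the boundary has a linear score of 0, so the sigmoid returns 0.5
print(x1, predict_proba(w0, w1, b, x0, x1))
```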

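This experiment was run with both 60,000 and 80,000 training iterations. The loss and acc plots above come from the 60,000-iteration run; after 80,000 iterations the effect is more visible in the plots.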