MHA High-Availability Configuration and Failover

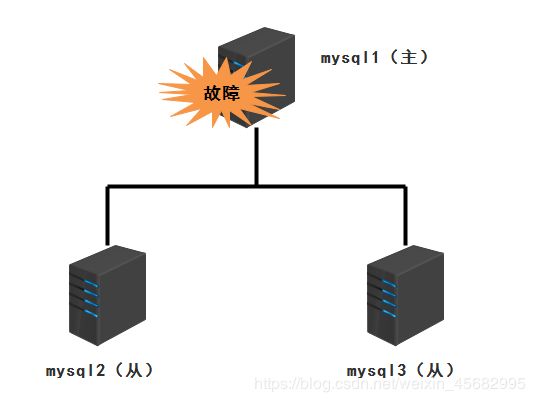

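Problems with the traditional MySQL master-slave architecture

Single point of failure

MHA

MHA overview

- A mature high-availability tool that handles failover and master promotion in MySQL high-availability environments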

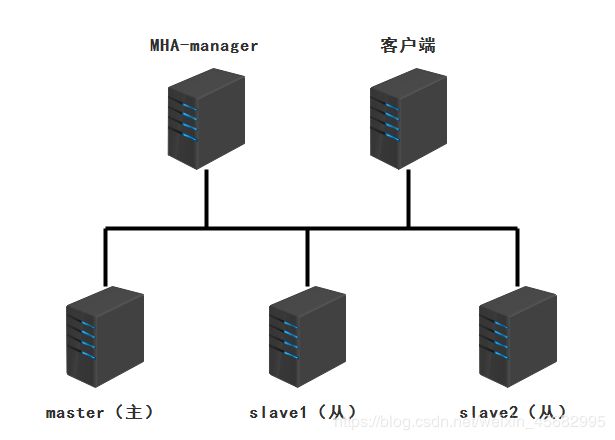

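MHA components

- MHA Manager (management node)

- MHA Node (data node)

MHA features

- During automatic failover, MHA tries to save the binary logs from the crashed master, which minimizes data loss

- Combined with the semi-synchronous replication available since MySQL 5.5, the risk of data loss drops considerably

How MHA works

- Save the binary log events from the crashed MySQL master

- Identify the slave that holds the most recent updates

- Apply the differential relay logs to the other slaves

- Apply the binary log events saved from the master

- Promote the slave designated as standby master to master

- Point the other slaves at the new master and resume replication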
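The "identify the slave that holds the most recent updates" step can be pictured as comparing how far each slave has read into the master's binlog. The sketch below is only an illustration of that idea, not MHA's own code; the function name `pick_latest_slave` and the three-column input format are assumptions made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of MHA): pick the slave that has read
# furthest into the master's binary log.
# Input on stdin, one line per slave:
#   <host> <Master_Log_File> <Read_Master_Log_Pos>
pick_latest_slave() {
  # Sort by binlog file name, then numerically by position; the last line
  # is the most advanced slave. Assumes fixed-width numeric suffixes such
  # as master-bin.000002, so a plain string sort orders the files correctly.
  LC_ALL=C sort -k2,2 -k3,3n | tail -n 1 | awk '{print $1}'
}
```

The two values per slave would come from the `Master_Log_File` and `Read_Master_Log_Pos` fields of `show slave status\G` on each slave.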

Case implementation

| Host name | IP address |
|---|---|
| MHA-manager | 192.168.150.181 |
| master (master server) | 192.168.150.240 |
| slave1 (slave server) | 192.168.150.158 |
| slave2 (slave server) | 192.168.150.244 |
| Client (for testing) | 192.168.150.243 |

2. Set up three MySQL servers (this lab uses mysql-5.6)

- After installation, create two symlinks on the MySQL servers

[root@manager ~]# ln -s /usr/local/mysql/bin/mysql /usr/sbin/

[root@manager ~]# ln -s /usr/local/mysql/bin/mysqlbinlog /usr/sbin/

3. Set up MySQL master-slave replication

(1) Edit the main configuration file on the master

[root@master ~]# vim /etc/my.cnf

#Add the following lines

server-id = 1

log_bin = master-bin

log-slave-updates = true

#Restart the service

[root@master ~]# service mysqld restart

Shutting down MySQL.. SUCCESS!

Starting MySQL. SUCCESS!

(2) Edit the main configuration file on the slaves

- Configure slave1

[root@slave1 ~]# vim /etc/my.cnf

#Add the following lines

server-id = 2

log_bin = master-bin

relay-log = relay-log-bin

relay-log-index = slave-relay-bin.index

#Restart the service

[root@slave1 ~]# service mysqld restart

Shutting down MySQL.. SUCCESS!

Starting MySQL. SUCCESS!

- Configure slave2

[root@slave2 ~]# vim /etc/my.cnf

#Add the following lines

server-id = 3

log_bin = master-bin

relay-log = relay-log-bin

relay-log-index = slave-relay-bin.index

#Restart the service

[root@slave2 ~]# service mysqld restart

Shutting down MySQL.. SUCCESS!

Starting MySQL. SUCCESS!
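Replication only works when every server has a distinct server-id (1, 2 and 3 above). As a small sanity check, the sketch below reports any id used more than once; the function name `duplicate_server_ids` and the "host id" input format are assumptions made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print every server-id that appears more than once.
# Input on stdin, one line per server: <host> <server-id>
# Prints nothing when all ids are unique.
duplicate_server_ids() {
  awk '{ count[$2]++ } END { for (id in count) if (count[id] > 1) print id }' | sort -n
}

# Example input matching this lab:
#   printf '%s\n' 'master 1' 'slave1 2' 'slave2 3' | duplicate_server_ids
```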

(3) Create the replication user myslave

mysql> grant replication slave on *.* to 'myslave'@'192.168.150.%' identified by '123';

Query OK, 0 rows affected (0.00 sec)

(4) Additional adjustments

- On every database server, grant the mha user privileges on all databases

mysql> grant all privileges on *.* to 'mha'@'192.168.150.%' identified by 'manager';

Query OK, 0 rows affected (0.00 sec)

mysql> grant all privileges on *.* to 'mha'@'master' identified by 'manager';

Query OK, 0 rows affected (0.00 sec)

mysql> grant all privileges on *.* to 'mha'@'slave1' identified by 'manager';

Query OK, 0 rows affected (0.00 sec)

mysql> grant all privileges on *.* to 'mha'@'slave2' identified by 'manager';

Query OK, 0 rows affected (0.00 sec)

#Reload the privilege tables

mysql> flush privileges;

Query OK, 0 rows affected (0.00 sec)

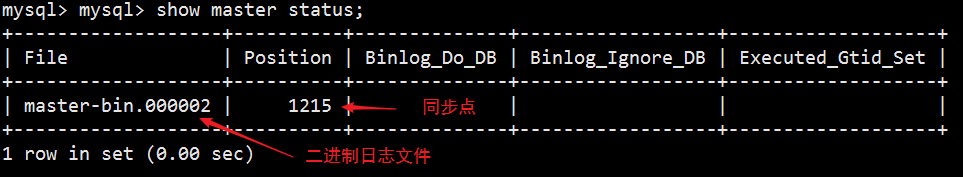

(5) On the MySQL master, check the binary log file and replication position
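The binary log file name and position are reported by `SHOW MASTER STATUS` on the master, and they are the values that go into `master_log_file` and `master_log_pos` on the slaves. A minimal sketch for pulling the two fields out of the `\G` output follows; the function name `master_coords` is an assumption made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `SHOW MASTER STATUS\G` output on stdin and
# print "<File> <Position>". Exits non-zero if either field is missing.
master_coords() {
  awk -F': *' '
    /^ *File:/     { file = $2 }
    /^ *Position:/ { pos = $2 }
    END { if (file != "" && pos != "") print file, pos; else exit 1 }
  '
}

# Example use on the master (the login options are placeholders):
#   mysql -uroot -p -e 'SHOW MASTER STATUS\G' | master_coords
```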

(6) Start replication on the slaves

mysql> change master to master_host='192.168.150.240',master_user='myslave',master_password='123',master_log_file='master-bin.000002',master_log_pos=12215;

Query OK, 0 rows affected, 2 warnings (0.00 sec)

mysql> start slave;

Query OK, 0 rows affected (0.01 sec)

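mysql> show slave status\G

... (part of the output omitted)

Slave_IO_Running: Yes //If this shows Slave_IO_Running: Connecting, check whether the firewall on the master has been turned off

Slave_SQL_Running: Yes

... (part of the output omitted)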
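Both threads must report Yes before moving on. The check can be scripted instead of read by eye; the sketch below is one way to do it, and the function name `slave_threads_ok` is an assumption made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `SHOW SLAVE STATUS\G` output on stdin and
# succeed only if both Slave_IO_Running and Slave_SQL_Running are "Yes".
slave_threads_ok() {
  awk -F': *' '
    /^ *Slave_IO_Running:/  { io  = $2 }
    /^ *Slave_SQL_Running:/ { sql = $2 }
    END { exit !(io == "Yes" && sql == "Yes") }
  '
}

# Example use on a slave (the login options are placeholders):
#   mysql -uroot -p -e 'SHOW SLAVE STATUS\G' | slave_threads_ok && echo "replication OK"
```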

(7) Put both slaves into read-only mode

mysql> set global read_only=1;

Query OK, 0 rows affected (0.00 sec)

4. Install the Node component (on all servers, version 0.57)

(1) Install the base environment

[root@master ~]# yum install epel-release --nogpgcheck -y

[root@master ~]# yum install -y perl-DBD-MySQL \

> perl-Config-Tiny \

> perl-Log-Dispatch \

> perl-Parallel-ForkManager \

> perl-ExtUtils-CBuilder \

> perl-ExtUtils-MakeMaker \

> perl-CPAN

(2) Install the Node component

[root@master ~]# tar zxvf /abc/mha/mha4mysql-node-0.57.tar.gz

[root@master ~]# cd mha4mysql-node-0.57/

[root@master mha4mysql-node-0.57]# perl Makefile.PL

[root@master mha4mysql-node-0.57]# make

[root@master mha4mysql-node-0.57]# make install

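After installation, Node places several script tools under /usr/local/bin/ (they are normally invoked by the MHA Manager scripts and need no manual operation)

- save_binary_logs: saves and copies the master's binary logs

- apply_diff_relay_logs: identifies the differential relay log events and applies them to the other slaves

- filter_mysqlbinlog: strips unnecessary ROLLBACK events (MHA no longer uses this tool)

- purge_relay_logs: purges relay logs (without blocking the SQL thread)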
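To confirm on each server that the install really produced these tools, a quick check along the following lines can help. It is only a sketch; the function name `missing_tools` is an assumption made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the names of expected tools that are missing
# or not executable in a directory. Prints nothing when all are present.
# Usage: missing_tools <dir> <tool>...
missing_tools() {
  local dir=$1; shift
  local tool
  for tool in "$@"; do
    [ -x "$dir/$tool" ] || echo "$tool"
  done
}

# Example use on a data node:
#   missing_tools /usr/local/bin save_binary_logs apply_diff_relay_logs \
#     filter_mysqlbinlog purge_relay_logs
```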

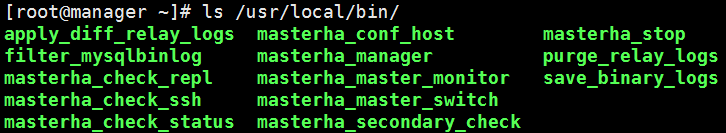

5. Install the Manager component (on the manager node, version 0.57)

[root@manager ~]# tar zxvf /abc/mha/mha4mysql-manager-0.57.tar.gz

[root@manager ~]# cd mha4mysql-manager-0.57/

[root@manager mha4mysql-manager-0.57]# perl Makefile.PL

[root@manager mha4mysql-manager-0.57]# make

[root@manager mha4mysql-manager-0.57]# make install

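After installation, Manager places several script tools under /usr/local/bin/

- masterha_check_ssh: checks the SSH configuration used by MHA

- masterha_check_repl: checks the MySQL replication status

- masterha_manager: starts the manager

- masterha_check_status: reports the current MHA running state

- masterha_master_monitor: detects whether the master is down

- masterha_master_switch: controls failover (automatic or manual)

- masterha_conf_host: adds or removes server entries in the configuration

- masterha_stop: stops the manager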

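6. Configure passwordless authentication

- Tools: ssh-keygen, ssh-copy-id

(1) On the manager, set up passwordless authentication to all data nodes

[root@manager ~]# ssh-keygen -t rsa

#Press Enter at every prompt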

[root@manager ~]# ssh-copy-id 192.168.150.240

[root@manager ~]# ssh-copy-id 192.168.150.158

[root@manager ~]# ssh-copy-id 192.168.150.244

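(2) On the master, set up passwordless authentication to the database nodes slave1 and slave2

[root@master ~]# ssh-keygen -t rsa

#Press Enter at every prompt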

[root@master ~]# ssh-copy-id 192.168.150.158

[root@master ~]# ssh-copy-id 192.168.150.244

(3) On slave1, set up passwordless authentication to the database nodes master and slave2

[root@slave1 ~]# ssh-keygen -t rsa

#Press Enter at every prompt

[root@slave1 ~]# ssh-copy-id 192.168.150.240

[root@slave1 ~]# ssh-copy-id 192.168.150.244

(4) On slave2, set up passwordless authentication to the database nodes master and slave1

[root@slave2 ~]# ssh-keygen -t rsa

#Press Enter at every prompt

[root@slave2 ~]# ssh-copy-id 192.168.150.240

[root@slave2 ~]# ssh-copy-id 192.168.150.158
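The steps above build a full mesh of SSH trust among the three data nodes, plus the manager's access to all of them. The sketch below lists the pairs the mesh needs and shows one way to test a single link without being prompted for a password; the function names `ssh_pairs` and `check_ssh` are assumptions made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print one "<source> -> <target>" line for every
# ordered pair of distinct hosts, i.e. every trust relationship that the
# data nodes need among themselves.
ssh_pairs() {
  local src dst
  for src in "$@"; do
    for dst in "$@"; do
      [ "$src" = "$dst" ] || echo "$src -> $dst"
    done
  done
}

# Hypothetical check, run on the source node: BatchMode makes ssh fail
# instead of prompting when key-based login is not set up.
check_ssh() {
  ssh -o BatchMode=yes -o ConnectTimeout=5 "root@$1" true
}

# Example:
#   ssh_pairs 192.168.150.240 192.168.150.158 192.168.150.244
#   check_ssh 192.168.150.158 && echo "key login works"
```

Three data nodes give six ordered pairs, which matches the six ssh-copy-id commands in steps (2) to (4).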

7. Configure MHA (on the manager node)

(1) Copy the sample scripts to the /usr/local/bin directory

[root@manager ~]# cp -ra /root/mha4mysql-manager-0.57/samples/scripts /usr/local/bin

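- master_ip_failover: manages the VIP during automatic failover

- master_ip_online_change: manages the VIP during an online switchover

- power_manager: shuts down the host after a failure

- send_report: sends an alert after a failover

(2) Copy the automatic-failover VIP management script listed above into /usr/local/bin, so that the VIP is managed by the script

[root@manager ~]# cp /usr/local/bin/scripts/master_ip_failover /usr/local/bin

(3) Edit the master_ip_failover script (delete the original contents and write the following)

[root@manager ~]# vim /usr/local/bin/master_ip_failover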

#!/usr/bin/env perl

use strict;

use warnings FATAL => 'all';

use Getopt::Long;

my (

$command, $ssh_user, $orig_master_host, $orig_master_ip,

$orig_master_port, $new_master_host, $new_master_ip, $new_master_port

);

#############################Added section#########################################

#Floating (virtual) IP settings

my $vip = '192.168.150.200';

my $brdc = '192.168.150.255';

my $ifdev = 'ens33';

my $key = '1';

my $ssh_start_vip = "/sbin/ifconfig ens33:$key $vip";

my $ssh_stop_vip = "/sbin/ifconfig ens33:$key down";

my $exit_code = 0;

#my $ssh_start_vip = "/usr/sbin/ip addr add $vip/24 brd $brdc dev $ifdev label $ifdev:$key;/usr/sbin/arping -q -A -c 1 -I $ifdev $vip;iptables -F;";

#my $ssh_stop_vip = "/usr/sbin/ip addr del $vip/24 dev $ifdev label $ifdev:$key";

##################################################################################

GetOptions(

'command=s' => \$command,

'ssh_user=s' => \$ssh_user,

'orig_master_host=s' => \$orig_master_host,

'orig_master_ip=s' => \$orig_master_ip,

'orig_master_port=i' => \$orig_master_port,

'new_master_host=s' => \$new_master_host,

'new_master_ip=s' => \$new_master_ip,

'new_master_port=i' => \$new_master_port,

);

exit &main();

sub main {

print "\n\nIN SCRIPT TEST====$ssh_stop_vip==$ssh_start_vip===\n\n";

if ( $command eq "stop" || $command eq "stopssh" ) {

my $exit_code = 1;

eval {

print "Disabling the VIP on old master: $orig_master_host \n";

&stop_vip();

$exit_code = 0;

};

if ($@) {

warn "Got Error: $@\n";

exit $exit_code;

}

exit $exit_code;

}

elsif ( $command eq "start" ) {

my $exit_code = 10;

eval {

print "Enabling the VIP - $vip on the new master - $new_master_host \n";

&start_vip();

$exit_code = 0;

};

if ($@) {

warn $@;

exit $exit_code;

}

exit $exit_code;

}

elsif ( $command eq "status" ) {

print "Checking the Status of the script.. OK \n";

exit 0;

}

else {

&usage();

exit 1;

}

}

sub start_vip() {

`ssh $ssh_user\@$new_master_host \" $ssh_start_vip \"`;

}

# A simple system call that disable the VIP on the old_master

sub stop_vip() {

`ssh $ssh_user\@$orig_master_host \" $ssh_stop_vip \"`;

}

sub usage {

print

"Usage: master_ip_failover --command=start|stop|stopssh|status --orig_master_host=host --orig_master_ip=ip --orig_master_port=port --new_master_host=host --new_master_ip=ip --new_master_port=port\n";

}

Note: on the first setup, the virtual IP has to be brought up manually on the master

[root@master ~]# /sbin/ifconfig ens33:1 192.168.150.200/24

(4) Create the MHA configuration directory and copy the configuration file

[root@manager ~]# mkdir /etc/masterha

[root@manager ~]# cp /root/mha4mysql-manager-0.57/samples/conf/app1.cnf /etc/masterha/

[root@manager ~]# vim /etc/masterha/app1.cnf

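[server default]

#manager log file

manager_log=/var/log/masterha/app1/manager.log

#manager working directory

manager_workdir=/var/log/masterha/app1

#Directory where the master stores its binlogs; must match the binlog path configured on the master

master_binlog_dir=/home/mysql

#Script run on automatic failover, i.e. the master_ip_failover script above

master_ip_failover_script=/usr/local/bin/master_ip_failover

#Script run on a manual (online) switchover

master_ip_online_change_script=/usr/local/bin/master_ip_online_change

#Password of the monitoring user created earlier

password=manager

ping_interval=1

remote_workdir=/tmp

#Password of the replication user

repl_password=123

#Name of the replication user

repl_user=myslave

#Script that double-checks whether the master is reachable, going through the two slaves

secondary_check_script=/usr/local/bin/masterha_secondary_check -s 192.168.150.158 -s 192.168.150.244

#Script that shuts down the failed host after a failure (left empty here)

shutdown_script=""

#SSH login user

ssh_user=root

#Monitoring user

user=mha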

[server1]

hostname=192.168.150.240

port=3306

[server2]

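#Mark as candidate master: with this set, this slave is promoted to master when a failover happens

candidate_master=1

#By default MHA will not pick a slave as the new master if it is more than 100MB of relay logs behind the master; check_repl_delay=0 makes MHA ignore replication delay when choosing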

check_repl_delay=0

hostname=192.168.150.158

port=3306

[server3]

hostname=192.168.150.244

port=3306
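When the file grows, it helps to list which host sits behind each [serverN] section before running the checks. The sketch below does that with awk; the function name `list_mha_hosts` is an assumption made for this example, and it only understands the simple key=value layout shown above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print "<section> <hostname>" for every [serverN]
# block of an MHA configuration file. The [server default] block is skipped.
# Usage: list_mha_hosts <config-file>
list_mha_hosts() {
  awk -F= '
    /^\[/ { section = $0; gsub(/\[|\]/, "", section) }
    section ~ /^server[0-9]+$/ && $1 ~ /^ *hostname *$/ {
      gsub(/ /, "", $2); print section, $2
    }
  ' "$1"
}

# Example use on the manager:
#   list_mha_hosts /etc/masterha/app1.cnf
```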

7. Testing

- Passwordless SSH login (on the manager node)

[root@manager ~]# masterha_check_ssh -conf=/etc/masterha/app1.cnf

... (part of the output omitted)

#On success the output ends with "successfully"

Sun Jan 12 19:19:11 2020 - [info] All SSH connection tests passed successfully.

- Test the MySQL replication links (health check)

[root@manager ~]# masterha_check_repl -conf=/etc/masterha/app1.cnf

... (part of the output omitted)

#"OK" means the configuration is healthy

MySQL Replication Health is OK.

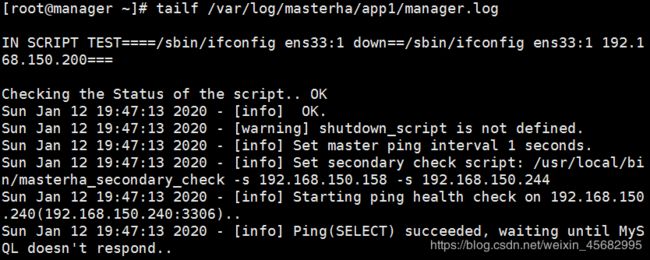

8. Start MHA and check its status

#Start MHA in the background

[root@manager ~]# nohup masterha_manager --conf=/etc/masterha/app1.cnf --remove_dead_master_conf --ignore_last_failover < /dev/null > /var/log/masterha/app1/manager.log 2>&1 &

[1] 13525

#Check the MHA status; the output shows which node is the current master

[root@manager ~]# masterha_check_status --conf=/etc/masterha/app1.cnf

app1 (pid:13525) is running(0:PING_OK), master:192.168.150.240
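For scripts that need the current master's address, the status line above can be parsed. This is a sketch that relies on the exact output format shown here; the function name `current_master` is an assumption made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read masterha_check_status output on stdin and
# print the master address when the manager reports PING_OK.
# Prints nothing for any other status line.
current_master() {
  sed -n 's/.*is running(0:PING_OK), master:\([^ ]*\).*/\1/p'
}

# Example use on the manager:
#   masterha_check_status --conf=/etc/masterha/app1.cnf | current_master
```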

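- --remove_dead_master_conf: after a failover, the old master's IP is removed from the configuration file

- --ignore_last_failover: by default, if MHA detects consecutive master failures less than 8 hours apart, it refuses to fail over again. The limit exists to avoid a ping-pong effect. After a failover, MHA writes an app1.failover.complete file into its working directory (the manager_workdir set above). If that file is still there at the next failover attempt, the failover is refused unless the file was deleted manually after the first one. Passing --ignore_last_failover tells MHA to ignore that file, which is more convenient here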
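If --ignore_last_failover is not used, it is worth checking for a leftover marker file before relying on automatic failover. The sketch below assumes the 8-hour window is judged by the file's modification time, which is an assumption of this example rather than something stated above; the function name `recent_failover_marker` is also made up for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if <workdir>/<app>.failover.complete exists
# and was modified less than 480 minutes (8 hours) ago.
# Usage: recent_failover_marker <workdir> <app>
recent_failover_marker() {
  local marker="$1/$2.failover.complete"
  [ -f "$marker" ] && [ -n "$(find "$marker" -mmin -480)" ]
}

# Example use on the manager:
#   recent_failover_marker /var/log/masterha/app1 app1 \
#     && echo "marker present: the next automatic failover may be refused"
```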

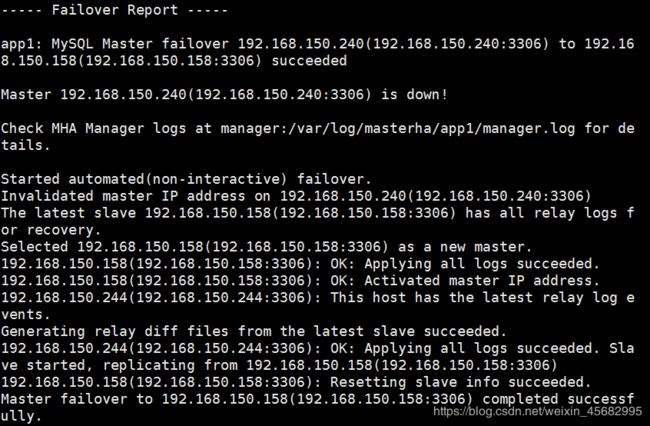

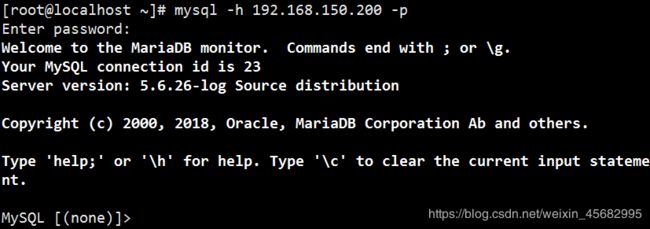

9、验证

(1)在manager上启动监控观察日志记录

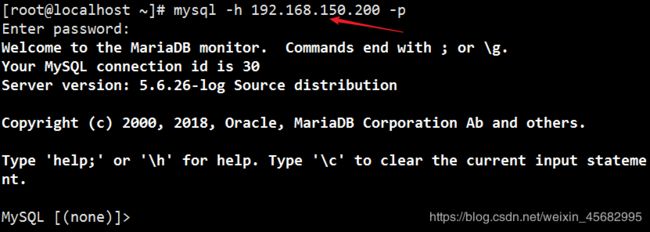

(2)模拟故障 - 使用客户机通过虚拟IP远程登录

- 在主库master上执行停掉mysql服务

[root@master ~]# pkill -9 mysqld

[root@slave1 ~]# ifconfig

ens33: flags=4163 mtu 1500

inet 192.168.150.158 netmask 255.255.255.0 broadcast 192.168.150.255

···

#The virtual IP has moved to the standby master

ens33:1: flags=4163 mtu 1500

inet 192.168.150.200 netmask 255.255.255.0 broadcast 192.168.150.255

···
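The same check can be scripted: find which interface label carries the virtual IP in the ifconfig output of a node. This is a sketch that assumes the header format shown above, where the interface name ends with a colon; the function name `vip_interface` is an assumption made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `ifconfig` output on stdin and print the
# interface label (for example ens33:1) that carries the given address.
# Prints nothing if the address is not configured.
# Usage: ifconfig | vip_interface <address>
vip_interface() {
  awk -v vip="$1" '
    $1 ~ /:$/ { iface = $1; sub(/:$/, "", iface) }   # header line of an interface
    $1 == "inet" && $2 == vip { print iface }
  '
}

# Example use from the manager, relying on the passwordless SSH set up earlier:
#   ssh root@192.168.150.158 ifconfig | vip_interface 192.168.150.200
```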