Training your own YOLOv3 model with darknet on Windows

- Prepare the dataset

- Download and build darknet

- Modify the basic parameters

- Build

- Prepare the training and test sets

- Modify the configuration

- Train

Prepare the dataset

- Annotate your own dataset with labelimg or another annotation tool
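Before moving on, it can help to check which class names actually appear in the annotations, since the conversion script later in this walkthrough skips any label that is not in its class list. A minimal sketch, assuming labelimg saved Pascal VOC XML files into an Annotations folder (the helper `count_labels` is illustrative and not part of darknet or labelimg):

```python
import os
import xml.etree.ElementTree as ET
from collections import Counter


def count_labels(annotation_dir):
    """Count how many objects of each class appear in the VOC XML files.

    annotation_dir is assumed to hold one labelimg-style .xml file per image.
    """
    counts = Counter()
    for filename in sorted(os.listdir(annotation_dir)):
        if not filename.lower().endswith(".xml"):
            continue  # ignore anything that is not an annotation file
        root = ET.parse(os.path.join(annotation_dir, filename)).getroot()
        for obj in root.iter("object"):
            name = obj.findtext("name")
            if name is not None:
                counts[name.strip()] += 1
    return counts


# Only runs when an Annotations folder exists in the current directory.
if __name__ == "__main__" and os.path.isdir("Annotations"):
    for name, n in count_labels("Annotations").most_common():
        print(name, n)
```

A label that shows up with an unexpected spelling or a very small count is worth fixing in labelimg before training.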

Download and build darknet

Direct download link

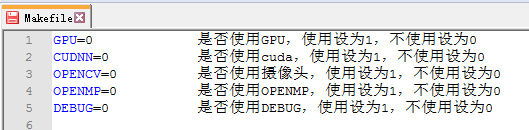

Modify the basic parameters

Build

- Note: to build on Windows, you first need to add an #include at the top of include/darknet.h

make

- When the build finishes, you get the darknet.exe file

Prepare the training and test sets

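import os
import random

# Run from the dataset directory that contains Annotations/ and ImageSets/Main/.
trainval_percent = 0.1  # share of all samples held out from train.txt; adjust as needed
train_percent = 0.9  # share of the held-out samples written to test.txt; adjust as needed
xmlfilepath = 'Annotations'
txtsavepath = 'ImageSets/Main'

total_xml = os.listdir(xmlfilepath)
num = len(total_xml)
indices = range(num)
tv = int(num * trainval_percent)
tr = int(tv * train_percent)
trainval = random.sample(indices, tv)
train = random.sample(trainval, tr)

os.makedirs(txtsavepath, exist_ok=True)
ftrainval = open(os.path.join(txtsavepath, 'trainval.txt'), 'w')
ftest = open(os.path.join(txtsavepath, 'test.txt'), 'w')
ftrain = open(os.path.join(txtsavepath, 'train.txt'), 'w')
fval = open(os.path.join(txtsavepath, 'val.txt'), 'w')

for i in indices:
    name = total_xml[i][:-4] + '\n'  # file name without the ".xml" extension
    if i in trainval:
        # Held-out samples: most go to test.txt, the remainder to val.txt.
        ftrainval.write(name)
        if i in train:
            ftest.write(name)
        else:
            fval.write(name)
    else:
        # Everything else is used for training.
        ftrain.write(name)

ftrainval.close()
ftrain.close()
fval.close()
ftest.close()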
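The same split can be written as a pure function, which makes the resulting proportions easy to check before any files are written. This is only a sketch: `split_names` and its `seed` argument are hypothetical additions, and it keeps the behaviour of the script above, where the `trainval_percent` share is divided between test and val and everything else goes to train.

```python
import random


def split_names(names, trainval_percent=0.1, train_percent=0.9, seed=None):
    """Split names into (train, test, val) lists, following the script above.

    trainval_percent: share of all names held out from train.
    train_percent: share of the held-out names that go to test; the rest go to val.
    seed: optional, makes the sampling reproducible (not in the original script).
    """
    rng = random.Random(seed)
    indices = range(len(names))
    tv = int(len(names) * trainval_percent)
    tr = int(tv * train_percent)
    held_out = rng.sample(indices, tv)
    test_idx = set(rng.sample(held_out, tr))
    held_out = set(held_out)
    train = [n for i, n in enumerate(names) if i not in held_out]
    test = [n for i, n in enumerate(names) if i in test_idx]
    val = [n for i, n in enumerate(names) if i in held_out and i not in test_idx]
    return train, test, val


names = ["img%03d" % i for i in range(200)]
train, test, val = split_names(names, seed=0)
print(len(train), len(test), len(val))  # 180 18 2
```

With the default percentages, 200 annotated images give 180 training, 18 test, and 2 validation samples, so raise `trainval_percent` if you want a larger held-out set.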

- Convert the dataset to the format darknet supports: create a new .py file in the root directory, paste in the following code, and run it

import os
import xml.etree.ElementTree as ET

# (prefix, image_set): image paths are listed in data/<prefix>_<image_set>.txt
sets = [('data', 'train')]
classes = ["hat", "person"]  # change to your own classes

def convert(size, box):
    # size = (width, height); box = (xmin, xmax, ymin, ymax) in pixels.
    # Returns the box center, width, and height, normalized by the image size.
    dw = 1. / size[0]
    dh = 1. / size[1]
    x = (box[0] + box[1]) / 2.0 - 1
    y = (box[2] + box[3]) / 2.0 - 1
    w = box[1] - box[0]
    h = box[3] - box[2]
    x = x * dw
    w = w * dw
    y = y * dh
    h = h * dh
    return (x, y, w, h)

def convert_annotation(image_id):
    # Read one VOC XML file and write the matching darknet label file.
    tree = ET.parse('data/Annotations/%s.xml' % image_id)
    root = tree.getroot()
    size = root.find('size')
    w = int(size.find('width').text)
    h = int(size.find('height').text)
    with open('data/labels/%s.txt' % image_id, 'w') as out_file:
        for obj in root.iter('object'):
            difficult = obj.find('difficult').text
            cls = obj.find('name').text
            # Skip classes that are not listed and objects marked difficult.
            if cls not in classes or int(difficult) == 1:
                continue
            cls_id = classes.index(cls)
            xmlbox = obj.find('bndbox')
            b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text),
                 float(xmlbox.find('ymin').text), float(xmlbox.find('ymax').text))
            bb = convert((w, h), b)
            out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')

wd = os.getcwd()
for prefix, image_set in sets:
    os.makedirs('data/labels/', exist_ok=True)
    with open('data/ImageSets/Main/%s.txt' % image_set) as f:
        image_ids = f.read().strip().split()
    with open('data/%s_%s.txt' % (prefix, image_set), 'w') as list_file:
        for image_id in image_ids:
            list_file.write('%s/data/JPEGImages/%s.jpg\n' % (wd, image_id))
            convert_annotation(image_id)
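As a worked example of the coordinate conversion, the sketch below repeats the `convert` function in condensed form and applies it to a single box. The image size and box coordinates are made up for illustration.

```python
def convert(size, box):
    """VOC pixel box (xmin, xmax, ymin, ymax) -> normalized (x_center, y_center, w, h)."""
    dw = 1.0 / size[0]
    dh = 1.0 / size[1]
    x = (box[0] + box[1]) / 2.0 - 1
    y = (box[2] + box[3]) / 2.0 - 1
    w = box[1] - box[0]
    h = box[3] - box[2]
    return (x * dw, y * dh, w * dw, h * dh)


# Hypothetical 640x480 image with a box from (100, 50) to (302, 252).
x, y, w, h = convert((640, 480), (100.0, 302.0, 50.0, 252.0))

# One label line: class index, then the four normalized values.
# Class index 0 is "hat" in the example class list.
print("0 %.6f %.6f %.6f %.6f" % (x, y, w, h))
# -> 0 0.312500 0.312500 0.315625 0.420833
```

Every value in a label line should fall between 0 and 1; values outside that range usually mean the width and height in the XML do not match the image.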

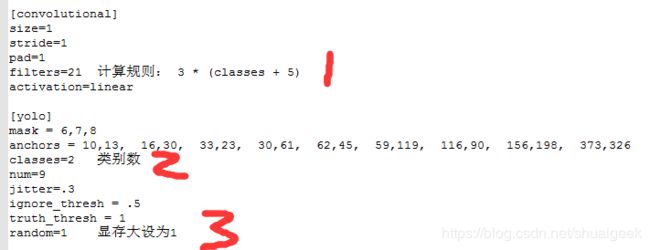

Modify the configuration

- Open the voc.data and yolov3-voc.cfg files under cfg

- Edit voc.data

# Number of classes in your data (two here: hat and person)
classes= 2
train = data/data_train.txt
# This file is created in a later step
names = data/voc.names
backup = data/weights

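- Edit yolov3-voc.cfg

[net]
# Testing mode
# batch=1
# subdivisions=1
# Training mode: images per forward pass = batch/subdivisions
batch=64
subdivisions=16
# Network input width, height, and number of channels
width=416
height=416
channels=3
# Momentum
momentum=0.9
# Weight decay
decay=0.0005
angle=0
# Saturation
saturation = 1.5
# Exposure
exposure = 1.5
# Hue
hue=.1
# Base learning rate
learning_rate=0.001
# Number of warm-up iterations during which the learning rate ramps up
burn_in=1000
# Total number of training iterations
max_batches = 50200
# Learning-rate policy
policy=steps
# Iterations at which the learning rate changes
steps=40000,45000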
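To make these training parameters concrete, the sketch below computes the number of images per forward pass and an approximate learning rate at a few iterations. It is a simplified Python model of the `steps` policy, not darknet's own code. The `scales` values of 0.1 and the burn-in exponent of 4 do not appear in the excerpt above; they are assumed from the stock yolov3-voc.cfg and darknet's defaults, so check them against your own cfg.

```python
def images_per_forward(batch=64, subdivisions=16):
    """Number of images pushed through the network at once."""
    return batch // subdivisions


def learning_rate(iteration, base_lr=0.001, burn_in=1000,
                  steps=(40000, 45000), scales=(0.1, 0.1), power=4):
    """Approximate learning rate under policy=steps.

    scales and power are assumptions (not shown in the cfg excerpt).
    """
    if iteration < burn_in:
        # Warm-up: ramp from 0 up to base_lr over the first burn_in iterations.
        return base_lr * (iteration / burn_in) ** power
    lr = base_lr
    for step, scale in zip(steps, scales):
        if iteration >= step:
            lr *= scale  # each step reached multiplies the rate by its scale
    return lr


print(images_per_forward())  # 4
for it in (500, 1000, 40000, 45000):
    print(it, learning_rate(it))
```

Under these assumptions the rate stays at 0.001 from iteration 1000 until 40000, then drops by a factor of ten at each of the two steps. If GPU memory runs out, increasing `subdivisions` lowers the images per forward pass without changing `batch`.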

- Create a voc.names file in the data directory and write your class names into it, one per line

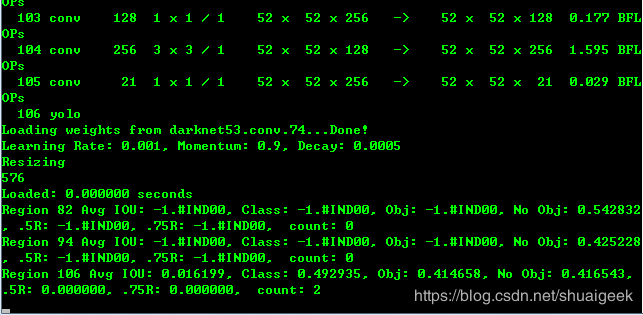
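The class list has to stay consistent in three places: the `classes` list in the conversion script, `classes=` in voc.data, and the names file. The sketch below, assuming the hat/person example, derives the names file text and the class count from one list. It also prints the `filters` value commonly used for the convolutional layer directly before each `[yolo]` layer when that layer uses 3 anchors, as in the stock YOLOv3 cfg files. That setting is not mentioned in this walkthrough, so verify it against your own cfg. Both helper names are hypothetical.

```python
classes = ["hat", "person"]  # same order as in the conversion script


def names_file_text(class_names):
    """One class name per line, in class-index order."""
    return "\n".join(class_names) + "\n"


def yolo_filters(num_classes, anchors_per_scale=3):
    """Each anchor predicts 4 box values + 1 objectness score + the class scores."""
    return anchors_per_scale * (num_classes + 5)


print(names_file_text(classes), end="")
print("classes =", len(classes))                # classes = 2
print("filters =", yolo_filters(len(classes)))  # filters = 21
```

The order of the names matters: line 1 of the names file corresponds to class index 0 in the label files, line 2 to index 1, and so on.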

Training

- Download the pretrained weights: direct link

- Start training

.\darknet detector train cfg/voc.data cfg/yolov3-voc.cfg darknet53.conv.74

- Or specify which GPUs to train on (GPU 0 is used by default)

.\darknet detector train cfg/voc.data cfg/yolov3-voc.cfg darknet53.conv.74 -gpus 0,1,2,3

- Resume training from where it stopped

.\darknet detector train cfg/voc.data cfg/yolov3-voc.cfg myData/weights/my_yolov3.backup -gpus 0,1,2,3

- Test

.\darknet detect cfg/my_yolov3.cfg weights/my_yolov3.weights 1.jpg
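If you launch training from a script, the training commands above can be assembled programmatically. The sketch below only builds the argument list and does not run anything; `build_train_command` is a hypothetical helper, and the paths are the ones used in this walkthrough.

```python
def build_train_command(data_cfg, model_cfg, weights, gpus=None,
                        exe=r".\darknet"):
    """Return the argument list for `darknet detector train`.

    weights: pretrained weights for a fresh run, or a .backup file to resume.
    gpus: optional iterable of GPU indices, passed as `-gpus 0,1,...`.
    """
    cmd = [exe, "detector", "train", data_cfg, model_cfg, weights]
    if gpus is not None:
        cmd += ["-gpus", ",".join(str(g) for g in gpus)]
    return cmd


# Fresh run starting from the pretrained convolutional weights.
fresh = build_train_command("cfg/voc.data", "cfg/yolov3-voc.cfg",
                            "darknet53.conv.74")
# Resumed run starting from the last backup, on four GPUs.
resume = build_train_command("cfg/voc.data", "cfg/yolov3-voc.cfg",
                             "myData/weights/my_yolov3.backup",
                             gpus=[0, 1, 2, 3])
print(" ".join(fresh))
print(" ".join(resume))
```

The resulting list can be passed to `subprocess.run(cmd, check=True)` from the darknet directory. The only difference between a fresh run and a resumed one is which weights file is given.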