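CS231n Assignment 2: Convolutional Neural Networks

Assignment 2

Assignment overview:

In this assignment you will practice writing backpropagation code and training neural networks and convolutional neural networks.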

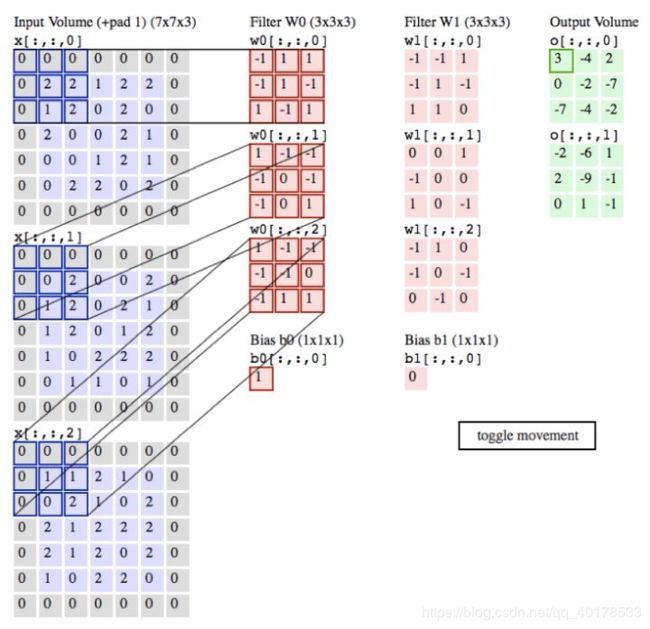

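Convolutional layer

The convolutional layer is the core building block of a convolutional neural network, and it accounts for most of the network's computation. Its parameters consist of a set of learnable filters. Each filter is small spatially (in width and height) but extends through the full depth of the input. Each convolutional layer has a whole set of filters (12, for example), and each one produces a separate two-dimensional activation map. Stacking these activation maps along the depth dimension produces the output volume.

When dealing with high-dimensional inputs such as images, it is impractical to connect each neuron to every neuron in the previous layer. Instead, each neuron is connected only to a local region of the input. The spatial extent of this connection is called the neuron's receptive field, and its size is a hyperparameter (it is simply the spatial size of the filter). Along the depth axis, the extent of the connection always equals the depth of the input volume. To emphasize once more, the spatial dimensions (width and height) and the depth dimension are treated differently: connections are local in space but always span the full depth of the input.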
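To make the saving from local connectivity concrete, the sketch below counts the weights of a single neuron in both settings. The 32x32x3 input and the 5x5 receptive field are illustrative assumptions, and the helper names are hypothetical; none of them come from the assignment code.

```python
def weights_per_neuron_local(field_size, input_depth):
    """Weights of a neuron that sees a field_size x field_size patch across the full depth."""
    return field_size * field_size * input_depth


def weights_per_neuron_full(height, width, depth):
    """Weights of a neuron that is connected to every value of the input volume."""
    return height * width * depth


# Assumed example sizes: a 32x32 RGB image and a 5x5 receptive field.
local_weights = weights_per_neuron_local(5, 3)
full_weights = weights_per_neuron_full(32, 32, 3)
print("local:", local_weights, "full:", full_weights)
```

The local count depends on the depth of the input but not on its width or height, which is why the connection is described as local in space and full in depth.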

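Convolutional layer hyperparameters

Three hyperparameters control the size of the output volume: depth, stride, and zero-padding.

- First, the depth of the output volume is a hyperparameter: it equals the number of filters used, each of which looks for something different in the input. For example, if the first convolutional layer takes the raw image as input, different neurons along the depth dimension may activate in the presence of edges of various orientations or blobs of color. A set of neurons arranged along the depth dimension that share the same receptive field is called a depth column (some people prefer the term fibre).

- Second, a stride must be specified for sliding the filter. With a stride of 1, the filter moves one pixel at a time. With a stride of 2, it moves two pixels at a time; strides of 3 or more are rare in practice. A larger stride produces a spatially smaller output volume.

- As we will see, it is sometimes convenient to pad the input volume with zeros around the border. The size of this zero-padding is a hyperparameter. The useful property of zero-padding is that it lets us control the spatial size of the output volume (most commonly, it is used to preserve the spatial size of the input, so that the input and output have the same width and height).

- The spatial size of the output volume can be computed as a function of the input volume size (W), the receptive field size of the convolutional layer neurons (F), the stride (S), and the amount of zero-padding (P). (Translator's note: this assumes the input is spatially square, i.e. its height and width are equal.) The output spatial size is (W - F + 2P)/S + 1. For example, a 7x7 input with a 3x3 filter, stride 1, and padding 0 gives a 5x5 output. With stride 2, the output is 3x3.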
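The output-size formula can be checked with a few lines of Python. This is a minimal sketch: the function name `conv_output_size` is hypothetical, and raising an error when the filter does not tile the padded input evenly is a choice made for this sketch, not something the assignment code does.

```python
def conv_output_size(w, f, stride, pad):
    """Spatial output size (W - F + 2P) / S + 1 for a square input."""
    span = w - f + 2 * pad
    if span < 0 or span % stride != 0:
        # Assumption of this sketch: reject settings where the filter
        # positions do not fit the padded input exactly.
        raise ValueError("filter does not fit the padded input evenly")
    return span // stride + 1


print(conv_output_size(7, 3, 1, 0))  # 7x7 input, 3x3 filter, stride 1
print(conv_output_size(7, 3, 2, 0))  # same input with stride 2
print(conv_output_size(5, 3, 1, 1))  # padding of 1 preserves the input size
```

The last call illustrates the common use of zero-padding mentioned above: with a 3x3 filter and stride 1, a padding of 1 keeps the output the same size as the input.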

Code

def conv_forward_naive(x, w, b, conv_param):
    """
    A naive implementation of the forward pass for a convolutional layer.

    The input consists of N data points, each with C channels, height H and
    width W. We convolve each input with F different filters, where each filter
    spans all C channels and has height HH and width WW.

    Input:
    - x: Input data of shape (N, C, H, W)
    - w: Filter weights of shape (F, C, HH, WW)
    - b: Biases, of shape (F,)
    - conv_param: A dictionary with the following keys:
      - 'stride': The number of pixels between adjacent receptive fields in the
        horizontal and vertical directions.
      - 'pad': The number of pixels that will be used to zero-pad the input.

    During padding, 'pad' zeros should be placed symmetrically (i.e. equally on both sides)
    along the height and width axes of the input. Be careful not to modify the original
    input x directly.

    Returns a tuple of:
    - out: Output data, of shape (N, F, H', W') where H' and W' are given by
      H' = 1 + (H + 2 * pad - HH) / stride
      W' = 1 + (W + 2 * pad - WW) / stride
    - cache: (x, w, b, conv_param)
    """
    out = None
    ###########################################################################
    # TODO: Implement the convolutional forward pass. #
    # Hint: you can use the function np.pad for padding. #
    ###########################################################################
    # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    stride = conv_param['stride']
    pad = conv_param['pad']
    N, C, H, W = x.shape
    F, _, HH, WW = w.shape
    # Integer division keeps the output sizes as exact integers.
    H_ = (H + 2 * pad - HH) // stride + 1
    W_ = (W + 2 * pad - WW) // stride + 1
    out = np.zeros((N, F, H_, W_))
    # np.pad returns a new array, so the caller's x is left untouched.
    x_pad = np.pad(x, ((0, 0), (0, 0), (pad, pad), (pad, pad)), 'constant', constant_values=0)
    for f in range(F):
        for i in range(H_):
            for j in range(W_):
                # Window of shape (N, C, HH, WW) times filter f, broadcast over N.
                x_conv = x_pad[:, :, i*stride:(i*stride + HH), j*stride:(j*stride + WW)] * w[f, :, :, :]
                out[:, f, i, j] = np.sum(x_conv, axis=(1, 2, 3)) + b[f]
    # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    ###########################################################################
    # END OF YOUR CODE #
    ###########################################################################
    cache = (x, w, b, conv_param)
    return out, cache

def conv_backward_naive(dout, cache):
    """
    A naive implementation of the backward pass for a convolutional layer.

    Inputs:
    - dout: Upstream derivatives.
    - cache: A tuple of (x, w, b, conv_param) as in conv_forward_naive

    Returns a tuple of:
    - dx: Gradient with respect to x
    - dw: Gradient with respect to w
    - db: Gradient with respect to b
    """
    dx, dw, db = None, None, None
    ###########################################################################
    # TODO: Implement the convolutional backward pass. #
    ###########################################################################
    # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    x, w, b, conv_param = cache
    stride = conv_param['stride']
    pad = conv_param['pad']
    x_pad = np.pad(x, ((0, 0), (0, 0), (pad, pad), (pad, pad)), 'constant', constant_values=0)
    N, C, H, W = x.shape
    F, _, HH, WW = w.shape
    H_ = (H + 2 * pad - HH) // stride + 1
    W_ = (W + 2 * pad - WW) // stride + 1
    dx_pad = np.zeros((N, C, H + pad * 2, W + pad * 2))
    dw = np.zeros((F, C, HH, WW))
    for f in range(F):
        for i in range(H_):
            for j in range(W_):
                # Upstream gradient for this output position, shaped (N, 1, 1, 1) for broadcasting.
                d = dout[:, f, i, j].reshape(-1, 1, 1, 1)
                window = x_pad[:, :, i*stride:(i*stride + HH), j*stride:(j*stride + WW)]
                dw[f, :, :, :] += np.sum(window * d, axis=0)
                dx_pad[:, :, i*stride:(i*stride + HH), j*stride:(j*stride + WW)] += w[f, :, :, :] * d
    # Strip the padding with explicit end indices; pad:-pad would return an
    # empty array when pad == 0.
    dx = dx_pad[:, :, pad:pad + H, pad:pad + W]
    db = np.sum(dout, axis=(0, 2, 3))
    # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    ###########################################################################
    # END OF YOUR CODE #
    ###########################################################################
    return dx, dw, db

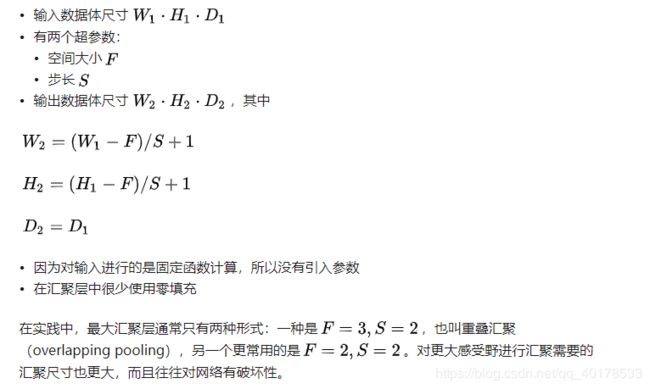

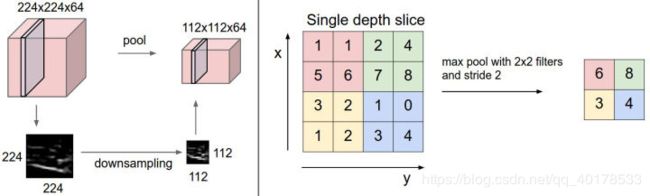

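Pooling layer

It is common to periodically insert a pooling layer between successive convolutional layers. Its function is to progressively reduce the spatial size of the representation, which reduces the number of parameters and the amount of computation in the network and also helps control overfitting. The pooling layer operates independently on every depth slice of the input and resizes it spatially using the MAX operation. The most common form uses 2x2 filters applied with a stride of 2, which downsamples every depth slice and discards 75% of the activations. Each MAX operation takes the maximum over 4 numbers (a 2x2 region in some depth slice). The depth dimension remains unchanged. With no padding, the output size of the pooling layer is H' = 1 + (H - pool_height) / stride and W' = 1 + (W - pool_width) / stride.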
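As a small illustration of the common 2x2, stride-2 case, the sketch below pools a single depth slice with a reshape instead of explicit loops. The function name `max_pool_2x2` and the 4x4 example input are assumptions made for this sketch; it only handles even heights and widths and is not the assignment's implementation.

```python
import numpy as np


def max_pool_2x2(depth_slice):
    """2x2 max pooling with stride 2 on one 2-D depth slice of even height and width."""
    h, w = depth_slice.shape
    assert h % 2 == 0 and w % 2 == 0, "this sketch only handles even sizes"
    # Axes after the reshape: (block row, row in block, block column, column in block).
    blocks = depth_slice.reshape(h // 2, 2, w // 2, 2)
    return blocks.max(axis=(1, 3))


x = np.arange(16).reshape(4, 4)
pooled = max_pool_2x2(x)
print(pooled)
print("kept fraction:", pooled.size / x.size)
```

Each output value is the maximum of one non-overlapping 2x2 block, so a quarter of the activations survive and the remaining 75% are discarded.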

Code

def max_pool_forward_naive(x, pool_param):
    """
    A naive implementation of the forward pass for a max-pooling layer.

    Inputs:
    - x: Input data, of shape (N, C, H, W)
    - pool_param: dictionary with the following keys:
      - 'pool_height': The height of each pooling region
      - 'pool_width': The width of each pooling region
      - 'stride': The distance between adjacent pooling regions

    No padding is necessary here.

    Returns a tuple of:
    - out: Output data, of shape (N, C, H', W') where H' and W' are given by
      H' = 1 + (H - pool_height) / stride
      W' = 1 + (W - pool_width) / stride
    - cache: (x, pool_param)
    """
    out = None
    ###########################################################################
    # TODO: Implement the max-pooling forward pass #
    ###########################################################################
    # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    pool_height = pool_param['pool_height']
    pool_width = pool_param['pool_width']
    stride = pool_param['stride']
    N, C, H, W = x.shape
    H_ = (H - pool_height) // stride + 1
    W_ = (W - pool_width) // stride + 1
    out = np.zeros((N, C, H_, W_))
    for i in range(H_):
        for j in range(W_):
            # Pooling window for all samples and channels at once.
            temp = x[:, :, i*stride:(i*stride + pool_height), j*stride:(j*stride + pool_width)]
            out[:, :, i, j] = np.max(temp, axis=(2, 3))
    # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    ###########################################################################
    # END OF YOUR CODE #
    ###########################################################################
    cache = (x, pool_param)
    return out, cache

def max_pool_backward_naive(dout, cache):
    """
    A naive implementation of the backward pass for a max-pooling layer.

    Inputs:
    - dout: Upstream derivatives
    - cache: A tuple of (x, pool_param) as in the forward pass.

    Returns:
    - dx: Gradient with respect to x
    """
    dx = None
    ###########################################################################
    # TODO: Implement the max-pooling backward pass #
    ###########################################################################
    # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    x, pool_param = cache
    pool_height = pool_param['pool_height']
    pool_width = pool_param['pool_width']
    stride = pool_param['stride']
    N, C, H, W = x.shape
    H_ = (H - pool_height) // stride + 1
    W_ = (W - pool_width) // stride + 1
    assert dout.shape == (N, C, H_, W_)
    dx = np.zeros((N, C, H, W))
    for n in range(N):
        for c in range(C):
            for i in range(H_):
                for j in range(W_):
                    temp = x[n, c, i*stride:(i*stride + pool_height), j*stride:(j*stride + pool_width)]
                    # argmax returns a single index even when the maximum is tied,
                    # whereas np.where would return several and break int().
                    row, col = np.unravel_index(np.argmax(temp), temp.shape)
                    # Accumulate so that overlapping windows (stride < pool size) add up.
                    dx[n, c, i*stride + row, j*stride + col] += dout[n, c, i, j]
    # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    ###########################################################################
    # END OF YOUR CODE #
    ###########################################################################
    return dx

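Normalization layer

Many types of normalization layers have been proposed for use in convolutional network architectures, sometimes with the intention of implementing inhibition schemes observed in the biological brain. These layers have since fallen out of favor, because in practice their contribution has been shown to be minimal, if any.

Code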

def spatial_batchnorm_forward(x, gamma, beta, bn_param):
    """
    Computes the forward pass for spatial batch normalization.

    Inputs:
    - x: Input data of shape (N, C, H, W)
    - gamma: Scale parameter, of shape (C,)
    - beta: Shift parameter, of shape (C,)
    - bn_param: Dictionary with the following keys:
      - mode: 'train' or 'test'; required
      - eps: Constant for numeric stability
      - momentum: Constant for running mean / variance. momentum=0 means that
        old information is discarded completely at every time step, while
        momentum=1 means that new information is never incorporated. The
        default of momentum=0.9 should work well in most situations.
      - running_mean: Array of shape (D,) giving running mean of features
      - running_var: Array of shape (D,) giving running variance of features

    Returns a tuple of:
    - out: Output data, of shape (N, C, H, W)
    - cache: Values needed for the backward pass
    """
    out, cache = None, None
    ###########################################################################
    # TODO: Implement the forward pass for spatial batch normalization. #
    # #
    # HINT: You can implement spatial batch normalization by calling the #
    # vanilla version of batch normalization you implemented above. #
    # Your implementation should be very short; ours is less than five lines. #
    ###########################################################################
    # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    N, C, H, W = x.shape
    # Move channels last and flatten to (N*H*W, C) so each channel is one feature.
    temp_x = np.transpose(x, (0, 2, 3, 1)).reshape(-1, C)
    out, cache = batchnorm_forward(temp_x, gamma, beta, bn_param)
    # Undo the flattening and restore the (N, C, H, W) layout.
    out = np.transpose(out.reshape(N, H, W, C), (0, 3, 1, 2))
    # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    ###########################################################################
    # END OF YOUR CODE #
    ###########################################################################
    return out, cache

def spatial_batchnorm_backward(dout, cache):
    """
    Computes the backward pass for spatial batch normalization.

    Inputs:
    - dout: Upstream derivatives, of shape (N, C, H, W)
    - cache: Values from the forward pass

    Returns a tuple of:
    - dx: Gradient with respect to inputs, of shape (N, C, H, W)
    - dgamma: Gradient with respect to scale parameter, of shape (C,)
    - dbeta: Gradient with respect to shift parameter, of shape (C,)
    """
    dx, dgamma, dbeta = None, None, None
    ###########################################################################
    # TODO: Implement the backward pass for spatial batch normalization. #
    # #
    # HINT: You can implement spatial batch normalization by calling the #
    # vanilla version of batch normalization you implemented above. #
    # Your implementation should be very short; ours is less than five lines. #
    ###########################################################################
    # *****START OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    N, C, H, W = dout.shape
    # Same layout change as in the forward pass: (N, C, H, W) -> (N*H*W, C).
    temp_dout = np.transpose(dout, (0, 2, 3, 1)).reshape(-1, C)
    dx, dgamma, dbeta = batchnorm_backward_alt(temp_dout, cache)
    dx = np.transpose(dx.reshape(N, H, W, C), (0, 3, 1, 2))
    # *****END OF YOUR CODE (DO NOT DELETE/MODIFY THIS LINE)*****
    ###########################################################################
    # END OF YOUR CODE #
    ###########################################################################
    return dx, dgamma, dbeta
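The two spatial batch normalization functions rely on the same layout trick: move the channel axis last, flatten to a 2-D array with one column per channel, and reverse the steps afterwards. The sketch below checks, on a small random array whose sizes are arbitrary assumptions, that the round trip is lossless and that column statistics of the flattened array are per-channel statistics over N, H, and W.

```python
import numpy as np

rng = np.random.default_rng(0)
N, C, H, W = 2, 3, 4, 5  # arbitrary example sizes
x = rng.standard_normal((N, C, H, W))

# (N, C, H, W) -> (N, H, W, C) -> (N*H*W, C): one column per channel.
flat = x.transpose(0, 2, 3, 1).reshape(-1, C)

# Reverse the two steps to recover the original layout.
restored = flat.reshape(N, H, W, C).transpose(0, 3, 1, 2)

# Column means of the flattened array equal per-channel means over N, H and W.
per_channel_mean = flat.mean(axis=0)

print(flat.shape)
print(np.array_equal(restored, x))
print(np.allclose(per_channel_mean, x.mean(axis=(0, 2, 3))))
```

Reshaping directly from (N, C, H, W) to (-1, C) without the transpose would mix values from different channels in each column, which is why the transpose comes first.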

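Fully connected layer

In a fully connected layer, neurons have full connections to all activations in the previous layer, as in regular neural networks. Their activations can therefore be computed with a matrix multiplication followed by a bias offset. See the neural network section for more details.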
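The "matrix multiplication followed by a bias offset" can be written in a few lines. This is a minimal sketch with a hypothetical function name, `affine_forward_sketch`, and made-up input sizes; it assumes each sample is flattened into a row vector before the multiplication.

```python
import numpy as np


def affine_forward_sketch(x, w, b):
    """Fully connected forward pass: flatten each sample, multiply by w, add b."""
    n = x.shape[0]
    x_rows = x.reshape(n, -1)  # shape (N, D), where D is the product of the remaining dims
    return x_rows @ w + b      # shape (N, M)


# Assumed example: 2 samples of shape (3, 4, 4), so D = 48, and M = 5 outputs.
x = np.ones((2, 3, 4, 4))
w = np.full((48, 5), 0.5)
b = np.arange(5.0)
out = affine_forward_sketch(x, w, b)
print(out.shape)
print(out[0])
```

Every output neuron here sums over all 48 input values, in contrast to a convolutional neuron, which only sees its receptive field.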

Code

def svm_loss(x, y):
    """
    Computes the loss and gradient for multiclass SVM classification.

    Inputs:
    - x: Input data, of shape (N, C) where x[i, j] is the score for the jth
      class for the ith input.
    - y: Vector of labels, of shape (N,) where y[i] is the label for x[i] and
      0 <= y[i] < C

    Returns a tuple of:
    - loss: Scalar giving the loss
    - dx: Gradient of the loss with respect to x
    """
    N = x.shape[0]
    correct_class_scores = x[np.arange(N), y]
    # Hinge margins with delta = 1; the correct class does not contribute.
    margins = np.maximum(0, x - correct_class_scores[:, np.newaxis] + 1.0)
    margins[np.arange(N), y] = 0
    loss = np.sum(margins) / N
    # Number of classes that violate the margin for each sample.
    num_pos = np.sum(margins > 0, axis=1)
    dx = np.zeros_like(x)
    dx[margins > 0] = 1
    dx[np.arange(N), y] -= num_pos
    dx /= N
    return loss, dx

def softmax_loss(x, y):
    """
    Computes the loss and gradient for softmax classification.

    Inputs:
    - x: Input data, of shape (N, C) where x[i, j] is the score for the jth
      class for the ith input.
    - y: Vector of labels, of shape (N,) where y[i] is the label for x[i] and
      0 <= y[i] < C

    Returns a tuple of:
    - loss: Scalar giving the loss
    - dx: Gradient of the loss with respect to x
    """
    # Subtract the row-wise maximum for numerical stability.
    shifted_logits = x - np.max(x, axis=1, keepdims=True)
    Z = np.sum(np.exp(shifted_logits), axis=1, keepdims=True)
    log_probs = shifted_logits - np.log(Z)
    probs = np.exp(log_probs)
    N = x.shape[0]
    loss = -np.sum(log_probs[np.arange(N), y]) / N
    dx = probs.copy()
    dx[np.arange(N), y] -= 1
    dx /= N
    return loss, dx
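A quick sanity check for a softmax loss of this form: when all class scores are equal, every class receives probability 1/C, so the loss should equal log(C). The sketch below verifies this with a compact, loss-only re-statement of the computation. The name `softmax_loss_value` and the example labels are assumptions made for this sketch.

```python
import numpy as np


def softmax_loss_value(scores, labels):
    """Mean softmax cross-entropy loss; the gradient is omitted in this sketch."""
    shifted = scores - scores.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    n = scores.shape[0]
    return -log_probs[np.arange(n), labels].mean()


num_classes = 10
scores = np.zeros((4, num_classes))  # equal scores for every class
labels = np.array([0, 3, 5, 9])      # arbitrary example labels
loss = softmax_loss_value(scores, labels)
print(loss, np.log(num_classes))
```

A freshly initialized network with small weights produces nearly equal scores, so an initial loss close to log(10) on CIFAR-10 without regularization is a useful first check.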

Assignment code

import numpy as np

import matplotlib.pyplot as plt

from cs231n.classifiers.cnn import *

from cs231n.data_utils import get_CIFAR10_data

from cs231n.gradient_check import eval_numerical_gradient_array, eval_numerical_gradient

from cs231n.layers import *

from cs231n.fast_layers import *

from cs231n.solver import Solver

%matplotlib inline

plt.rcParams['figure.figsize'] = (10.0, 8.0) # set default size of plots

plt.rcParams['image.interpolation'] = 'nearest'

plt.rcParams['image.cmap'] = 'gray'

%load_ext autoreload

%autoreload 2

def rel_error(x, y):
    """ returns relative error """
    return np.max(np.abs(x - y) / (np.maximum(1e-8, np.abs(x) + np.abs(y))))

# Load the dataset
data = get_CIFAR10_data()
for k, v in data.items():
    print('%s: ' % k, v.shape)

# Test the convolutional layer implementation

x_shape = (2, 3, 4, 4)

w_shape = (3, 3, 4, 4)

x = np.linspace(-0.1, 0.5, num=np.prod(x_shape)).reshape(x_shape)

w = np.linspace(-0.2, 0.3, num=np.prod(w_shape)).reshape(w_shape)

b = np.linspace(-0.1, 0.2, num=3)

conv_param = {'stride': 2, 'pad': 1}

out, _ = conv_forward_naive(x, w, b, conv_param)

correct_out = np.array([[[[-0.08759809, -0.10987781],

[-0.18387192, -0.2109216 ]],

[[ 0.21027089, 0.21661097],

[ 0.22847626, 0.23004637]],

[[ 0.50813986, 0.54309974],

[ 0.64082444, 0.67101435]]],

[[[-0.98053589, -1.03143541],

[-1.19128892, -1.24695841]],

[[ 0.69108355, 0.66880383],

[ 0.59480972, 0.56776003]],

[[ 2.36270298, 2.36904306],

[ 2.38090835, 2.38247847]]]])

# Compare your output to ours; difference should be around e-8

print('Testing conv_forward_naive')

print('difference: ', rel_error(out, correct_out))

# Visualize the convolution on real images

from imageio import imread

from PIL import Image

kitten = imread('notebook_images/kitten.jpg')

puppy = imread('notebook_images/puppy.jpg')

# kitten is wide, and puppy is already square

d = kitten.shape[1] - kitten.shape[0]

kitten_cropped = kitten[:, d//2:-d//2, :]

img_size = 200 # Make this smaller if it runs too slow

resized_puppy = np.array(Image.fromarray(puppy).resize((img_size, img_size)))

resized_kitten = np.array(Image.fromarray(kitten_cropped).resize((img_size, img_size)))

x = np.zeros((2, 3, img_size, img_size))

x[0, :, :, :] = resized_puppy.transpose((2, 0, 1))

x[1, :, :, :] = resized_kitten.transpose((2, 0, 1))

# Set up a convolutional weights holding 2 filters, each 3x3

w = np.zeros((2, 3, 3, 3))

# The first filter converts the image to grayscale.

# Set up the red, green, and blue channels of the filter.

w[0, 0, :, :] = [[0, 0, 0], [0, 0.3, 0], [0, 0, 0]]

w[0, 1, :, :] = [[0, 0, 0], [0, 0.6, 0], [0, 0, 0]]

w[0, 2, :, :] = [[0, 0, 0], [0, 0.1, 0], [0, 0, 0]]

# Second filter detects horizontal edges in the blue channel.

w[1, 2, :, :] = [[1, 2, 1], [0, 0, 0], [-1, -2, -1]]

# Vector of biases. We don't need any bias for the grayscale

# filter, but for the edge detection filter we want to add 128

# to each output so that nothing is negative.

b = np.array([0, 128])

# Compute the result of convolving each input in x with each filter in w,

# offsetting by b, and storing the results in out.

out, _ = conv_forward_naive(x, w, b, {'stride': 1, 'pad': 1})

def imshow_no_ax(img, normalize=True):
    """ Tiny helper to show images as uint8 and remove axis labels """
    if normalize:
        img_max, img_min = np.max(img), np.min(img)
        img = 255.0 * (img - img_min) / (img_max - img_min)
    plt.imshow(img.astype('uint8'))
    plt.gca().axis('off')

# Show the original images and the results of the conv operation

plt.subplot(2, 3, 1)

imshow_no_ax(puppy, normalize=False)

plt.title('Original image')

plt.subplot(2, 3, 2)

imshow_no_ax(out[0, 0])

plt.title('Grayscale')

plt.subplot(2, 3, 3)

imshow_no_ax(out[0, 1])

plt.title('Edges')

plt.subplot(2, 3, 4)

imshow_no_ax(kitten_cropped, normalize=False)

plt.subplot(2, 3, 5)

imshow_no_ax(out[1, 0])

plt.subplot(2, 3, 6)

imshow_no_ax(out[1, 1])

plt.show()

# Test the backward pass of the convolutional layer

np.random.seed(231)

x = np.random.randn(4, 3, 5, 5)

w = np.random.randn(2, 3, 3, 3)

b = np.random.randn(2,)

dout = np.random.randn(4, 2, 5, 5)

conv_param = {'stride': 1, 'pad': 1}

dx_num = eval_numerical_gradient_array(lambda x: conv_forward_naive(x, w, b, conv_param)[0], x, dout)

dw_num = eval_numerical_gradient_array(lambda w: conv_forward_naive(x, w, b, conv_param)[0], w, dout)

db_num = eval_numerical_gradient_array(lambda b: conv_forward_naive(x, w, b, conv_param)[0], b, dout)

out, cache = conv_forward_naive(x, w, b, conv_param)

dx, dw, db = conv_backward_naive(dout, cache)

# Your errors should be around e-8 or less.

print('Testing conv_backward_naive function')

print('dx error: ', rel_error(dx, dx_num))

print('dw error: ', rel_error(dw, dw_num))

print('db error: ', rel_error(db, db_num))

# Check the max-pooling forward implementation

x_shape = (2, 3, 4, 4)

x = np.linspace(-0.3, 0.4, num=np.prod(x_shape)).reshape(x_shape)

pool_param = {'pool_width': 2, 'pool_height': 2, 'stride': 2}

out, _ = max_pool_forward_naive(x, pool_param)

correct_out = np.array([[[[-0.26315789, -0.24842105],

[-0.20421053, -0.18947368]],

[[-0.14526316, -0.13052632],

[-0.08631579, -0.07157895]],

[[-0.02736842, -0.01263158],

[ 0.03157895, 0.04631579]]],

[[[ 0.09052632, 0.10526316],

[ 0.14947368, 0.16421053]],

[[ 0.20842105, 0.22315789],

[ 0.26736842, 0.28210526]],

[[ 0.32631579, 0.34105263],

[ 0.38526316, 0.4 ]]]])

# Compare your output with ours. Difference should be on the order of e-8.

print('Testing max_pool_forward_naive function:')

print('difference: ', rel_error(out, correct_out))

# Test the backward pass of the max-pooling layer

np.random.seed(231)

x = np.random.randn(3, 2, 8, 8)

dout = np.random.randn(3, 2, 4, 4)

pool_param = {'pool_height': 2, 'pool_width': 2, 'stride': 2}

dx_num = eval_numerical_gradient_array(lambda x: max_pool_forward_naive(x, pool_param)[0], x, dout)

out, cache = max_pool_forward_naive(x, pool_param)

dx = max_pool_backward_naive(dout, cache)

# Your error should be on the order of e-12

print('Testing max_pool_backward_naive function:')

print('dx error: ', rel_error(dx, dx_num))

# Test the fast convolution layers, followed by the fast pooling layers

from cs231n.fast_layers import conv_forward_fast, conv_backward_fast

from time import time

np.random.seed(231)

x = np.random.randn(100, 3, 31, 31)

w = np.random.randn(25, 3, 3, 3)

b = np.random.randn(25,)

dout = np.random.randn(100, 25, 16, 16)

conv_param = {'stride': 2, 'pad': 1}

t0 = time()

out_naive, cache_naive = conv_forward_naive(x, w, b, conv_param)

t1 = time()

out_fast, cache_fast = conv_forward_fast(x, w, b, conv_param)

t2 = time()

print('Testing conv_forward_fast:')

print('Naive: %fs' % (t1 - t0))

print('Fast: %fs' % (t2 - t1))

print('Speedup: %fx' % ((t1 - t0) / (t2 - t1)))

print('Difference: ', rel_error(out_naive, out_fast))

t0 = time()

dx_naive, dw_naive, db_naive = conv_backward_naive(dout, cache_naive)

t1 = time()

dx_fast, dw_fast, db_fast = conv_backward_fast(dout, cache_fast)

t2 = time()

print('\nTesting conv_backward_fast:')

print('Naive: %fs' % (t1 - t0))

print('Fast: %fs' % (t2 - t1))

print('Speedup: %fx' % ((t1 - t0) / (t2 - t1)))

print('dx difference: ', rel_error(dx_naive, dx_fast))

print('dw difference: ', rel_error(dw_naive, dw_fast))

print('db difference: ', rel_error(db_naive, db_fast))

# Relative errors should be close to 0.0

from cs231n.fast_layers import max_pool_forward_fast, max_pool_backward_fast

np.random.seed(231)

x = np.random.randn(100, 3, 32, 32)

dout = np.random.randn(100, 3, 16, 16)

pool_param = {'pool_height': 2, 'pool_width': 2, 'stride': 2}

t0 = time()

out_naive, cache_naive = max_pool_forward_naive(x, pool_param)

t1 = time()

out_fast, cache_fast = max_pool_forward_fast(x, pool_param)

t2 = time()

print('Testing pool_forward_fast:')

print('Naive: %fs' % (t1 - t0))

print('fast: %fs' % (t2 - t1))

print('speedup: %fx' % ((t1 - t0) / (t2 - t1)))

print('difference: ', rel_error(out_naive, out_fast))

t0 = time()

dx_naive = max_pool_backward_naive(dout, cache_naive)

t1 = time()

dx_fast = max_pool_backward_fast(dout, cache_fast)

t2 = time()

print('\nTesting pool_backward_fast:')

print('Naive: %fs' % (t1 - t0))

print('fast: %fs' % (t2 - t1))

print('speedup: %fx' % ((t1 - t0) / (t2 - t1)))

print('dx difference: ', rel_error(dx_naive, dx_fast))

# Test the sandwich layers.

from cs231n.layer_utils import conv_relu_pool_forward, conv_relu_pool_backward

np.random.seed(231)

x = np.random.randn(2, 3, 16, 16)

w = np.random.randn(3, 3, 3, 3)

b = np.random.randn(3,)

dout = np.random.randn(2, 3, 8, 8)

conv_param = {'stride': 1, 'pad': 1}

pool_param = {'pool_height': 2, 'pool_width': 2, 'stride': 2}

out, cache = conv_relu_pool_forward(x, w, b, conv_param, pool_param)

dx, dw, db = conv_relu_pool_backward(dout, cache)

dx_num = eval_numerical_gradient_array(lambda x: conv_relu_pool_forward(x, w, b, conv_param, pool_param)[0], x, dout)

dw_num = eval_numerical_gradient_array(lambda w: conv_relu_pool_forward(x, w, b, conv_param, pool_param)[0], w, dout)

db_num = eval_numerical_gradient_array(lambda b: conv_relu_pool_forward(x, w, b, conv_param, pool_param)[0], b, dout)

# Relative errors should be around e-8 or less

print('Testing conv_relu_pool')

print('dx error: ', rel_error(dx_num, dx))

print('dw error: ', rel_error(dw_num, dw))

print('db error: ', rel_error(db_num, db))

from cs231n.layer_utils import conv_relu_forward, conv_relu_backward

np.random.seed(231)

x = np.random.randn(2, 3, 8, 8)

w = np.random.randn(3, 3, 3, 3)

b = np.random.randn(3,)

dout = np.random.randn(2, 3, 8, 8)

conv_param = {'stride': 1, 'pad': 1}

out, cache = conv_relu_forward(x, w, b, conv_param)

dx, dw, db = conv_relu_backward(dout, cache)

dx_num = eval_numerical_gradient_array(lambda x: conv_relu_forward(x, w, b, conv_param)[0], x, dout)

dw_num = eval_numerical_gradient_array(lambda w: conv_relu_forward(x, w, b, conv_param)[0], w, dout)

db_num = eval_numerical_gradient_array(lambda b: conv_relu_forward(x, w, b, conv_param)[0], b, dout)

# Relative errors should be around e-8 or less

print('Testing conv_relu:')

print('dx error: ', rel_error(dx_num, dx))

print('dw error: ', rel_error(dw_num, dw))

print('db error: ', rel_error(db_num, db))

model = ThreeLayerConvNet()

N = 50

X = np.random.randn(N, 3, 32, 32)

y = np.random.randint(10, size=N)

loss, grads = model.loss(X, y)

print('Initial loss (no regularization): ', loss)

model.reg = 0.5

loss, grads = model.loss(X, y)

print('Initial loss (with regularization): ', loss)

num_inputs = 2

input_dim = (3, 16, 16)

reg = 0.0

num_classes = 10

np.random.seed(231)

X = np.random.randn(num_inputs, *input_dim)

y = np.random.randint(num_classes, size=num_inputs)

model = ThreeLayerConvNet(num_filters=3, filter_size=3,

input_dim=input_dim, hidden_dim=7,

dtype=np.float64)

loss, grads = model.loss(X, y)

# Errors should be small, but correct implementations may have

# relative errors up to the order of e-2

for param_name in sorted(grads):
    f = lambda _: model.loss(X, y)[0]
    param_grad_num = eval_numerical_gradient(f, model.params[param_name], verbose=False, h=1e-6)
    # Compute the relative error once and reuse it in the report.
    e = rel_error(param_grad_num, grads[param_name])
    print('%s max relative error: %e' % (param_name, e))

np.random.seed(231)

num_train = 100

small_data = {

'X_train': data['X_train'][:num_train],

'y_train': data['y_train'][:num_train],

'X_val': data['X_val'],

'y_val': data['y_val'],

}

model = ThreeLayerConvNet(weight_scale=1e-2)

solver = Solver(model, small_data,

num_epochs=15, batch_size=50,

update_rule='adam',

optim_config={

'learning_rate': 1e-3,

},

verbose=True, print_every=1)

solver.train()

plt.subplot(2, 1, 1)

plt.plot(solver.loss_history, 'o')

plt.xlabel('iteration')

plt.ylabel('loss')

plt.subplot(2, 1, 2)

plt.plot(solver.train_acc_history, '-o')

plt.plot(solver.val_acc_history, '-o')

plt.legend(['train', 'val'], loc='upper left')

plt.xlabel('epoch')

plt.ylabel('accuracy')

plt.show()

model = ThreeLayerConvNet(weight_scale=0.001, hidden_dim=500, reg=0.001)

solver = Solver(model, data,

num_epochs=1, batch_size=50,

update_rule='adam',

optim_config={

'learning_rate': 1e-3,

},

verbose=True, print_every=20)

solver.train()

from cs231n.vis_utils import visualize_grid

grid = visualize_grid(model.params['W1'].transpose(0, 2, 3, 1))

plt.imshow(grid.astype('uint8'))

plt.axis('off')

plt.gcf().set_size_inches(5, 5)

plt.show()