Kafka: ------ Spring Boot Kafka integration: receiving data, sending data

- Add the dependencies

<parent>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-parent</artifactId>

<version>2.1.5.RELEASE</version>

</parent>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.kafka</groupId>

<artifactId>spring-kafka</artifactId>

</dependency>

<dependency>

<groupId>org.apache.kafka</groupId>

<artifactId>kafka-streams</artifactId>

<version>2.0.1</version>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

</dependencies>

Receiving data

application.properties

spring.kafka.bootstrap-servers=Centos:9092

spring.kafka.producer.retries=5

spring.kafka.producer.acks=all

spring.kafka.producer.batch-size=16384

spring.kafka.producer.buffer-memory=33554432

spring.kafka.producer.key-serializer=org.apache.kafka.common.serialization.StringSerializer

spring.kafka.producer.value-serializer=org.apache.kafka.common.serialization.StringSerializer

spring.kafka.producer.properties.enable.idempotence=true

spring.kafka.producer.transaction-id-prefix=transaction-id-

spring.kafka.consumer.group-id=group1

spring.kafka.consumer.auto-offset-reset=earliest

spring.kafka.consumer.enable-auto-commit=true

spring.kafka.consumer.auto-commit-interval=100

spring.kafka.consumer.properties.isolation.level=read_committed

spring.kafka.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer

spring.kafka.consumer.value-deserializer=org.apache.kafka.common.serialization.StringDeserializer
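Spring Boot translates most of these `spring.kafka.*` entries into native Kafka client settings. Anything under `...properties.*` is passed through unchanged. The sketch below writes out, as plain `java.util.Properties`, the client-level settings the configuration above should roughly correspond to. It illustrates Spring Boot's property mapping under that assumption and is not code Spring Boot runs. `KafkaClientConfigSketch` is a hypothetical name. The transactional ID is omitted because Spring appends its own suffix to `transaction-id-prefix` for each producer it creates.

```java
import java.util.Properties;

// Hypothetical helper: the native Kafka client settings that the spring.kafka.* entries
// above correspond to (keys are Kafka's own configuration names, values are Strings).
class KafkaClientConfigSketch {

    static Properties producerProps() {
        Properties p = new Properties();
        p.setProperty("bootstrap.servers", "Centos:9092");
        p.setProperty("retries", "5");
        p.setProperty("acks", "all");
        p.setProperty("batch.size", "16384");
        p.setProperty("buffer.memory", "33554432");
        p.setProperty("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        p.setProperty("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        // spring.kafka.producer.properties.* entries are passed through unchanged
        p.setProperty("enable.idempotence", "true");
        // transaction-id-prefix is not copied as-is: Spring appends a suffix per producer
        return p;
    }

    static Properties consumerProps() {
        Properties c = new Properties();
        c.setProperty("bootstrap.servers", "Centos:9092");
        c.setProperty("group.id", "group1");
        c.setProperty("auto.offset.reset", "earliest");
        c.setProperty("enable.auto.commit", "true");
        // Spring Boot reads auto-commit-interval as a Duration; a bare 100 means 100 ms
        c.setProperty("auto.commit.interval.ms", "100");
        c.setProperty("isolation.level", "read_committed");
        c.setProperty("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        c.setProperty("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        return c;
    }

    public static void main(String[] args) {
        producerProps().forEach((k, v) -> System.out.println("producer " + k + "=" + v));
        consumerProps().forEach((k, v) -> System.out.println("consumer " + k + "=" + v));
    }
}
```

The raw producer classes later in this article set the same kind of keys through the `ProducerConfig` constants.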

KafkaApplicationDemo class

package com.baizhi;

import org.springframework.boot.SpringApplication;

import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication

public class KafkaApplicationDemo {

public static void main(String[] args) {

SpringApplication.run(KafkaApplicationDemo.class,args);

}

}

KafkaListenerComponent class

package com.baizhi;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.springframework.kafka.annotation.KafkaListener;

import org.springframework.kafka.annotation.KafkaListeners;

import org.springframework.stereotype.Component;

@Component

public class KafkaListenerComponent {

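// Subscribe to every topic whose name matches the regular expression topic.* and print each record's value

@KafkaListeners(value = {@KafkaListener(topicPattern = "topic.*")})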

public void reciveRecored(ConsumerRecord<String,String> record){

System.out.println(record.value());

}

}
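`topicPattern` is a Java regular expression. As far as I know, the Kafka consumer checks each topic name against it with a full match (`Matcher.matches()`), not a substring search. Assuming that, the snippet below shows which topic names the listener above would pick up. The topic names and the class name `TopicPatternSketch` are made up for illustration. Newly created topics that match are only discovered on the consumer's next metadata refresh (`metadata.max.age.ms`, 5 minutes by default), so a brand-new topic may take a while to be consumed.

```java
import java.util.Arrays;
import java.util.List;
import java.util.regex.Pattern;
import java.util.stream.Collectors;

class TopicPatternSketch {

    // Same regular expression as @KafkaListener(topicPattern = "topic.*")
    static final Pattern TOPIC_PATTERN = Pattern.compile("topic.*");

    // Keeps the topic names that match the whole pattern (Matcher.matches, not find)
    static List<String> matchingTopics(List<String> topics) {
        return topics.stream()
                .filter(t -> TOPIC_PATTERN.matcher(t).matches())
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        // Hypothetical topic names in the cluster
        List<String> topics = Arrays.asList("topic01", "topic03", "order-topic01", "Topic02");
        System.out.println(matchingTopics(topics)); // [topic01, topic03]
    }
}
```

Matching is case-sensitive, so `Topic02` is not consumed. `order-topic01` is also skipped because the pattern must match from the first character.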

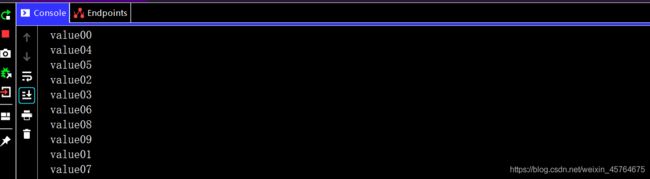

Writing data to a matching topic produces output like the screenshot.

Producer class that writes data to topic01:

package com.baizhi.jsy.kafkaapi;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.common.serialization.StringSerializer;

import java.text.DecimalFormat;

import java.util.Properties;

public class ProductKafka {

public static void main(String[] args) {

// Create the producer

Properties properties = new Properties();

properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG,"Centos:9092");

properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG,StringSerializer.class.getName());

// Tuning parameters

properties.put(ProducerConfig.BATCH_SIZE_CONFIG,1024*1024);// batch up to 1 MB of records per partition before sending

properties.put(ProducerConfig.LINGER_MS_CONFIG,500);// wait at most 0.5 s for a batch to fill before sending it

KafkaProducer<String, String> kafkaProducer = new KafkaProducer<String, String>(properties);

// Formats the loop index as two digits (00, 01, ..., 09) for keys and values

DecimalFormat decimalFormat = new DecimalFormat("00");

for(int i=0;i<10;i++){

String format = decimalFormat.format(i);

ProducerRecord<String, String> record = new ProducerRecord<>("topic01", "key" + format, "value" + format);

kafkaProducer.send(record);

}

kafkaProducer.flush();

kafkaProducer.close();

}

}
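`batch.size` and `linger.ms` decide when the producer actually ships the records passed to `send()`. The snippet below is a simplified model of that rule, not Kafka's `RecordAccumulator` code, and `BatchReadinessSketch` is a hypothetical name. In the model, a per-partition batch becomes sendable once it is full, once it has waited `linger.ms`, or when `flush()`/`close()` forces it out. The ten small records above never come close to filling a 1 MB batch, so the explicit `flush()` sends them immediately instead of waiting up to 500 ms.

```java
// Simplified model (an assumption, not Kafka's RecordAccumulator code) of when a
// per-partition batch leaves the producer.
class BatchReadinessSketch {

    static boolean isSendable(long batchBytes, long waitedMs, boolean flushing,
                              long batchSize, long lingerMs) {
        boolean full = batchBytes >= batchSize;  // batch.size reached
        boolean lingered = waitedMs >= lingerMs; // linger.ms elapsed
        return full || lingered || flushing;     // flush() or close() forces the send
    }

    public static void main(String[] args) {
        long batchSize = 1024 * 1024; // BATCH_SIZE_CONFIG in the producer above
        long lingerMs = 500;          // LINGER_MS_CONFIG in the producer above
        // A few hundred bytes of small records, 10 ms after the first send: still waiting
        System.out.println(isSendable(300, 10, false, batchSize, lingerMs));  // false
        // Same batch once flush() is called: sent right away
        System.out.println(isSendable(300, 10, true, batchSize, lingerMs));   // true
        // Same batch after 500 ms without flush(): sent because linger.ms has elapsed
        System.out.println(isSendable(300, 500, false, batchSize, lingerMs)); // true
    }
}
```

A large `linger.ms` therefore trades latency for throughput: records wait longer so that more of them share one request.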

Sending data

This example reads data from topic01 and sends it to topic03.

- Entry class

package com.baizhi;

import org.springframework.boot.SpringApplication;

import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication

public class KafkaApplicationDemo {

public static void main(String[] args) {

SpringApplication.run(KafkaApplicationDemo.class,args);

}

}

- Class that reads topic01

It reads records from topic01, then calls a business (service-layer) method, passing the key and value it read as arguments.

package com.baizhi;

import com.baizhi.service.IOrderService;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.kafka.annotation.KafkaListener;

import org.springframework.kafka.annotation.KafkaListeners;

import org.springframework.stereotype.Component;

@Component

public class KafkaListenerComponent {

@Autowired

IOrderService iOrderService;

@KafkaListeners(value = {@KafkaListener(topicPattern = "topic01")})

public void reciveRecored(ConsumerRecord<String,String> record){

//System.out.println(record.value());

iOrderService.saveOrder(record.key(),record.value()+"JiangSi Yu");

}

}

- Service interface

Its parameters are the record's key and value.

package com.baizhi.service;

public interface IOrderService {

public void saveOrder(String id,Object message);

}

- Service implementation

This class writes the data to topic03. The key and value read from topic01 arrive as its arguments.

package com.baizhi.service.impl;

import com.baizhi.service.IOrderService;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.kafka.core.KafkaTemplate;

import org.springframework.stereotype.Service;

import org.springframework.transaction.annotation.Transactional;

@Service

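// With spring.kafka.producer.transaction-id-prefix set, the KafkaTemplate is transactional
// and its sends must run inside a transaction; @Transactional starts one using the
// Kafka transaction manager that Spring Boot auto-configures for that property

@Transactional

public class OrderService implements IOrderService {

@Autowired

private KafkaTemplate<String, Object> kafkaTemplate;

@Override

public void saveOrder(String id, Object message) {

// Do any business processing here, then send the result to topic03

kafkaTemplate.send(new ProducerRecord<>("topic03", id, message));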

}

}
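The listener-to-service hand-off above can be checked without a broker by calling the listener's logic against a fake `IOrderService`. This is a hypothetical sketch. `RecordingOrderService` stands in for `OrderService` and collects what would be sent to topic03 instead of calling `KafkaTemplate`. `ListenerLogicSketch.onRecord` repeats the body of `reciveRecored()` on a plain key and value rather than a `ConsumerRecord`. The interface is copied from above so the file compiles on its own.

```java
import java.util.ArrayList;
import java.util.List;

// Copy of the IOrderService interface above, so this sketch compiles on its own
interface IOrderService {
    void saveOrder(String id, Object message);
}

// Hypothetical test double: collects what OrderService would send to topic03
class RecordingOrderService implements IOrderService {
    final List<String> sentToTopic03 = new ArrayList<>();

    @Override
    public void saveOrder(String id, Object message) {
        sentToTopic03.add(id + "=" + message);
    }
}

class ListenerLogicSketch {

    // Same logic as reciveRecored(): pass the key and the suffixed value to the service
    static void onRecord(IOrderService service, String key, String value) {
        service.saveOrder(key, value + "JiangSi Yu");
    }

    public static void main(String[] args) {
        RecordingOrderService fake = new RecordingOrderService();
        onRecord(fake, "Transaction", "Test");
        System.out.println(fake.sentToTopic03); // [Transaction=TestJiangSi Yu]
    }
}
```

The suffix is appended with no separator, so a value `Test` arrives in topic03 as `TestJiangSi Yu`.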

The producer class that sends test data to topic01 is as follows:

package com.baizhi.jsy.transaction;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.common.errors.ProducerFencedException;

import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

import java.util.UUID;

public class ProductKafkaTransactionnOnly {

public static void main(String[] args) {

// Create the producer

Properties properties = new Properties();

properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "Centos:9092");

properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

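// Tuning parameters

properties.put(ProducerConfig.BATCH_SIZE_CONFIG, 1024 * 1024);// batch up to 1 MB of records per partition before sending

properties.put(ProducerConfig.LINGER_MS_CONFIG, 500);// wait at most 0.5 s for a batch to fill before sending it

// The idempotent producer requires acks=all (-1)

properties.put(ProducerConfig.ACKS_CONFIG,"-1");

// Maximum time to wait for a broker response to a request, in ms

properties.put(ProducerConfig.REQUEST_TIMEOUT_MS_CONFIG,5000);

// Number of retries after a failed send

properties.put(ProducerConfig.RETRIES_CONFIG,3);

// Enable idempotence so that retries do not write duplicate records

properties.put(ProducerConfig.ENABLE_IDEMPOTENCE_CONFIG,true);

// Enable transactions: a transactional producer needs a transactional.id

properties.put(ProducerConfig.TRANSACTIONAL_ID_CONFIG,"transaction-id"+ UUID.randomUUID());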

KafkaProducer<String, String> kafkaProducer = new KafkaProducer<String, String>(properties);

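// Initialize transactions; must be called once before beginTransaction()

kafkaProducer.initTransactions();

try {

// Begin the transaction

kafkaProducer.beginTransaction();

for (int i=0;i<5;i++){

ProducerRecord<String, String> record = new ProducerRecord<>(

"topic01",

"Transaction",

"Test springboot send data");

// No per-record flush() needed: commitTransaction() flushes unsent records itself

kafkaProducer.send(record);

if (i==3){

//Integer b=i/0;// uncomment to throw an exception mid-transaction and test the rollback

}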

}

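// Commit the transaction

kafkaProducer.commitTransaction();

} catch (ProducerFencedException e) {

// Another producer with the same transactional.id has taken over; this producer
// cannot recover, so the only option is to close it (no abortTransaction())

e.printStackTrace();

} catch (Exception e) {

// Any other error (e.g. the deliberate i/0 above): abort so the records are never committed

kafkaProducer.abortTransaction();

e.printStackTrace();

} finally {

kafkaProducer.close();

}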

}

}
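The comments in the producer above note that idempotence needs `acks` set to -1. As far as I know, the Kafka 2.x producer rejects an idempotent configuration at construction time with a `ConfigException` unless `acks` is `all`/`-1`, `retries` is greater than 0 and `max.in.flight.requests.per.connection` is at most 5. A producer with a `transactional.id` must also be idempotent. The checker below is a hypothetical pre-flight helper, `IdempotenceConfigCheck`, that mirrors those rules on plain string keys. It is not part of the Kafka API.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

// Hypothetical pre-flight check mirroring the producer's idempotence rules.
// Only settings that are present are checked; absent ones are left to the producer's defaults.
// Values must be Strings: Properties.getProperty ignores non-String values such as an Integer.
class IdempotenceConfigCheck {

    static List<String> problems(Properties p) {
        List<String> errors = new ArrayList<>();
        boolean transactional = p.getProperty("transactional.id") != null;
        String idempotence = p.getProperty("enable.idempotence");
        if (transactional && "false".equals(idempotence)) {
            errors.add("transactional.id requires enable.idempotence=true");
        }
        if (!transactional && !"true".equals(idempotence)) {
            return errors; // not an idempotent producer: nothing else to check
        }
        String acks = p.getProperty("acks");
        if (acks != null && !acks.trim().equalsIgnoreCase("all") && !acks.trim().equals("-1")) {
            errors.add("acks must be all (-1)");
        }
        String retries = p.getProperty("retries");
        if (retries != null && Integer.parseInt(retries.trim()) <= 0) {
            errors.add("retries must be greater than 0");
        }
        String inFlight = p.getProperty("max.in.flight.requests.per.connection");
        if (inFlight != null && Integer.parseInt(inFlight.trim()) > 5) {
            errors.add("max.in.flight.requests.per.connection must be at most 5");
        }
        return errors;
    }

    public static void main(String[] args) {
        Properties p = new Properties();
        p.setProperty("acks", "-1");
        p.setProperty("retries", "3");
        p.setProperty("enable.idempotence", "true");
        p.setProperty("transactional.id", "transaction-id-demo");
        System.out.println(problems(p)); // []
        p.setProperty("acks", "1");
        System.out.println(problems(p)); // [acks must be all (-1)]
    }
}
```

The values in `main` mirror the producer above, written as strings. Note that the article's own `Properties` object stores `3` and `5000` as `Integer`, which `getProperty` would not see.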

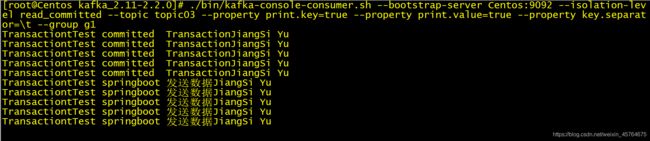

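Create topic03.

Result of reading topic03:

A listener can also forward the data it reads directly to another topic: annotate the method with @SendTo and simply return the new value.

This suits business scenarios where the data needs no extra processing and only has to be passed on to another topic.

The following listener reads from topic02 and writes to topic03: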

package com.baizhi;

import com.baizhi.service.IOrderService;

import org.apache.kafka.clients.consumer.ConsumerRecord;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.kafka.annotation.KafkaListener;

import org.springframework.kafka.annotation.KafkaListeners;

import org.springframework.messaging.handler.annotation.SendTo;

import org.springframework.stereotype.Component;

@Component

public class KafkaListenerComponent {

@Autowired

IOrderService iOrderService;

@KafkaListeners(value = {@KafkaListener(topicPattern = "topic01")})

public void reciveRecored(ConsumerRecord<String,String> record){

//System.out.println(record.value());

iOrderService.saveOrder(record.key(),record.value()+"JiangSi Yu");

}

// The value this method returns is published to topic03 by the listener container

@KafkaListeners(value = {@KafkaListener(topicPattern = "topic02")})

@SendTo("topic03")

public String reciveRecored002(ConsumerRecord<String,String> record){

return record.key()+"\tfrom topic02 to topic03\t"+record.value();

}

}
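With `@SendTo("topic03")`, the container publishes the method's return value to topic03 through its reply template. As far as I know, with Spring Boot's default listener container factory, that template is the auto-configured `KafkaTemplate`. The value transformation itself is plain Java and can be checked in isolation. The hypothetical `SendToSketch.forwardValue` helper below repeats the expression from `reciveRecored002`, using a made-up key and value.

```java
class SendToSketch {

    // Same expression as reciveRecored002(): key, a fixed tab-separated marker, then the value
    static String forwardValue(String key, String value) {
        return key + "\tfrom topic02 to topic03\t" + value;
    }

    public static void main(String[] args) {
        // Sample key and value
        System.out.println(forwardValue("Transaction", "Test"));
        // prints: Transaction<TAB>from topic02 to topic03<TAB>Test
    }
}
```

Because the original key is folded into the value string, it remains visible when reading topic03.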

Producer that sends data to topic02:

package com.baizhi.jsy.transaction;

import org.apache.kafka.clients.producer.KafkaProducer;

import org.apache.kafka.clients.producer.ProducerConfig;

import org.apache.kafka.clients.producer.ProducerRecord;

import org.apache.kafka.common.errors.ProducerFencedException;

import org.apache.kafka.common.serialization.StringSerializer;

import java.util.Properties;

import java.util.UUID;

public class ProductKafkaTransactionnOnly {

public static void main(String[] args) {

// Create the producer

Properties properties = new Properties();

properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG, "Centos:9092");

properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

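// Tuning parameters

properties.put(ProducerConfig.BATCH_SIZE_CONFIG, 1024 * 1024);// batch up to 1 MB of records per partition before sending

properties.put(ProducerConfig.LINGER_MS_CONFIG, 500);// wait at most 0.5 s for a batch to fill before sending it

// The idempotent producer requires acks=all (-1)

properties.put(ProducerConfig.ACKS_CONFIG,"-1");

// Maximum time to wait for a broker response to a request, in ms

properties.put(ProducerConfig.REQUEST_TIMEOUT_MS_CONFIG,5000);

// Number of retries after a failed send

properties.put(ProducerConfig.RETRIES_CONFIG,3);

// Enable idempotence so that retries do not write duplicate records

properties.put(ProducerConfig.ENABLE_IDEMPOTENCE_CONFIG,true);

// Enable transactions: a transactional producer needs a transactional.id

properties.put(ProducerConfig.TRANSACTIONAL_ID_CONFIG,"transaction-id"+ UUID.randomUUID());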

KafkaProducer<String, String> kafkaProducer = new KafkaProducer<String, String>(properties);

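// Initialize transactions; must be called once before beginTransaction()

kafkaProducer.initTransactions();

try {

// Begin the transaction

kafkaProducer.beginTransaction();

for (int i=0;i<5;i++){

ProducerRecord<String, String> record = new ProducerRecord<>(

"topic02",

"Transaction",

"Test springboot send data");

// No per-record flush() needed: commitTransaction() flushes unsent records itself

kafkaProducer.send(record);

if (i==3){

//Integer b=i/0;// uncomment to throw an exception mid-transaction and test the rollback

}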

}

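// Commit the transaction

kafkaProducer.commitTransaction();

} catch (ProducerFencedException e) {

// Another producer with the same transactional.id has taken over; this producer
// cannot recover, so the only option is to close it (no abortTransaction())

e.printStackTrace();

} catch (Exception e) {

// Any other error (e.g. the deliberate i/0 above): abort so the records are never committed

kafkaProducer.abortTransaction();

e.printStackTrace();

} finally {

kafkaProducer.close();

}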

}

}
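The consumer configuration in application.properties sets `isolation.level=read_committed`, and that setting is what makes the commented-out `i/0` experiment observable. Records from an aborted transaction are still written to the log. A `read_committed` consumer skips them, while a `read_uncommitted` consumer (Kafka's default) still returns them. The toy model below, `TransactionVisibilitySketch`, illustrates only that visibility rule. It is an in-memory assumption, not how the broker stores transaction markers, and it ignores partitions, offsets and concurrent transactions.

```java
import java.util.ArrayList;
import java.util.List;

// Toy in-memory model of transactional visibility (illustration only, not broker internals)
class TransactionVisibilitySketch {

    enum State { OPEN, COMMITTED, ABORTED }

    static final class Entry {
        final String value;
        State state = State.OPEN;
        Entry(String value) { this.value = value; }
    }

    private final List<Entry> log = new ArrayList<>();      // everything ever written
    private final List<Entry> current = new ArrayList<>();  // records of the open transaction

    // send(): the record reaches the log immediately, before commit or abort
    void send(String value) {
        Entry e = new Entry(value);
        log.add(e);
        current.add(e);
    }

    void commit() { finish(State.COMMITTED); }

    void abort() { finish(State.ABORTED); }

    private void finish(State outcome) {
        for (Entry e : current) {
            e.state = outcome;
        }
        current.clear();
    }

    // read_committed returns only committed records; read_uncommitted returns everything
    List<String> read(boolean readCommitted) {
        List<String> out = new ArrayList<>();
        for (Entry e : log) {
            if (!readCommitted || e.state == State.COMMITTED) {
                out.add(e.value);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        TransactionVisibilitySketch topic = new TransactionVisibilitySketch();
        topic.send("a");
        topic.send("b");
        topic.abort();   // like abortTransaction() after the i/0 exception
        topic.send("c");
        topic.commit();
        System.out.println(topic.read(true));  // [c]
        System.out.println(topic.read(false)); // [a, b, c]
    }
}
```

In the real setup, the listeners in this article would therefore never see the five records from an aborted run. A consumer left at the default `read_uncommitted` would.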