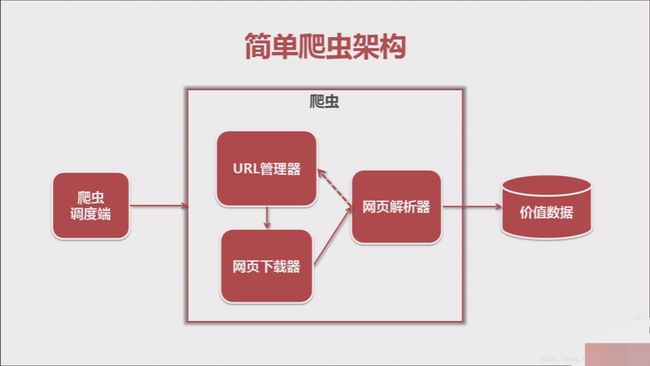

A simple web crawler in Python: the main logic

    # coding: utf8
    import url_manager, html_downloader, html_parser, html_outputer


    class SpiderMain(object):
        def __init__(self):
            self.urls = url_manager.UrlManager()
            self.downloader = html_downloader.HtmlDownloader()
            self.parser = html_parser.HtmlParser()
            self.outputer = html_outputer.HtmlOutputer()

        def craw(self, root_url):
            count = 1
            # Seed the set of URLs to crawl with the first URL.
            self.urls.add_new_url(root_url)
            # Keep going while there are URLs that have not been crawled yet.
            while self.urls.has_new_url():
                try:
                    # Take the next URL to crawl.
                    new_url = self.urls.get_new_url()
                    print('craw %d : %s' % (count, new_url))
                    # Download the content of that page.
                    html_cont = self.downloader.download(new_url)
                    # Parse the page: queue the newly found URLs and collect the data we want.
                    new_urls, new_data = self.parser.parse(new_url, html_cont)
                    self.urls.add_new_urls(new_urls)
                    self.outputer.collect_date(new_data)
                    # Stop after 1000 pages.
                    if count == 1000:
                        break
                    count = count + 1
                except Exception as e:
                    print('craw failed: %s' % e)
            # Write the collected data to an HTML file.
            self.outputer.output_html()


    if __name__ == "__main__":
        root_url = 'https://baike.baidu.com/item/Python/407313'
        obj_spider = SpiderMain()
        obj_spider.craw(root_url)

URL manager: url_manager.py

    class UrlManager(object):
        def __init__(self):
            self.new_urls = set()  # URLs waiting to be crawled
            self.old_urls = set()  # URLs already handed out

        def add_new_url(self, url):
            if url is None:
                return
            if url not in self.new_urls and url not in self.old_urls:
                self.new_urls.add(url)

        def add_new_urls(self, urls):
            if urls is None or len(urls) == 0:
                return
            for url in urls:
                self.add_new_url(url)

        def has_new_url(self):
            return len(self.new_urls) != 0

        def get_new_url(self):
            new_url = self.new_urls.pop()
            self.old_urls.add(new_url)
            return new_url
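
One detail of `UrlManager` worth noting: `set.pop()` removes an arbitrary element, so pages are not visited in any particular order. A minimal sketch, assuming first-in, first-out (breadth-first) order is wanted, keeps a `deque` next to a set of seen URLs. The class name `FifoUrlManager` and the sample paths are hypothetical and not part of the original code.

```python
from collections import deque


class FifoUrlManager(object):
    """Hypothetical variant of UrlManager that hands URLs out in insertion order."""

    def __init__(self):
        self.queue = deque()  # URLs waiting to be crawled, oldest first
        self.seen = set()     # every URL ever added, crawled or not

    def add_new_url(self, url):
        if url is None or url in self.seen:
            return
        self.seen.add(url)
        self.queue.append(url)

    def add_new_urls(self, urls):
        for url in urls or []:
            self.add_new_url(url)

    def has_new_url(self):
        return len(self.queue) != 0

    def get_new_url(self):
        return self.queue.popleft()


manager = FifoUrlManager()
manager.add_new_urls(['/item/a', '/item/b', '/item/a'])  # the duplicate is ignored
first = manager.get_new_url()
manager.add_new_url('/item/a')  # already seen, so it is not queued again
second = manager.get_new_url()
print(first, second, manager.has_new_url())
```

Because it exposes the same four methods, a class like this could be swapped in for `UrlManager` without touching the main loop.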

Page downloader: html_downloader.py

    import urllib.request


    class HtmlDownloader(object):
        def download(self, url):
            if url is None:
                return None
            # In Python 3, urlopen lives in urllib.request.
            response = urllib.request.urlopen(url)
            if response.getcode() != 200:
                return None
            return response.read()
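
With its default handlers, `urllib.request.urlopen` raises `urllib.error.HTTPError` for 4xx and 5xx responses instead of returning them, so the status check above seldom gets a chance to return `None`. A minimal sketch of a more defensive download, assuming a failed fetch should simply yield `None`; the function name `safe_download` and the 10-second timeout are illustrative choices, not part of the original code.

```python
import urllib.error
import urllib.request


def safe_download(url, timeout=10):
    """Return the page body as bytes, or None if the URL cannot be fetched."""
    if url is None:
        return None
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            if response.getcode() != 200:
                return None
            return response.read()
    except (urllib.error.URLError, ValueError):
        # URLError covers HTTPError and connection failures;
        # ValueError is raised for strings that are not valid URLs.
        return None


print(safe_download(None))
print(safe_download('not-a-url'))
```

Both calls return `None` without touching the network, which makes the failure path easy to check.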

Page parser: html_parser.py

    from bs4 import BeautifulSoup
    import re
    import urllib.parse


    class HtmlParser(object):
        def parse(self, page_url, html_cont):
            if page_url is None or html_cont is None:
                # Return an empty pair so the caller can still unpack two values.
                return set(), None
            soup = BeautifulSoup(html_cont, 'html.parser', from_encoding='utf-8')
            # Author's question: is the underscore needed to call your own method?
            # It is not; a leading underscore only marks a method as internal by convention.
            new_urls = self._get_new_urls(page_url, soup)
            new_data = self._get_new_data(page_url, soup)
            return new_urls, new_data

        def _get_new_urls(self, page_url, soup):
            new_urls = set()
            # Entry links look like /item/Python/407313
            links = soup.find_all('a', href=re.compile(r"/item/"))
            for link in links:
                new_url = link['href']
                # Resolve the (usually relative) href against the current page URL.
                new_full_url = urllib.parse.urljoin(page_url, new_url)
                new_urls.add(new_full_url)
            return new_urls

        def _get_new_data(self, page_url, soup):
            res_data = {}
            # URL of the page
            res_data['url'] = page_url
            # Title: the <h1> inside <dd class="lemmaWgt-lemmaTitle-title">
            title_node = soup.find('dd', class_='lemmaWgt-lemmaTitle-title').find('h1')
            res_data['title'] = title_node.get_text()
            # Summary: the <div class="lemma-summary">
            summary_node = soup.find('div', class_='lemma-summary')
            res_data['summary'] = summary_node.get_text()
            return res_data
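
The parser relies on `urllib.parse.urljoin` to turn each `href` into a full URL. A small sketch of what that call does with a site-relative path and with an already absolute link; the helper name `resolve_links` and the sample hrefs are made up for illustration.

```python
from urllib.parse import urljoin


def resolve_links(page_url, hrefs):
    """Turn href values found on page_url into a set of absolute URLs."""
    return {urljoin(page_url, href) for href in hrefs}


page_url = 'https://baike.baidu.com/item/Python/407313'
links = resolve_links(page_url, [
    '/item/Example',               # site-relative path: host comes from page_url
    '/item/Example',               # duplicate: collapsed by the set
    'https://example.com/item/x',  # absolute URL: returned unchanged
])
print(sorted(links))
```

Collecting the results in a set removes repeated links on the same page before they ever reach the URL manager.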

Writing out the collected data: html_outputer.py

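    class HtmlOutputer(object):
        def __init__(self):
            self.datas = []

        def collect_date(self, data):
            if data is None:
                return
            self.datas.append(data)

        def output_html(self):
            # Assumed layout: one table row per page. The markup inside the
            # original write() calls is not recoverable from the listing.
            # Open as UTF-8 text so non-ASCII titles and summaries are written correctly.
            with open('output.html', 'w', encoding='utf-8') as fout:
                fout.write("<html>")
                fout.write("<head><meta charset='utf-8'></head>")
                fout.write("<body>")
                fout.write("<table>")
                for data in self.datas:
                    fout.write("<tr>")
                    fout.write("<td>%s</td>" % data['url'])
                    fout.write("<td>%s</td>" % data['title'])
                    fout.write("<td>%s</td>" % data['summary'])
                    fout.write("</tr>")
                fout.write("</table>")
                fout.write("</body>")
                fout.write("</html>")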
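
Text scraped from a page can contain characters such as `<` or `&`, which a browser would interpret as markup when the output file is opened. A minimal sketch, assuming each record is written as one table row, that escapes every field with the standard library's `html.escape`; the helper name `render_row` and the sample record are hypothetical.

```python
import html


def render_row(data):
    """Build one table row, escaping each field so it is treated as text."""
    cells = (data['url'], data['title'], data['summary'])
    return "<tr>%s</tr>" % "".join("<td>%s</td>" % html.escape(c) for c in cells)


row = render_row({
    'url': 'https://example.com/?a=1&b=2',
    'title': 'a < b',
    'summary': 'plain text',
})
print(row)
```

The `&` in the URL comes out as `&amp;` and the `<` in the title as `&lt;`, so the row displays the original text instead of breaking the table.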