import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import sklearn as sk

%matplotlib inline

import datetime

import os

import seaborn as sns

from datetime import date

from sklearn.preprocessing import LabelEncoder

from sklearn.preprocessing import StandardScaler

from sklearn.preprocessing import LabelBinarizer

import pickle

from sklearn.metrics import *

from sklearn.model_selection import *

train = pd.read_csv("airbnb/train_users_2.csv")

test = pd.read_csv("airbnb/test_users.csv")

print('the columns name of training dataset:\n',train.columns)

print('the columns name of test dataset:\n',test.columns)

the columns name of training dataset:

Index(['id', 'date_account_created', 'timestamp_first_active',

'date_first_booking', 'gender', 'age', 'signup_method', 'signup_flow',

'language', 'affiliate_channel', 'affiliate_provider',

'first_affiliate_tracked', 'signup_app', 'first_device_type',

'first_browser', 'country_destination'],

dtype='object')

the columns name of test dataset:

Index(['id', 'date_account_created', 'timestamp_first_active',

'date_first_booking', 'gender', 'age', 'signup_method', 'signup_flow',

'language', 'affiliate_channel', 'affiliate_provider',

'first_affiliate_tracked', 'signup_app', 'first_device_type',

'first_browser'],

dtype='object')

print(train.info())

RangeIndex: 213451 entries, 0 to 213450

Data columns (total 16 columns):

id 213451 non-null object

date_account_created 213451 non-null object

timestamp_first_active 213451 non-null int64

date_first_booking 88908 non-null object

gender 213451 non-null object

age 125461 non-null float64

signup_method 213451 non-null object

signup_flow 213451 non-null int64

language 213451 non-null object

affiliate_channel 213451 non-null object

affiliate_provider 213451 non-null object

first_affiliate_tracked 207386 non-null object

signup_app 213451 non-null object

first_device_type 213451 non-null object

first_browser 213451 non-null object

country_destination 213451 non-null object

dtypes: float64(1), int64(2), object(13)

memory usage: 26.1+ MB

None

Feature analysis:

print(train.date_account_created.head())

0 2010-06-28

1 2011-05-25

2 2010-09-28

3 2011-12-05

4 2010-09-14

Name: date_account_created, dtype: object

print(train.date_account_created.value_counts().head())

print(train.date_account_created.value_counts().tail())

2014-05-13 674

2014-06-24 670

2014-06-25 636

2014-05-20 632

2014-05-14 622

Name: date_account_created, dtype: int64

2010-04-24 1

2010-03-09 1

2010-01-01 1

2010-06-18 1

2010-01-02 1

Name: date_account_created, dtype: int64

print(train.date_account_created.describe())

count 213451

unique 1634

top 2014-05-13

freq 674

Name: date_account_created, dtype: object

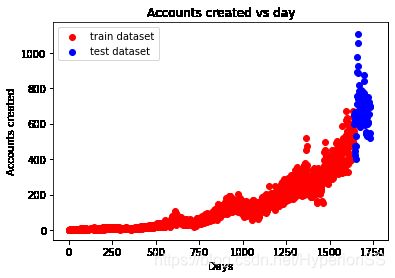

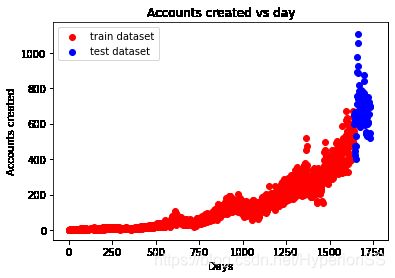

dac_train = train.date_account_created.value_counts()

dac_test = test.date_account_created.value_counts()

dac_train_date = pd.to_datetime(train.date_account_created.value_counts().index)

dac_test_date = pd.to_datetime(test.date_account_created.value_counts().index)

dac_train_day = dac_train_date - dac_train_date.min()

dac_test_day = dac_test_date - dac_train_date.min()

plt.scatter(dac_train_day.days, dac_train.values, color = 'r', label = 'train dataset')

plt.scatter(dac_test_day.days, dac_test.values, color = 'b', label = 'test dataset')

plt.title("Accounts created vs day")

plt.xlabel("Days")

plt.ylabel("Accounts created")

plt.legend(loc = 'upper left')

print(train.timestamp_first_active.head())

0 20090319043255

1 20090523174809

2 20090609231247

3 20091031060129

4 20091208061105

Name: timestamp_first_active, dtype: int64

print(train.timestamp_first_active.value_counts().unique())

[1]

# Parse the 14-digit YYYYMMDDHHMMSS integers into datetime objects.
tfa_train_dt = train.timestamp_first_active.astype(str).apply(
    lambda x: datetime.datetime(int(x[:4]), int(x[4:6]), int(x[6:8]),
                                int(x[8:10]), int(x[10:12]), int(x[12:])))

print(tfa_train_dt.describe())

count 213451

unique 213451

top 2013-07-01 05:26:34

freq 1

first 2009-03-19 04:32:55

last 2014-06-30 23:58:24

Name: timestamp_first_active, dtype: object
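The per-row lambda above can be slow on a couple of hundred thousand rows. As a minimal sketch, assuming every value is a 14-digit integer shaped like YYYYMMDDHHMMSS, the same parsing can probably be done in one vectorized call. The `raw` series below is toy data standing in for `train.timestamp_first_active`, not values read from the file.

```python
import pandas as pd

# Toy stand-in for train.timestamp_first_active (assumed format: YYYYMMDDHHMMSS).
raw = pd.Series([20090319043255, 20090523174809, 20140630235824])

# Convert to strings, then let pandas parse the whole column with an explicit format.
parsed = pd.to_datetime(raw.astype(str), format="%Y%m%d%H%M%S")

print(parsed.dt.year.tolist())
print(parsed.min(), parsed.max())
```

The result is a datetime64 series, so `.dt.year`, `.dt.month` and similar accessors are available without further Python loops.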

print(train.date_first_booking.describe())

print(test.date_first_booking.describe())

count 88908

unique 1976

top 2014-05-22

freq 248

Name: date_first_booking, dtype: object

count 0.0

mean NaN

std NaN

min NaN

25% NaN

50% NaN

75% NaN

max NaN

Name: date_first_booking, dtype: float64

print(train.age.value_counts().head())

30.0 6124

31.0 6016

29.0 5963

28.0 5939

32.0 5855

Name: age, dtype: int64

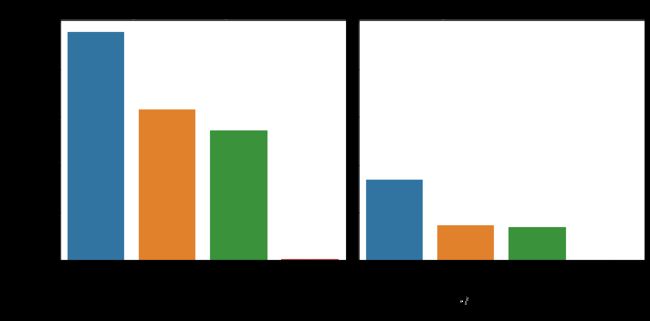

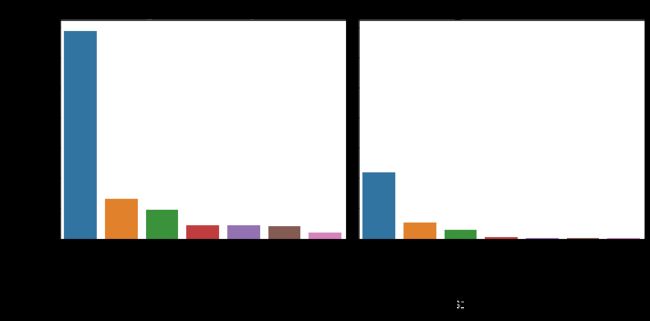

age_train =[train[train.age.isnull()].age.shape[0],

train.query('age < 15').age.shape[0],

train.query("age >= 15 & age <= 90").age.shape[0],

train.query('age > 90').age.shape[0]]

age_test = [test[test.age.isnull()].age.shape[0],

test.query('age < 15').age.shape[0],

test.query("age >= 15 & age <= 90").age.shape[0],

test.query('age > 90').age.shape[0]]

columns = ['Null', 'age < 15', 'age', 'age > 90']

fig, (ax1,ax2) = plt.subplots(1,2,sharex=True, sharey = True,figsize=(10,5))

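# Keyword arguments keep the call valid on seaborn versions that no longer accept positional x/y.
sns.barplot(x=columns, y=age_train, ax=ax1)

sns.barplot(x=columns, y=age_test, ax=ax2)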

ax1.set_title('training dataset')

ax2.set_title('test dataset')

ax1.set_ylabel('counts')

def feature_barplot(feature, df_train = train, df_test = test, figsize=(10,5), rot = 90, saveimg = False):
    # Side-by-side bar plots of the value counts of one feature in the training and test sets.
    feat_train = df_train[feature].value_counts()
    feat_test = df_test[feature].value_counts()

    fig_feature, (axis1,axis2) = plt.subplots(1,2,sharex=True, sharey = True, figsize = figsize)
    sns.barplot(x=feat_train.index.values, y=feat_train.values, ax = axis1)
    sns.barplot(x=feat_test.index.values, y=feat_test.values, ax = axis2)

    # The x axis is shared, so both panels reuse the tick labels of the first panel.
    axis1.set_xticklabels(axis1.xaxis.get_majorticklabels(), rotation = rot)
    axis2.set_xticklabels(axis1.xaxis.get_majorticklabels(), rotation = rot)

    axis1.set_title(feature + ' of training dataset')
    axis2.set_title(feature + ' of test dataset')
    axis1.set_ylabel('Counts')
    plt.tight_layout()

    if saveimg:
        figname = feature + ".png"
        fig_feature.savefig(figname, dpi = 75)

feature_barplot('gender', saveimg = True)

feature_barplot('signup_method')

feature_barplot('signup_flow')

feature_barplot('language')

feature_barplot('affiliate_channel')

feature_barplot('first_affiliate_tracked')

feature_barplot('signup_app')

feature_barplot('first_device_type')

feature_barplot('first_browser')

df_sessions = pd.read_csv('airbnb/sessions.csv')

df_sessions.head(10)

| | user_id | action | action_type | action_detail | device_type | secs_elapsed |
|---|---|---|---|---|---|---|
| 0 | d1mm9tcy42 | lookup | NaN | NaN | Windows Desktop | 319.0 |
| 1 | d1mm9tcy42 | search_results | click | view_search_results | Windows Desktop | 67753.0 |
| 2 | d1mm9tcy42 | lookup | NaN | NaN | Windows Desktop | 301.0 |
| 3 | d1mm9tcy42 | search_results | click | view_search_results | Windows Desktop | 22141.0 |
| 4 | d1mm9tcy42 | lookup | NaN | NaN | Windows Desktop | 435.0 |
| 5 | d1mm9tcy42 | search_results | click | view_search_results | Windows Desktop | 7703.0 |
| 6 | d1mm9tcy42 | lookup | NaN | NaN | Windows Desktop | 115.0 |
| 7 | d1mm9tcy42 | personalize | data | wishlist_content_update | Windows Desktop | 831.0 |
| 8 | d1mm9tcy42 | index | view | view_search_results | Windows Desktop | 20842.0 |
| 9 | d1mm9tcy42 | lookup | NaN | NaN | Windows Desktop | 683.0 |

df_sessions['id'] = df_sessions['user_id']

df_sessions = df_sessions.drop(['user_id'],axis=1)

df_sessions.shape

(10567737, 6)

df_sessions.isnull().sum()

action 79626

action_type 1126204

action_detail 1126204

device_type 0

secs_elapsed 136031

id 34496

dtype: int64

df_sessions.action = df_sessions.action.fillna('NAN')

df_sessions.action_type = df_sessions.action_type.fillna('NAN')

df_sessions.action_detail = df_sessions.action_detail.fillna('NAN')

df_sessions.isnull().sum()

action 0

action_type 0

action_detail 0

device_type 0

secs_elapsed 136031

id 34496

dtype: int64

Feature extraction

df_sessions.action.head()

0 lookup

1 search_results

2 lookup

3 search_results

4 lookup

Name: action, dtype: object

df_sessions.action.value_counts().min()

1

act_freq = 100

act = dict(zip(*np.unique(df_sessions.action, return_counts=True)))

df_sessions.action = df_sessions.action.apply(lambda x: 'OTHER' if act[x] < act_freq else x)
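The step above folds every action seen fewer than `act_freq` times into a single 'OTHER' bucket, which keeps the later one-count-per-action feature vector from growing a column for each one-off action. A minimal sketch of the same idea using only pandas is shown here; the helper name `collapse_rare` and the toy `actions` series are hypothetical and are not part of the notebook.

```python
import pandas as pd

def collapse_rare(values: pd.Series, min_count: int, other: str = "OTHER") -> pd.Series:
    """Replace every value that occurs fewer than min_count times with `other`."""
    counts = values.value_counts()
    # Map each row to the frequency of its value, keep the frequent ones.
    return values.where(values.map(counts) >= min_count, other)

# Toy data: 'lookup' occurs 3 times, 'index' twice, 'rare_click' once.
actions = pd.Series(["lookup", "lookup", "lookup", "index", "index", "rare_click"])
collapsed = collapse_rare(actions, min_count=2)
print(collapsed.tolist())
```

With `min_count=2` only 'rare_click' is replaced, mirroring the `act[x] < act_freq` test used above.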

f_act = df_sessions.action.value_counts().argsort()

f_act_detail = df_sessions.action_detail.value_counts().argsort()

f_act_type = df_sessions.action_type.value_counts().argsort()

f_dev_type = df_sessions.device_type.value_counts().argsort()

# Group by the plain column name so that each group key is the user id itself.
dgr_sess = df_sessions.groupby('id')
samples = []
ln = len(dgr_sess)

for g in dgr_sess:
    gr = g[1]            # all session rows of one user
    l = []               # feature vector of this user
    l.append(g[0])       # user id
    l.append(len(gr))    # number of session rows
    sev = gr.secs_elapsed.fillna(0).values

    # action: count per action value, then number of unique values, mean and std of the counts
    c_act = [0] * len(f_act)
    for i,v in enumerate(gr.action.values):
        c_act[f_act[v]] += 1
    _, c_act_uqc = np.unique(gr.action.values, return_counts=True)
    c_act += [len(c_act_uqc), np.mean(c_act_uqc), np.std(c_act_uqc)]
    l = l + c_act

    # action_detail: same scheme as action
    c_act_detail = [0] * len(f_act_detail)
    for i,v in enumerate(gr.action_detail.values):
        c_act_detail[f_act_detail[v]] += 1
    _, c_act_det_uqc = np.unique(gr.action_detail.values, return_counts=True)
    c_act_detail += [len(c_act_det_uqc), np.mean(c_act_det_uqc), np.std(c_act_det_uqc)]
    l = l + c_act_detail

    # action_type: counts plus log of the total seconds spent per type
    l_act_type = [0] * len(f_act_type)
    c_act_type = [0] * len(f_act_type)
    for i,v in enumerate(gr.action_type.values):
        l_act_type[f_act_type[v]] += sev[i]
        c_act_type[f_act_type[v]] += 1
    l_act_type = np.log(1 + np.array(l_act_type)).tolist()
    _, c_act_type_uqc = np.unique(gr.action_type.values, return_counts=True)
    c_act_type += [len(c_act_type_uqc), np.mean(c_act_type_uqc), np.std(c_act_type_uqc)]
    l = l + c_act_type + l_act_type

    # device_type: counts, number of unique devices, then the same summary statistics
    c_dev_type = [0] * len(f_dev_type)
    for i,v in enumerate(gr.device_type.values):
        c_dev_type[f_dev_type[v]] += 1
    c_dev_type.append(len(np.unique(gr.device_type.values)))
    _, c_dev_type_uqc = np.unique(gr.device_type.values, return_counts=True)
    c_dev_type += [len(c_dev_type_uqc), np.mean(c_dev_type_uqc), np.std(c_dev_type_uqc)]
    l = l + c_dev_type

    # secs_elapsed: log-scaled summary statistics and a histogram of log(1 + seconds)
    l_secs = [0] * 5
    l_log = [0] * 15
    if len(sev) > 0:
        l_secs[0] = np.log(1 + np.sum(sev))
        l_secs[1] = np.log(1 + np.mean(sev))
        l_secs[2] = np.log(1 + np.std(sev))
        l_secs[3] = np.log(1 + np.median(sev))
        l_secs[4] = l_secs[0] / float(l[1])
        log_sev = np.log(1 + sev).astype(int)
        l_log = np.bincount(log_sev, minlength=15).tolist()
    l = l + l_secs + l_log

    samples.append(l)

samples = np.array(samples)

samp_ar = samples[:, 1:].astype(np.float16)

samp_id = samples[:, 0]

col_names = []

for i in range(len(samples[0])-1):

col_names.append('c_' + str(i))

df_agg_sess = pd.DataFrame(samp_ar, columns=col_names)

df_agg_sess['id'] = samp_id

df_agg_sess.index = df_agg_sess.id

df_agg_sess.head()

| id | c_0 | c_1 | c_2 | c_3 | c_4 | c_5 | c_6 | c_7 | c_8 | c_9 | ... | c_448 | c_449 | c_450 | c_451 | c_452 | c_453 | c_454 | c_455 | c_456 | id |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 00023iyk9l | 40.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 12.0 | 6.0 | 2.0 | 3.0 | 3.0 | 1.0 | 0.0 | 1.0 | 0.0 | 00023iyk9l |
| 0010k6l0om | 63.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 8.0 | 12.0 | 2.0 | 8.0 | 4.0 | 3.0 | 0.0 | 0.0 | 0.0 | 0010k6l0om |
| 001wyh0pz8 | 90.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 27.0 | 30.0 | 9.0 | 8.0 | 1.0 | 0.0 | 0.0 | 0.0 | 0.0 | 001wyh0pz8 |
| 0028jgx1x1 | 31.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 1.0 | 2.0 | 3.0 | 5.0 | 4.0 | 1.0 | 0.0 | 0.0 | 0.0 | 0028jgx1x1 |
| 002qnbzfs5 | 789.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | ... | 111.0 | 102.0 | 104.0 | 57.0 | 28.0 | 9.0 | 4.0 | 1.0 | 1.0 | 002qnbzfs5 |

5 rows × 458 columns
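The per-user loop above builds each feature vector by hand. As a rough sketch, assuming only the `secs_elapsed` statistics and the per-action counts are needed, part of the same aggregation can be expressed with pandas group operations. The `sessions` frame is toy data with the same column names as `df_sessions`; the names `stats`, `log_stats` and `action_counts` are hypothetical.

```python
import numpy as np
import pandas as pd

# Toy stand-in for df_sessions.
sessions = pd.DataFrame({
    "id": ["u1", "u1", "u1", "u2"],
    "action": ["lookup", "search_results", "lookup", "index"],
    "secs_elapsed": [319.0, np.nan, 301.0, 20842.0],
})

# Missing durations are treated as 0 seconds, as in the loop.
secs = sessions["secs_elapsed"].fillna(0)
by_user = secs.groupby(sessions["id"])

stats = by_user.agg(["count", "sum", "mean", "median"])
# np.std in the loop is the population standard deviation, hence ddof=0 here.
stats["std"] = by_user.std(ddof=0)

# log(1 + x) scaling of the duration statistics.
log_stats = np.log1p(stats[["sum", "mean", "std", "median"]])

# One column per action value, one row per user, cells hold occurrence counts.
action_counts = pd.crosstab(sessions["id"], sessions["action"])

print(stats)
print(action_counts)
```

This does not reproduce every column of `df_agg_sess` (the log-histogram and the count-of-counts statistics are left out); it only illustrates the grouping pattern.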

train = pd.read_csv("airbnb/train_users_2.csv")

test = pd.read_csv("airbnb/test_users.csv")

train_row = train.shape[0]

labels = train['country_destination'].values

train.drop(['country_destination', 'date_first_booking'], axis = 1, inplace = True)

test.drop(['date_first_booking'], axis = 1, inplace = True)

df = pd.concat([train, test], axis = 0, ignore_index = True)

# Parse the 14-digit YYYYMMDDHHMMSS integers into datetime objects.
tfa = df.timestamp_first_active.astype(str).apply(
    lambda x: datetime.datetime(int(x[:4]), int(x[4:6]), int(x[6:8]),
                                int(x[8:10]), int(x[10:12]), int(x[12:])))

df['tfa_year'] = np.array([x.year for x in tfa])

df['tfa_month'] = np.array([x.month for x in tfa])

df['tfa_day'] = np.array([x.day for x in tfa])

df['tfa_wd'] = np.array([x.isoweekday() for x in tfa])

df_tfa_wd = pd.get_dummies(df.tfa_wd, prefix = 'tfa_wd')

df = pd.concat((df, df_tfa_wd), axis = 1)

df.drop(['tfa_wd'], axis = 1, inplace = True)

Y = 2000

seasons = [(0, (date(Y, 1, 1), date(Y, 3, 20))),

(1, (date(Y, 3, 21), date(Y, 6, 20))),

(2, (date(Y, 6, 21), date(Y, 9, 22))),

(3, (date(Y, 9, 23), date(Y, 12, 20))),

(0, (date(Y, 12, 21), date(Y, 12, 31)))]

def get_season(dt):
    # Map the date onto the reference year Y and look up the season whose range contains it.
    dt = dt.date()
    dt = dt.replace(year=Y)
    return next(season for season, (start, end) in seasons if start <= dt <= end)

df['tfa_season'] = np.array([get_season(x) for x in tfa])

df_tfa_season = pd.get_dummies(df.tfa_season, prefix = 'tfa_season')

df = pd.concat((df, df_tfa_season), axis = 1)

df.drop(['tfa_season'], axis = 1, inplace = True)

dac = pd.to_datetime(df.date_account_created)

df['dac_year'] = np.array([x.year for x in dac])

df['dac_month'] = np.array([x.month for x in dac])

df['dac_day'] = np.array([x.day for x in dac])

df['dac_wd'] = np.array([x.isoweekday() for x in dac])

df_dac_wd = pd.get_dummies(df.dac_wd, prefix = 'dac_wd')

df = pd.concat((df, df_dac_wd), axis = 1)

df.drop(['dac_wd'], axis = 1, inplace = True)

df['dac_season'] = np.array([get_season(x) for x in dac])

df_dac_season = pd.get_dummies(df.dac_season, prefix = 'dac_season')

df = pd.concat((df, df_dac_season), axis = 1)

df.drop(['dac_season'], axis = 1, inplace = True)

dt_span = dac.subtract(tfa).dt.days

dt_span.value_counts().head(10)

def get_span(dt):
    # dt is the whole-day difference between account creation and first activity.
    if dt == -1:
        return 'OneDay'
    elif (dt < 30) & (dt > -1):
        return 'OneMonth'
    elif (dt >= 30) & (dt <= 365):
        return 'OneYear'
    else:
        return 'other'

df['dt_span'] = np.array([get_span(x) for x in dt_span])

df_dt_span = pd.get_dummies(df.dt_span, prefix = 'dt_span')

df = pd.concat((df, df_dt_span), axis = 1)

df.drop(['dt_span'], axis = 1, inplace = True)
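The `dt == -1` branch in `get_span` can look odd at first. `date_account_created` carries no time of day (it parses to midnight), while `timestamp_first_active` does, and the `.days` component of a negative time difference is rounded towards minus infinity. So an account created on the same calendar day as the first activity yields -1. A small sketch with made-up timestamps (not taken from the dataset) illustrates this:

```python
import pandas as pd

# Account-creation dates have no time component, so they parse to midnight.
dac = pd.to_datetime(pd.Series(["2014-01-01", "2014-01-10", "2014-03-01"]))
# First-activity timestamps include the time of day.
tfa = pd.to_datetime(pd.Series(["2014-01-01 10:30:00"] * 3))

span_days = (dac - tfa).dt.days
print(span_days.tolist())  # [-1, 8, 58]
```

Under the rules of `get_span` these three rows would fall into 'OneDay', 'OneMonth' and 'OneYear' respectively.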

df.drop(['date_account_created','timestamp_first_active'], axis = 1, inplace = True)

av = df.age.values

av = np.where(np.logical_and(av<2000, av>1900), 2014-av, av)

df['age'] = av

age = df.age

age.fillna(-1, inplace = True)

div = 15

def get_age(age):
    # Bucket ages into 15-year bins; missing (-1) and implausible (> 110) values get their own labels.
    if age < 0:
        return 'NA'
    elif (age < div):
        return div
    elif (age <= div * 2):
        return div*2
    elif (age <= div * 3):
        return div * 3
    elif (age <= div * 4):
        return div * 4
    elif (age <= div * 5):
        return div * 5
    elif (age <= 110):
        return div * 6
    else:
        return 'Unphysical'

df['age'] = np.array([get_age(x) for x in age])

df_age = pd.get_dummies(df.age, prefix = 'age')

df = pd.concat((df, df_age), axis = 1)

df.drop(['age'], axis = 1, inplace = True)
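The if/elif chain in `get_age` is applied one row at a time. As a hedged alternative, `np.select` evaluates a list of conditions in order and takes the first match, which mirrors the chain on a whole array at once. The sketch below uses string labels throughout (so '15' rather than the integer 15) and a toy `ages` array in which -1 stands for a missing age; both choices are assumptions made for the illustration.

```python
import numpy as np

div = 15
# Toy ages; -1 marks a missing value, as after fillna(-1).
ages = np.array([-1, 5, 15, 30, 31, 76, 110, 150], dtype=float)

# Conditions are checked in order, the first true one wins (like if/elif).
conditions = [
    ages < 0,
    ages < div,
    ages <= div * 2,
    ages <= div * 3,
    ages <= div * 4,
    ages <= div * 5,
    ages <= 110,
]
labels = ["NA", "15", "30", "45", "60", "75", "90"]

age_bucket = np.select(conditions, labels, default="Unphysical")
print(age_bucket.tolist())
```

Note that an age of exactly 15 lands in the '30' bucket, the same as in `get_age`, because the second condition is a strict inequality.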

feat_toOHE = ['gender',

'signup_method',

'signup_flow',

'language',

'affiliate_channel',

'affiliate_provider',

'first_affiliate_tracked',

'signup_app',

'first_device_type',

'first_browser']

for f in feat_toOHE:
    # One-hot encode each categorical feature, with an extra column for missing values.
    df_ohe = pd.get_dummies(df[f], prefix=f, dummy_na=True)
    df.drop([f], axis = 1, inplace = True)
    df = pd.concat((df, df_ohe), axis = 1)

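# df_agg_sess uses 'id' as both index and column; reset the index so the join key is unambiguous.
df_all = pd.merge(df, df_agg_sess.reset_index(drop=True), how='left', on='id')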

df_all = df_all.drop(['id'], axis=1)

df_all = df_all.fillna(-2)

df_all['all_null'] = np.array([sum(r<0) for r in df_all.values])

Data preparation

Xtrain = df_all.iloc[:train_row, :]

Xtest = df_all.iloc[train_row:, :]

Xtrain.to_csv("airbnb/Airbnb_xtrain_v2.csv")

Xtest.to_csv("airbnb/Airbnb_xtest_v2.csv")

labels.tofile("airbnb/Airbnb_ytrain_v2.csv", sep='\n', format='%s')

xtrain = pd.read_csv("airbnb/Airbnb_xtrain_v2.csv",index_col=0)

ytrain = pd.read_csv("airbnb/Airbnb_ytrain_v2.csv", header=None)

xtrain.head()

ytrain.head()

le = LabelEncoder()

ytrain_le = le.fit_transform(ytrain.values)

n = int(xtrain.shape[0]*0.1)

xtrain_new = xtrain.iloc[:n, :]

ytrain_new = ytrain_le[:n]

X_scaler = StandardScaler()

xtrain_new = X_scaler.fit_transform(xtrain_new)

from sklearn.metrics import make_scorer

def dcg_score(y_true, y_score, k=5):
    """
    y_true : array, shape = [n_samples]
        Ground truth (true relevance labels).
    y_score : array, shape = [n_samples, n_classes]
        Predicted scores.
    k : int
        Rank.
    """
    # Indices of the scores from highest to lowest.
    order = np.argsort(y_score)[::-1]
    # Relevance of the k highest-scored items.
    y_true = np.take(y_true, order[:k])

    gain = 2 ** y_true - 1
    discounts = np.log2(np.arange(len(y_true)) + 2)
    return np.sum(gain / discounts)

def ndcg_score(ground_truth, predictions, k=5):
    """
    Parameters
    ----------
    ground_truth : array, shape = [n_samples]
        Ground truth (true labels represented as integers).
    predictions : array, shape = [n_samples, n_classes]
        Predicted probabilities.
    k : int
        Rank.
    """
    # One-hot encode the integer labels so each row can be compared with its score vector.
    lb = LabelBinarizer()
    lb.fit(range(len(predictions) + 1))
    T = lb.transform(ground_truth)

    scores = []
    for y_true, y_score in zip(T, predictions):
        actual = dcg_score(y_true, y_score, k)
        best = dcg_score(y_true, y_true, k)
        score = float(actual) / float(best)
        scores.append(score)

    return np.mean(scores)
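Because every user has exactly one true destination, the ideal DCG is 1 and the NDCG of a single row reduces to 1 / log2(rank + 1), where rank is the position of the true class among the top k predictions (and 0 if it is not among them). The following sketch computes that directly with NumPy on made-up scores; the function name `ndcg_single_label` is hypothetical and is not the notebook's `ndcg_score`.

```python
import numpy as np

def ndcg_single_label(y_true, y_score, k=5):
    """Mean NDCG@k when each sample has exactly one relevant class."""
    y_true = np.asarray(y_true)
    y_score = np.asarray(y_score)
    # Class indices of the k highest scores per row, best first.
    top_k = np.argsort(-y_score, axis=1)[:, :k]
    # True where the true class sits at a given position of the ranking.
    hits = top_k == y_true[:, None]
    # Discount for positions 1..k: 1 / log2(position + 1).
    discounts = 1.0 / np.log2(np.arange(2, top_k.shape[1] + 2))
    return float(np.mean((hits * discounts).sum(axis=1)))

y_true = [0, 2, 1]
y_score = [[0.7, 0.2, 0.1],   # true class ranked 1st -> 1.0
           [0.5, 0.3, 0.2],   # true class ranked 3rd -> 1 / log2(4) = 0.5
           [0.1, 0.2, 0.7]]   # true class ranked 2nd -> 1 / log2(3)

print(ndcg_single_label(y_true, y_score, k=3))
print(ndcg_single_label(y_true, y_score, k=2))
```

With k=2 the second row contributes 0, since its true class falls outside the top two predictions.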

Model building

from sklearn.linear_model import LogisticRegression

from sklearn.model_selection import KFold

from sklearn.model_selection import cross_val_score

from sklearn.model_selection import train_test_split

lr = LogisticRegression(C = 1.0, penalty='l2', multi_class='ovr')

RANDOM_STATE = 2017

# random_state only has an effect (and is only accepted by recent scikit-learn) when shuffle=True.
kf = KFold(n_splits=5, shuffle=True, random_state=RANDOM_STATE)
train_score = []
cv_score = []

k_ndcg = 3

for train_index, test_index in kf.split(xtrain_new, ytrain_new):
    X_train, X_test = xtrain_new[train_index, :], xtrain_new[test_index, :]
    y_train, y_test = ytrain_new[train_index], ytrain_new[test_index]

    lr.fit(X_train, y_train)
    y_pred = lr.predict_proba(X_test)

    train_ndcg_score = ndcg_score(y_train, lr.predict_proba(X_train), k = k_ndcg)
    cv_ndcg_score = ndcg_score(y_test, y_pred, k=k_ndcg)

    train_score.append(train_ndcg_score)
    cv_score.append(cv_ndcg_score)

print ("\nThe training score is: {}".format(np.mean(train_score)))

print ("\nThe cv score is: {}".format(np.mean(cv_score)))

iteration = [1,5,10,15,20, 50, 100]

# random_state only has an effect (and is only accepted by recent scikit-learn) when shuffle=True.
kf = KFold(n_splits=3, shuffle=True, random_state=RANDOM_STATE)
train_score = []
cv_score = []

k_ndcg = 5

# Learning curve over the maximum number of solver iterations.
for i, item in enumerate(iteration):
    lr = LogisticRegression(C=1.0, max_iter=item, tol=1e-5, solver='newton-cg', multi_class='ovr')
    train_score_iter = []
    cv_score_iter = []

    for train_index, test_index in kf.split(xtrain_new, ytrain_new):
        X_train, X_test = xtrain_new[train_index, :], xtrain_new[test_index, :]
        y_train, y_test = ytrain_new[train_index], ytrain_new[test_index]

        lr.fit(X_train, y_train)
        y_pred = lr.predict_proba(X_test)

        train_ndcg_score = ndcg_score(y_train, lr.predict_proba(X_train), k = k_ndcg)
        cv_ndcg_score = ndcg_score(y_test, y_pred, k=k_ndcg)

        train_score_iter.append(train_ndcg_score)
        cv_score_iter.append(cv_ndcg_score)

    train_score.append(np.mean(train_score_iter))
    cv_score.append(np.mean(cv_score_iter))

ymin = np.min(cv_score)-0.05

ymax = np.max(train_score)+0.05

plt.figure(figsize=(9,4))

plt.plot(iteration, train_score, 'ro-', label = 'training')

plt.plot(iteration, cv_score, 'b*-', label = 'Cross-validation')

plt.xlabel("iterations")

plt.ylabel("Score")

plt.xlim(-5, np.max(iteration)+10)

plt.ylim(ymin, ymax)

plt.plot(np.linspace(20,20,50), np.linspace(ymin, ymax, 50), 'g--')

plt.legend(loc = 'lower right', fontsize = 12)

plt.title("Score vs iteration learning curve")

plt.tight_layout()

perc = [0.01,0.02,0.05,0.1,0.2,0.5,1]

# random_state only has an effect (and is only accepted by recent scikit-learn) when shuffle=True.
kf = KFold(n_splits=3, shuffle=True, random_state=RANDOM_STATE)
train_score = []
cv_score = []

k_ndcg = 5

# Learning curve over the fraction of the training subset that is used.
for i, item in enumerate(perc):
    lr = LogisticRegression(C=1.0, max_iter=20, tol=1e-6, solver='newton-cg', multi_class='ovr')
    train_score_iter = []
    cv_score_iter = []

    n = int(xtrain_new.shape[0]*item)
    xtrain_perc = xtrain_new[:n, :]
    ytrain_perc = ytrain_new[:n]

    for train_index, test_index in kf.split(xtrain_perc, ytrain_perc):
        X_train, X_test = xtrain_perc[train_index, :], xtrain_perc[test_index, :]
        y_train, y_test = ytrain_perc[train_index], ytrain_perc[test_index]
        print(X_train.shape, X_test.shape)

        lr.fit(X_train, y_train)
        y_pred = lr.predict_proba(X_test)

        train_ndcg_score = ndcg_score(y_train, lr.predict_proba(X_train), k = k_ndcg)
        cv_ndcg_score = ndcg_score(y_test, y_pred, k=k_ndcg)

        train_score_iter.append(train_ndcg_score)
        cv_score_iter.append(cv_ndcg_score)

    train_score.append(np.mean(train_score_iter))
    cv_score.append(np.mean(cv_score_iter))

ymin = np.min(cv_score)-0.1

ymax = np.max(train_score)+0.1

plt.figure(figsize=(9,4))

plt.plot(np.array(perc)*100, train_score, 'ro-', label = 'training')

plt.plot(np.array(perc)*100, cv_score, 'bo-', label = 'Cross-validation')

plt.xlabel("Sample size (unit %)")

plt.ylabel("Score")

plt.xlim(-5, np.max(perc)*100+10)

plt.ylim(ymin, ymax)

plt.legend(loc = 'lower right', fontsize = 12)

plt.title("Score vs sample size learning curve")

plt.tight_layout()

from sklearn.ensemble import AdaBoostClassifier, BaggingClassifier, ExtraTreesClassifier

from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier

from sklearn.tree import DecisionTreeClassifier

from sklearn.ensemble import *

from sklearn.svm import SVC, LinearSVC, NuSVC

LEARNING_RATE = 0.1

N_ESTIMATORS = 50

RANDOM_STATE = 2017

MAX_DEPTH = 9

clf_tree ={

'DTree': DecisionTreeClassifier(max_depth=MAX_DEPTH,

random_state=RANDOM_STATE),

'RF': RandomForestClassifier(n_estimators=N_ESTIMATORS,

max_depth=MAX_DEPTH,

random_state=RANDOM_STATE),

'AdaBoost': AdaBoostClassifier(n_estimators=N_ESTIMATORS,

learning_rate=LEARNING_RATE,

random_state=RANDOM_STATE),

'Bagging': BaggingClassifier(n_estimators=N_ESTIMATORS,

random_state=RANDOM_STATE),

'ExtraTree': ExtraTreesClassifier(max_depth=MAX_DEPTH,

n_estimators=N_ESTIMATORS,

random_state=RANDOM_STATE),

'GraBoost': GradientBoostingClassifier(learning_rate=LEARNING_RATE,

max_depth=MAX_DEPTH,

n_estimators=N_ESTIMATORS,

random_state=RANDOM_STATE)

}

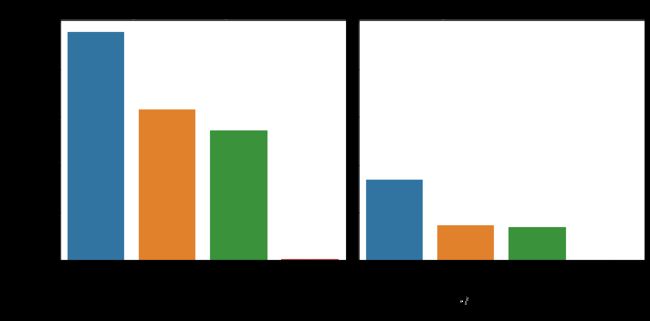

train_score = []

cv_score = []

# random_state only has an effect (and is only accepted by recent scikit-learn) when shuffle=True.
kf = KFold(n_splits=3, shuffle=True, random_state=RANDOM_STATE)

k_ndcg = 5

# Cross-validate every tree-based classifier in clf_tree.
for key in clf_tree.keys():
    clf = clf_tree.get(key)
    train_score_iter = []
    cv_score_iter = []

    for train_index, test_index in kf.split(xtrain_new, ytrain_new):
        X_train, X_test = xtrain_new[train_index, :], xtrain_new[test_index, :]
        y_train, y_test = ytrain_new[train_index], ytrain_new[test_index]

        clf.fit(X_train, y_train)
        y_pred = clf.predict_proba(X_test)

        train_ndcg_score = ndcg_score(y_train, clf.predict_proba(X_train), k = k_ndcg)
        cv_ndcg_score = ndcg_score(y_test, y_pred, k=k_ndcg)

        train_score_iter.append(train_ndcg_score)
        cv_score_iter.append(cv_ndcg_score)

    train_score.append(np.mean(train_score_iter))
    cv_score.append(np.mean(cv_score_iter))

train_score_tree = train_score

cv_score_tree = cv_score

ymin = np.min(cv_score)-0.05

ymax = np.max(train_score)+0.05

x_ticks = clf_tree.keys()

plt.figure(figsize=(8,5))

plt.plot(range(len(x_ticks)), train_score_tree, 'ro-', label = 'training')

plt.plot(range(len(x_ticks)),cv_score_tree, 'bo-', label = 'Cross-validation')

plt.xticks(range(len(x_ticks)),x_ticks,rotation = 45, fontsize = 10)

plt.xlabel("Tree method", fontsize = 12)

plt.ylabel("Score", fontsize = 12)

plt.xlim(-0.5, 5.5)

plt.ylim(ymin, ymax)

plt.legend(loc = 'best', fontsize = 12)

plt.title("Different tree methods")

plt.tight_layout()

TOL = 1e-4

MAX_ITER = 1000

clf_svm = {

'SVM-rbf': SVC(kernel='rbf',

max_iter=MAX_ITER,

tol=TOL, random_state=RANDOM_STATE,

decision_function_shape='ovr'),

'SVM-poly': SVC(kernel='poly',

max_iter=MAX_ITER,

tol=TOL, random_state=RANDOM_STATE,

decision_function_shape='ovr'),

'SVM-linear': SVC(kernel='linear',

max_iter=MAX_ITER,

tol=TOL,

random_state=RANDOM_STATE,

decision_function_shape='ovr'),

'LinearSVC': LinearSVC(max_iter=MAX_ITER,

tol=TOL,

random_state=RANDOM_STATE,

multi_class = 'ovr')

}

train_score_svm = []

cv_score_svm = []

# random_state only has an effect (and is only accepted by recent scikit-learn) when shuffle=True.
kf = KFold(n_splits=3, shuffle=True, random_state=RANDOM_STATE)

k_ndcg = 5

# Cross-validate every SVM variant; decision_function scores are used for ranking
# because these models do not expose predict_proba as configured here.
for key in clf_svm.keys():
    clf = clf_svm.get(key)
    train_score_iter = []
    cv_score_iter = []

    for train_index, test_index in kf.split(xtrain_new, ytrain_new):
        X_train, X_test = xtrain_new[train_index, :], xtrain_new[test_index, :]
        y_train, y_test = ytrain_new[train_index], ytrain_new[test_index]

        clf.fit(X_train, y_train)
        y_pred = clf.decision_function(X_test)

        train_ndcg_score = ndcg_score(y_train, clf.decision_function(X_train), k = k_ndcg)
        cv_ndcg_score = ndcg_score(y_test, y_pred, k=k_ndcg)

        train_score_iter.append(train_ndcg_score)
        cv_score_iter.append(cv_ndcg_score)

    train_score_svm.append(np.mean(train_score_iter))
    cv_score_svm.append(np.mean(cv_score_iter))

ymin = np.min(cv_score_svm)-0.05

ymax = np.max(train_score_svm)+0.05

x_ticks = clf_svm.keys()

plt.figure(figsize=(8,5))

plt.plot(range(len(x_ticks)), train_score_svm, 'ro-', label = 'training')

plt.plot(range(len(x_ticks)),cv_score_svm, 'bo-', label = 'Cross-validation')

plt.xticks(range(len(x_ticks)),x_ticks,rotation = 45, fontsize = 10)

plt.xlabel("SVM method", fontsize = 12)

plt.ylabel("Score", fontsize = 12)

plt.xlim(-0.5, 3.5)

plt.ylim(ymin, ymax)

plt.legend(loc = 'best', fontsize = 12)

plt.title("Different SVM methods")

plt.tight_layout()

import xgboost as xgb

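def customized_eval(preds, dtrain):
    # Custom NDCG@5 metric for xgboost: preds holds one row of class probabilities per sample.
    labels = dtrain.get_label()
    top = []
    for i in range(preds.shape[0]):
        # Indices of the five highest-scored classes, best first.
        top.append(np.argsort(preds[i])[::-1][:5])
    # 1 where the true label appears at a given rank, 0 elsewhere.
    mat = np.reshape(np.repeat(labels,np.shape(top)[1]) == np.array(top).ravel(),np.array(top).shape).astype(int)
    score = np.mean(np.sum(mat/np.log2(np.arange(2, mat.shape[1] + 2)),axis = 1))
    return 'ndcg5', score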

NUM_XGB = 200

params = {}

params['colsample_bytree'] = 0.6

params['max_depth'] = 6

params['subsample'] = 0.8

params['eta'] = 0.3

params['seed'] = RANDOM_STATE

params['num_class'] = 12

params['objective'] = 'multi:softprob'

train_score_iter = []

cv_score_iter = []

# random_state only has an effect (and is only accepted by recent scikit-learn) when shuffle=True.
kf = KFold(n_splits = 3, shuffle=True, random_state=RANDOM_STATE)

k_ndcg = 5

for train_index, test_index in kf.split(xtrain_new, ytrain_new):
    X_train, X_test = xtrain_new[train_index, :], xtrain_new[test_index, :]
    y_train, y_test = ytrain_new[train_index], ytrain_new[test_index]

    train_xgb = xgb.DMatrix(X_train, label= y_train)
    test_xgb = xgb.DMatrix(X_test, label = y_test)

    watchlist = [ (train_xgb,'train'), (test_xgb, 'test') ]

    bst = xgb.train(params,
                    train_xgb,
                    NUM_XGB,
                    watchlist,
                    feval = customized_eval,
                    verbose_eval = 3,
                    early_stopping_rounds = 5)

    y_pred = np.array(bst.predict(test_xgb))
    y_pred_train = np.array(bst.predict(train_xgb))

    train_ndcg_score = ndcg_score(y_train, y_pred_train , k = k_ndcg)
    cv_ndcg_score = ndcg_score(y_test, y_pred, k=k_ndcg)

    train_score_iter.append(train_ndcg_score)
    cv_score_iter.append(cv_ndcg_score)

train_score_xgb = np.mean(train_score_iter)

cv_score_xgb = np.mean(cv_score_iter)

print ("\nThe training score is: {}".format(train_score_xgb))

print ("The cv score is: {}\n".format(cv_score_xgb))

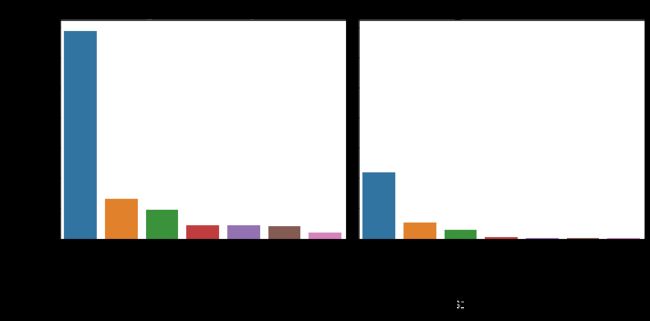

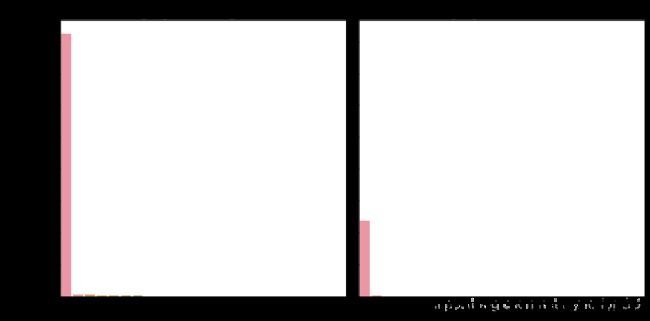

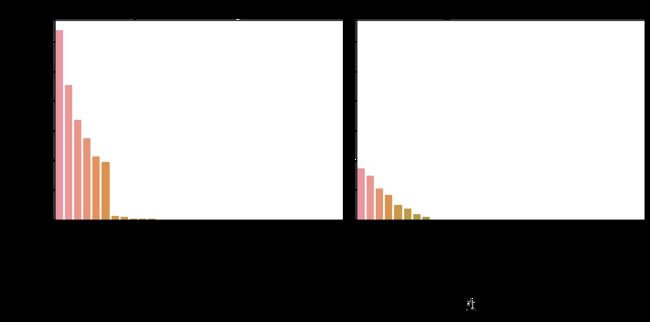

Model comparison

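# cv_score_lr is not defined in the cells shown above; it is assumed to hold the mean
# cross-validation score of the logistic-regression baseline.
model_cvscore = np.hstack((cv_score_lr, cv_score_tree, cv_score_svm, cv_score_xgb))

# Take the labels from the dictionaries so they follow the order in which the scores were appended.
model_name = np.array(['LogisticReg'] + list(clf_tree.keys()) + list(clf_svm.keys()) + ['Xgboost'])

fig = plt.figure(figsize=(8,4))

sns.barplot(x=model_cvscore, y=model_name, palette="Blues_d")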

plt.xticks(rotation=0, size = 10)

plt.xlabel("CV score", fontsize = 12)

plt.ylabel("Model", fontsize = 12)

plt.title("Cross-validation score for different models")

plt.tight_layout()