Building a Kubernetes v1.17.0 Cluster with kubeadm


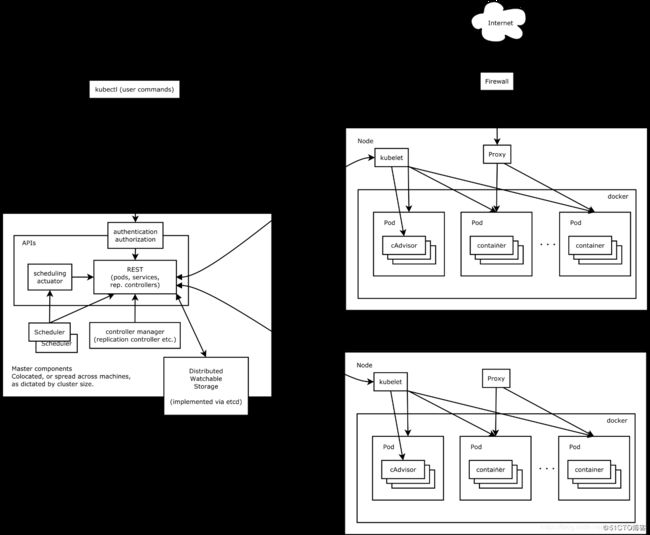


Kubernetes architecture diagram

I. Deploying a Kubernetes v1.17.0 cluster with kubeadm

1. Environment preparation

OS: CentOS 7.4

Hardware: at least 2 CPU cores and 2 GB of RAM per host
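Before going further, you can check each host against these minimums. The sketch below is a minimal example. The function name `check_hardware` is hypothetical, and the 1700 MiB memory threshold is an assumption. A machine sold as "2 GB" usually reports less than 2048 MiB in `MemTotal` because the kernel reserves part of the RAM.

```shell
#!/bin/sh
# Hypothetical helper: checks the "2 CPUs, 2 GB RAM" minimum on this host.
# Arguments (both optional, mainly so the function can be tested):
#   $1 - CPU count (defaults to the output of nproc)
#   $2 - meminfo file (defaults to /proc/meminfo)
check_hardware() {
  local cpus meminfo mem_mib rc=0
  cpus=${1:-$(nproc)}
  meminfo=${2:-/proc/meminfo}
  # MemTotal is reported in kB; convert it to MiB
  mem_mib=$(awk '/^MemTotal:/ { print int($2 / 1024) }' "$meminfo")
  if [ "$cpus" -lt 2 ]; then
    echo "FAIL: $cpus CPU(s); at least 2 are required" >&2
    rc=1
  fi
  # Assumed threshold: 1700 MiB tolerates the memory the kernel reserves on a 2 GB host
  if [ "${mem_mib:-0}" -lt 1700 ]; then
    echo "FAIL: ${mem_mib:-0} MiB of RAM; about 2 GB is required" >&2
    rc=1
  fi
  return $rc
}
```

On a real host, run `check_hardware && echo "hardware OK"` with no arguments.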

2. Roles

| IP | Role | Installed software |
|---|---|---|
| 192.168.3.71 | k8s-master | kube-apiserver, kube-scheduler, kube-controller-manager, etcd, kube-proxy, docker, flannel, kubelet |
| 192.168.3.72 | k8s-node01 | kubelet, kube-proxy, docker, flannel |

3. Initialization (run on all hosts)

3.1 Disable the firewall

```shell
systemctl stop firewalld && systemctl disable firewalld
```

3.2 Disable SELinux

```shell
sed -i 's/^SELINUX=.*/SELINUX=disabled/' /etc/selinux/config && setenforce 0
```
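`setenforce 0` only switches the running system to permissive mode. The `SELINUX=disabled` setting takes effect after the next reboot. The sketch below (hypothetical function name `selinux_config_mode`) reads the configured mode back from the file so you can confirm the `sed` edit. `getenforce` reports the runtime mode instead: `Permissive` until you reboot, `Disabled` afterwards.

```shell
#!/bin/sh
# Hypothetical helper: prints the SELINUX= value from the SELinux config file.
# $1 - config file (defaults to /etc/selinux/config)
selinux_config_mode() {
  # '^SELINUX=' does not match SELINUXTYPE=; keep the last assignment if repeated
  sed -n 's/^SELINUX=//p' "${1:-/etc/selinux/config}" | tail -n 1
}
```

After the step above, `selinux_config_mode` should print `disabled`.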

3.3 Disable swap

```shell
swapoff -a  # temporary, until the next reboot
sed -i '/ swap / s/^\(.*\)$/#\1/g' /etc/fstab  # permanent: comment out the swap entries
```
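By default, kubelet and kubeadm's preflight checks refuse to run while swap is enabled. The minimal check below assumes the standard `/proc/swaps` and `/etc/fstab` layouts. The function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: succeeds when no swap device is active.
# /proc/swaps always starts with a header line, so more than one line means swap is on.
swap_is_off() {
  [ "$(wc -l < "${1:-/proc/swaps}")" -le 1 ]
}

# Hypothetical helper: prints fstab entries that would re-enable swap at boot,
# i.e. non-comment lines whose filesystem type (3rd field) is "swap".
active_fstab_swap() {
  awk '!/^[[:space:]]*#/ && $3 == "swap"' "${1:-/etc/fstab}"
}
```

On a correctly prepared host, `swap_is_off` succeeds and `active_fstab_swap` prints nothing.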

3.4 Set the hostname

```shell
hostnamectl set-hostname k8s-master  # on 192.168.3.71
hostnamectl set-hostname k8s-node01  # on 192.168.3.72
```

3.5 Configure /etc/hosts

```shell
cat >> /etc/hosts << EOF
192.168.3.71 k8s-master
192.168.3.72 k8s-node01
EOF
```
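Running the `cat >> /etc/hosts` command above more than once appends duplicate entries. The sketch below is an idempotent variant (hypothetical function name `add_host_entry`). It appends a mapping only if that IP/hostname pair is not already present.

```shell
#!/bin/sh
# Hypothetical helper: append "IP HOSTNAME" to a hosts file unless already mapped.
# $1 - IP address, $2 - hostname, $3 - hosts file (defaults to /etc/hosts)
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # Found if some line has the IP as its first field and the name in a later field
  if awk -v ip="$ip" -v name="$name" '
       $1 == ip { for (i = 2; i <= NF; i++) if ($i == name) found = 1 }
       END { exit !found }' "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

Usage on each host: `add_host_entry 192.168.3.71 k8s-master` and `add_host_entry 192.168.3.72 k8s-node01`.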

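3.6 Kernel parameters

Make traffic that crosses the Linux bridge pass through iptables, so that kube-proxy's rules apply to it:

```shell
# The net.bridge.* keys only exist once the br_netfilter module is loaded
modprobe br_netfilter
echo br_netfilter > /etc/modules-load.d/k8s.conf  # also load it on every boot
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
sysctl --system
```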
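To confirm that the settings are live, read them back from `/proc/sys`, where the dotted sysctl names map to slash-separated paths. If the `net/bridge` files are missing, the `br_netfilter` module is not loaded. The sketch below is minimal. The function name and the optional root-directory argument (there only to make it testable) are assumptions.

```shell
#!/bin/sh
# Hypothetical helper: verify that the bridge netfilter sysctls are set to 1.
# $1 - root of the sysctl tree (defaults to /proc/sys)
check_bridge_sysctls() {
  local root="${1:-/proc/sys}" key value rc=0
  for key in net/bridge/bridge-nf-call-iptables net/bridge/bridge-nf-call-ip6tables; do
    if [ ! -r "$root/$key" ]; then
      echo "MISSING: $key (is br_netfilter loaded?)" >&2
      rc=1
      continue
    fi
    value=$(cat "$root/$key")
    if [ "$value" != 1 ]; then
      echo "WRONG: $key = $value" >&2
      rc=1
    fi
  done
  return $rc
}
```

`sysctl net.bridge.bridge-nf-call-iptables` shows the same value interactively.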

4. Install Kubernetes and Docker

4.1 Add the Docker yum repository

```shell
wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo
```

4.2 Add the Kubernetes yum repository

```shell
cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
```

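4.3 Install docker, kubelet, kubeadm and kubectl

```shell
# Required on every node. Pin the versions to match --kubernetes-version v1.17.0;
# an unpinned install pulls the newest packages available in the repository.
# Note: "docker" is CentOS's own package; "docker-ce" installs from the repo added in 4.1.
yum install -y docker kubelet-1.17.0 kubeadm-1.17.0 kubectl-1.17.0
```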
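`kubeadm init` in step 5 runs with `--kubernetes-version v1.17.0`, so kubelet, kubeadm and kubectl should all report that same version. The sketch below extracts the `vX.Y.Z` string from each tool's version output and compares them. The function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print the first "vMAJOR.MINOR.PATCH" found in a string.
extract_version() {
  printf '%s\n' "$1" | grep -oE 'v[0-9]+\.[0-9]+\.[0-9]+' | head -n 1
}

# Hypothetical helper: succeed only if every raw version string contains
# the expected version.
# $1 - expected version (e.g. v1.17.0); remaining args - raw version outputs
versions_match() {
  local expected="$1" v
  shift
  for v in "$@"; do
    [ "$(extract_version "$v")" = "$expected" ] || return 1
  done
}
```

On a node: `versions_match v1.17.0 "$(kubelet --version)" "$(kubeadm version -o short)" "$(kubectl version --client --short)"`.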

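4.4 Start Docker

Configure a registry mirror in China by putting the following in /etc/docker/daemon.json (for example with `vi /etc/docker/daemon.json`). Replace the address with your own mirror if you have one:

```json
{
  "registry-mirrors": ["https://dlbpv56y.mirror.aliyuncs.com"]
}
```

Then enable and start Docker:

```shell
systemctl enable docker
systemctl start docker
```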

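4.5 Start kubelet

```shell
systemctl enable kubelet
systemctl start kubelet
```

Until `kubeadm init` or `kubeadm join` runs, kubelet has no configuration and restarts every few seconds. This is expected.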

5. Initialize the cluster on the master node (192.168.3.71)

```shell
kubeadm init \
  --apiserver-advertise-address=192.168.3.71 \
  --image-repository registry.aliyuncs.com/google_containers \
  --kubernetes-version v1.17.0 \
  --service-cidr=10.96.0.0/12 \
  --pod-network-cidr=10.244.0.0/16
```
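`--service-cidr` and `--pod-network-cidr` must not overlap each other or the hosts' own network. Here the host network is assumed to be 192.168.3.0/24, based on the node IPs. 10.244.0.0/16 is also the default `Network` in flannel's kube-flannel.yml, which is why it fits the flannel install below. The POSIX sh sketch below checks whether two IPv4 ranges overlap. The function names are hypothetical.

```shell
#!/bin/sh
# Hypothetical helpers for checking whether two IPv4 CIDR ranges overlap.

# Convert a dotted-quad IPv4 address such as 10.96.0.0 to an integer.
ip_to_int() {
  local a b c d rest
  a=${1%%.*}; rest=${1#*.}
  b=${rest%%.*}; rest=${rest#*.}
  c=${rest%%.*}; d=${rest#*.}
  echo $(( (a << 24) + (b << 16) + (c << 8) + d ))
}

# Print the first and last address of a CIDR block as integers: "START END".
cidr_range() {
  local start size
  start=$(ip_to_int "${1%/*}")
  size=$(( 1 << (32 - ${1#*/}) ))
  start=$(( start - start % size ))   # align to the network address
  echo "$start $(( start + size - 1 ))"
}

# Succeed if the two CIDR blocks share at least one address.
cidrs_overlap() {
  local r1 r2
  r1=$(cidr_range "$1")
  r2=$(cidr_range "$2")
  # Ranges overlap when each one starts no later than the other one ends
  [ "${r1% *}" -le "${r2#* }" ] && [ "${r2% *}" -le "${r1#* }" ]
}
```

For example, `cidrs_overlap 10.96.0.0/12 10.244.0.0/16` fails, meaning the two ranges used above do not collide.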

`kubeadm init` takes a few minutes because it has to pull the control-plane images.

Output:

```
W1218 21:47:32.757652 29684 validation.go:28] Cannot validate kube-proxy config - no validator is available
W1218 21:47:32.757701 29684 validation.go:28] Cannot validate kubelet config - no validator is available
[init] Using Kubernetes version: v1.17.0
[preflight] Running pre-flight checks
[preflight] Pulling images required for setting up a Kubernetes cluster
[preflight] This might take a minute or two, depending on the speed of your internet connection
[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Starting the kubelet
[certs] Using certificateDir folder "/etc/kubernetes/pki"
[certs] Generating "ca" certificate and key
[certs] Generating "apiserver" certificate and key
[certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.3.71]
[certs] Generating "apiserver-kubelet-client" certificate and key
[certs] Generating "front-proxy-ca" certificate and key
[certs] Generating "front-proxy-client" certificate and key
[certs] Generating "etcd/ca" certificate and key
[certs] Generating "etcd/server" certificate and key
[certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.3.71 127.0.0.1 ::1]
[certs] Generating "etcd/peer" certificate and key
[certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.3.71 127.0.0.1 ::1]
[certs] Generating "etcd/healthcheck-client" certificate and key
[certs] Generating "apiserver-etcd-client" certificate and key
[certs] Generating "sa" key and public key
[kubeconfig] Using kubeconfig folder "/etc/kubernetes"
[kubeconfig] Writing "admin.conf" kubeconfig file
[kubeconfig] Writing "kubelet.conf" kubeconfig file
[kubeconfig] Writing "controller-manager.conf" kubeconfig file
[kubeconfig] Writing "scheduler.conf" kubeconfig file
[control-plane] Using manifest folder "/etc/kubernetes/manifests"
[control-plane] Creating static Pod manifest for "kube-apiserver"
[control-plane] Creating static Pod manifest for "kube-controller-manager"
W1218 21:50:43.371794 29684 manifests.go:214] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
[control-plane] Creating static Pod manifest for "kube-scheduler"
W1218 21:50:43.373196 29684 manifests.go:214] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"
[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
[apiclient] All control plane components are healthy after 39.004789 seconds
[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[kubelet] Creating a ConfigMap "kubelet-config-1.17" in namespace kube-system with the configuration for the kubelets in the cluster
[upload-certs] Skipping phase. Please see --upload-certs
[mark-control-plane] Marking the node k8s-master as control-plane by adding the label "node-role.kubernetes.io/master=''"
[mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
[kubelet-check] Initial timeout of 40s passed.
[bootstrap-token] Using token: czc135.u5r001hxfk6ymz52
[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.3.71:6443 --token czc135.u5r001hxfk6ymz52 \
    --discovery-token-ca-cert-hash sha256:2866f682c032d586e814e48032049177946bb44d9241f135872702030712456d
```

Follow the instructions in the output:

```shell
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config
```

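Install the flannel network plugin:

```shell
kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/2140ac876ef134e0ed5af15c65e414cf26827915/Documentation/kube-flannel.yml
```

For more information on flannel, see the coreos/flannel project on GitHub (now maintained as flannel-io/flannel).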

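6. Join the worker node (192.168.3.72) to the cluster

On 192.168.3.72, run the join command printed by `kubeadm init`. The token and hash below come from the author's cluster; use your own.

```shell
kubeadm join 192.168.3.71:6443 --token czc135.u5r001hxfk6ymz52 \
    --discovery-token-ca-cert-hash sha256:2866f682c032d586e814e48032049177946bb44d9241f135872702030712456d
```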
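The bootstrap token in the join command is valid for 24 hours by default. If it has expired, run `kubeadm token create --print-join-command` on the master to get a fresh join command. If you saved the `kubeadm init` output to a file, for example by piping it through `tee kubeadm-init.log` (the file name is only an example), the sketch below recovers the first join command as a single line. It merges any continuation lines that end in a backslash. The function name is hypothetical.

```shell
#!/bin/sh
# Hypothetical helper: print the first "kubeadm join ..." command found in saved
# kubeadm init output as one line, merging lines that end in a backslash.
# $1 - file containing the kubeadm init output
join_command_from_log() {
  awk '
    /^[ \t]*kubeadm join/ {
      cmd = $0
      while (cmd ~ /\\[ \t]*$/ && (getline line) > 0) {
        sub(/\\[ \t]*$/, "", cmd)
        cmd = cmd " " line
      }
      gsub(/[ \t]+/, " ", cmd)   # collapse indentation and repeated spaces
      sub(/^ /, "", cmd)
      sub(/ $/, "", cmd)
      print cmd
      exit
    }' "$1"
}
```

Usage: `join_command_from_log kubeadm-init.log`, then run the printed command on the worker node.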

Output:

```
W1219 21:26:34.152395 11656 join.go:346] [preflight] WARNING: JoinControlPane.controlPlane settings will be ignored when control-plane flag is not set.
[preflight] Running pre-flight checks
[preflight] Reading configuration from the cluster...
[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -oyaml'
[kubelet-start] Downloading configuration for the kubelet from the "kubelet-config-1.17" ConfigMap in the kube-system namespace
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Starting the kubelet
[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:
* Certificate signing request was sent to apiserver and a response was received.
* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.
```

7. Cluster checks

7.1 Check the cluster nodes

```
[root@k8s-master /]# kubectl get node
NAME         STATUS   ROLES    AGE     VERSION
k8s-master   Ready    master   5m25s   v1.17.0
k8s-node01   Ready    <none>   2m22s   v1.17.0
```
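A node stays `NotReady` until the network plugin (flannel here) is running on it. To script this check, the sketch below (hypothetical function name) parses `kubectl get nodes --no-headers`. It treats any STATUS other than exactly `Ready` as a failure. As an alternative, `kubectl wait --for=condition=Ready nodes --all --timeout=300s` blocks until every node is Ready.

```shell
#!/bin/sh
# Hypothetical helper: reads "kubectl get nodes --no-headers" output on stdin,
# prints the names of nodes that are not Ready, and succeeds only if at least
# one node is listed and every STATUS is exactly "Ready".
all_nodes_ready() {
  awk '
    $2 != "Ready" { print $1; bad = 1 }
    END { exit (NR == 0 || bad) }'
}
```

Usage on the master: `kubectl get nodes --no-headers | all_nodes_ready && echo "all nodes Ready"`.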

7.2 Check the pod status

```
[root@k8s-master /]# kubectl get pods -n kube-system
NAME                                 READY   STATUS    RESTARTS   AGE
coredns-9d85f5447-6hz7p              1/1     Running   0          4m56s
coredns-9d85f5447-9fv7v              1/1     Running   0          4m56s
etcd-k8s-master                      1/1     Running   0          4m51s
kube-apiserver-k8s-master            1/1     Running   0          4m51s
kube-controller-manager-k8s-master   1/1     Running   0          4m51s
kube-flannel-ds-amd64-8gcwc          1/1     Running   0          2m12s
kube-flannel-ds-amd64-g5752          1/1     Running   0          3m17s
kube-proxy-qwd7r                     1/1     Running   0          2m12s
kube-proxy-t22g5                     1/1     Running   0          4m56s
kube-scheduler-k8s-master            1/1     Running   0          4m51s
```
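Every pod above should be `Running` with all containers ready (`1/1`). The sketch below (hypothetical function name) flags any pod where that does not hold. It assumes single-namespace output from `kubectl get pods -n <namespace> --no-headers`; `-A` output has an extra NAMESPACE column and would need different field numbers. As an alternative, `kubectl wait --for=condition=Ready pods --all -n kube-system --timeout=300s` waits for the same condition.

```shell
#!/bin/sh
# Hypothetical helper: reads "kubectl get pods --no-headers" output on stdin and
# prints pods that are not Running or whose READY column is not N/N.
# Succeeds only if no such pod was found.
unhealthy_pods() {
  awk '
    {
      split($2, ready, "/")   # READY column, e.g. "1/1"
      if (ready[1] != ready[2] || $3 != "Running") { print $1; bad = 1 }
    }
    END { exit bad }'
}
```

Usage on the master: `kubectl get pods -n kube-system --no-headers | unhealthy_pods && echo "kube-system healthy"`.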