Python爬取起点中文网月票榜前500名网络小说介绍

观察网页结构

进入起点原创风云榜:http://r.qidian.com/yuepiao?chn=-1

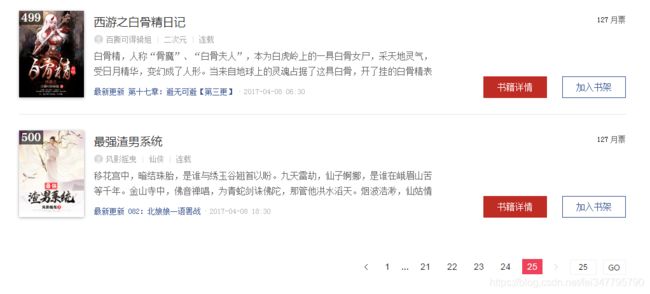

老套路,懂我的人都知道我要看看有多少内容和页数需要爬。

https://ask.hellobi.com/uploads/article/20170408/0b0192094e6d073f9a16bc3211e7e904.png

编写爬虫

import requests

from bs4 import BeautifulSoup

res=requests.get('http://r.qidian.com/yuepiao?chn=-1&page=1')

#print(res)#中间打印看看,好习惯

soup=BeautifulSoup(res.text,'html.parser')#

筛选器

#print(soup)

for news in soup.select('.rank-view-list li'):#定位

print(news)

经过测试

for news in soup.select('.rank-view-list li'):#Wrap后面一定有个空格,因为网页里有

#print(news)

print(news.select('a')[1].text,news.select('a')[2].text,news.select('a')[3].text,news.select('p')[1].text,news.select('p')[2].text,news.select('a')[0]['href'])

可以设置提取内容如上面代码所示

for news in soup.select('.rank-view-list li'):#Wrap后面一定有个空格,因为网页里有

#print(news)

#print(news.select('a')[1].text,news.select('a')[2].text,news.select('a')[3].text,news.select('p')[1].text,news.select('p')[2].text,news.select('a')[0]['href'])

newsary.append({'title':news.select('a')[1].text,'name':news.select('a')[2].text,'style':news.select('a')[3].text,'describe':news.select('p')[2].text,'url':news.select('a')[0]['href']})

使用pandas的DataFrame格式存放

使用循环爬取25页内容

import requests

from bs4 import BeautifulSoup

newsary=[]

for i in range(25):

res=requests.get('http://r.qidian.com/yuepiao?chn=-1&page='+str(i))

#print(res)

soup=BeautifulSoup(res.text,'html.parser')

#print(soup)

#for news in soup.select('.rank-view-list h4'):#Wrap后面一定有个空格,因为网页里有

for news in soup.select('.rank-view-list li'):#Wrap后面一定有个空格,因为网页里有

#print(news)

#print(news.select('a')[1].text,news.select('a')[2].text,news.select('a')[3].text,news.select('p')[1].text,news.select('p')[2].text,news.select('a')[0]['href'])

newsary.append({'title':news.select('a')[1].text,'name':news.select('a')[2].text,'style':news.select('a')[3].text,'describe':news.select('p')[1].text,'lastest':news.select('p')[2].text,'url':news.select('a')[0]['href']})

import pandas

newsdf=pandas.DataFrame(newsary)

newsdf