Multi-Object Detection and Recognition, YOLOv3 Explained (Part 3): Network Structure and Implementation (PyTorch)

Network Structure

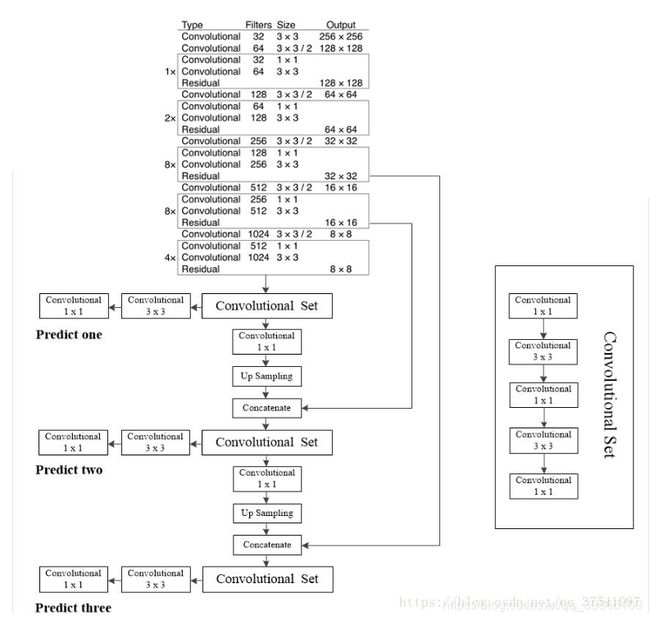

Darknet 53

1. Viewed as a whole, the network has 152 layers in total and consists of two parts: a feature extraction network and a detection network.

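Start with the feature extraction network. (1) It uses only 1 × 1 and 3 × 3 convolution kernels together with residual connections, and the residual connections are what allow the network to be made this deep. (2) It uses convolution in place of pooling (downsampling is done by 3 × 3 convolutions with stride 2) so that features are extracted more fully. Pooling is mainly a way to compress information quickly and it discards information, which makes it a poor fit for feature extraction here. (3) In the early stages the feature maps are large and so is the computation, so the network uses few residual blocks and few channels. As the feature maps shrink in the later stages, the channel count and the number of residual blocks increase, which balances the computation against the earlier stages and makes full use of GPU memory.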
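The downsampling schedule described above can be checked with a few lines of arithmetic. This is a minimal sketch, not part of the original code: it assumes a 416 × 416 input (the size that gives a 13 × 13 final feature map), and the helper `conv_out_size` and the list `RES_BLOCKS` are hypothetical names introduced for illustration. The residual block counts per stage are taken from the implementation in this article.

```python
def conv_out_size(size, kernel, stride, pad):
    """Output height/width of a convolution: floor((size + 2*pad - kernel) / stride) + 1."""
    return (size + 2 * pad - kernel) // stride + 1


# Residual blocks per stage in the feature extraction network: 1, 2, 8, 8, 4.
RES_BLOCKS = [1, 2, 8, 8, 4]

# Assumption: 416 x 416 input. The first 3x3 conv has stride 1 and keeps the size.
size = conv_out_size(416, kernel=3, stride=1, pad=1)
sizes = [size]
conv_layers = 1  # the first conv

for n in RES_BLOCKS:
    # Each stage starts with a 3x3 stride-2 conv that halves the feature map.
    size = conv_out_size(size, kernel=3, stride=2, pad=1)
    sizes.append(size)
    # One downsampling conv plus two convs (1x1 and 3x3) per residual block.
    conv_layers += 1 + 2 * n

print(sizes)        # [416, 208, 104, 52, 26, 13]
print(conv_layers)  # 52
```

The 52 convolutional layers counted here, plus the fully connected classification layer of the original backbone, give the 53 in the name Darknet-53.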

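2. Now look at the detection network. The feature map produced for predict one is 13 × 13 and is used to detect large objects, because a 13 × 13 feature map has a large receptive field. Predict two works on a 26 × 26 feature map obtained by upsampling the 13 × 13 feature map, and is used to detect medium-sized objects. A concatenation (Concatenate) is used here so that deep and shallow information are fully fused. Predict three works the same way on a 52 × 52 feature map, for small objects.

The code implementation follows.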
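The channel counts in the detection network follow directly from the concatenations and from the number of values predicted per grid cell. The sketch below only does the arithmetic. It assumes 80 classes as a stand-in for `cfg.CLASS_NUM`, and `head_channels` is a hypothetical helper, not a function from the original code.

```python
CLASS_NUM = 80  # assumption: stands in for cfg.CLASS_NUM


def head_channels(class_num, anchors_per_cell=3):
    """Channels of a prediction map: 3 anchors x (4 box values + 1 confidence + classes)."""
    return anchors_per_cell * (5 + class_num)


# Concatenation adds channels: upsampled deep branch + shallower backbone feature map.
concat_26 = 256 + 512  # upsampled 13x13 branch (256) + 26x26 backbone output (512)
concat_52 = 128 + 256  # upsampled 26x26 branch (128) + 52x52 backbone output (256)

# (channels, height, width) of the three prediction maps, batch dimension omitted.
shapes = {grid: (head_channels(CLASS_NUM), grid, grid) for grid in (13, 26, 52)}

print(concat_26, concat_52)  # 768 384
print(shapes[13])            # (255, 13, 13)
```

The values 768 and 384 are the input channel counts passed to the two `Convolutionalset` modules that follow the concatenations.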

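import torch
import torch.nn as nn

import cfg  # project-local config module; CLASS_NUM is read from it below
from torchsummary import summary  # third-party; only needed to print a model summary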

class upsampling(torch.nn.Module):
    """Doubles the spatial size with nearest-neighbour interpolation."""

    def __init__(self):
        super(upsampling, self).__init__()

    def forward(self, x):
        return torch.nn.functional.interpolate(x, scale_factor=2, mode='nearest')

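class convolutionlayer(torch.nn.Module):
    """Basic building block: Conv2d + BatchNorm2d + LeakyReLU."""

    def __init__(self, in_channels, out_channels, kerals, strides, pad):
        super(convolutionlayer, self).__init__()
        self.sub_module = nn.Sequential(
            # kerals = kernel size, strides = stride, pad = padding
            nn.Conv2d(in_channels, out_channels, kerals, strides, pad),
            nn.BatchNorm2d(out_channels),
            # PyTorch's default negative_slope is 0.01; the original Darknet uses 0.1
            nn.LeakyReLU()
        )

    def forward(self, x):
        return self.sub_module(x)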

class downsampling(torch.nn.Module):
    """Halves the spatial size with a 3x3 stride-2 convolution instead of pooling."""

    def __init__(self, in_channels, out_channels):
        super(downsampling, self).__init__()
        self.sub_module = convolutionlayer(in_channels, out_channels, 3, 2, 1)

    def forward(self, x):
        return self.sub_module(x)

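class Residuallayer(torch.nn.Module):
    """Residual block: 1x1 conv halves the channels, 3x3 conv restores them, then add the input."""

    def __init__(self, in_channels):
        super(Residuallayer, self).__init__()
        self.sub_module = nn.Sequential(
            convolutionlayer(in_channels, in_channels // 2, 1, 1, 0),
            convolutionlayer(in_channels // 2, in_channels, 3, 1, 1)
        )

    def forward(self, x):
        # Input and output shapes match, so they can be added element-wise
        return self.sub_module(x) + x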

class Convolutionalset(torch.nn.Module):
    """Five alternating 1x1 / 3x3 convolutions; the output has out_channels channels."""

    def __init__(self, in_channels, out_channels):
        super(Convolutionalset, self).__init__()
        self.sub_module = nn.Sequential(
            convolutionlayer(in_channels, out_channels, 1, 1, 0),
            convolutionlayer(out_channels, in_channels, 3, 1, 1),
            convolutionlayer(in_channels, out_channels, 1, 1, 0),
            convolutionlayer(out_channels, in_channels, 3, 1, 1),
            convolutionlayer(in_channels, out_channels, 1, 1, 0)
        )

    def forward(self, x):
        return self.sub_module(x)

class Miannet(torch.nn.Module):
    """Feature extraction network plus the three detection branches."""

    def __init__(self):
        super(Miannet, self).__init__()

        # Backbone up to the 52 x 52 feature map (256 channels)
        self.net_52 = nn.Sequential(
            convolutionlayer(3, 32, 3, 1, 1),
            convolutionlayer(32, 64, 3, 2, 1),
            Residuallayer(64),
            downsampling(64, 128),
            *[Residuallayer(128) for _ in range(2)],
            downsampling(128, 256),
            *[Residuallayer(256) for _ in range(8)]
        )

        # Backbone up to the 26 x 26 feature map (512 channels)
        self.net_26 = nn.Sequential(
            convolutionlayer(256, 512, 3, 2, 1),
            *[Residuallayer(512) for _ in range(8)]
        )

        # Backbone up to the 13 x 13 feature map (1024 channels)
        self.net_13 = nn.Sequential(
            convolutionlayer(512, 1024, 3, 2, 1),
            *[Residuallayer(1024) for _ in range(4)]
        )

        # 13 x 13 detection branch
        self.result0_13 = nn.Sequential(
            Convolutionalset(1024, 512)
        )
        self.result1_13 = nn.Sequential(
            convolutionlayer(512, 1024, 3, 1, 1),
            nn.Conv2d(1024, 3 * (5+cfg.CLASS_NUM), 1, 1, 0)
        )

        # 26 x 26 detection branch: reduce channels, upsample, then concatenate
        self.result0_26 = nn.Sequential(
            convolutionlayer(512, 256, 1, 1, 0),
            upsampling()
        )
        self.result1_26 = nn.Sequential(
            Convolutionalset(768, 256)  # 768 = 256 (upsampled) + 512 (backbone)
        )
        self.result2_26 = nn.Sequential(
            convolutionlayer(256, 512, 3, 1, 1),
            nn.Conv2d(512, 3 * (5+cfg.CLASS_NUM), 1, 1, 0)
        )

        # 52 x 52 detection branch: reduce channels, upsample, then concatenate
        self.result0_52 = nn.Sequential(
            convolutionlayer(256, 128, 1, 1, 0),
            upsampling()
        )
        self.result1_52 = nn.Sequential(
            Convolutionalset(384, 128)  # 384 = 128 (upsampled) + 256 (backbone)
        )
        self.result2_52 = nn.Sequential(
            convolutionlayer(128, 256, 3, 1, 1),
            nn.Conv2d(256, 3 * (5+cfg.CLASS_NUM), 1, 1, 0)
        )

    def forward(self, x):
        # Backbone feature maps at three scales
        l_52 = self.net_52(x)
        m_26 = self.net_26(l_52)
        s_13 = self.net_13(m_26)

        # 13 x 13 prediction
        result_13_out0 = self.result0_13(s_13)
        result_13_out1 = self.result1_13(result_13_out0)

        # 26 x 26 prediction
        result_26_out0 = self.result0_26(result_13_out0)
        result_26_out1 = torch.cat((result_26_out0, m_26), dim=1)  # concatenate along the channel dimension
        result_26_out2 = self.result1_26(result_26_out1)
        result_26_out3 = self.result2_26(result_26_out2)

        # 52 x 52 prediction
        result_52_out0 = self.result0_52(result_26_out2)
        result_52_out1 = torch.cat((result_52_out0, l_52), dim=1)  # concatenate along the channel dimension
        result_52_out2 = self.result1_52(result_52_out1)
        result_52_out3 = self.result2_52(result_52_out2)

        return result_13_out1, result_26_out3, result_52_out3
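
Each of the three returned tensors has `3 * (5 + cfg.CLASS_NUM)` channels, so downstream code has to split that channel dimension back into 3 anchors and `5 + CLASS_NUM` values per anchor. The sketch below shows one common way to do this. It is an assumption about how the output is consumed, not something taken from the code above: it uses a random tensor as a stand-in for the network output, 80 classes as a stand-in for `cfg.CLASS_NUM`, and assumes the channels are laid out anchor by anchor.

```python
import torch

CLASS_NUM = 80       # assumption: stands in for cfg.CLASS_NUM
N, H, W = 2, 13, 13  # batch size and grid size of the 13 x 13 branch

# Stand-in for one tensor returned by forward(): (N, 3 * (5 + CLASS_NUM), H, W)
out = torch.randn(N, 3 * (5 + CLASS_NUM), H, W)

# Move channels last, then split them into 3 anchors x (5 + CLASS_NUM) values.
pred = out.permute(0, 2, 3, 1).reshape(N, H, W, 3, 5 + CLASS_NUM)

print(pred.shape)  # torch.Size([2, 13, 13, 3, 85])
```

With this layout, `pred[n, i, j, a]` holds the `5 + CLASS_NUM` values predicted for anchor `a` at grid cell `(i, j)` of image `n`.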