TensorFlow: Saving and Loading Models

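Because the official website was inaccessible, I had to rely on other people's blog posts and Stack Overflow questions. Below is one of the answers to such a question: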

Tensorflow version 0.11:

Save the model:

import tensorflow as tf

# Prepare to feed input, i.e. feed_dict and placeholders
w1 = tf.placeholder("float", name="w1")
w2 = tf.placeholder("float", name="w2")
b1 = tf.Variable(2.0, name="bias")
feed_dict = {w1: 4, w2: 8}

# Define a test operation that we will restore
w3 = tf.add(w1, w2)
w4 = tf.multiply(w3, b1, name="op_to_restore")
sess = tf.Session()
sess.run(tf.global_variables_initializer())

# Create a saver object which will save all the variables
saver = tf.train.Saver()

# Run the operation by feeding input
print(sess.run(w4, feed_dict))
# Prints 24.0, i.e. (w1 + w2) * b1 = (4 + 8) * 2

# Now, save the graph
saver.save(sess, 'my_test_model', global_step=1000)

Restore the model:

import tensorflow as tf

sess = tf.Session()

# First, load the meta graph and restore the weights
saver = tf.train.import_meta_graph('my_test_model-1000.meta')
saver.restore(sess, tf.train.latest_checkpoint('./'))

# Access saved variables directly
print(sess.run('bias:0'))
# This prints 2.0, the value of bias that we saved

# Now look up the placeholders by name and
# create a feed_dict to feed new data
graph = tf.get_default_graph()
w1 = graph.get_tensor_by_name("w1:0")
w2 = graph.get_tensor_by_name("w2:0")
feed_dict = {w1: 13.0, w2: 17.0}

# Now, access the op that you want to run
op_to_restore = graph.get_tensor_by_name("op_to_restore:0")
print(sess.run(op_to_restore, feed_dict))
# This prints 60.0, calculated as (w1 + w2) * b1 = (13 + 17) * 2

Reference:

- https://stackoverflow.com/questions/33759623/tensorflow-how-to-save-restore-a-model

- https://cv-tricks.com/tensorflow-tutorial/save-restore-tensorflow-models-quick-complete-tutorial/

- https://blog.csdn.net/liuxiao214/article/details/79048136

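Summary

- Saving the model
  - Remember to name your tensors, e.g. the name argument in tf.placeholder("float", name="w1")
  - saver = tf.train.Saver()
  - saver.save(sess, 'my_test_model', global_step=1000)
  - my_test_model is the filename prefix; global_step is the training step being saved and is appended to the filename (my_test_model-1000)
- Loading the model
  - saver = tf.train.import_meta_graph('my_test_model-1000.meta')
  - saver.restore(sess, tf.train.latest_checkpoint('./'))
  - graph = tf.get_default_graph()
  - graph.get_tensor_by_name("w1:0")  # "w1" is the name given to the tensor through name=
  - When referring to a tensor by name, print the tensor first and check its actual name to avoid errors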
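To make the file naming in the summary concrete, the sketch below lists the files that a `saver.save(sess, 'my_test_model', global_step=1000)` call is expected to leave on disk. It assumes the V2 checkpoint format with a single data shard (the TensorFlow 1.x default); the helper `expected_checkpoint_files` is hypothetical and only formats strings, it does not call TensorFlow.

```python
def expected_checkpoint_files(prefix, global_step):
    """Return the file names a TF 1.x Saver is expected to write.

    Assumption: V2 checkpoint format with one data shard.
    """
    base = "{}-{}".format(prefix, global_step)
    return [
        "checkpoint",                   # text file recording the latest checkpoint prefix
        base + ".meta",                 # serialized graph, read by import_meta_graph
        base + ".index",                # index of the saved variables
        base + ".data-00000-of-00001",  # the variable values themselves
    ]


for name in expected_checkpoint_files("my_test_model", 1000):
    print(name)
```

The `.meta` name is what `tf.train.import_meta_graph` receives, while `tf.train.latest_checkpoint` returns the prefix `my_test_model-1000` without any extension.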

CNN Example

TensorFlow code

# Module-level imports used by the methods below
import os
import tensorflow as tf

def run(self):
    print('Params{\n learning_rate => %.5f\n iteration => %d\n divide_rate => %.2f\n the number of units => %d\n early_stopping => %d\n}\n' %
          (self.learn_rate, self.round, self.divide_rate, self.units, self.early_stopping))
    # image, label = self.load_image()
    train_x, train_y, test_x, test_y = self.divide(rate=self.divide_rate, shuffle=self.shuffle)
    print('train_image_size:', train_x.shape)
    print('train_label_size:', train_y.shape)
    print('test_image_size: ', test_x.shape)
    print('test_label_size: ', test_y.shape)
    self.text = self.text + '\n' + "train_image_size: {}".format(train_x.shape)
    self.text = self.text + '\n' + "train_label_size: {}".format(train_y.shape)
    self.text = self.text + '\n' + "test_image_size: {}".format(test_x.shape)
    self.text = self.text + '\n' + "test_label_size: {}".format(test_y.shape)
    input_x = tf.placeholder(tf.float32, [None, 112 * 92], name='input_x')  # None: the first (batch) dimension can have any length
    output_y = tf.placeholder(tf.int32, [None, 40])  # output: one-hot labels over 40 classes
    input_x_images = tf.reshape(input_x, [-1, 112, 92, 1])  # input reshaped to [batch, height, width, channels]
    """Construct convolution neural network"""
    """First convolution layer"""

    convolution_one = tf.layers.conv2d(
        inputs=input_x_images,  # shape [112, 92, 1]
        filters=32,  # 32 filters, so the output depth is 32
        kernel_size=[5, 5],  # each filter is 5 x 5
        strides=1,  # stride of 1
        padding='same',  # 'same' keeps the output size unchanged, so two rings of zeros are padded around the border
        activation=tf.nn.relu  # ReLU activation
    )  # shape [112, 92, 32]
    """First pooling layer"""
    pool_one = tf.layers.max_pooling2d(
        inputs=convolution_one,  # shape [112, 92, 32]
        pool_size=[2, 2],  # pooling window is 2 x 2
        strides=2  # stride of 2
    )  # shape [56, 46, 32]
    """Second convolution layer"""
    convolution_two = tf.layers.conv2d(
        inputs=pool_one,  # shape [56, 46, 32]
        filters=64,  # 64 filters, so the output depth is 64
        kernel_size=[5, 5],  # each filter is 5 x 5
        strides=1,  # stride of 1
        padding='same',  # 'same' keeps the output size unchanged, so two rings of zeros are padded around the border
        activation=tf.nn.relu  # ReLU activation
    )  # shape [56, 46, 64]
    """Second pooling layer"""
    pool_two = tf.layers.max_pooling2d(
        inputs=convolution_two,  # shape [56, 46, 64]
        pool_size=[2, 2],  # pooling window is 2 x 2
        strides=2  # stride of 2
    )  # shape [28, 23, 64]
    """Flattening"""
    flat = tf.reshape(pool_two, [-1, 28 * 23 * 64])  # shape [batch, 28 * 23 * 64]
    """Fully connected layer with self.units neurons"""
    dense = tf.layers.dense(inputs=flat, units=self.units, activation=tf.nn.relu)
    # Dropout with rate=0.5 (50%). Note: tf.layers.dropout defaults to training=False,
    # so it only drops units if training=True is passed.
    dropout = tf.layers.dropout(inputs=dense, rate=0.5, name='dropout')
    """Output layer; no activation function is needed"""
    logit = tf.layers.dense(inputs=dropout, units=40, name='logit')  # output, shape [batch, 40]
    """Compute the loss: softmax cross-entropy between the one-hot labels and the logits"""
    loss = tf.losses.softmax_cross_entropy(onehot_labels=output_y, logits=logit)
    # use Adam Optimizer to minimize the loss with the given learning rate
    train_op = tf.train.AdamOptimizer(learning_rate=self.learn_rate).minimize(loss)  # learn_rate
    """Compute accuracy on the test data set"""
    # accuracy = tf.metrics.accuracy(labels=tf.argmax(output_y), predictions=tf.argmax(logit))[1]
    # axis=1 takes the argmax per sample (per row); the default axis=0 would compare across the batch
    correct_prediction = tf.equal(tf.argmax(output_y, axis=1), tf.argmax(logit, axis=1))  # one boolean per sample
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

    epoch = 0  # for counting
    with tf.Session() as sess:
        """Initialize global and local variables"""
        init = tf.group(tf.global_variables_initializer(), tf.local_variables_initializer())
        sess.run(init)
        print('\n-------------start to train model------------')
        self.text = self.text + '\n' + "\n------------start to train model------------"
        count, formal_score = 0, 0
        for i in range(self.round):
            """start graph computation"""
            epoch = i
            train_loss, train_op_ = sess.run([loss, train_op], feed_dict={input_x: train_x, output_y: train_y})
            if i % 1 == 0:  # always true: evaluate after every step
                test_accuracy = sess.run(accuracy, feed_dict={input_x: test_x, output_y: test_y})
                print("Step=%d, Train loss=%.3f, [Test accuracy=%.3f]" % (i+1, train_loss, test_accuracy))
                self.text = self.text + '\n' + "Step={}, Loss={:.4f}, [Accuracy={:.3f}]".format(i+1, train_loss, test_accuracy)
                """Test a sample output
                test = self.load_predict_sample('image/10.png')
                output = sess.run(logit, feed_dict={input_x: test})
                print('prediction: ', output)
                """
                print(logit)  # prints the tensor object, which shows its name in the graph
                if test_accuracy <= formal_score:
                    # no improvement over the best score so far
                    count += 1
                    if count >= self.early_stopping:
                        print('early stopping.')
                        self.text = self.text + '\n' + "early stopping."
                        # if self.__store_model is True:
                        #     self.store_model(sess, epoch)
                        break
                    else:
                        continue
                else:
                    # improvement: remember the new best score and reset the counter
                    formal_score = test_accuracy
                    count = 0
        # save while the session is still open
        if self.__store_model is True:
            self.store_model(sess, epoch)
    self.text = self.text + '\n' + "over."
    return self.text
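The early-stopping rule inside the training loop can be looked at separately from TensorFlow. The following is a minimal sketch that mirrors the `count` / `formal_score` logic above on a plain list of per-step test accuracies; the function name `early_stopping_step` and the sample numbers are made up for illustration.

```python
def early_stopping_step(accuracies, patience):
    """Return the index of the step at which training would stop.

    A step that fails to beat the best score so far increments a counter;
    any improvement resets the counter to zero. If the counter never
    reaches `patience`, the index of the last step is returned.
    """
    best_score, count = 0, 0
    for step, accuracy in enumerate(accuracies):
        if accuracy <= best_score:
            count += 1
            if count >= patience:
                return step
        else:
            best_score = accuracy
            count = 0
    return len(accuracies) - 1


# Hypothetical accuracy history, one value per training step.
history = [0.10, 0.30, 0.30, 0.25, 0.40, 0.35, 0.38, 0.39, 0.50]
print(early_stopping_step(history, patience=3))
```

With this rule the counter only resets on a strict improvement, so a long plateau at the best score also triggers the stop.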

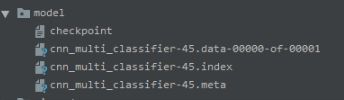
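The layer shapes noted in the comments of the network code can be checked with a little arithmetic. This sketch assumes what the code above uses: `padding='same'` with stride 1 for the convolutions, and the default `padding='valid'` of `tf.layers.max_pooling2d` for the 2 x 2, stride-2 pooling. The helper names `conv_same` and `pool_valid` are hypothetical.

```python
import math


def conv_same(size, stride=1):
    """Output length of a 'same'-padded convolution along one dimension."""
    return math.ceil(size / stride)


def pool_valid(size, pool=2, stride=2):
    """Output length of a 'valid' (unpadded) pooling along one dimension."""
    return (size - pool) // stride + 1


height, width = 112, 92
for filters in (32, 64):
    height, width = conv_same(height), conv_same(width)
    print("conv:", (height, width, filters))
    height, width = pool_valid(height), pool_valid(width)
    print("pool:", (height, width, filters))

# Number of features per sample after flattening the last pooling output.
flat_size = height * width * 64
print("flat:", flat_size)
```

The final spatial size is what determines the second dimension used in the `tf.reshape` call before the fully connected layer.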

Saving the model

def store_model(self, sess, epoch):
    absolute_path = 'face_recognition/'
    saver = tf.train.Saver()  # by default the Saver keeps at most the 5 most recent checkpoints
    my_file = absolute_path + 'model'
    if os.path.exists(my_file) is False:
        os.mkdir(my_file)
        print('make directory successful.')
        self.text = self.text + '\n' + "make directory successful."
    else:
        # the directory already exists: delete any previously saved files
        file_name = os.listdir(my_file)
        if len(file_name) != 0:
            for string in file_name:
                os.remove(my_file + '/' + string)  # delete files
    saver.save(sess, my_file + "/cnn_multi_classifier", global_step=epoch)  # global_step is appended to the file name
    print('model store successfully.')
    self.text = self.text + '\n' + "model store successfully."
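The directory handling in `store_model` (create the folder if missing, otherwise empty it) can be written more compactly with the standard library. Below is a sketch of that idea, run against a throwaway temporary directory instead of the real `face_recognition/model` folder; `reset_directory` is a hypothetical helper, not part of the original class.

```python
import os
import shutil
import tempfile


def reset_directory(path):
    """Create `path` if it is missing, otherwise delete everything inside it."""
    if os.path.isdir(path):
        shutil.rmtree(path)
    os.makedirs(path)  # also creates missing parent directories
    return path


# Demonstrate on a temporary directory rather than a real model folder.
root = tempfile.mkdtemp()
model_dir = os.path.join(root, "model")
reset_directory(model_dir)

# Simulate a checkpoint file left over from a previous run.
open(os.path.join(model_dir, "cnn_multi_classifier-3.meta"), "w").close()

reset_directory(model_dir)
remaining = os.listdir(model_dir)
print(remaining)

shutil.rmtree(root)  # clean up the demonstration directory
```

Unlike `os.mkdir`, `os.makedirs` does not fail when the parent folder is absent, which may matter on a first run.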

Saved result

Loading the model

def load_model(self, test):
    absolute_path = 'face_recognition/'
    if os.path.exists(absolute_path + 'model') is True:
        dir_list = os.listdir(absolute_path + 'model')
        if len(dir_list) == 0:
            print('model is empty.')
            exit(0)
        else:
            with tf.Session() as sess:
                # fall back to a fixed file name, then prefer any .meta file found in the directory
                path = absolute_path + 'model/cnn_multi_classifier-19.meta'
                for file in dir_list:
                    if 'meta' in file:
                        path = absolute_path + 'model/' + file
                saver = tf.train.import_meta_graph(path)
                saver.restore(sess, tf.train.latest_checkpoint(absolute_path + "model/"))
                print('load model successfully.')
                graph = tf.get_default_graph()
                # look up the input placeholder and the output tensor by name
                input_x = graph.get_tensor_by_name('input_x:0')
                logit = graph.get_tensor_by_name('logit/BiasAdd:0')
                feed_dict = {input_x: test}
                result = sess.run(logit, feed_dict=feed_dict)
                max_index = sess.run(tf.argmax(result, axis=1))  # find the indices of maximum value
                print('Prediction result:', result)
                print('This image is %d th people.' % (max_index[0] + 1))
                return max_index[0] + 1  # class indices are 0-based, people are numbered from 1
    else:
        print('directory is not exist.')
        exit(0)
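`load_model` takes whichever `.meta` file `os.listdir` happens to return last, and the listing order is not guaranteed. If a directory ever holds several checkpoints, one option is to pick the highest step number explicitly. This is a sketch under the assumption that file names follow the `<prefix>-<step>.meta` pattern produced by `saver.save(..., global_step=...)`; `latest_meta_file` is a hypothetical helper.

```python
import re


def latest_meta_file(file_names):
    """Return the .meta file with the highest step number, or None."""
    pattern = re.compile(r"-(\d+)\.meta$")
    best_name, best_step = None, -1
    for name in file_names:
        match = pattern.search(name)
        if match and int(match.group(1)) > best_step:
            best_name, best_step = name, int(match.group(1))
    return best_name


# Example directory listing (file names are illustrative).
files = [
    "checkpoint",
    "cnn_multi_classifier-9.meta",
    "cnn_multi_classifier-19.meta",
    "cnn_multi_classifier-19.index",
]
print(latest_meta_file(files))
```

The step is compared as an integer because a plain string sort would place `-9` after `-19`.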

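Important note

When referring to a tensor by name, it is best to print the tensor first and check its actual name to avoid errors, as in my example above: I passed name='logit' when creating the logit layer, but the name of its output tensor in the graph is actually 'logit/BiasAdd:0'.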
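A tensor name has the form `<op_name>:<output_index>`, and a layer created with a `name` argument places its ops under that name as a scope, which is why the output ends up as `logit/BiasAdd:0`. The sketch below splits such names and filters a list of op names by scope. The op names in the list are illustrative assumptions for a dense layer named `logit`, not a dump of the real graph; in TensorFlow 1.x the real names could be collected with `[op.name for op in graph.get_operations()]`.

```python
def split_tensor_name(tensor_name):
    """Split a tensor name such as 'logit/BiasAdd:0' into (op_name, output_index)."""
    op_name, _, index = tensor_name.rpartition(":")
    return op_name, int(index)


def ops_in_scope(op_names, scope):
    """Return the op names that live under the given name scope."""
    prefix = scope + "/"
    return [name for name in op_names if name.startswith(prefix)]


# Illustrative op names only (an assumption, not output from a real graph).
op_names = ["input_x", "logit/kernel", "logit/bias", "logit/MatMul", "logit/BiasAdd"]

print(split_tensor_name("logit/BiasAdd:0"))
print(ops_in_scope(op_names, "logit"))
```

Listing the ops under a scope this way makes it easier to spot which one produces the layer's final output before calling `get_tensor_by_name`.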