How to Deploy Dolphin Scheduler 1.3.1 on CDH5


This article records the detailed process of integrating Dolphin Scheduler 1.3.1 on a CDH 5.16.2 cluster. Pay special attention to the MySQL connection string!

1

Purpose of This Document

Record in detail how Dolphin Scheduler 1.3.1 is deployed on CDH5

Deploy Dolphin Scheduler in distributed mode

2

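Deployment Environment and Dependencies

To match the Hive version shipped with CDH5, DS has to be built from source before deployment. A prebuilt CDH5 package is provided for download at the end of this article.

Cluster environment

CDH 5.16.2

HDFS and YARN are both single-instance (no HA)

DS official website

https://dolphinscheduler.apache.org/en-us/

DS dependencies

MySQL: stores the Dolphin Scheduler metadata. PostgreSQL also works; MySQL is used here mainly because the CDH cluster already uses it.

ZooKeeper: the CDH cluster's ZooKeeper is used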

3

Dolphin Scheduler 1.3.1 Cluster Plan

| DS service | master.eights.com | dn1.eights.com | dn2.eights.com |
| --- | --- | --- | --- |
| api | √ | | |
| master | √ | √ | |
| worker/log | √ | √ | |
| alert | √ | | |

4

Building from Source

Prerequisites

maven

jdk

nvm

Pull the code

git clone https://github.com/apache/incubator-dolphinscheduler.git

Switch to a CDH5 branch

git checkout 1.3.1-release

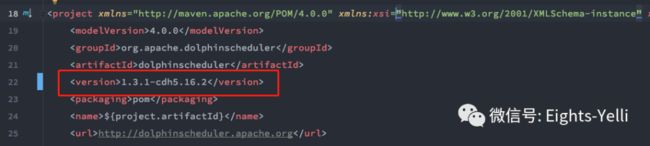

git checkout -b ds-1.3.1-cdh5.16.2

Modify the pom

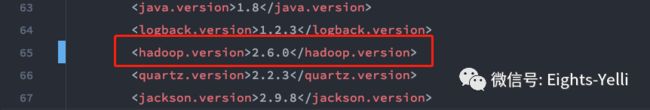

修改根root的hadoop版本,hive版本和version信息,将每一个模块的version都调整为1.3.1-cdh5.16.2

2.6.0

1.1.0

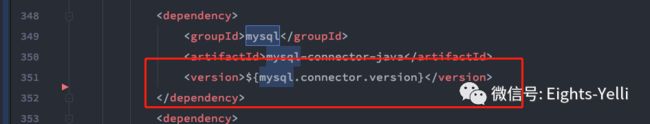

1.3.1-cdh5.16.2 去除mysql包的scope

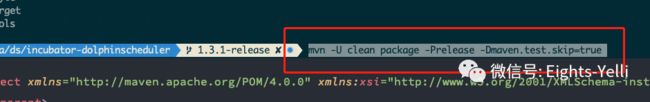

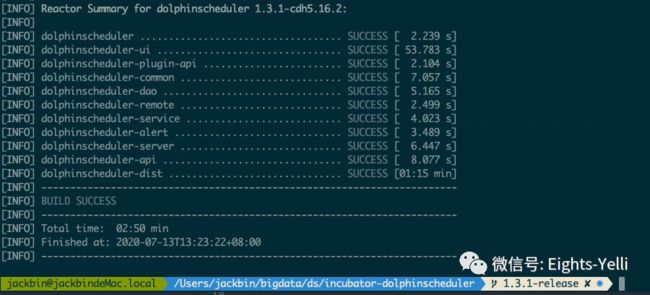

Run the build command to compile from source

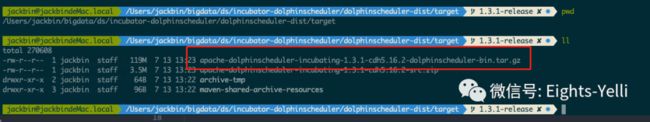

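mvn -U clean package -Prelease -Dmaven.test.skip=true

After the build completes, the dolphinscheduler-dist directory contains the package apache-dolphinscheduler-incubating-1.3.1-cdh5.16.2-dolphinscheduler-bin.tar.gz. In 1.3.1 the frontend and backend are bundled into this single package; there are no longer two separate packages.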
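A quick way to confirm the build produced the package is to search for it under dolphinscheduler-dist. The following is only a minimal sketch: the helper name find_ds_tarball is hypothetical, and it assumes the tarball sits somewhere below dolphinscheduler-dist (with Maven's default layout that would normally be its target/ subdirectory).

```shell
# Sketch: locate the binary tarball produced by the release build.
# Assumption: the package is somewhere below <source root>/dolphinscheduler-dist.
find_ds_tarball() {
  src_root=${1:-.}
  find "$src_root/dolphinscheduler-dist" -type f \
    -name 'apache-dolphinscheduler-incubating-*-dolphinscheduler-bin.tar.gz'
}

# Usage, from the root of the source tree:
#   find_ds_tarball .
```

If nothing is printed, the build most likely did not finish and the Maven output is the place to look.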

5

Component Deployment

Preparation

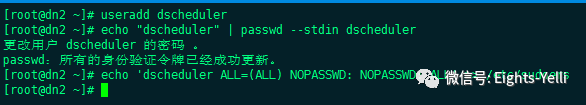

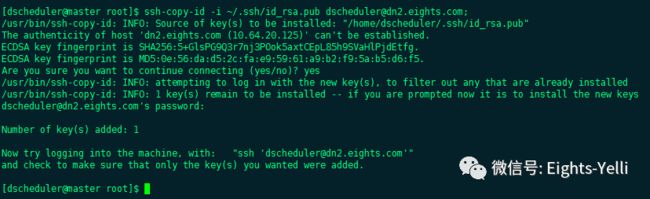

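Create the deployment user and configure passwordless SSH

Create the deployment user on every machine in the deployment. When DS runs a task it executes the job via sudo -u [linux-user]; dscheduler is used as the deployment user here.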
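Because the user and its SSH key have to be in place on every host, it can help to print the per-host key-distribution commands first and review them before running anything. This is a dry-run sketch only: the helper name print_key_distribution is hypothetical, and the host names are the ones from the cluster plan in this article.

```shell
# Dry run: print the key-distribution command for every host instead of running it.
print_key_distribution() {
  user=$1
  shift
  for host in "$@"; do
    printf 'ssh-copy-id -i ~/.ssh/id_rsa.pub %s@%s\n' "$user" "$host"
  done
}

print_key_distribution dscheduler master.eights.com dn1.eights.com dn2.eights.com
```

Printing the commands rather than executing them keeps the sketch free of side effects; the printed lines can be pasted into a shell running as the deployment user.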

# Add the deployment user

useradd dscheduler

# Set the password

echo "dscheduler" | passwd --stdin dscheduler

# Configure passwordless sudo

echo 'dscheduler ALL=(ALL) NOPASSWD: ALL' >> /etc/sudoers

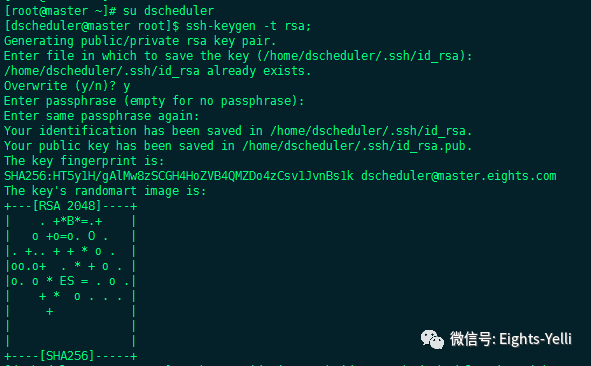

# Switch to the deployment user and generate an SSH key

su dscheduler

ssh-keygen -t rsa

ssh-copy-id -i ~/.ssh/id_rsa.pub dscheduler@[hostname]

Create the DS metadata database (MySQL)

CREATE DATABASE dolphinscheduler DEFAULT CHARACTER SET utf8 DEFAULT COLLATE utf8_general_ci;

CREATE USER 'dscheduler'@'%' IDENTIFIED BY 'dscheduler';

GRANT ALL PRIVILEGES ON dolphinscheduler.* TO 'dscheduler'@'%' IDENTIFIED BY 'dscheduler';

FLUSH PRIVILEGES;

Unpack the installation package and fix permissions

Upload the installation package to the /opt directory on the cluster and unpack it

# Unpack the installation package

tar -zxvf apache-dolphinscheduler-incubating-1.3.1-cdh5.16.2-dolphinscheduler-bin.tar.gz -C /opt/

# Rename

mv apache-dolphinscheduler-incubating-1.3.1-cdh5.16.2-dolphinscheduler-bin ds-1.3.1-cdh5.16.2

# Change file permissions and ownership

chmod -R 755 ds-1.3.1-cdh5.16.2

chown -R dscheduler:dscheduler ds-1.3.1-cdh5.16.2

Initialize MySQL

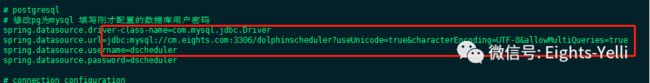

Modify the database configuration

Pay special attention to the database connection configuration here
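One way to sanity-check a connection string before running the initialization script is to split it into host, port, and database name and compare those with the MySQL instance that was just prepared. The sketch below is an illustration only: the helper name parse_jdbc_mysql_url is hypothetical, it handles just the plain jdbc:mysql://host[:port]/db?params form, and the fallback to port 3306 is an assumption (MySQL's usual default).

```shell
# Sketch: split a jdbc:mysql:// URL into "host port database".
parse_jdbc_mysql_url() {
  url=$1
  rest=${url#jdbc:mysql://}
  # Reject anything that does not start with the expected prefix.
  [ "$rest" != "$url" ] || return 1
  # A database name after the first "/" is required.
  case $rest in
    */*) ;;
    *) return 1 ;;
  esac
  hostport=${rest%%/*}   # everything before the first "/"
  path=${rest#*/}        # everything after the first "/"
  db=${path%%\?*}        # strip "?key=value&..." parameters
  host=${hostport%%:*}
  case $hostport in
    *:*) port=${hostport##*:} ;;
    *) port=3306 ;;      # assumption: default MySQL port
  esac
  printf '%s %s %s\n' "$host" "$port" "$db"
}

parse_jdbc_mysql_url 'jdbc:mysql://cm.eights.com:3306/dolphinscheduler?useUnicode=true&characterEncoding=UTF-8&allowMultiQueries=true'
```

The three values printed should agree with the database created earlier and with the dbhost and dbname entries used later in install_config.conf. Note the single quotes around the URL: unquoted, the shell would treat each & as a background operator.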

vi /opt/ds-1.3.1-cdh5.16.2/conf/datasource.properties;

spring.datasource.driver-class-name=com.mysql.jdbc.Driver

spring.datasource.url=jdbc:mysql://cm.eights.com:3306/dolphinscheduler?useUnicode=true&characterEncoding=UTF-8&allowMultiQueries=true

spring.datasource.username=dscheduler

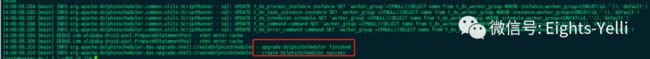

spring.datasource.password=dscheduler

Run the database initialization script from the DS installation package directory

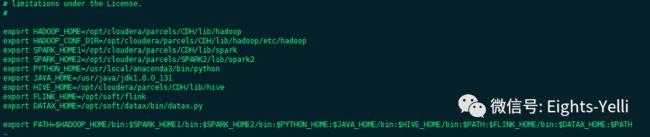

./script/create-dolphinscheduler.sh

Configure the environment variables DS needs. Do not skip this step: before running a task, DS first sources dolphinscheduler_env.sh.
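Since every task depends on this file, it is worth checking that each exported path actually exists on the host. The following is a minimal sketch under stated assumptions: the helper name check_env_paths is hypothetical, and it only understands simple lines of the form export NAME=/some/path with no quoting or variable references.

```shell
# Sketch: report whether each path exported in an env file exists on this host.
check_env_paths() {
  # $1: env file with lines like: export NAME=/some/path
  sed -n 's/^export \([A-Za-z_][A-Za-z0-9_]*\)=\(.*\)$/\1 \2/p' "$1" |
  while read -r name path; do
    if [ -e "$path" ]; then
      printf 'ok %s %s\n' "$name" "$path"
    else
      printf 'missing %s %s\n' "$name" "$path"
    fi
  done
}

# Usage:
#   check_env_paths /opt/ds-1.3.1-cdh5.16.2/conf/env/dolphinscheduler_env.sh
```

Entries reported as missing are harmless when the corresponding component is simply not installed on the cluster.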

vi /opt/ds-1.3.1-cdh5.16.2/conf/env/dolphinscheduler_env.sh

# The test cluster has no DataX or Flink; ignore the related settings

export HADOOP_HOME=/opt/cloudera/parcels/CDH/lib/hadoop

export HADOOP_CONF_DIR=/opt/cloudera/parcels/CDH/lib/hadoop/etc/hadoop

export SPARK_HOME1=/opt/cloudera/parcels/CDH/lib/spark

export SPARK_HOME2=/opt/cloudera/parcels/SPARK2/lib/spark2

export PYTHON_HOME=/usr/local/anaconda3/bin/python

export JAVA_HOME=/usr/java/jdk1.8.0_131

export HIVE_HOME=/opt/cloudera/parcels/CDH/lib/hive

export FLINK_HOME=/opt/soft/flink

export DATAX_HOME=/opt/soft/datax/bin/datax.py

Write the DS configuration file

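Before 1.3.0, the configuration for one-click deployment lived in install.sh. From 1.3.0 on, install.sh is only a deployment script, and the deployment configuration is in conf/config/install_config.conf. install_config.conf drops many non-essential parameters; for further tuning, edit the configuration file of the corresponding module under conf.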
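Because install_config.conf is a flat list of key="value" lines, individual settings can be read back for a quick review before deploying. This is a sketch only: the helper name conf_get is hypothetical, and it assumes each value is written on one line in double quotes, as in the configuration shown in this article.

```shell
# Sketch: print the value of a key="value" line from a config file.
conf_get() {
  # $1: key name, $2: config file; the last matching line wins.
  sed -n 's/^'"$1"'="\(.*\)"[[:space:]]*$/\1/p' "$2" | tail -n 1
}

# Usage:
#   conf_get dbhost conf/config/install_config.conf
#   conf_get workers conf/config/install_config.conf
```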

The cluster deployment configuration used for this deployment is given below

# NOTICE : If the following config has special characters in the variable `.*[]^${}\+?|()@#&`, Please escape, for example, `[` escape to `\[`

# postgresql or mysql

dbtype="mysql"

# db config

# db address and port

dbhost="cm.eights.com:3306"

# db username

username="dscheduler"

# database name

dbname="dolphinscheduler"

# db password

# NOTICE: if there are special characters, please use the \ to escape, for example, `[` escape to `\[`

password="dscheduler"

# zk cluster

zkQuorum="master.eights.com:2181,dn1.eights.com:2181,cm.eights.com:2181"

# Note: the target installation path for dolphinscheduler, please not config as the same as the current path (pwd)

# Directory where DS is installed on each deployment machine; role logs and task logs are also written under this directory

installPath="/opt/ds-1.3.1-agent"

# deployment user

# Note: the deployment user needs to have sudo privileges and permissions to operate hdfs. If hdfs is enabled, the root directory needs to be created by itself

deployUser="dscheduler"

# The mail server used here is an internal one; configuring an external mail server will be covered in a later article

# alert config

# mail server host

mailServerHost="xxxx"

# mail server port

# note: Different protocols and encryption methods correspond to different ports, when SSL/TLS is enabled, make sure the port is correct.

mailServerPort="25"

# sender

mailSender="xxxx"

# user

mailUser="xxxx"

# sender password

# note: The mail.passwd is email service authorization code, not the email login password.

mailPassword="xxxx"

# TLS mail protocol support

starttlsEnable="false"

# SSL mail protocol support

# only one of TLS and SSL can be in the true state.

sslEnable="false"

#note: sslTrust is the same as mailServerHost

sslTrust="xxxxxx"

# resource storage type: HDFS, S3, NONE

resourceStorageType="HDFS"

# A single-instance HDFS and YARN can be configured here directly

# if resourceStorageType is HDFS, defaultFS write namenode address, HA you need to put core-site.xml and hdfs-site.xml in the conf directory.

# if S3, write S3 address, HA, for example: s3a://dolphinscheduler,

# Note, s3 be sure to create the root directory /dolphinscheduler

defaultFS="hdfs://master.eights.com:8020"

# if resourceStorageType is S3, the following three configuration is required, otherwise please ignore

s3Endpoint="http://192.168.xx.xx:9010"

s3AccessKey="xxxxxxxxxx"

s3SecretKey="xxxxxxxxxx"

# Note: even with a single-instance YARN it is best to set yarnHaIps as well

# if resourcemanager HA enable, please type the HA ips ; if resourcemanager is single, make this value empty

yarnHaIps="master.eights.com"

# if resourcemanager HA enable or not use resourcemanager, please skip this value setting; If resourcemanager is single, you only need to replace yarnIp1 to actual resourcemanager hostname.

singleYarnIp="master.eights.com"

# resource store on HDFS/S3 path, resource file will store to this hadoop hdfs path, self configuration, please make sure the directory exists on hdfs and have read write permissions. /dolphinscheduler is recommended

resourceUploadPath="/dolphinscheduler"

# who have permissions to create directory under HDFS/S3 root path

# Note: if kerberos is enabled, please config hdfsRootUser=

hdfsRootUser="hdfs"

# kerberos config

# whether kerberos starts, if kerberos starts, following four items need to config, otherwise please ignore

kerberosStartUp="false"

# kdc krb5 config file path

krb5ConfPath="$installPath/conf/krb5.conf"

# keytab username

keytabUserName="[email protected]"

# username keytab path

keytabPath="$installPath/conf/hdfs.headless.keytab"

# api server port

apiServerPort="12345"

# install hosts

# Note: install the scheduled hostname list. If it is pseudo-distributed, just write a pseudo-distributed hostname

ips="master.eights.com,dn1.eights.com,dn2.eights.com"

# ssh port, default 22

# Note: if ssh port is not default, modify here

sshPort="22"

# run master machine

# Note: list of hosts hostname for deploying master

masters="master.eights.com,dn1.eights.com"

# run worker machine

# 1.3.1 moved worker groups from MySQL to ZooKeeper, so each worker has to be tagged with its group here

# A worker belonging to more than one group is not supported yet

# note: need to write the worker group name of each worker, the default value is "default"

workers="master.eights.com:default,dn2.eights.com:sqoop"

# run alert machine

# note: list of machine hostnames for deploying alert server

alertServer="dn1.eights.com"

# run api machine

# note: list of machine hostnames for deploying api server

apiServers="dn1.eights.com"

Add the Hadoop cluster configuration files

If HA is not enabled on the cluster, write the addresses directly in install_config.conf

If HA is enabled on the cluster, copy Hadoop's hdfs-site.xml and core-site.xml into the /conf directory
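For the HA case, the copy step can be sketched as a small helper that refuses to copy anything unless both files are present. The helper name copy_hadoop_ha_conf is hypothetical, and the source directory /etc/hadoop/conf in the usage comment is an assumption about where the CDH client configuration lives on the host; adjust it to the actual location.

```shell
# Sketch: copy the two Hadoop client config files into the DS conf directory.
copy_hadoop_ha_conf() {
  src_dir=$1
  dest_dir=$2
  # Check both files first so a partial copy never happens.
  for f in core-site.xml hdfs-site.xml; do
    if [ ! -f "$src_dir/$f" ]; then
      echo "missing: $src_dir/$f" >&2
      return 1
    fi
  done
  for f in core-site.xml hdfs-site.xml; do
    cp "$src_dir/$f" "$dest_dir/" || return 1
  done
}

# Usage (source path is an assumption, see above):
#   copy_hadoop_ha_conf /etc/hadoop/conf /opt/ds-1.3.1-cdh5.16.2/conf
```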

Modify the JVM parameters

Two files need to be changed

/bin/dolphinscheduler-daemon.sh

/scripts/dolphinscheduler-daemon.sh

export DOLPHINSCHEDULER_OPTS="-server -Xmx16g -Xms1g -Xss512k

-XX:+DisableExplicitGC -XX:+UseConcMarkSweepGC -XX:+CMSParallelRemarkEnabled

-XX:LargePageSizeInBytes=128m -XX:+UseFastAccessorMethods

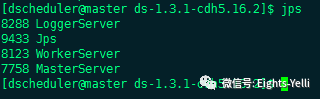

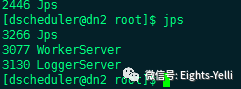

-XX:+UseCMSInitiatingOccupancyOnly -XX:CMSInitiatingOccupancyFraction=70"一键部署&进程检查&单服务启停

# Run the DS deployment script

sh install.sh

# Check the processes

jps

Starting and stopping services

# Stop everything

sh ./bin/stop-all.sh

# Start everything

sh ./bin/start-all.sh

# Start/stop master

sh ./bin/dolphinscheduler-daemon.sh start master-server

sh ./bin/dolphinscheduler-daemon.sh stop master-server

# Start/stop worker

sh ./bin/dolphinscheduler-daemon.sh start worker-server

sh ./bin/dolphinscheduler-daemon.sh stop worker-server

# Start/stop api-server

sh ./bin/dolphinscheduler-daemon.sh start api-server

sh ./bin/dolphinscheduler-daemon.sh stop api-server

# Start/stop logger

sh ./bin/dolphinscheduler-daemon.sh start logger-server

sh ./bin/dolphinscheduler-daemon.sh stop logger-server

# Start/stop alert

sh ./bin/dolphinscheduler-daemon.sh start alert-server

sh ./bin/dolphinscheduler-daemon.sh stop alert-server

Accessing the frontend

The dolphinscheduler-1.3.1 frontend no longer needs nginx; access it directly at

apiserver:12345/dolphinscheduler

Log in with account admin and password dolphinscheduler123

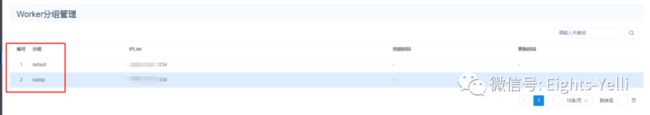

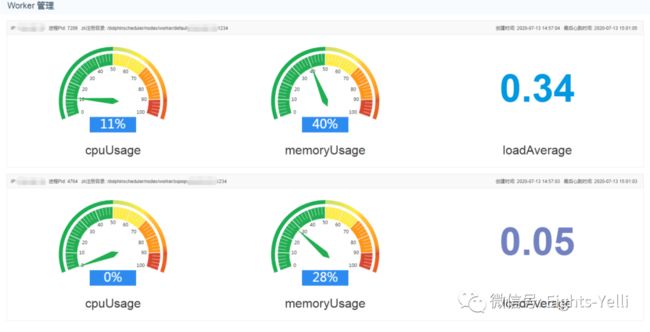
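The access address above can also be assembled and probed from a shell, which is handy when no browser can reach the cluster. This is a sketch under assumptions: the helper names ds_ui_url and ds_ui_status are hypothetical, plain http is assumed because the article gives no scheme, and the default port is the apiServerPort from the configuration above.

```shell
# Sketch: build the UI address for an API server host.
ds_ui_url() {
  # $1: API server host, $2: port (defaults to 12345, the apiServerPort above)
  printf 'http://%s:%s/dolphinscheduler\n' "$1" "${2:-12345}"
}

# Print only the HTTP status code returned by the UI address.
# Needs curl on the host; nothing is contacted until this is called.
ds_ui_status() {
  curl -s -o /dev/null -w '%{http_code}\n' "$(ds_ui_url "$1" "$2")"
}

# Usage:
#   ds_ui_url dn1.eights.com
#   ds_ui_status dn1.eights.com
```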

Check the worker groups

As shown, worker groups in 1.3.1 are specified through install_config.conf and cannot be modified in the web UI
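To compare what the UI shows with what was configured, the workers value can be split into one host and group per line. A minimal sketch, assuming the host:group,host:group format used in this article's configuration; the helper name list_worker_groups is hypothetical, and falling back to the group name default when none is given is an assumption based on the comment in the config file.

```shell
# Sketch: turn "host1:groupA,host2:groupB" into one "host group" pair per line.
list_worker_groups() {
  printf '%s\n' "$1" | tr ',' '\n' | while IFS=: read -r host group; do
    # Skip empty entries, e.g. from a trailing comma.
    [ -n "$host" ] || continue
    printf '%s %s\n' "$host" "${group:-default}"
  done
}

list_worker_groups 'master.eights.com:default,dn2.eights.com:sqoop'
```

With the configuration from this deployment, this prints master.eights.com in group default and dn2.eights.com in group sqoop.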

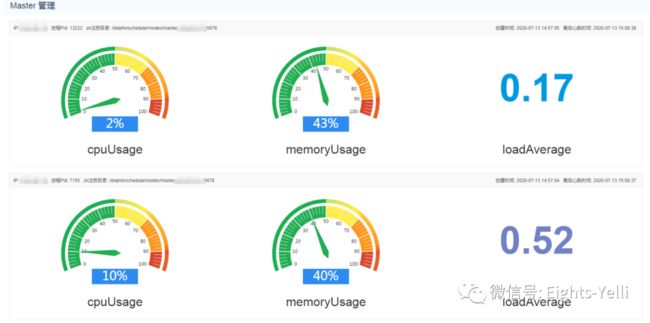

Check the services
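Besides the web UI, the roles running on a host can be cross-checked against the jps output. The sketch below is illustrative: the helper name missing_roles is hypothetical, and the process names in the usage comment (MasterServer, WorkerServer, LoggerServer) are an assumption about how the DS roles appear in jps, so verify them against the actual output on the cluster.

```shell
# Sketch: list the expected Java process names that are absent from jps output.
missing_roles() {
  # stdin: jps output, one "<pid> <main class>" per line
  # args:  process names expected on this host
  jps_out=$(cat)
  for role in "$@"; do
    printf '%s\n' "$jps_out" |
      awk -v r="$role" '$2 == r { found = 1 } END { exit !found }' ||
      printf '%s\n' "$role"
  done
}

# Usage on a host that should run a master and a worker
# (process names are assumptions, see above):
#   jps | missing_roles MasterServer WorkerServer LoggerServer
```

An empty result means every expected process was found.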

Prebuilt Dolphin Scheduler-1.3.1-cdh5.16.2 package

Baidu Netdisk

Link:

https://pan.baidu.com/s/1gEwEF2R2XJVRv76SgiW0hA

Extraction code: joyq

6

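Summary

dolphinscheduler-1.3.1 greatly trims the configuration that used to live in install.sh, so a deployment can be brought up quickly. Anyone upgrading, however, needs to go into the conf directory and edit the configuration files of the corresponding modules.

The frontend no longer needs a separate nginx either, which makes for a better deployment experience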


Eights
