多层卷积神经网络案例

本文为卷积神经网络的基本示例,采用cifar数据进行训练模型。

在使用本示例时,需要用到cifar的工具: cifar10文件以及cifar-10-batches-bin

https://www.cnblogs.com/Jerry-Dong/p/8109938.html

https://download.csdn.net/download/qq_36639966/11242082

注: cifar10文件以及cifar-10-batches-bin 可以通过以上链接获取,也可以回复本文或私信本人获取

在了解cifar10之后,我们结合cifar10数据集去理解卷积神经网络。

模型数据的准备。 这里需要导入cifar10_input模块,这个模块在网上有源文件,可以自行下载。 然后加载cifar的bin文件,准备训练数据和测试数据

import cifar10_input

import tensorflow as tf

import numpy as np

batch_size = 128

data_dir = "./cifar10_data/cifar-10-batches-bin"

images_train, labels_train = cifar10_input.inputs(eval_data=False, data_dir=data_dir, batch_size=batch_size)

images_test, labels_test = cifar10_input.inputs(eval_data=True, data_dir=data_dir, batch_size=batch_size)

卷积核与偏置的设置

def weight_variable(shape):

initial = tf.truncated_normal(shape, stddev=0.1) # 产生截断正态分布随机数

return tf.Variable(initial)

def bias_variable(shape):

initial = tf.constant(0.1, shape=shape)

return tf.Variable(initial)

def conv2d(x, W):

return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):

return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

def avg_pool_6x6(x):

return tf.nn.avg_pool(x, ksize=[1, 6, 6, 1], strides=[1, 6, 6, 1], padding='SAME')定义三层卷积神经网络模型

# cifar data image of shape 24*24*3

x = tf.placeholder(tf.float32, [None, 24, 24, 3])

y = tf.placeholder(tf.float32, [None, 10]) # 0-9 数字=> 10 classes

# 第一层卷积

W_conv1 = weight_variable([5, 5, 3, 64])

b_conv1 = bias_variable([64])

x_image = tf.reshape(x, [-1, 24, 24, 3])

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)

h_pool1 = max_pool_2x2(h_conv1)

# 第二层卷积

W_conv2 = weight_variable([5, 5, 64, 64])

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)

h_pool2 = max_pool_2x2(h_conv2)

# 第三层卷积

W_conv3 = weight_variable([5, 5, 64, 10])

b_conv3 = bias_variable([10])

h_conv3 = tf.nn.relu(conv2d(h_pool2, W_conv3) + b_conv3)

print(h_conv3.shape)

# 全局平均池化层

nt_hpool3 = avg_pool_6x6(h_conv3)

print(nt_hpool3.shape)

nt_hpool3_flat = tf.reshape(nt_hpool3, [-1, 10])

# 使用softmax分类

y_conv = tf.nn.softmax(nt_hpool3_flat)

# 计算交叉熵, 得到LOSS值

cross_entropy = -tf.reduce_sum(y*tf.log(y_conv))

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

# 计算准确率

correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y,1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))训练数据

sess = tf.Session()

sess.run(tf.global_variables_initializer())

# 启动队列,通过多线程将读取的数据与计算数据分开, 也就是一边从硬盘读取数据,一边进行训练计算,提升效率

tf.train.start_queue_runners(sess=sess)

for i in range(2000):

image_batch, label_batch = sess.run([images_train, labels_train])

# 将LABEL值改为one_hot编码

label_b = np.eye(10, dtype=float)[label_batch]

train_step.run(feed_dict={x: image_batch, y: label_b}, session=sess)

if i % 20 == 0:

train_accuracy = accuracy.eval(feed_dict={x: image_batch, y: label_b}, session=sess)

print("step %d, training accuracy %g" % (i, train_accuracy))测试数据

# 使用测试数据进行测试

image_batch, label_batch = sess.run([images_test, labels_test])

label_b = np.eye(10, dtype=float)[label_batch]

print("test accuracy %g" % accuracy.eval(feed_dict={x: image_batch, y: label_b}, session=sess))

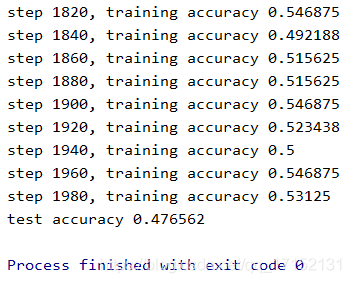

训练过程和结果数据如下:

这是2000次训练的结果,可以看到精度达到47%,不算太高,因为训练次数较少。有条件的可以设置20000次,这样训练出来模型的精度会更高。