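Zhihu Crawler and Data Analysis (1): Data Crawling

Note: the complete code is available on GitHub: https://github.com/florakl/zhihu_spider.

Zhihu Crawler and Data Analysis (2): Data Visualization and Analysis with pandas + pyecharts (Part 1)

Zhihu Crawler and Data Analysis (3): Data Visualization and Analysis with pandas + pyecharts (Part 2)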

Contents

- 0 Project Overview

- 1 Data Crawling

  - 1.1 Defining the Data Requirements

  - 1.2 Crawling Code

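0 Project Overview

For independent content creators, getting more people to agree with your views depends not only on the quality of the content itself but also on factors such as when and in what form it is published. Taking Zhihu as an example, if you have questions such as:

① How long after a question is posted should you answer for the answer to have the best chance of taking off?

② Do the authors of highly upvoted answers usually bring their own followers? Do little-known users still stand a chance?

③ How many words, paragraphs, and images does a highly upvoted answer usually have? Which words appear most often?

then it may be worth spending a few minutes on this article. I will crawl the relevant data from Zhihu and run a simple analysis of the features and patterns that highly upvoted answers share, to try to answer the questions above.

The project workflow is fairly simple: data crawling, then data processing and visual analysis, then conclusions.

This part mainly covers data crawling; see the next two parts for the analysis itself.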

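1 Data Crawling

1.1 Defining the Data Requirements

Basic data source:

- M essence questions under Zhihu's root topic, and N answers under each question

Basic data dimensions:

- Question: title, tags, description, creation time, follower count, answer count, comment count, etc.

- Answer: upvote count, creation time, content, comment count, author's follower count, etc.

- Other: crawl time, etc.

These are the dimensions typically used. For the three questions raised at the start, however, the actual data needs differ.

For ①, randomly pick several popular questions and crawl the creation time and upvote count of every answer under each of them.

For ② and ③, crawl some of the highly upvoted answers (for example, the top 10) under many essence questions, and analyze them based on upvote count, comment count, follower count, answer content, and so on.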

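1.2 Crawling Code

Code references:

- 爬虫实战之分布式爬取知乎问答数据 (Crawler in Practice: Distributed Crawling of Zhihu Q&A Data)

- Python网络爬虫实战:爬取知乎话题下 18934 条回答数据 (Python Web Crawler in Practice: Crawling 18,934 Answers Under a Zhihu Topic)

- 知乎 API v4 整理 (Notes on the Zhihu API v4)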

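(1) First, write a general-purpose URL request function that returns the response body parsed as JSON. Browser-like headers are used to disguise the crawler. Probably because I crawled only a small amount of data, my IP was never blocked, so I did not use an IP proxy pool.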

```python
import requests


def url_get(url):
    """Request a URL with browser-like headers.

    Returns the parsed JSON body, or None if the status code is not 200.
    """
    headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/61.0.3163.100 Safari/537.36",
        "Connection": "keep-alive",
        "Accept": "text/html,application/json,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
        "Accept-Language": "zh-CN,zh;q=0.8"}
    response = requests.get(url, headers=headers, timeout=20)
    code = response.status_code
    if code == 200:
        return response.json()
    # print('Status Code:', code)
    return None
```
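Since `url_get` signals failure by returning `None`, a caller may want to pause and try again before giving up. The following is only a minimal sketch of that idea, not part of the original project: `fetch_with_retry`, its `retries` and `delay` parameters, and the doubling pause are all assumptions made for illustration. The fetch function is passed in as an argument, so the sketch runs without any network access.

```python
import time


def fetch_with_retry(fetch, url, retries=3, delay=1.0, sleep=time.sleep):
    """Call fetch(url) until it returns something other than None.

    fetch   -- any callable returning parsed JSON or None (for example url_get)
    retries -- hypothetical cap on the number of attempts
    delay   -- hypothetical base pause in seconds, doubled after each failure
    sleep   -- injectable so the pause can be skipped when experimenting
    """
    for attempt in range(retries):
        result = fetch(url)
        if result is not None:
            return result
        if attempt < retries - 1:
            # Wait a little longer after every failed attempt
            sleep(delay * 2 ** attempt)
    return None


# Demo with a fake fetch function that fails twice and then succeeds.
responses = iter([None, None, {'data': []}])
pauses = []
result = fetch_with_retry(lambda url: next(responses),
                          'https://example.invalid/api',
                          sleep=pauses.append)
print(result, pauses)  # {'data': []} [1.0, 2.0]
```

In the crawler itself this would be called as `fetch_with_retry(url_get, q_url)`. Note that the sketch only reacts to `None` results; exceptions raised by `requests.get`, such as timeouts, would still propagate.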

(2) For question ①, crawl all answers under a single question in batches and save them to a txt file.

```python
def question_info_get(qid):
    """Return (answer count, title, creation time), or None if the request fails."""
    q_url = f'https://www.zhihu.com/api/v4/questions/{qid}?include=answer_count'
    q_res = url_get(q_url)
    if q_res:
        total = q_res['answer_count']  # number of answers
        title = q_res['title']  # question title
        created_time = q_res['created']  # creation time
        return total, title, created_time
    return None


def answer_time_get(qid, interval, offset, q_time):
    """Return the upvote counts and creation times of one batch of answers."""
    voteup = []
    ans_time = []
    ans_url = f'https://www.zhihu.com/api/v4/questions/{qid}/answers?include=content,comment_count,voteup_count&limit={interval}&offset={offset}&sort_by=default'
    ans_res = url_get(ans_url)
    if not ans_res:
        # Request failed: return an empty batch instead of indexing None
        return voteup, ans_time
    for answer in ans_res['data']:
        voteup.append(answer['voteup_count'])
        ans_time.append(answer['created_time'])
        # ans_time.append(answer['created_time'] - q_time)
    return voteup, ans_time


def data_get(qid, number):
    """Crawl every answer of one question, 20 at a time."""
    info = question_info_get(qid)
    if info is None:
        print(f'Failed to crawl question {number}, id={qid}')
        return None
    total, title, created_time = info
    interval = 20  # at most 20 answers can be fetched per request
    offset = 0
    voteup = []
    ans_time = []
    while offset < total:
        print(f'Crawling answers {offset}-{offset + interval - 1}')
        v, a = answer_time_get(qid, interval, offset, created_time)
        voteup += v
        ans_time += a
        offset += interval
    print(f'Question {number} crawled successfully: id={qid}, title="{title}", total answers={total}')
    return voteup, ans_time, title


if __name__ == '__main__':
    qids = [314644210, 30265988, 26933347, 33220674, 264958421, 31524027]
    filename = 'answer_time.txt'
    for number, qid in enumerate(qids, start=1):
        result = data_get(qid, number)
        if result is None:
            continue
        voteup, ans_time, title = result
        # Four lines per question: qid, title, creation times, upvote counts
        with open(filename, 'a', encoding='utf-8') as f:
            f.write(str(qid) + '\n')
            f.write(title + '\n')
            for item in ans_time:
                f.write(str(item) + ',')
            f.write('\n')
            for item in voteup:
                f.write(str(item) + ',')
            f.write('\n')
        print('Data saved successfully')
```

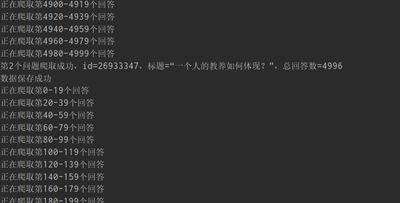

运行截图如下,本次只爬取了6个回答数>1000的问题。
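The script above writes four lines per question to `answer_time.txt`: the qid, the title, the answer creation times, and the upvote counts, with the last two lines comma-separated and ending in a trailing comma. Reading the file back for analysis could look roughly like the sketch below. `parse_answer_file` is a hypothetical helper, the sample values are made up, and it assumes the timestamps are integers (Unix seconds) and that titles contain no line breaks.

```python
def parse_answer_file(lines):
    """Parse the four-line-per-question layout into a list of dicts."""
    rows = [line.rstrip('\n') for line in lines]
    records = []
    # Step through complete groups of four lines; a partial tail is ignored
    for i in range(0, len(rows) - 3, 4):
        qid, title, times, votes = rows[i:i + 4]
        records.append({
            'qid': int(qid),
            'title': title,
            # Each data line ends with a comma, so drop the empty last field
            'ans_time': [int(x) for x in times.split(',') if x],
            'voteup': [int(x) for x in votes.split(',') if x],
        })
    return records


# Made-up sample in the same layout as answer_time.txt
sample = ['314644210\n', 'Sample title\n', '1551000000,1551003600,\n', '12,3,\n']
print(parse_answer_file(sample))
```

For the real file, the function can be given the open file object directly, for example `with open('answer_time.txt', encoding='utf-8') as f: records = parse_answer_file(f)`.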

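(3) Questions ② and ③ require more data dimensions (although not all of them were used in the later analysis). Unlike in (2), it is impractical to enter a large number of question IDs (qid) by hand, so they have to be fetched directly from Zhihu, here from the essence feed of the root topic.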

```python
def qid_get(interval, offset):
    """Return the question IDs of one batch of essence items under the root topic."""
    # Essence questions under Zhihu's root topic
    tid = 19776749
    url = f'https://www.zhihu.com/api/v4/topics/{tid}/feeds/essence?limit={interval}&offset={offset}'
    res = url_get(url)
    qids = []
    if res:
        for question in res['data']:
            try:
                qid = question['target']['question']['id']
                qids.append(qid)
            except KeyError:
                print('Could not read the qid, skipping this item')
    return qids
```

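With the qids in hand, the crawl can continue from the question to its answers and then to the answer authors. Some special cases come up along the way: for example, when requesting the user profile to collect an author's follower count, deactivated accounts, anonymous users, and suspended accounts all have to be handled.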

```python
import json
import os
from datetime import datetime


def question_info_get(qid):
    """Crawl question-level information; return None if the question is skipped."""
    q_url = f'https://www.zhihu.com/api/v4/questions/{qid}?include=answer_count,comment_count,follower_count,excerpt,topics'
    q_res = url_get(q_url)
    if q_res:
        answer_count = q_res['answer_count']
        if answer_count < 20:
            print(f'Total answers: {answer_count}, fewer than 20, skipping this question')
            return None
        q_info = {}
        q_info['qid'] = qid
        q_info['answer_count'] = answer_count  # number of answers
        q_info['title'] = q_res['title']  # question title
        excerpt = q_res['excerpt']
        if not excerpt:
            excerpt = ' '
        q_info['q_content'] = excerpt  # question description
        topics = []
        for topic in q_res['topics']:
            topics.append(topic['name'])
        q_info['topics'] = topics  # question tags
        q_info['comment_count'] = q_res['comment_count']  # number of comments
        q_info['follower_count'] = q_res['follower_count']  # number of followers
        q_info['created_time'] = q_res['created']  # creation time
        q_info['updated_time'] = q_res['updated_time']  # update time
        q_info['crawl_time'] = datetime.now().strftime('%Y-%m-%d %H:%M:%S')  # crawl time
        return q_info
    return None


def answer_info_get(qid, interval, offset):
    """Crawl answer-level information, including the author's follower and upvote counts."""
    ans_url = f'https://www.zhihu.com/api/v4/questions/{qid}/answers?include=content,comment_count,voteup_count&limit={interval}&offset={offset}&sort_by=default'
    ans_res = url_get(ans_url)
    ans_infos = []
    if not ans_res:
        # Request failed: return an empty list rather than None
        return ans_infos
    for answer in ans_res['data']:
        ans_info = {}
        name = answer['author']['name']
        un = answer['author']['url_token']
        # Display names of deactivated, anonymous, and generic "Zhihu user" accounts.
        # An empty url_token is treated the same way so that every record has an author.
        if name in ('「已注销」', '匿名用户', '知乎用户') or not un:
            ans_info['author'] = 'NoName'
            ans_info['author_follower_count'] = 0
            ans_info['author_voteup_count'] = 0
        else:
            ans_info['author'] = un  # author's username
            user_url = f"https://www.zhihu.com/api/v4/members/{un}?include=follower_count,voteup_count"
            user = url_get(user_url)
            if user:
                ans_info['author_follower_count'] = user['follower_count']  # author's followers
                ans_info['author_voteup_count'] = user['voteup_count']  # upvotes received by the author
            else:
                # Suspended accounts and similar cases
                ans_info['author_follower_count'] = 0
                ans_info['author_voteup_count'] = 0
        ans_info['author_gender'] = answer['author']['gender']  # author's gender
        ans_info['content'] = answer['content']  # answer content
        ans_info['voteup_count'] = answer['voteup_count']  # number of upvotes
        ans_info['comment_count'] = answer['comment_count']  # number of comments
        ans_info['created_time'] = answer['created_time']  # creation time
        ans_info['updated_time'] = answer['updated_time']  # update time
        ans_infos.append(ans_info)
    return ans_infos


if __name__ == '__main__':
    os.makedirs('data', exist_ok=True)  # the output directory must exist
    number = 1
    for i in range(0, 1000, 10):
        # At most 10 questions can be fetched per request
        qids = qid_get(interval=10, offset=i)
        print(i, qids)
        data = []
        for qid in qids:
            q_info = question_info_get(qid)
            if q_info:
                ans_infos = answer_info_get(qid, interval=10, offset=0)
                print(f'Question {number} crawled successfully, id = {qid}, total answers =', q_info['answer_count'])
                q_info['answers'] = ans_infos
                data.append(q_info)
            else:
                print(f'Failed to crawl question {number}')
            number += 1
        if data:
            filename = f'data/data{i}.json'
            with open(filename, 'w', encoding='utf-8') as f:
                json.dump(data, fp=f, indent=4)
            print(f'Data for questions {i + 1}-{i + 10} saved successfully')
```

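A JSON file is saved after every 10 questions to guard against the various exceptions that can interrupt the crawl.

From what I could find, the exception I ran into is probably caused by the server automatically treating the requests as an attack. Manually restarting the crawl solves it, although that is somewhat tedious.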
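Because each batch is saved as `data/data{i}.json`, a restarted crawl can skip the batches that already have a file. The sketch below only illustrates this idea and is not part of the original code: `pending_offsets` is a hypothetical helper, and the demo runs in a temporary directory rather than the real `data` folder.

```python
import os
import tempfile


def pending_offsets(data_dir, start=0, stop=1000, step=10):
    """Return the batch offsets that have no data{offset}.json file yet."""
    return [i for i in range(start, stop, step)
            if not os.path.exists(os.path.join(data_dir, f'data{i}.json'))]


# Demo: pretend that batches 0 and 10 were saved before the crawl was interrupted.
with tempfile.TemporaryDirectory() as tmp:
    for done in (0, 10):
        with open(os.path.join(tmp, f'data{done}.json'), 'w') as f:
            f.write('[]')
    print(pending_offsets(tmp, stop=50))  # [20, 30, 40]
```

After a restart, the main loop could iterate over `pending_offsets('data')` instead of `range(0, 1000, 10)`. A batch in which no question was valid writes no file, so it would simply be requested again.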

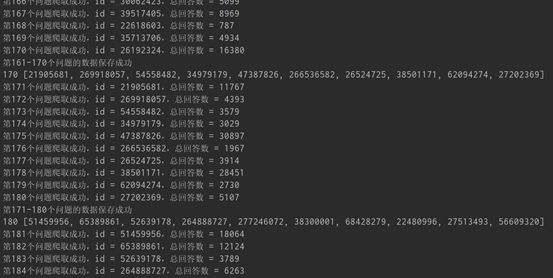

正常运行截图如下:

Due to time constraints, I crawled only 400 questions, of which 397 had a valid qid, giving a final sample of 3,970 highly upvoted answers.
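The sample size follows from 397 valid questions with 10 answers each: 397 × 10 = 3,970. One way to double-check such totals against the saved files is sketched below. `count_samples` is a hypothetical helper, the demo data is made up, and the sketch assumes every batch file is a JSON list of question records that each carry an `answers` list, as produced by the script above.

```python
import glob
import json
import os
import tempfile


def count_samples(data_dir):
    """Count questions and answers across all data*.json batch files."""
    n_questions = n_answers = 0
    for path in glob.glob(os.path.join(data_dir, 'data*.json')):
        with open(path, encoding='utf-8') as f:
            batch = json.load(f)
        n_questions += len(batch)
        # A missing or null 'answers' field counts as zero answers
        n_answers += sum(len(q.get('answers') or []) for q in batch)
    return n_questions, n_answers


# Demo with made-up batches: three questions with ten placeholder answers each.
with tempfile.TemporaryDirectory() as tmp:
    batches = {
        'data0.json': [{'qid': 1, 'answers': [{}] * 10},
                       {'qid': 2, 'answers': [{}] * 10}],
        'data10.json': [{'qid': 3, 'answers': [{}] * 10}],
    }
    for name, batch in batches.items():
        with open(os.path.join(tmp, name), 'w', encoding='utf-8') as f:
            json.dump(batch, f)
    print(count_samples(tmp))  # (3, 30)
```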