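(Notes) Chapter 2: Mastering Deep Learning Through One Case Study (Part 2)

Contents

- I. [Handwritten Digit Recognition] Resource Configuration
  - Overview
  - Prerequisites
  - Single-GPU Training
  - Distributed Training
    - Model Parallelism
    - Data Parallelism
      - RPC Communication
      - NCCL2 Communication (Collective)
- II. [Handwritten Digit Recognition] Training Debugging and Optimization
  - Overview
  - Computing the Model's Classification Accuracy
  - Inspecting the Training Process to Identify Potential Problems
  - Adding Validation or Testing to Better Evaluate the Model
  - Adding a Regularization Term to Avoid Overfitting
    - The Overfitting Phenomenon
    - Causes of Overfitting
    - Causes and Prevention of Overfitting
    - Regularization Terms
  - Visual Analysis
    - Plotting the Training Loss Curve with Matplotlib
- III. [Handwritten Digit Recognition] Resuming Training
  - Loading the Model and Resuming Training
    - Resuming Training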

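I. [Handwritten Digit Recognition] Resource Configuration

Overview

For both the house price prediction task and the MNIST handwritten digit recognition task, training a model takes no more than ten minutes, mainly because the neural networks we have used are fairly simple.

In real applications, however, we often face more complex machine learning or deep learning tasks that need faster hardware (such as GPUs or NPUs), or even several machines training one task together (multi-GPU and multi-machine training). This section shows how to make model training more efficient by optimizing the resource configuration.

Prerequisites

Before optimizing the resource configuration, we need the data processing and the network definition; this code is the same as before.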

# Load the required libraries
import os
import random
import paddle
import paddle.fluid as fluid
from paddle.fluid.dygraph.nn import Conv2D, Pool2D, Linear
import numpy as np
from PIL import Image
import gzip
import json

# Define the dataset reader
def load_data(mode='train'):
    # Read the data file
    datafile = './work/mnist.json.gz'
    print('loading mnist dataset from {} ......'.format(datafile))
    data = json.load(gzip.open(datafile))
    # Split the data into the training, validation and test sets
    train_set, val_set, eval_set = data

    # Dataset parameters: image height IMG_ROWS, image width IMG_COLS
    IMG_ROWS = 28
    IMG_COLS = 28

    # Use the training, validation or test set according to the mode argument
    if mode == 'train':
        imgs = train_set[0]
        labels = train_set[1]
    elif mode == 'valid':
        imgs = val_set[0]
        labels = val_set[1]
    elif mode == 'eval':
        imgs = eval_set[0]
        labels = eval_set[1]
    else:
        raise ValueError("mode should be 'train', 'valid' or 'eval', got {}".format(mode))

    # Total number of images
    imgs_length = len(imgs)
    # Check that the number of images matches the number of labels
    assert len(imgs) == len(labels), \
        "length of train_imgs({}) should be the same as train_labels({})".format(
            len(imgs), len(labels))

    index_list = list(range(imgs_length))
    # Batch size used when reading data
    BATCHSIZE = 100

    # Define the data generator
    def data_generator():
        # In training mode, shuffle the training data
        if mode == 'train':
            random.shuffle(index_list)
        imgs_list = []
        labels_list = []
        # Read samples in index order
        for i in index_list:
            # Read the image and label and convert their shapes and types
            img = np.reshape(imgs[i], [1, IMG_ROWS, IMG_COLS]).astype('float32')
            label = np.reshape(labels[i], [1]).astype('int64')
            imgs_list.append(img)
            labels_list.append(label)
            # Once the buffer holds BATCHSIZE samples, yield one batch
            if len(imgs_list) == BATCHSIZE:
                yield np.array(imgs_list), np.array(labels_list)
                # Clear the buffers
                imgs_list = []
                labels_list = []
        # If fewer than BATCHSIZE samples remain, they form
        # one last mini-batch of size len(imgs_list)
        if len(imgs_list) > 0:
            yield np.array(imgs_list), np.array(labels_list)

    return data_generator

# Define the model structure
class MNIST(fluid.dygraph.Layer):
    def __init__(self):
        super(MNIST, self).__init__()
        # Convolution layer with ReLU activation
        self.conv1 = Conv2D(num_channels=1, num_filters=20, filter_size=5, stride=1, padding=2, act='relu')
        # Max-pooling layer with a 2x2 window and stride 2
        self.pool1 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Convolution layer with ReLU activation
        self.conv2 = Conv2D(num_channels=20, num_filters=20, filter_size=5, stride=1, padding=2, act='relu')
        # Max-pooling layer with a 2x2 window and stride 2
        self.pool2 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Fully connected layer with 10 outputs (input: 20 channels x 7 x 7 = 980)
        self.fc = Linear(input_dim=980, output_dim=10, act='softmax')

    # Forward computation of the network
    def forward(self, inputs):
        x = self.conv1(inputs)
        x = self.pool1(x)
        x = self.conv2(x)
        x = self.pool2(x)
        x = fluid.layers.reshape(x, [x.shape[0], 980])
        x = self.fc(x)
        return x

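Single-GPU Training

In PaddlePaddle's dynamic-graph (dygraph) mode, the place argument of fluid.dygraph.guard(place=None) decides whether training runs on a GPU or on the CPU:

with fluid.dygraph.guard(place=fluid.CPUPlace()):  # train the network on the CPU

with fluid.dygraph.guard(place=fluid.CUDAPlace(0)):  # train the network on the server's first GPU

Here "0" is the GPU card index: a server with four GPU cards, for example, numbers them 0, 1, 2 and 3.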

# Only the first three lines change; set use_gpu to True when running on a GPU
use_gpu = False
place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()

with fluid.dygraph.guard(place):
    model = MNIST()
    model.train()
    # Call the data loading function
    train_loader = load_data('train')

    # Four optimizer settings; try each one and compare the results
    optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.MomentumOptimizer(learning_rate=0.01, momentum=0.9, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.AdagradOptimizer(learning_rate=0.01, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.01, parameter_list=model.parameters())

    EPOCH_NUM = 2
    for epoch_id in range(EPOCH_NUM):
        for batch_id, data in enumerate(train_loader()):
            # Prepare the data (now more concise)
            image_data, label_data = data
            image = fluid.dygraph.to_variable(image_data)
            label = fluid.dygraph.to_variable(label_data)

            # Forward pass
            predict = model(image)

            # Compute the loss as the mean over the samples in the batch
            loss = fluid.layers.cross_entropy(predict, label)
            avg_loss = fluid.layers.mean(loss)

            # Print the current loss every 200 batches
            if batch_id % 200 == 0:
                print("epoch: {}, batch: {}, loss is: {}".format(epoch_id, batch_id, avg_loss.numpy()))

            # Backward pass and parameter update
            avg_loss.backward()
            optimizer.minimize(avg_loss)
            model.clear_gradients()

    # Save the model parameters
    fluid.save_dygraph(model.state_dict(), 'mnist')

loading mnist dataset from ./work/mnist.json.gz ......

epoch: 0, batch: 0, loss is: [2.351801]

epoch: 0, batch: 200, loss is: [0.41903907]

epoch: 0, batch: 400, loss is: [0.41572136]

epoch: 1, batch: 0, loss is: [0.36748636]

epoch: 1, batch: 200, loss is: [0.12394204]

epoch: 1, batch: 400, loss is: [0.33261067]

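Distributed Training

In industrial practice, many complex tasks need more powerful models, and powerful models combined with massive amounts of training data often make training very time-consuming. For example, in computer vision classification, training a model that reaches good accuracy on the ImageNet dataset can take about a week. Because we constantly try different optimization ideas and schemes, a week per training run would greatly slow down model iteration. When machine resources are plentiful, distributed training is recommended: it can cut the training time of most models down to hours.

Distributed training has two modes: model parallelism and data parallelism.

Model Parallelism

Model parallelism splits one network into several parts and places them on multiple devices (GPUs) for training; every device works on the same training data. Model parallelism saves memory on each device, but its applicability is fairly limited.

Model parallelism is generally used in two scenarios: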

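- The model is too large: the complete model does not fit on a single GPU. AlexNet, the winner of the 2012 ImageNet competition, is a classic example. GPU memory was small at the time and a single GPU could not hold AlexNet, so the researchers split it into two parts and trained them in parallel on two GPUs.

- Parts of the network are relatively independent: when the structure of the network can be parallelized, model parallelism can be used. For example, in object detection, some models (such as YOLO9000) perform bounding-box regression and class prediction independently, so the independent parts can be placed on different device nodes for distributed training.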
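The layer-splitting idea can be sketched in plain Python. This is a toy under stated assumptions, not PaddlePaddle code: the Device class and the tiny layer functions are hypothetical stand-ins for GPUs and network layers. It shows that splitting the layers across two devices produces the same output as running them all on one device, and that only the intermediate activations have to cross the device boundary.

```python
from typing import Callable, List

# Hypothetical "layers": plain functions that map a vector to a vector.
Layer = Callable[[List[float]], List[float]]


def scale(factor: float) -> Layer:
    return lambda xs: [factor * x for x in xs]


def shift(offset: float) -> Layer:
    return lambda xs: [x + offset for x in xs]


def relu(xs: List[float]) -> List[float]:
    return [max(0.0, x) for x in xs]


class Device:
    """Hypothetical stand-in for one GPU that stores only its share of the layers."""

    def __init__(self, name: str, layers: List[Layer]):
        self.name = name
        self.layers = layers

    def forward(self, xs: List[float]) -> List[float]:
        for layer in self.layers:
            xs = layer(xs)
        return xs


FULL_MODEL: List[Layer] = [scale(2.0), shift(-1.0), relu, scale(0.5)]


def run_on_one_device(xs: List[float]) -> List[float]:
    return Device("gpu0", FULL_MODEL).forward(xs)


def run_model_parallel(xs: List[float], split: int) -> List[float]:
    # Layers [0, split) live on gpu0, layers [split, end) live on gpu1.
    gpu0 = Device("gpu0", FULL_MODEL[:split])
    gpu1 = Device("gpu1", FULL_MODEL[split:])
    activations = gpu0.forward(xs)  # only these activations travel between devices
    return gpu1.forward(activations)
```

For example, run_model_parallel([0.0, 1.0, 2.0], split=2) gives the same result as run_on_one_device([0.0, 1.0, 2.0]). In a real network the split point is chosen so that each part fits into one GPU's memory.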

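Data Parallelism

Unlike model parallelism, data parallelism reads several portions of data at a time and feeds them to the models on multiple devices (GPUs); the model on every device is identical. This is the approach PaddlePaddle uses.

Note:

GPU hardware is developing rapidly, and the memory of mainstream deep learning GPUs is already large enough for most network models, so data parallelism is used in most cases.

Data parallelism works like the saying "many hands make light work". If the training data are bricks and each device (GPU) is a worker, single-GPU training is one worker carrying bricks, while multi-GPU training is several workers carrying bricks at the same time, so the amount moved per step grows with the number of workers (in practice the speedup is somewhat less than linear because the devices must communicate). Note that every device holds an identical model but receives different input data, so the gradients computed on the devices differ. If each device updated its model only with its own gradient, the model parameters on the devices would no longer be the same at the next step. A gradient synchronization mechanism is therefore needed to guarantee that every device uses exactly the same gradient.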
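The gradient-synchronization argument can be checked numerically. The sketch below is a plain-Python toy, not PaddlePaddle code: it assumes a one-parameter linear model y = w * x with a mean-squared-error loss, splits one batch evenly across a hypothetical number of devices, scales each device's gradient by 1/N and sums the results, which is what an all-reduce of the locally scaled gradients achieves. With equal-sized shards the synchronized gradient equals the full-batch gradient, and every replica ends up with the same updated weight.

```python
from typing import List, Sequence, Tuple

Sample = Tuple[float, float]  # (x, y)


def grad_mse(w: float, batch: Sequence[Sample]) -> float:
    """d/dw of mean((w * x - y) ** 2) over the batch."""
    return sum(2.0 * (w * x - y) * x for x, y in batch) / len(batch)


def split_batch(batch: Sequence[Sample], n_devices: int) -> List[Sequence[Sample]]:
    # Assumes len(batch) is divisible by n_devices, so all shards have the same size.
    size = len(batch) // n_devices
    return [batch[i * size:(i + 1) * size] for i in range(n_devices)]


def data_parallel_step(w: float, batch: Sequence[Sample], n_devices: int, lr: float) -> List[float]:
    """Return the weight held by each replica after one synchronized SGD step."""
    shards = split_batch(batch, n_devices)
    # Each device computes a gradient on its own shard only.
    local_grads = [grad_mse(w, shard) for shard in shards]
    # Gradient sync: scale each local gradient by 1/N and sum them (an all-reduce),
    # so every replica receives the same averaged gradient.
    synced = sum(g / n_devices for g in local_grads)
    # Every replica applies the identical update, so the copies stay consistent.
    return [w - lr * synced for _ in range(n_devices)]
```

With batch = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)] and w = 0.0, two devices compute shard gradients of -10 and -50; the synchronized value -30 equals grad_mse(0.0, batch), so both replicas step to the same weight.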

There are two ways to synchronize gradients: RPC communication and NCCL2 communication (NCCL: NVIDIA Collective Communications Library).

RPC Communication

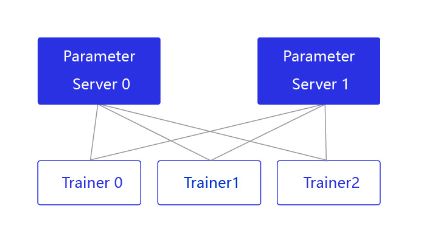

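RPC communication is typically used for distributed CPU training. It involves two kinds of nodes, parameter servers (Parameter Server) and training nodes (Trainer), structured as shown in Figure 2.

Figure 2: Structure of the Pserver communication mode

The parameter server collects the gradient updates from every device and computes one global update. Trainers do the training: every Trainer runs the same program on different data. When the parameter server receives the gradient update requests from the Trainers, it updates the model parameters in one place.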
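One synchronous round of the parameter-server scheme can be sketched in-process as follows. This is an illustration, not PaddlePaddle's implementation: ParameterServer and Trainer are hypothetical classes, the model is assumed to be a single weight in y = w * x with squared-error loss, and plain method calls stand in for the RPC messages a real deployment would send (where parameters can also be spread across several servers). Each Trainer pulls the current parameters, computes a gradient on its own data and pushes it; the server averages the pushed gradients and applies one global update.

```python
from typing import Dict, List, Sequence, Tuple

Params = Dict[str, float]


class ParameterServer:
    """Hypothetical in-process parameter server holding the global parameters."""

    def __init__(self, params: Params, lr: float):
        self.params = dict(params)
        self.lr = lr
        self.pending: List[Params] = []

    def pull(self) -> Params:
        return dict(self.params)

    def push(self, grads: Params) -> None:
        self.pending.append(grads)

    def apply_updates(self) -> None:
        """Average all pushed gradients and apply one global SGD update."""
        n = len(self.pending)
        for name in self.params:
            avg = sum(g[name] for g in self.pending) / n
            self.params[name] -= self.lr * avg
        self.pending.clear()


class Trainer:
    """Every trainer runs the same code; only its data shard differs."""

    def __init__(self, data: Sequence[Tuple[float, float]]):
        self.data = data

    def compute_grads(self, params: Params) -> Params:
        w = params["w"]
        g = sum(2.0 * (w * x - y) * x for x, y in self.data) / len(self.data)
        return {"w": g}


def sync_round(server: ParameterServer, trainers: List[Trainer]) -> None:
    # All trainers see the same parameters, because the update happens after every push.
    for trainer in trainers:
        server.push(trainer.compute_grads(server.pull()))
    server.apply_updates()
```

Two trainers holding [(1.0, 2.0), (2.0, 4.0)] and [(3.0, 6.0), (4.0, 8.0)] move w from 0.0 to about 0.3 in the first round, and repeated rounds drive w toward the true slope 2.0.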

NCCL2 Communication (Collective)

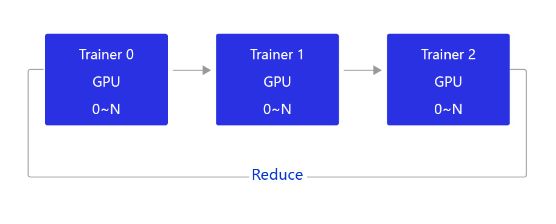

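PaddlePaddle's distributed GPU training currently uses NCCL2-based communication, structured as shown in Figure 3.

Figure 3: Structure of the NCCL2 communication mode

Compared with RPC communication, distributed training with NCCL2 (Collective communication) needs no parameter server process. Every Trainer process keeps a complete copy of the model parameters. After computing their gradients, the Trainers communicate with one another to reduce the gradient data onto every device of every node (an all-reduce), and then each node updates its parameters on its own.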
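The "reduce the gradients onto every device" step is an all-reduce. One well-known way collective libraries such as NCCL implement it is the ring algorithm, simulated below in a single process (the lists stand in for the buffers on N GPUs; this is an illustration, not NCCL's code). Each buffer is cut into N chunks. In the reduce-scatter phase every worker passes one chunk to its right-hand neighbour, which adds it to its own copy; after N - 1 steps each worker owns one fully summed chunk. In the all-gather phase the summed chunks travel around the ring for another N - 1 steps until every worker holds the complete result.

```python
from typing import List


def ring_allreduce(buffers: List[List[float]]) -> List[List[float]]:
    """Sum-all-reduce equal-length buffers held by N simulated workers arranged in a ring."""
    n = len(buffers)
    length = len(buffers[0])
    assert all(len(b) == length for b in buffers), "buffers must have equal length"
    assert length % n == 0, "this sketch assumes the length is divisible by N"
    size = length // n
    data = [list(b) for b in buffers]  # copy so the caller's lists are untouched

    def chunk(c: int) -> range:
        return range(c * size, (c + 1) * size)

    # Phase 1, reduce-scatter: in step s, worker i sends chunk (i - s) % n to worker i + 1,
    # which adds it in. Afterwards worker i holds the full sum of chunk (i + 1) % n.
    for step in range(n - 1):
        messages = []  # snapshot first: all sends in one step happen simultaneously
        for i in range(n):
            c = (i - step) % n
            messages.append(((i + 1) % n, c, [data[i][k] for k in chunk(c)]))
        for receiver, c, values in messages:
            for k, v in zip(chunk(c), values):
                data[receiver][k] += v

    # Phase 2, all-gather: in step s, worker i forwards chunk (i + 1 - s) % n,
    # and the receiver overwrites its stale copy with the finished sum.
    for step in range(n - 1):
        messages = []
        for i in range(n):
            c = (i + 1 - step) % n
            messages.append(((i + 1) % n, c, [data[i][k] for k in chunk(c)]))
        for receiver, c, values in messages:
            for k, v in zip(chunk(c), values):
                data[receiver][k] = v
    return data
```

In each step every worker sends only one chunk (1/N of its buffer), so the traffic per worker stays roughly constant as more GPUs join the ring, which is one reason this pattern suits multi-GPU gradient synchronization.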

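PaddlePaddle makes data-parallel training convenient: with a few simple changes, a program can train on multiple GPUs in parallel. The following shows how to turn a single-machine program into a multi-machine, multi-GPU program with a few simple modifications.

Note:

AI Studio currently supports only a single GPU, so this example has to be run on a local machine with multiple GPUs and cannot be demonstrated on AI Studio.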

Before starting training, configure the following:

- Get the device ID from the environment and pass it to CUDAPlace.

device_id = fluid.dygraph.parallel.Env().dev_id
place = fluid.CUDAPlace(device_id)

- Preprocess the defined network and switch it to parallel mode.

strategy = fluid.dygraph.parallel.prepare_context()  ## new
model = MNIST()
model = fluid.dygraph.parallel.DataParallel(model, strategy)  ## new

- Define a reader for multi-GPU training so that GPUs with different IDs load different data.

valid_loader = paddle.batch(paddle.dataset.mnist.test(), batch_size=16, drop_last=True)
valid_loader = fluid.contrib.reader.distributed_batch_reader(valid_loader)

- Scale the loss of each training batch and aggregate the parameter gradients across devices.

avg_loss = model.scale_loss(avg_loss)  ## new
avg_loss.backward()
model.apply_collective_grads()  ## new
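The reader step relies on each trainer seeing a different slice of the batches. The sketch below shows one plausible round-robin scheme in plain Python; it is an assumption for illustration, not the source of fluid.contrib.reader.distributed_batch_reader. Because that reader is called without trainer arguments, it presumably obtains the trainer count and ID from the environment prepared by the launcher; here they are explicit parameters. From every group of trainers_num consecutive batches, trainer trainer_id keeps one, and an incomplete trailing group is dropped so that all trainers run the same number of steps, which matters because every step ends with a collective gradient exchange that waits for all trainers.

```python
from typing import Callable, Iterator, List, TypeVar

T = TypeVar("T")


def shard_batches(batch_reader: Callable[[], Iterator[T]],
                  trainers_num: int, trainer_id: int) -> Callable[[], Iterator[T]]:
    """Hypothetical round-robin sharding of a batch reader across trainers."""
    assert 0 <= trainer_id < trainers_num

    def reader() -> Iterator[T]:
        group: List[T] = []
        for batch in batch_reader():
            group.append(batch)
            if len(group) == trainers_num:
                yield group[trainer_id]  # this trainer's batch from the group
                group = []
        # A trailing partial group is discarded on purpose.

    return reader
```

With seven batches numbered 0 to 6 and three trainers, trainer 0 receives batches 0 and 3, trainer 1 receives 1 and 4, trainer 2 receives 2 and 5, and batch 6 is skipped.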

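The complete program is shown below.

def train_multi_gpu():
    ## Change 1: get the index of the GPU to use from the environment
    place = fluid.CUDAPlace(fluid.dygraph.parallel.Env().dev_id)
    with fluid.dygraph.guard(place):
        ## Change 2: prepare the original model for parallel training
        strategy = fluid.dygraph.parallel.prepare_context()
        model = MNIST()
        model = fluid.dygraph.parallel.DataParallel(model, strategy)
        model.train()

        # Call the data loading function
        train_loader = load_data('train')
        ## Change 3: multi-GPU data reading; each process must read different data
        train_loader = fluid.contrib.reader.distributed_batch_reader(train_loader)

        optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01, parameter_list=model.parameters())

        EPOCH_NUM = 5
        for epoch_id in range(EPOCH_NUM):
            for batch_id, data in enumerate(train_loader()):
                # Prepare the data
                image_data, label_data = data
                image = fluid.dygraph.to_variable(image_data)
                label = fluid.dygraph.to_variable(label_data)

                predict = model(image)
                loss = fluid.layers.cross_entropy(predict, label)
                avg_loss = fluid.layers.mean(loss)

                # Change 4: scale the loss for multi-GPU training and aggregate the gradients of all devices
                avg_loss = model.scale_loss(avg_loss)
                avg_loss.backward()
                model.apply_collective_grads()

                # Minimize the loss and clear this step's gradients
                optimizer.minimize(avg_loss)
                model.clear_gradients()

                if batch_id % 200 == 0:
                    print("epoch: {}, batch: {}, loss is: {}".format(epoch_id, batch_id, avg_loss.numpy()))

        # Save the model parameters from the first process only, so processes do not overwrite the same file
        if fluid.dygraph.parallel.Env().local_rank == 0:
            fluid.save_dygraph(model.state_dict(), 'mnist')

# The launcher runs this file once per GPU, so the script must call the function itself
# (load_data and MNIST defined above must also be present in train_multi_gpu.py)
if __name__ == '__main__':
    train_multi_gpu()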

To launch multi-GPU training, a few parameters also have to be set on the command line. Open a terminal and run:

$ python -m paddle.distributed.launch --selected_gpus=0,1,2,3 --log_dir ./mylog train_multi_gpu.py

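- paddle.distributed.launch: starts the distributed run.

- selected_gpus: the indices of the GPUs to use (this requires a multi-GPU machine; run watch nvidia-smi to see the GPU indices).

- log_dir: the directory where training logs are stored; if it is not set, the training output of every GPU is printed to the screen.

- train_multi_gpu.py: the multi-GPU training program, which contains the modified train_multi_gpu() function.

After training finishes, the ./mylog directory contains one log file per GPU process, four for the command above. The content of workerlog.0 is shown below (this sample log was recorded with three trainers, as nranks: 3 shows):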

grep: warning: GREP_OPTIONS is deprecated; please use an alias or script

dev_id 0

I1104 06:25:04.377323 31961 nccl_context.cc:88] worker: 127.0.0.1:6171 is not ready, will retry after 3 seconds...

I1104 06:25:07.377645 31961 nccl_context.cc:127] init nccl context nranks: 3 local rank: 0 gpu id: 1

W1104 06:25:09.097079 31961 device_context.cc:235] Please NOTE: device: 1, CUDA Capability: 61, Driver API Version: 10.1, Runtime API Version: 9.0

W1104 06:25:09.104460 31961 device_context.cc:243] device: 1, cuDNN Version: 7.5.

start data reader (trainers_num: 3, trainer_id: 0)

epoch: 0, batch_id: 10, loss is: [0.47507238]

epoch: 0, batch_id: 20, loss is: [0.25089613]

epoch: 0, batch_id: 30, loss is: [0.13120805]

epoch: 0, batch_id: 40, loss is: [0.12122715]

epoch: 0, batch_id: 50, loss is: [0.07328521]

epoch: 0, batch_id: 60, loss is: [0.11860339]

epoch: 0, batch_id: 70, loss is: [0.08205047]

epoch: 0, batch_id: 80, loss is: [0.08192863]

epoch: 0, batch_id: 90, loss is: [0.0736289]

epoch: 0, batch_id: 100, loss is: [0.08607423]

start data reader (trainers_num: 3, trainer_id: 0)

epoch: 1, batch_id: 10, loss is: [0.07032011]

epoch: 1, batch_id: 20, loss is: [0.09687119]

epoch: 1, batch_id: 30, loss is: [0.0307216]

epoch: 1, batch_id: 40, loss is: [0.03884467]

epoch: 1, batch_id: 50, loss is: [0.02801813]

epoch: 1, batch_id: 60, loss is: [0.05751991]

epoch: 1, batch_id: 70, loss is: [0.03721186]

.....

II. [Handwritten Digit Recognition] Training Debugging and Optimization

Overview

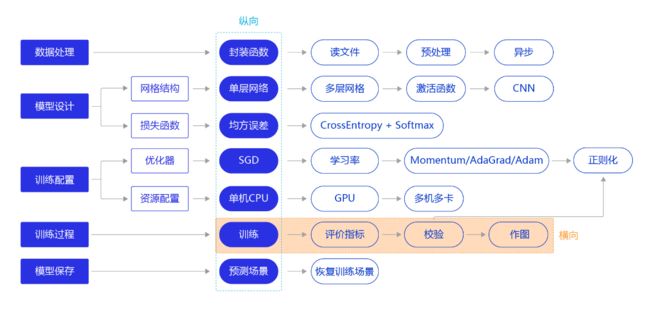

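In the previous section we studied how to optimize resource deployment, using a single GPU and distributed training to make model training more efficient. In this section we continue to expand horizontally along the "horizontal-vertical" approach shown in Figure 1, and discuss methods for debugging and optimizing the training stage of the handwritten digit recognition task so that the model's real performance is assured.

Optimizing the training process involves five key steps: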

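1. Compute the classification accuracy to observe the training effect.

The cross-entropy loss serves only as the optimization objective; it cannot directly and accurately measure how well the model is trained. Accuracy measures the training effect directly, but because it is discrete it is unsuitable as a loss function for optimizing a neural network.

2. Inspect the training process to identify potential problems.

If the model's loss or evaluation metrics behave abnormally, we usually print the inputs and outputs of every layer to locate the problem, and analyze each layer's content to find the cause of the error.

3. Add validation or testing to better evaluate the model.

Ideally, the trained model reaches high accuracy on both the training set and the validation set. If the accuracy is low on both sets, the network is not trained well enough (underfitting); if the training accuracy is clearly higher than the validation accuracy, overfitting has probably occurred. Overfitting can be addressed by adding a regularization term to the optimization objective.

4. Add a regularization term to avoid overfitting.

PaddlePaddle supports adding a regularization term for all parameters, which is the usual practice. It also supports adding a regularization term to a single layer or one part of the network, for finer control over how the parameters are trained.

5. Visual analysis.

Besides printing values or plotting with the matplotlib library, users can rely on VisualDL, a more professional visualization tool provided by PaddlePaddle, for convenient visual analysis.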

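Computing the Model's Classification Accuracy

Accuracy is an intuitive metric of a classification model's performance, but because it is discrete it is unsuitable as a loss function for optimization. In general, the lower a model's cross-entropy loss, the higher its classification accuracy. Classification accuracy lets us compare two loss functions fairly, as in the comparison of mean squared error and cross-entropy in the section "[Handwritten Digit Recognition] Loss Functions".

PaddlePaddle provides an API for computing classification accuracy: fluid.layers.accuracy computes it directly. Its input parameter takes the predicted classification result predict, and its label parameter takes the ground-truth labels of the data.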
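What fluid.layers.accuracy reports with its default top-1 setting (k=1) can be reproduced in plain Python; the helpers below are an illustrative re-implementation, not Paddle's code. The example also makes the "discrete" point concrete: two sets of predictions with identical accuracy can have very different cross-entropy, so accuracy gives the optimizer no signal about how confident the correct predictions are.

```python
import math
from typing import Sequence


def top1_accuracy(probs: Sequence[Sequence[float]], labels: Sequence[int]) -> float:
    """Fraction of samples whose highest-probability class equals the label."""
    correct = 0
    for p, y in zip(probs, labels):
        predicted = max(range(len(p)), key=lambda c: p[c])  # arg-max class
        correct += int(predicted == y)
    return correct / len(labels)


def mean_cross_entropy(probs: Sequence[Sequence[float]], labels: Sequence[int]) -> float:
    """Mean of -log(probability assigned to the true class)."""
    return sum(-math.log(p[y]) for p, y in zip(probs, labels)) / len(labels)
```

For labels [0, 1], the hesitant predictions [[0.6, 0.4], [0.3, 0.7]] and the confident ones [[0.9, 0.1], [0.1, 0.9]] both have accuracy 1.0, yet the confident ones have a much lower cross-entropy. Small changes to the weights move the cross-entropy smoothly but leave the accuracy unchanged until a prediction flips class.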

In the code below, we compute the classification accuracy in the model's forward function and print the accuracy of each batch during training.

# Load the required libraries
import os
import random
import paddle
import paddle.fluid as fluid
from paddle.fluid.dygraph.nn import Conv2D, Pool2D, Linear
import numpy as np
from PIL import Image
import gzip
import json

# Define the dataset reader
def load_data(mode='train'):
    # Read the data file
    datafile = './work/mnist.json.gz'
    print('loading mnist dataset from {} ......'.format(datafile))
    data = json.load(gzip.open(datafile))
    # Split the data into the training, validation and test sets
    train_set, val_set, eval_set = data

    # Dataset parameters: image height IMG_ROWS, image width IMG_COLS
    IMG_ROWS = 28
    IMG_COLS = 28

    # Use the training, validation or test set according to the mode argument
    if mode == 'train':
        imgs = train_set[0]
        labels = train_set[1]
    elif mode == 'valid':
        imgs = val_set[0]
        labels = val_set[1]
    elif mode == 'eval':
        imgs = eval_set[0]
        labels = eval_set[1]
    else:
        raise ValueError("mode should be 'train', 'valid' or 'eval', got {}".format(mode))

    # Total number of images
    imgs_length = len(imgs)
    # Check that the number of images matches the number of labels
    assert len(imgs) == len(labels), \
        "length of train_imgs({}) should be the same as train_labels({})".format(
            len(imgs), len(labels))

    index_list = list(range(imgs_length))
    # Batch size used when reading data
    BATCHSIZE = 100

    # Define the data generator
    def data_generator():
        # In training mode, shuffle the training data
        if mode == 'train':
            random.shuffle(index_list)
        imgs_list = []
        labels_list = []
        # Read samples in index order
        for i in index_list:
            # Read the image and label and convert their shapes and types
            img = np.reshape(imgs[i], [1, IMG_ROWS, IMG_COLS]).astype('float32')
            label = np.reshape(labels[i], [1]).astype('int64')
            imgs_list.append(img)
            labels_list.append(label)
            # Once the buffer holds BATCHSIZE samples, yield one batch
            if len(imgs_list) == BATCHSIZE:
                yield np.array(imgs_list), np.array(labels_list)
                # Clear the buffers
                imgs_list = []
                labels_list = []
        # If fewer than BATCHSIZE samples remain, they form
        # one last mini-batch of size len(imgs_list)
        if len(imgs_list) > 0:
            yield np.array(imgs_list), np.array(labels_list)

    return data_generator

# Define the model structure
class MNIST(fluid.dygraph.Layer):
    def __init__(self):
        super(MNIST, self).__init__()
        # Convolution layer with ReLU activation
        self.conv1 = Conv2D(num_channels=1, num_filters=20, filter_size=5, stride=1, padding=2, act='relu')
        # Max-pooling layer with a 2x2 window and stride 2
        self.pool1 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Convolution layer with ReLU activation
        self.conv2 = Conv2D(num_channels=20, num_filters=20, filter_size=5, stride=1, padding=2, act='relu')
        # Max-pooling layer with a 2x2 window and stride 2
        self.pool2 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Fully connected layer with 10 outputs
        self.fc = Linear(input_dim=980, output_dim=10, act='softmax')

    # Forward computation; also returns the accuracy when a label is given
    def forward(self, inputs, label=None):
        x = self.conv1(inputs)
        x = self.pool1(x)
        x = self.conv2(x)
        x = self.pool2(x)
        x = fluid.layers.reshape(x, [x.shape[0], 980])
        x = self.fc(x)
        if label is not None:
            acc = fluid.layers.accuracy(input=x, label=label)
            return x, acc
        else:
            return x

# Call the data loading function
train_loader = load_data('train')

# Set use_gpu to True when running on a GPU machine
use_gpu = False
place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()

with fluid.dygraph.guard(place):
    model = MNIST()
    model.train()

    # Four optimizer settings; try each one and compare the results
    optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.MomentumOptimizer(learning_rate=0.01, momentum=0.9, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.AdagradOptimizer(learning_rate=0.01, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.01, parameter_list=model.parameters())

    EPOCH_NUM = 5
    for epoch_id in range(EPOCH_NUM):
        for batch_id, data in enumerate(train_loader()):
            # Prepare the data
            image_data, label_data = data
            image = fluid.dygraph.to_variable(image_data)
            label = fluid.dygraph.to_variable(label_data)

            # Forward pass, returning both the model output and the classification accuracy
            predict, acc = model(image, label)

            # Compute the loss as the mean over the samples in the batch
            loss = fluid.layers.cross_entropy(predict, label)
            avg_loss = fluid.layers.mean(loss)

            # Print the current loss and accuracy every 200 batches
            if batch_id % 200 == 0:
                print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(), acc.numpy()))

            # Backward pass and parameter update
            avg_loss.backward()
            optimizer.minimize(avg_loss)
            model.clear_gradients()

    # Save the model parameters
    fluid.save_dygraph(model.state_dict(), 'mnist')

loading mnist dataset from ./work/mnist.json.gz ......

epoch: 0, batch: 0, loss is: [2.643961], acc is [0.21]

epoch: 0, batch: 200, loss is: [0.48539692], acc is [0.87]

epoch: 0, batch: 400, loss is: [0.13475493], acc is [0.97]

epoch: 1, batch: 0, loss is: [0.242902], acc is [0.93]

epoch: 1, batch: 200, loss is: [0.13521576], acc is [0.96]

epoch: 1, batch: 400, loss is: [0.11970446], acc is [0.96]

epoch: 2, batch: 0, loss is: [0.16194618], acc is [0.92]

epoch: 2, batch: 200, loss is: [0.30858696], acc is [0.93]

epoch: 2, batch: 400, loss is: [0.05798883], acc is [0.99]

epoch: 3, batch: 0, loss is: [0.06590404], acc is [0.98]

epoch: 3, batch: 200, loss is: [0.09361169], acc is [0.96]

epoch: 3, batch: 400, loss is: [0.0949958], acc is [0.97]

epoch: 4, batch: 0, loss is: [0.1374774], acc is [0.97]

epoch: 4, batch: 200, loss is: [0.07921284], acc is [0.99]

epoch: 4, batch: 400, loss is: [0.09471921], acc is [0.97]

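Inspecting the Training Process to Identify Potential Problems

PaddlePaddle's dygraph mode makes it easy to inspect and debug the execution of training. In the network's forward function, we can print the shapes of each layer's inputs and outputs as well as each layer's parameters. This information not only helps us understand how training executes, but can also reveal potential problems or suggest ideas for further optimization.

In the program below, the check_shape variable controls whether shapes are printed, to verify that the network structure is correct, and the check_content variable controls whether content values are printed, to verify that the data distribution is reasonable. If part of an intermediate layer's output stays at 0 throughout training, that part of the network is likely poorly designed and not being fully used.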
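The shapes printed by check_shape can also be predicted by hand, which is a quick way to tell whether a mismatch comes from the network definition. The helpers below are an illustrative sketch using the standard output-size formula floor((size + 2 * padding - kernel) / stride) + 1; for pooling they assume no padding and floor rounding (an assumption about the Pool2D defaults used here). Tracing a 28x28 input through this network gives 20 feature maps of 7x7, i.e. 20 * 7 * 7 = 980 features, which is why the fully connected layer is declared with input_dim=980. A small zero_fraction helper is included for the "output stays at 0" check; what fraction counts as suspicious is a judgment call.

```python
from typing import Iterable, List, Tuple


def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Spatial output size of a convolution or pooling window (floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_mnist_shapes(batch: int = 100, side: int = 28, channels: int = 20) -> List[Tuple[str, List[int]]]:
    """Follow one batch through conv1 -> pool1 -> conv2 -> pool2 -> flatten."""
    shapes = [("inputs", [batch, 1, side, side])]
    side = conv_out(side, kernel=5, stride=1, padding=2)  # conv1
    shapes.append(("conv1", [batch, channels, side, side]))
    side = conv_out(side, kernel=2, stride=2)  # pool1
    shapes.append(("pool1", [batch, channels, side, side]))
    side = conv_out(side, kernel=5, stride=1, padding=2)  # conv2
    shapes.append(("conv2", [batch, channels, side, side]))
    side = conv_out(side, kernel=2, stride=2)  # pool2
    shapes.append(("pool2", [batch, channels, side, side]))
    shapes.append(("flatten", [batch, channels * side * side]))
    return shapes


def zero_fraction(values: Iterable[float]) -> float:
    """Share of exact zeros, e.g. in a ReLU output; near 1.0 over many batches hints at dead units."""
    values = list(values)
    return sum(1 for v in values if v == 0) / len(values)
```

The traced shapes match the outputs1_shape to outputs4_shape lines printed by the program, so a printed shape that disagrees with this trace points to a layer argument that differs from what was intended.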

# Define the model structure
class MNIST(fluid.dygraph.Layer):
    def __init__(self):
        super(MNIST, self).__init__()
        # Convolution layer with ReLU activation
        self.conv1 = Conv2D(num_channels=1, num_filters=20, filter_size=5, stride=1, padding=2, act='relu')
        # Max-pooling layer with a 2x2 window and stride 2
        self.pool1 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Convolution layer with ReLU activation
        self.conv2 = Conv2D(num_channels=20, num_filters=20, filter_size=5, stride=1, padding=2, act='relu')
        # Max-pooling layer with a 2x2 window and stride 2
        self.pool2 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Fully connected layer with 10 outputs
        self.fc = Linear(input_dim=980, output_dim=10, act='softmax')

    # Print the shapes and contents of each layer's inputs and outputs;
    # the check flags decide whether layer parameters and output shapes are printed
    def forward(self, inputs, label=None, check_shape=False, check_content=False):
        # Give each layer's output its own name for easier debugging
        outputs1 = self.conv1(inputs)
        outputs2 = self.pool1(outputs1)
        outputs3 = self.conv2(outputs2)
        outputs4 = self.pool2(outputs3)
        _outputs4 = fluid.layers.reshape(outputs4, [outputs4.shape[0], -1])
        outputs5 = self.fc(_outputs4)

        # Optionally print each layer's parameter shapes and output shapes to verify the network structure
        if check_shape:
            # Print the layer hyperparameters: kernel size, padding and stride of the convolutions, pooling window
            print("\n########## print network layer's superparams ##############")
            print("conv1-- kernel_size:{}, padding:{}, stride:{}".format(self.conv1.weight.shape, self.conv1._padding, self.conv1._stride))
            print("conv2-- kernel_size:{}, padding:{}, stride:{}".format(self.conv2.weight.shape, self.conv2._padding, self.conv2._stride))
            print("pool1-- pool_type:{}, pool_size:{}, pool_stride:{}".format(self.pool1._pool_type, self.pool1._pool_size, self.pool1._pool_stride))
            print("pool2-- pool_type:{}, poo2_size:{}, pool_stride:{}".format(self.pool2._pool_type, self.pool2._pool_size, self.pool2._pool_stride))
            print("fc-- weight_size:{}, bias_size_{}, activation:{}".format(self.fc.weight.shape, self.fc.bias.shape, self.fc._act))

            # Print the output shape of each layer
            print("\n########## print shape of features of every layer ###############")
            print("inputs_shape: {}".format(inputs.shape))
            print("outputs1_shape: {}".format(outputs1.shape))
            print("outputs2_shape: {}".format(outputs2.shape))
            print("outputs3_shape: {}".format(outputs3.shape))
            print("outputs4_shape: {}".format(outputs4.shape))
            print("outputs5_shape: {}".format(outputs5.shape))

        # Optionally print parameters and outputs during training, for debugging
        if check_content:
            # Print convolution kernel weights; there are many, so only one kernel slice is printed
            print("\n########## print convolution layer's kernel ###############")
            print("conv1 params -- kernel weights:", self.conv1.weight[0][0])
            print("conv2 params -- kernel weights:", self.conv2.weight[0][0])

            # Pick a random channel of each convolution output to print
            idx1 = np.random.randint(0, outputs1.shape[1])
            idx2 = np.random.randint(0, outputs3.shape[1])
            # Print the convolution outputs (before pooling), only for the first image in the batch
            print("\nThe {}th channel of conv1 layer: ".format(idx1), outputs1[0][idx1])
            print("The {}th channel of conv2 layer: ".format(idx2), outputs3[0][idx2])
            print("The output of last layer:", outputs5[0], '\n')

        # If label is not None, compute and return the classification accuracy
        if label is not None:
            acc = fluid.layers.accuracy(input=outputs5, label=label)
            return outputs5, acc
        else:
            return outputs5

# Set use_gpu to True when running on a GPU machine
use_gpu = False
place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()

with fluid.dygraph.guard(place):
    model = MNIST()
    model.train()

    # Four optimizer settings; try each one and compare the results
    optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.MomentumOptimizer(learning_rate=0.01, momentum=0.9, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.AdagradOptimizer(learning_rate=0.01, parameter_list=model.parameters())
    #optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.01, parameter_list=model.parameters())

    EPOCH_NUM = 1
    for epoch_id in range(EPOCH_NUM):
        # train_loader is the reader created by load_data('train') in the previous code block
        for batch_id, data in enumerate(train_loader()):
            # Prepare the data
            image_data, label_data = data
            image = fluid.dygraph.to_variable(image_data)
            label = fluid.dygraph.to_variable(label_data)

            # Forward pass, returning both the model output and the classification accuracy
            if batch_id == 0 and epoch_id == 0:
                # Print the model parameters and the output shape of each layer
                predict, acc = model(image, label, check_shape=True, check_content=False)
            elif batch_id == 401:
                # Print the model parameters and the output values of each layer
                predict, acc = model(image, label, check_shape=False, check_content=True)
            else:
                predict, acc = model(image, label)

            # Compute the loss as the mean over the samples in the batch
            loss = fluid.layers.cross_entropy(predict, label)
            avg_loss = fluid.layers.mean(loss)

            # Print the current loss every 200 batches
            if batch_id % 200 == 0:
                print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(), acc.numpy()))

            # Backward pass and parameter update
            avg_loss.backward()
            optimizer.minimize(avg_loss)
            model.clear_gradients()

    # Save the model parameters
    fluid.save_dygraph(model.state_dict(), 'mnist')
    print("Model has been saved.")

########## print network layer's superparams ##############

conv1-- kernel_size:[20, 1, 5, 5], padding:[2, 2], stride:[1, 1]

conv2-- kernel_size:[20, 20, 5, 5], padding:[2, 2], stride:[1, 1]

pool1-- pool_type:max, pool_size:[2, 2], pool_stride:[2, 2]

pool2-- pool_type:max, poo2_size:[2, 2], pool_stride:[2, 2]

fc-- weight_size:[980, 10], bias_size_[10], activation:softmax

########## print shape of features of every layer ###############

inputs_shape: [100, 1, 28, 28]

outputs1_shape: [100, 20, 28, 28]

outputs2_shape: [100, 20, 14, 14]

outputs3_shape: [100, 20, 14, 14]

outputs4_shape: [100, 20, 7, 7]

outputs5_shape: [100, 10]

epoch: 0, batch: 0, loss is: [2.4436905], acc is [0.12]

epoch: 0, batch: 200, loss is: [0.5071287], acc is [0.85]

epoch: 0, batch: 400, loss is: [0.33369377], acc is [0.9]

########## print convolution layer's kernel ###############

conv1 params -- kernel weights: name tmp_9640, dtype: VarType.FP32 shape: [5, 5] lod: {}

dim: 5, 5

layout: NCHW

dtype: float

data: [-0.0788555 -0.263069 0.173067 0.0943731 -0.330341 0.0236578 -0.179387 0.210532 -0.447157 -0.0522538 -0.0654522 0.217332 -0.0451361 0.150161 -0.0948279 0.0692094 -0.0831029 -0.106822 -0.0641434 0.175998 -0.246845 0.182557 -0.0475383 0.0575641 0.00949544]

conv2 params -- kernel weights: name tmp_9642, dtype: VarType.FP32 shape: [5, 5] lod: {}

dim: 5, 5

layout: NCHW

dtype: float

data: [0.0423198 0.073819 0.0338475 -0.0274708 0.0477285 -0.0288095 0.0534335 0.0215134 -0.0577497 -0.0343794 -0.023998 -0.0832208 0.0250395 -0.00252906 0.0141905 -0.00945345 -0.0830555 -0.00147843 0.0212909 0.03187 0.0439529 0.0296657 -0.0700109 0.0171939 0.098752]

The 4th channel of conv1 layer: name tmp_9644, dtype: VarType.FP32 shape: [28, 28] lod: {}

dim: 28, 28

layout: NCHW

dtype: float

data: [0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.021769 0.206861 0.515136 0.321631 0.0243235 0 0 0 0 0 0 0 0 0 0 0 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.0668731 0.524322 1.00596 0.860126 0.536083 0.253132 0.141473 0.0800665 0 0 0 0 0 0 0 0 0 0 0 0 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.0604853 0.415215 0.837638 1.33614 1.58236 1.83481 1.72051 1.62514 1.4474 1.12601 0.968901 0.73601 0.359039 0.297623 0.201985 0 0 0 0 0 0 0 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.0746841 0.523313 0.608934 1.36186 2.1777 2.50967 2.54228 2.49515 2.27341 2.35814 2.35396 2.17972 1.97005 1.99839 1.83783 1.42219 1.01067 0.676495 0.172069 0 0 0 0 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.0612366 0.241015 0.0724264 0.524382 1.09504 1.88296 2.39797 2.60094 2.61296 2.62991 2.73247 2.59973 
2.60205 2.681 2.62454 2.70811 2.70364 2.21264 1.59859 0.704268 0 0 0 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0 0 0.109234 0.0714753 0.437148 0.913582 1.24854 1.50245 1.20571 1.23379 1.25767 1.35831 1.56994 2.03818 2.52027 2.86013 2.88906 2.78742 2.3397 1.54449 0.657499 0.475663 0.322296 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0 0 0.02484 0 0.139519 0.281053 0.367238 0.26733 0.45591 0.457699 0.68033 1.07908 1.17043 1.30375 1.48663 1.74101 1.99182 2.20382 1.65924 1.64602 1.2718 0.838662 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0 0 0 0 0.107223 0.10521 0.116383 0.301448 0.445406 0.434222 0.392996 0.4552 0.734872 0.971918 1.2271 1.59136 2.02296 1.82172 2.25252 1.79786 1.04398 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.00696449 0.0698108 0.306114 0.638249 0.574245 0.48706 0.505045 0.650838 0.922208 1.03083 1.26737 1.60843 1.94611 2.14032 1.96019 2.09455 1.45005 0.513548 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.119476 0.531783 0.791687 0.683928 0.617155 0.647625 0.915661 1.16325 1.55895 1.70391 1.87787 2.00281 2.0758 1.86225 1.80364 1.01006 0.686135 0.207028 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.320864 0.93655 1.05922 1.02103 0.928237 0.967936 1.41041 1.74845 1.9663 2.11404 2.11844 1.57571 1.33162 0.857894 0.693299 0.448657 0.194235 0.0242473 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.393046 1.09965 1.60758 1.35675 1.64695 1.59205 1.52007 1.51716 1.57844 1.39144 1.05012 0.740933 0.491424 0.425326 0.244853 0.0695524 0.0149523 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 
0.554032 0.882544 1.32872 1.84544 2.28876 2.32176 1.85827 1.31267 0.274212 0.111813 0.259714 0.296329 0.115518 0.0103048 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.347815 0.329892 0.807692 1.69389 2.5928 3.04758 2.67524 1.92107 0.992633 0.0305768 0 0 0 0 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.148353 0.138423 0.233999 0.979146 2.00054 2.88146 3.22387 3.0289 2.0821 1.28299 0.43623 0 0 0 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0 0.0721807 0.0504756 0.298574 0.931542 1.71075 2.63278 2.95375 2.96944 2.31449 1.36622 0.299858 0 0 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.0029269 0.0263158 0.0601825 0.0492615 0.119867 0.166048 0.431127 0.79332 1.27371 1.7948 2.09278 2.49575 2.01011 0.98905 0.679764 0.501817 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.0385923 0.132152 0.102091 0.139501 0.204173 0.179747 0.236033 0.235277 0.166877 0.145003 0.242188 0.454453 0.732812 0.996087 1.60188 2.15716 1.84891 1.95408 1.41035 0.901101 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.236445 0.696243 0.510899 0.286746 0.220288 0.3412 0.396412 0.451625 0.469622 0.512363 0.874174 1.23393 1.4006 1.43654 1.89804 2.26362 2.04628 2.33783 1.89397 1.1208 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.403834 0.843024 0.839847 0.726494 0.804957 0.927189 1.05453 1.47207 1.5843 2.06989 2.11453 2.17133 2.07847 2.04159 2.05571 2.1554 1.88123 1.94069 1.40543 0.573527 
0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.345792 0.418812 0.787919 1.2535 1.97145 2.11531 1.98339 2.046 2.0701 2.09752 2.11892 2.01939 1.80322 1.74538 1.64131 1.14534 1.03367 0.734202 0.629127 0.233853 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.485959 0.138767 0.520503 0.905833 1.59099 1.51878 1.59747 1.58025 1.36107 1.26349 1.02513 0.828682 0.608867 0.583614 0.5606 0.490733 0.379575 0.292602 0.169543 0.0366406 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.082286 0 0 0.32423 0.659481 0.512581 0.552353 0.510085 0.41547 0.353352 0.310295 0.242932 0.200082 0.255137 0.229881 0.141555 0.0389643 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0 0 0 0 0.0934399 0.148254 0.174985 0.203264 0.163449 0.0682934 0.0498783 0.0219235 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174 0.000235174]

The 12th channel of conv2 layer: name tmp_9646, dtype: VarType.FP32 shape: [14, 14] lod: {}

dim: 14, 14

layout: NCHW

dtype: float

data: [0.000929017 0 0.309475 1.11119 0.805983 0.423284 0.454608 0.371629 0.343919 0.243843 0.172078 0.087311 0 0 0 0 0 1.28896 0.902538 0.373528 0.289768 0.699572 0.872787 0.698695 0.569343 0.385074 0.307633 0.00944422 0.000272553 0.0698131 1.10257 2.11675 1.87117 0.979725 0.84203 0.403135 0.226651 0.382831 1.25325 0.632039 0.0170033 0 0.407463 0.740803 1.84782 3.05002 2.35104 1.1681 1.21093 1.00907 0.961463 1.55646 2.12036 0.960969 0 0 0.368913 0.893702 1.16343 1.2273 0.496713 0 0.568834 0.924744 1.6509 2.91082 2.86438 0.793923 0 0 0.2758 0.485655 0.441895 0.0167996 0 1.37072 2.50922 2.92496 2.94638 2.93562 1.74751 0 0 0 0.0507057 0.0662391 0 0 0.485309 3.00727 3.789 3.38903 2.40032 0.864312 0 0 0 0 0.0686874 0.0670989 0.0868876 0 1.54648 2.88879 2.33173 1.0526 0.984942 0 0 0 0 0 0 0.0354275 0.486811 1.16066 2.06858 2.36519 1.58058 1.37376 1.42412 0 0 0 0 0 0 0 0.965428 1.20054 1.37817 1.81458 1.3883 1.87815 2.00237 0 0 0 0 0 0 0.0538009 1.61561 1.87641 1.69647 2.11846 2.31551 2.52584 2.15006 0.118366 0 0 0 0.0242238 0.401002 1.89597 3.05333 2.50413 2.44726 2.3666 1.93338 1.04305 0.073456 0 0 0 0 0.0242238 0.671001 1.28224 2.02276 1.09289 0.301835 0.0253051 0 0 0 0 0 0 0 0.0193666 0.617558 0.746628 1.01896 0.519439 0.15919 0 0 0 0 0 0 0 0 0.0156319]

The output of last layer: name tmp_9647, dtype: VarType.FP32 shape: [10] lod: {}

dim: 10

layout: NCHW

dtype: float

data: [2.33763e-05 2.27381e-06 0.0174974 0.938395 3.25289e-06 0.00404238 3.40669e-06 0.000206355 0.0395423 0.000284761]

Model has been saved.

Adding Validation or Testing to Better Evaluate the Model

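During training, we see the model's loss on the training set keep decreasing. But does that mean the model will still work in future application scenarios? To verify that the model is effective, the sample set is usually split into three parts: a training set, a validation set, and a test set.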

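- Training set: used to train the model's parameters; this is the main work done during training.

- Validation set: used to select the model's hyperparameters, such as adjusting the network structure or choosing the weight of the regularization term.

- Test set: used to simulate how the model will perform once deployed. Because the test set takes no part in any model optimization or parameter training, its samples are completely unseen by the model. As long as the validation data is not used to tune the network structure or hyperparameters, validation and test data give similar results, and both reflect the model's real performance better than the training data does.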
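The MNIST file used in this chapter already ships with separate training, validation, and test sets (`train_set, val_set, eval_set = data` in `load_data`). For a dataset that is not pre-split, a three-way split might be sketched as follows. The helper name `split_indices`, the 80/10/10 ratios, and the fixed seed are illustrative assumptions.

```python
import random

def split_indices(n, val_frac=0.1, test_frac=0.1, seed=0):
    """Shuffle sample indices 0..n-1 and split them into train/val/test lists.

    Hypothetical helper for illustration; the ratios and seed are assumptions.
    """
    indices = list(range(n))
    random.Random(seed).shuffle(indices)  # fixed seed so the split is reproducible
    n_test = int(n * test_frac)
    n_val = int(n * val_frac)
    test_idx = indices[:n_test]
    val_idx = indices[n_test:n_test + n_val]
    train_idx = indices[n_test + n_val:]
    return train_idx, val_idx, test_idx

train_idx, val_idx, test_idx = split_indices(1000)
print(len(train_idx), len(val_idx), len(test_idx))  # 800 100 100
```

The important property is that the three index sets are disjoint, so test samples never influence parameter training or hyperparameter selection.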

The following program loads the model parameters saved in the previous training step, reads the test dataset, and evaluates the model on it.

```python
with fluid.dygraph.guard():
    print('start evaluation .......')
    # Load the model parameters
    model = MNIST()
    model_state_dict, _ = fluid.load_dygraph('mnist')
    model.load_dict(model_state_dict)
    model.eval()

    eval_loader = load_data('eval')

    acc_set = []
    avg_loss_set = []
    for batch_id, data in enumerate(eval_loader()):
        x_data, y_data = data
        img = fluid.dygraph.to_variable(x_data)
        label = fluid.dygraph.to_variable(y_data)
        prediction, acc = model(img, label)
        loss = fluid.layers.cross_entropy(input=prediction, label=label)
        avg_loss = fluid.layers.mean(loss)
        acc_set.append(float(acc.numpy()))
        avg_loss_set.append(float(avg_loss.numpy()))

    # Average the loss and accuracy over all batches
    acc_val_mean = np.array(acc_set).mean()
    avg_loss_val_mean = np.array(avg_loss_set).mean()

    print('loss={}, acc={}'.format(avg_loss_val_mean, acc_val_mean))
```

start evaluation .......

loading mnist dataset from ./work/mnist.json.gz ......

loss=0.24862054150551557, acc=0.9312999987602234

The evaluation shows that the model still reaches about 93% accuracy on the test set, so it does have real predictive power.
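The evaluation code above averages the per-batch accuracy and loss with equal weight. That is exact whenever all batches have the same size, but `load_data` yields a shorter final batch when the sample count is not a multiple of BATCHSIZE, and then weighting each batch by its size gives the true per-sample average. A minimal sketch in plain Python; the function name `weighted_mean` and the numbers are hypothetical.

```python
def weighted_mean(batch_values, batch_sizes):
    """Average per-batch metrics, weighting each batch by its number of samples."""
    total = sum(v * n for v, n in zip(batch_values, batch_sizes))
    return total / sum(batch_sizes)

# Hypothetical example: two full batches of 100 samples and a final batch of 50
accs = [0.90, 0.94, 0.80]
sizes = [100, 100, 50]
print(weighted_mean(accs, sizes))  # per-sample accuracy, about 0.896
print(sum(accs) / len(accs))       # unweighted mean over batches, about 0.88
```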

Adding a Regularization Term to Avoid Overfitting

Overfitting

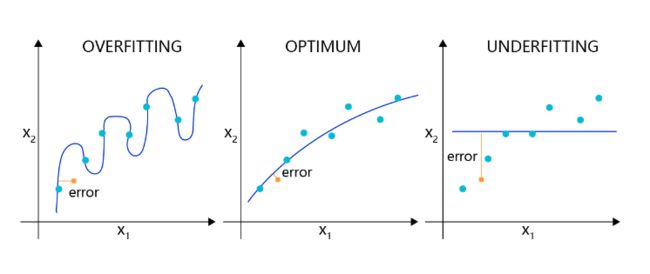

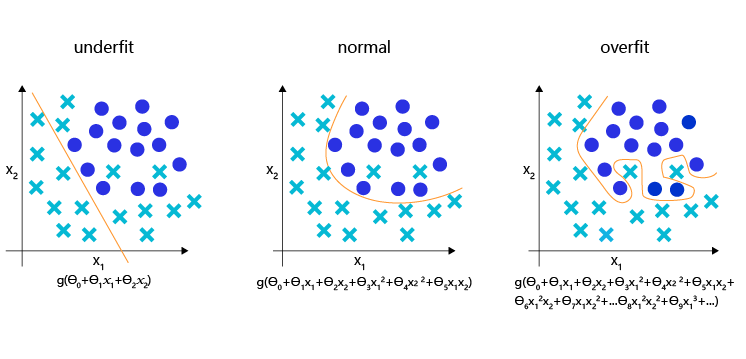

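For complex tasks that have a limited number of samples but need a powerful model, the model easily overfits: its loss on the training set is small while its loss on the validation or test set is large, as shown in Figure 2.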

Figure 2: Overfitting. The training error keeps decreasing, while the test error first decreases and then increases.

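Conversely, if the model's loss is large on both the training set and the test set, this is called underfitting. Overfitting means the model is too sensitive: it has learned some of the errors in the training data, and these errors are not genuine, generalizable patterns (patterns that carry over to the test set). Underfitting means the model is not yet powerful enough and has not fit even the known training samples well, let alone the test samples. Underfitting is easy to observe and fix (as long as the training loss is not good enough, keep moving to a more powerful model), so in practice the problem we more often need to handle is overfitting.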
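The pattern in Figure 2 (training loss keeps falling while validation loss turns upward) can be checked mechanically from per-epoch loss records. Below is a minimal sketch, assuming one training-loss and one validation-loss value have been collected per epoch; the function name `diagnose` and its `patience` rule are illustrative assumptions, not a PaddlePaddle API. It also reports the epoch with the lowest validation loss, which is the natural checkpoint to keep.

```python
def diagnose(train_losses, val_losses, patience=2):
    """Return (best_epoch, overfitting) from per-epoch loss lists.

    overfitting is True when the validation loss has not improved for at least
    `patience` epochs after its minimum while the training loss kept decreasing.
    """
    best_epoch = min(range(len(val_losses)), key=lambda i: val_losses[i])
    epochs_since_best = len(val_losses) - 1 - best_epoch
    train_still_falling = train_losses[-1] < train_losses[best_epoch]
    overfitting = epochs_since_best >= patience and train_still_falling
    return best_epoch, overfitting

# Made-up numbers shaped like Figure 2
train = [1.0, 0.6, 0.4, 0.3, 0.2, 0.15]
val = [1.1, 0.7, 0.5, 0.55, 0.6, 0.7]
print(diagnose(train, val))  # (2, True)
```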

Causes of Overfitting

Overfitting occurs when the model is too sensitive while the training data is too scarce or too noisy.

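As shown in Figure 3, the ideal regression model is a gently sloping parabola. The underfitting model fits only a straight line and clearly fails to capture the true pattern, while the overfitting model fits a curve with many turning points; it is clearly too sensitive and does not express the true pattern either.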

Figure 3: A regression model in the overfitting, ideal, and underfitting states

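As shown in Figure 4, the ideal classification model uses a semicircular curve as its decision boundary. The underfitting model uses a straight line and clearly fails to capture the true boundary, while the overfitting model fits a very contorted boundary. Although that boundary classifies every training sample correctly, the compromises it makes to accommodate a few atypical samples are most likely not the true pattern.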

Figure 4: A classification model in the underfitting, ideal, and overfitting states

Causes and Prevention of Overfitting

To better understand what causes overfitting, consider the reasoning of a detective trying to identify a criminal, as shown in Figure 5.

Figure 5: A detective locating a criminal, as an analogy for model hypotheses

In this case, suppose the detective can also make mistakes. Analysis reveals two possible causes:

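- Case 1: The evidence about the criminal is wrong. Searching for the criminal on the basis of wrong evidence is bound to fail.

- Case 2: The search scope is too large while the evidence is too scarce, so too many candidates (suspects) fit, and the criminal cannot be pinpointed.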

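The detective has two ways to solve this: narrow the search scope (for example, assume the crime could only have been committed by an acquaintance), or find more evidence.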

Mapping this to deep learning, suppose the model can also make mistakes. Analysis reveals two possible causes:

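- Case 1: The training data is noisy, so the model learns the noise instead of the true pattern.

- Case 2: A powerful model (a large hypothesis space) is trained on too little data, so too many candidate hypotheses perform well on the training data, and the model locks onto a "spuriously correct" one.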

Case 1 is handled by cleaning and correcting the data. For Case 2, we either limit the model's representational capacity or collect more training data.

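However, "clean the errors out of the training data" or "collect more training data" is often advice that is correct but unhelpful: we always want more and better data. In real projects, the only faster, cheaper, and controllable way to curb overfitting is to limit the model's representational capacity.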

Regularization Term

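To prevent overfitting when the sample size cannot be increased, the only option is to reduce the model's complexity, either by limiting the number of parameters or by limiting the values they can take (keeping parameter values small).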

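Concretely, a penalty on the size of the parameters is added to the model's optimization objective (the loss). The more parameters there are, or the larger their values, the larger this penalty. By tuning the weight coefficient of the penalty, the model can strike a balance between "minimizing the training loss" and "preserving generalization ability". Generalization ability means that the model remains effective on samples it has never seen. Because of the regularization term, the model's loss on the training set is higher than it would otherwise be.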
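To make the penalty concrete: one common form of L2 regularization adds ½λ times the sum of squared weights to the training loss. Its gradient adds λ·w to each weight's gradient, so every update also shrinks the weights toward zero ("weight decay"). The plain-Python sketch below computes the penalized loss and one SGD step on a toy weight vector. The ½ factor and the names `l2_loss` and `sgd_step_with_l2` are assumptions of this sketch; it is not meant to document exactly how `fluid.regularizer.L2Decay` scales its penalty.

```python
def l2_loss(data_loss, weights, coeff):
    """Training loss plus an L2 penalty 0.5 * coeff * sum(w^2)."""
    return data_loss + 0.5 * coeff * sum(w * w for w in weights)

def sgd_step_with_l2(weights, grads, lr, coeff):
    """One SGD step; the penalty contributes coeff * w to each weight's gradient."""
    return [w - lr * (g + coeff * w) for w, g in zip(weights, grads)]

weights = [2.0, -1.0, 0.5]
zero_grads = [0.0, 0.0, 0.0]  # no data gradient, to isolate the penalty's effect
print(l2_loss(0.3, weights, coeff=0.1))                          # about 0.5625
print(sgd_step_with_l2(weights, zero_grads, lr=0.1, coeff=0.1))  # about [1.98, -0.99, 0.495]
```

With a zero data gradient each weight is simply multiplied by (1 - lr·coeff) = 0.99, which illustrates why a larger regularization coefficient pushes weights toward zero more strongly.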

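PaddlePaddle supports adding one uniform regularization term to all parameters, and also supports adding regularization terms to specific parameters. The former, shown in the code below, only requires setting the optimizer's regularization argument. The parameter regularization_coeff controls the weight of the regularization term: the larger it is, the more heavily model complexity is penalized.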

```python
with fluid.dygraph.guard():
    model = MNIST()
    model.train()

    # Any optimizer can take a regularization term to curb overfitting;
    # regularization_coeff controls the weight of the regularization term
    # optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01, regularization=fluid.regularizer.L2Decay(regularization_coeff=0.1), parameter_list=model.parameters())
    optimizer = fluid.optimizer.AdamOptimizer(learning_rate=0.01, regularization=fluid.regularizer.L2Decay(regularization_coeff=0.1), parameter_list=model.parameters())

    EPOCH_NUM = 10
    for epoch_id in range(EPOCH_NUM):
        for batch_id, data in enumerate(train_loader()):
            # Prepare the data
            image_data, label_data = data
            image = fluid.dygraph.to_variable(image_data)
            label = fluid.dygraph.to_variable(label_data)

            # Forward pass: get both the model output and the classification accuracy
            predict, acc = model(image, label)

            # Compute the loss as the mean over the samples in the batch
            loss = fluid.layers.cross_entropy(predict, label)
            avg_loss = fluid.layers.mean(loss)

            # Print the current loss every 100 batches
            if batch_id % 100 == 0:
                print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(), acc.numpy()))

            # Backward pass and parameter update
            avg_loss.backward()
            optimizer.minimize(avg_loss)
            model.clear_gradients()

    # Save the model parameters
    fluid.save_dygraph(model.state_dict(), 'mnist')
```

epoch: 0, batch: 0, loss is: [2.5837646], acc is [0.07]

epoch: 0, batch: 100, loss is: [0.44785255], acc is [0.86]

epoch: 0, batch: 200, loss is: [0.5621276], acc is [0.79]

epoch: 0, batch: 300, loss is: [0.36024576], acc is [0.9]

epoch: 0, batch: 400, loss is: [0.2137454], acc is [0.97]

epoch: 1, batch: 0, loss is: [0.32742903], acc is [0.94]

epoch: 1, batch: 100, loss is: [0.38002104], acc is [0.9]

epoch: 1, batch: 200, loss is: [0.28988546], acc is [0.91]

epoch: 1, batch: 300, loss is: [0.3161943], acc is [0.92]

epoch: 1, batch: 400, loss is: [0.26775864], acc is [0.92]

epoch: 2, batch: 0, loss is: [0.41065365], acc is [0.88]

epoch: 2, batch: 100, loss is: [0.36259034], acc is [0.93]

epoch: 2, batch: 200, loss is: [0.23258069], acc is [0.97]

epoch: 2, batch: 300, loss is: [0.438219], acc is [0.84]

epoch: 2, batch: 400, loss is: [0.3724376], acc is [0.91]

epoch: 3, batch: 0, loss is: [0.32197174], acc is [0.92]

epoch: 3, batch: 100, loss is: [0.22500922], acc is [0.95]

epoch: 3, batch: 200, loss is: [0.2791322], acc is [0.93]

epoch: 3, batch: 300, loss is: [0.3274293], acc is [0.92]

epoch: 3, batch: 400, loss is: [0.34126315], acc is [0.92]

epoch: 4, batch: 0, loss is: [0.2301678], acc is [0.96]

epoch: 4, batch: 100, loss is: [0.3037812], acc is [0.91]

epoch: 4, batch: 200, loss is: [0.26526916], acc is [0.91]

epoch: 4, batch: 300, loss is: [0.3834384], acc is [0.92]

epoch: 4, batch: 400, loss is: [0.28414917], acc is [0.93]

epoch: 5, batch: 0, loss is: [0.34119365], acc is [0.92]

epoch: 5, batch: 100, loss is: [0.4605037], acc is [0.83]

epoch: 5, batch: 200, loss is: [0.45947126], acc is [0.86]

epoch: 5, batch: 300, loss is: [0.29822484], acc is [0.92]

epoch: 5, batch: 400, loss is: [0.32954705], acc is [0.89]

epoch: 6, batch: 0, loss is: [0.31615752], acc is [0.92]

epoch: 6, batch: 100, loss is: [0.33288595], acc is [0.92]

epoch: 6, batch: 200, loss is: [0.35239428], acc is [0.89]

epoch: 6, batch: 300, loss is: [0.26963434], acc is [0.94]

epoch: 6, batch: 400, loss is: [0.26679957], acc is [0.95]

epoch: 7, batch: 0, loss is: [0.5286848], acc is [0.84]

epoch: 7, batch: 100, loss is: [0.2586086], acc is [0.93]

epoch: 7, batch: 200, loss is: [0.2933053], acc is [0.94]

epoch: 7, batch: 300, loss is: [0.3014267], acc is [0.95]

epoch: 7, batch: 400, loss is: [0.24960919], acc is [0.93]

epoch: 8, batch: 0, loss is: [0.47720584], acc is [0.84]

epoch: 8, batch: 100, loss is: [0.4612022], acc is [0.83]

epoch: 8, batch: 200, loss is: [0.37532455], acc is [0.9]

epoch: 8, batch: 300, loss is: [0.31857097], acc is [0.94]

epoch: 8, batch: 400, loss is: [0.29566267], acc is [0.95]

epoch: 9, batch: 0, loss is: [0.27703482], acc is [0.92]

epoch: 9, batch: 100, loss is: [0.42859527], acc is [0.9]

epoch: 9, batch: 200, loss is: [0.31278244], acc is [0.91]

epoch: 9, batch: 300, loss is: [0.2926207], acc is [0.93]

epoch: 9, batch: 400, loss is: [0.27071095], acc is [0.95]

Visual Analysis

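When training a model, we often need to watch its evaluation metrics and analyze the optimization process to make sure training is effective. Two tools are available for this: the Matplotlib library and VisualDL.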

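- Matplotlib: the most widely used 2D plotting library in Python. It provides a plotting interface closely modeled on MATLAB's functions, and plotting with its lightweight pyplot module (usually imported as plt) is very simple.

- VisualDL: for a more specialized tool, try VisualDL, PaddlePaddle's visual analysis tool. VisualDL can display the computation graph, the trends of various metrics, and data information while PaddlePaddle is running.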

Plotting the Training Loss Curve with Matplotlib

Use the training batch index as the X coordinate and that batch's training loss as the Y coordinate.

- Before training starts, declare two list variables to store the batch indices (iters=[]) and the training losses (losses=[]).

```python
iters = []
losses = []
for epoch_id in range(EPOCH_NUM):
    """start to training"""
```

- As training proceeds, fill the iters and losses lists.

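```python
iters = []
losses = []
# Number of batches per epoch, computed once instead of re-reading the loader on every batch
num_batches = len(list(train_loader()))
for epoch_id in range(EPOCH_NUM):
    for batch_id, data in enumerate(train_loader()):
        # (data preparation that builds image and label from data is omitted here)
        predict, acc = model(image, label)
        loss = fluid.layers.cross_entropy(predict, label)
        avg_loss = fluid.layers.mean(loss)
        # Record the global iteration index and the corresponding loss
        iters.append(batch_id + epoch_id * num_batches)
        losses.append(avg_loss.numpy())
```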

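- After training, label the x and y axes of the plot; the two lists will supply the x and y coordinates.

```python
plt.xlabel("iter", fontsize=14)
plt.ylabel("loss", fontsize=14)
```

- Finally, call plt.plot() to draw the curve.

```python
plt.plot(iters, losses, color='red', label='train loss')
```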
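The x coordinate of a batch is its global iteration index, epoch_id × (batches per epoch) + batch_id. A small sketch of that arithmetic follows; `batches_per_epoch` is an assumed value that would be computed once (for example from the dataset size and batch size) rather than by re-reading the data loader inside the loop, and the 50,000-sample figure is an illustrative assumption.

```python
import math

def global_step(epoch_id, batch_id, batches_per_epoch):
    """Map (epoch, batch within epoch) to a single increasing iteration index."""
    return epoch_id * batches_per_epoch + batch_id

# Assumption for illustration: 50,000 samples with a batch size of 100
batches_per_epoch = math.ceil(50000 / 100)  # a last partial batch would count as one
print(global_step(0, 400, batches_per_epoch))  # 400
print(global_step(2, 100, batches_per_epoch))  # 1100
```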

The complete code is as follows:

```python
# Import the matplotlib library
import matplotlib.pyplot as plt

# Set use_gpu to True on a GPU machine
use_gpu = False
place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()

with fluid.dygraph.guard(place):
    model = MNIST()
    model.train()
    optimizer = fluid.optimizer.SGDOptimizer(learning_rate=0.01, parameter_list=model.parameters())

    EPOCH_NUM = 10
    iter_count = 0  # named iter_count to avoid shadowing Python's built-in iter()
    iters = []
    losses = []
    for epoch_id in range(EPOCH_NUM):
        for batch_id, data in enumerate(train_loader()):
            # Prepare the data
            image_data, label_data = data
            image = fluid.dygraph.to_variable(image_data)
            label = fluid.dygraph.to_variable(label_data)

            # Forward pass: get both the model output and the classification accuracy
            predict, acc = model(image, label)

            # Compute the loss as the mean over the samples in the batch
            loss = fluid.layers.cross_entropy(predict, label)
            avg_loss = fluid.layers.mean(loss)

            # Every 100 batches, print the current loss and record it for plotting
            if batch_id % 100 == 0:
                print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(), acc.numpy()))
                iters.append(iter_count)
                losses.append(avg_loss.numpy())
                iter_count = iter_count + 100

            # Backward pass and parameter update
            avg_loss.backward()
            optimizer.minimize(avg_loss)
            model.clear_gradients()

    # Save the model parameters
    fluid.save_dygraph(model.state_dict(), 'mnist')
```


epoch: 0, batch: 0, loss is: [2.8164496], acc is [0.17]

epoch: 0, batch: 100, loss is: [0.7803092], acc is [0.76]

epoch: 0, batch: 200, loss is: [0.35576484], acc is [0.91]

epoch: 0, batch: 300, loss is: [0.41823608], acc is [0.88]

epoch: 0, batch: 400, loss is: [0.30141822], acc is [0.93]

epoch: 1, batch: 0, loss is: [0.31177253], acc is [0.89]

epoch: 1, batch: 100, loss is: [0.18210725], acc is [0.93]

epoch: 1, batch: 200, loss is: [0.19340122], acc is [0.94]

epoch: 1, batch: 300, loss is: [0.14683631], acc is [0.95]

epoch: 1, batch: 400, loss is: [0.29668286], acc is [0.89]

epoch: 2, batch: 0, loss is: [0.21461716], acc is [0.92]

epoch: 2, batch: 100, loss is: [0.23927967], acc is [0.93]

epoch: 2, batch: 200, loss is: [0.19158168], acc is [0.93]

epoch: 2, batch: 300, loss is: [0.2452182], acc is [0.92]

epoch: 2, batch: 400, loss is: [0.4062963], acc is [0.91]

epoch: 3, batch: 0, loss is: [0.1661708], acc is [0.94]

epoch: 3, batch: 100, loss is: [0.07780333], acc is [0.98]

epoch: 3, batch: 200, loss is: [0.16298363], acc is [0.95]

epoch: 3, batch: 300, loss is: [0.167503], acc is [0.96]

epoch: 3, batch: 400, loss is: [0.12050762], acc is [0.96]

epoch: 4, batch: 0, loss is: [0.21981199], acc is [0.92]

epoch: 4, batch: 100, loss is: [0.11972132], acc is [0.97]

epoch: 4, batch: 200, loss is: [0.20223652], acc is [0.94]

epoch: 4, batch: 300, loss is: [0.06286619], acc is [0.98]

epoch: 4, batch: 400, loss is: [0.0977192], acc is [0.97]

epoch: 5, batch: 0, loss is: [0.135991], acc is [0.97]

epoch: 5, batch: 100, loss is: [0.13423134], acc is [0.94]

epoch: 5, batch: 200, loss is: [0.09947106], acc is [0.97]

epoch: 5, batch: 300, loss is: [0.26743537], acc is [0.92]

epoch: 5, batch: 400, loss is: [0.08938275], acc is [0.96]

epoch: 6, batch: 0, loss is: [0.09112569], acc is [0.97]

epoch: 6, batch: 100, loss is: [0.06174086], acc is [0.98]

epoch: 6, batch: 200, loss is: [0.10499033], acc is [0.97]

epoch: 6, batch: 300, loss is: [0.07620909], acc is [0.97]

epoch: 6, batch: 400, loss is: [0.12196523], acc is [0.97]

epoch: 7, batch: 0, loss is: [0.09976912], acc is [0.95]

epoch: 7, batch: 100, loss is: [0.09516644], acc is [0.97]

epoch: 7, batch: 200, loss is: [0.17530455], acc is [0.93]

epoch: 7, batch: 300, loss is: [0.08290362], acc is [0.98]

epoch: 7, batch: 400, loss is: [0.0663472], acc is [0.98]

epoch: 8, batch: 0, loss is: [0.02740639], acc is [1.]

epoch: 8, batch: 100, loss is: [0.11678866], acc is [0.97]

epoch: 8, batch: 200, loss is: [0.07043077], acc is [0.97]

epoch: 8, batch: 300, loss is: [0.02732858], acc is [1.]

epoch: 8, batch: 400, loss is: [0.10318086], acc is [0.97]

epoch: 9, batch: 0, loss is: [0.0483377], acc is [0.99]

epoch: 9, batch: 100, loss is: [0.04613008], acc is [0.99]

epoch: 9, batch: 200, loss is: [0.07501897], acc is [0.97]

epoch: 9, batch: 300, loss is: [0.04640705], acc is [0.99]

epoch: 9, batch: 400, loss is: [0.031492], acc is [0.99]

```python
# Plot how the loss changes during training
plt.figure()
plt.title("train loss", fontsize=24)
plt.xlabel("iter", fontsize=14)
plt.ylabel("loss", fontsize=14)
plt.plot(iters, losses, color='red', label='train loss')
plt.legend()
plt.grid()
plt.show()
```

III. [Handwritten Digit Recognition] Resuming Training

Loading a Model and Resuming Training

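Earlier sections showed how to save a trained model to a file on disk, so that an application can load the model at any time to make predictions. In day-to-day training, however, unexpected events can interrupt training, deliberately or not. If training a model takes several days, retraining from scratch after an interruption is unacceptable.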

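Fortunately, PaddlePaddle supports continuing training from the last saved state: as long as we regularly save the model's state during training, we do not have to start over from the initial state.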

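The following shows how to implement resumed training, again using the handwritten digit recognition example; the network definition is unchanged.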

```python
import os
import random
import paddle
import paddle.fluid as fluid
from paddle.fluid.dygraph.nn import Conv2D, Pool2D, Linear
import numpy as np
from PIL import Image
import gzip
import json

# Define the dataset reader
def load_data(mode='train'):
    # Data file
    datafile = './work/mnist.json.gz'
    print('loading mnist dataset from {} ......'.format(datafile))
    data = json.load(gzip.open(datafile))
    train_set, val_set, eval_set = data

    # Dataset parameters: image height IMG_ROWS, image width IMG_COLS
    IMG_ROWS = 28
    IMG_COLS = 28

    if mode == 'train':
        imgs = train_set[0]
        labels = train_set[1]
    elif mode == 'valid':
        imgs = val_set[0]
        labels = val_set[1]
    elif mode == 'eval':
        imgs = eval_set[0]
        labels = eval_set[1]

    imgs_length = len(imgs)

    assert len(imgs) == len(labels), \
        "length of train_imgs({}) should be the same as train_labels({})".format(
            len(imgs), len(labels))

    index_list = list(range(imgs_length))

    # Batch size used when reading data
    BATCHSIZE = 100

    # Define the data generator
    def data_generator():
        if mode == 'train':
            random.shuffle(index_list)
        imgs_list = []
        labels_list = []
        for i in index_list:
            img = np.reshape(imgs[i], [1, IMG_ROWS, IMG_COLS]).astype('float32')
            label = np.reshape(labels[i], [1]).astype('int64')
            imgs_list.append(img)
            labels_list.append(label)
            if len(imgs_list) == BATCHSIZE:
                yield np.array(imgs_list), np.array(labels_list)
                imgs_list = []
                labels_list = []

        # If fewer than BATCHSIZE samples remain, they form
        # one final mini-batch of size len(imgs_list)
        if len(imgs_list) > 0:
            yield np.array(imgs_list), np.array(labels_list)

    return data_generator

# Call the data-loading function
train_loader = load_data('train')

# Define the model structure
class MNIST(fluid.dygraph.Layer):
    def __init__(self):
        super(MNIST, self).__init__()
        # Convolutional layer with ReLU activation
        self.conv1 = Conv2D(num_channels=1, num_filters=20, filter_size=5, stride=1, padding=2, act='relu')
        # Max-pooling layer with kernel size 2 and stride 2
        self.pool1 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Convolutional layer with ReLU activation
        self.conv2 = Conv2D(num_channels=20, num_filters=20, filter_size=5, stride=1, padding=2, act='relu')
        # Max-pooling layer with kernel size 2 and stride 2
        self.pool2 = Pool2D(pool_size=2, pool_stride=2, pool_type='max')
        # Fully connected layer with 10 output nodes
        self.fc = Linear(input_dim=980, output_dim=10, act='softmax')

    # Forward computation of the network; label is optional
    def forward(self, inputs, label=None):
        x = self.conv1(inputs)
        x = self.pool1(x)
        x = self.conv2(x)
        x = self.pool2(x)
        x = fluid.layers.reshape(x, [x.shape[0], 980])
        x = self.fc(x)
        if label is not None:
            acc = fluid.layers.accuracy(input=x, label=label)
            return x, acc
        else:
            return x
```

loading mnist dataset from ./work/mnist.json.gz ......

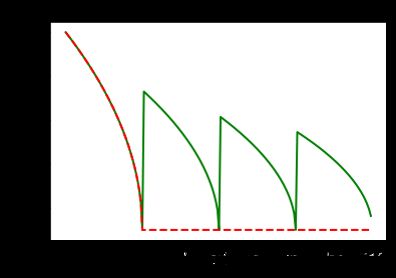

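Before showing how to resume training with PaddlePaddle, we first train a model normally, using the Adam optimizer with a learning rate that changes dynamically, decaying from 0.01 to 0.001. The model is saved after every epoch; later we resume training from the parameters of one of those epochs and check whether uninterrupted training and interrupted-then-resumed training behave the same (in terms of how the training loss changes).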

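Note that a program meant for resumed training must save not only the model parameters but also the optimizer parameters. This is because some optimizers hold state that changes as training proceeds: Adam and AdaGrad, for example, keep per-parameter accumulators of past gradients that adapt the effective step size over time, and a learning-rate schedule tracks how far it has progressed. This optimizer state is essential for resuming training correctly.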
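Why the optimizer state matters can be seen on a toy problem. The sketch below minimizes f(w) = w² with a hand-written momentum optimizer; momentum stands in here for Adam's accumulators, and none of this is PaddlePaddle code. It "checkpoints" after 5 steps with `copy.deepcopy`, then resumes either with both the parameter and the optimizer state, or with the parameter only.

```python
import copy

def grad(w):
    return 2.0 * w  # gradient of f(w) = w^2

def step(state, lr=0.1, beta=0.9):
    """One momentum-SGD update; state holds the parameter and the optimizer's velocity."""
    state["velocity"] = beta * state["velocity"] + grad(state["w"])
    state["w"] -= lr * state["velocity"]

def run(state, n_steps):
    for _ in range(n_steps):
        step(state)
    return state

# Uninterrupted run: 10 steps from w = 1.0
full = run({"w": 1.0, "velocity": 0.0}, 10)

# Interrupted run: checkpoint after 5 steps
ckpt = copy.deepcopy(run({"w": 1.0, "velocity": 0.0}, 5))

# Resume with parameter AND optimizer state
resumed_ok = run(copy.deepcopy(ckpt), 5)

# Resume with the parameter only (optimizer state reset to zero)
resumed_bad = run({"w": ckpt["w"], "velocity": 0.0}, 5)

print(resumed_ok["w"] == full["w"])   # True
print(resumed_bad["w"] == full["w"])  # False
```

In the PaddlePaddle code of this section, the two pieces of state correspond to model.state_dict() and optimizer.state_dict().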

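To demonstrate this, the training program below uses the Adam optimizer, with the learning rate decaying from 0.01 to 0.001 along a polynomial curve (polynomial decay):

```python
lr = fluid.dygraph.PolynomialDecay(0.01, total_steps, 0.001)
```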

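- learning_rate: the initial learning rate

- decay_steps: the number of decay steps

- end_learning_rate: the final learning rate

- power: the power of the polynomial; defaults to 1.0

- cycle: whether the learning rate rises again after it has decayed. The polynomial decay curve is shown in the figure.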
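The schedule can also be written out directly. The sketch below follows the polynomial-decay formula as it is commonly documented for this kind of scheduler: with cycle=False the step is clamped at decay_steps, and with cycle=True decay_steps is stretched to the next multiple that covers the current step. Treat it as an approximation of `fluid.dygraph.PolynomialDecay`, not its source; the function name `polynomial_decay` is hypothetical.

```python
import math

def polynomial_decay(step, learning_rate, decay_steps, end_learning_rate,
                     power=1.0, cycle=False):
    """Learning rate at a given step under polynomial decay (illustrative sketch)."""
    if cycle:
        # Stretch decay_steps to the next multiple covering `step` (at least 1x)
        multiple = max(1, math.ceil(step / decay_steps))
        decay_steps = decay_steps * multiple
    else:
        # Past decay_steps the rate stays at end_learning_rate
        step = min(step, decay_steps)
    frac = 1.0 - step / decay_steps
    return (learning_rate - end_learning_rate) * frac ** power + end_learning_rate

# Values used in the training code: 0.01 -> 0.001 over total_steps steps
total_steps = (60000 // 100 + 1) * 5  # 3005
print(polynomial_decay(0, 0.01, total_steps, 0.001))            # about 0.01
print(polynomial_decay(total_steps, 0.01, total_steps, 0.001))  # about 0.001
```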

```python
# Set use_gpu to True on a GPU machine
use_gpu = False
place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()

with fluid.dygraph.guard(place):
    model = MNIST()
    model.train()

    EPOCH_NUM = 5
    BATCH_SIZE = 100

    # Define the learning-rate schedule. 60000 is the size of the full MNIST
    # training set; if the training split used here is smaller, the rate will
    # not quite reach 0.001 by the last step. Use the same value when resuming.
    total_steps = (int(60000//BATCH_SIZE) + 1) * EPOCH_NUM
    lr = fluid.dygraph.PolynomialDecay(0.01, total_steps, 0.001)

    # Use the Adam optimizer
    optimizer = fluid.optimizer.AdamOptimizer(learning_rate=lr, parameter_list=model.parameters())

    for epoch_id in range(EPOCH_NUM):
        for batch_id, data in enumerate(train_loader()):
            # Prepare the data
            image_data, label_data = data
            image = fluid.dygraph.to_variable(image_data)
            label = fluid.dygraph.to_variable(label_data)

            # Forward pass: get both the model output and the classification accuracy
            predict, acc = model(image, label)
            avg_acc = fluid.layers.mean(acc)

            # Compute the loss as the mean over the samples in the batch
            loss = fluid.layers.cross_entropy(predict, label)
            avg_loss = fluid.layers.mean(loss)

            # Print the current loss every 200 batches
            if batch_id % 200 == 0:
                print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(), avg_acc.numpy()))

            # Backward pass and parameter update
            avg_loss.backward()
            optimizer.minimize(avg_loss)
            model.clear_gradients()

        # At the end of each epoch, save the model parameters and the optimizer parameters
        fluid.save_dygraph(model.state_dict(), './checkpoint/mnist_epoch{}'.format(epoch_id))
        fluid.save_dygraph(optimizer.state_dict(), './checkpoint/mnist_epoch{}'.format(epoch_id))
```

epoch: 0, batch: 0, loss is: [2.3616602], acc is [0.11]

epoch: 0, batch: 200, loss is: [0.04291746], acc is [0.99]

epoch: 0, batch: 400, loss is: [0.0101074], acc is [1.]

epoch: 1, batch: 0, loss is: [0.03018786], acc is [0.99]

epoch: 1, batch: 200, loss is: [0.00359862], acc is [1.]

epoch: 1, batch: 400, loss is: [0.03399187], acc is [0.98]

epoch: 2, batch: 0, loss is: [0.00359349], acc is [1.]

epoch: 2, batch: 200, loss is: [0.00451456], acc is [1.]

epoch: 2, batch: 400, loss is: [0.00253675], acc is [1.]

epoch: 3, batch: 0, loss is: [0.01382196], acc is [1.]

epoch: 3, batch: 200, loss is: [0.00392301], acc is [1.]

epoch: 3, batch: 400, loss is: [0.01524211], acc is [0.99]

epoch: 4, batch: 0, loss is: [0.00149431], acc is [1.]

epoch: 4, batch: 200, loss is: [0.00273003], acc is [1.]

epoch: 4, batch: 400, loss is: [0.01873435], acc is [0.99]

Resuming Training

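In the training code above, we trained for five epochs, and at the end of each epoch we saved both the model parameters and the optimizer-related parameters: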

- model.state_dict() gets the model parameters.

- optimizer.state_dict() gets the optimizer- and learning-rate-related parameters.

- fluid.save_dygraph() saves the parameters to local files.

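For example, the files saved after the first epoch are mnist_epoch0.pdparams and mnist_epoch0.pdopt, which store the model parameters and the optimizer parameters respectively.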

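When loading, if the model parameter file and the optimizer parameter file share the same name (path prefix), load_dygraph can load both at once, as the following code shows.

```python
params_dict, opt_dict = fluid.load_dygraph(params_path)
```

If the two files have different names, call load_dygraph twice to obtain the model parameters and the optimizer parameters separately.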
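After a real interruption you usually want the most recent checkpoint rather than a hard-coded one. Given the naming used above (`./checkpoint/mnist_epoch{N}` saved as a `.pdparams` plus a `.pdopt` file), a small helper can find the newest epoch that has both files and return the path prefix to pass to load_dygraph, together with the epoch to restart from. The helper name `latest_checkpoint` and its regular expression are assumptions of this sketch.

```python
import os
import re
import tempfile

def latest_checkpoint(ckpt_dir, prefix="mnist_epoch"):
    """Return (path_prefix, next_epoch) for the newest complete checkpoint, or (None, 0)."""
    pattern = re.compile(re.escape(prefix) + r"(\d+)\.pdparams$")
    best = -1
    if os.path.isdir(ckpt_dir):
        for name in os.listdir(ckpt_dir):
            m = pattern.match(name)
            if not m:
                continue
            epoch = int(m.group(1))
            # Only count checkpoints whose optimizer state was saved as well
            opt_file = os.path.join(ckpt_dir, "{}{}.pdopt".format(prefix, epoch))
            if os.path.exists(opt_file) and epoch > best:
                best = epoch
    if best < 0:
        return None, 0
    return os.path.join(ckpt_dir, "{}{}".format(prefix, best)), best + 1

# Demo with empty placeholder files in a temporary directory
with tempfile.TemporaryDirectory() as d:
    for name in ["mnist_epoch0.pdparams", "mnist_epoch0.pdopt",
                 "mnist_epoch1.pdparams", "mnist_epoch1.pdopt",
                 "mnist_epoch2.pdparams"]:  # epoch 2 lacks its .pdopt file
        open(os.path.join(d, name), "w").close()
    path, start_epoch = latest_checkpoint(d)
    print(os.path.basename(path), start_epoch)  # mnist_epoch1 2
```

The returned start epoch would then replace the hard-coded 1 in `range(1, EPOCH_NUM)` of the resume code.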

How can we tell whether training has been resumed correctly?

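Ideally, resumed training returns the model to its exact state at the moment of interruption, so the parameter updates after resuming follow the same path they would have followed without the interruption. We can therefore judge whether the method above resumes training correctly by looking at the loss after resuming: resume from the model parameters and optimizer state saved at the end of epoch 0, and check whether the loss in the following epochs (from epoch 1 on) is consistent with uninterrupted training.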
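The comparison can be made concrete by parsing the printed log lines of both runs and pairing entries with the same (epoch, batch). The sketch below uses the log format printed by the training loops in this section and a few values taken from this section's two logs; the function names and the tolerance are assumptions. Because data_generator shuffles the training set with random.shuffle, individual batch losses should only be expected to agree in level and trend unless the data order is reproduced as well.

```python
import math
import re

LOG_RE = re.compile(r"epoch: (\d+), batch: (\d+), loss is: \[([0-9.eE+-]+)\]")

def parse_losses(log_text):
    """Map (epoch, batch) -> loss from lines printed by the training loops."""
    return {(int(e), int(b)): float(v) for e, b, v in LOG_RE.findall(log_text)}

def compare_runs(log_a, log_b, rel_tol=1e-6):
    """Return (key, loss_a, loss_b, close?) for every (epoch, batch) logged in both runs."""
    a, b = parse_losses(log_a), parse_losses(log_b)
    return [(k, a[k], b[k], math.isclose(a[k], b[k], rel_tol=rel_tol))
            for k in sorted(a.keys() & b.keys())]

uninterrupted = """epoch: 1, batch: 0, loss is: [0.03018786], acc is [0.99]
epoch: 1, batch: 200, loss is: [0.00359862], acc is [1.]"""
resumed = """epoch: 1, batch: 0, loss is: [0.03708917], acc is [0.98]
epoch: 1, batch: 200, loss is: [0.08957174], acc is [0.96]"""

for row in compare_runs(uninterrupted, resumed):
    print(row)
```

Here the paired values differ, consistent with a different shuffle order, yet both stay far below the untrained level (about 2.36 at epoch 0, batch 0). A restore that silently failed to load the model parameters would instead show a loss back near that untrained level.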

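Note:

Resuming training has two key points:

- When saving, save both the model parameters and the optimizer parameters.

- When restoring, restore both the model parameters and the optimizer parameters.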

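The code below demonstrates resumed training and checks whether it succeeds: it loads the model and optimizer parameters saved at the end of epoch 0 and continues training from epoch 1, so that the loss after resuming can be compared with the run above.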

```python
params_path = "./checkpoint/mnist_epoch0"
# Set use_gpu to True on a GPU machine
use_gpu = False
place = fluid.CUDAPlace(0) if use_gpu else fluid.CPUPlace()

with fluid.dygraph.guard(place):
    # Load the saved model and optimizer parameters
    params_dict, opt_dict = fluid.load_dygraph(params_path)
    model = MNIST()
    model.load_dict(params_dict)
    model.train()

    EPOCH_NUM = 5
    BATCH_SIZE = 100

    # Define the learning-rate schedule exactly as in the original run
    # (same 60000-based total_steps, so the schedule matches)
    total_steps = (int(60000//BATCH_SIZE) + 1) * EPOCH_NUM
    lr = fluid.dygraph.PolynomialDecay(0.01, total_steps, 0.001)
    # Use the Adam optimizer and load the saved optimizer parameters into it
    optimizer = fluid.optimizer.AdamOptimizer(learning_rate=lr, parameter_list=model.parameters())
    optimizer.set_dict(opt_dict)

    # Continue from epoch 1, since epoch 0 finished before the checkpoint was saved
    for epoch_id in range(1, EPOCH_NUM):
        for batch_id, data in enumerate(train_loader()):
            # Prepare the data
            image_data, label_data = data
            image = fluid.dygraph.to_variable(image_data)
            label = fluid.dygraph.to_variable(label_data)

            # Forward pass: get both the model output and the classification accuracy
            predict, acc = model(image, label)
            avg_acc = fluid.layers.mean(acc)

            # Compute the loss as the mean over the samples in the batch
            loss = fluid.layers.cross_entropy(predict, label)
            avg_loss = fluid.layers.mean(loss)

            # Print the current loss every 200 batches
            if batch_id % 200 == 0:
                print("epoch: {}, batch: {}, loss is: {}, acc is {}".format(epoch_id, batch_id, avg_loss.numpy(), avg_acc.numpy()))

            # Backward pass and parameter update
            avg_loss.backward()
            optimizer.minimize(avg_loss)
            model.clear_gradients()
```

epoch: 1, batch: 0, loss is: [0.03708917], acc is [0.98]

epoch: 1, batch: 200, loss is: [0.08957174], acc is [0.96]

epoch: 1, batch: 400, loss is: [0.02408227], acc is [0.99]

epoch: 2, batch: 0, loss is: [0.07788698], acc is [0.99]

epoch: 2, batch: 200, loss is: [0.07012209], acc is [0.97]

epoch: 2, batch: 400, loss is: [0.0098958], acc is [1.]

epoch: 3, batch: 0, loss is: [0.00520889], acc is [1.]

epoch: 3, batch: 200, loss is: [0.00995], acc is [0.99]

epoch: 3, batch: 400, loss is: [0.00122163], acc is [1.]

epoch: 4, batch: 0, loss is: [0.00160433], acc is [1.]

epoch: 4, batch: 200, loss is: [0.00131854], acc is [1.]

epoch: 4, batch: 400, loss is: [0.01611667], acc is [0.99]

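Judging by the loss after resuming, training continued from the loaded parameters stays at the same low loss level and follows the same trend as uninterrupted training, rather than starting over from the untrained level. The values are not identical batch by batch, because data_generator shuffles the training data randomly in every epoch, so the two runs see the batches in a different order. Resuming training with PaddlePaddle is therefore very simple.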