[Python] Training on MNIST with a two-hidden-layer neural network (the network must be a class; accuracy above 90% required)

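Requirements:

- Build a class by hand in Python, without high-level libraries such as PyTorch or TensorFlow. The network has two hidden layers, and the size of each hidden layer can be specified.

- Reach an accuracy above 90%.

- Plot the learning curves: the loss curve and the accuracy curve.

- Use only fully connected layers, no convolutional layers.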

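This program was written in Jupyter. It consists of three pieces of code.

The program, data included, can be downloaded directly: download link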

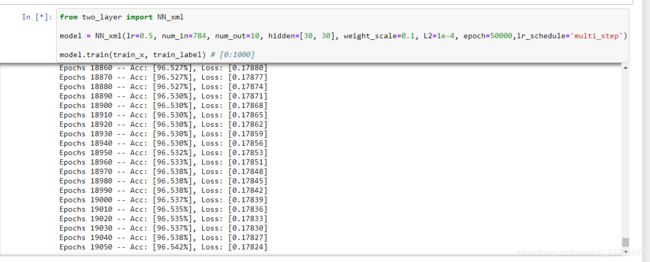

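Results.

Note: pay close attention to the hyperparameter settings (tune them carefully) and to how I set up the framework.

The code follows.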

————————————————————————————————————————

1. Main code (the self-built class)

import numpy as np

import matplotlib.pyplot as plt

class NN_xml():
    r"""Neural network with exactly two hidden layers for multi-class classification."""

    def __init__(self, lr=0.001, num_in=100, num_out=10, hidden=[3, 4], weight_scale=1e-3, L2=0.5, epoch=200, lr_schedule='decay'):
        r"""
        lr -- learning rate
        num_in -- number of input features
        num_out -- number of output classes
        hidden -- sizes of the two hidden layers, e.g. [3, 4] means 3 and 4 units, respectively
        weight_scale -- factor that scales the initial random weights
        L2 -- L2 regularization factor
        epoch -- number of training epochs (each epoch is one full-batch update)
        lr_schedule -- learning-rate schedule: 'decay' or 'multi_step'
        """
        self.lr = lr
        self.num_in = num_in
        self.num_out = num_out
        self.params = {}
        self.hidden = hidden
        self.weight_scale = weight_scale
        self.L2 = L2
        self.epoch = epoch
        self.lr_schedule = lr_schedule
        self.loss = []  # loss history, one value per epoch
        self.acc = []   # training accuracy history in percent, one value per epoch
        self.flag_init_weight = False
        if not self.flag_init_weight:
            self.init_weights()

    def init_weights(self):
        r"""Initialize the weights with scaled Gaussian noise and the biases with zeros."""
        assert self.flag_init_weight == False
        self.params['W1'] = np.random.randn(self.num_in, self.hidden[0]) * self.weight_scale
        self.params['W2'] = np.random.randn(self.hidden[0], self.hidden[1]) * self.weight_scale
        self.params['W3'] = np.random.randn(self.hidden[1], self.num_out) * self.weight_scale
        self.params['b1'] = np.zeros(self.hidden[0], )
        self.params['b2'] = np.zeros(self.hidden[1], )
        self.params['b3'] = np.zeros(self.num_out, )
        self.flag_init_weight = True

    def loss_softmax(self, x, y):
        r"""Compute the softmax cross-entropy loss and its gradient.

        x -- the output of the network (logits), shape (N, num_out)
        y -- ground-truth labels, 0 <= y[i] <= self.num_out - 1
        """
        # subtract the row-wise maximum so that exp() cannot overflow
        shifted_logits = x - np.max(x, axis=1, keepdims=True)
        Z = np.sum(np.exp(shifted_logits), axis=1, keepdims=True)
        log_probs = shifted_logits - np.log(Z)
        probs = np.exp(log_probs)
        N = x.shape[0]
        # mean negative log-likelihood of the correct class
        loss = -np.sum(log_probs[np.arange(N), y]) / N
        # gradient w.r.t. the logits: (probs - one_hot(y)) / N
        dx = probs.copy()
        dx[np.arange(N), y] -= 1
        dx /= N
        return loss, dx

    def train(self, input, y):
        r"""Update the parameters with full-batch gradient descent.

        input -- input data, shape (N, num_in)
        y -- ground-truth labels, 0 <= y[i] <= self.num_out - 1
        """
        for i in range(self.epoch):
            # forward pass: two sigmoid hidden layers, linear output layer (logits)
            hidden1_ = np.dot(input, self.params['W1']) + self.params['b1']
            hidden1 = 1 / (np.exp(-1 * hidden1_) + 1)
            hidden2_ = np.dot(hidden1, self.params['W2']) + self.params['b2']
            hidden2 = 1 / (np.exp(-1 * hidden2_) + 1)
            output = np.dot(hidden2, self.params['W3']) + self.params['b3']

            # compute the loss with L2 regularization
            loss, dout = self.loss_softmax(output, y)
            loss += 0.5 * self.L2 * (
                np.sum(np.square(self.params['W3'])) + np.sum(np.square(self.params['W2'])) + np.sum(
                    np.square(self.params['W1'])))
            self.loss.append(loss)

            # compute the training accuracy in percent
            y_pred = np.argmax(output, axis=1)
            acc = 100.0 * np.mean(y_pred == y.reshape(-1))
            self.acc.append(acc)
            # report every 10 epochs and once more after the final epoch
            if i % 10 == 0 or i == self.epoch - 1:
                print('Epochs {} -- Acc: [{:.3f}%], Loss: [{:.5f}]'.format(i, acc, loss))

            # compute gradients (backward pass); the sigmoid derivative is s * (1 - s)
            dW3 = np.dot(hidden2.T, dout)  # (hidden[1], num_out)
            db3 = np.sum(dout, axis=0)  # (num_out,)
            dhidden2 = np.dot(dout, self.params['W3'].T) * (1 - hidden2) * hidden2
            dW2 = np.dot(hidden1.T, dhidden2)  # (hidden[0], hidden[1])
            db2 = np.sum(dhidden2, axis=0)  # (hidden[1],)
            dhidden1 = np.dot(dhidden2, self.params['W2'].T) * (1 - hidden1) * hidden1
            dW1 = np.dot(input.T, dhidden1)  # (num_in, hidden[0])
            db1 = np.sum(dhidden1, axis=0)  # (hidden[0],)

            # L2 regularization term of the weight gradients
            dW3 += self.params['W3'] * self.L2
            dW2 += self.params['W2'] * self.L2
            dW1 += self.params['W1'] * self.L2

            # gradient descent update
            self.params['W3'] -= self.lr * dW3
            self.params['b3'] -= self.lr * db3
            self.params['W2'] -= self.lr * dW2
            self.params['b2'] -= self.lr * db2
            self.params['W1'] -= self.lr * dW1
            self.params['b1'] -= self.lr * db1

            # learning-rate schedule
            if self.lr_schedule == 'decay':
                self.lr = self.lr * 0.999
            elif self.lr_schedule == 'multi_step':
                if 500 <= i < 1000:
                    self.lr -= self.lr * 0.6
                elif i >= 1000:
                    self.lr = self.lr * 0.1
                # never let the learning rate drop below 0.1
                if self.lr < 0.1:
                    self.lr = 0.1

    def test(self, input, y):
        r"""Test the model and return the accuracy as a fraction in [0, 1].

        input -- input data, shape (N, num_in)
        y -- ground-truth labels, 0 <= y[i] <= self.num_out - 1
        """
        hidden1_ = np.dot(input, self.params['W1']) + self.params['b1']
        hidden1 = 1 / (np.exp(-1 * hidden1_) + 1)
        hidden2_ = np.dot(hidden1, self.params['W2']) + self.params['b2']
        hidden2 = 1 / (np.exp(-1 * hidden2_) + 1)
        # the output layer stays linear, as in train(); argmax over the logits gives the predicted class
        output = np.dot(hidden2, self.params['W3']) + self.params['b3']

        # compute the accuracy
        y_pred = np.argmax(output, axis=1)
        acc = np.mean(y_pred == y.reshape(-1))
        print('Test acc is {:.5f}'.format(acc))
        return acc

    def get_loss_history(self):
        return self.loss

    def get_acc_history(self):
        return self.acc
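Since the whole backward pass starts from the gradient returned by loss_softmax, it is worth checking that gradient against finite differences before tuning anything else. The sketch below copies the same math into a free function and compares it with a numerical gradient; `numerical_grad`, the random logits, and the tolerance are assumptions made for illustration and are not part of the original program.

```python
import numpy as np

def loss_softmax(x, y):
    """Softmax cross-entropy loss and gradient w.r.t. the logits x (same math as the method)."""
    shifted_logits = x - np.max(x, axis=1, keepdims=True)
    Z = np.sum(np.exp(shifted_logits), axis=1, keepdims=True)
    log_probs = shifted_logits - np.log(Z)
    probs = np.exp(log_probs)
    N = x.shape[0]
    loss = -np.sum(log_probs[np.arange(N), y]) / N
    dx = probs.copy()
    dx[np.arange(N), y] -= 1
    dx /= N
    return loss, dx

def numerical_grad(x, y, eps=1e-5):
    """Central finite differences of the loss w.r.t. every logit (hypothetical helper)."""
    grad = np.zeros_like(x)
    for idx in np.ndindex(*x.shape):
        old = x[idx]
        x[idx] = old + eps
        loss_plus, _ = loss_softmax(x, y)
        x[idx] = old - eps
        loss_minus, _ = loss_softmax(x, y)
        x[idx] = old  # restore the original value
        grad[idx] = (loss_plus - loss_minus) / (2 * eps)
    return grad

rng = np.random.default_rng(0)
logits = rng.normal(size=(5, 10))     # 5 samples, 10 classes
labels = rng.integers(0, 10, size=5)  # labels in 0..9
_, analytic = loss_softmax(logits, labels)
numeric = numerical_grad(logits, labels)
max_diff = np.max(np.abs(analytic - numeric))
print('max abs difference:', max_diff)
```

If the two gradients agree to within a small tolerance, the loss function is unlikely to be the cause of a poor accuracy, and attention can move to the hyperparameters.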

2. Data analysis code

import numpy as np
import csv

# read the CSV files; column 0 of each row is the label, the remaining columns are pixels
with open('mnist_train.csv', 'r') as f:
    train_content = [line for line in csv.reader(f)]
with open('mnist_test.csv', 'r') as f:
    test_content = [line for line in csv.reader(f)]

train_content = np.array(train_content, dtype=np.float32)
test_content = np.array(test_content, dtype=np.float32)

# np.int was removed in NumPy 1.24, so use np.int64 for the labels
train_label = np.array(train_content[:, 0], dtype=np.int64)
train_x = train_content[:, 1:]
test_label = np.array(test_content[:, 0], dtype=np.int64)
test_x = test_content[:, 1:]

assert train_x.shape[1] == test_x.shape[1]
print('Number of input is %d' % train_x.shape[1])
num_input = train_x.shape[1]

# shift and scale the pixel values from [0, 255] to [-0.5, 0.5]
train_x = (train_x - 255/2) / 255
test_x = (test_x - 255/2) / 255
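To make the effect of the normalization concrete, the short sketch below applies the same formula to the extreme and middle pixel values. The helper name `normalize_pixels` is an assumption introduced here for illustration; the original code applies the formula inline.

```python
import numpy as np

def normalize_pixels(x):
    """Map pixel values from [0, 255] to [-0.5, 0.5] (hypothetical helper around the formula)."""
    return (x - 255 / 2) / 255

# darkest, middle, and brightest pixel values
pixels = np.array([0, 127.5, 255], dtype=np.float32)
scaled = normalize_pixels(pixels)
print(scaled)
```

Centering the inputs around zero keeps the first-layer pre-activations small, which helps the sigmoid units avoid saturating at the start of training.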

3. Training and testing code

# the class above is imported from two_layer.py
from two_layer import NN_xml

model = NN_xml(lr=0.5, num_in=784, num_out=10, hidden=[40, 30], weight_scale=0.1, L2=1e-5, epoch=2000, lr_schedule='decay')
model.train(train_x, train_label)  # slice with [0:1000] for a quick trial run
model.test(test_x, test_label)
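With lr_schedule='decay' the class multiplies the learning rate by 0.999 after every epoch, so it helps to see how far lr=0.5 has dropped by the end of 2000 epochs. The sketch below evaluates the closed form of that schedule; `lr_at_epoch` is a hypothetical helper written for this illustration, not a method of the class.

```python
def lr_at_epoch(lr0, epoch, factor=0.999):
    """Learning rate used in a given epoch when it is multiplied by `factor` after every epoch."""
    return lr0 * factor ** epoch

# print the learning rate at a few points of the 2000-epoch run
for e in (0, 500, 1000, 2000):
    print('epoch {:4d}: lr = {:.4f}'.format(e, lr_at_epoch(0.5, e)))

final_lr = lr_at_epoch(0.5, 2000)
```

The final learning rate is below 0.07, roughly a seventh of the starting value, which is one reason the initial learning rate and the number of epochs need to be tuned together.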

import matplotlib.pyplot as plt
%matplotlib inline

# loss curve
loss = model.get_loss_history()
plt.plot(loss)
plt.title('Loss Curve')
plt.xlabel('Epochs')
plt.ylabel('Loss')
plt.show()

# accuracy curve (training accuracy in percent)
acc = model.get_acc_history()
plt.plot(acc)
plt.title('Accuracy Curve')
plt.xlabel('Epochs')
plt.ylabel('Accuracy')
plt.show()