XPath Syntax and the lxml Library

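What is XPath

XPath (XML Path Language) is a language for finding information in XML and HTML documents. It can be used to traverse the elements and attributes of an XML or HTML document.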

XPath development tools

1. Chrome extension: XPath Helper.

2. Firefox extension: Try XPath.

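XPath Syntax

Selecting nodes:

XPath uses path expressions to select nodes or node-sets in an XML document. These path expressions look very much like the paths we see in an ordinary computer file system.

| Expression | Description | Example | Result |
|---|---|---|---|
| nodename | Selects the child elements with this name, relative to the current node | store | Selects the store children of the current node |
| / | At the start of a path, selects from the root node; elsewhere, selects a child of the preceding node | /store | Selects the root element if it is named store |
| // | Selects matching descendants at any depth; at the start of a path, this means anywhere in the document | //book | Selects all book nodes in the document |
| @ | Selects an attribute of a node | //book[@price] | Selects all book nodes that have a price attribute |
| . | The current node | ./a | Selects the a children of the current node |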
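The expressions in this table can be tried directly with lxml (introduced below). A minimal sketch on a small, made-up store document; the element names and attribute values are illustrative assumptions, and `//book/@price` is added to show that `@` at the end of a path returns the attribute values themselves:

```python
from lxml import etree

# A small, made-up document used only for illustration
xml = b"""<store>
  <book price="10"><title>A</title></book>
  <book><title>B</title></book>
  <magazine><title>C</title></magazine>
</store>"""

root = etree.fromstring(xml)  # root is the <store> element

# nodename: child elements named "book" of the current node (<store>)
children = root.xpath("book")
# /store: the root element, because it is named "store"
top = root.xpath("/store")
# //title: every <title> anywhere in the document
titles = root.xpath("//title")
# //book[@price]: <book> elements that have a price attribute
priced = root.xpath("//book[@price]")
# //book/@price: the price attribute values themselves
prices = [str(p) for p in root.xpath("//book/@price")]
# ./magazine: <magazine> children of the current node
mags = root.xpath("./magazine")

print(len(children), top[0].tag, len(titles), len(priced), prices, mags[0].tag)
# prints: 2 store 3 1 ['10'] magazine
```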

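Predicates:

Predicates are used to find a specific node, or a node that contains a specified value. They are enclosed in square brackets.

The table below lists some path expressions with predicates, together with their results:

| Path expression | Description |
|---|---|
| /bookstore/book[1] | Selects the first book child of bookstore (XPath positions start at 1) |
| /bookstore/book[last()] | Selects the last book child of bookstore |
| /bookstore/book[last()-1] | Selects the second-to-last book child of bookstore |
| /bookstore/book[position() < 3] | Selects the first two book children of bookstore |
| //book[@price] | Selects all book elements that have a price attribute |
| //book[@price=10] | Selects all book elements whose price attribute equals 10 |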
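To see which book each predicate picks, here is a minimal sketch that runs the expressions from the table against a made-up bookstore (four books, titles A to D; the prices are assumptions chosen so every row has a visible effect). The helper `titles()` is hypothetical and only exists to print the result compactly:

```python
from lxml import etree

# Made-up sample: four books, two of them priced 10, one without a price
xml = """<bookstore>
  <book price="10"><title>A</title></book>
  <book price="25"><title>B</title></book>
  <book><title>C</title></book>
  <book price="10"><title>D</title></book>
</bookstore>"""

root = etree.fromstring(xml)


def titles(expr):
    """Return the title text of every <book> selected by expr (illustrative helper)."""
    return [book.findtext("title") for book in root.xpath(expr)]


print(titles("/bookstore/book[1]"))             # ['A']
print(titles("/bookstore/book[last()]"))        # ['D']
print(titles("/bookstore/book[last()-1]"))      # ['C']
print(titles("/bookstore/book[position()<3]"))  # ['A', 'B']
print(titles("//book[@price]"))                 # ['A', 'B', 'D']
print(titles("//book[@price=10]"))              # ['A', 'D']
```

In `[@price=10]`, XPath converts the attribute string to a number before comparing, so `"10"` matches and `"25"` does not.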

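Wildcards

| Wildcard | Description | Example | Result |
|---|---|---|---|
| * | Matches any element node | /bookstore/* | Selects all child elements of bookstore |
| @* | Matches any attribute of a node | //book[@*] | Selects all book elements that have at least one attribute |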
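A minimal sketch of both wildcards on a made-up bookstore (the `lang` attribute and the `<magazine>` element are assumptions for illustration). It also shows that `@*` at the end of a path returns attribute values rather than elements:

```python
from lxml import etree

# Made-up sample: one book with an attribute, one without, plus a magazine
xml = """<bookstore>
  <book lang="en"><title>A</title></book>
  <book><title>B</title></book>
  <magazine/>
</bookstore>"""

root = etree.fromstring(xml)

# *: every child element of <bookstore>, whatever its name
all_children = [el.tag for el in root.xpath("/bookstore/*")]
# [@*]: <book> elements that carry at least one attribute
with_attrs = [b.findtext("title") for b in root.xpath("//book[@*]")]
# /@*: the attribute values of every <book>
attr_values = [str(v) for v in root.xpath("//book/@*")]

print(all_children)  # ['book', 'book', 'magazine']
print(with_attrs)    # ['A']
print(attr_values)   # ['en']
```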

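Selecting multiple paths:

Using the "|" operator in a path expression lets you select several paths at once.

//bookstore/book | //book/title

Selects all book elements that are children of a bookstore element, together with all title elements that are children of a book element.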
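A minimal sketch of the union above on a made-up two-book document. The result is a single list containing nodes from both paths; it is sorted by tag here only to keep the printed output independent of node order:

```python
from lxml import etree

# Made-up sample: two books, each with a title
xml = """<bookstore>
  <book><title>A</title></book>
  <book><title>B</title></book>
</bookstore>"""

root = etree.fromstring(xml)

# The | operator merges the two node-sets into one result list
nodes = root.xpath("//bookstore/book | //book/title")
print(sorted(n.tag for n in nodes))  # ['book', 'book', 'title', 'title']
```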

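The lxml library

lxml is an HTML/XML parser. Its main job is to parse HTML/XML documents and extract data from them.

Like Python's regular-expression engine, lxml does its heavy lifting in C (it is built on the libxml2 and libxslt libraries), which makes it a high-performance HTML/XML parser for Python. With the XPath syntax covered above, we can use it to quickly locate specific elements and node information.

Basic usage

We can use lxml to parse HTML, and if the HTML is malformed, lxml automatically repairs it during parsing.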

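```python
from lxml import etree

# Note: the last <li> below is deliberately missing its closing </li> tag
text = '''
<ul>
    <li>first item</li>
    <li>second item</li>
    <li>third item</li>
    <li>fourth item</li>
    <li>fifth item
</ul>
'''

# Use etree.HTML to parse the string into an HTML document
html = etree.HTML(text)

# Serialize the HTML document back to a string (returned as bytes)
result = etree.tostring(html)

print(result)
```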

As the printed result shows, lxml automatically repairs the HTML: in this example it not only completed the li tag but also added the body and html tags.
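A compact way to confirm this repair is to inspect the parsed tree rather than the serialized string. A minimal sketch with a made-up fragment that has no `<html>`/`<body>` and leaves its second `<li>` unclosed:

```python
from lxml import etree

# Malformed, made-up fragment: no <html>/<body>, and the second <li> is never closed
fragment = "<ul><li>first item</li><li>second item</ul>"

html = etree.HTML(fragment)

print(html.tag)                                       # html
print(len(html.xpath("/html/body/ul")))               # 1: <body> was added around <ul>
print([str(t) for t in html.xpath("//li/text()")])    # ['first item', 'second item']
print(etree.tostring(html, encoding="unicode"))       # the repaired markup as a str
```

Passing `encoding="unicode"` to `etree.tostring` returns a `str` instead of bytes, which is easier to read when printing.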

Reading HTML from a file

Besides parsing a string directly, lxml can also read content from a file, using the etree.parse() method.

```python
from lxml import etree

# Read the external file hello.html
html = etree.parse('hello.html')

result = etree.tostring(html, pretty_print=True)

print(result)
```

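This prints the file's markup much as before. Note, however, that etree.parse() uses an XML parser by default, so it raises a parse error (etree.XMLSyntaxError) when it meets malformed HTML. In that case, create an HTML parser yourself and pass it in: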

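```python
from lxml import etree

# An HTML parser tolerates malformed markup; encoding tells it how to decode the file
parser = etree.HTMLParser(encoding='utf-8')

htmlElement = etree.parse("xx.html", parser=parser)

print(etree.tostring(htmlElement, encoding='utf-8').decode('utf-8'))
```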
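The difference between the two parsers can be checked with a throwaway file. A minimal sketch, assuming a made-up malformed document written to a temporary file: the default XML parser rejects it with `etree.XMLSyntaxError`, while `etree.HTMLParser` recovers both list items.

```python
import os
import tempfile

from lxml import etree

# Write a deliberately malformed HTML file (unclosed <li> tags, bare <br>)
malformed = "<html><body><ul><li>first item<li>second item</ul><br></body></html>"
fd, path = tempfile.mkstemp(suffix=".html")
with os.fdopen(fd, "w", encoding="utf-8") as f:
    f.write(malformed)

# 1) etree.parse() with its default XML parser rejects the mismatched tags
try:
    etree.parse(path)
    xml_ok = True
except etree.XMLSyntaxError:
    xml_ok = False

# 2) An explicit HTMLParser repairs the markup instead of failing
parser = etree.HTMLParser(encoding="utf-8")
tree = etree.parse(path, parser=parser)
items = tree.xpath("//li")

os.remove(path)
print(xml_ok, len(items))  # False 2
```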

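Using XPath in lxml

1. To evaluate XPath, call the Element.xpath method. For expressions that select nodes, attributes, or text, xpath() always returns a list, even when there is only one match (or none).

```python
# Every <tr> except the first one in its parent (e.g. skip a table's header row)
trs = html.xpath("//tr[position()>1]")
```

2. Getting an attribute of an element:

```python
# Get the values of the href attribute of every <a> element
href = html.xpath("//a/@href")
```

3. Getting text is done with XPath's text() function:

```python
# Text of the 4th <td> in a row tr
address = tr.xpath("./td[4]/text()")[0]
```

4. To run xpath on a particular element and get its descendants, put a dot before the slash, meaning "search from the current element". Without the dot, a path starting with / or // is evaluated from the document root, even when it is called on a sub-element.

```python
for tr in trs:
    # "./" restricts the search to the current <tr>
    address = tr.xpath("./td[4]/text()")[0]
```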
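The four points above can be checked together on a small, made-up table (one header row, two data rows; the names and links are illustrative). The sketch also shows two behaviors worth knowing: a non-matching expression gives an empty list rather than `None`, and a numeric expression such as `count()` returns a float instead of a list.

```python
from lxml import etree

# Made-up table: a header row followed by two data rows
html = etree.HTML("""
<table>
  <tr><th>name</th><th>link</th></tr>
  <tr><td>alpha</td><td><a href="/a.html">A</a></td></tr>
  <tr><td>beta</td><td><a href="/b.html">B</a></td></tr>
</table>
""")

# 1. Node-selecting expressions return lists
trs = html.xpath("//tr[position()>1]")   # skips the header row
missing = html.xpath("//div")             # no match -> empty list
count = html.xpath("count(//tr)")         # numeric expression -> float

# 2. @href returns attribute values
hrefs = [str(h) for h in html.xpath("//a/@href")]

# 3./4. text() inside a relative path that starts with "."
names = [str(tr.xpath("./td[1]/text()")[0]) for tr in trs]

# Without the leading ".", "//td" searches the whole document again
all_tds = trs[0].xpath("//td")
row_tds = trs[0].xpath(".//td")

print(len(trs), missing, count)    # 2 [] 3.0
print(hrefs, names)                # ['/a.html', '/b.html'] ['alpha', 'beta']
print(len(all_tds), len(row_tds))  # 4 2
```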

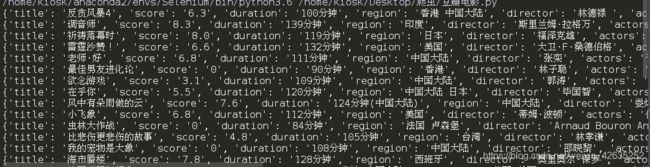

Douban Movies

```python
import requests
from lxml import etree

headers = {
    'User-Agent': 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/59.0.3071.109 Safari/537.36',
    'Referer': 'https://movie.douban.com/'
}

# requests looks up proxies by the lowercase URL scheme ('http'/'https');
# replace these addresses with working proxies or drop the proxies argument
proxy = {
    'http': 'http://218.87.192.132:9999',
    'https': 'http://163.204.242.235:9999'
}

url = 'https://movie.douban.com/cinema/nowplaying/xian/'
response = requests.get(url, headers=headers, proxies=proxy)
text = response.text

html = etree.HTML(text)
# Take the first <ul class="lists"> on the page
ul = html.xpath("//ul[@class='lists']")[0]
lis = ul.xpath("./li")

movies = []
for li in lis:
    # Each <li> stores the movie's fields in data-* attributes
    title = li.xpath("@data-title")[0]
    score = li.xpath("@data-score")[0]
    duration = li.xpath("@data-duration")[0]
    region = li.xpath("@data-region")[0]
    director = li.xpath("@data-director")[0]
    actors = li.xpath("@data-actors")[0]
    # The poster image is nested somewhere inside the <li>
    thumbnail = li.xpath(".//img/@src")[0]
    movie = {
        'title': title,
        'score': score,
        'duration': duration,
        'region': region,
        'director': director,
        'actors': actors,
        'thumbnail': thumbnail,
    }
    movies.append(movie)

for movie in movies:
    print(movie)
```
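To test the parsing loop without hitting the network (and without depending on Douban's current markup), the same XPath can run against a hand-written snippet. The HTML below is made up to mimic the structure the scraper assumes: a `<ul class="lists">` whose `<li>` elements carry data-* attributes and contain an `<img>`. As a swapped-in variation, this sketch reads attributes with the element's `get()` method, which returns `None` for a missing attribute instead of raising `IndexError` the way `xpath("@...")[0]` does:

```python
from lxml import etree

# Hypothetical snippet shaped like the markup the scraper above expects
SAMPLE = """
<ul class="lists">
  <li data-title="Movie A" data-score="8.1" data-duration="120min"
      data-region="Region A" data-director="Director A" data-actors="X / Y">
    <a href="#"><img src="https://example.com/a.jpg"></a>
  </li>
  <li data-title="Movie B" data-score="0" data-duration="95min"
      data-region="Region B" data-director="Director B" data-actors="Z">
    <a href="#"><img src="https://example.com/b.jpg"></a>
  </li>
</ul>
"""

FIELDS = ["title", "score", "duration", "region", "director", "actors"]


def parse_movies(text):
    """Extract one dict per <li>, reading data-* attributes and the poster URL."""
    html = etree.HTML(text)
    ul = html.xpath("//ul[@class='lists']")[0]
    movies = []
    for li in ul.xpath("./li"):
        # li.get() returns the attribute value, or None if it is absent
        movie = {field: li.get("data-" + field) for field in FIELDS}
        thumbs = li.xpath(".//img/@src")
        movie["thumbnail"] = str(thumbs[0]) if thumbs else None
        movies.append(movie)
    return movies


movies = parse_movies(SAMPLE)
for movie in movies:
    print(movie)
```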

Movie Heaven (dytt8.net)

```python
import requests
from lxml import etree

headers = {
    'User-Agent': 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) '
                  'Chrome/59.0.3071.109 Safari/537.36',
    'Referer': 'https://www.dytt8.net/html/gndy/dyzz/index.html'
}

# requests looks up proxies by the lowercase URL scheme ('http'/'https');
# replace these addresses with working proxies or drop the proxies argument
proxy = {
    'http': 'http://218.87.192.132:9999',
    'https': 'http://163.204.242.235:9999'
}

base_url = 'https://www.dytt8.net'


def get_detail_urls(url):
    """Return the absolute URLs of all movie detail pages on one list page."""
    response = requests.get(url, headers=headers, proxies=proxy)
    html = etree.HTML(response.text)
    detail_urls = html.xpath("//table[@class='tbspan']//a/@href")
    return [base_url + detail_url for detail_url in detail_urls]


def parse_info(info, rule):
    """Remove the "◎..." label from a line and trim the whitespace."""
    return info.replace(rule, "").strip()


def parse_detail_page(url):
    movie = {}
    response = requests.get(url, headers=headers, proxies=proxy)
    # The site is GBK-encoded; skip the occasional byte that cannot be decoded
    text = response.content.decode('gbk', errors='ignore')
    html = etree.HTML(text)

    title = html.xpath("//div[@class='title_all']//font[@color='#07519a']/text()")[0]
    movie['title'] = title

    zoomE = html.xpath("//div[@id='Zoom']")[0]
    imgs = zoomE.xpath(".//img/@src")
    # The first image is the cover; the second, if present, is a screenshot
    movie['cover'] = imgs[0]
    movie['screenshot'] = imgs[1] if len(imgs) > 1 else None

    infos = zoomE.xpath(".//text()")
    for index, info in enumerate(infos):
        if info.startswith("◎年 代"):
            movie['year'] = parse_info(info, "◎年 代")
        elif info.startswith("◎产 地"):
            movie['country'] = parse_info(info, "◎产 地")
        elif info.startswith("◎类 别"):
            movie['category'] = parse_info(info, "◎类 别")
        elif info.startswith("◎豆瓣评分"):
            movie['douban_rating'] = parse_info(info, "◎豆瓣评分")
        elif info.startswith("◎片 长"):
            movie['duration'] = parse_info(info, "◎片 长")
        elif info.startswith("◎导 演"):
            movie['director'] = parse_info(info, "◎导 演")
        elif info.startswith("◎主 演"):
            # The first actor shares the label's line; the others follow,
            # one per line, until the next "◎" label
            actors = [parse_info(info, "◎主 演")]
            for x in range(index + 1, len(infos)):
                actor = infos[x].strip()
                if actor.startswith("◎"):
                    break
                actors.append(actor)
            movie['actors'] = actors
        elif info.startswith("◎简 介"):
            # The synopsis is the first non-empty text after the label
            for x in range(index + 1, len(infos)):
                profile = infos[x].strip()
                if profile:
                    movie['profile'] = profile
                    break

    download_url = html.xpath("//td[@bgcolor='#fdfddf']/a/@href")[0]
    movie['download_url'] = download_url
    return movie


def spider():
    list_url = "http://dytt8.net/html/gndy/dyzz/list_23_{}.html"
    movies = []
    # Outer loop: walk the 7 list pages
    for x in range(1, 8):
        url = list_url.format(x)
        detail_urls = get_detail_urls(url)
        # Inner loop: visit every movie detail URL on the current page
        for detail_url in detail_urls:
            movie = parse_detail_page(detail_url)
            movies.append(movie)
            print(movie)


if __name__ == '__main__':
    spider()
```
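The long if/elif chain in parse_detail_page() repeats the same pattern for every field. One alternative is a table-driven version, sketched below and run against a hand-made list of text nodes. The sample lines are hypothetical, and the labels are copied exactly as written in the scraper above; the live site may use different spacing inside the labels. Unlike the original, this sketch also skips empty lines in the actor list:

```python
# Map each "◎" label (as written in the scraper above) to a key in the movie dict
LABELS = {
    "◎年 代": "year",
    "◎产 地": "country",
    "◎类 别": "category",
    "◎豆瓣评分": "douban_rating",
    "◎片 长": "duration",
    "◎导 演": "director",
}
ACTORS_LABEL = "◎主 演"


def parse_infos(infos):
    """Turn the text nodes of the #Zoom block into a dict of movie fields."""
    movie = {}
    lines = [info.strip() for info in infos]
    for index, line in enumerate(lines):
        if line.startswith(ACTORS_LABEL):
            # First actor is on the label line; the rest follow until the next "◎"
            actors = [line[len(ACTORS_LABEL):].strip()]
            for rest in lines[index + 1:]:
                if rest.startswith("◎"):
                    break
                if rest:
                    actors.append(rest)
            movie["actors"] = actors
            continue
        for label, key in LABELS.items():
            if line.startswith(label):
                movie[key] = line[len(label):].strip()
                break
    return movie


# Hypothetical text nodes, for illustration only
sample = [
    "◎年 代 2019",
    "◎产 地 USA",
    "◎豆瓣评分 7.5/10 from 1000 users",
    "◎主 演 Actor One",
    "      Actor Two",
    "",
    "◎简 介",
]
print(parse_infos(sample))
```

Adding a new field then only means adding one entry to `LABELS`.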

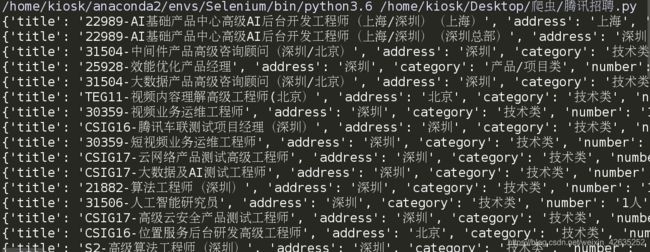

Tencent Careers

```python
import requests
from lxml import etree

headers = {
    'User-Agent': 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/59.0.3071.109 Safari/537.36',
    'Referer': 'https://hr.tencent.com/'
}

# requests looks up proxies by the lowercase URL scheme ('http'/'https');
# replace these addresses with working proxies or drop the proxies argument
proxy = {
    'http': 'http://218.87.192.132:9999',
    'https': 'http://163.204.242.235:9999'
}

url = 'https://hr.tencent.com/position.php?lid=&tid=&keywords=python&start={}#a'
base_url = 'https://hr.tencent.com/'


def get_detail_urls(url):
    """Return the absolute URLs of the job detail pages on one result page."""
    response = requests.get(url, headers=headers, proxies=proxy)
    html = etree.HTML(response.text)
    detail_urls = html.xpath("//tr//a[@target='_blank']/@href")
    return [base_url + detail_url for detail_url in detail_urls]


def parse_detail_page(url):
    position = {}
    response = requests.get(url, headers=headers, proxies=proxy)
    html = etree.HTML(response.content.decode('utf-8'))
    # The first four rows: title, basic info, responsibilities, requirements
    tr = html.xpath("//tr[position()<5]")

    position['title'] = tr[0].xpath("./td/text()")[0]

    tr_1 = tr[1].xpath("./td/text()")  # e.g. ['上海', '技术类', '4人'] (location, category, headcount)
    position['address'] = tr_1[0]
    position['category'] = tr_1[1]
    position['number'] = tr_1[2]

    position['responsibilities'] = tr[2].xpath(".//ul/li/text()")
    position['requirements'] = tr[3].xpath(".//ul/li/text()")
    return position


def spider():
    positions = []
    # 7 result pages; the start parameter advances by 10 per page
    for i in range(7):
        detail_urls = get_detail_urls(url.format(i * 10))
        for detail_url in detail_urls:
            position = parse_detail_page(detail_url)
            positions.append(position)
            print(position)


if __name__ == '__main__':
    spider()
```
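Two parts of this scraper can be checked offline. First, building URLs with `base_url + href` produces a double slash whenever href already starts with "/"; `urllib.parse.urljoin` from the standard library (swapped in here, not used in the original) handles both relative and absolute-path hrefs. Second, the row-by-row extraction can be exercised on a hand-written table whose layout is assumed to match the comments above. The detail path `position_detail.php?id=1` and all table contents are hypothetical:

```python
from urllib.parse import urljoin

from lxml import etree

base_url = 'https://hr.tencent.com/'

# urljoin gives the same result whether or not the href starts with "/"
print(urljoin(base_url, "/position_detail.php?id=1"))
print(urljoin(base_url, "position_detail.php?id=1"))

# Hypothetical detail table: title row, info row, two <ul> lists
SAMPLE = """
<table>
  <tr><td>Python Engineer</td></tr>
  <tr><td>Shanghai</td><td>Technology</td><td>4</td></tr>
  <tr><td><ul><li>Build services</li><li>Write tests</li></ul></td></tr>
  <tr><td><ul><li>Python</li><li>SQL</li></ul></td></tr>
</table>
"""


def parse_position(text):
    """Same XPath as parse_detail_page(), applied to an HTML string."""
    html = etree.HTML(text)
    tr = html.xpath("//tr[position()<5]")
    address, category, number = [str(s) for s in tr[1].xpath("./td/text()")[:3]]
    return {
        "title": str(tr[0].xpath("./td/text()")[0]),
        "address": address,
        "category": category,
        "number": number,
        "responsibilities": [str(s) for s in tr[2].xpath(".//ul/li/text()")],
        "requirements": [str(s) for s in tr[3].xpath(".//ul/li/text()")],
    }


position = parse_position(SAMPLE)
print(position)
```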