Android Camera Framework Study Notes (6): takePicture (ZSL) Flow Analysis

Note: this post is still a set of study notes on the Android 5.1 API1 code.

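Table of contents

- 0. Registering frame listeners

- 1. The CaptureSequencer thread registers a frame listener

- 2. The ZslProcessor3 thread registers a frame listener

- 1. Finding the most suitable buffer for a ZSL capture

- 2. Setting the ZSL input buffer and the JPEG output buffer

- 3. What happens when the JPEG buffer is returned

- 4. Saving the ZSL buffer

- 5. Getting the captured JPEG buffer

- 6. Capture frame available callback

- 7. Calling back the JPEG buffer to the app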

If you are interested, join QQ group 85486140 so we can discuss and learn from each other!

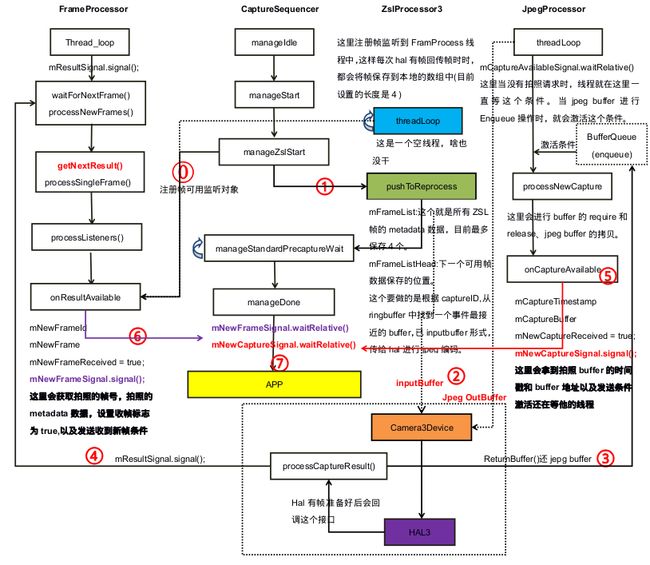

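This note analyzes the ZSL flow. ZSL (zero shutter lag) means that a picture can be taken without stopping the preview. Because it gives a noticeably better user experience, most phones today ship with this feature.

Rather than pasting large amounts of code to describe the process, let's go straight to the diagram. It took two hours to draw and lays out the basic ZSL flow in Android 5.1. Five threads are closely involved in ZSL: FrameProcessor, CaptureSequencer, ZslProcessor3, JpegProcessor, and Camera3Device::RequestThread. There is also a main thread that updates parameters. From reading the Android 5.1 code, the ZSL process breaks down roughly into the 7 steps below.

Correction: after the FrameProcessor thread in the top-left corner of the diagram starts, it blocks in waitForNextFrame on mResultSignal.waitRelative(). The diagram has not been updated to reflect this.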

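0. Registering frame listeners

1. The CaptureSequencer thread registers a frame listener

- 1. When it is registered

When the upper layer issues a ZSL capture request, the lower layer triggers the capture state machine. The basic flow of that state machine was laid out in the previous note, so it is not repeated here. Because Camera2Client and the other processing-thread objects form a roughly pyramid-shaped architecture, the frame listener is registered through the Camera2Client object into the mRangeListeners List held by the FrameProcessor object.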

```cpp
CaptureSequencer::CaptureState CaptureSequencer::manageZslStart(
        sp<Camera2Client> &client) {
    ALOGV("%s", __FUNCTION__);
    status_t res;
    sp<ZslProcessorInterface> processor = mZslProcessor.promote();

    // We don't want to get partial results for ZSL capture.
    client->registerFrameListener(mCaptureId, mCaptureId + 1,
            this,
            /*sendPartials*/false);

    // TODO: Actually select the right thing here.
    res = processor->pushToReprocess(mCaptureId);
    //.......
}
```

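Pay special attention: when the frame listener is registered, the two arguments passed in are mCaptureId and mCaptureId + 1. Why? Because they mark which frame we want to capture. When the capture buffer comes up from the HAL, Camera3Device calls back the frame listener and the timestamp of the capture frame is obtained. The most suitable inputBuffer is then looked up in the ZSL RingBuffer by timestamp and sent down to the HAL for JPEG encoding. Compare this with the request IDs used by the ZSL thread below and it should become clear.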
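
To make the role of the two IDs more concrete, here is a minimal, self-contained sketch of range-based listener dispatch. It is not the AOSP code: `ListenerRegistry`, `RangeListener` and `dispatch` are hypothetical names, and treating the range as the half-open interval `[minId, maxId)` is my reading of how the match is done.

```cpp
#include <cassert>
#include <cstdint>
#include <functional>
#include <utility>
#include <vector>

// Hypothetical stand-in for one entry in the frame processor's listener list.
struct RangeListener {
    int32_t minId;                          // first request ID of interest (inclusive)
    int32_t maxId;                          // end of the range (exclusive, assumed)
    std::function<void(int32_t)> onResult;  // called with the matching request ID
};

class ListenerRegistry {
public:
    void registerListener(int32_t minId, int32_t maxId,
                          std::function<void(int32_t)> cb) {
        assert(minId < maxId);  // an empty range would never match anything
        mListeners.push_back({minId, maxId, std::move(cb)});
    }

    // Deliver a result to every listener whose range contains requestId.
    // Returns how many listeners were notified.
    int dispatch(int32_t requestId) const {
        int notified = 0;
        for (const RangeListener &l : mListeners) {
            if (l.minId <= requestId && requestId < l.maxId) {
                l.onResult(requestId);
                ++notified;
            }
        }
        return notified;
    }

private:
    std::vector<RangeListener> mListeners;
};
```

Under that assumption, registering `(captureId, captureId + 1)` selects exactly one request ID, while a wide range such as the preview range matches every preview result.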

- 2. When the result is captured

```cpp
void CaptureSequencer::onResultAvailable(const CaptureResult &result) {
    ATRACE_CALL();
    ALOGV("%s: New result available.", __FUNCTION__);
    Mutex::Autolock l(mInputMutex);
    mNewFrameId = result.mResultExtras.requestId;
    mNewFrame = result.mMetadata;
    if (!mNewFrameReceived) {
        mNewFrameReceived = true;
        mNewFrameSignal.signal();
    }
}
```

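The above is the callback registered by the capture state machine. When the ZSL capture frame arrives, it wakes the waiting CaptureSequencer thread so that it can carry on with the subsequent steps.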
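
The flag-plus-signal pattern used by this callback can be sketched with the standard library. This is only an illustration under my own assumptions: `FrameWaiter` and `waitForFrame` are hypothetical names, and `std::condition_variable` stands in for the framework's `Condition` class.

```cpp
#include <cassert>
#include <chrono>
#include <condition_variable>
#include <cstdint>
#include <mutex>

// Hypothetical, simplified mirror of the mNewFrameReceived / mNewFrameSignal pair.
class FrameWaiter {
public:
    // Called from the result-delivery thread, like onResultAvailable().
    void onResultAvailable(int32_t requestId) {
        std::lock_guard<std::mutex> lock(mMutex);
        mNewFrameId = requestId;
        if (!mNewFrameReceived) {
            mNewFrameReceived = true;
            mSignal.notify_one();
        }
    }

    // Called from the state-machine thread. Returns true and stores the
    // request ID if a frame arrived within the timeout, false otherwise.
    bool waitForFrame(std::chrono::milliseconds timeout, int32_t *outId) {
        assert(outId != nullptr);
        std::unique_lock<std::mutex> lock(mMutex);
        // The predicate covers spurious wakeups and the case where the
        // signal fired before this thread started waiting.
        if (!mSignal.wait_for(lock, timeout,
                              [this] { return mNewFrameReceived; })) {
            return false;
        }
        mNewFrameReceived = false;  // consume the event
        *outId = mNewFrameId;
        return true;
    }

private:
    std::mutex mMutex;
    std::condition_variable mSignal;
    bool mNewFrameReceived = false;
    int32_t mNewFrameId = -1;
};
```

The boolean flag matters as much as the signal: without it, a result that arrives before the waiter blocks would be lost.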

2. The ZslProcessor3 thread registers a frame listener

- 1. When it is registered

```cpp
status_t ZslProcessor3::updateStream(const Parameters &params) {
    // (declarations of res, device and client are omitted in this excerpt)
    if (mZslStreamId == NO_STREAM) {
        // Create stream for HAL production
        // TODO: Sort out better way to select resolution for ZSL
        // Note that format specified internally in Camera3ZslStream
        res = device->createZslStream(
                params.fastInfo.arrayWidth, params.fastInfo.arrayHeight,
                mBufferQueueDepth,
                &mZslStreamId,
                &mZslStream);

        // Only add the camera3 buffer listener when the stream is created.
        // This callback is registered on the BufferQueue; it can be ignored for now.
        mZslStream->addBufferListener(this);
    }

    client->registerFrameListener(Camera2Client::kPreviewRequestIdStart,
            Camera2Client::kPreviewRequestIdEnd,
            this,
            /*sendPartials*/false);

    return OK;
}
```

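The above is the function called when the ZSL stream is updated. It uses registerFrameListener to register the listener that is notified when a RingBuffer entry becomes available. Pay particular attention to the two constants below. This range of request IDs is reserved for preview; recording and still capture have request ID ranges of their own. Each time the parameters are updated the request ID is incremented by 1, and without a parameter update it stays the same. You can observe this on your own debug phone.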

```cpp
static const int32_t kPreviewRequestIdStart = 10000000;
static const int32_t kPreviewRequestIdEnd = 20000000;
```
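
As a rough illustration of how such a reserved range behaves, the sketch below increments an ID on each parameter update and keeps it inside the range. `PreviewRequestIds` is a hypothetical helper, and wrapping back to the start when the end is reached is an assumption on my part, not something shown in the excerpt above.

```cpp
#include <cassert>
#include <cstdint>

// Values quoted in the text above.
static const int32_t kPreviewRequestIdStart = 10000000;
static const int32_t kPreviewRequestIdEnd = 20000000;

// Hypothetical helper that hands out the request ID used for preview requests.
class PreviewRequestIds {
public:
    int32_t current() const { return mId; }

    // Call when parameters change. The wrap keeps the ID inside
    // [kPreviewRequestIdStart, kPreviewRequestIdEnd) (wrap is assumed).
    int32_t onParametersUpdated() {
        ++mId;
        if (mId >= kPreviewRequestIdEnd) mId = kPreviewRequestIdStart;
        assert(isPreviewId(mId));
        return mId;
    }

    // True if the ID falls in the range reserved for preview.
    static bool isPreviewId(int32_t id) {
        return id >= kPreviewRequestIdStart && id < kPreviewRequestIdEnd;
    }

private:
    int32_t mId = kPreviewRequestIdStart;
};
```

Reading `current()` repeatedly without a parameter update returns the same ID, which matches the behaviour described above.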

- 2. When the result is captured

```cpp
void ZslProcessor3::onResultAvailable(const CaptureResult &result) {
    ATRACE_CALL();
    ALOGV("%s:", __FUNCTION__);
    Mutex::Autolock l(mInputMutex);
    camera_metadata_ro_entry_t entry;
    entry = result.mMetadata.find(ANDROID_SENSOR_TIMESTAMP);
    nsecs_t timestamp = entry.data.i64[0];

    entry = result.mMetadata.find(ANDROID_REQUEST_FRAME_COUNT);
    int32_t frameNumber = entry.data.i32[0];

    // Corresponding buffer has been cleared. No need to push into mFrameList
    if (timestamp <= mLatestClearedBufferTimestamp) return;

    mFrameList.editItemAt(mFrameListHead) = result.mMetadata;
    mFrameListHead = (mFrameListHead + 1) % mFrameListDepth;
}
```

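The error-checking code has been removed from the listing above. Because the listener is registered with the two request ID bounds below, this method is called back whenever any ZSL preview buffer becomes available. Once the queue is full, a new entry overwrites the oldest one. As the code shows, mFrameList stores the metadata of each frame, and mFrameListHead marks the position where the next entry will be stored.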

```cpp
static const int32_t kPreviewRequestIdStart = 10000000;
static const int32_t kPreviewRequestIdEnd = 20000000;
```
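
The overwrite-when-full behaviour of mFrameList can be sketched as a small ring buffer. `FrameMeta` and `MetadataRing` are hypothetical names of mine, and the per-frame metadata is reduced to a timestamp and a frame number for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical stand-in for one frame's result metadata.
struct FrameMeta {
    int64_t timestampNs;  // 0 marks an empty slot in this sketch
    int32_t frameNumber;
};

class MetadataRing {
public:
    explicit MetadataRing(size_t depth) : mFrames(depth) {
        assert(depth > 0);
    }

    // Store a frame at the head. Once the ring is full, this overwrites
    // the oldest slot.
    void push(const FrameMeta &meta) {
        // Skip results whose buffer has already been cleared.
        if (meta.timestampNs <= mLatestClearedTimestampNs) return;
        mFrames[mHead] = meta;
        mHead = (mHead + 1) % mFrames.size();
    }

    void markCleared(int64_t timestampNs) {
        mLatestClearedTimestampNs = timestampNs;
    }

    const std::vector<FrameMeta> &frames() const { return mFrames; }
    size_t head() const { return mHead; }

private:
    std::vector<FrameMeta> mFrames;  // value-initialized, so all slots start empty
    size_t mHead = 0;                // where the next frame will be stored
    int64_t mLatestClearedTimestampNs = 0;
};
```

With a depth of 4, the fifth push lands in slot 0 again, which is the "new overwrites old" behaviour described above.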

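1. Finding the most suitable buffer for a ZSL capture

At first I thought the ZSL capture buffer was located by the captureId of the frame we want to capture. It now looks as though the buffer with the closest timestamp is simply picked for JPEG encoding (and the Google engineers say as much in the source comments).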

```cpp
status_t ZslProcessor3::pushToReprocess(int32_t requestId) {
    ALOGV("%s: Send in reprocess request with id %d",
            __FUNCTION__, requestId);
    Mutex::Autolock l(mInputMutex);
    status_t res;
    sp<Camera2Client> client = mClient.promote();

    // Pick the candidate frame from mFrameList by timestamp.
    size_t metadataIdx;
    nsecs_t candidateTimestamp = getCandidateTimestampLocked(&metadataIdx);

    // Using the timestamp found above, look up the buffer with the closest
    // timestamp in the ZSL BufferQueue and store it in mInputBufferQueue.
    res = mZslStream->enqueueInputBufferByTimestamp(candidateTimestamp,
            /*actualTimestamp*/NULL);
    //-----------------
    {
        // Get the metadata of the frame to reprocess; it is passed to the
        // HAL later for encoding.
        CameraMetadata request = mFrameList[metadataIdx];

        // Verify that the frame is reasonable for reprocessing
        camera_metadata_entry_t entry;
        entry = request.find(ANDROID_CONTROL_AE_STATE);
        if (entry.data.u8[0] != ANDROID_CONTROL_AE_STATE_CONVERGED &&
                entry.data.u8[0] != ANDROID_CONTROL_AE_STATE_LOCKED) {
            ALOGV("%s: ZSL queue frame AE state is %d, need full capture",
                    __FUNCTION__, entry.data.u8[0]);
            return NOT_ENOUGH_DATA;
        }

        // The code elided here updates the input stream ID, sets the capture
        // intent to still capture, checks whether AE is stable for this frame,
        // gets the JPEG stream ID and writes it into the metadata, updates the
        // request ID, and finally updates the JPEG metadata from the updated
        // request metadata. The last step starts the capture in Camera3Device.

        // Update post-processing settings
        res = updateRequestWithDefaultStillRequest(request);
        mLatestCapturedRequest = request;
        res = client->getCameraDevice()->capture(request);
        mState = LOCKED;
    }
    return OK;
}
```

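Remember the frame listener that was registered when the state machine was started? The requestId parameter here is the request ID of the picture we want to capture. As far as I can tell, this request ID is later written into the metadata. For now it is enough to know what the function does:

- 1. Find the metadata with the smallest timestamp in mFrameList.

- 2. Using the timestamp obtained in step 1, pick the buffer with the closest timestamp from the ZSL BufferQueue.

- 3. Put that buffer into mInputBufferQueue, update the metadata for JPEG encoding, and start the capture.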
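
The first two steps can be sketched as two small search functions. This is a simplified illustration, not the framework code: `ZslFrame`, `AeState`, `findCandidate` and `findClosestBuffer` are hypothetical names, and requiring AE to be converged or locked during candidate selection is my assumption, carried over from the AE check shown in pushToReprocess.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

enum AeState { AE_SEARCHING, AE_CONVERGED, AE_LOCKED };  // simplified

struct ZslFrame {
    int64_t timestampNs;  // 0 means "empty slot" in this sketch
    AeState aeState;
};

// Step 1: index of the usable frame with the smallest timestamp, or -1.
// "Usable" means the slot is filled and AE has converged or is locked.
int findCandidate(const std::vector<ZslFrame> &frames) {
    int best = -1;
    for (size_t i = 0; i < frames.size(); ++i) {
        const ZslFrame &f = frames[i];
        if (f.timestampNs == 0) continue;
        if (f.aeState != AE_CONVERGED && f.aeState != AE_LOCKED) continue;
        if (best < 0 || f.timestampNs < frames[best].timestampNs) {
            best = static_cast<int>(i);
        }
    }
    return best;
}

// Step 2: index of the buffer whose timestamp is closest to targetNs, or -1
// if there are no buffers.
int findClosestBuffer(const std::vector<int64_t> &bufferTimestamps,
                      int64_t targetNs) {
    assert(targetNs > 0);  // 0 is reserved for "empty" in this sketch
    int best = -1;
    int64_t bestDelta = 0;
    for (size_t i = 0; i < bufferTimestamps.size(); ++i) {
        int64_t delta = bufferTimestamps[i] - targetNs;
        if (delta < 0) delta = -delta;
        if (best < 0 || delta < bestDelta) {
            best = static_cast<int>(i);
            bestDelta = delta;
        }
    }
    return best;
}
```

Matching by closest timestamp rather than exact equality tolerates small differences between the metadata timestamp and the buffer timestamp.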

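2. Setting the ZSL input buffer and the JPEG output buffer

This step has in fact already been discussed. The inputBuffer is the buffer that the ZslProcessor3 thread found to be most suitable for JPEG encoding. The outputBuffer is the buffer maintained by the JpegProcessor thread, and it holds the JPEG image once the HAL has encoded it. The JPEG output buffer is prepared in the code below: the ID of the output stream is stored in the metadata, and Camera3Device then uses that metadata entry to add the outputBuffer to the request sent to the HAL.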

```cpp
status_t ZslProcessor3::pushToReprocess(int32_t requestId) {
    // TODO: Shouldn't we also update the latest preview frame?
    int32_t outputStreams[1] =
            { client->getCaptureStreamId() };
    res = request.update(ANDROID_REQUEST_OUTPUT_STREAMS,
            outputStreams, 1);
}
```

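3. What happens when the JPEG buffer is returned

Once the framework has passed the ZSL inputBuffer and the JPEG outputBuffer to the HAL, the HAL starts the STILL_CAPTURE flow and runs the image data in the inputBuffer through a series of post-processing steps. When post-processing is finished, the HAL copies the temporary buffer into the outputBuffer (note: remember to flush the buffer here, otherwise green stripes may appear in the picture).

The JPEG buffer is also dequeued from a BufferQueue, and a frame listener (the onFrameAvailable() callback) was registered when that BufferQueue was created. So whenever a frame becomes available (that is, whenever a buffer is enqueued), onFrameAvailable() is called back, and returning the JPEG buffer from the HAL is exactly such an enqueue() operation. onFrameAvailable() wakes the JpegProcessor thread for further processing, which in the end wakes the CaptureSequencer state-machine thread.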
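
The "enqueue triggers the listener" relationship can be illustrated with a toy queue. `TinyBufferQueue` and its methods are hypothetical and far simpler than the real BufferQueue; buffers are represented only by their timestamps.

```cpp
#include <cassert>
#include <cstdint>
#include <deque>
#include <functional>
#include <utility>

// Hypothetical miniature of a producer/consumer buffer queue.
class TinyBufferQueue {
public:
    using FrameAvailableListener = std::function<void()>;

    void setFrameAvailableListener(FrameAvailableListener l) {
        mListener = std::move(l);
    }

    // Producer side: handing back a filled buffer is what notifies the consumer.
    void queueBuffer(int64_t timestampNs) {
        mQueued.push_back(timestampNs);
        if (mListener) mListener();
    }

    // Consumer side: take the oldest filled buffer.
    int64_t acquireBuffer() {
        assert(!mQueued.empty());
        int64_t ts = mQueued.front();
        mQueued.pop_front();
        return ts;
    }

    bool hasBuffer() const { return !mQueued.empty(); }

private:
    std::deque<int64_t> mQueued;
    FrameAvailableListener mListener;
};
```

In this picture the HAL plays the producer that calls `queueBuffer`, and the listener is where a consumer thread such as the JPEG processor would be woken.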

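4. Saving the ZSL buffer

Because ZSL buffers keep coming up from the HAL, each time one arrives the FrameProcessor thread is woken to save it. The framework currently defaults to 4 buffers, so once the queue is full the oldest buffer is overwritten, and the cycle repeats.

5. Getting the captured JPEG buffer

When a JPEG frame comes up from the HAL, the JpegProcessor thread is woken and takes the JPEG buffer from the BufferQueue. The lockNextBuffer and unlockBuffer calls below do this.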

```cpp
status_t JpegProcessor::processNewCapture() {
    res = mCaptureConsumer->lockNextBuffer(&imgBuffer);
    mCaptureConsumer->unlockBuffer(imgBuffer);
    sp<CaptureSequencer> sequencer = mSequencer.promote();
    //......
    if (sequencer != 0) {
        sequencer->onCaptureAvailable(imgBuffer.timestamp, captureBuffer);
    }
}
```

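As shown above, the CaptureSequencer's onCaptureAvailable() callback is invoked at the end. Its main job is to hand the timestamp and the JPEG buffer over to the CaptureSequencer thread and then wake that thread. The buffer is finally called back to the application layer.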

```cpp
void CaptureSequencer::onCaptureAvailable(nsecs_t timestamp,
        sp<MemoryBase> captureBuffer) {
    ATRACE_CALL();
    ALOGV("%s", __FUNCTION__);
    Mutex::Autolock l(mInputMutex);
    mCaptureTimestamp = timestamp;
    mCaptureBuffer = captureBuffer;
    if (!mNewCaptureReceived) {
        mNewCaptureReceived = true;
        mNewCaptureSignal.signal();
    }
}
```

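6. Capture frame available callback

When the capture frame's result returns to the framework, CaptureSequencer's onResultAvailable() is called back. It sets the state machine's flag and signals its condition variable, as the code below shows. The state machine method manageStandardCaptureWait() shows how the condition variable and the flag are used.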

```cpp
void CaptureSequencer::onResultAvailable(const CaptureResult &result) {
    ATRACE_CALL();
    ALOGV("%s: New result available.", __FUNCTION__);
    Mutex::Autolock l(mInputMutex);
    mNewFrameId = result.mResultExtras.requestId;
    mNewFrame = result.mMetadata;
    if (!mNewFrameReceived) {
        mNewFrameReceived = true;
        mNewFrameSignal.signal();
    }
}
```

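7. Calling back the JPEG buffer to the app

The callback is a proxy object registered by the application. The code below sends the JPEG buffer to the app through a binder inter-process call. Note that msgType = CAMERA_MSG_COMPRESSED_IMAGE here, which tells the upper layer that this is compressed image data.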

```cpp
CaptureSequencer::CaptureState CaptureSequencer::manageDone(
        sp<Camera2Client> &client) {
    status_t res = OK;
    ATRACE_CALL();
    mCaptureId++;
    // ......
    Camera2Client::SharedCameraCallbacks::Lock
            l(client->mSharedCameraCallbacks);
    ALOGV("%s: Sending still image to client", __FUNCTION__);
    if (l.mRemoteCallback != 0) {
        l.mRemoteCallback->dataCallback(CAMERA_MSG_COMPRESSED_IMAGE,
                mCaptureBuffer, NULL);
    } else {
        ALOGV("%s: No client!", __FUNCTION__);
    }
    // ......
}
```
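
On the receiving side, the message type is what lets a callback tell a JPEG apart from other data. The sketch below is a hypothetical receiver, not the real client interface: `StillImageReceiver` is an invented name, and the two flag values are quoted from memory of the camera message-type header, so treat them as assumptions.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Subset of the message-type bit flags (values assumed, see the note above).
enum : int32_t {
    CAMERA_MSG_RAW_IMAGE = 0x0080,
    CAMERA_MSG_COMPRESSED_IMAGE = 0x0100,
};

// Hypothetical receiver standing in for the app-side callback proxy.
struct StillImageReceiver {
    std::vector<uint8_t> jpeg;  // last compressed image received
    int ignored = 0;            // count of messages of other types

    void dataCallback(int32_t msgType, const uint8_t *data, size_t size) {
        if (msgType == CAMERA_MSG_COMPRESSED_IMAGE) {
            assert(data != nullptr || size == 0);
            jpeg.assign(data, data + size);  // keep a copy of the JPEG bytes
        } else {
            ++ignored;
        }
    }
};
```

Only a message tagged as a compressed image is stored as a JPEG here; everything else is counted and dropped.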