Kubernetes High-Availability Monitoring: Deploying Thanos

Originally published by the Kubernetes Chinese Community (kubernetes中文社区) as the author's own translation.


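Contents

- Introduction

- The need for Prometheus high availability

- Implementation

- Thanos architecture

- Deduplication

- Setting up Thanos

- Prerequisites

- Deploying the components

  - Deploy the ServiceAccount, ClusterRole, and ClusterRoleBinding for Prometheus

  - Deploy the Prometheus configuration (configmap.yaml)

  - Deploy the prometheus-rules ConfigMap

  - Deploy the Prometheus StatefulSet

  - Deploy the Prometheus Services

  - Deploy Thanos Querier

  - Deploy Thanos Store Gateway

  - Deploy Thanos Ruler

  - Deploy Alertmanager

  - Deploy kube-state-metrics

  - Deploy the Node-Exporter DaemonSet

  - Deploy Grafana

  - Deploy the Ingress object

- Conclusion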

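Introduction

The need for Prometheus high availability

Kubernetes adoption has grown many times over in the past few months, and it is now clear that Kubernetes is the de facto standard for container orchestration.

At the same time, monitoring is an essential part of any infrastructure. Prometheus is considered an excellent choice for monitoring both containerized and non-containerized workloads. The monitoring system itself should be highly available and highly scalable so that it can keep up with a growing infrastructure, especially one built on Kubernetes.

So today we will deploy a Prometheus cluster that not only tolerates node failures but also archives its data for future reference. The setup is also scalable enough to span multiple Kubernetes clusters within the same monitoring system.

Current approach

Most Prometheus deployments use pods with persistent storage and scale Prometheus through federation. However, not all data can be aggregated through federation, and you often need a mechanism to manage the Prometheus configuration when you add more servers.

The solution

Thanos aims to solve these problems. With Thanos we can not only run multiple Prometheus instances and deduplicate their data, but also archive the data in long-term storage such as GCS or S3.

Implementation

Thanos architecture

Thanos consists of the following components: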

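- Thanos Sidecar: the main component, which runs alongside Prometheus. It reads Prometheus data and archives it to the object store. It also manages Prometheus's configuration and lifecycle. To distinguish each Prometheus instance, the sidecar injects external labels into the Prometheus configuration. The sidecar can run queries against the Prometheus server's PromQL interface. It also listens on the Thanos gRPC protocol and translates between gRPC and REST queries.

- Thanos Store: implements the Store API on top of the historical data in the object store. It acts primarily as an API gateway and therefore does not need a large amount of local disk space. It joins the Thanos cluster on startup and advertises the data it can access. It keeps a small amount of information about all remote blocks on local disk and keeps it in sync with the object store. This data is generally safe to delete across restarts, at the cost of a longer startup time.

- Thanos Query: the query component, which listens on HTTP and translates queries to the Thanos gRPC format. It aggregates query results from different sources and can read data from both Sidecar and Store. In a high-availability setup, it also deduplicates the results.

Deduplication

Prometheus is stateful and does not allow its database to be replicated. This means that simply running multiple Prometheus replicas is not the best way to achieve high availability.

Simple load balancing does not work either. For example, after a crash a replica may be up again, but querying it will show a small gap for the period it was down. A second replica may have been up during that period, but it could be down at another moment (for example, during a rolling restart), so load balancing across the replicas will not give correct results.

Thanos Querier instead pulls the data from both replicas and deduplicates those signals, transparently filling any gaps for the Querier's consumers.

- Thanos Compact: the compactor component of Thanos. It applies the compaction procedure of the Prometheus 2.0 storage engine to the block data stored in the object store. It is generally deployed as a singleton. It is also responsible for downsampling the data: 5m downsampling after 40 hours and 1h downsampling after 10 days.

- Thanos Ruler: does essentially the same job as Prometheus rules. The only difference is that it can communicate with the Thanos components.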
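The replica deduplication that Thanos Querier performs can be illustrated with a small sketch. This is a simplified model, not Thanos's actual algorithm: it assumes both replicas scrape at identical timestamps (real replicas do not), and the function name `dedup_replicas` and the sample data are made up for illustration.

```python
from typing import Dict, List, Tuple

Sample = Tuple[int, float]  # (timestamp in seconds, value)


def dedup_replicas(series_by_replica: Dict[str, List[Sample]]) -> List[Sample]:
    """Merge the same series from several HA replicas into one series.

    Simplification: samples are keyed by timestamp and the first value
    seen for a timestamp wins. The real Querier logic is more involved.
    """
    merged: Dict[int, float] = {}
    for replica in sorted(series_by_replica):
        for timestamp, value in series_by_replica[replica]:
            merged.setdefault(timestamp, value)
    return sorted(merged.items())


# Hypothetical data: each replica missed one scrape at a different time.
replica_a = [(0, 1.0), (15, 2.0), (45, 4.0)]  # gap at t=30
replica_b = [(0, 1.0), (30, 3.0), (45, 4.0)]  # gap at t=15

result = dedup_replicas({"prometheus-0": replica_a, "prometheus-1": replica_b})
print(result)
```

Neither replica alone has a complete series, but the merged result has no gap, which is the effect the Querier gives its consumers.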

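Setting up Thanos

Prerequisites

To follow this tutorial in full, you will need:

- A working knowledge of Kubernetes and of kubectl

- A running Kubernetes cluster with at least 3 nodes (this demo uses a GKE cluster)

- An Ingress controller and Ingress objects (this demo uses the Nginx Ingress Controller). This is not mandatory, but it is highly recommended because it reduces the number of external endpoints you have to create.

- Credentials that the Thanos components use to access the object store (GCS buckets in this case):

  - Create 2 GCS buckets and name them prometheus-long-term and thanos-ruler

  - Create a service account with the "Storage Object Admin" role

  - Download the key file in JSON format and name it thanos-gcs-credentials.json

  - Create a Kubernetes secret from the credentials (the monitoring namespace must already exist): kubectl create secret generic thanos-gcs-credentials --from-file=thanos-gcs-credentials.json -n monitoring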
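To make the last prerequisite less opaque, here is a rough sketch of the kind of Secret object that the `kubectl create secret generic ... --from-file=...` command produces: the file name becomes a key and the file content is stored base64-encoded. The helper name `build_secret_manifest` and the placeholder key content are assumptions for illustration; a real service account key has many more fields.

```python
import base64
import json


def build_secret_manifest(name: str, namespace: str, filename: str, content: bytes) -> dict:
    """Build a generic (Opaque) Secret with one key named after the file."""
    return {
        "apiVersion": "v1",
        "kind": "Secret",
        "metadata": {"name": name, "namespace": namespace},
        "type": "Opaque",
        # Secret data values are base64-encoded strings.
        "data": {filename: base64.b64encode(content).decode("ascii")},
    }


# Placeholder standing in for the downloaded JSON key file (not a real key).
placeholder_key = json.dumps({"type": "service_account"}).encode("utf-8")

manifest = build_secret_manifest(
    "thanos-gcs-credentials",
    "monitoring",
    "thanos-gcs-credentials.json",
    placeholder_key,
)
print(json.dumps(manifest, indent=2))
```

When this secret is mounted at /etc/secret, the key name becomes the file name, which is why the manifests later point GOOGLE_APPLICATION_CREDENTIALS at /etc/secret/thanos-gcs-credentials.json.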

组件部署

Deploy the ServiceAccount, ClusterRole, and ClusterRoleBinding for Prometheus

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: monitoring

namespace: monitoring

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRole

metadata:

name: monitoring

namespace: monitoring

rules:

- apiGroups: [""]

resources:

- nodes

- nodes/proxy

- services

- endpoints

- pods

verbs: ["get", "list", "watch"]

- apiGroups: [""]

resources:

- configmaps

verbs: ["get"]

- nonResourceURLs: ["/metrics"]

verbs: ["get"]

---

apiVersion: rbac.authorization.k8s.io/v1beta1

kind: ClusterRoleBinding

metadata:

name: monitoring

subjects:

- kind: ServiceAccount

name: monitoring

namespace: monitoring

roleRef:

kind: ClusterRole

name: monitoring

apiGroup: rbac.authorization.k8s.io

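---

The manifest above creates the monitoring namespace, together with the ServiceAccount, ClusterRole, and ClusterRoleBinding that Prometheus needs.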

Deploy the Prometheus configuration (configmap.yaml)

apiVersion: v1

kind: ConfigMap

metadata:

name: prometheus-server-conf

labels:

name: prometheus-server-conf

namespace: monitoring

data:

prometheus.yaml.tmpl: |-

global:

scrape_interval: 5s

evaluation_interval: 5s

external_labels:

cluster: prometheus-ha

# Each Prometheus has to have unique labels.

replica: $(POD_NAME)

rule_files:

- /etc/prometheus/rules/*rules.yaml

alerting:

# We want our alerts to be deduplicated

# from different replicas.

alert_relabel_configs:

- regex: replica

action: labeldrop

alertmanagers:

- scheme: http

path_prefix: /

static_configs:

- targets: ['alertmanager:9093']

scrape_configs:

- job_name: kubernetes-nodes-cadvisor

scrape_interval: 10s

scrape_timeout: 10s

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

kubernetes_sd_configs:

- role: node

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_node_label_(.+)

# Only for Kubernetes ^1.7.3.

# See: https://github.com/prometheus/prometheus/issues/2916

- target_label: __address__

replacement: kubernetes.default.svc:443

- source_labels: [__meta_kubernetes_node_name]

regex: (.+)

target_label: __metrics_path__

replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor

metric_relabel_configs:

- action: replace

source_labels: [id]

regex: '^/machine\.slice/machine-rkt\\x2d([^\\]+)\\.+/([^/]+)\.service$'

target_label: rkt_container_name

replacement: '${2}-${1}'

- action: replace

source_labels: [id]

regex: '^/system\.slice/(.+)\.service$'

target_label: systemd_service_name

replacement: '${1}'

- job_name: 'kubernetes-pods'

kubernetes_sd_configs:

- role: pod

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_pod_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_pod_name]

action: replace

target_label: kubernetes_pod_name

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scheme]

action: replace

target_label: __scheme__

regex: (https?)

- source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]

action: replace

target_label: __address__

regex: ([^:]+)(?::\d+)?;(\d+)

replacement: $1:$2

- job_name: 'kubernetes-apiservers'

kubernetes_sd_configs:

- role: endpoints

scheme: https

tls_config:

ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token

relabel_configs:

- source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]

action: keep

regex: default;kubernetes;https

- job_name: 'kubernetes-service-endpoints'

kubernetes_sd_configs:

- role: endpoints

relabel_configs:

- action: labelmap

regex: __meta_kubernetes_service_label_(.+)

- source_labels: [__meta_kubernetes_namespace]

action: replace

target_label: kubernetes_namespace

- source_labels: [__meta_kubernetes_service_name]

action: replace

target_label: kubernetes_name

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]

action: keep

regex: true

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]

action: replace

target_label: __scheme__

regex: (https?)

- source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]

action: replace

target_label: __metrics_path__

regex: (.+)

- source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]

action: replace

target_label: __address__

regex: (.+)(?::\d+);(\d+)

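replacement: $1:$2

The ConfigMap above creates the Prometheus configuration file template. The Thanos sidecar reads this template and generates the actual configuration file, which is then consumed by the Prometheus container running in the same pod.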

It is important to include the external_labels section in the configuration file so that the Querier can deduplicate data based on it.
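The template-to-config step can be sketched as a plain text substitution. This is only a model of the idea: the function `substitute_env` is hypothetical, and leaving unknown variables untouched is an assumption of this sketch, not a statement about how the sidecar behaves.

```python
import re


def substitute_env(template: str, env: dict) -> str:
    """Replace every $(NAME) placeholder with env[NAME].

    Assumption of this sketch: placeholders without a matching
    variable are left unchanged.
    """
    def replace(match: "re.Match") -> str:
        return env.get(match.group(1), match.group(0))

    return re.sub(r"\$\(([A-Za-z_][A-Za-z0-9_]*)\)", replace, template)


template = (
    "global:\n"
    "  external_labels:\n"
    "    cluster: prometheus-ha\n"
    "    replica: $(POD_NAME)\n"
)

# One rendered config per StatefulSet pod; POD_NAME comes from metadata.name.
rendered = [substitute_env(template, {"POD_NAME": f"prometheus-{i}"}) for i in range(3)]
print(rendered[1])
```

Because each pod has a different name, each rendered file carries a different replica label, while the cluster label stays the same. That pair of labels is what lets the Querier recognize the three instances as replicas of one another.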

Deploy the prometheus-rules ConfigMap

This creates our alert rules, which are relayed to Alertmanager for delivery.

apiVersion: v1

kind: ConfigMap

metadata:

name: prometheus-rules

labels:

name: prometheus-rules

namespace: monitoring

data:

alert-rules.yaml: |-

groups:

- name: Deployment

rules:

- alert: Deployment at 0 Replicas

annotations:

summary: Deployment {{$labels.deployment}} in {{$labels.namespace}} is currently having no pods running

expr: |

sum(kube_deployment_status_replicas{pod_template_hash=""}) by (deployment,namespace) < 1

for: 1m

labels:

team: devops

- alert: HPA Scaling Limited

annotations:

summary: HPA named {{$labels.hpa}} in {{$labels.namespace}} namespace has reached scaling limited state

expr: |

(sum(kube_hpa_status_condition{condition="ScalingLimited",status="true"}) by (hpa,namespace)) == 1

for: 1m

labels:

team: devops

- alert: HPA at MaxCapacity

annotations:

summary: HPA named {{$labels.hpa}} in {{$labels.namespace}} namespace is running at Max Capacity

expr: |

((sum(kube_hpa_spec_max_replicas) by (hpa,namespace)) - (sum(kube_hpa_status_current_replicas) by (hpa,namespace))) == 0

for: 1m

labels:

team: devops

- name: Pods

rules:

- alert: Container restarted

annotations:

summary: Container named {{$labels.container}} in {{$labels.pod}} in {{$labels.namespace}} was restarted

expr: |

sum(increase(kube_pod_container_status_restarts_total{namespace!="kube-system",pod_template_hash=""}[1m])) by (pod,namespace,container) > 0

for: 0m

labels:

team: dev

- alert: High Memory Usage of Container

annotations:

summary: Container named {{$labels.container}} in {{$labels.pod}} in {{$labels.namespace}} is using more than 75% of Memory Limit

expr: |

((( sum(container_memory_usage_bytes{image!="",container_name!="POD", namespace!="kube-system"}) by (namespace,container_name,pod_name) / sum(container_spec_memory_limit_bytes{image!="",container_name!="POD",namespace!="kube-system"}) by (namespace,container_name,pod_name) ) * 100 ) < +Inf ) > 75

for: 5m

labels:

team: dev

- alert: High CPU Usage of Container

annotations:

summary: Container named {{$labels.container}} in {{$labels.pod}} in {{$labels.namespace}} is using more than 75% of CPU Limit

expr: |

((sum(irate(container_cpu_usage_seconds_total{image!="",container_name!="POD", namespace!="kube-system"}[30s])) by (namespace,container_name,pod_name) / sum(container_spec_cpu_quota{image!="",container_name!="POD", namespace!="kube-system"} / container_spec_cpu_period{image!="",container_name!="POD", namespace!="kube-system"}) by (namespace,container_name,pod_name) ) * 100) > 75

for: 5m

labels:

team: dev

- name: Nodes

rules:

- alert: High Node Memory Usage

annotations:

summary: Node {{$labels.kubernetes_io_hostname}} has more than 80% memory used. Plan Capacity.

expr: |

(sum (container_memory_working_set_bytes{id="/",container_name!="POD"}) by (kubernetes_io_hostname) / sum (machine_memory_bytes{}) by (kubernetes_io_hostname) * 100) > 80

for: 5m

labels:

team: devops

- alert: High Node CPU Usage

annotations:

summary: Node {{$labels.kubernetes_io_hostname}} has more than 80% allocatable cpu used. Plan Capacity.

expr: |

(sum(rate(container_cpu_usage_seconds_total{id="/", container_name!="POD"}[1m])) by (kubernetes_io_hostname) / sum(machine_cpu_cores) by (kubernetes_io_hostname) * 100) > 80

for: 5m

labels:

team: devops

- alert: High Node Disk Usage

annotations:

summary: Node {{$labels.kubernetes_io_hostname}} has more than 85% disk used. Plan Capacity.

expr: |

(sum(container_fs_usage_bytes{device=~"^/dev/[sv]d[a-z][1-9]$",id="/",container_name!="POD"}) by (kubernetes_io_hostname) / sum(container_fs_limit_bytes{container_name!="POD",device=~"^/dev/[sv]d[a-z][1-9]$",id="/"}) by (kubernetes_io_hostname)) * 100 > 85

for: 5m

labels:

team: devops

Deploy the Prometheus StatefulSet

apiVersion: storage.k8s.io/v1beta1

kind: StorageClass

metadata:

name: fast

namespace: monitoring

provisioner: kubernetes.io/gce-pd

allowVolumeExpansion: true

---

apiVersion: apps/v1beta1

kind: StatefulSet

metadata:

name: prometheus

namespace: monitoring

spec:

replicas: 3

serviceName: prometheus-service

template:

metadata:

labels:

app: prometheus

thanos-store-api: "true"

spec:

serviceAccountName: monitoring

containers:

- name: prometheus

image: prom/prometheus:v2.4.3

args:

- "--config.file=/etc/prometheus-shared/prometheus.yaml"

- "--storage.tsdb.path=/prometheus/"

- "--web.enable-lifecycle"

- "--storage.tsdb.no-lockfile"

- "--storage.tsdb.min-block-duration=2h"

- "--storage.tsdb.max-block-duration=2h"

ports:

- name: prometheus

containerPort: 9090

volumeMounts:

- name: prometheus-storage

mountPath: /prometheus/

- name: prometheus-config-shared

mountPath: /etc/prometheus-shared/

- name: prometheus-rules

mountPath: /etc/prometheus/rules

- name: thanos

image: quay.io/thanos/thanos:v0.8.0

args:

- "sidecar"

- "--log.level=debug"

- "--tsdb.path=/prometheus"

- "--prometheus.url=http://127.0.0.1:9090"

- "--objstore.config={type: GCS, config: {bucket: prometheus-long-term}}"

- "--reloader.config-file=/etc/prometheus/prometheus.yaml.tmpl"

- "--reloader.config-envsubst-file=/etc/prometheus-shared/prometheus.yaml"

- "--reloader.rule-dir=/etc/prometheus/rules/"

env:

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name : GOOGLE_APPLICATION_CREDENTIALS

value: /etc/secret/thanos-gcs-credentials.json

ports:

- name: http-sidecar

containerPort: 10902

- name: grpc

containerPort: 10901

livenessProbe:

httpGet:

port: 10902

path: /-/healthy

readinessProbe:

httpGet:

port: 10902

path: /-/ready

volumeMounts:

- name: prometheus-storage

mountPath: /prometheus

- name: prometheus-config-shared

mountPath: /etc/prometheus-shared/

- name: prometheus-config

mountPath: /etc/prometheus

- name: prometheus-rules

mountPath: /etc/prometheus/rules

- name: thanos-gcs-credentials

mountPath: /etc/secret

readOnly: false

securityContext:

fsGroup: 2000

runAsNonRoot: true

runAsUser: 1000

volumes:

- name: prometheus-config

configMap:

defaultMode: 420

name: prometheus-server-conf

- name: prometheus-config-shared

emptyDir: {}

- name: prometheus-rules

configMap:

name: prometheus-rules

- name: thanos-gcs-credentials

secret:

secretName: thanos-gcs-credentials

volumeClaimTemplates:

- metadata:

name: prometheus-storage

namespace: monitoring

spec:

accessModes: [ "ReadWriteOnce" ]

storageClassName: fast

resources:

requests:

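storage: 20Gi

It is important to understand the following about the manifest above:

- Prometheus is deployed as a StatefulSet with 3 replicas, and each replica dynamically provisions its own persistent volume.

- The Thanos sidecar container generates the Prometheus configuration from the template file we created above.

- Thanos handles data compaction, so we set --storage.tsdb.min-block-duration=2h and --storage.tsdb.max-block-duration=2h. Setting both to the same value keeps Prometheus from compacting blocks locally.

- The Prometheus StatefulSet pods are labeled thanos-store-api: "true" so that each pod is discovered by the headless service, which we create in the Service manifests below. Thanos Querier uses this headless service to query data across all Prometheus instances. We apply the same label (thanos-store-api: "true") to the Thanos Store and Thanos Ruler components so that the Querier discovers them too and can use them for querying metrics.

- The path to the GCS credentials is provided through the GOOGLE_APPLICATION_CREDENTIALS environment variable. The credentials file is mounted from the secret we created as part of the prerequisites.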
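The discovery mechanism described in the list above comes down to equality-based label matching. The sketch below models it with plain dictionaries. The pod names mirror the workloads in this article, except `thanos-querier-example`, which is a made-up name, and the `matches` helper is hypothetical.

```python
def matches(selector: dict, labels: dict) -> bool:
    """Equality-based selector: every selector pair must be present in labels."""
    return all(labels.get(key) == value for key, value in selector.items())


# Pod labels as set in the manifests of this article.
pods = {
    "prometheus-0": {"app": "prometheus", "thanos-store-api": "true"},
    "thanos-store-gateway-0": {"app": "thanos-store-gateway", "thanos-store-api": "true"},
    "thanos-ruler-0": {"app": "thanos-ruler", "thanos-store-api": "true"},
    "thanos-querier-example": {"app": "thanos-querier"},  # hypothetical pod name
    "grafana-0": {"task": "monitoring", "k8s-app": "grafana"},
}

# Selector of the headless thanos-store-gateway Service.
selector = {"thanos-store-api": "true"}

selected = sorted(name for name, labels in pods.items() if matches(selector, labels))
print(selected)
```

Only pods that carry the shared label end up behind the headless service, regardless of which workload they belong to, so adding a new Store API component to the Querier is a matter of labeling its pods.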

Deploy the Prometheus Services

apiVersion: v1

kind: Service

metadata:

name: prometheus-0-service

annotations:

prometheus.io/scrape: "true"

prometheus.io/port: "9090"

namespace: monitoring

labels:

name: prometheus

spec:

selector:

statefulset.kubernetes.io/pod-name: prometheus-0

ports:

- name: prometheus

port: 8080

targetPort: prometheus

---

apiVersion: v1

kind: Service

metadata:

name: prometheus-1-service

annotations:

prometheus.io/scrape: "true"

prometheus.io/port: "9090"

namespace: monitoring

labels:

name: prometheus

spec:

selector:

statefulset.kubernetes.io/pod-name: prometheus-1

ports:

- name: prometheus

port: 8080

targetPort: prometheus

---

apiVersion: v1

kind: Service

metadata:

name: prometheus-2-service

annotations:

prometheus.io/scrape: "true"

prometheus.io/port: "9090"

namespace: monitoring

labels:

name: prometheus

spec:

selector:

statefulset.kubernetes.io/pod-name: prometheus-2

ports:

- name: prometheus

port: 8080

targetPort: prometheus

---

#This service creates a srv record for querier to find about store-api's

apiVersion: v1

kind: Service

metadata:

name: thanos-store-gateway

namespace: monitoring

spec:

type: ClusterIP

clusterIP: None

ports:

- name: grpc

port: 10901

targetPort: grpc

selector:

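thanos-store-api: "true"

We create a separate Service for each Prometheus pod in the StatefulSet. These are not strictly necessary; they exist only for debugging purposes. The purpose of the headless service named thanos-store-gateway was explained above. We will expose the Prometheus services later using an Ingress object.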
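Since the three per-pod Services differ only in the pod ordinal, they could also be generated rather than written by hand. The following is one possible sketch, not part of the original setup: the helper `per_pod_service` is hypothetical, and it emits JSON, which kubectl accepts in place of YAML.

```python
import json


def per_pod_service(statefulset: str, ordinal: int) -> dict:
    """Build the debugging Service for one StatefulSet pod.

    StatefulSet pods are named <statefulset>-<ordinal> and carry the
    statefulset.kubernetes.io/pod-name label, which the selector uses.
    """
    pod_name = f"{statefulset}-{ordinal}"
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": f"{pod_name}-service",
            "namespace": "monitoring",
            "annotations": {
                "prometheus.io/scrape": "true",
                "prometheus.io/port": "9090",
            },
            "labels": {"name": "prometheus"},
        },
        "spec": {
            "selector": {"statefulset.kubernetes.io/pod-name": pod_name},
            "ports": [{"name": "prometheus", "port": 8080, "targetPort": "prometheus"}],
        },
    }


services = [per_pod_service("prometheus", i) for i in range(3)]
for service in services:
    print(json.dumps(service))
```

Each generated object selects exactly one pod, which is what makes these Services useful for inspecting a single replica.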

Deploy Thanos Querier

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: thanos-querier

namespace: monitoring

labels:

app: thanos-querier

spec:

replicas: 1

selector:

matchLabels:

app: thanos-querier

template:

metadata:

labels:

app: thanos-querier

spec:

containers:

- name: thanos

image: quay.io/thanos/thanos:v0.8.0

args:

- query

- --log.level=debug

- --query.replica-label=replica

- --store=dnssrv+thanos-store-gateway:10901

ports:

- name: http

containerPort: 10902

- name: grpc

containerPort: 10901

livenessProbe:

httpGet:

port: http

path: /-/healthy

readinessProbe:

httpGet:

port: http

path: /-/ready

---

apiVersion: v1

kind: Service

metadata:

labels:

app: thanos-querier

name: thanos-querier

namespace: monitoring

spec:

ports:

- port: 9090

protocol: TCP

targetPort: http

name: http

selector:

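app: thanos-querier

Thanos Querier is one of the main components of a Thanos deployment. Note the following:

- The container argument --store=dnssrv+thanos-store-gateway:10901 helps discover all the components from which metric data should be queried.

- The thanos-querier service provides a web interface for running PromQL queries. It can also optionally deduplicate data across multiple Prometheus clusters.

- Thanos Querier is also the data source for all dashboards, such as Grafana.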
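Because the Querier exposes a Prometheus-compatible HTTP API, a client inside the cluster can query it much like a Prometheus server. The sketch below only builds the request URL and does not send it. It assumes the `thanos-querier` Service on port 9090 from the manifest above and the `dedup` query parameter of the Thanos query API; check both against the Thanos version you run. The helper `build_query_url` is hypothetical.

```python
from urllib.parse import parse_qs, urlencode, urlsplit


def build_query_url(base_url: str, promql: str, dedup: bool = True) -> str:
    """Build an instant-query URL for a Prometheus-compatible /api/v1/query endpoint."""
    params = {"query": promql, "dedup": "true" if dedup else "false"}
    return f"{base_url}/api/v1/query?{urlencode(params)}"


url = build_query_url("http://thanos-querier:9090", 'up{cluster="prometheus-ha"}')
print(url)

# Round-trip check: decoding the query string gives back the original inputs.
decoded = parse_qs(urlsplit(url).query)
print(decoded)
```

Setting `dedup` to false in this sketch corresponds to asking for the raw per-replica series, which can help when debugging a single Prometheus instance.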

Deploy Thanos Store Gateway

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

---

apiVersion: apps/v1beta1

kind: StatefulSet

metadata:

name: thanos-store-gateway

namespace: monitoring

labels:

app: thanos-store-gateway

spec:

replicas: 1

selector:

matchLabels:

app: thanos-store-gateway

serviceName: thanos-store-gateway

template:

metadata:

labels:

app: thanos-store-gateway

thanos-store-api: "true"

spec:

containers:

- name: thanos

image: quay.io/thanos/thanos:v0.8.0

args:

- "store"

- "--log.level=debug"

- "--data-dir=/data"

- "--objstore.config={type: GCS, config: {bucket: prometheus-long-term}}"

- "--index-cache-size=500MB"

- "--chunk-pool-size=500MB"

env:

- name : GOOGLE_APPLICATION_CREDENTIALS

value: /etc/secret/thanos-gcs-credentials.json

ports:

- name: http

containerPort: 10902

- name: grpc

containerPort: 10901

livenessProbe:

httpGet:

port: 10902

path: /-/healthy

readinessProbe:

httpGet:

port: 10902

path: /-/ready

volumeMounts:

- name: thanos-gcs-credentials

mountPath: /etc/secret

readOnly: false

volumes:

- name: thanos-gcs-credentials

secret:

secretName: thanos-gcs-credentials

---

This creates the store component, which serves metrics from the object store to the Querier.

Deploy Thanos Ruler

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

---

apiVersion: v1

kind: ConfigMap

metadata:

name: thanos-ruler-rules

namespace: monitoring

data:

alert_down_services.rules.yaml: |

groups:

- name: metamonitoring

rules:

- alert: PrometheusReplicaDown

annotations:

message: Prometheus replica in cluster {{$labels.cluster}} has disappeared from Prometheus target discovery.

expr: |

sum(up{cluster="prometheus-ha", instance=~".*:9090", job="kubernetes-service-endpoints"}) by (job,cluster) < 3

for: 15s

labels:

severity: critical

---

apiVersion: apps/v1beta1

kind: StatefulSet

metadata:

labels:

app: thanos-ruler

name: thanos-ruler

namespace: monitoring

spec:

replicas: 1

selector:

matchLabels:

app: thanos-ruler

serviceName: thanos-ruler

template:

metadata:

labels:

app: thanos-ruler

thanos-store-api: "true"

spec:

containers:

- name: thanos

image: quay.io/thanos/thanos:v0.8.0

args:

- rule

- --log.level=debug

- --data-dir=/data

- --eval-interval=15s

- --rule-file=/etc/thanos-ruler/*.rules.yaml

- --alertmanagers.url=http://alertmanager:9093

- --query=thanos-querier:9090

- "--objstore.config={type: GCS, config: {bucket: thanos-ruler}}"

- --label=ruler_cluster="prometheus-ha"

- --label=replica="$(POD_NAME)"

env:

- name : GOOGLE_APPLICATION_CREDENTIALS

value: /etc/secret/thanos-gcs-credentials.json

- name: POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

ports:

- name: http

containerPort: 10902

- name: grpc

containerPort: 10901

livenessProbe:

httpGet:

port: http

path: /-/healthy

readinessProbe:

httpGet:

port: http

path: /-/ready

volumeMounts:

- mountPath: /etc/thanos-ruler

name: config

- name: thanos-gcs-credentials

mountPath: /etc/secret

readOnly: false

volumes:

- configMap:

name: thanos-ruler-rules

name: config

- name: thanos-gcs-credentials

secret:

secretName: thanos-gcs-credentials

---

apiVersion: v1

kind: Service

metadata:

labels:

app: thanos-ruler

name: thanos-ruler

namespace: monitoring

spec:

ports:

- port: 9090

protocol: TCP

targetPort: http

name: http

selector:

app: thanos-ruler

Now, if you start an interactive shell in the same namespace as our workloads, you can check which pods thanos-store-gateway resolves to:

root@my-shell-95cb5df57-4q6w8:/# nslookup thanos-store-gateway

Server: 10.63.240.10

Address: 10.63.240.10#53

Name: thanos-store-gateway.monitoring.svc.cluster.local

Address: 10.60.25.2

Name: thanos-store-gateway.monitoring.svc.cluster.local

Address: 10.60.25.4

Name: thanos-store-gateway.monitoring.svc.cluster.local

Address: 10.60.30.2

Name: thanos-store-gateway.monitoring.svc.cluster.local

Address: 10.60.30.8

Name: thanos-store-gateway.monitoring.svc.cluster.local

Address: 10.60.31.2

root@my-shell-95cb5df57-4q6w8:/# exit

The IPs returned above correspond to our Prometheus pods, thanos-store, and thanos-ruler.

You can verify this with the following command:

$ kubectl get pods -o wide -l thanos-store-api="true"

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

prometheus-0 2/2 Running 0 100m 10.60.31.2 gke-demo-1-pool-1-649cbe02-jdnv

prometheus-1 2/2 Running 0 14h 10.60.30.2 gke-demo-1-pool-1-7533d618-kxkd

prometheus-2 2/2 Running 0 31h 10.60.25.2 gke-demo-1-pool-1-4e9889dd-27gc

thanos-ruler-0 1/1 Running 0 100m 10.60.30.8 gke-demo-1-pool-1-7533d618-kxkd

thanos-store-gateway-0 1/1 Running 0 14h 10.60.25.4 gke-demo-1-pool-1-4e9889dd-27gc

Deploy Alertmanager

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

---

kind: ConfigMap

apiVersion: v1

metadata:

name: alertmanager

namespace: monitoring

data:

config.yml: |-

global:

resolve_timeout: 5m

slack_api_url: ""

victorops_api_url: ""

templates:

- '/etc/alertmanager-templates/*.tmpl'

route:

group_by: ['alertname', 'cluster', 'service']

group_wait: 10s

group_interval: 1m

repeat_interval: 5m

receiver: default

routes:

- match:

team: devops

receiver: devops

continue: true

- match:

team: dev

receiver: dev

continue: true

receivers:

- name: 'default'

- name: 'devops'

victorops_configs:

- api_key: ''

routing_key: 'devops'

message_type: 'CRITICAL'

entity_display_name: '{{ .CommonLabels.alertname }}'

state_message: 'Alert: {{ .CommonLabels.alertname }}. Summary:{{ .CommonAnnotations.summary }}. RawData: {{ .CommonLabels }}'

slack_configs:

- channel: '#k8-alerts'

send_resolved: true

- name: 'dev'

victorops_configs:

- api_key: ''

routing_key: 'dev'

message_type: 'CRITICAL'

entity_display_name: '{{ .CommonLabels.alertname }}'

state_message: 'Alert: {{ .CommonLabels.alertname }}. Summary:{{ .CommonAnnotations.summary }}. RawData: {{ .CommonLabels }}'

slack_configs:

- channel: '#k8-alerts'

send_resolved: true

---

apiVersion: extensions/v1beta1

kind: Deployment

metadata:

name: alertmanager

namespace: monitoring

spec:

replicas: 1

selector:

matchLabels:

app: alertmanager

template:

metadata:

name: alertmanager

labels:

app: alertmanager

spec:

containers:

- name: alertmanager

image: prom/alertmanager:v0.15.3

args:

- '--config.file=/etc/alertmanager/config.yml'

- '--storage.path=/alertmanager'

ports:

- name: alertmanager

containerPort: 9093

volumeMounts:

- name: config-volume

mountPath: /etc/alertmanager

- name: alertmanager

mountPath: /alertmanager

volumes:

- name: config-volume

configMap:

name: alertmanager

- name: alertmanager

emptyDir: {}

---

apiVersion: v1

kind: Service

metadata:

annotations:

prometheus.io/scrape: 'true'

prometheus.io/path: '/metrics'

labels:

name: alertmanager

name: alertmanager

namespace: monitoring

spec:

selector:

app: alertmanager

ports:

- name: alertmanager

protocol: TCP

port: 9093

targetPort: 9093

This creates our Alertmanager deployment, which delivers all the alerts generated according to the Prometheus rules.
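How the route section of the Alertmanager configuration picks a receiver can be sketched as follows. This is a simplified model of a single routing level with equality matchers only; the function `route_alert` is hypothetical, and it ignores grouping, timing, and nested routes.

```python
def route_alert(labels: dict, routes: list, default_receiver: str) -> list:
    """Return the receivers an alert is sent to.

    Child routes are checked in order. A matching route stops the search
    unless it sets continue: true. If no child matches, the alert falls
    back to the receiver of the top-level route.
    """
    receivers = []
    for route in routes:
        if all(labels.get(k) == v for k, v in route["match"].items()):
            receivers.append(route["receiver"])
            if not route.get("continue", False):
                break
    return receivers or [default_receiver]


# The two child routes from the ConfigMap above.
routes = [
    {"match": {"team": "devops"}, "receiver": "devops", "continue": True},
    {"match": {"team": "dev"}, "receiver": "dev", "continue": True},
]

print(route_alert({"team": "devops"}, routes, "default"))
print(route_alert({"team": "dev"}, routes, "default"))
print(route_alert({"severity": "critical"}, routes, "default"))
```

The third call is worth noting: an alert that carries no team label, such as one labeled only with severity, ends up at the default receiver, which in the configuration above has no notification integrations defined.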

Deploy kube-state-metrics

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

---

apiVersion: rbac.authorization.k8s.io/v1

# kubernetes versions before 1.8.0 should use rbac.authorization.k8s.io/v1beta1

kind: ClusterRoleBinding

metadata:

name: kube-state-metrics

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: kube-state-metrics

subjects:

- kind: ServiceAccount

name: kube-state-metrics

namespace: monitoring

---

apiVersion: rbac.authorization.k8s.io/v1

# kubernetes versions before 1.8.0 should use rbac.authorization.k8s.io/v1beta1

kind: ClusterRole

metadata:

name: kube-state-metrics

rules:

- apiGroups: [""]

resources:

- configmaps

- secrets

- nodes

- pods

- services

- resourcequotas

- replicationcontrollers

- limitranges

- persistentvolumeclaims

- persistentvolumes

- namespaces

- endpoints

verbs: ["list", "watch"]

- apiGroups: ["extensions"]

resources:

- daemonsets

- deployments

- replicasets

verbs: ["list", "watch"]

- apiGroups: ["apps"]

resources:

- statefulsets

verbs: ["list", "watch"]

- apiGroups: ["batch"]

resources:

- cronjobs

- jobs

verbs: ["list", "watch"]

- apiGroups: ["autoscaling"]

resources:

- horizontalpodautoscalers

verbs: ["list", "watch"]

---

apiVersion: rbac.authorization.k8s.io/v1

# kubernetes versions before 1.8.0 should use rbac.authorization.k8s.io/v1beta1

kind: RoleBinding

metadata:

name: kube-state-metrics

namespace: monitoring

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: Role

name: kube-state-metrics-resizer

subjects:

- kind: ServiceAccount

name: kube-state-metrics

namespace: monitoring

---

apiVersion: rbac.authorization.k8s.io/v1

# kubernetes versions before 1.8.0 should use rbac.authorization.k8s.io/v1beta1

kind: Role

metadata:

namespace: monitoring

name: kube-state-metrics-resizer

rules:

- apiGroups: [""]

resources:

- pods

verbs: ["get"]

- apiGroups: ["extensions"]

resources:

- deployments

resourceNames: ["kube-state-metrics"]

verbs: ["get", "update"]

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: kube-state-metrics

namespace: monitoring

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: kube-state-metrics

namespace: monitoring

spec:

selector:

matchLabels:

k8s-app: kube-state-metrics

replicas: 1

template:

metadata:

labels:

k8s-app: kube-state-metrics

spec:

serviceAccountName: kube-state-metrics

containers:

- name: kube-state-metrics

image: quay.io/mxinden/kube-state-metrics:v1.4.0-gzip.3

ports:

- name: http-metrics

containerPort: 8080

- name: telemetry

containerPort: 8081

readinessProbe:

httpGet:

path: /healthz

port: 8080

initialDelaySeconds: 5

timeoutSeconds: 5

- name: addon-resizer

image: k8s.gcr.io/addon-resizer:1.8.3

resources:

limits:

cpu: 150m

memory: 50Mi

requests:

cpu: 150m

memory: 50Mi

env:

- name: MY_POD_NAME

valueFrom:

fieldRef:

fieldPath: metadata.name

- name: MY_POD_NAMESPACE

valueFrom:

fieldRef:

fieldPath: metadata.namespace

command:

- /pod_nanny

- --container=kube-state-metrics

- --cpu=100m

- --extra-cpu=1m

- --memory=100Mi

- --extra-memory=2Mi

- --threshold=5

- --deployment=kube-state-metrics

---

apiVersion: v1

kind: Service

metadata:

name: kube-state-metrics

namespace: monitoring

labels:

k8s-app: kube-state-metrics

annotations:

prometheus.io/scrape: 'true'

spec:

ports:

- name: http-metrics

port: 8080

targetPort: http-metrics

protocol: TCP

- name: telemetry

port: 8081

targetPort: telemetry

protocol: TCP

selector:

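k8s-app: kube-state-metrics

kube-state-metrics is needed to relay some important container metrics that are not natively exposed by the kubelet and are therefore not directly available to Prometheus.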

Deploy the Node-Exporter DaemonSet

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

---

apiVersion: extensions/v1beta1

kind: DaemonSet

metadata:

name: node-exporter

namespace: monitoring

labels:

name: node-exporter

spec:

template:

metadata:

labels:

name: node-exporter

annotations:

prometheus.io/scrape: "true"

prometheus.io/port: "9100"

spec:

hostPID: true

hostIPC: true

hostNetwork: true

containers:

- name: node-exporter

image: prom/node-exporter:v0.16.0

securityContext:

privileged: true

args:

- --path.procfs=/host/proc

- --path.sysfs=/host/sys

ports:

- containerPort: 9100

protocol: TCP

resources:

limits:

cpu: 100m

memory: 100Mi

requests:

cpu: 10m

memory: 100Mi

volumeMounts:

- name: dev

mountPath: /host/dev

- name: proc

mountPath: /host/proc

- name: sys

mountPath: /host/sys

- name: rootfs

mountPath: /rootfs

volumes:

- name: proc

hostPath:

path: /proc

- name: dev

hostPath:

path: /dev

- name: sys

hostPath:

path: /sys

- name: rootfs

hostPath:

path: /

The Node-Exporter DaemonSet runs a node-exporter pod on each node and exposes very important node-level metrics that the Prometheus instances can scrape.

Deploy Grafana

apiVersion: v1

kind: Namespace

metadata:

name: monitoring

---

apiVersion: storage.k8s.io/v1beta1

kind: StorageClass

metadata:

name: fast

namespace: monitoring

provisioner: kubernetes.io/gce-pd

allowVolumeExpansion: true

---

apiVersion: apps/v1beta1

kind: StatefulSet

metadata:

name: grafana

namespace: monitoring

spec:

replicas: 1

serviceName: grafana

template:

metadata:

labels:

task: monitoring

k8s-app: grafana

spec:

containers:

- name: grafana

image: k8s.gcr.io/heapster-grafana-amd64:v5.0.4

ports:

- containerPort: 3000

protocol: TCP

volumeMounts:

- mountPath: /etc/ssl/certs

name: ca-certificates

readOnly: true

- mountPath: /var

name: grafana-storage

env:

- name: GF_SERVER_HTTP_PORT

value: "3000"

# The following env variables are required to make Grafana accessible via

# the kubernetes api-server proxy. On production clusters, we recommend

# removing these env variables, setup auth for grafana, and expose the grafana

# service using a LoadBalancer or a public IP.

- name: GF_AUTH_BASIC_ENABLED

value: "false"

- name: GF_AUTH_ANONYMOUS_ENABLED

value: "true"

- name: GF_AUTH_ANONYMOUS_ORG_ROLE

value: Admin

- name: GF_SERVER_ROOT_URL

# If you're only using the API Server proxy, set this value instead:

# value: /api/v1/namespaces/kube-system/services/monitoring-grafana/proxy

value: /

volumes:

- name: ca-certificates

hostPath:

path: /etc/ssl/certs

volumeClaimTemplates:

- metadata:

name: grafana-storage

namespace: monitoring

spec:

accessModes: [ "ReadWriteOnce" ]

storageClassName: fast

resources:

requests:

storage: 5Gi

---

apiVersion: v1

kind: Service

metadata:

labels:

kubernetes.io/cluster-service: 'true'

kubernetes.io/name: grafana

name: grafana

namespace: monitoring

spec:

ports:

- port: 3000

targetPort: 3000

selector:

k8s-app: grafana

This creates our Grafana StatefulSet and Service. The Service will be exposed through our Ingress object.

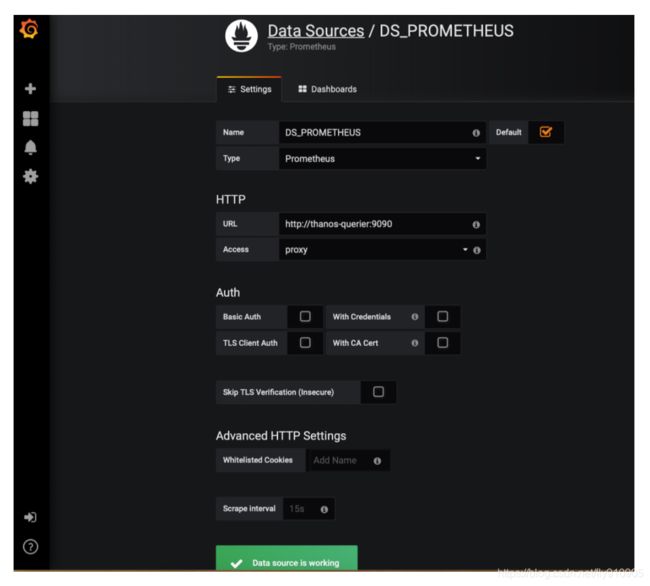

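To add Thanos Querier as a Grafana data source:

- In Grafana, click Add DataSource

- Name: DS_PROMETHEUS

- Type: Prometheus

- URL: http://thanos-querier:9090

- Click Save and Test. You can now build your own dashboards or simply import dashboards from grafana.net. Dashboards #315 and #1471 are good places to start.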
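The same data source could also be registered without the UI. As a hedged sketch, the snippet below only builds the JSON body one would send to Grafana's data source HTTP API (POST /api/datasources); it does not send anything. The `access` value and the helper name `datasource_payload` are assumptions of this example, and authentication is left out, so check the API documentation for your Grafana version.

```python
import json


def datasource_payload(name: str, url: str) -> dict:
    """Build a request body describing a Prometheus-type Grafana data source."""
    return {
        "name": name,
        "type": "prometheus",
        "url": url,
        # "proxy" means the Grafana backend, not the browser, calls the URL,
        # so the in-cluster Service name resolves correctly.
        "access": "proxy",
    }


payload = datasource_payload("DS_PROMETHEUS", "http://thanos-querier:9090")
body = json.dumps(payload, indent=2)
print(body)
```

The values match the fields entered in the UI steps above, which works because Thanos Querier speaks the Prometheus query API that Grafana's Prometheus data source expects.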

Deploy the Ingress object

apiVersion: extensions/v1beta1

kind: Ingress

metadata:

name: monitoring-ingress

namespace: monitoring

annotations:

kubernetes.io/ingress.class: "nginx"

spec:

rules:

- host: grafana..com

http:

paths:

- path: /

backend:

serviceName: grafana

servicePort: 3000

- host: prometheus-0..com

http:

paths:

- path: /

backend:

serviceName: prometheus-0-service

servicePort: 8080

- host: prometheus-1..com

http:

paths:

- path: /

backend:

serviceName: prometheus-1-service

servicePort: 8080

- host: prometheus-2..com

http:

paths:

- path: /

backend:

serviceName: prometheus-2-service

servicePort: 8080

- host: alertmanager..com

http:

paths:

- path: /

backend:

serviceName: alertmanager

servicePort: 9093

- host: thanos-querier..com

http:

paths:

- path: /

backend:

serviceName: thanos-querier

servicePort: 9090

- host: thanos-ruler..com

http:

paths:

- path: /

backend:

serviceName: thanos-ruler

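servicePort: 9090

This helps expose all our services outside the Kubernetes cluster. Remember to replace the elided domain in each host entry above with a domain you control.

You should now be able to access Thanos Querier at its Ingress host (http://thanos-querier. followed by your domain).

It will look something like this:

You can select "deduplication" to remove duplicate data.

If you click "Stores", you can see all the active endpoints discovered through the thanos-store-gateway service.

Now add Thanos Querier as the data source in Grafana and start creating dashboards.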

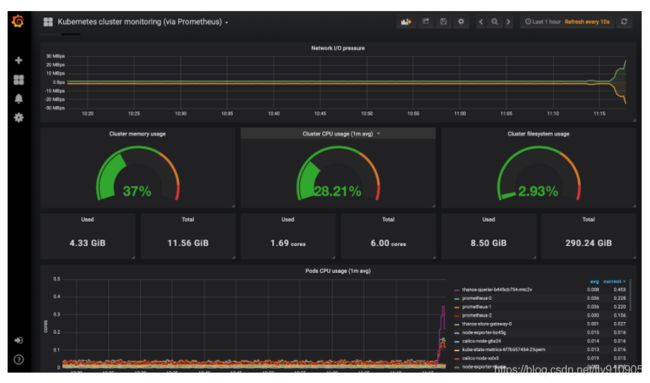

Kubernetes cluster monitoring dashboard

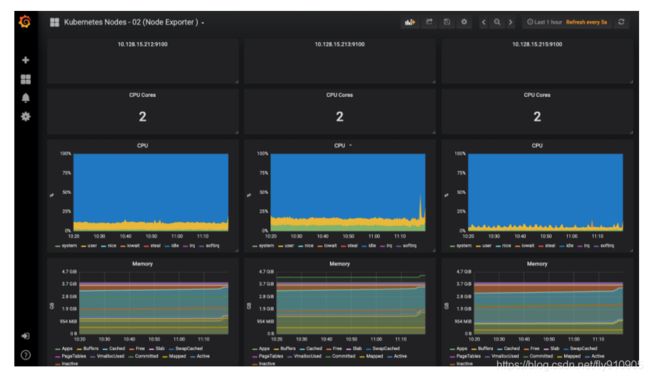

Kubernetes node monitoring dashboard

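Conclusion

Integrating Thanos with Prometheus gives you the ability to scale Prometheus horizontally. Because Thanos Querier can pull metrics from other Querier instances, you can also pull metrics across clusters and visualize them on a single dashboard.

We can also archive metric data in the object store, which gives our monitoring system effectively unlimited storage and lets us serve metrics from the object store itself.

However, achieving all this requires a fair amount of configuration. The manifests above have been tested in a production environment. If you have any questions, feel free to get in touch.

Original article: https://dzone.com/articles/high-availability-kubernetes-monitoring-using-prom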