MNIST Neural Network: TensorFlow Basics

This post builds a convolutional neural network with two convolutional layers to recognize MNIST handwritten digits.
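
Where does the `7 * 7 * 64` in the fully connected layer come from? The sketch below traces the tensor shape through the network, assuming the settings used in the code: 5x5 convolutions with stride 1, 2x2 max pooling with stride 2, and `padding='SAME'` everywhere. With SAME padding, TensorFlow's output size is ceil(input / stride). The helper names `same_out`, `trace_shapes` and `param_count` are hypothetical and exist only for this illustration.

```python
import math

def same_out(size, stride):
    # With padding='SAME', TensorFlow's output size is ceil(input / stride)
    return math.ceil(size / stride)

def trace_shapes(h=28, w=28):
    """Return (layer, (height, width, channels)) after each conv and pool step."""
    shapes = [("input", (h, w, 1))]
    for i, out_ch in enumerate([32, 64], start=1):
        # 5x5 convolution, stride 1, SAME padding: spatial size unchanged
        h, w = same_out(h, 1), same_out(w, 1)
        shapes.append((f"conv{i}", (h, w, out_ch)))
        # 2x2 max pooling, stride 2, SAME padding: spatial size halved
        h, w = same_out(h, 2), same_out(w, 2)
        shapes.append((f"pool{i}", (h, w, out_ch)))
    return shapes

def param_count(fan_in, fan_out):
    # Weights (fan_in x fan_out) plus one bias per output unit
    return fan_in * fan_out + fan_out

for layer, shape in trace_shapes():
    print(layer, shape)

h, w, c = trace_shapes()[-1][1]
flat = h * w * c  # 7 * 7 * 64 = 3136, the input size of the fully connected layer
params = {
    "conv1": param_count(5 * 5 * 1, 32),
    "conv2": param_count(5 * 5 * 32, 64),
    "fc1": param_count(flat, 1024),
    "fc2": param_count(1024, 10),
}
print("flattened size:", flat)
print(params)
```

The parameter count also shows where the cost lies: the first fully connected layer holds about 3.2 million of the network's roughly 3.3 million parameters, while the two convolutional layers together hold only about 52 thousand.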

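The network uses two convolution + pooling layers and one fully connected layer, followed by a softmax output layer. Dropout is applied to the fully connected layer to reduce overfitting.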
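
To make the dropout step concrete, here is a minimal pure-Python sketch of inverted dropout, the scheme `tf.nn.dropout` uses in TensorFlow 1.x: each unit is kept with probability `keep_prob` and survivors are scaled by `1 / keep_prob`, so the expected activation is unchanged. That is why the code feeds `keep_prob: 0.5` while training and `keep_prob: 1.0` when measuring accuracy. The function `dropout` here is a hypothetical illustration, not TensorFlow's implementation.

```python
import random

def dropout(values, keep_prob, rng=random):
    """Inverted dropout: keep each unit with probability keep_prob and
    scale the survivors by 1 / keep_prob (hypothetical helper)."""
    if not 0 < keep_prob <= 1:
        raise ValueError("keep_prob must be in (0, 1]")
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]

activations = [1.0] * 10
rng = random.Random(0)
print(dropout(activations, 0.5, rng))  # each entry is either 2.0 (kept) or 0.0 (dropped)
print(dropout(activations, 1.0, rng))  # keep_prob=1.0 leaves the input unchanged
```

Because of the rescaling, no extra correction is needed at evaluation time: feeding `keep_prob = 1.0` simply turns dropout off.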

Code:

```python
# -*- coding: utf-8 -*-
"""
Created on Sat Jul 21 05:13:22 2018

@author: czx
"""
# TensorFlow 1.x code: tf.placeholder, sessions and tensorflow.examples.tutorials
# are not part of TensorFlow 2.x's default API.
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Local MNIST directory; a raw string keeps the backslashes from being read as escapes
mnist = input_data.read_data_sets(r"E:\桌面上的文件\MNIST", one_hot=True)

sess = tf.InteractiveSession()

# Inputs: flattened 28x28 images and one-hot labels
x = tf.placeholder(tf.float32, [None, 784])
y_ = tf.placeholder(tf.float32, [None, 10])

# Parameter initialization
def weight_variable(shape):
    # Small random weights break the symmetry between neurons
    initial = tf.truncated_normal(shape, stddev=0.1)
    return tf.Variable(initial)

def bias_variable(shape):
    # Slightly positive bias so ReLU units start out active
    initial = tf.constant(0.1, shape=shape)
    return tf.Variable(initial)

# Convolution: stride 1, SAME padding keeps the spatial size unchanged
def conv2d(x, W):
    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

# Pooling: 2x2 max pooling with stride 2 halves height and width
def max_pool_2x2(x):
    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1],
                          strides=[1, 2, 2, 1], padding='SAME')  # what about mean pooling?

# 1st layer: 5x5 kernels, 1 -> 32 channels, 28x28 -> 14x14 after pooling
W_conv1 = weight_variable([5, 5, 1, 32])
b_conv1 = bias_variable([32])
x_image = tf.reshape(x, [-1, 28, 28, 1])
h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)
h_pool1 = max_pool_2x2(h_conv1)

# 2nd layer: 5x5 kernels, 32 -> 64 channels, 14x14 -> 7x7 after pooling
W_conv2 = weight_variable([5, 5, 32, 64])
b_conv2 = bias_variable([64])
h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)
h_pool2 = max_pool_2x2(h_conv2)

# Fully connected layer: 7*7*64 features -> 1024 units
W_fc1 = weight_variable([7 * 7 * 64, 1024])
b_fc1 = bias_variable([1024])
h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

# Dropout
keep_prob = tf.placeholder(tf.float32)  # probability that a neuron is kept
h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)  # dropout to prevent overfitting

# Output layer: keep the raw logits; the softmax is applied inside the loss
W_fc2 = weight_variable([1024, 10])
b_fc2 = bias_variable([10])
y_conv = tf.matmul(h_fc1_drop, W_fc2) + b_fc2

# Train the model
# Computing the loss from logits avoids log(0) = -inf, which turns the loss into NaN
cross_entropy = tf.reduce_mean(
    tf.nn.softmax_cross_entropy_with_logits(labels=y_, logits=y_conv))
train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)  # Adam optimizer
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))

init = tf.global_variables_initializer()
sess.run(init)

step = 20000
for i in range(step):
    batch = mnist.train.next_batch(50)
    if i % 10 == 0:
        # Accuracy on the current batch, with dropout disabled
        train_accuracy = sess.run(accuracy, feed_dict={x: batch[0], y_: batch[1], keep_prob: 1.0})
        print("step", i, "train accuracy", train_accuracy)
    sess.run(train_step, feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

# Accuracy on the full test set, with dropout disabled
test_accuracy = sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0})
print("test accuracy", test_accuracy)

sess.close()
```
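
A note on the loss: computing softmax cross-entropy, -Σ y·log(softmax(z)), as the log of already-computed softmax probabilities breaks down when a probability underflows to 0, because log(0) = -inf; in TensorFlow, `tf.log(0.)` returns -inf and 0·(-inf) is NaN, so training can end up with a NaN loss. The usual remedy in TensorFlow 1.x is `tf.nn.softmax_cross_entropy_with_logits`, which takes the raw logits instead of softmax outputs. The pure-Python sketch below shows the underlying idea, log-softmax via the log-sum-exp trick; the function names are hypothetical, and this is not TensorFlow's actual kernel.

```python
import math

def naive_cross_entropy(labels, logits):
    # Direct form: -sum(y * log(softmax(z)))
    exps = [math.exp(z) for z in logits]
    total = sum(exps)
    return -sum(y * math.log(e / total) for y, e in zip(labels, exps))

def stable_cross_entropy(labels, logits):
    # log softmax(z)_i = z_i - logsumexp(z); subtracting max(z) keeps exp() in range
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return -sum(y * (z - lse) for y, z in zip(labels, logits))

# Ordinary logits: both forms agree
print(naive_cross_entropy([0, 1, 0], [1.0, 2.0, 3.0]))
print(stable_cross_entropy([0, 1, 0], [1.0, 2.0, 3.0]))

# A very confident wrong prediction: exp(-800) underflows to 0.0
try:
    naive_cross_entropy([1, 0], [-800.0, 0.0])
except ValueError as err:
    print("naive form fails:", err)  # math.log(0.0) raises a domain error
print(stable_cross_entropy([1, 0], [-800.0, 0.0]))  # 800.0
```

The stable form returns a large but finite loss for the confident mistake, which is exactly the case where the gradient matters most.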

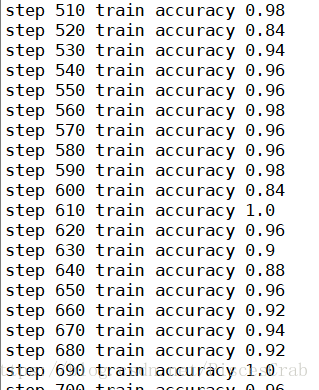

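My computer is too underpowered to run the full 20,000 training steps, so I did not train that long, but as the output shows, the results are already quite good.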