MapReduce Enhancements 07

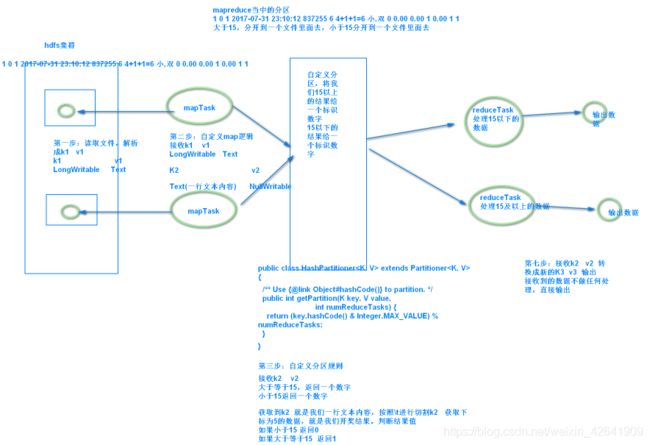

1. Partitioning and the number of reduce tasks

1.1 Concepts

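- Partitioning: decides which reduce task each record is sent to. Birds of a feather flock together: records with the same key go to the same reducer.

- CSV data can be opened directly in Excel. Excel is a powerful spreadsheet tool with many built-in functions for dates, times, currency amounts, maximum, minimum and average. In the early days many people doing data statistics worked in Excel, which can also produce all kinds of charts.

- The default partitioner is HashPartitioner.

- A custom Partitioner can be supplied instead.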
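
HashPartitioner, the default mentioned above, picks the reduce task from the hash code of the key. The sketch below reproduces that rule in plain Java so it can be run without a cluster. The class name HashPartitionSketch and the sample keys are made up for illustration.

```java
// Plain-Java sketch of the hash-partitioning rule; no Hadoop classes are used.
class HashPartitionSketch {

    // Clear the sign bit so the result is never negative, then take the
    // remainder by the number of reduce tasks.
    static int partitionFor(Object key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    public static void main(String[] args) {
        String[] keys = {"a", "b", "a", "c", "b"}; // hypothetical keys
        for (String key : keys) {
            System.out.println(key + " -> partition " + partitionFor(key, 2));
        }
    }
}
```

Equal keys always produce the same hash code, so they always land in the same partition. That is all the default gives us; it knows nothing about the meaning of the data, which is why the requirement below needs a custom Partitioner.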

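1.2 Requirement

- Split the following data into separate outputs.

The full data set is in the text file partition.csv. The field at index 5 of each tab-separated line (counting from zero, which is what the partitioner code reads) holds the lottery draw result. The requirement is to save results greater than 15 and results of 15 or less in two separate files.

- Note: the partitioning example has to be packaged as a jar and run on the cluster; it does not run correctly in local mode.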

1.3 Code

1.3.1 Define the mapper

The mapper does no processing of its own: it takes each input line and passes it on unchanged as the output key, with NullWritable as the value.

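    import java.io.IOException;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    public class MyMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

        // Emit the whole input line as the key; the value carries no data.
        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            context.write(value, NullWritable.get());
        }
    }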

1.3.2 Define the reducer

The reducer does no processing either; it writes the data out exactly as received.

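    import java.io.IOException;
    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    public class MyReducer extends Reducer<Text, NullWritable, Text, NullWritable> {

        // Write each key once; identical input lines are grouped into one key.
        @Override
        protected void reduce(Text key, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {
            context.write(key, NullWritable.get());
        }
    }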

1.3.3 Custom partitioner

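    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Partitioner;

    /**
     * The type parameters are the same as the map output key and value types.
     */
    public class MyPartitioner extends Partitioner<Text, NullWritable> {

        /**
         * The return value says which partition the record goes to.
         * It is only a label: all records given the same label end up in the same partition.
         */
        @Override
        public int getPartition(Text text, NullWritable nullWritable, int numPartitions) {
            // Index 5 of the tab-separated line holds the draw result.
            String result = text.toString().split("\t")[5];
            // Results above 15 go to partition 1, everything else to partition 0.
            if (Integer.parseInt(result) > 15) {
                return 1;
            } else {
                return 0;
            }
        }
    }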
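
MyPartitioner assumes every line has a numeric value at index 5; a short or malformed line would throw inside getPartition and fail the task. A minimal sketch of a more defensive version of the same routing rule follows, written as plain Java so it can be tested without Hadoop. The class name ResultRouter, the choice to send bad lines to partition 0, and the sample lines in main are assumptions for illustration, not part of the course code or of partition.csv.

```java
// Plain-Java sketch of the routing rule used by MyPartitioner, with guards added.
class ResultRouter {

    static final int FIELD_INDEX = 5; // same index MyPartitioner reads
    static final int THRESHOLD = 15;  // from the requirement in 1.2

    // Returns 1 for results above the threshold, 0 otherwise.
    // Lines that are too short or not numeric are sent to partition 0 (an assumption).
    static int partitionFor(String line, int numPartitions) {
        if (numPartitions < 2) {
            return 0; // only one partition exists, nothing to decide
        }
        String[] fields = line.split("\t");
        if (fields.length <= FIELD_INDEX) {
            return 0;
        }
        try {
            return Integer.parseInt(fields[FIELD_INDEX].trim()) > THRESHOLD ? 1 : 0;
        } catch (NumberFormatException e) {
            return 0;
        }
    }

    public static void main(String[] args) {
        // Hypothetical lines, not taken from partition.csv
        System.out.println(partitionFor("f0\tf1\tf2\tf3\tf4\t16", 2));
        System.out.println(partitionFor("f0\tf1\tf2\tf3\tf4\t15", 2));
        System.out.println(partitionFor("too\tshort", 2));
    }
}
```

Inside a real Partitioner the body of getPartition could delegate to a method like this, passing text.toString() and the numPartitions argument.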

1.3.4 Program entry point (main)

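    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.conf.Configured;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.NullWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
    import org.apache.hadoop.util.Tool;
    import org.apache.hadoop.util.ToolRunner;

    public class PartitionMain extends Configured implements Tool {

        public static void main(String[] args) throws Exception {
            int run = ToolRunner.run(new Configuration(), new PartitionMain(), args);
            System.exit(run);
        }

        @Override
        public int run(String[] args) throws Exception {
            Job job = Job.getInstance(super.getConf(), PartitionMain.class.getSimpleName());
            job.setJarByClass(PartitionMain.class);

            job.setInputFormatClass(TextInputFormat.class);
            job.setOutputFormatClass(TextOutputFormat.class);
            TextInputFormat.addInputPath(job, new Path("hdfs://192.168.52.100:8020/partitioner"));
            TextOutputFormat.setOutputPath(job, new Path("hdfs://192.168.52.100:8020/outpartition"));

            job.setMapperClass(MyMapper.class);
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(NullWritable.class);

            job.setReducerClass(MyReducer.class);
            job.setOutputKeyClass(Text.class);
            // Final output value type (the original called setMapOutputValueClass a second time here)
            job.setOutputValueClass(NullWritable.class);

            // Set the partitioner class and the number of reduce tasks.
            // The number of reduce tasks must match the number of partitions.
            job.setPartitionerClass(MyPartitioner.class);
            job.setNumReduceTasks(2);

            boolean b = job.waitForCompletion(true);
            return b ? 0 : 1;
        }
    }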
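
The comment in the driver says the number of reduce tasks has to match the number of partitions. The sketch below is a simplified model of why, not Hadoop's own code: the class PartitionRangeSketch and the exception type are made up for illustration. As far as we know, a partition index outside 0..numReduceTasks-1 makes the job fail with an "Illegal partition" error, and with a single reduce task the custom partitioner is not consulted at all.

```java
// Simplified model of how a partition index relates to the number of reduce tasks.
class PartitionRangeSketch {

    // Returns the reduce task that receives the record, or throws if the
    // partitioner produced an index no reduce task exists for.
    static int route(int partition, int numReduceTasks) {
        if (numReduceTasks == 1) {
            return 0; // single reducer: every record goes to it
        }
        if (partition < 0 || partition >= numReduceTasks) {
            throw new IllegalStateException(
                "Illegal partition " + partition + " for " + numReduceTasks + " reduce tasks");
        }
        return partition;
    }

    public static void main(String[] args) {
        System.out.println(route(1, 2)); // MyPartitioner's "above 15" label with 2 reducers
        System.out.println(route(1, 1)); // same label with a single reducer
        try {
            route(2, 2);                 // a third label with only 2 reducers
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

Setting more reduce tasks than the partitioner has labels does not fail, but the extra reducers receive nothing and leave empty output files, so in practice the two numbers are kept equal.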

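2. Sorting and serialization

- Serialization exists so that data can be transferred across the network. Hadoop does not reuse Java's built-in serialization; it uses its own serialization mechanism. Every data type has to be serializable before it can be sent across the network.

- Hadoop's serialization interface is Writable; any class that implements Writable can be serialized.

Writable: the serialization interface in Hadoop

Comparable: the ordering interface in Java SE

If only serialization is needed, implement Writable.

If both serialization and sorting are needed, implement WritableComparable.

By default, sorting is applied to k2 (the map output key). Remember: whatever needs to be sorted has to be made the k2.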
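
The Writable contract comes down to two methods, one that writes the fields to a DataOutput and one that reads them back from a DataInput in the same order. The sketch below shows that round trip using only JDK classes; the nested Pair class is a hypothetical stand-in that mirrors the two method signatures, not Hadoop's Writable itself.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

class WritableRoundTrip {

    // Hypothetical stand-in for a Writable: same two methods, JDK types only.
    static class Pair {
        String first;
        int second;

        void write(DataOutput out) throws IOException {
            out.writeUTF(first);
            out.writeInt(second);
        }

        void readFields(DataInput in) throws IOException {
            first = in.readUTF();   // read in exactly the order the fields were written
            second = in.readInt();
        }
    }

    // Serializes a Pair to bytes and rebuilds a new Pair from those bytes.
    static Pair roundTrip(String first, int second) {
        try {
            Pair original = new Pair();
            original.first = first;
            original.second = second;

            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            original.write(new DataOutputStream(bytes));

            Pair copy = new Pair();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        Pair copy = roundTrip("a", 9);
        System.out.println(copy.first + "\t" + copy.second);
    }
}
```

If readFields read the int before the string, the bytes would be misinterpreted, which is the most common mistake when writing a custom Writable.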

2.1 Data format

a 1

a 9

b 3

a 7

b 8

b 10

a 5

2.2 Requirement

Sort the first column in dictionary order; where the first column is equal, sort the second column in ascending order.

2.3 Approach

a 1

a 9

b 3

a 7

b 8

b 10

a 5

a 9

Sorting requirement: sort by the first column; where the first column is equal, sort by the second column. The expected result:

a 1

a 5

a 7

a 9

a 9

b 3

b 8

b 10

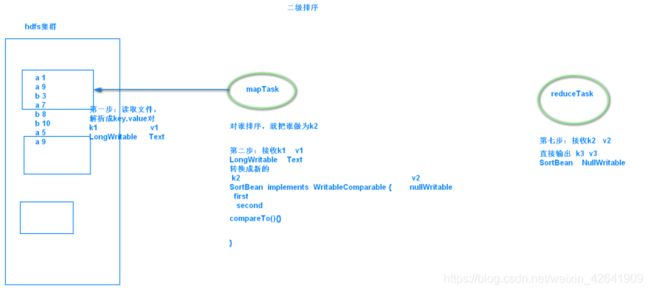

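Secondary sort

Both fields have to take part in the sort, so both have to be part of k2. The two fields can be wrapped in a single JavaBean that serves as k2.

That JavaBean has to be both serializable and sortable, so it implements WritableComparable.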
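
The composite-key idea can be tried out in plain Java before any Hadoop code is written. The sketch below sorts the eight sample rows from this section with a Comparable that compares the first column and then the second; the class names CompositeKeySortSketch and Pair are made up for illustration.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

class CompositeKeySortSketch {

    // Both columns live in one key object, so one compareTo orders by both.
    static class Pair implements Comparable<Pair> {
        final String first;
        final int second;

        Pair(String first, int second) {
            this.first = first;
            this.second = second;
        }

        @Override
        public int compareTo(Pair o) {
            int c = first.compareTo(o.first);
            return c != 0 ? c : Integer.compare(second, o.second);
        }

        @Override
        public String toString() {
            return first + " " + second;
        }
    }

    // Sorts the sample rows from section 2.3 and returns them as text lines.
    static List<String> sortedSample() {
        List<Pair> rows = new ArrayList<>();
        rows.add(new Pair("a", 1));
        rows.add(new Pair("a", 9));
        rows.add(new Pair("b", 3));
        rows.add(new Pair("a", 7));
        rows.add(new Pair("b", 8));
        rows.add(new Pair("b", 10));
        rows.add(new Pair("a", 5));
        rows.add(new Pair("a", 9));
        Collections.sort(rows);

        List<String> lines = new ArrayList<>();
        for (Pair p : rows) {
            lines.add(p.toString());
        }
        return lines;
    }

    public static void main(String[] args) {
        for (String line : sortedSample()) {
            System.out.println(line);
        }
    }
}
```

The second column is compared as an int on purpose: compared as text, "10" would sort before "3" and "8".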

2.4 Code

2.4.1 Custom data type and its comparison logic

    import java.io.DataInput;
    import java.io.DataOutput;
    import java.io.IOException;
    import org.apache.hadoop.io.WritableComparable;

    public class PairWritable implements WritableComparable<PairWritable> {

        // Composite key: first holds the first column, second holds the second column
        private String first;
        private int second;

        public PairWritable() {
        }

        public PairWritable(String first, int second) {
            this.set(first, second);
        }

        /**
         * Convenience setter for both fields
         */
        public void set(String first, int second) {
            this.first = first;
            this.second = second;
        }

        /**
         * Deserialization: fields are read in the order they were written
         */
        @Override
        public void readFields(DataInput input) throws IOException {
            this.first = input.readUTF();
            this.second = input.readInt();
        }

        /**
         * Serialization
         */
        @Override
        public void write(DataOutput output) throws IOException {
            output.writeUTF(first);
            output.writeInt(second);
        }

        /**
         * Ordering used when k2 is sorted: compares this key with the key passed in.
         * The framework calls it repeatedly on pairs of keys while sorting.
         */
        @Override
        public int compareTo(PairWritable o) {
            int comp = this.first.compareTo(o.first);
            if (comp != 0) {
                return comp;
            } else { // first fields are equal, so compare the second fields
                return Integer.compare(this.second, o.getSecond());
            }
        }

        public int getSecond() {
            return second;
        }

        public void setSecond(int second) {
            this.second = second;
        }

        public String getFirst() {
            return first;
        }

        public void setFirst(String first) {
            this.first = first;
        }

        @Override
        public String toString() {
            return "PairWritable{" +
                    "first='" + first + '\'' +
                    ", second=" + second +
                    '}';
        }
    }

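2.4.2 Custom map logic

    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    public class SortMapper extends Mapper<LongWritable, Text, PairWritable, IntWritable> {

        // Reused across calls to avoid allocating new objects per record
        private PairWritable mapOutKey = new PairWritable();
        private IntWritable mapOutValue = new IntWritable();

        @Override
        public void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            String lineValue = value.toString();
            String[] strs = lineValue.split("\t");

            // Build the composite key and the value: <(first, second), second>
            int second = Integer.parseInt(strs[1]);
            mapOutKey.set(strs[0], second);
            mapOutValue.set(second);
            context.write(mapOutKey, mapOutValue);
        }
    }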

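2.4.3 Custom reduce logic

    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    public class SortReducer extends Reducer<PairWritable, IntWritable, Text, IntWritable> {

        private Text outPutKey = new Text();

        @Override
        public void reduce(PairWritable key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            // Write out every value; the keys arrive already sorted
            for (IntWritable value : values) {
                outPutKey.set(key.getFirst());
                context.write(outPutKey, value);
            }
        }
    }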

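2.4.4 Define the main method

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.conf.Configured;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;
    import org.apache.hadoop.util.Tool;
    import org.apache.hadoop.util.ToolRunner;

    public class SecondarySort extends Configured implements Tool {

        @Override
        public int run(String[] args) throws Exception {
            Configuration conf = super.getConf();
            // Run in local mode
            conf.set("mapreduce.framework.name", "local");

            Job job = Job.getInstance(conf, SecondarySort.class.getSimpleName());
            job.setJarByClass(SecondarySort.class);

            job.setInputFormatClass(TextInputFormat.class);
            TextInputFormat.addInputPath(job, new Path("file:///L:\\大数据离线阶段备课教案以及资料文档——by老王\\4、大数据离线第四天\\排序\\input"));
            TextOutputFormat.setOutputPath(job, new Path("file:///L:\\大数据离线阶段备课教案以及资料文档——by老王\\4、大数据离线第四天\\排序\\output"));

            job.setMapperClass(SortMapper.class);
            job.setMapOutputKeyClass(PairWritable.class);
            job.setMapOutputValueClass(IntWritable.class);

            job.setReducerClass(SortReducer.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            boolean b = job.waitForCompletion(true);
            return b ? 0 : 1;
        }

        public static void main(String[] args) throws Exception {
            Configuration entries = new Configuration();
            // Propagate the job result as the process exit code
            System.exit(ToolRunner.run(entries, new SecondarySort(), args));
        }
    }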

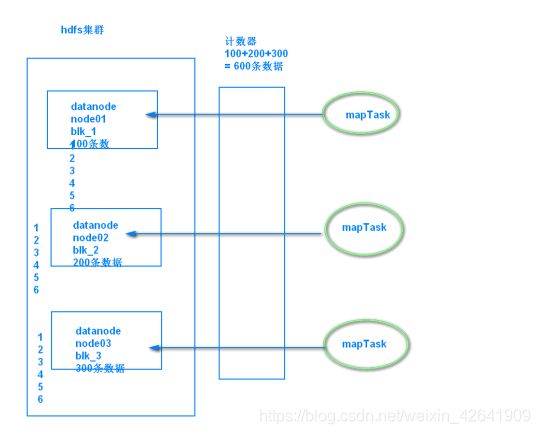

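3. Counters in MapReduce

Counters are a counting tool that MapReduce provides to us.

They are also an aid when tuning a MapReduce job.

3.1 Requirement

3.2 Code

3.2.1 First approach: get a counter from the context

A counter can be obtained from the context object and incremented. Here it is used on the map side to count records.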

    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Counter;
    import org.apache.hadoop.mapreduce.Mapper;

    public class SortMapper extends Mapper<LongWritable, Text, PairWritable, IntWritable> {

        private PairWritable mapOutKey = new PairWritable();
        private IntWritable mapOutValue = new IntWritable();

        @Override
        public void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            // Custom counter (group "MR_COUNT", name "MapRecordCounter") that counts map input records
            Counter counter = context.getCounter("MR_COUNT", "MapRecordCounter");
            counter.increment(1L);

            String lineValue = value.toString();
            String[] strs = lineValue.split("\t");

            // Build the composite key and the value: <(first, second), second>
            int second = Integer.parseInt(strs[1]);
            mapOutKey.set(strs[0], second);
            mapOutValue.set(second);
            context.write(mapOutKey, mapOutValue);
        }
    }

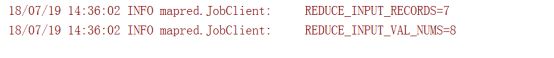

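After the program runs, the custom counter shows that the map phase read seven records.

3.2.2 Second approach: define counters with an enum

Counters can also be defined through an enum type.

Here they count how many keys arrive on the reduce side and how many values belong to those keys.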

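    import java.io.IOException;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    public class SortReducer extends Reducer<PairWritable, IntWritable, Text, IntWritable> {

        private Text outPutKey = new Text();

        // One enum constant per counter; the enum class name becomes the counter group
        public static enum Counter {
            REDUCE_INPUT_RECORDS, REDUCE_INPUT_VAL_NUMS
        }

        @Override
        public void reduce(PairWritable key, Iterable<IntWritable> values, Context context) throws IOException, InterruptedException {
            // One increment per reduce call, i.e. per distinct key
            context.getCounter(Counter.REDUCE_INPUT_RECORDS).increment(1L);

            // Write out every value
            for (IntWritable value : values) {
                // One increment per value belonging to the key
                context.getCounter(Counter.REDUCE_INPUT_VAL_NUMS).increment(1L);
                outPutKey.set(key.getFirst());
                context.write(outPutKey, value);
            }
        }
    }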

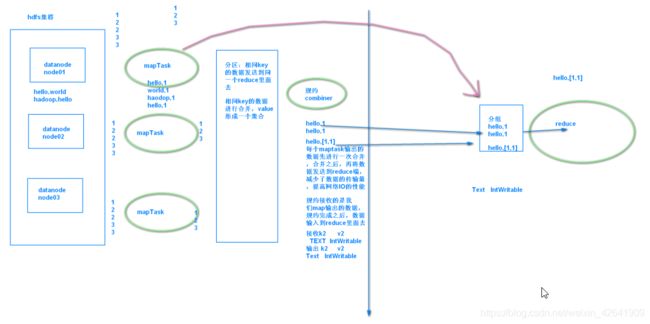
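
To see what the two reduce-side counters measure, the sketch below models them in plain Java on the eight sample rows from section 2.3. It is an illustration only: the class ReduceCounterSketch is made up, and it assumes, as in the job above, that rows whose composite key (both columns) is equal are handed to the same reduce call.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.EnumMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class ReduceCounterSketch {

    enum Counter { REDUCE_INPUT_RECORDS, REDUCE_INPUT_VAL_NUMS }

    // Groups tab-separated rows by composite key, then counts keys and values
    // the same way SortReducer increments its two counters.
    static Map<Counter, Long> count(List<String> lines) {
        Map<String, List<Integer>> groups = new TreeMap<>();
        for (String line : lines) {
            String[] cols = line.split("\t");
            groups.computeIfAbsent(cols[0] + "\t" + cols[1], k -> new ArrayList<>())
                  .add(Integer.parseInt(cols[1]));
        }

        Map<Counter, Long> counters = new EnumMap<>(Counter.class);
        counters.put(Counter.REDUCE_INPUT_RECORDS, 0L);
        counters.put(Counter.REDUCE_INPUT_VAL_NUMS, 0L);
        for (List<Integer> values : groups.values()) {
            counters.merge(Counter.REDUCE_INPUT_RECORDS, 1L, Long::sum);      // one per key
            for (Integer ignored : values) {
                counters.merge(Counter.REDUCE_INPUT_VAL_NUMS, 1L, Long::sum); // one per value
            }
        }
        return counters;
    }

    public static void main(String[] args) {
        List<String> sample = Arrays.asList(
            "a\t1", "a\t9", "b\t3", "a\t7", "b\t8", "b\t10", "a\t5", "a\t9");
        System.out.println(count(sample));
    }
}
```

With the duplicated row "a 9" in the sample, the two counters differ: the duplicate shares a key with the other "a 9", so there are seven keys but eight values.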

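4. The combiner step in MapReduce

- The combiner is our fifth step. It is a tuning step in MapReduce whose main purpose is to reduce the amount of key2 data sent to the reduce side, by aggregating the output of each map task once in advance.

- The eight steps of a MapReduce job (nicknamed 天龙八部):

  1. Input

  2. Map

  3. Partition

  4. Sort

  5. Combine: a tuning technique in MapReduce. A combiner is itself a Reducer program: it is a reduce program that runs on the map side, whereas the reducer is the program that actually runs on the reduce side.

  6. Group

  7. Reduce

  8. Output

- Implementation

  1. Write a custom combiner that extends Reducer and overrides the reduce method.

  2. Register it on the job: job.setCombinerClass(CustomCombiner.class)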
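
The effect of the combiner can be shown with a plain-Java model of one map task's output. The sketch below assumes a word-count style job in which the map emits (key, 1) for every occurrence and the combiner sums the values per key; the class CombinerSketch and the sample keys are made up for illustration.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

class CombinerSketch {

    // Sums the (key, 1) pairs emitted by one map task, as a sum-style combiner would,
    // so only one record per key has to be sent to the reduce side.
    static Map<String, Integer> combine(List<String> mapOutputKeys) {
        Map<String, Integer> combined = new TreeMap<>();
        for (String key : mapOutputKeys) {
            combined.merge(key, 1, Integer::sum);
        }
        return combined;
    }

    public static void main(String[] args) {
        // Hypothetical output keys of a single map task, each standing for (key, 1)
        List<String> mapOutput = Arrays.asList("hello", "world", "hello", "hello");
        Map<String, Integer> combined = combine(mapOutput);

        System.out.println("records before combine: " + mapOutput.size());
        System.out.println("records after combine:  " + combined.size());
        System.out.println(combined);
    }
}
```

Here four map output records shrink to two before the shuffle. This only gives the right final answer for operations such as sum, max or min, where aggregating partial results first does not change the outcome; an average cannot be combined this way without carrying the count along. Hadoop also does not guarantee how many times the combiner runs, so the job has to be correct without it.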