Configuring YOLOv3 to train on your own data

Contents

- 1. Download the code

- 2. Prepare the images

  - a. Image folder layout

  - b. Configuration files

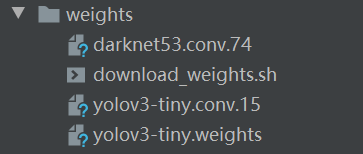

- 3. Weights

- 4. train.py

1. Download the code

Get the code from GitHub.

2. Prepare the images

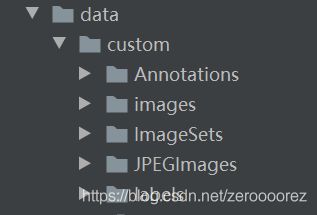

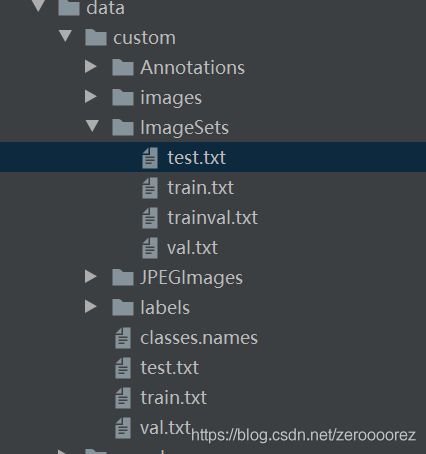

a. Image folder layout

    custom/                # custom dataset
        Annotations/       # XML annotation files
        images/            # images
        ImageSets/         # filled in by running makeTxt.py
            test.txt
            train.txt
            trainval.txt
            val.txt
        JPEGImages/        # copy of images
        labels/
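The folders can also be created from a script. The following is a minimal sketch, assuming the folder names used by the scripts in this post; the helper name `make_layout` is made up for illustration, and `data/custom` is the root path that `makeTxt.py` and `voc_label.py` expect.

```python
import os

# Sub-folder names as used by makeTxt.py and voc_label.py in this post.
SUBDIRS = ["Annotations", "images", "ImageSets", "JPEGImages", "labels"]


def make_layout(root):
    """Create the dataset sub-folders under `root` and return their paths."""
    paths = [os.path.join(root, name) for name in SUBDIRS]
    for path in paths:
        os.makedirs(path, exist_ok=True)  # no error if the folder already exists
    return paths


if __name__ == "__main__":
    for path in make_layout(os.path.join("data", "custom")):
        print(path)
```

After running it, copy the XML files into `Annotations` and the images into `images` and `JPEGImages` by hand.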

makeTxt.py

    import os
    import random

    # Fraction of all samples held out of training (listed in trainval.txt).
    trainval_percent = 0.1
    # Fraction of the held-out samples written to test.txt; the rest go to val.txt.
    train_percent = 0.9

    xmlfilepath = 'data/custom/Annotations'
    txtsavepath = 'data/custom/ImageSets'

    total_xml = os.listdir(xmlfilepath)
    num = len(total_xml)
    indices = range(num)  # renamed from `list` to avoid shadowing the built-in

    tv = int(num * trainval_percent)
    tr = int(tv * train_percent)
    trainval = random.sample(indices, tv)
    train = random.sample(trainval, tr)

    os.makedirs(txtsavepath, exist_ok=True)
    with open(os.path.join(txtsavepath, 'trainval.txt'), 'w') as ftrainval, \
            open(os.path.join(txtsavepath, 'test.txt'), 'w') as ftest, \
            open(os.path.join(txtsavepath, 'train.txt'), 'w') as ftrain, \
            open(os.path.join(txtsavepath, 'val.txt'), 'w') as fval:
        for i in indices:
            # File name without the .xml extension
            name = os.path.splitext(total_xml[i])[0] + '\n'
            if i in trainval:
                ftrainval.write(name)
                if i in train:
                    ftest.write(name)
                else:
                    fval.write(name)
            else:
                # Everything outside the held-out 10% is used for training
                ftrain.write(name)
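To see what proportions this split produces, the same logic can be run on a list of names in memory. This is only a sketch: the function `split_names`, its parameter names, and the fixed seed are assumptions added for illustration and are not part of `makeTxt.py`.

```python
import random


def split_names(names, holdout_frac=0.1, test_frac=0.9, seed=0):
    """Split names into train/test/val following the logic of makeTxt.py."""
    rng = random.Random(seed)  # seeded so the split is reproducible
    n_holdout = int(len(names) * holdout_frac)
    n_test = int(n_holdout * test_frac)
    holdout = rng.sample(range(len(names)), n_holdout)
    test = set(rng.sample(holdout, n_test))
    holdout = set(holdout)

    splits = {"train": [], "test": [], "val": []}
    for i, name in enumerate(names):
        if i not in holdout:
            splits["train"].append(name)
        elif i in test:
            splits["test"].append(name)
        else:
            splits["val"].append(name)
    return splits


if __name__ == "__main__":
    names = ["img%03d" % i for i in range(100)]
    splits = split_names(names)
    print({key: len(value) for key, value in splits.items()})
    # prints {'train': 90, 'test': 9, 'val': 1}
```

With the values 0.1 and 0.9, 100 images give 90 training, 9 test, and 1 validation sample, so a small dataset may end up with a very small `val.txt`.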

voc_label.py

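Remember to change `sets` and `classes` to match your data.

Running the script generates the label files under `labels`, along with the path list files for the train, test, and val sets.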

    import os
    import xml.etree.ElementTree as ET

    sets = ['train', 'test', 'val']
    classes = ["RBC"]


    def convert(size, box):
        """Convert a VOC box (xmin, xmax, ymin, ymax) in pixels into a
        normalized YOLO box (x_center, y_center, width, height)."""
        dw = 1. / size[0]
        dh = 1. / size[1]
        x = (box[0] + box[1]) / 2.0
        y = (box[2] + box[3]) / 2.0
        w = box[1] - box[0]
        h = box[3] - box[2]
        return (x * dw, y * dh, w * dw, h * dh)


    def convert_annotation(image_id):
        """Write the YOLO label file for one image from its XML annotation."""
        tree = ET.parse('data/custom/Annotations/%s.xml' % image_id)
        root = tree.getroot()
        size = root.find('size')
        w = int(size.find('width').text)
        h = int(size.find('height').text)

        with open('data/custom/labels/%s.txt' % image_id, 'w') as out_file:
            for obj in root.iter('object'):
                difficult = obj.find('difficult').text
                cls = obj.find('name').text
                # Skip unknown classes and objects marked as difficult
                if cls not in classes or int(difficult) == 1:
                    continue
                cls_id = classes.index(cls)
                xmlbox = obj.find('bndbox')
                b = (float(xmlbox.find('xmin').text), float(xmlbox.find('xmax').text),
                     float(xmlbox.find('ymin').text), float(xmlbox.find('ymax').text))
                bb = convert((w, h), b)
                out_file.write(str(cls_id) + " " + " ".join([str(a) for a in bb]) + '\n')


    wd = os.getcwd()
    print(wd)

    os.makedirs('data/custom/labels/', exist_ok=True)
    for image_set in sets:
        with open('data/custom/ImageSets/%s.txt' % image_set) as f:
            image_ids = f.read().strip().split()
        # One image path per line; this is the file custom.data points to
        with open('data/custom/%s.txt' % image_set, 'w') as list_file:
            for image_id in image_ids:
                list_file.write('data/custom/images/%s.jpg\n' % image_id)
                convert_annotation(image_id)
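A worked example makes the coordinate conversion easier to check. The sketch below restates `convert` with the same arithmetic, written with divisions and named variables; the image size and box are made-up values chosen so the result is easy to verify by hand.

```python
def convert(size, box):
    """VOC (xmin, xmax, ymin, ymax) in pixels -> YOLO (x, y, w, h), normalized."""
    img_w, img_h = size
    xmin, xmax, ymin, ymax = box
    x = (xmin + xmax) / 2.0 / img_w  # box center, as a fraction of image width
    y = (ymin + ymax) / 2.0 / img_h  # box center, as a fraction of image height
    w = (xmax - xmin) / img_w        # box width, as a fraction of image width
    h = (ymax - ymin) / img_h        # box height, as a fraction of image height
    return (x, y, w, h)


if __name__ == "__main__":
    # Hypothetical 400x200 image with a box from (100, 50) to (300, 150).
    print(convert((400, 200), (100.0, 300.0, 50.0, 150.0)))
    # prints (0.5, 0.5, 0.5, 0.5)
```

For class index 0, the corresponding line in the label file would be `0 0.5 0.5 0.5 0.5`.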

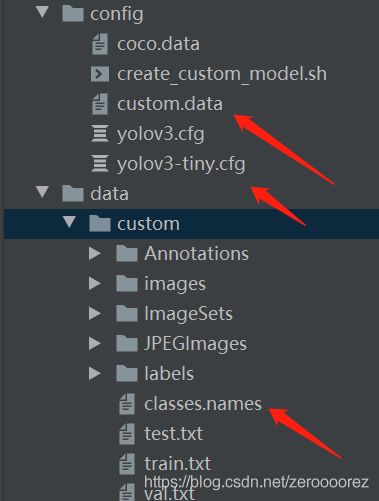

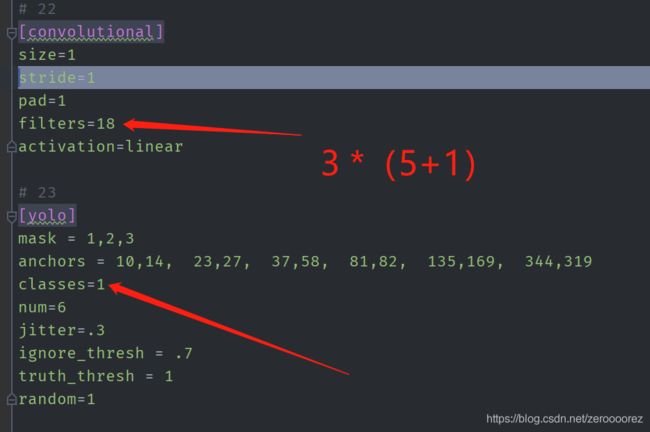

b. Configuration files

Three files need to be changed.

i. custom.data (leave one space, as in `classes= 1`)

classes= 1

train=data/custom/train.txt

valid=data/custom/val.txt

names=data/custom/classes.names

ii. classes.names (one class name per line)

RBC
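A quick consistency check between the two files can catch a mismatched class count before training starts. The sketch below is not the repository's own parser; `parse_data_config` and `check_class_count` are hypothetical helpers that read `key=value` lines and compare `classes` with the number of names.

```python
def parse_data_config(text):
    """Parse `key=value` lines into a dict, ignoring blank lines and # comments."""
    options = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, value = line.split("=", 1)
        options[key.strip()] = value.strip()  # tolerate spaces around '='
    return options


def check_class_count(options, names_text):
    """Return True if `classes` equals the number of non-empty name lines."""
    names = [n.strip() for n in names_text.splitlines() if n.strip()]
    return int(options["classes"]) == len(names)


if __name__ == "__main__":
    data_text = (
        "classes= 1\n"
        "train=data/custom/train.txt\n"
        "valid=data/custom/val.txt\n"
        "names=data/custom/classes.names\n"
    )
    options = parse_data_config(data_text)
    print(options["classes"], check_class_count(options, "RBC\n"))
    # prints 1 True
```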

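iii. yolov3-tiny.cfg: every `[yolo]` section must be modified (typically `classes`, plus `filters` in the `[convolutional]` section just before it)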
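In the standard Darknet-style YOLOv3 cfg, the convolutional layer right before each `[yolo]` section needs `filters = anchors_per_layer * (classes + 5)`. The sketch below computes the values to write, assuming the usual three anchors per `[yolo]` layer; the function name is made up for illustration.

```python
def yolo_layer_settings(num_classes, anchors_per_layer=3):
    """Values for each [yolo] section and the [convolutional] layer before it."""
    return {
        # classes= in the [yolo] section
        "classes": num_classes,
        # filters= in the preceding [convolutional] section:
        # each anchor predicts 4 box coordinates + 1 objectness + class scores
        "filters": anchors_per_layer * (num_classes + 5),
    }


if __name__ == "__main__":
    print(yolo_layer_settings(1))
    # prints {'classes': 1, 'filters': 18}
```

For the single `RBC` class used here, that means `classes=1` and `filters=18` at each `[yolo]` section.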

3. Weights

4. train.py

    import argparse

    if __name__ == "__main__":
        parser = argparse.ArgumentParser()
        parser.add_argument("--epochs", type=int, default=10, help="number of epochs")
        parser.add_argument("--batch_size", type=int, default=3, help="size of each image batch")
        parser.add_argument("--gradient_accumulations", type=int, default=2, help="number of gradient accums before step")
        parser.add_argument("--model_def", type=str, default="config/yolov3-tiny.cfg", help="path to model definition file")
        parser.add_argument("--data_config", type=str, default="config/custom.data", help="path to data config file")
        parser.add_argument("--pretrained_weights", type=str, help="if specified starts from checkpoint model")
        parser.add_argument("--n_cpu", type=int, default=8, help="number of cpu threads to use during batch generation")
        parser.add_argument("--img_size", type=int, default=416, help="size of each image dimension")
        parser.add_argument("--checkpoint_interval", type=int, default=1, help="interval between saving model weights")
        parser.add_argument("--evaluation_interval", type=int, default=1, help="interval evaluations on validation set")
        parser.add_argument("--compute_map", default=False, help="if True computes mAP every tenth batch")
        parser.add_argument("--multiscale_training", default=True, help="allow for multi-scale training")
        opt = parser.parse_args()
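One thing to watch with the last two arguments: they have no `type`, so any value passed on the command line arrives as a string, and the string `"False"` is truthy. The sketch below demonstrates this with `argparse` alone; `str2bool` and the `--multiscale_strict` flag are hypothetical additions for comparison, not part of train.py.

```python
import argparse


def str2bool(value):
    """Hypothetical helper: turn 'true'/'false'-style strings into a bool."""
    if value.lower() in ("true", "1", "yes"):
        return True
    if value.lower() in ("false", "0", "no"):
        return False
    raise argparse.ArgumentTypeError("expected a boolean, got %r" % value)


def build_parser():
    parser = argparse.ArgumentParser()
    # Same shape as the flag in train.py: no type, so values stay strings.
    parser.add_argument("--multiscale_training", default=True)
    # Hypothetical variant with an explicit converter.
    parser.add_argument("--multiscale_strict", type=str2bool, default=True)
    return parser


if __name__ == "__main__":
    opt = build_parser().parse_args(
        ["--multiscale_training", "False", "--multiscale_strict", "False"]
    )
    print(repr(opt.multiscale_training), bool(opt.multiscale_training))
    # prints 'False' True
    print(repr(opt.multiscale_strict))
    # prints False
```

If you only ever rely on the defaults, as this post does by editing them in place, the behavior is as expected.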