图像处理课后作业Ⅲ——OCR文字识别 Ⅰ

第三次课后作业

作业任务:OCR文字识别 Ⅰ

处理想法

导入一张图像,进行边缘检测、获取轮廓、矩阵变换、二值化后,使用开源OCR库识别得出文字。

处理思路

导入图像

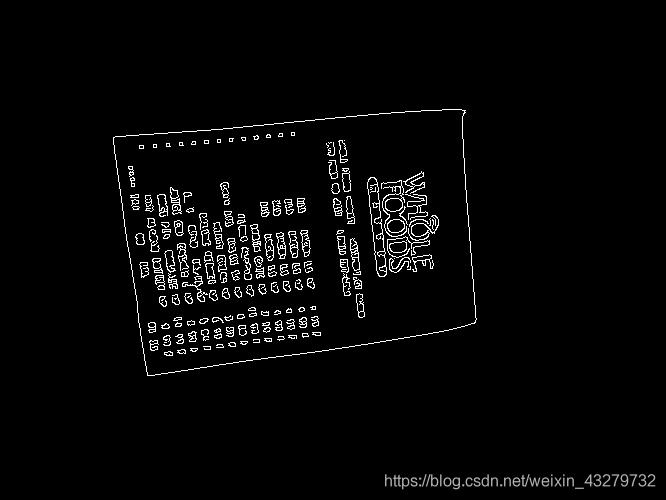

边缘检测

获取轮廓

矩阵变换

OCR

输出识别结果

工程实现

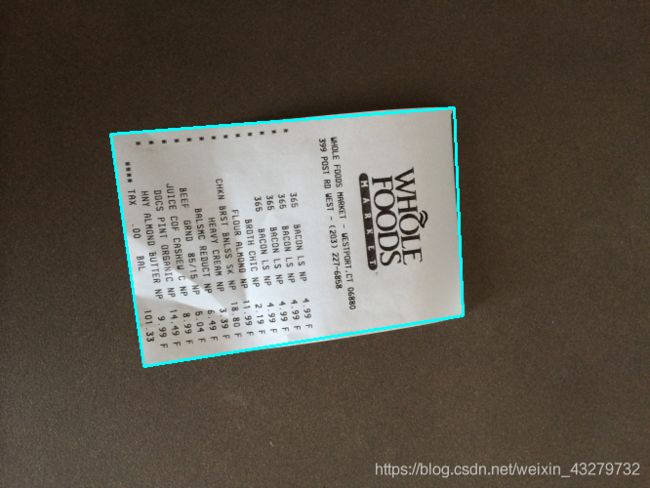

1.导入图像,改变图像大小

# 只需输入宽高之一的数值,另一参数会按比例变化

def resize(image, width=None, height=None, inter=cv2.INTER_AREA):

dim = None

(h, w) = image.shape[:2]

if width is None and height is None:

return image

if width is None:

r = height / float(h)

dim = (int(w * r), height)

else:

r = width / float(w)

dim = (width, int(h * r))

resized = cv2.resize(image, dim, interpolation=inter)

return resized

# 读取输入

image = cv2.imread("E:\\18023\\Pictures\\Camera Roll\\paper.jpg")

ratio = image.shape[0] / 500.0 # 坐标相同变化

orig = image.copy() # 复制原图获取感兴趣区域

image = resize(orig, height = 500) # 改变图像宽高

# 预处理

gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY) # 转灰度图

gray = cv2.GaussianBlur(gray, (5, 5), 0) # 高斯滤波去噪

edged = cv2.Canny(gray, 75, 200) # 边缘检测

# 轮廓检测

cnts = cv2.findContours(edged.copy(), cv2.RETR_LIST, cv2.CHAIN_APPROX_SIMPLE)[0]

cnts = sorted(cnts, key = cv2.contourArea, reverse = True)[:5] # 可同时检测最多5个

# 遍历轮廓

for c in cnts: # C表示输入的点集

peri = cv2.arcLength(c, True) # 计算轮廓近似

# epsilon表示从原始轮廓到近似轮廓的最大距离,它是一个准确度参数

# True表示封闭的

approx = cv2.approxPolyDP(c, 0.02 * peri, True)

# 4个点的时候就拿出来

if len(approx) == 4:

screenCnt = approx

break

# 展示结果

print("STEP 2: 获取轮廓")

cv2.drawContours(image, [screenCnt], -1, (255, 255, 0), 2) # 画出轮廓

def order_points(pts):

# 一共4个坐标点

rect = np.zeros((4, 2), dtype = "float32")

# 按顺序找到对应坐标0123分别是 左上,右上,右下,左下

# 计算左上,右下

s = pts.sum(axis = 1)

rect[0] = pts[np.argmin(s)]

rect[2] = pts[np.argmax(s)]

# 计算右上和左下

diff = np.diff(pts, axis = 1)

rect[1] = pts[np.argmin(diff)]

rect[3] = pts[np.argmax(diff)]

return rect

# 计算变换矩阵

def four_point_transform(image, pts):

# 获取输入坐标点

rect = order_points(pts)

(tl, tr, br, bl) = rect

# 计算输入的w和h值

# 即坐标点的距离

widthA = np.sqrt(((br[0] - bl[0]) ** 2) + ((br[1] - bl[1]) ** 2))

widthB = np.sqrt(((tr[0] - tl[0]) ** 2) + ((tr[1] - tl[1]) ** 2))

maxWidth = max(int(widthA), int(widthB)) # 取较大值

heightA = np.sqrt(((tr[0] - br[0]) ** 2) + ((tr[1] - br[1]) ** 2))

heightB = np.sqrt(((tl[0] - bl[0]) ** 2) + ((tl[1] - bl[1]) ** 2))

maxHeight = max(int(heightA), int(heightB)) # 取较大值

# 变换后对应坐标位置

dst = np.array([

[0, 0], # bl

[maxWidth - 1, 0], # br

[maxWidth - 1, maxHeight - 1], # tr

[0, maxHeight - 1]], dtype = "float32") # tl

# 计算变换矩阵

M = cv2.getPerspectiveTransform(rect, dst)

warped = cv2.warpPerspective(image, M, (maxWidth, maxHeight))

# 返回变换后结果

return warped

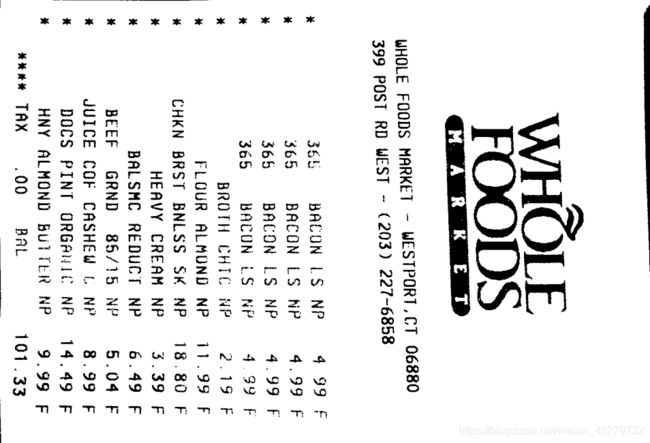

# 透视变换

warped = four_point_transform(orig, screenCnt.reshape(4, 2) * ratio)

5.二值化处理,获得最终图像

# 二值处理

warped = cv2.cvtColor(warped, cv2.COLOR_BGR2GRAY)

ref = cv2.threshold(warped, 100, 255, cv2.THRESH_BINARY)[1]

cv2.imwrite('scan.jpg', ref)

# 展示结果

print("STEP 3: 变换")

cv2.imshow("Original", resize(orig, height = 650))

cv2.imshow("Scanned", resize(ref, height = 650))

cv2.waitKey(0)

这部分下星期完成

学习心得

- 了解边缘检测、轮廓检测、二值化等图像处理方式

- 了解透视变换的原理与矩阵计算

- 了解图像处理的具体应用:OCR文本识别

下周计划

下周会导入开源的OCR库,进行后续的文本识别输出文本内容。