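- 04 - Overview of cache coherency across multiple cores, clusters, and systems

代码改变世界ctw

ARM-TEE-Android, cache, DSU, arm, MMU, arm development, armv9

Quick links: ARMv8/ARMv9 Architecture from Beginner to Expert [table of contents]; paid column and paid courses [purchase notes]; contact details and discussion group; guide to the personal blog notes (full index). Keywords: cache, CCI, CMN, CCI-550, CCI-500, DSU, SCU, L1, L2, L3, system cache, Non-cacheable, Cacheable, non-shareable, inner-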

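- [Artificial Intelligence] Last-minute cramming for tomorrow's AI exam: collected questions and answers

奋力向前123

artificial intelligence

The author sits an exam on AI fundamentals tomorrow and spent today cramming with practice questions found online, hoping the exam goes smoothly. Collected questions and answers. Short answer: explain what "overfitting" is and give one way to prevent it. Overfitting: the model performs very well on the training data but poorly on unseen test data, that is, it has learned noise or incidental features of the training data. Prevention: one common method is regularization (such as L1 and L2 regularization). Multiple choice: in the definition of artificial intelligence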

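- Machine learning interview and written-test notes: linear regression, logistic regression, and support vector machines (SVM)

qq742234984

machine learning, linear regression, logistic regression

Machine learning interview and written-test notes: linear regression, logistic regression, and support vector machines (SVM). WeChat official account: 数学建模与人工智能. I. Linear regression: 1. The hypothesis function of linear regression 2. The loss function of linear regression, and how the two differ 3. Ridge regression and Lasso regression in brief, with their use cases 4. When to use L1 and L2 regularization 5. What ElasticNet regression is 6. Use cases for ElasticNet regression 7. Linear regression requires the dependent variable to follow a normal distribution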

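- OpenCV: Canny edge detection

&海哥

OpenCV, opencv, computer vision, artificial intelligence

The Canny edge detection function. Description: applies an edge detection operator to the input image (using the configured minimum and maximum thresholds) and shows the detected edges in the output image. Parameters: image: the input image; edges: the output (edge) image; threshold1: the first (minimum) threshold for edge detection; threshold2: the second (maximum) threshold for edge detection; apertureSize: the size of the Sobel operator (3x3 by default); parameter L2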

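- The 5G NR protocol stack

脚本之家

5G

In mobile communication systems such as 5G NR and LTE, L1, L2, and L3 are the layer names of the protocol stack, each corresponding to a different functional level. Their definitions and their realization in 5G NR are as follows. 1. Layer 1 (L1): the physical layer (PHY). Function: transmits and receives physical signals and interacts directly with the radio channel. Modulation and demodulation (QPSK, 16QAM, 256QAM, and so on). Channel coding (LDPC, Polar codes) and decoding. MIMO beamforming and antenna array processing. Resource mapping (allocation of time, frequency, and spatial resources). Synchronization, power control,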

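- Extended use of independent keys on the 8051 microcontroller

杜子不疼.

8051 microcontroller, embedded hardware, microcontroller

Note: keys S7 and S6 are selection keys that determine which group of LED indicators the control keys operate. Keys S5 and S4 are control keys: pressing one lights its assigned LED, and releasing it turns the LED off. Pressing S7 lights indicator L1; while L1 is lit, S6 does not respond, S5 controls L3, and S4 controls L4; pressing S7 again turns L1 off, and S6 responds again. Pressing S6 lights indicator L2; while L2 is lit, S7 does not respond, S5 controls L5, and S4 controls L6; pressing S6 again turns L2 off, and S7 responds again. When neither S7 nor S6 has been pressed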

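- Adding two numbers stored as linked lists in Python

一叶知秋的BLOG

linked list, algorithms, python, leetcode

Add Two Numbers. You are given two non-empty linked lists representing two non-negative integers. The digits are stored in reverse order, and each node holds a single digit. Add the two numbers and return the sum as a linked list in the same form. You may assume that neither number has a leading zero, except the number 0 itself. Input: l1 = [2,4,3], l2 = [5,6,4] Output: [7,0,8] Explanation: 342 + 465 = 807. Example 2: Input: l1 = [0], l2 = [0] Output: [0] Example 3: Input: l1 = [9,9,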
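The excerpt above states the problem but is cut off before any solution, so the following is only a minimal sketch of one common approach (digit-by-digit addition with a carry), not the article's own code. The `ListNode` class and the helpers `from_digits` and `to_digits` are hypothetical names introduced here for illustration.

```python
from typing import List, Optional


class ListNode:
    """Singly linked list node holding one decimal digit (hypothetical helper)."""

    def __init__(self, val: int = 0, next: "Optional[ListNode]" = None):
        self.val = val
        self.next = next


def from_digits(digits: List[int]) -> Optional[ListNode]:
    """Build a linked list from digits given least-significant first."""
    head = None
    for d in reversed(digits):
        head = ListNode(d, head)
    return head


def to_digits(node: Optional[ListNode]) -> List[int]:
    """Read a linked list back into a Python list of digits."""
    out = []
    while node:
        out.append(node.val)
        node = node.next
    return out


def add_two_numbers(l1: Optional[ListNode], l2: Optional[ListNode]) -> Optional[ListNode]:
    """Add two reverse-order digit lists, propagating the carry."""
    dummy = tail = ListNode()
    carry = 0
    # Keep going while either list has digits left or a carry is pending.
    while l1 or l2 or carry:
        total = carry + (l1.val if l1 else 0) + (l2.val if l2 else 0)
        carry, digit = divmod(total, 10)
        tail.next = ListNode(digit)
        tail = tail.next
        l1 = l1.next if l1 else None
        l2 = l2.next if l2 else None
    return dummy.next
```

Calling `to_digits(add_two_numbers(from_digits([2, 4, 3]), from_digits([5, 6, 4])))` reproduces the first example from the excerpt. The dummy head node avoids special-casing the first digit of the result.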

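- [Deep Learning] L1 loss, L2 loss, L1 regularization, L2 regularization

小小小小祥

deep learning, artificial intelligence, algorithms, machine learning

Contents: 1. L1 loss 2. L2 loss 3. L1 regularization 4. L2 regularization 5. Summary 5.1 Why L1 regularization produces sparse solutions; L2 regularization shrinks the weights. L1 loss, L2 loss, L1 regularization, and L2 regularization are commonly used loss functions and regularization techniques in machine learning, and they play a key role during optimization. They determine, respectively, how model error is computed and how the model's complexity is controlled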
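The distinction drawn above (losses measure prediction error, regularizers penalize weights) can be sketched in a few lines of plain Python. This is an illustrative sketch only: the excerpt is truncated before any formulas, so the averaging convention (mean rather than sum) and the function names below are assumptions, not the article's definitions.

```python
from typing import Sequence


def l1_loss(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean absolute error: average of |y - y_hat| (assumed convention)."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def l2_loss(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Mean squared error: average of (y - y_hat)^2 (assumed convention)."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def l1_penalty(weights: Sequence[float], lam: float) -> float:
    """L1 regularization term: lam * sum(|w|); tends to push weights to exactly zero."""
    return lam * sum(abs(w) for w in weights)


def l2_penalty(weights: Sequence[float], lam: float) -> float:
    """L2 regularization term: lam * sum(w^2); shrinks weights without zeroing them."""
    return lam * sum(w * w for w in weights)
```

A regularized objective is then a loss plus a penalty, for example `l2_loss(y, y_hat) + l1_penalty(w, lam)`. The squared terms make the L2 variants more sensitive to large errors and large weights than their L1 counterparts.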

- 产品经理的人工智能课 02 - 自然语言处理

平头某

人工智能产品经理自然语言处理

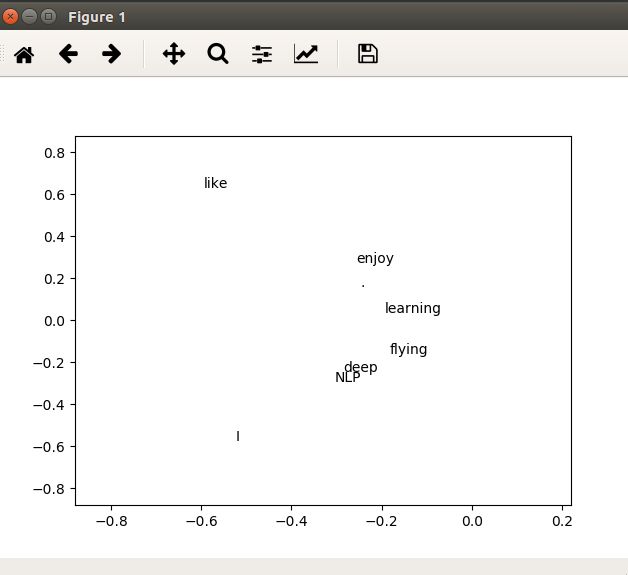

产品经理的人工智能课02-自然语言处理1自然语言处理是什么2一个NLP算法的例子——n-gram模型3预处理与重要概念3.1分词Token3.2词向量化表示与Word2Vec4与大语言模型的交互过程参考链接大语言模型(LargeLanguageModels,LLMs)是自然语言处理(NLP)领域的一个重要分支和核心技术,两者关系密切。所以我们先了解一些自然语言处理的基础概念,为后续了解大语言模型做

- TfidfVectorizer and word2vec

SpiritYzw

sklearn, python, machine learning

I. A simple TfidfVectorizer example, which can compute frequency-style features for sub-variables: from sklearn.feature_extraction.text import TfidfVectorizer text_list = ['aaa,bbb,ccc,aaa', 'bbb,aaa,aaa,ccc'] vectorizer = TfidfVectorizer(stop_words=[',', ':', '', '.', '-'], m

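- BGP versus Layer 2 networking in Kubernetes: which matters more?

硅基创想家

Kubernetes in practice, backend

If you have ever built a Kubernetes cluster, you know that network configuration is an area that is easy to get lost in. Between load balancers, service advertisement, and IP management, there are many moving parts to handle at once. For many setups, Layer 2 (L2) networking is entirely sufficient. But the Border Gateway Protocol (BGP), the technology that keeps the internet running, is increasingly showing up in discussions about Kubernetes. So why are people so excited about using BGP rather than Layer 2 networking in Kubernetes? Let's break it down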

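- Switching between a temperature screen and a clock screen on the Lanqiao Cup microcontroller board

安知甜与乐

microcontroller, Lanqiao Cup, career and development

1 Basic functions: 1) Read ambient temperature from the DS18B20 temperature sensor, keeping two decimal places. 2) Read hours, minutes, and seconds from the DS1302 clock chip. 3) Show the time and temperature on the seven-segment display; a key switches back and forth between the two screens. Initial state: 1) Buzzer and relay off. 2) The display shows the time screen. 3) The real-time clock is initialized to 00:00:00. Screen states: 1) On the time screen, indicator L2 is lit and all other indicators are off. 2) On the temperature screen, indicator L3 is lit and all other indicators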

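- Machine learning notes: regularization

好评笔记

archive re-upload, machine learning, artificial intelligence, paper reading, AIGC, computer vision, deep learning, interviews

Hello everyone, this is 好评笔记 (official account: Goodnote); column articles are free for a limited time on request by private message. These notes cover common regularization methods in machine learning. Contents: Regularization. L1 regularization (Lasso): principle, use cases, pros and cons. L2 regularization (Ridge): principle, use cases, pros and cons. ElasticNet regularization: definition, formula, advantages, disadvantages, use cases. Dropout: principle, use cases, pros and cons. Early stopping: principle, use cases, pros and cons. Batch Normalization (BN): principle, use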

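- Python shallow copy and deep copy

MIPS71

Python

Working through section 8.3 of Fluent Python, "Copies are shallow by default", hands-on. The site the book mentions, http://pythontutor.com, is a tool for visualizing program execution. CSDN does not support pasting images, so all the pictures are gone... I. Shallow copy. At http://pythontutor.com/visualize.html#mode=edit enter: import copy l1 = [3, [66, 55, 44], (7, 8, 9)] l2 = list(l1)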
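The excerpt stops right after building the shallow copy, so here is a short sketch of what that copy does and does not share with the original. The lists `l1` and `l2` come from the excerpt; `l3`, the `copy.deepcopy` call, and the two mutations are additions for illustration.

```python
import copy

l1 = [3, [66, 55, 44], (7, 8, 9)]
l2 = list(l1)            # shallow copy: new outer list, same inner objects
l3 = copy.deepcopy(l1)   # deep copy: inner objects are duplicated too

l1.append(100)           # changes only l1's outer list
l1[1].remove(55)         # mutates the inner list that l1 and l2 share

print(l1)  # [3, [66, 44], (7, 8, 9), 100]
print(l2)  # [3, [66, 44], (7, 8, 9)]
print(l3)  # [3, [66, 55, 44], (7, 8, 9)]
```

The shallow copy `l2` sees the removal of 55 because `l2[1]` and `l1[1]` are the same list object, while the deep copy `l3` is unaffected by both changes.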

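- [Natural Language Processing (NLP)] Word2Vec: principles and model architectures (Skip-Gram, CBOW)

道友老李

natural language processing (NLP), natural language processing, word2vec

Contents: Introduction. About Word2Vec: core concepts, advantages, disadvantages, applications, implementation tools, summary. Mathematical derivation of Word2Vec: 1. Derivation of the CBOW model: (1) input representation (2) word-vector matrix (3) output layer (4) loss function (5) parameter update 2. Derivation of the Skip-Gram model: (1) input representation (2) word-vector matrix (3) output layer (4) loss function (5) parameter update 3. Optimization techniques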

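- hot100_21. Merge two sorted lists

TTXS123456789ABC

BS_algorithms, linked list, data structures

Merge two ascending linked lists into one new ascending linked list and return it. The new list is formed by splicing together all the nodes of the two given lists. Example 1: Input: l1 = [1,2,4], l2 = [1,3,4] Output: [1,1,2,3,4,4] Example 2: Input: l1 = [], l2 = [] Output: [] Example 3: Input: l1 = [], l2 = [0] Output: [0] Iterative approach: we can implement the algorithm iteratively. While l1 and l2 are both non-empty, compare the head nodes of l1 and l2 to see which value is smaller, and take the one with the smaller value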
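The iterative idea described above (repeatedly splice in whichever head node is smaller) might look like the sketch below. The excerpt is truncated before its own code, so this is an assumed rendering of the approach; `ListNode`, `from_list`, and `to_list` are hypothetical helpers introduced here.

```python
from typing import List, Optional


class ListNode:
    """Singly linked list node (hypothetical helper)."""

    def __init__(self, val: int = 0, next: "Optional[ListNode]" = None):
        self.val = val
        self.next = next


def from_list(values: List[int]) -> Optional[ListNode]:
    """Build a linked list from a Python list; an empty list gives None."""
    head = None
    for v in reversed(values):
        head = ListNode(v, head)
    return head


def to_list(node: Optional[ListNode]) -> List[int]:
    """Collect node values into a Python list."""
    out = []
    while node:
        out.append(node.val)
        node = node.next
    return out


def merge_two_lists(l1: Optional[ListNode], l2: Optional[ListNode]) -> Optional[ListNode]:
    """Merge two ascending lists by reusing their nodes."""
    dummy = tail = ListNode()
    while l1 and l2:
        # Attach the smaller head and advance that list.
        if l1.val <= l2.val:
            tail.next, l1 = l1, l1.next
        else:
            tail.next, l2 = l2, l2.next
        tail = tail.next
    # At most one list still has nodes; append it unchanged.
    tail.next = l1 if l1 else l2
    return dummy.next
```

Using `<=` rather than `<` keeps equal values from `l1` ahead of those from `l2`, which makes the merge stable. The loop runs in time proportional to the total number of nodes and allocates only the dummy head.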

- ZW3D secondary development: outputting mass, volume, and other properties with cvxPartInqShapeMass

CAD二次开发秋实

ZW3D, secondary development, c++

svxPoint P1 = {10,0,0}; svxPoint P2 = {20,0,0}; svxPoint P3 = {20,10,0}; svxPoint P4 = {10,10,0}; int L1; cvxPartLine2pt(&P1, &P2, &L1); int L2; cvxPartLine2pt(&P2, &P3, &L2); int L3; cvxPartLine2pt(&P3, &P4, &L3); int L4; cvxPartLin

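- [AI for beginners series] Core NLP topics (3): Word2Vec

Blankspace空白

artificial intelligence, natural language processing, word2vec

Word2Vec definition: Word2Vec is a technique for turning words into vectors. Built on a neural network model, it represents the semantic relationships between words through distance and direction in a vector space. With Word2Vec, a word goes from a discrete symbol to a dense vector (usually high-dimensional) that captures semantic relationships and similarity between words. History: Word2Vec was proposed by Tomas Mikolov et al. at Google in 2013 and quickly became a word-vector representation (word embeddi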

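- GloVe word embeddings implemented in PyTorch

纠结哥_Shrek

pytorch, artificial intelligence, python

Complete PyTorch code implementing GloVe (Global Vectors for Word Representation), trained on a Chinese corpus and covering co-occurrence matrix construction, model definition, training, and testing. 1. About GloVe: it is based on word co-occurrence information (unlike Word2Vec, which predicts over a sliding window); it suits larger datasets (more stable than Word2Vec); the learned word vectors capture semantic information (such as analogy relations). import torch import torch.nn as nn imp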

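- Natural language processing: word embeddings

纠结哥_Shrek

natural language processing, artificial intelligence

Word embedding is a technique that maps words or phrases into a high-dimensional vector space so that relationships between words can be expressed mathematically. Word embeddings capture semantic information, so similar words have nearby representations in the vector space. Common word embedding methods. Matrix-factorization-based methods: Latent Semantic Analysis (LSA), Latent Dirichlet Allocation (LDA), non-negative matrix factorization (NMF). Neural-network-based methods: Word2Vec (proposed by Google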

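- GPS signal simulation in Matlab

NoABug

matlab, programming languages

GPS signal simulation in Matlab. In recent years GPS has come into wide use across many fields, especially positioning and navigation. To study and understand the characteristics of GPS signals, simulating them has become important work. In this article we show how to simulate GPS signals in Matlab and provide the corresponding source code. First, we need to understand the basic structure of a GPS signal. A GPS signal consists of carrier signals in the L1 and L2 bands, the P code, and the C/A code. The carrier frequency of the L1 band is 1575.4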

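- LeetCode 264. Ugly Number II

SSSCAESAR

leetcode, algorithms, data structures

Given an integer n, find and return the n-th ugly number. An ugly number is a positive integer whose prime factors are limited to 2, 3, and 5. // Use an array to store the 1st through n-th ugly numbers // An ugly number must come from multiplying a smaller ugly number by 2, 3, or 5. // Use a three-way merge: three pointers L2, L3, and L5 walk the sequences of ugly numbers multiplied by 2, 3, and 5. // Given the k-th ugly number, the (k+1)-th must be Min(L1*2, L2*3, L3*5). // 1 is conventionally treated as an ugly number class Solution { public: int nt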
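The three-pointer merge described in the comments above can be sketched as follows. The original C++ is truncated, so this Python version is an assumed rendering of the same idea; the function name `nth_ugly_number` and the index names `i2`, `i3`, `i5` are introduced here for illustration.

```python
def nth_ugly_number(n: int) -> int:
    """Return the n-th ugly number (1-indexed) using a three-way merge."""
    if n < 1:
        raise ValueError("n must be a positive integer")
    ugly = [1]          # 1 is treated as the first ugly number
    i2 = i3 = i5 = 0    # positions whose x2, x3, x5 multiples are next candidates
    while len(ugly) < n:
        nxt = min(ugly[i2] * 2, ugly[i3] * 3, ugly[i5] * 5)
        ugly.append(nxt)
        # Advance every pointer that produced nxt, so duplicates such as
        # 6 = 2*3 = 3*2 are added only once.
        if nxt == ugly[i2] * 2:
            i2 += 1
        if nxt == ugly[i3] * 3:
            i3 += 1
        if nxt == ugly[i5] * 5:
            i5 += 1
    return ugly[n - 1]
```

Using three independent `if` checks rather than `elif` is what prevents repeated values. The function does O(n) work and keeps the first n ugly numbers in memory.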

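- Derivation and implementation of LSTM

YZXnuaa

NLP, Python libraries

I have recently been working through CS224d; this post covers the derivation of LSTM (Long Short-Term Memory) and a simple implementation in Python. LSTM is a recurrent neural network, a variant of the RNN, well suited to processing and predicting events in a time series that are separated by very long gaps and delays. Suppose we try to predict the last word of 'I grew up in France ... (long gap) ... I speak fluent French': the recent context suggests that the next word is probably the name of a language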

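- Huawei Kunpeng ARM processors: the 920 and 916 series

itmanll

servers

Kunpeng processors - Kunpeng community (hikunpeng.com). Product specifications. Kunpeng 920 series models: 7260 (64 cores), 5250 (48 cores), 5220 (32 cores), 3210 (24 cores). 7260: 64 cores, 2.6 GHz clock, 8 memory channels, 180 W TDP. Component specifications. Compute cores: compatible with the Armv8.2 architecture, Huawei-designed cores. Clock: up to 2.6 GHz. Cache: L1: 64 KB instruction cache and data cache; L2: 512 KB private per core; L3: 24 to 64 MB shared (1 MB per core). Memory: 8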

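- leetcode: Add Two Numbers (java)

gentle_ice

leetcode, java, algorithms

You are given two non-empty linked lists representing two non-negative integers. The digits are stored in reverse order, and each node holds a single digit. Add the two numbers and return the sum as a linked list in the same form. You may assume that neither number has a leading zero, except the number 0 itself. Example 1: Input: l1 = [2,4,3], l2 = [5,6,4] Output: [7,0,8] Explanation: 342 + 465 = 807. Example 2: Input: l1 = [0], l2 = [0] Output: [0] Example 3: Input: l1 = [9,9,9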

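- Batch-downloading GOCI-L2 data by variable and date

一休哥※

dataset download, windows, database, dataset, GOCI

Contents: download the dataset; run the batch-download script; modify the code as needed; notes; change the time range; change the variables you need; zip; download results. Batch-downloading the GOCI-II dataset. Tag: GOCI-II dataset download script. Running the batch-download script. Dataset site: https://kosc.kiost.ac.kr/gociSearch/list.nm?menuCd=11&lang=ko&url=gociSearch&dirString=/COMS/GOCI/L2/ I needed to download the dataset in bulk,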

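- Add Two Numbers [LeetCode: medium]

牛哄哄的柯南

classic coding-interview cases, leetcode, linked list, algorithms

title: Add Two Numbers [LeetCode: medium] tags: LeetCode. Problem: you are given two non-empty linked lists representing two non-negative integers. The digits are stored in reverse order, and each node holds a single digit. Add the two numbers and return the sum as a linked list in the same form. You may assume that neither number has a leading zero, except the number 0 itself. Example 1: Input: l1 = [2,4,3], l2 = [5,6,4] Output: [7,0,8] Explanation: 342 + 465 = 807. Example 2: Input: l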

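- Natural language processing in Python series (2): Word2Vec (negative sampling)

会飞的Anthony

natural language processing, artificial intelligence, information systems, word2vec

In this second article of the series we continue with the Word2Vec model, this time focusing on negative sampling. Negative sampling is a technique for making Skip-gram training more efficient; it significantly reduces computational cost on large corpora. Through detailed code and theory, we will help you understand how negative sampling works and how it is applied in Word2Vec. 1. Word2Vec (negative sampling): principles 1.1 Background of negative sampling. In Word2Vec's Skip-g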

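- How many L2 sub-processes does the ITR process contain? Process planning overview (part 1)

weixin_39743722

Tenth topical session of the “智联·知产·至赢” process discussion group: process planning overview (part 1). The outline consists of the four parts listed here: a) the basic meaning of process planning b) the key points of process planning c) applying the results of process planning d) common problems in process planning. Tonight's session focuses on the first and second parts, especially the second. I. Preface. Before getting into the details, I would like everyone to picture a scenario: how many companies build products, whether software, hardware, or a combination of the two. We know that in many startups,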

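- Huawei's L1-L6 business processes, refined down to executable detail

智慧化智能化数字化方案

Huawei

This document covers the detailed refinement of Huawei's business processes and related topics, including the process framework, modeling methods, process module descriptions, and flowchart modeling, with the aim of helping companies build an effective process system and achieve their strategic goals. Details follow. Detailed refinement of Huawei's business processes. Process levels: Huawei's business processes are divided into six levels, L1 to L6. L1 is the process category, L2 the process group, L3 the process, L4 the sub-process, L5 the activity, and L6 the task. Each level refines the one above it, moving from the strategic level down to concrete execution, which keeps the processes complete and actionable. Process frame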

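- Installing MAC OS X 10.10 Yosemite on VMware Workstation 11 or VMware Player 7

iwindyforest

vmware, mac os, 10.10, workstation, player

I recently tried installing Mac OS under VMware.

During the installation I found that the reference articles available online all covered VMware Workstation 10 or earlier and Mac OS X 10.9 or earlier.

They could only give a rough outline, and because of the version differences the actual installation involved quite a few detours. So I have tried to write up the summary below, in the hope that it helps anyone interested in installing OS X.

A few words up front:

After the installation was done I actually found that, because my th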

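- Doubts about open-sourcing the source code of "A model-driven B/S online development platform"

deathwknight

JavaScript, java, framework

It has been a full 10 years since I started learning Java development. I have grown from a newbie who set out to teach himself Java and bought a JavaScript book by mistake into an old newbie who knows only Java and JavaScript (personal email:

[email protected])

It has been a bumpy road. Over more than three years of spare time I have cobbled together a small project (a model-driven B/S online development platform; not an MVC framework, not a code generator). I would like to share it with everyone, but I have a few doubts and hope someone can discuss them with me.

Platform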

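- How to convert a Maven project into a web project

Kai_Ge

maven, MyEclipse

Creating a web project: use Eclipse EE to create a Maven web project. 1. Right-click the project, choose Project Facets, and click Convert to faceted form. 2. Change the Version of Dynamic Web Module to 2.5 (3.0 is for Java 7 and is not supported by Tomcat 6). If an error is reported, you may need to set Compiler compl under Java Compiler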

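- Supervisor???

Array_06

work

Reposted from: http://www.blogjava.net/fastzch/archive/2010/11/25/339054.html

This is from a training session I attended with a colleague long ago. My colleague's write-up is very thorough, so I had to repost it!

Some time ago the company organized a three-day management training for mid- and senior-level supervisors and the staff being groomed for those roles. The three days covered a lot of material, and the instructor's content left a deep impression on me, so I took the opportunity of writing up what I learned to put together a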

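- A complete guide to Python built-in functions

2002wmj

python

I have been reading the Python documentation lately and plan to focus on the fundamentals: Python keywords, Built-in Functions, Built-in Constants, Built-in Types, and Built-in Exceptions. While reading, I found that the whole of The Python Standard Library is very good and covers many worthwhile topics. First, the Built-in Fu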

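- Merging table rows in a JSP page with jQuery

357029540

JavaScript, jquery

When writing programs we inevitably run into cases where table rows have to be merged on a page, as shown in the figure.

This may be simple for those who already know how, but it is troublesome for those with no idea where to start, so here is some reference code that does it with jQuery.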

function mergeCell(){

var trs = $("#table tr");


- Java basics

冰天百华

java basics

Learning functional programming

package base;

import java.text.DecimalFormat;

public class Main {

public static void main(String[] args) {

// Integer a = 4;

// Double aa = (double)a / 100000;

// Decimal

- Converting to and from Unix timestamps

adminjun

conversion, unix, timestamp

How do you get the current Unix timestamp in different programming languages? Java: time. JavaScript: Math.round(new Date().getTime()/1000)

getTime() returns a value in milliseconds. Microsoft .NET / C#: epoch = (DateTime.Now.ToUniversalTime().Ticks - 62135
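The excerpt lists per-language ways to read the current Unix timestamp but is cut off before reaching Python. As a hedged illustration in that language, the sketch below uses only the standard library; the function names `now_unix`, `to_unix`, and `from_unix` are hypothetical and not taken from the article.

```python
import time
from datetime import datetime, timezone


def now_unix() -> int:
    """Current Unix timestamp in whole seconds."""
    return int(time.time())


def to_unix(dt: datetime) -> int:
    """Convert a timezone-aware datetime to a Unix timestamp in seconds."""
    return int(dt.timestamp())


def from_unix(ts: int) -> datetime:
    """Convert a Unix timestamp in seconds to an aware UTC datetime."""
    return datetime.fromtimestamp(ts, tz=timezone.utc)
```

For example, `from_unix(to_unix(dt))` returns the same instant for any aware `dt` with zero microseconds. Passing an explicit `tz` avoids the local-timezone ambiguity of naive datetimes. As with JavaScript's `getTime()`, a value in milliseconds has to be divided by 1000 first.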

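- What a competent programmer should do

aijuans

programmer

What a competent programmer should do every day. 1. Review how the day's tasks went. The best way is to keep a work log, recording what you finished and what problems you ran into; looking back on it later pays off in many ways.

2. Think about the main work for tomorrow. List what needs doing tomorrow and order it by priority; the next day, give your most productive hours to the most important work.

3. Think about the mistakes in the day's work and work out how to avoid repeating them. Making mistakes is not a big deal; the most impor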

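- Lessons learned from HTML5 video playback

ayaoxinchao

html5, video

Preface

The project includes video playback, and the early design played video through a Flash player. Now that we need to support Apple devices, video has to be played with HTML5. Out of interest I took a brief look at HTML5 video playback, and it turned out to run far deeper than I expected. What this article records is only surface-level knowledge, I am embarrassed to say.

Video structure

I ought to introduce HTML5's <video> directly, but given that I, as far as video goes,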

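- Fixing javax.net.ssl.SSLHandshakeException: sun.security.validat when httpclient accesses a self-signed HTTPS site

bewithme

httpclient

If you have built a site served over HTTPS whose security certificate was not issued by a legitimate third-party certificate authority, then accessing the site with httpclient reports the following error:

javax.net.ssl.SSLHandshakeException: sun.security.validator.ValidatorException: PKIX path bu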

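- A beginner's guide to using the Jedis connection pool

bijian1013

redis, redis database, jedis

The steps for working with a Jedis connection pool are:

a. Obtain a Jedis instance from the JedisPool;

b. Return the Jedis instance to the JedisPool when you are done with it;

c. If the Jedis instance fails during use, it must also be returned to the JedisPool;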

packag

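- Change and constancy

bingyingao

constancy, change, family, eternity

Change and constancy

Over the weekend I cycled to the neighborhood where I rented a place five years ago. The northwestern noodle shop, the Jiangxi dumpling place, and the hand-pulled noodle shop I used to love were long gone,

and the storefronts had changed hands several times over. These are the things that change.

A phone that was all the rage three years ago looks hopelessly outdated today.

A company that was running just fine three years ago no longer exists today.

Tall buildings rise from the ground one after another,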

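- [Scala 10] Scala core 4: the collections framework: List

bit1129

scala

Spark's RDD is a distributed, immutable data collection, and many of the transformations it offers are borrowed from functions in Scala's collections framework, so it is worth getting to know Scala's collections in detail.

1. Generic collections are covariant. For List, if B is a subclass of A, then List[B] is also a subtype of List[A], so an instance of List[B] can be assigned to a variable of type List[A].

2. Assigning to variables (note the val keyword, a,b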

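- Nested Functions in C

bookjovi

c, closure

Nested functions, also called closures, are a concept from functional languages. I had always assumed C did not support closures; it now seems I was wrong, although the C standard does not support them and GCC does.

Since GCC supports closures, lexical scoping naturally comes with them, and in C a label can also be jumped to freely from within nested functions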

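- Java Collections Framework study notes and summary: WeakHashMap

BrokenDreams

Collections

Before summarizing this class, let's first review Java references. Java has four kinds of reference: strong, soft, weak, and phantom.

Strong reference: the ordinary reference seen in everyday code, such as Object o = new Object(); an object with a strong reference will not be garbage collected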

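- Reading 《研磨设计模式》 - code notes - Interpreter pattern - Interpret

bylijinnan

java, design patterns

Disclaimer: this post is only for my own reference and understanding; for detailed analysis and the source code, please visit the original author's blog at http://chjavach.iteye.com/

package design.pattern;

/*

* The intent of the Interpreter pattern is to compose executable objects according to a set of composition rules you define yourself

*

* The sample code handles XML: 1. reading the value of a single element 2. reading the value of a single attribute

* multi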

- After Effects operations & keyboard shortcuts

cherishLC

After Effects

1. Official keyboard shortcut documentation

Chinese: https://helpx.adobe.com/cn/after-effects/using/keyboard-shortcuts-reference.html

English: https://helpx.adobe.com/after-effects/using/keyboard-shortcuts-reference.html

2. Commonly used shortcuts

- Common Maven commands

crabdave

maven

Common Maven commands

mvn archetype:generate

mvn install

mvn clean

mvn clean compile

mvn clean test

mvn clean install

mvn clean package

mvn test

mvn package

mvn site

mvn dependency:res

- shell bad substitution

daizj

shell, scripting

#!/bin/sh

/data/script/common/run_cmd.exp 192.168.13.168 "impala-shell -islave4 -q 'insert OVERWRITE table imeis.${tableName} select ${selectFields}, ds, fnv_hash(concat(cast(ds as string), im

- Java SE lecture 2 (Primitive Data Type)

dcj3sjt126com

java

Java SE lecture 2:

1. Windows: notepad, editplus, ultraedit, gvim

Linux: vi, vim, gedit

2. Data types in Java fall into two broad categories:

1) Primitive data types (Primitive Data Type)

2) Reference types (object types) (R

- Implementing batch delete in CGridView

dcj3sjt126com

PHP, yii

1. Add the following to the columns of the CGridView:

array(

'selectableRows' => 2,

'footer' => '<button type="button" onclick="GetCheckbox();" style=&

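- The various uses of generics in Java

dyy_gusi

java, generics

Using generics in Java: 1. Ordinary generic usage

When the class is used, the type inside the <> that follows it is the concrete type we choose.

public class MyClass1<T> {// the generic type parameter defined here is T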

private T var;

public T getVar() {

return var;

}

public void setVa

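- Ten years of web development technology

gcq511120594

Web, browser, data mining

A look back at how web development technology evolved over these ten years:

Ajax

In 2003 I was in sixth grade, and internet cafes were just springing up in the corners of my small county town. First-generation online games such as 传奇 and 大话西游 were all the rage. Figuring I would give it a try, I handed the cafe owner two yuan to sign up for an account and play, and then spent the whole next hour registering. An. Account.

The cafe had 512k of bandwidth back then. To register I filled in a pile of details and submitted; the page redirected, and bang: "The information you entered is incorrect, please fill it in again." Then it redirected back to the registration page, and the cycle repeated. I often think now: if only back then a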

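- The difference between openSession() and getCurrentSession()

hetongfei

java, DAO, Hibernate

From http://blog.csdn.net/dy511/article/details/6166134

1. A session created by getCurrentSession is bound to the current thread; one created by openSession is not.

2. A session created by getCurrentSession is closed automatically after the transaction is rolled back or committed; one created by openSession must be closed manually.

Here, getCurrentSession with local transactions (local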

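- Chapter 1: Installing the Nginx+Lua development environment

jinnianshilongnian

nginx, lua, openresty

We start by choosing OpenResty, which consists of the Nginx core plus many third-party modules. Its biggest highlight is the Lua development environment it integrates by default, which lets Nginx be used as a web server. With Nginx's event-driven model and non-blocking I/O, you can build high-performance web applications. OpenResty also provides a large number of components, such as MySQL, Redis, and Memcached, making web application development on Nginx easier and simpler. At JD.com, services such as real-time pricing, flash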

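- Accessing an in-memory database with HSQLDB in In-Process mode

liyonghui160com

A distinctive feature of HSQLDB is that it can create databases in memory; it can of course also save these in-memory databases to files for real persistence.

A quick first look!

Below is a code example that accesses an in-memory database in In-Process mode:

The code below requires the hsqldb.jar package (hsqldb-2.2.8)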

import java.s

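- 5 tips for using Java threads

pda158

java, data structures

What are some lesser-known tips and uses of Java threads? To each their own; I happen to like Java. There is always more to learn, which is one reason I like it. The tools you use in your daily work usually have something about them you never knew, such as a particular method or an interesting usage. Threads, for example. Yes, threads. Or more precisely, the Thread class. When building highly scalable systems we usually face all kinds of concurrent programming problems, but what we cover here may be a little different.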

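- Development resource roundup: programming languages: JavaScript (1)

shoothao

JavaScript

Overview: the resources in this series come from experts in various fields on GitHub. Bookmark whatever interests you.

Package managers

Manage JavaScript libraries and provide quick use and bundling of those libraries.

Bower - package management for the web.

component - package management for the client side, for building better web applications.

spm - a brand-new static file package man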

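- Avoid finalizers

vahoa.ma

java, jvm, C++

Finalizers are usually unpredictable, often dangerous, and generally unnecessary. Using finalizers can lead to erratic behavior, worse performance, and portability problems. Do not treat finalizers as the counterpart of C++ destructors.

My own overall takeaway from this item is as follows:

1) Where a resource is used and must be released afterwards, first use an explicit meth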