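Deep Learning (3) - Neural Networks and the Backpropagation Algorithm

Article studied: https://www.zybuluo.com/hanbingtao/note/476663#an1

A repost of the original article on Jianshu, which still has the images that are broken at the original link: https://www.jianshu.com/p/b8ee6e260d39?from=singlemessage

The backpropagation algorithm is really just an application of the chain rule for derivatives!!!

Truly elegant. And then there is this quote from Bengio: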

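Why activation functions matter:

Without an activation function, each layer's output is a linear function of the previous layer's output, so no matter how many layers the network has, its output is still only a linear combination of its input. That is the situation of the original perceptron.

With an activation function, the neurons gain a nonlinear component, which lets the network approximate a very broad class of nonlinear functions to arbitrary accuracy (given enough hidden units), so neural networks can be applied to many nonlinear models.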
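To make the "still only linear" point concrete, here is a minimal sketch in plain Python. The weights and biases are made-up values and the helper names are hypothetical; the sketch only shows that two stacked layers without an activation function give the same outputs as a single linear layer.

```python
def linear_layer(weights, biases, x):
    """Compute y = W x + b with plain lists and no activation function."""
    return [sum(w * xi for w, xi in zip(row, x)) + b
            for row, b in zip(weights, biases)]


# Hypothetical parameters: a 2 -> 2 layer followed by a 2 -> 1 layer.
W1, b1 = [[1.0, 2.0], [0.5, -1.0]], [0.1, -0.2]
W2, b2 = [[2.0, -1.0]], [0.3]


def two_layers(x):
    """Stack the two layers without any activation in between."""
    return linear_layer(W2, b2, linear_layer(W1, b1, x))


# Collapse both layers into one: W = W2 * W1 and b = W2 * b1 + b2.
W = [[sum(W2[i][k] * W1[k][j] for k in range(2)) for j in range(2)]
     for i in range(1)]
b = [sum(W2[i][k] * b1[k] for k in range(2)) + b2[i] for i in range(1)]


def one_layer(x):
    """A single linear layer that reproduces the two stacked layers."""
    return linear_layer(W, b, x)
```

For any input, `two_layers(x)` and `one_layer(x)` agree up to floating-point rounding, so the extra layer adds no expressive power until a nonlinearity such as sigmoid is placed between the layers.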

The sigmoid function:

sigmoid(x) = 1 / (1 + e^(-x))

Derivative:

Let y = sigmoid(x)

Then y' = y * (1 - y)
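A quick numerical sanity check of this derivative, as a minimal sketch: `numeric_derivative` is a hypothetical helper that uses a central difference, and the step size is an assumed value.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_derivative(x):
    """Analytic derivative written in terms of the output y = sigmoid(x)."""
    y = sigmoid(x)
    return y * (1.0 - y)


def numeric_derivative(f, x, eps=1e-6):
    """Central-difference approximation of f'(x); eps is an assumed step."""
    return (f(x + eps) - f(x - eps)) / (2 * eps)
```

Comparing `sigmoid_derivative(x)` with `numeric_derivative(sigmoid, x)` at a few points should show agreement to many decimal places. The practical benefit of the form y * (1 - y) is that backpropagation can reuse the output already computed in the forward pass instead of evaluating the exponential again.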

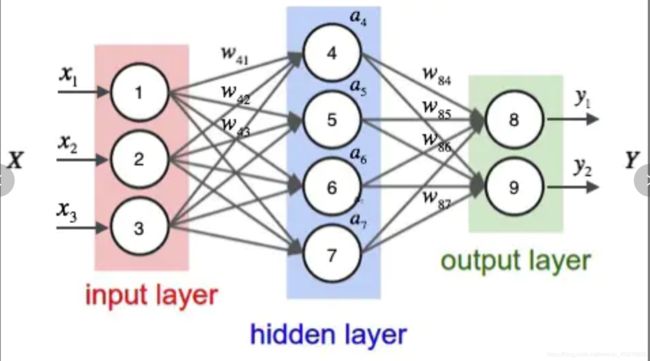

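To compute the network's output for a given input, first assign each element of the input vector to the corresponding neuron of the input layer, then compute the value of every neuron layer by layer in the forward direction until all neurons of the final (output) layer have been computed. Finally, concatenating the values of the output-layer neurons gives the output vector.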
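The forward computation described above can be sketched in a few lines of plain Python. This is an illustration under assumptions, not the node-and-connection implementation used in these notes: the `(weights, biases)` layout, the function name `forward`, and all numeric values are made up.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def forward(layers, inputs):
    """Return the activations of every layer, input layer first.

    layers: list of (weights, biases) pairs, one per non-input layer;
            weights[j][i] connects input i to neuron j (assumed layout).
    """
    activations = [list(inputs)]  # the input layer just copies the input
    for weights, biases in layers:
        previous = activations[-1]
        current = [
            sigmoid(sum(w * a for w, a in zip(row, previous)) + b)
            for row, b in zip(weights, biases)
        ]
        activations.append(current)
    return activations


# Hypothetical 2-3-1 network with made-up weights.
example_layers = [
    ([[0.1, -0.2], [0.4, 0.3], [-0.5, 0.2]], [0.0, 0.1, -0.1]),
    ([[0.3, -0.6, 0.9]], [0.05]),
]
activations = forward(example_layers, [0.9, 0.1])
output_vector = activations[-1]  # values of the output-layer neurons
```

Keeping every layer's activations, rather than only the last one, matters for training: the backward pass needs each neuron's output to compute its error term.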

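Derivation results (best read alongside the code):

The error term δ (delta) of a node is obtained from the δ values of its downstream nodes: take their sum weighted by the connection weights, then scale it by the derivative of the node's own activation.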
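Written out, the results used by the code are as follows. This is a summary in the notation of the code's docstrings (t is the target, y an output-layer output, a a hidden-layer output, η the learning rate); the index convention for the weights is an assumption made here for readability.

```latex
% Error function for one sample (as in network_error in main.py)
E = \frac{1}{2} \sum_{i \in \text{outputs}} (t_i - y_i)^2

% Output-layer node i (Eq. 3 in the docstrings)
\delta_i = y_i (1 - y_i)(t_i - y_i)

% Hidden-layer node i (Eq. 4 in the docstrings)
\delta_i = a_i (1 - a_i) \sum_{k \in \text{downstream}(i)} w_{ki} \, \delta_k

% Weight on the connection from node i to node j, where x_{ji} is the output of node i
w_{ji} \leftarrow w_{ji} + \eta \, \delta_j \, x_{ji}
```

With this definition of δ, the product δ_j x_ji equals the negative partial derivative of E with respect to w_ji, which is why the update adds the term rather than subtracting it.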

node.py

from functools import reduce

import math

def sigmoid(x):

return 1 / (1 + math.e ** -x)

# 节点类,负责记录和维护节点自身信息以及与这个节点相关的上下游连接,实现输出值和误差项的计算。

class Node(object):

def __init__(self, layer_index, node_index):

"""

构造节点对象。

layer_index: 节点所属的层的编号

node_index: 节点的编号

downstream: 到下游节点的连接

upstream: 到上游节点的连接

output: 输出值

delta: 节点误差项

"""

self.layer_index = layer_index

self.node_index = node_index

self.downstream = []

self.upstream = []

self.output = 0

self.delta = 0

def set_output(self, output):

"""

设置节点的输出值。如果节点属于输入层会用到这个函数。

"""

self.output = output

def append_downstream_connection(self, conn):

"""

添加一个到下游节点的连接

"""

self.downstream.append(conn)

def append_upstream_connection(self, conn):

"""

添加一个到上游节点的连接

"""

self.upstream.append(conn)

def calc_output(self):

"""

根据式1计算节点的输出

Y = sigmoid(W * X)

"""

output = reduce(lambda ret, conn: ret + conn.upstream_node.output * conn.weight, self.upstream, 0)

self.output = sigmoid(output)

def calc_hidden_layer_delta(self):

"""

节点属于隐藏层时,根据式4计算delta

delta = a * (1 - a) * ADD(w_k * delta_k)

"""

downstream_delta = reduce(

lambda ret, conn: ret + conn.downstream_node.delta * conn.weight,

self.downstream, 0.0)

self.delta = self.output * (1 - self.output) * downstream_delta

def calc_output_layer_delta(self, label):

"""

节点属于输出层时,根据式3计算delta

delta = y * (1 - y) * (t - y)

"""

self.delta = self.output * (1 - self.output) * (label - self.output)

def __str__(self):

"""

打印节点的信息

"""

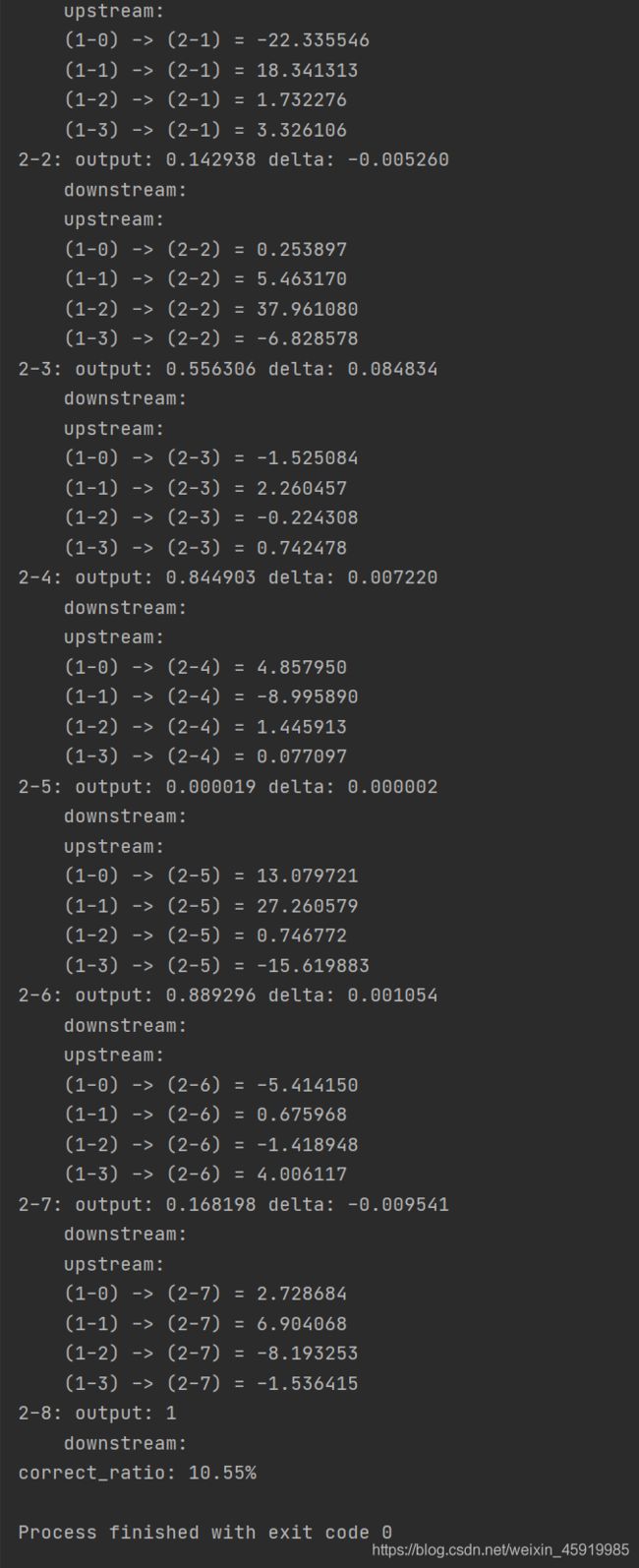

node_str = '%u-%u: output: %f delta: %f' % (self.layer_index, self.node_index, self.output, self.delta)

downstream_str = reduce(lambda ret, conn: ret + '\n\t' + str(conn), self.downstream, '')

upstream_str = reduce(lambda ret, conn: ret + '\n\t' + str(conn), self.upstream, '')

return node_str + '\n\tdownstream:' + downstream_str + '\n\tupstream:' + upstream_str

const_node.py

from functools import reduce


class ConstNode(object):
    # A node whose output is always 1 (needed to compute the bias term).
    def __init__(self, layer_index, node_index):
        """
        Construct a node.
        layer_index: index of the layer the node belongs to
        node_index: index of the node within its layer
        downstream: connections to downstream nodes
        output: output value
        delta: error term of the node
        """
        self.layer_index = layer_index
        self.node_index = node_index
        self.downstream = []
        self.output = 1
        self.delta = 0

    def append_downstream_connection(self, conn):
        """
        Add a connection to a downstream node.
        """
        self.downstream.append(conn)

    def calc_hidden_layer_delta(self):
        """
        For a hidden-layer node, compute delta according to Eq. 4.
        Because output is always 1, the factor output * (1 - output) is 0,
        so delta always stays 0. Nothing upstream depends on it.
        """
        downstream_delta = reduce(
            lambda ret, conn: ret + conn.downstream_node.delta * conn.weight,
            self.downstream, 0.0)
        self.delta = self.output * (1 - self.output) * downstream_delta

    def __str__(self):
        """
        Return a printable description of the node.
        """
        node_str = '%u-%u: output: 1' % (self.layer_index, self.node_index)
        downstream_str = reduce(lambda ret, conn: ret + '\n\t' + str(conn), self.downstream, '')
        return node_str + '\n\tdownstream:' + downstream_str
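The reason a node with constant output 1 can stand in for the bias term can be shown with a small sketch. The function names and numbers below are hypothetical: appending a constant input of 1 and treating the bias as one more weight gives the same weighted sum.

```python
def weighted_sum_with_bias(weights, bias, inputs):
    """Usual formulation: w . x + b with an explicit bias term."""
    return sum(w * x for w, x in zip(weights, inputs)) + bias


def weighted_sum_with_const_node(weights, inputs):
    """Bias folded into the weights: the last input is the constant 1."""
    return sum(w * x for w, x in zip(weights, inputs))


# Made-up values for illustration.
inputs = [0.9, 0.1]
weights = [0.4, -0.7]
bias = 0.25

augmented_inputs = inputs + [1.0]      # output of the constant node
augmented_weights = weights + [bias]   # the bias becomes an ordinary weight

explicit = weighted_sum_with_bias(weights, bias, inputs)
folded = weighted_sum_with_const_node(augmented_weights, augmented_inputs)
```

Here `explicit` and `folded` are equal up to rounding. The design benefit is that the bias is then trained by exactly the same weight-update rule as every other connection, with no special case.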

connection.py

import random


class Connection(object):
    def __init__(self, upstream_node, downstream_node):
        """
        Initialize the connection; the weight starts as a small random number.
        upstream_node: the upstream node of the connection
        downstream_node: the downstream node of the connection
        """
        self.upstream_node = upstream_node
        self.downstream_node = downstream_node
        self.weight = random.uniform(-0.1, 0.1)
        self.gradient = 0.0

    def calc_gradient(self):
        """
        Compute the gradient.
        With delta defined via (t - y), this value is the negative partial
        derivative of the error with respect to the weight.
        """
        self.gradient = self.downstream_node.delta * self.upstream_node.output

    def get_gradient(self):
        """
        Return the current gradient.
        """
        return self.gradient

    def update_weight(self, rate):
        """
        Update the weight by gradient descent.
        Adding rate * gradient moves downhill because gradient already
        carries the minus sign (see calc_gradient).
        """
        self.calc_gradient()
        self.weight += rate * self.gradient

    def __str__(self):
        """
        Return a printable description of the connection.
        """
        return '(%u-%u) -> (%u-%u) = %f' % (
            self.upstream_node.layer_index,
            self.upstream_node.node_index,
            self.downstream_node.layer_index,
            self.downstream_node.node_index,
            self.weight)


class Connections(object):
    def __init__(self):
        self.connections = []

    def add_connection(self, connection):
        self.connections.append(connection)

    def dump(self):
        for conn in self.connections:
            print(conn)
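The sign of the update in `update_weight` is easy to get wrong, so here is a minimal sketch on a single sigmoid neuron with one input. All names and numbers are hypothetical; it only illustrates that `weight += rate * delta * input` lowers the squared error for one sample.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def error(weight, x, target):
    """Squared error 0.5 * (t - y)^2 of a single sigmoid neuron."""
    y = sigmoid(weight * x)
    return 0.5 * (target - y) ** 2


def updated_weight(weight, x, target, rate):
    """One step of the rule used above: w += rate * delta * x."""
    y = sigmoid(weight * x)
    delta = y * (1 - y) * (target - y)  # output-layer error term
    return weight + rate * delta * x


# Made-up starting point: the output is too low for the target.
w0, x, target, rate = 0.05, 0.9, 0.9, 0.3
w1 = updated_weight(w0, x, target, rate)
error_before = error(w0, x, target)
error_after = error(w1, x, target)
```

Because the target is above the current output, delta is positive, the weight increases, and `error_after` ends up smaller than `error_before`.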

layer.py

from node import Node
from const_node import ConstNode


class Layer(object):
    def __init__(self, layer_index, node_count):
        """
        Initialize a layer.
        layer_index: index of the layer
        node_count: number of (non-bias) nodes in the layer
        """
        self.layer_index = layer_index
        self.nodes = []
        # Regular nodes are numbered 0 to node_count - 1.
        for i in range(node_count):
            self.nodes.append(Node(layer_index, i))
        # The bias node comes last, with index node_count.
        self.nodes.append(ConstNode(layer_index, node_count))

    def set_output(self, data):
        """
        Set the outputs of the layer. Used when the layer is the input layer.
        """
        for i in range(len(data)):
            self.nodes[i].set_output(data[i])

    def calc_output(self):
        """
        Compute the layer's output vector (the bias node is skipped).
        """
        for node in self.nodes[:-1]:
            node.calc_output()

    def dump(self):
        """
        Print the layer's information.
        """
        for node in self.nodes:
            print(node)

network.py

from neural_net.connection import Connection, Connections

from neural_net.layer import Layer

class Network(object):

def __init__(self, layers):

"""

初始化一个全连接神经网络

layers: 二维数组,描述神经网络每层节点数

"""

self.connections = Connections()

self.layers = []

# 神经网络层数

layer_count = len(layers)

node_count = 0

for i in range(layer_count):

self.layers.append(Layer(i, layers[i]))

# 全连接神经网络,两两节点相连

for layer in range(layer_count - 1):

connections = [Connection(upstream_node, downstream_node)

# 当前层节点

for upstream_node in self.layers[layer].nodes

# 下一层节点 注意bias节点没有上游节点 故:[:-1]

for downstream_node in self.layers[layer + 1].nodes[:-1]]

for conn in connections:

# 向网络中添加连接

self.connections.add_connection(conn)

# 向上下游节点添加连接

conn.downstream_node.append_upstream_connection(conn)

conn.upstream_node.append_downstream_connection(conn)

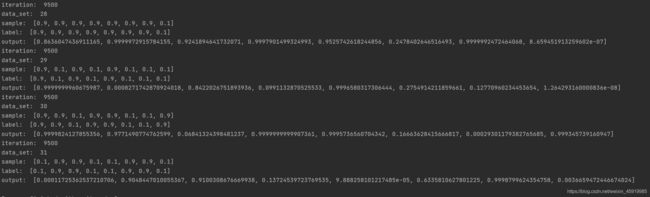

def train(self, labels, data_set, rate, iteration):

"""

训练神经网络

labels: 数组,训练样本标签。每个元素是一个样本的标签。

data_set: 二维数组,训练样本特征。每个元素是一个样本的特征。

rate: 学习速率

iteration: 训练次数

"""

for i in range(iteration):

for d in range(len(data_set)):

# print(labels[d])

flag = 0

# if i % 500 == 0:

# flag = 1

# print("iteration: ", i)

# print("data_set: ", d)

self.train_one_sample(labels[d], data_set[d], rate, flag)

def train_one_sample(self, label, sample, rate, flag):

"""

内部函数,用一个样本训练网络

"""

# 将样本输入网络,更新各层output

output = self.predict(sample)

# 与标签比较,更新各节点误差项

self.calc_delta(label)

# 更新权重

self.update_weight(rate)

if flag == 1:

print("sample: ", sample)

print("label: ", label)

print("output: ", output)

def calc_delta(self, label):

"""

内部函数,计算每个节点的delta

"""

# 输出层

output_nodes = self.layers[-1].nodes

for i in range(len(label)):

output_nodes[i].calc_output_layer_delta(label[i])

# 隐藏层

for layer in self.layers[-2::-1]:

for node in layer.nodes:

node.calc_hidden_layer_delta()

def update_weight(self, rate):

"""

内部函数,更新每个连接权重

"""

for layer in self.layers[:-1]:

for node in layer.nodes:

for conn in node.downstream:

conn.update_weight(rate)

def calc_gradient(self):

"""

内部函数,计算每个连接的梯度

"""

for layer in self.layers[:-1]:

for node in layer.nodes:

for conn in node.downstream:

conn.calc_gradient()

def get_gradient(self, label, sample):

"""

获得网络在一个样本下,每个连接上的梯度

label: 样本标签

sample: 样本输入

"""

self.predict(sample)

self.calc_delta(label)

self.calc_gradient()

def predict(self, sample):

"""

根据输入的样本预测输出值

sample: 数组,样本的特征,也就是网络的输入向量

"""

# 设置输入层,输入层输出向量及为sample

self.layers[0].set_output(sample)

# 逐层求出目标向量

for i in range(1, len(self.layers)):

self.layers[i].calc_output()

return list(map(lambda node: node.output, self.layers[-1].nodes[:-1]))

def dump(self):

"""

打印网络信息

"""

for layer in self.layers:

layer.dump()
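`get_gradient` exists so that the backpropagated gradients can be compared against a numerical estimate. The idea can be sketched on a single sigmoid neuron without the classes above. The function names, the weights and the step size are assumptions; the sign convention (the backpropagated value is the negative derivative of the error) follows the code in these notes.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def predict(weights, inputs):
    return sigmoid(sum(w * x for w, x in zip(weights, inputs)))


def error(weights, inputs, target):
    return 0.5 * (target - predict(weights, inputs)) ** 2


def backprop_gradient(weights, inputs, target):
    """delta * input for each weight, which equals -dE/dw."""
    y = predict(weights, inputs)
    delta = y * (1 - y) * (target - y)
    return [delta * x for x in inputs]


def numeric_gradient(weights, inputs, target, eps=1e-4):
    """Central difference of E, with the same sign convention (-dE/dw)."""
    grads = []
    for i in range(len(weights)):
        plus = list(weights)
        plus[i] += eps
        minus = list(weights)
        minus[i] -= eps
        # E(w - eps) - E(w + eps): negated so it matches backprop_gradient.
        grads.append((error(minus, inputs, target)
                      - error(plus, inputs, target)) / (2 * eps))
    return grads


# Made-up weights and sample.
weights, inputs, target = [0.05, -0.08], [0.9, 0.1], 0.9
analytic = backprop_gradient(weights, inputs, target)
numeric = numeric_gradient(weights, inputs, target)
```

Each perturbation is applied to a copy of the weights, so the original weights stay untouched between checks. The two lists should agree closely; a large gap usually points to a bug in the delta formulas.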

main.py

from network import Network
from functools import reduce
import random


class Normalizer(object):
    # Data normalization: encode an 8-bit number as eight values of 0.1 / 0.9.
    # The masks are written in hexadecimal (0x), one per bit.
    def __init__(self):
        self.mask = [
            0x1, 0x2, 0x4, 0x8, 0x10, 0x20, 0x40, 0x80
        ]

    def norm(self, number):
        return list(map(lambda m: 0.9 if number & m else 0.1, self.mask))

    def denorm(self, vec):
        binary = list(map(lambda _i: 1 if _i > 0.5 else 0, vec))
        for i in range(len(self.mask)):
            binary[i] = binary[i] * self.mask[i]
        return reduce(lambda x, y: x + y, binary)


def gradient_check(network, sample_feature, sample_label):
    """
    Gradient check.
    network: the neural network object
    sample_feature: features of the sample
    sample_label: label of the sample
    """
    # Network error for one sample
    def network_error(vec1, vec2):
        return 0.5 * sum((a - b) ** 2 for a, b in zip(vec1, vec2))

    # Get the gradient of every connection for the current sample.
    # Network.get_gradient takes (label, sample), in that order.
    network.get_gradient(sample_label, sample_feature)
    # Check the gradient of every weight.
    for conn in network.connections.connections:
        # Gradient computed by backpropagation for this connection
        actual_gradient = conn.get_gradient()
        # Add a small value and compute the network error.
        epsilon = 0.0001
        conn.weight += epsilon
        error1 = network_error(network.predict(sample_feature), sample_label)
        # Subtract a small value and compute the network error.
        conn.weight -= 2 * epsilon  # epsilon was added once above, so subtract twice
        error2 = network_error(network.predict(sample_feature), sample_label)
        # Restore the original weight so later checks are not perturbed.
        conn.weight += epsilon
        # Expected gradient according to Eq. 6. The order error2 - error1
        # matches Connection.gradient, which is the negative derivative.
        expected_gradient = (error2 - error1) / (2 * epsilon)
        # Print both values.
        print('expected gradient: \t%f\nactual gradient: \t%f' % (
            expected_gradient, actual_gradient))


def train_data_set():
    normalizer = Normalizer()
    # Samples
    data_set = []
    # Labels
    labels = []
    # 32 random numbers; the network learns to reproduce its input.
    for i in range(0, 256, 8):
        r = int(random.uniform(0, 256))
        # Build a list n of 0.1 / 0.9 values (norm already returns a list).
        n = normalizer.norm(r)
        data_set.append(n)
        labels.append(n)
    return labels, data_set


def train(network, iteration):
    # Prepare the data.
    labels, data_set = train_data_set()
    # Train the model.
    network.train(labels, data_set, 0.3, iteration)


def test(network, data):
    normalizer = Normalizer()
    norm_data = normalizer.norm(data)
    predict_data = network.predict(norm_data)
    print('\ttestdata(%u)\tpredict(%u)' % (
        data, normalizer.denorm(predict_data)))


def correct_ratio(network):
    normalizer = Normalizer()
    correct = 0.0
    for i in range(256):
        if normalizer.denorm(network.predict(normalizer.norm(i))) == i:
            correct += 1.0
    print('correct_ratio: %.2f%%' % (correct / 256 * 100))


def gradient_check_test():
    net = Network([2, 2, 2])
    sample_feature = [0.9, 0.1]
    sample_label = [0.9, 0.1]
    gradient_check(net, sample_feature, sample_label)


if __name__ == '__main__':
    net = Network([8, 3, 8])
    train(net, 500)
    # Print the network's information.
    net.dump()
    # Prediction accuracy
    correct_ratio(net)
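The encoding done by `Normalizer` can be tried in isolation. The sketch below is a standalone re-implementation with hypothetical function names `encode` and `decode`, not the class itself: each of the 8 bits becomes 0.9 if set and 0.1 otherwise, and decoding thresholds at 0.5.

```python
# One mask per bit, lowest bit first (the same values as the 0x1 ... 0x80 list).
MASKS = [1 << bit for bit in range(8)]


def encode(number):
    """Map an integer in [0, 255] to eight values of 0.9 (bit set) or 0.1."""
    return [0.9 if number & mask else 0.1 for mask in MASKS]


def decode(vector):
    """Threshold each value at 0.5 and rebuild the integer from its bits."""
    return sum(mask for value, mask in zip(vector, MASKS) if value > 0.5)


# 5 is 0b00000101, so the first and third positions are 0.9.
encoded_five = encode(5)
round_trip_ok = all(decode(encode(n)) == n for n in range(256))
```

The targets 0.1 and 0.9 are presumably chosen instead of 0 and 1 because a sigmoid output only approaches 0 and 1 without reaching them, so exact 0/1 targets would keep pushing the weights toward large magnitudes.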

Sample output of main.py: