吴恩达机器学习作业(二)_python实现

一,必做部分

import pandas as pd

import numpy as np

import scipy.optimize as opt

import matplotlib.pyplot as plt

from sklearn.metrics import classification_report#这个包是评价报告

def get_X(df):#读取特征

ones = pd.DataFrame({

'ones': np.ones(len(df))})#ones是m行1列的dataframe

data = pd.concat([ones, df], axis=1) # 合并数据,根据列合并

return data.iloc[:, :-1].values

def get_y(df):#读取标签

return np.array(df.iloc[:, -1])#df.iloc[:, -1]是指df的最后一列

def normalize_feature(df):

return df.apply(lambda column: (column - column.mean()) / column.std())#特征缩放

def sigmoid(z):

return 1 / (1 + np.exp(-z))

def cost(theta, X, y):

return np.mean(-y * np.log(sigmoid(X @ theta)) - (1 - y) * np.log(1 - sigmoid(X @ theta)))

def gradient(theta, X, y):

return (1 / len(X)) * X.T @ (sigmoid(X @ theta) - y)

def predict(x, theta):

prob = sigmoid(x @ theta)

return (prob >= 0.5).astype(int)#.astype方法前的数据类型为numpy.ndarray

theta=np.zeros(3)

data = pd.read_csv('ex2data1.txt', names=['exam 1', 'exam 2', 'admitted'])

X = get_X(data)

y = get_y(data)

res = opt.minimize(fun=cost, x0=theta, args=(X, y), method='Newton-CG', jac=gradient)

'''func:优化的目标函数

x0:初值

fprime:提供优化函数func的梯度函数,不然优化函数func必须返回函数值和梯度,或者设置approx_grad=True

args:元组,是传递给优化函数的参数'''

final_theta = res.x#res.x is final theta

y_pred = predict(X, final_theta)

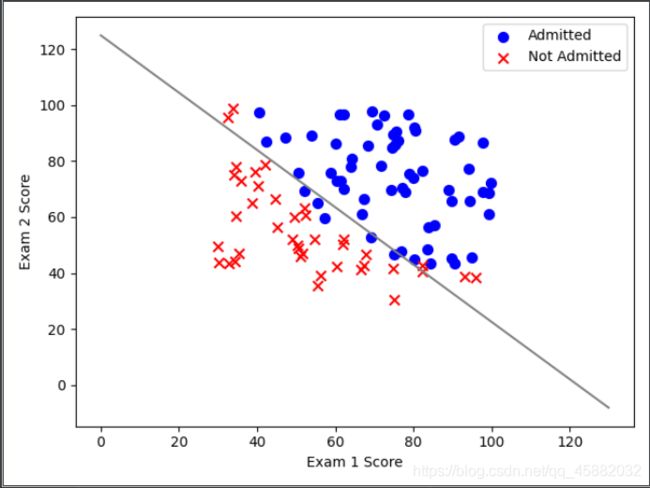

#二维图

positive = data[data['admitted'].isin([1])]

negative = data[data['admitted'].isin([0])]

plt.scatter(positive['exam 1'], positive['exam 2'], s=50, c='b', marker='o', label='Admitted')

plt.scatter(negative['exam 1'], negative['exam 2'], s=50, c='r', marker='x', label='Not Admitted')

plt.legend(loc=0)#增加图例,0,1,2,3,4分别表示不同的位置

plt.xlabel('Exam 1 Score')

plt.ylabel('Exam 2 Score')

coef = -(res.x / res.x[2]) # find the equation

x = np.arange(130, step=0.1)

y2 = coef[0] + coef[1]*x#y = theta@X = sigmod(theta[0]*X[0]+theta[1]*X[1]+theta[2]*X[2])

plt.plot(x, y2, 'grey')

plt.show()

#三维图,注释掉了,看三维效果把下面的注释去掉,49-60加注释

"""x = plt.axes(projection='3d')

ax.scatter(X[:,1], X[:,2], y, alpha=0.3)

D = final_theta[0]

A = final_theta[1]

B = final_theta[2]

Z = A*X[:,1] + B*X[:,2] + D

ax.plot_trisurf(X[:,1], X[:,2], Z,

linewidth=0, antialiased=False,color='r')

ax.set_zlim(-2,2);"""

print(classification_report(y, y_pred))

二,选作部分

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import scipy.optimize as opt

def sigmoid(z):

return 1 / (1 + np.exp(-z))

def cost(theta, X, y, learningRate):

theta = np.mat(theta)

X = np.mat(X)

y = np.mat(y)

first = np.multiply(-y, np.log(sigmoid(X * theta.T)))

second = np.multiply((1 - y), np.log(1 - sigmoid(X * theta.T)))

reg = (learningRate / (2 * len(X))) * np.sum(np.power(theta[:,1:theta.shape[1]], 2))

return np.sum(first - second) / len(X) + reg

def gradientReg(theta, X, y, learningRate):

theta = np.mat(theta)

X = np.mat(X)

y = np.mat(y)

parameters = int(theta.ravel().shape[1])

grad = np.zeros(parameters)

error = sigmoid(X * theta.T) - y

for i in range(parameters):

term = np.multiply(error, X[:, i])

if (i == 0):

grad[i] = np.sum(term) / len(X)

else:

grad[i] = (np.sum(term) / len(X)) + ((learningRate / len(X)) * theta[:, i])

return grad

def predict(theta, X):

probability = sigmoid(X * theta.T)

return [1 if x >= 0.5 else 0 for x in probability]

data2 = pd.read_csv("ex2data2.txt", header=None, names=['Test 1', 'Test 2', 'Accepted'])

df = data2[:]

#原始数据

positive = data2[data2['Accepted'].isin([1])]

negative = data2[data2['Accepted'].isin([0])]

plt.scatter(positive['Test 1'], positive['Test 2'], s=50, c='b', marker='o', label='Accepted')

plt.scatter(negative['Test 1'], negative['Test 2'], s=50, c='r', marker='x', label='Rejected')

plt.legend()

plt.xlabel('Test 1 Score')

plt.ylabel('Test 2 Score')

plt.show()

degree = 5

x1 = data2['Test 1']

x2 = data2['Test 2']

data2.insert(3, 'Ones', 1)#插入在第三列之前

for i in range(1, degree):#i:1~4

for j in range(0, i):#i=4时,j为0到3

data2['F' + str(i) + str(j)] = np.power(x1, i-j) * np.power(x2, j)

'''将F10,F20,F21这些列加在data2的最后,例如:data["ww"]=1 会在data2的列之后在格外加一列名字为ww'''

data2.drop('Test 1', axis=1, inplace=True)#删除方法,将index为Test 1,axis=1说明删除的是列

data2.drop('Test 2', axis=1, inplace=True)#如果手动设定为True(默认为False),那么原数组直接就被替换

#而采用inplace=False之后,原数组名对应的内存值并不改变,需要将新的结果赋给一个新的数组或者覆盖原数组的内存位置

cols = data2.shape[1]

X2 = data2.iloc[:, 1:cols].values#删除accepted列

y2 = data2.iloc[:, 0:1].values#仅保留accepted列

theta2 = np.zeros(11)

learningRate = 1

final_theta = opt.fmin_tnc(func=cost, x0=theta2, fprime=gradientReg, args=(X2, y2, learningRate))

'''或用

res = opt.minimize(fun=cost,

x0=theta2,

args=(X2, y2, learningRate),

method='TNC',

jac=gradientReg)

final_theta = res.x可得到相同的结果

func:优化的目标函数

x0:初值

fprime:提供优化函数func的梯度函数,不然优化函数func必须返回函数值和梯度,或者设置approx_grad=True

args:元组,是传递给优化函数的参数

'''

theta_min = np.mat(final_theta[0])

predictions = predict(theta_min, X2)

correct = [1 if ((a == 1 and b == 1) or (a == 0 and b == 0)) else 0 for (a, b) in zip(predictions, y2)]

accuracy = (sum(map(int, correct)) % len(correct))

print('accuracy = {0}%'.format(accuracy))

原始数据如图

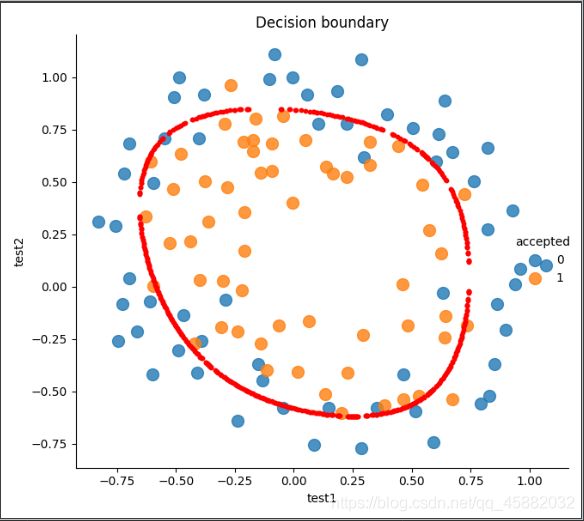

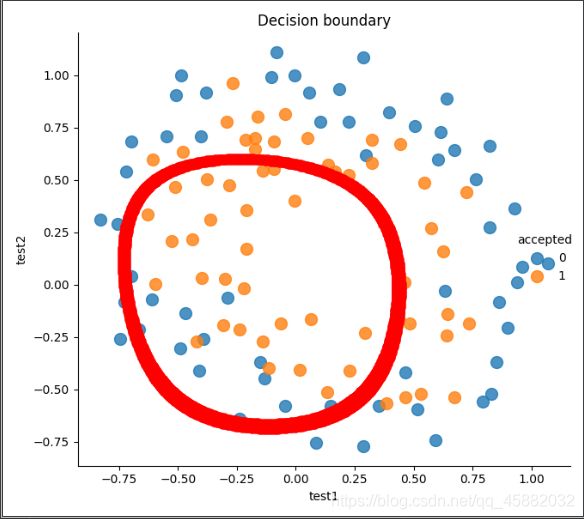

画出决策边界:由于×是个11维的图,我们不能直观的表示出来

但可以找到所有 ×近似等于0的值以此来画出决策边界(是这么个思路,以下复制了国外大牛的代码)

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import scipy.optimize as opt

import seaborn as sns

def cost(theta, X, y):

return np.mean(-y * np.log(sigmoid(X @ theta)) - (1 - y) * np.log(1 - sigmoid(X @ theta)))

def sigmoid(z):

return 1 / (1 + np.exp(-z))

def gradient(theta, X, y):

return (1 / len(X)) * X.T @ (sigmoid(X @ theta) - y)

def feature_mapping(x, y, power, as_ndarray=False):

data = {

"f{}{}".format(i - p, p): np.power(x, i - p) * np.power(y, p)

for i in np.arange(power + 1)

for p in np.arange(i + 1)

}

if as_ndarray:

return pd.DataFrame(data).values

else:

return pd.DataFrame(data)

def regularized_gradient(theta, X, y, l=1):

# '''still, leave theta_0 alone'''

theta_j1_to_n = theta[1:]

regularized_theta = (l / len(X)) * theta_j1_to_n

# by doing this, no offset is on theta_0

regularized_term = np.concatenate([np.array([0]), regularized_theta])

return gradient(theta, X, y) + regularized_term

def regularized_cost(theta, X, y, l=1):

# '''you don't penalize theta_0'''

theta_j1_to_n = theta[1:]

regularized_term = (l / (2 * len(X))) * np.power(theta_j1_to_n, 2).sum()

return cost(theta, X, y) + regularized_term

def feature_mapped_logistic_regression(power, l):

df = pd.read_csv('ex2data2.txt', names=['test1', 'test2', 'accepted'])

x1 = np.array(df.test1)

x2 = np.array(df.test2)

y = np.array(df.iloc[:, -1])

X = feature_mapping(x1, x2, power, as_ndarray=True)

theta = np.zeros(X.shape[1])

res = opt.minimize(fun=regularized_cost,

x0=theta,

args=(X, y, l),

method='TNC',

jac=regularized_gradient)

final_theta = res.x

return final_theta

def find_decision_boundary(density, power, theta, threshhold):

t1 = np.linspace(-1, 1.5, density)

t2 = np.linspace(-1, 1.5, density)

cordinates = [(x, y) for x in t1 for y in t2]

x_cord, y_cord = zip(*cordinates)

mapped_cord = feature_mapping(x_cord, y_cord, power) # this is a dataframe

inner_product = mapped_cord.values @ theta

decision = mapped_cord[np.abs(inner_product) < threshhold]

return decision.f10, decision.f01

def draw_boundary(power, l):

density = 1000

threshhold = 2 * 10**-3

final_theta = feature_mapped_logistic_regression(power, l)

x, y = find_decision_boundary(density, power, final_theta, threshhold)

df = pd.read_csv('ex2data2.txt', names=['test1', 'test2', 'accepted'])

sns.lmplot('test1', 'test2', hue='accepted', data=df, size=6, fit_reg=False, scatter_kws={

"s": 100})

plt.scatter(x, y, color='red', s=10)

plt.title('Decision boundary')

plt.show()

draw_boundary(6, 1)#第二个为lambda的值,lambda=0过拟合,lambda=100欠拟合