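Principle

- Find a separating hyperplane to serve as the decision boundary: identify the points closest to the hyperplane and make their distance to it as large as possible.

- The support vectors are the points closest to the separating hyperplane.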
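The two points above can be illustrated with a small sketch. The hyperplane `w`, `b` and the four points are made-up values, not taken from the data set used later; the code only shows how the distance to a hyperplane is measured and how the nearest points (the support vectors) determine the margin.

```python
import math

def distance_to_hyperplane(w, b, x):
    """Geometric distance from point x to the hyperplane w.x + b = 0."""
    norm_w = math.sqrt(sum(wi * wi for wi in w))
    return abs(sum(wi * xi for wi, xi in zip(w, x)) + b) / norm_w

# Hypothetical hyperplane x0 + x1 - 4 = 0 and four illustrative points
w, b = [1.0, 1.0], -4.0
points = [[1.0, 1.0], [2.0, 1.0], [3.0, 2.0], [4.0, 3.0]]

distances = [distance_to_hyperplane(w, b, p) for p in points]
# The margin is set by the closest points; those points are the support vectors
margin = min(distances)
nearest = [p for p, d in zip(points, distances) if math.isclose(d, margin)]
print(margin)
print(nearest)
```

An SVM searches over `w` and `b` so that this smallest distance is as large as possible.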

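Advantages:

Disadvantages:

- Sensitive to parameter tuning and to the choice of kernel function; without modification, the basic classifier only handles binary classification.

Applicable data types:

Simplified SMO algorithm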

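# Load the data
def loadData(path):
    # Lists for the feature rows and the labels
    dataList = []; labelList = []
    # Open the file; the with-block closes it when done
    with open(path) as fr:
        # Read line by line
        for line in fr.readlines():
            # Split the line on whitespace into a list
            lineList = line.strip().split()
            # Columns 1 and 2 are the training features
            dataList.append([float(lineList[0]), float(lineList[1])])
            # The last column is the label
            labelList.append(float(lineList[-1]))
    return dataList, labelList

dataList, labelList = loadData('../../Reference Code/Ch06/testSet.txt')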

# Choose the second alpha at random
import random

def selectJrand(i, m):
    # A random draw may equal i; to guarantee j != i, start with j = i and redraw in the while loop
    j = i
    while j == i:
        j = int(random.uniform(0, m))
    return j

j = selectJrand(1, 6)

# def selectJrand(m):
#     j = int(random.uniform(0,m))
#     return j
# j = selectJrand(6)

def clipAlpha(aj, H, L):
    if aj > H:
        aj = H
    if L > aj:
        aj = L
    return aj
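`clipAlpha` is used together with the bounds `L` and `H` that keep the updated alpha inside the box `[0, C]`. The following is a minimal sketch with made-up alpha values; `alpha_bounds` and `clip` are hypothetical helper names that do not appear in the notebook.

```python
def alpha_bounds(alpha_i, alpha_j, y_i, y_j, C):
    """Lower and upper limits [L, H] for the new alpha_j."""
    if y_i != y_j:
        return max(0.0, alpha_j - alpha_i), min(C, C + alpha_j - alpha_i)
    return max(0.0, alpha_j + alpha_i - C), min(C, alpha_j + alpha_i)

def clip(value, low, high):
    """Same effect as clipAlpha(value, high, low)."""
    return max(low, min(value, high))

C = 0.6
# Labels differ: the limits depend on alpha_j - alpha_i
L_diff, H_diff = alpha_bounds(0.25, 0.5, 1, -1, C)
# Labels agree: the limits depend on alpha_j + alpha_i
L_same, H_same = alpha_bounds(0.25, 0.5, 1, 1, C)
print(L_diff, H_diff)
print(L_same, H_same)
# An unconstrained update of 0.9 is pulled back to the upper limit
print(clip(0.9, L_diff, H_diff))
```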


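# Simplified SMO
import numpy as np

def smoSimple(dataMatIn, classLabels, C, toler, maxIter):
    '''
    Parameters:
        dataMatIn: input data
        classLabels: labels
        C: penalty parameter
        toler: error tolerance
        maxIter: maximum number of iterations
    Returns:
        b: bias term
        alphas: Lagrange multipliers
    '''
    # Convert the data and labels to ndarrays
    # Equivalent to dataMatrix = mat(dataMatIn); labelMat = mat(classLabels).T
    dataArray = np.array(dataMatIn); labelArray = np.array(classLabels).reshape(-1, 1)
    # Initialize b and get the number of rows and columns of the data
    b = 0; m, n = np.shape(dataArray)
    # Initialize alphas as an all-zero vector
    alphas = np.zeros((m, 1))
    # Initialize the iteration counter
    Iter = 0
    # Loop while the iteration count is below the maximum
    while Iter < maxIter:
        # Number of alpha pairs changed in this pass
        alphaPairsChanged = 0
        for i in range(m):
            # Compute g(xi) and Ei
            fXi = float(np.dot((alphas*labelArray).T, np.dot(dataArray, dataArray[i:i+1,:].T))) + b
            Ei = fXi - float(labelArray[i])
            # Optimize alpha_i only if it violates the KKT conditions by more than toler
            if ((labelArray[i]*Ei < -toler) and (alphas[i] < C)) or ((labelArray[i]*Ei > toler) and (alphas[i] > 0)):
                # Choose j at random, j != i
                j = selectJrand(i, m)
                # Compute g(xj)
                '''
                Note the difference:
                shape(dataArray[1,:])      # (2,)
                shape(dataArray[1,:].T)    # (2,)
                shape(dataArray[1:2,:].T)  # (2, 1)
                '''
                fXj = float(np.dot((alphas*labelArray).T, np.dot(dataArray, dataArray[j:j+1,:].T))) + b
                # Compute Ej
                Ej = fXj - float(labelArray[j])
                # Save the old alpha_i and alpha_j
                alphaIold = alphas[i].copy()
                alphaJold = alphas[j].copy()
                # If yi != yj
                if (labelArray[i] != labelArray[j]):
                    L = max(0, alphas[j] - alphas[i])
                    H = min(C, C + alphas[j] - alphas[i])
                # If yi == yj
                else:
                    L = max(0, alphas[j] + alphas[i] - C)
                    H = min(C, alphas[j] + alphas[i])
                if L == H: print('L==H'); continue
                # Compute eta, see Li Hang, p. 127
                eta = 2.0*np.dot(dataArray[i], dataArray[j]) \
                    - np.dot(dataArray[i], dataArray[i]) \
                    - np.dot(dataArray[j], dataArray[j])
                if eta >= 0: print('eta>=0'); continue
                # Update alpha_j
                alphas[j] -= labelArray[j]*(Ei - Ej)/eta
                # If alpha_j > H take H; if alpha_j < L take L
                alphas[j] = clipAlpha(alphas[j], H, L)
                # Skip the pair if alpha_j barely moved
                if abs(alphas[j] - alphaJold) < 0.00001: print('j not moving enough'); continue
                # Update alpha_i by the same amount in the opposite direction
                alphas[i] += labelArray[j]*labelArray[i]*(alphaJold - alphas[j])
                # Two candidate values for b
                b1 = b - Ei - labelArray[i]*(alphas[i]-alphaIold)*np.dot(dataArray[i], dataArray[i]) - labelArray[j]*(alphas[j]-alphaJold)*np.dot(dataArray[i], dataArray[j])
                b2 = b - Ej - labelArray[i]*(alphas[i]-alphaIold)*np.dot(dataArray[i], dataArray[j]) - labelArray[j]*(alphas[j]-alphaJold)*np.dot(dataArray[j], dataArray[j])
                if (0 < alphas[i]) and (C > alphas[i]): b = b1
                elif (0 < alphas[j]) and (C > alphas[j]): b = b2
                else: b = (b1+b2)/2.0
                # One more alpha pair changed
                alphaPairsChanged += 1
                print("iter: {} i: {}, pairs changed: {}".format(Iter, i, alphaPairsChanged))
        # Count the pass only if no alpha changed; otherwise start counting again
        if (alphaPairsChanged == 0): Iter += 1
        else: Iter = 0
        print("iteration number: {}".format(Iter))
    return b, alphas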
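The `if` test at the top of the inner loop decides whether a sample is worth optimizing. Because `y_i * E_i = y_i * f(x_i) - 1`, the test can be read as a check on the margin. A minimal sketch with made-up values follows; `violates_kkt` is a hypothetical helper name, not a function from the notebook.

```python
def violates_kkt(y_i, E_i, alpha_i, C, tol):
    """True if alpha_i violates the KKT conditions by more than tol."""
    r = y_i * E_i  # equals y_i * f(x_i) - 1
    return (r < -tol and alpha_i < C) or (r > tol and alpha_i > 0)

C, tol = 0.6, 0.001
# Inside the margin while alpha can still grow: violation
print(violates_kkt(1, -0.5, 0.0, C, tol))
# Beyond the margin with alpha already 0: nothing to do
print(violates_kkt(1, 0.5, 0.0, C, tol))
# Beyond the margin but alpha > 0: alpha should shrink, violation
print(violates_kkt(-1, -0.5, 0.3, C, tol))
# Within the tolerance band: treated as satisfied
print(violates_kkt(1, 0.0005, 0.3, C, tol))
```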

dataList, labelList = loadData('../../Reference Code/Ch06/testSet.txt')
b, alphas = smoSimple(dataList, labelList, 0.6, 0.001, 40)
print('b= {}'.format(b))
print('alphas > 0 (support vectors):\n{}'.format(alphas[alphas>0]))

iter: 0 i: 0, pairs changed: 1
iter: 0 i: 2, pairs changed: 2
L==H
j not moving enough
iter: 0 i: 6, pairs changed: 3
j not moving enough
j not moving enough
j not moving enough
iter: 0 i: 22, pairs changed: 4
j not moving enough
L==H
j not moving enough
L==H
j not moving enough
j not moving enough
L==H
L==H
iter: 0 i: 54, pairs changed: 5
iter: 0 i: 55, pairs changed: 6
j not moving enough
L==H
j not moving enough
L==H
L==H
iteration number: 0
j not moving enough
j not moving enough
L==H
L==H
L==H
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
L==H
L==H
L==H
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
L==H
iter: 0 i: 97, pairs changed: 1
iteration number: 0
j not moving enough
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iter: 0 i: 54, pairs changed: 1
j not moving enough
j not moving enough
iter: 0 i: 97, pairs changed: 2
iteration number: 0
j not moving enough
iter: 0 i: 13, pairs changed: 1
iteration number: 25
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 26
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 27
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 28
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 29
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 30
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 31
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 32
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 33
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 34
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 35
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 36
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 37
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 38
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 39
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 40
b= [-4.48392829]
alphas > 0 (support vectors):
[0.02485639 0.33967041 0.26285541 0.1016714 ]

# Print the support vectors
for i in range(len(dataList)):
    if alphas[i] > 0.0:
        print(dataList[i], labelList[i])

[4.658191, 3.507396] -1

[2.893743, -1.643468] -1

[5.286862, -2.358286] 1

[6.080573, 0.418886] 1
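The numbers printed above are enough to rebuild the linear decision function by hand. The sketch below copies `b`, the four non-zero alphas and the four support vectors from the output, and assumes they pair up in the order printed (both are listed by sample index). It computes `w = sum(alpha_i * y_i * x_i)` and evaluates `w.x + b` at each support vector.

```python
# Values copied from the output above (pairing by print order is an assumption)
support_vectors = [[4.658191, 3.507396], [2.893743, -1.643468],
                   [5.286862, -2.358286], [6.080573, 0.418886]]
labels = [-1, -1, 1, 1]
alphas = [0.02485639, 0.33967041, 0.26285541, 0.1016714]
b = -4.48392829

# Only samples with alpha > 0 contribute to w
w = [sum(a * y * x[k] for a, y, x in zip(alphas, labels, support_vectors))
     for k in range(2)]

def decision(x):
    """Value of w.x + b; its sign is the predicted class."""
    return sum(wk * xk for wk, xk in zip(w, x)) + b

# The constraint sum(alpha_i * y_i) = 0 should hold up to rounding
print(sum(a * y for a, y in zip(alphas, labels)))
for x, y in zip(support_vectors, labels):
    print(x, y, round(decision(x), 3))
```

The sign of each decision value agrees with its label. The magnitudes are not all exactly 1, which is plausible for a run of the simplified algorithm that stops after a fixed number of quiet passes.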

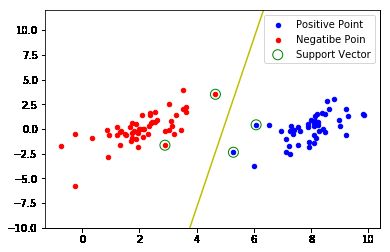

import matplotlib.pyplot as plt
import numpy as np

def dataToShow(dataList, labelList, b, alphas):
    # Arrays are easier to work with
    dataArray = np.array(dataList)
    alphasArray = np.array(alphas.tolist())
    # Reshape into one column; -1 means the number of rows is inferred
    labelArray = np.array(labelList).reshape(-1, 1)
    # Plot the positive and negative classes separately; np.squeeze makes the mask 1-D
    posData = dataArray[np.squeeze(labelArray > 0.0)]
    negData = dataArray[np.squeeze(labelArray < 0.0)]
    svData = dataArray[np.squeeze(alphasArray > 0.0)]
    plt.figure()
    plt.scatter(posData[:,0], posData[:,1], c='b', s=20)
    plt.scatter(negData[:,0], negData[:,1], c='r', s=20)
    # Hollow green circles mark the support vectors
    plt.scatter(svData[:,0], svData[:,1], marker='o', facecolors='none', s=100, edgecolors='g')
    plt.legend(['Positive Point', 'Negative Point', 'Support Vector'])
    # Draw the separating hyperplane
    w = np.dot((alphasArray * labelArray).T, dataArray)
    x0 = np.array([2, 8])
    # Separating line: w0*x0 + w1*x1 + b = 0
    x1 = -(w[0, 0] * x0 + np.squeeze(np.array(b))) / w[0, 1]
    plt.plot(x0, x1, color='y')
    plt.ylim((-10, 12))
    plt.show()

dataToShow(dataList, labelList, b, alphas)

Full SMO algorithm

class optStructK:
    def __init__(self, dataMatIn, classLabels, C, toler):
        self.X = dataMatIn
        self.labelMat = classLabels
        self.C = C
        self.tol = toler
        self.m = np.shape(dataMatIn)[0]
        self.alphas = np.zeros((self.m, 1))
        self.b = 0
        self.eCache = np.zeros((self.m, 2))  # error cache

# Compute Ek
def calcEk(oS, k):
    # .item() turns the 1x1 result into a plain scalar
    fXk = np.dot((oS.alphas * oS.labelMat).T, np.dot(oS.X, oS.X[k:k+1,:].T)).item() + oS.b
    # Compute the error
    Ek = fXk - oS.labelMat[k, 0]
    return Ek

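# Choose alpha in the inner loop
def selectJK(i, oS, Ei):
    '''
    Heuristic for choosing alpha in the inner loop
    Parameters:
        i -- index of the outer-loop alpha
        oS -- the optStructK object
        Ei -- error
    Returns:
        j -- index of the chosen alpha
        Ej -- error
    '''
    # Initialize
    maxK = -1; maxDeltaE = 0; Ej = 0
    oS.eCache[i] = [1, Ei]
    # Indices with a valid cached error
    validEcacheList = np.nonzero(oS.eCache[:,0])[0]
    if (len(validEcacheList)) > 1:
        # Choose the alpha that gives the largest step
        for k in validEcacheList:
            # Do not recompute for i
            if k == i: continue
            # Compute the error
            Ek = calcEk(oS, k)
            # Compute the step size
            deltaE = abs(Ei - Ek)
            # Record the best choice so far
            if (deltaE > maxDeltaE):
                maxK = k; maxDeltaE = deltaE; Ej = Ek
        return maxK, Ej
    # No valid cached value
    else:
        # Choose at random
        j = selectJrand(i, oS.m)
        Ej = calcEk(oS, j)
    return j, Ej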
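The heuristic in `selectJK` boils down to picking the cached index whose error differs most from `Ei`, since the size of the alpha update is proportional to `Ei - Ej`. A minimal sketch with made-up cached errors follows; `pick_second_index` is a hypothetical name.

```python
def pick_second_index(i, errors, valid):
    """Return the valid index k != i that maximizes |E_i - E_k|, or None."""
    candidates = [k for k in valid if k != i]
    if not candidates:
        return None  # the caller would fall back to a random choice
    return max(candidates, key=lambda k: abs(errors[i] - errors[k]))

# Hypothetical cached errors E_k, keyed by sample index
errors = {0: 0.8, 3: -0.2, 5: 0.5, 7: -1.1}
print(pick_second_index(0, errors, valid=[0, 3, 5, 7]))
print(pick_second_index(0, errors, valid=[0]))
```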

def updateEkK(oS, k):
    # Store the recomputed error after an alpha has been updated
    Ek = calcEk(oS, k)
    oS.eCache[k] = [1, Ek]

def innerLK(i, oS):
    # Compute the error
    Ei = calcEk(oS, i)
    # Find an alpha that violates the KKT conditions
    if ((oS.labelMat[i, 0]*Ei < -oS.tol) and (oS.alphas[i, 0] < oS.C)) or ((oS.labelMat[i, 0]*Ei > oS.tol) and (oS.alphas[i, 0] > 0)):
        # Choose j
        j, Ej = selectJK(i, oS, Ei)
        # Save the old values
        alphaIold = oS.alphas[i, 0].copy(); alphaJold = oS.alphas[j, 0].copy()
        # Bounds for the two label cases
        if (oS.labelMat[i, 0] != oS.labelMat[j, 0]):
            L = max(0, oS.alphas[j, 0] - oS.alphas[i, 0])
            H = min(oS.C, oS.C + oS.alphas[j, 0] - oS.alphas[i, 0])
        else:
            L = max(0, oS.alphas[j, 0] + oS.alphas[i, 0] - oS.C)
            H = min(oS.C, oS.alphas[j, 0] + oS.alphas[i, 0])
        if L == H: return 0
        # Compute eta; 1-D dot products keep eta, b1 and b2 plain scalars
        eta = 2.0 * np.dot(oS.X[i], oS.X[j]) - np.dot(oS.X[i], oS.X[i]) - np.dot(oS.X[j], oS.X[j])
        if eta >= 0: return 0
        # Update alpha_j
        oS.alphas[j, 0] -= oS.labelMat[j, 0]*(Ei - Ej)/eta
        # Clip alpha_j to [L, H]
        oS.alphas[j, 0] = clipAlpha(oS.alphas[j, 0], H, L)
        updateEkK(oS, j)
        if (abs(oS.alphas[j, 0] - alphaJold) < 0.00001): return 0
        oS.alphas[i, 0] += oS.labelMat[j, 0]*oS.labelMat[i, 0]*(alphaJold - oS.alphas[j, 0])
        updateEkK(oS, i)
        b1 = oS.b - Ei - oS.labelMat[i, 0]*(oS.alphas[i, 0]-alphaIold)*np.dot(oS.X[i], oS.X[i]) - oS.labelMat[j, 0]*(oS.alphas[j, 0]-alphaJold)*np.dot(oS.X[i], oS.X[j])
        b2 = oS.b - Ej - oS.labelMat[i, 0]*(oS.alphas[i, 0]-alphaIold)*np.dot(oS.X[i], oS.X[j]) - oS.labelMat[j, 0]*(oS.alphas[j, 0]-alphaJold)*np.dot(oS.X[j], oS.X[j])
        if (0 < oS.alphas[i, 0]) and (oS.C > oS.alphas[i, 0]): oS.b = b1
        elif (0 < oS.alphas[j, 0]) and (oS.C > oS.alphas[j, 0]): oS.b = b2
        else: oS.b = (b1 + b2)/2.0
        return 1
    else: return 0

def smoPK(dataMatIn, classLabels, C, toler, maxIter):
    # Build the data structure
    oS = optStructK(np.array(dataMatIn), np.array(classLabels).reshape(-1, 1), C, toler)
    iter = 0
    entireSet = True; alphaPairsChanged = 0
    # Main loop
    while (iter < maxIter) and ((alphaPairsChanged > 0) or (entireSet)):
        alphaPairsChanged = 0
        if entireSet:
            # Go over all samples
            for i in range(oS.m):
                alphaPairsChanged += innerLK(i, oS)
                #print("fullSet, iter: {} i:{}, pairs changed {}".format(iter,i,alphaPairsChanged))
            iter += 1
        else:
            # Go over the non-bound alphas
            nonBoundIs = np.nonzero((oS.alphas > 0) * (oS.alphas < C))[0]
            for i in nonBoundIs:
                alphaPairsChanged += innerLK(i, oS)
                #print("non-bound, iter: {} i:{}, pairs changed {}".format(iter,i,alphaPairsChanged))
            iter += 1
        if entireSet: entireSet = False
        elif (alphaPairsChanged == 0): entireSet = True
        #print("iteration number: {}".format(iter))
    return oS.b, oS.alphas
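The outer loop of `smoPK` alternates between a pass over the whole data set and passes over the non-bound alphas. The sketch below replays only that switching logic on a made-up sequence of "pairs changed" counts; `pass_schedule` and its input are hypothetical and no optimization is performed.

```python
def pass_schedule(pairs_changed_per_pass, max_iter):
    """Replay the entireSet toggle for a given sequence of change counts."""
    schedule = []
    entire_set = True
    changed = 0
    it = 0
    counts = iter(pairs_changed_per_pass)
    while it < max_iter and (changed > 0 or entire_set):
        # Stand-in for running innerLK over the chosen samples
        changed = next(counts, 0)
        schedule.append('full' if entire_set else 'non-bound')
        it += 1
        if entire_set:
            entire_set = False          # after a full pass, try the non-bound alphas
        elif changed == 0:
            entire_set = True           # non-bound pass was quiet, go back to a full pass
    return schedule

print(pass_schedule([3, 2, 0, 0], max_iter=10))
```

The loop ends once a full pass changes nothing, or when `max_iter` passes have been made.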

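dataList, labelList = loadData('../../Reference Code/Ch06/testSet.txt')
# The log recorded below was printed by smoSimple; to run the full version,
# call smoPK(dataList, labelList, 0.6, 0.001, 40) instead
b, alphas = smoSimple(dataList, labelList, 0.6, 0.001, 40)
print('b= {}'.format(b))
print('alphas > 0 (support vectors):\n{}'.format(alphas[alphas>0]))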

iter: 0 i: 0, pairs changed: 1
L==H
iter: 0 i: 4, pairs changed: 2
j not moving enough
iter: 0 i: 6, pairs changed: 3
L==H
j not moving enough
L==H
iter: 0 i: 25, pairs changed: 4
L==H
iter: 0 i: 29, pairs changed: 5
L==H
iter: 0 i: 52, pairs changed: 6
j not moving enough
iter: 0 i: 55, pairs changed: 7
L==H
j not moving enough
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 0
j not moving enough
j not moving enough
j not moving enough
j not moving enough
L==H
L==H
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
L==H
iter: 0 i: 52, pairs changed: 1
j not moving enough
iter: 0 i: 55, pairs changed: 2
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iter: 0 i: 76, pairs changed: 3
j not moving enough
L==H
L==H
iteration number: 0
iter: 0 i: 0, pairs changed: 1
j not moving enough
j not moving enough
L==H
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iter: 0 i: 96, pairs changed: 2
j not moving enough
iteration number: 0
j not moving enough
j not moving enough
iter: 0 i: 8, pairs changed: 1
iter: 0 i: 10, pairs changed: 2
L==H
j not moving enough
j not moving enough
j not moving enough
L==H
L==H
iter: 0 i: 54, pairs changed: 3
j not moving enough
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 0
j not moving enough
iter: 0 i: 5, pairs changed: 1
j not moving enough
j not moving enough
iter: 0 i: 17, pairs changed: 2
L==H
j not moving enough
j not moving enough
L==H
j not moving enough
L==H
L==H
j not moving enough
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
L==H
L==H
iteration number: 0
j not moving enough
iter: 0 i: 5, pairs changed: 1
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iter: 0 i: 29, pairs changed: 2
j not moving enough
iter: 0 i: 52, pairs changed: 3
iter: 0 i: 54, pairs changed: 4
j not moving enough
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 0
j not moving enough
j not moving enough
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
iter: 0 i: 54, pairs changed: 1
j not moving enough
j not moving enough
j not moving enough
iter: 0 i: 86, pairs changed: 2
L==H
L==H
j not moving enough
L==H
j not moving enough
iteration number: 0
j not moving enough
j not moving enough
iter: 0 i: 5, pairs changed: 1
j not moving enough
j not moving enough
L==H
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 38
j not moving enough
j not moving enough
j not moving enough
j not moving enough
iteration number: 39
j not moving enough
j not moving enough
L==H
j not moving enough
iteration number: 40
b= [-4.65074859]
alphas > 0 (support vectors):
[0.11699604 0.29638533 0.41338137]

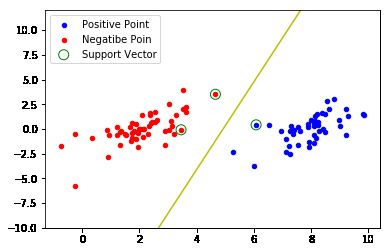

dataToShow(dataList,labelList,b,alphas)

Introducing kernel functions

# The code below uses these NumPy names unqualified; asmatrix is what mat referred to
from numpy import asmatrix as mat, zeros, shape, exp, multiply, nonzero, sign

def kernelTrans(X, A, kTup):  # calc the kernel or transform data to a higher dimensional space
    m, n = shape(X)
    K = mat(zeros((m, 1)))
    if kTup[0] == 'lin': K = X * A.T  # linear kernel
    elif kTup[0] == 'rbf':
        for j in range(m):
            deltaRow = X[j,:] - A
            K[j] = np.dot(deltaRow, deltaRow.T)
        K = exp(K/(-1*kTup[1]**2))  # divide in NumPy is element-wise, not matrix-like as in Matlab
    else: raise NameError('Houston We Have a Problem -- That Kernel is not recognized')
    return K
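For the `'rbf'` branch, `kernelTrans` returns one column of kernel values: the kernel between every row of `X` and the single sample `A`, using `exp(-||x - a||^2 / sigma^2)` with `sigma = kTup[1]`. Note that this form divides by `sigma^2`, not by the `2 * sigma^2` seen in some other references. The following is a plain-Python sketch of the same computation on made-up points; `rbf_kernel` and `kernel_column` are hypothetical names.

```python
import math

def rbf_kernel(x, a, sigma):
    """exp(-||x - a||^2 / sigma^2), the form used in kernelTrans."""
    sq_dist = sum((xi - ai) ** 2 for xi, ai in zip(x, a))
    return math.exp(-sq_dist / sigma ** 2)

def kernel_column(X, a, sigma):
    """Kernel value between every row of X and the single sample a."""
    return [rbf_kernel(row, a, sigma) for row in X]

# Illustrative points at squared distance 0, 1 and 25 from the origin
X = [[0.0, 0.0], [1.0, 0.0], [3.0, 4.0]]
col = kernel_column(X, [0.0, 0.0], sigma=1.3)
print(col)
```

The value is 1 for identical points and decays toward 0 as the distance grows; a smaller `sigma` makes the decay faster.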

class optStruct:
    def __init__(self, dataMatIn, classLabels, C, toler, kTup):  # initialize the structure with the parameters
        self.X = dataMatIn
        self.labelMat = classLabels
        self.C = C
        self.tol = toler
        self.m = shape(dataMatIn)[0]
        self.alphas = mat(zeros((self.m, 1)))
        self.b = 0
        self.eCache = mat(zeros((self.m, 2)))  # first column is valid flag
        self.K = mat(zeros((self.m, self.m)))
        for i in range(self.m):
            self.K[:,i] = kernelTrans(self.X, self.X[i,:], kTup)

def calcEk(oS, k):
    fXk = float(multiply(oS.alphas, oS.labelMat).T*oS.K[:,k] + oS.b)
    Ek = fXk - float(oS.labelMat[k])
    return Ek

def selectJ(i, oS, Ei):  # this is the second choice heuristic, and calcs Ej
    maxK = -1; maxDeltaE = 0; Ej = 0
    oS.eCache[i] = [1, Ei]  # set valid
    # choose the alpha that gives the maximum delta E
    validEcacheList = nonzero(oS.eCache[:,0].A)[0]
    if (len(validEcacheList)) > 1:
        for k in validEcacheList:  # loop through valid Ecache values and find the one that maximizes delta E
            if k == i: continue  # don't calc for i, waste of time
            Ek = calcEk(oS, k)
            deltaE = abs(Ei - Ek)
            if (deltaE > maxDeltaE):
                maxK = k; maxDeltaE = deltaE; Ej = Ek
        return maxK, Ej
    else:  # in this case (first time around) we don't have any valid eCache values
        j = selectJrand(i, oS.m)
        Ej = calcEk(oS, j)
    return j, Ej

def updateEk(oS, k):  # after any alpha has changed update the new value in the cache
    Ek = calcEk(oS, k)
    oS.eCache[k] = [1, Ek]

def innerL(i, oS):
    Ei = calcEk(oS, i)
    if ((oS.labelMat[i]*Ei < -oS.tol) and (oS.alphas[i] < oS.C)) or ((oS.labelMat[i]*Ei > oS.tol) and (oS.alphas[i] > 0)):
        j, Ej = selectJ(i, oS, Ei)  # this has been changed from selectJrand
        alphaIold = oS.alphas[i].copy(); alphaJold = oS.alphas[j].copy()
        if (oS.labelMat[i] != oS.labelMat[j]):
            L = max(0, oS.alphas[j] - oS.alphas[i])
            H = min(oS.C, oS.C + oS.alphas[j] - oS.alphas[i])
        else:
            L = max(0, oS.alphas[j] + oS.alphas[i] - oS.C)
            H = min(oS.C, oS.alphas[j] + oS.alphas[i])
        if L == H: return 0
        eta = 2.0 * oS.K[i,j] - oS.K[i,i] - oS.K[j,j]  # changed for kernel
        if eta >= 0: return 0
        oS.alphas[j] -= oS.labelMat[j]*(Ei - Ej)/eta
        oS.alphas[j] = clipAlpha(oS.alphas[j], H, L)
        updateEk(oS, j)  # added this for the Ecache
        if (abs(oS.alphas[j] - alphaJold) < 0.00001): return 0
        # update i by the same amount as j; the update is in the opposite direction
        oS.alphas[i] += oS.labelMat[j]*oS.labelMat[i]*(alphaJold - oS.alphas[j])
        updateEk(oS, i)  # added this for the Ecache
        b1 = oS.b - Ei - oS.labelMat[i]*(oS.alphas[i]-alphaIold)*oS.K[i,i] - oS.labelMat[j]*(oS.alphas[j]-alphaJold)*oS.K[i,j]
        b2 = oS.b - Ej - oS.labelMat[i]*(oS.alphas[i]-alphaIold)*oS.K[i,j] - oS.labelMat[j]*(oS.alphas[j]-alphaJold)*oS.K[j,j]
        if (0 < oS.alphas[i]) and (oS.C > oS.alphas[i]): oS.b = b1
        elif (0 < oS.alphas[j]) and (oS.C > oS.alphas[j]): oS.b = b2
        else: oS.b = (b1 + b2)/2.0
        return 1
    else: return 0

def smoP(dataMatIn, classLabels, C, toler, maxIter, kTup=('lin', 0)):  # full Platt SMO
    oS = optStruct(mat(dataMatIn), mat(classLabels).transpose(), C, toler, kTup)
    iter = 0
    entireSet = True; alphaPairsChanged = 0
    while (iter < maxIter) and ((alphaPairsChanged > 0) or (entireSet)):
        alphaPairsChanged = 0
        if entireSet:  # go over all
            for i in range(oS.m):
                alphaPairsChanged += innerL(i, oS)
                #print("fullSet, iter: %d i:%d, pairs changed %d" % (iter,i,alphaPairsChanged))
            iter += 1
        else:  # go over non-bound (railed) alphas
            nonBoundIs = nonzero((oS.alphas.A > 0) * (oS.alphas.A < C))[0]
            for i in nonBoundIs:
                alphaPairsChanged += innerL(i, oS)
                #print("non-bound, iter: %d i:%d, pairs changed %d" % (iter,i,alphaPairsChanged))
            iter += 1
        if entireSet: entireSet = False  # toggle entire set loop
        elif (alphaPairsChanged == 0): entireSet = True
        #print("iteration number: %d" % iter)
    return oS.b, oS.alphas

def calcWs(alphas, dataArr, classLabels):
    X = mat(dataArr); labelMat = mat(classLabels).transpose()
    m, n = shape(X)
    w = zeros((n, 1))
    for i in range(m):
        w += multiply(alphas[i]*labelMat[i], X[i,:].T)
    return w

Testing

def testRbf(k1=1.3):
    dataArr, labelArr = loadData('../../Reference Code/Ch06/testSetRBF.txt')
    b, alphas = smoP(dataArr, labelArr, 200, 0.0001, 10000, ('rbf', k1))  # C=200 important
    datMat = mat(dataArr); labelMat = mat(labelArr).transpose()
    svInd = nonzero(alphas.A > 0)[0]
    sVs = datMat[svInd]  # get matrix of only support vectors
    labelSV = labelMat[svInd]
    print("there are %d Support Vectors" % shape(sVs)[0])
    m, n = shape(datMat)
    errorCount = 0
    for i in range(m):
        kernelEval = kernelTrans(sVs, datMat[i,:], ('rbf', k1))
        predict = kernelEval.T * multiply(labelSV, alphas[svInd]) + b
        if sign(predict) != sign(labelArr[i]): errorCount += 1
    print("the training error rate is: %f" % (float(errorCount)/m))
    dataArr, labelArr = loadData('../../Reference Code/Ch06/testSetRBF2.txt')
    errorCount = 0
    datMat = mat(dataArr); labelMat = mat(labelArr).transpose()
    m, n = shape(datMat)
    for i in range(m):
        kernelEval = kernelTrans(sVs, datMat[i,:], ('rbf', k1))
        predict = kernelEval.T * multiply(labelSV, alphas[svInd]) + b
        if sign(predict) != sign(labelArr[i]): errorCount += 1
    print("the test error rate is: %f" % (float(errorCount)/m))
    return b, alphas

import matplotlib.pyplot as plt
import numpy as np

def dataToShow(dataList, labelList, b, alphas):
    # Arrays are easier to work with
    dataArray = np.array(dataList)
    alphasArray = np.array(alphas.tolist())
    # Reshape into one column; -1 means the number of rows is inferred
    labelArray = np.array(labelList).reshape(-1, 1)
    # Plot the positive and negative classes separately; np.squeeze makes the mask 1-D
    posData = dataArray[np.squeeze(labelArray > 0.0)]
    negData = dataArray[np.squeeze(labelArray < 0.0)]
    svData = dataArray[np.squeeze(alphasArray > 0.0)]
    plt.figure()
    plt.scatter(posData[:,0], posData[:,1], c='b', s=20)
    plt.scatter(negData[:,0], negData[:,1], c='r', s=20)
    # Hollow green circles mark the support vectors
    plt.scatter(svData[:,0], svData[:,1], marker='o', facecolors='none', s=100, edgecolors='g')
    plt.legend(['Positive Point', 'Negative Point', 'Support Vector'])
    # # Draw the separating hyperplane
    # w = np.dot((alphasArray * labelArray).T, dataArray)
    # x0 = np.array([2, 8])
    # # Separating line: w0*x0 + w1*x1 + b = 0
    # x1 = -(w[0, 0] * x0 + np.squeeze(np.array(b))) / w[0, 1]
    # plt.plot(x0, x1, color = 'y')
    # plt.ylim((-10,12))
    plt.show()

dataList,labelList = loadData('../../Reference Code/Ch06/testSetRBF.txt')

b,alphas = testRbf(k1=1.3)

dataToShow(dataList,labelList,b,alphas)

there are 29 Support Vectors

the training error rate is: 0.130000

the test error rate is: 0.150000

b,alphas = testRbf(k1=0.1)

dataToShow(dataList,labelList,b,alphas)

there are 84 Support Vectors

the training error rate is: 0.000000

the test error rate is: 0.090000

Handwritten digit recognition

def img2vector(filename):
    # Flatten a 32x32 text image into a 1x1024 vector
    returnVect = zeros((1, 1024))
    # The with-block closes the file when done
    with open(filename) as fr:
        for i in range(32):
            lineStr = fr.readline()
            for j in range(32):
                returnVect[0, 32*i+j] = int(lineStr[j])
    return returnVect

def loadImages(dirName):
    from os import listdir
    hwLabels = []
    trainingFileList = listdir(dirName)  # load the training set
    m = len(trainingFileList)
    trainingMat = zeros((m, 1024))
    for i in range(m):
        fileNameStr = trainingFileList[i]
        fileStr = fileNameStr.split('.')[0]  # take off .txt
        classNumStr = int(fileStr.split('_')[0])
        # Binary problem: digit 9 is class -1, every other digit is class +1
        if classNumStr == 9: hwLabels.append(-1)
        else: hwLabels.append(1)
        trainingMat[i,:] = img2vector('%s/%s' % (dirName, fileNameStr))
    return trainingMat, hwLabels
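Since the basic SVM is a binary classifier, `loadImages` turns the ten-digit problem into "9 versus everything else" by reading the digit from the file name. The sketch below isolates that step; `binary_label` and the file names are hypothetical, and the `digit_index.txt` naming pattern is inferred from the `split('.')` and `split('_')` calls above.

```python
def binary_label(file_name):
    """Map a digit file name such as '9_45.txt' to -1 (digit 9) or +1 (any other digit)."""
    stem = file_name.split('.')[0]    # drop the .txt extension
    digit = int(stem.split('_')[0])   # the part before '_' is the digit
    return -1 if digit == 9 else 1

names = ['0_13.txt', '9_45.txt', '1_7.txt']  # hypothetical file names
print([binary_label(n) for n in names])
```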

def testDigits(kTup=('rbf', 10)):
    dataArr, labelArr = loadImages('../../../Week1/Reference Code/trainingDigits')
    b, alphas = smoP(dataArr, labelArr, 200, 0.0001, 10000, kTup)
    datMat = mat(dataArr); labelMat = mat(labelArr).transpose()
    svInd = nonzero(alphas.A > 0)[0]
    sVs = datMat[svInd]
    labelSV = labelMat[svInd]
    print("there are %d Support Vectors" % shape(sVs)[0])
    m, n = shape(datMat)
    errorCount = 0
    for i in range(m):
        kernelEval = kernelTrans(sVs, datMat[i,:], kTup)
        predict = kernelEval.T * multiply(labelSV, alphas[svInd]) + b
        if sign(predict) != sign(labelArr[i]): errorCount += 1
    print("the training error rate is: %f" % (float(errorCount)/m))
    dataArr, labelArr = loadImages('../../../Week1/Reference Code/testDigits')
    errorCount = 0
    datMat = mat(dataArr); labelMat = mat(labelArr).transpose()
    m, n = shape(datMat)
    for i in range(m):
        kernelEval = kernelTrans(sVs, datMat[i,:], kTup)
        predict = kernelEval.T * multiply(labelSV, alphas[svInd]) + b
        if sign(predict) != sign(labelArr[i]): errorCount += 1
    print("the test error rate is: %f" % (float(errorCount)/m))

testDigits(('rbf', 20))

there are 204 Support Vectors

the training error rate is: 0.000000

the test error rate is: 0.010571