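FFmpeg's libavfilter module handles audio and video processing and editing. It ships a rich set of filters, such as watermark overlay, video rotation, and audio channel manipulation. In a player, the avfilter stage usually sits between the decoding stage and the display stage.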

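For how to use filters, the examples in the FFmpeg source tree are a direct reference: ffmpeg-4.1.3/doc/examples/filtering_video.c and ffmpeg-4.1.3/doc/examples/filtering_audio.c. My goal is to rotate the video during playback, so this post focuses on video filters.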

Filtergraph and filter

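A filtergraph is a directed graph of connected filters. Classified by the number of input and output pads, filters fall into three types: Source Filter, Sink Filter, and Filter. A Source Filter has only outputs, a Sink Filter has only inputs, and a Filter has both inputs and outputs.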
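
The classification above can be sketched in a few lines of C. This is only an illustration: FilterInfo, FilterKind, and classify_filter are hypothetical names made up for this post, not libavfilter types. The pad counts in the usage note match the real filters "buffer" (no input, one output), "buffersink" (one input, no output), and "transpose" (one input, one output).

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical stand-in for a filter description; NOT a libavfilter type. */
typedef struct {
    const char *name;
    unsigned nb_inputs;   /* number of input pads  */
    unsigned nb_outputs;  /* number of output pads */
} FilterInfo;

typedef enum { KIND_SOURCE, KIND_SINK, KIND_FILTER } FilterKind;

/* Classify a filter purely by how many input and output pads it has. */
static FilterKind classify_filter(const FilterInfo *f)
{
    assert(f != NULL);
    if (f->nb_inputs == 0)
        return KIND_SOURCE;   /* only outputs (assumes at least one output) */
    if (f->nb_outputs == 0)
        return KIND_SINK;     /* only inputs */
    return KIND_FILTER;       /* both inputs and outputs */
}
```

With FilterInfo values of { "buffer", 0, 1 }, { "buffersink", 1, 0 }, and { "transpose", 1, 1 }, classify_filter returns KIND_SOURCE, KIND_SINK, and KIND_FILTER respectively.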

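Basic filter usage

Initializing the filters

First, the code that creates and initializes the filters. It relies on variables declared at file scope elsewhere in the program: fmt_ctx, dec_ctx, video_stream_index, buffersrc_ctx, buffersink_ctx, and filter_graph.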

    static int init_filters(const char *filters_descr)
    {
        char args[512];
        int ret = 0;
        const AVFilter *buffersrc  = avfilter_get_by_name("buffer");
        const AVFilter *buffersink = avfilter_get_by_name("buffersink");
        AVFilterInOut *outputs = avfilter_inout_alloc();
        AVFilterInOut *inputs  = avfilter_inout_alloc();
        AVRational time_base = fmt_ctx->streams[video_stream_index]->time_base;
        // AV_PIX_FMT_GRAY8 should be replaced with the pixel format needed at the
        // output, i.e. the format the renderer requires for display
        enum AVPixelFormat pix_fmts[] = { AV_PIX_FMT_GRAY8, AV_PIX_FMT_NONE };

        filter_graph = avfilter_graph_alloc();
        if (!outputs || !inputs || !filter_graph) {
            ret = AVERROR(ENOMEM);
            goto end;
        }

        /* buffer video source: the decoded frames from the decoder will be inserted here. */
        snprintf(args, sizeof(args),
                 "video_size=%dx%d:pix_fmt=%d:time_base=%d/%d:pixel_aspect=%d/%d",
                 dec_ctx->width, dec_ctx->height, dec_ctx->pix_fmt,
                 time_base.num, time_base.den,
                 dec_ctx->sample_aspect_ratio.num, dec_ctx->sample_aspect_ratio.den);

        ret = avfilter_graph_create_filter(&buffersrc_ctx, buffersrc, "in",
                                           args, NULL, filter_graph);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot create buffer source\n");
            goto end;
        }

        /* buffer video sink: to terminate the filter chain. */
        ret = avfilter_graph_create_filter(&buffersink_ctx, buffersink, "out",
                                           NULL, NULL, filter_graph);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot create buffer sink\n");
            goto end;
        }

        // set the pixel format of the sink output
        ret = av_opt_set_int_list(buffersink_ctx, "pix_fmts", pix_fmts,
                                  AV_PIX_FMT_NONE, AV_OPT_SEARCH_CHILDREN);
        if (ret < 0) {
            av_log(NULL, AV_LOG_ERROR, "Cannot set output pixel format\n");
            goto end;
        }

        /*
         * Set the endpoints for the filter graph. The filter_graph will
         * be linked to the graph described by filters_descr.
         */

        /*
         * The buffer source output must be connected to the input pad of
         * the first filter described by filters_descr; since the first
         * filter input label is not specified, it is set to "in" by
         * default.
         */
        outputs->name       = av_strdup("in");
        outputs->filter_ctx = buffersrc_ctx;
        outputs->pad_idx    = 0;
        outputs->next       = NULL;

        /*
         * The buffer sink input must be connected to the output pad of
         * the last filter described by filters_descr; since the last
         * filter output label is not specified, it is set to "out" by
         * default.
         */
        inputs->name       = av_strdup("out");
        inputs->filter_ctx = buffersink_ctx;
        inputs->pad_idx    = 0;
        inputs->next       = NULL;

        if ((ret = avfilter_graph_parse_ptr(filter_graph, filters_descr,
                                            &inputs, &outputs, NULL)) < 0)
            goto end;

        if ((ret = avfilter_graph_config(filter_graph, NULL)) < 0)
            goto end;

    end:
        avfilter_inout_free(&inputs);
        avfilter_inout_free(&outputs);

        return ret;
    }

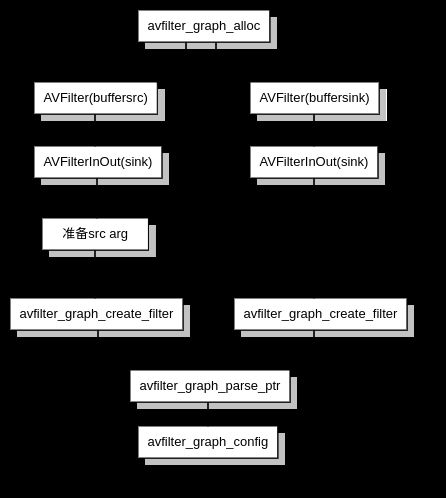

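The initialization flow can be summarized as: create the filtergraph (avfilter_graph_alloc), create the source filter and the sink filter (avfilter_graph_create_filter), parse the filter description and link it between the source and the sink inside the filtergraph (avfilter_graph_parse_ptr), and finally configure the filtergraph (avfilter_graph_config).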
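
Since init_filters takes the filter chain as a text description (filters_descr), rotating the video comes down to passing the right string. Below is a minimal sketch of one way to choose it. rotation_filter_descr is a hypothetical helper written for this post, and treating positive angles as clockwise is my own assumption. The filter names it returns ("null", "transpose", "hflip", "vflip") are existing libavfilter video filters.

```c
#include <assert.h>
#include <stddef.h>

/*
 * Hypothetical helper (not part of FFmpeg): map a rotation angle in degrees
 * to a filter description string suitable for init_filters().
 * Assumption: positive angles mean clockwise rotation.
 * Returns NULL for angles that are not a multiple of 90.
 */
static const char *rotation_filter_descr(int degrees)
{
    /* Normalize to the range [0, 360), also for negative input. */
    int d = ((degrees % 360) + 360) % 360;
    assert(d >= 0 && d < 360);

    switch (d) {
    case 0:   return "null";             /* pass frames through unchanged */
    case 90:  return "transpose=clock";  /* 90 degrees clockwise */
    case 180: return "hflip,vflip";      /* two flips equal a 180 degree turn */
    case 270: return "transpose=cclock"; /* 90 degrees counter-clockwise */
    default:  return NULL;               /* unsupported angle */
    }
}
```

A call such as init_filters(rotation_filter_descr(90)) would then build a graph that rotates each frame by 90 degrees. Note that after a transpose the width and height are swapped, so the renderer should take the dimensions from the filtered frame rather than from the decoder context.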

Filtering data

Once the filters are created, the player's running code feeds each decoded frame into the filtergraph and pulls the processed frames back out:

    /* This fragment runs inside the decoding loop, hence the break and goto. */

    /* push the decoded frame into the filtergraph */
    if (av_buffersrc_add_frame_flags(buffersrc_ctx, frame, AV_BUFFERSRC_FLAG_KEEP_REF) < 0) {
        av_log(NULL, AV_LOG_ERROR, "Error while feeding the filtergraph\n");
        break;
    }

    /* pull filtered frames from the filtergraph */
    while (1) {
        ret = av_buffersink_get_frame(buffersink_ctx, filt_frame);
        /* EAGAIN: no frame ready yet, feed more input; EOF: no more frames */
        if (ret == AVERROR(EAGAIN) || ret == AVERROR_EOF)
            break;
        if (ret < 0)
            goto end;
        // send the filtered frame to the display
        display_frame(filt_frame, buffersink_ctx->inputs[0]->time_base);
        av_frame_unref(filt_frame);
    }
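
display_frame receives the time base of the sink's input link because the pts of a filtered frame is expressed in that time base, which is not necessarily the stream's. How display_frame uses it is not shown here. One plausible use is converting the pts to seconds for presentation timing, roughly as in the sketch below. Rational and pts_to_seconds are hypothetical stand-ins so that the snippet compiles without the FFmpeg headers. In real code the time base is an AVRational, and av_q2d() from libavutil converts it to a double.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical stand-in for AVRational, to keep the sketch self-contained. */
typedef struct {
    int num;
    int den;
} Rational;

/* Convert a timestamp counted in time_base units into seconds. */
static double pts_to_seconds(int64_t pts, Rational time_base)
{
    assert(time_base.den != 0);
    return (double)pts * time_base.num / time_base.den;
}
```

For example, with a time base of 1/90000 a pts of 90000 corresponds to one second, and with a time base of 1/25 a pts of 50 corresponds to two seconds.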