unravel: Making Still Images Move with AI

It's not that I'm bored with nothing to do, but life is all about tinkering.

This one was all over Bilibili a while back. The lab's new machine has just arrived, so I'm using this project to test it out.

Reference tutorials:

https://zhuanlan.zhihu.com/p/193119216

https://blog.csdn.net/weixin_44087733/article/details/108858612

Pretty much every tutorial I came across uses this implementation:

https://github.com/anandpawara/Real_Time_Image_Animation

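The author's implementation targets a fairly old environment, and the package versions all depend on one another, so I recommend creating a fresh virtual environment with Anaconda: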

conda create -n yourname python=3.7.3

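Then, inside the new environment, install exactly what the project asks for. Don't improvise, or you'll run into all sorts of strange errors (exhausting):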

python -m pip install -r requirements.txt

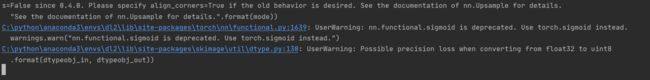

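The biggest pitfall was the 30-series GPU, which kept causing problems. After spending a long time fixing everything I could think of, a new error about the Jacobian matrix showed up, and that one is a CUDA problem. Around then I came across an article saying: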

Adaptation work for 30-series GPUs is incomplete, and support is poor.

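Despair. The whole point was to test the machine with this, and then CUDA broke. Even installing torch 1.0.0 to match the project author's environment didn't work, and going with the then-popular PyTorch 1.0.0 + CUDA 10.0 combination wouldn't necessarily work either (30-series cards are Ampere GPUs that need CUDA 11 or newer, which PyTorch 1.0.0 predates)... damn.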
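One way to check whether a PyTorch build can drive a given GPU is to compare the card's compute capability with the architectures the build was compiled for. The sketch below is only an illustration and not part of the original project: `arch_tag` and `build_supports_gpu` are hypothetical helper names, the check looks for an exact `sm_XY` match and ignores PTX forward compatibility, and `torch.cuda.get_arch_list` is looked up defensively because older PyTorch releases may not provide it.

```python
def arch_tag(capability):
    """Turn a (major, minor) compute capability into an 'sm_XY' tag."""
    major, minor = capability
    return f"sm_{major}{minor}"


def build_supports_gpu(capability, arch_list):
    """Return True if arch_list contains kernels for exactly this architecture.

    Simplification (assumption): PTX-based forward compatibility is ignored.
    """
    return arch_tag(capability) in arch_list


if __name__ == "__main__":
    try:
        import torch
    except ImportError:
        print("PyTorch is not installed in this environment")
    else:
        print("torch", torch.__version__, "built for CUDA", torch.version.cuda)
        if not torch.cuda.is_available():
            print("CUDA is not usable from this build")
        else:
            capability = torch.cuda.get_device_capability(0)
            # get_arch_list is missing on old releases, so look it up safely.
            get_arch_list = getattr(torch.cuda, "get_arch_list", None)
            if get_arch_list is None:
                print("GPU capability:", capability, "(arch list unavailable)")
            else:
                archs = get_arch_list()
                print("GPU:", arch_tag(capability), "build archs:", archs)
                print("exact match:", build_supports_gpu(capability, archs))
```

A 30-series card reports compute capability 8.6, so a build whose list stops at `sm_75` would come back with no exact match.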

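I gave up and switched to CPU-only mode. The downside is that it's slow: the program stays on the same screen for a long time while it runs.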
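Since a CPU-only run gives no feedback while it works, a small progress line can make the wait more bearable. This is a minimal sketch under stated assumptions: `progress_line` is a hypothetical helper, and in the real script the total would have to come from something like `cap.get(cv2.CAP_PROP_FRAME_COUNT)`, which is only meaningful for video files, not for a webcam.

```python
import time


def progress_line(done, total, elapsed_seconds):
    """Format a one-line progress report with a rough ETA."""
    percent = 100.0 * done / total if total > 0 else 0.0
    rate = done / elapsed_seconds if elapsed_seconds > 0 else 0.0
    if rate > 0 and total >= done:
        eta = f"{(total - done) / rate:.0f}s"
    else:
        eta = "unknown"  # nothing processed yet, or total is not known
    return f"{done}/{total} frames ({percent:.1f}%), {rate:.2f} fps, ETA {eta}"


if __name__ == "__main__":
    total_frames = 5  # stand-in for the real frame count of the driving video
    start = time.time()
    for i in range(1, total_frames + 1):
        time.sleep(0.01)  # stand-in for the per-frame model inference
        print(progress_line(i, total_frames, time.time() - start))
```

Printing this every few dozen frames inside the processing loop would be enough; wrapping the loop in `tqdm` is another option if the frame count is known.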

The code for image_animation.py is as follows:

import imageio
import torch
from tqdm import tqdm
from animate import normalize_kp
from demo import load_checkpoints
import numpy as np
import matplotlib.pyplot as plt
import matplotlib.animation as animation
from skimage import img_as_ubyte
from skimage.transform import resize
import cv2
import os
import argparse
import subprocess
from PIL import Image


def video2mp3(file_name):
    """
    Extract the audio track of a video into an MP3 file.
    :param file_name: path to the input video file
    :return: path to the generated MP3 file
    """
    # splitext only strips the extension, so dots elsewhere in the path are safe.
    outfile_name = os.path.splitext(file_name)[0] + '.mp3'
    # Passing a list avoids shell quoting problems with spaces in paths.
    cmd = ['ffmpeg', '-i', file_name, '-f', 'mp3', outfile_name]
    print(' '.join(cmd))
    subprocess.call(cmd)
    return outfile_name


def video_add_mp3(file_name, mp3_file):
    """
    Add an audio track to a video.
    :param file_name: path to the input video file
    :param mp3_file: path to the input audio file
    :return:
    """
    outfile_name = os.path.splitext(file_name)[0] + '-f.mp4'
    subprocess.call(['ffmpeg', '-i', file_name, '-i', mp3_file,
                     '-strict', '-2', '-f', 'mp4', outfile_name])


ap = argparse.ArgumentParser()
ap.add_argument("-i", "--input_image", required=True, help="Path to image to animate")
ap.add_argument("-c", "--checkpoint", required=True, help="Path to checkpoint")
ap.add_argument("-v", "--input_video", required=False, help="Path to video input")
args = vars(ap.parse_args())

print("[INFO] loading source image and checkpoint...")
source_path = args['input_image']
checkpoint_path = args['checkpoint']
video_path = args['input_video']  # None when -v is omitted (webcam mode)

relative = True
adapt_movement_scale = True
cpu = True  # CPU-only mode; set to False to run on the GPU

source_image = imageio.imread(source_path)
source_image = resize(source_image, (256, 256))[..., :3]

generator, kp_detector = load_checkpoints(config_path='config/vox-256.yaml',
                                          checkpoint_path=checkpoint_path, cpu=cpu)

os.makedirs('output', exist_ok=True)

if video_path:
    cap = cv2.VideoCapture(video_path)
    print("[INFO] Loading video from the given path")
else:
    cap = cv2.VideoCapture(0)
    print("[INFO] Initializing front camera...")

fps = cap.get(cv2.CAP_PROP_FPS)
size = (int(cap.get(cv2.CAP_PROP_FRAME_WIDTH)), int(cap.get(cv2.CAP_PROP_FRAME_HEIGHT)))

# Only a video file has an audio track to extract; the webcam has none.
mp3_path = video2mp3(file_name=video_path) if video_path else None

# 'mp4v' is the FourCC that matches the .mp4 container.
fourcc = cv2.VideoWriter_fourcc(*'mp4v')
# out1 = cv2.VideoWriter('output/test.avi', fourcc, fps, (256*3 , 256), True)
out1 = cv2.VideoWriter('output/test.mp4', fourcc, fps, size, True)

cv2_source = cv2.cvtColor(source_image.astype('float32'), cv2.COLOR_BGR2RGB)

with torch.no_grad():
    source = torch.tensor(source_image[np.newaxis].astype(np.float32)).permute(0, 3, 1, 2)
    if not cpu:
        source = source.cuda()
    kp_source = kp_detector(source)
    count = 0
    while True:
        ret, frame = cap.read()
        if not ret:
            # End of the video, or the camera stopped delivering frames.
            break
        frame = cv2.flip(frame, 1)
        if not video_path:
            # Webcam input: crop a fixed square region of the frame.
            x, y, w, h = 143, 87, 322, 322
            frame = frame[y:y + h, x:x + w]
        frame1 = resize(frame, (256, 256))[..., :3]

        driving_frame = torch.tensor(frame1[np.newaxis].astype(np.float32)).permute(0, 3, 1, 2)
        if not cpu:
            driving_frame = driving_frame.cuda()
        if count == 0:
            # The first frame is the reference pose for relative motion.
            kp_driving_initial = kp_detector(driving_frame)
        kp_driving = kp_detector(driving_frame)

        kp_norm = normalize_kp(kp_source=kp_source,
                               kp_driving=kp_driving,
                               kp_driving_initial=kp_driving_initial,
                               use_relative_movement=relative,
                               use_relative_jacobian=relative,
                               adapt_movement_scale=adapt_movement_scale)
        out = generator(source, kp_source=kp_source, kp_driving=kp_norm)

        im = np.transpose(out['prediction'].data.cpu().numpy(), [0, 2, 3, 1])[0]
        im = cv2.cvtColor(im, cv2.COLOR_RGB2BGR)
        # joinedFrame = np.concatenate((cv2_source,im,frame1),axis=1)
        # cv2.imshow('Test',joinedFrame)
        # out1.write(img_as_ubyte(joinedFrame))

        # The writer expects frames of exactly `size`, but the model output
        # is 256x256, so scale it up before writing.
        out1.write(img_as_ubyte(cv2.resize(im, size)))
        count += 1
        # if cv2.waitKey(20) & 0xFF == ord('q'):
        #     break

cap.release()
out1.release()
cv2.destroyAllWindows()

if mp3_path:
    video_add_mp3(file_name='output/test.mp4', mp3_file=mp3_path)
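The script keeps the keypoints of the first driving frame as `kp_driving_initial` and passes `use_relative_movement=True`. The idea, as far as I understand the first-order motion model this project appears to build on, is that the source image's keypoints are moved by how far the driving keypoints have shifted since that first frame, rather than being replaced by the driving keypoints outright. The sketch below illustrates only that idea on plain `(x, y)` tuples. It is not the repository's `normalize_kp`: `relative_keypoints` and its `movement_scale` argument are hypothetical, and the real function works on tensors and also handles Jacobians.

```python
def relative_keypoints(kp_source, kp_driving, kp_driving_initial, movement_scale=1.0):
    """Shift each source keypoint by the driving keypoint's displacement.

    All three inputs are equal-length lists of (x, y) tuples.
    movement_scale is an illustrative stand-in for the scale adaptation step.
    """
    moved = []
    for (sx, sy), (dx, dy), (ix, iy) in zip(kp_source, kp_driving, kp_driving_initial):
        moved.append((sx + (dx - ix) * movement_scale,
                      sy + (dy - iy) * movement_scale))
    return moved


if __name__ == "__main__":
    source = [(0.125, 0.5)]
    first_frame = [(0.25, 0.25)]
    later_frame = [(0.5, 0.25)]
    # On the first frame nothing has moved yet, so the source pose is kept.
    print(relative_keypoints(source, first_frame, first_frame))
    # Later, the source keypoint follows the driving displacement.
    print(relative_keypoints(source, later_frame, first_frame, movement_scale=2.0))
```

This also suggests why the first frame of the driving video matters: if its pose is far from the pose in the source image, every later displacement is applied from the wrong starting point.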

Then just run the command:

python image_animation.py -i Inputs/trump2.png -c checkpoints/vox-cpk.pth.tar -v 1.mp4
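Because the script calls out to `ffmpeg` for the audio steps, it can be worth confirming that the executable is on `PATH` before starting a long CPU run. A minimal sketch, assuming nothing beyond the standard library; `missing_tools` is a hypothetical helper name:

```python
import shutil


def missing_tools(names):
    """Return the subset of command names that cannot be found on PATH."""
    return [name for name in names if shutil.which(name) is None]


if __name__ == "__main__":
    missing = missing_tools(["ffmpeg"])
    if missing:
        print("Please install before running:", ", ".join(missing))
    else:
        print("ffmpeg found, good to go")
```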

That said, running it on my classmates' photos turned out to be genuinely hilarious, hahaha.

The end.