YOLOv5 Study Notes (2): Deploying on the Jetson Nano

This tutorial series starts from model training and walks you, from scratch, through deploying a YOLOv5 model on the Jetson Nano.

This is the second part of the series.

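Contents

1. Changing the package mirror

2. Setting environment variables

2.2 Testing CUDA

2.3 Installing pip3

2.4 Installing GPU-enabled TensorFlow

2.5 Installing pycuda

3.1 Downloading the tensorrtx source code

3.2 Testing the model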

1. Changing the package mirror

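Unlike Windows, Ubuntu downloads and installs software from official package servers, so you don't have to hunt around the web for installers. However, the default servers may be overseas, which makes downloads slow, and some packages may fail to install. Changing the source means pointing apt at a different server, usually a mirror in China.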

Put simply, this is what you do if you want packages to download faster.

I usually use the Tsinghua (TUNA) mirror; the USTC mirror works too.

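First back up the original source list so you can restore it if something goes wrong.

I use gedit here instead of vim because it is more convenient.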

```bash
sudo cp /etc/apt/sources.list /etc/apt/sources.list.bak  # back up sources.list so it can be restored after a mistake
```

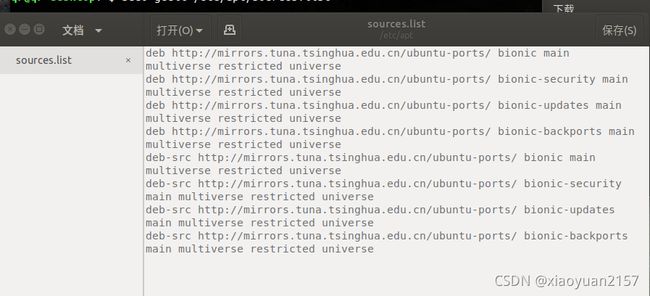
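Each of the eight entries you will paste in the next step has the form `deb|deb-src <mirror URL> <suite> <components>`: binary and source entries for `bionic` (Ubuntu 18.04, which JetPack 4.x is based on) and its `-security`, `-updates` and `-backports` suites. If you want to generate this text for another mirror or release, here is a minimal sketch; the helper name `sources_list` is hypothetical, and it only prints the text instead of writing to /etc/apt.

```python
# Hypothetical helper: builds sources.list text for an Ubuntu ports mirror.
# MIRROR and COMPONENTS match the list used in this post.
MIRROR = "http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/"
COMPONENTS = "main multiverse restricted universe"


def sources_list(codename="bionic", mirror=MIRROR, components=COMPONENTS):
    """Return sources.list text: all deb entries, then all deb-src entries,
    for the release suite and its -security, -updates and -backports suites."""
    suites = [codename] + [codename + "-" + s for s in ("security", "updates", "backports")]
    lines = []
    for kind in ("deb", "deb-src"):
        for suite in suites:
            lines.append("{} {} {} {}".format(kind, mirror, suite, components))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Print only; paste the result into the file with sudo gedit as described here.
    print(sources_list(), end="")
```

Printing rather than writing keeps the sketch safe to run without root; you still edit the file yourself.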

Open the source list:

```bash
sudo gedit /etc/apt/sources.list
```

Delete all of its content, paste in the following, and save:

```text
deb http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/ bionic main multiverse restricted universe
deb http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/ bionic-security main multiverse restricted universe
deb http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/ bionic-updates main multiverse restricted universe
deb http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/ bionic-backports main multiverse restricted universe
deb-src http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/ bionic main multiverse restricted universe
deb-src http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/ bionic-security main multiverse restricted universe
deb-src http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/ bionic-updates main multiverse restricted universe
deb-src http://mirrors.tuna.tsinghua.edu.cn/ubuntu-ports/ bionic-backports main multiverse restricted universe
```

Don't forget to update the package index afterwards:

```bash
sudo apt-get update
```

2. Setting environment variables

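Before changing the environment variables, confirm your CUDA version: errors caused by putting the wrong CUDA version into these variables are very common. List /usr/local to find it:

```bash
cd /usr/local
ls
```

As the screenshot shows, the CUDA version here is 10.2, so the directory is cuda-10.2. Now you can add the environment variables.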

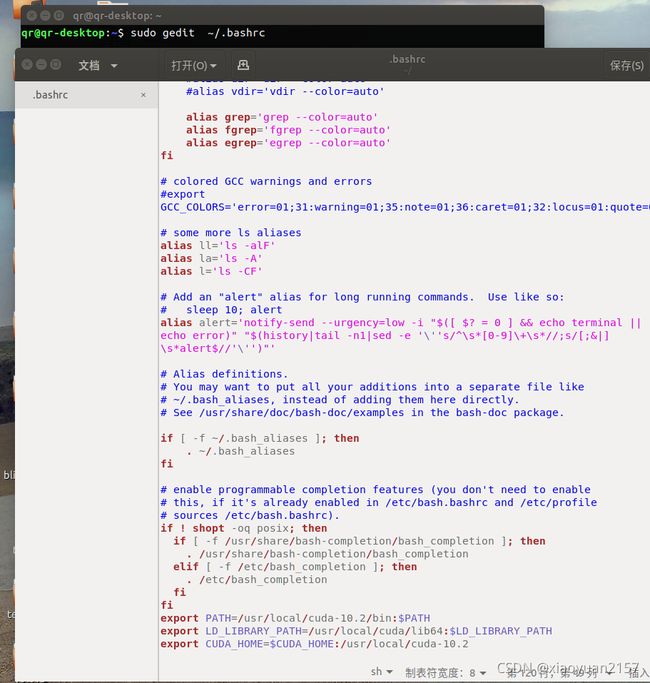
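If you set up several boards, the version check and the export lines can be scripted. The sketch below is an assumption-laden helper (the function names are hypothetical): it looks for `cuda-X.Y` directories under /usr/local and prints matching `~/.bashrc` lines. It uses the versioned directory for `LD_LIBRARY_PATH`, whereas this post uses `/usr/local/cuda/lib64`; on JetPack `/usr/local/cuda` is usually a symlink to the installed version, so both normally point to the same place.

```python
import os
import re

CUDA_DIR_PATTERN = re.compile(r"^cuda-(\d+)\.(\d+)$")


def find_cuda_versions(root="/usr/local"):
    """Return (major, minor) for every cuda-X.Y directory under root, oldest first."""
    if not os.path.isdir(root):
        return []
    versions = []
    for name in os.listdir(root):
        m = CUDA_DIR_PATTERN.match(name)
        if m and os.path.isdir(os.path.join(root, name)):
            versions.append((int(m.group(1)), int(m.group(2))))
    return sorted(versions)


def bashrc_exports(version, root="/usr/local"):
    """Return the three export lines for ~/.bashrc for a (major, minor) version."""
    cuda_dir = os.path.join(root, "cuda-%d.%d" % version)
    return [
        "export PATH=%s/bin:$PATH" % cuda_dir,
        "export LD_LIBRARY_PATH=%s/lib64:$LD_LIBRARY_PATH" % cuda_dir,
        "export CUDA_HOME=%s" % cuda_dir,  # a single directory, not a path list
    ]


if __name__ == "__main__":
    found = find_cuda_versions()
    if not found:
        print("no cuda-X.Y directory found under /usr/local")
    else:
        for line in bashrc_exports(found[-1]):  # newest version found
            print(line)
```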

sudo gedit ~/.bashrc在文本的最后添加以下三行:

export PATH=/usr/local/cuda-10.2/bin:$PATH

export LD_LIBRARY_PATH=/usr/local/cuda/lib64:$LD_LIBRARY_PATH

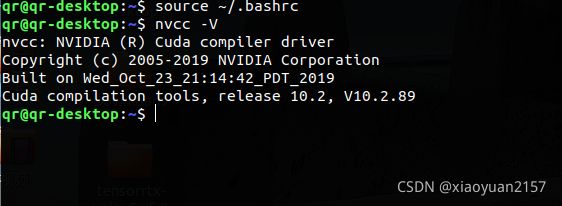

export CUDA_HOME=$CUDA_HOME:/usr/local/cuda-10.2重新执行.bashrc文件,可以直接生效;

source ~/.bashrc输入nvcc -V命令测试环境变量是否正确

nvcc -V2.2测试CUDA

Run the following commands in turn to test CUDA; the mnistCUDNN sample also exercises cuDNN:

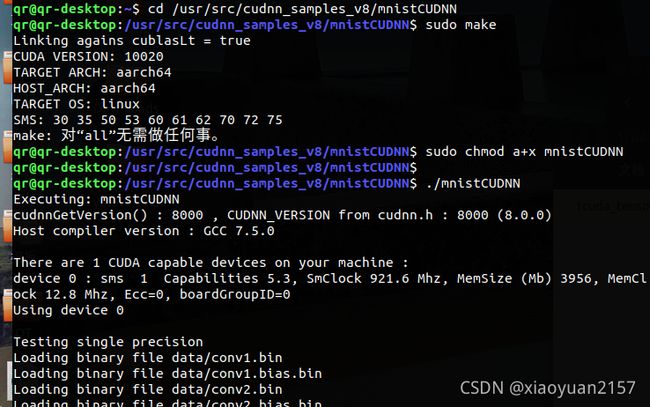

cd /usr/src/cudnn_samples_v8/mnistCUDNN

sudo make

sudo chmod a+x mnistCUDNN

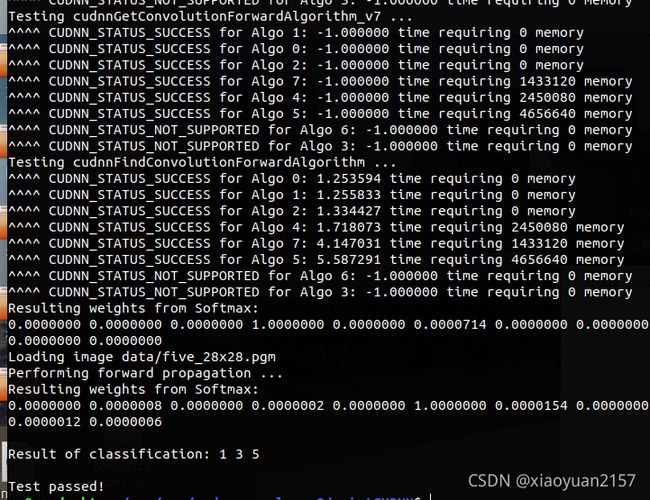

./mnistCUDNN等待片刻后,出现test passed表明成功(如下图)
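If you'd rather check these results from a script, the sketch below parses the output of `nvcc -V` and of the mnistCUDNN sample. It assumes the output formats seen on JetPack (a line such as `Cuda compilation tools, release 10.2, V10.2.89`, and the sample printing `Test passed!`); the helper names are hypothetical. It avoids `capture_output`/`text`, which need Python 3.7, so it also runs on the Nano's Python 3.6.

```python
import re
import subprocess

NVCC_RELEASE = re.compile(r"release (\d+)\.(\d+)")


def parse_nvcc_release(text):
    """Return (major, minor) from `nvcc -V` output, or None if not found."""
    m = NVCC_RELEASE.search(text)
    return (int(m.group(1)), int(m.group(2))) if m else None


def mnist_cudnn_passed(text):
    """True if the mnistCUDNN sample output reports success."""
    return "test passed" in text.lower()


def run_and_capture(cmd, cwd=None):
    """Run a command and return its combined stdout/stderr as text."""
    result = subprocess.run(cmd, cwd=cwd, stdout=subprocess.PIPE,
                            stderr=subprocess.STDOUT, universal_newlines=True)
    return result.stdout
```

For example, `parse_nvcc_release(run_and_capture(["nvcc", "-V"]))` should return `(10, 2)` on the setup in this post; if it returns None, `nvcc` is probably not on `PATH` yet.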

2.3 Installing pip3

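Some of you may ask what pip is. It is Python's package manager; think of it as a downloader for Python packages. Python is now at version 3, but on this system the plain `pip` command belongs to Python 2.7, so packages installed with it end up under the Python 2.7 paths. In short, it is best to install Python packages with pip3 to avoid trouble later.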
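A habit that avoids mixing up pip and pip3, and also sidesteps the `'main'` import error described next (that error lives in the /usr/bin/pip3 wrapper script), is to run pip as a module of the interpreter you intend to use, i.e. `python3 -m pip ...`. A minimal sketch of that idea from Python; the helper names are hypothetical:

```python
import subprocess
import sys


def pip_command(*args):
    """Return a pip command line bound to the running interpreter (python3 -m pip ...)."""
    return [sys.executable, "-m", "pip"] + list(args)


def pip(*args):
    """Run pip for this interpreter; raises CalledProcessError on failure."""
    subprocess.run(pip_command(*args), check=True)


if __name__ == "__main__":
    # Shows which interpreter the packages would be installed for, e.g. /usr/bin/python3
    print(" ".join(pip_command("install", "--user", "pyserial")))
```

From a shell, the equivalent is simply `python3 -m pip install --user <package>`.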

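```bash
sudo apt-get install python3-pip python3-dev
```

If pip3 later fails with an error saying it cannot import 'main', open the pip3 file under /usr/bin/ (it belongs to root, so use sudo):

```bash
sudo gedit /usr/bin/pip3
```

and change

```python
from pip import main
if __name__ == '__main__':
    sys.exit(main())
```

to

```python
from pip import __main__
if __name__ == '__main__':
    sys.exit(__main__._main())
```

2.4 Installing GPU-enabled TensorFlow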

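First install some dependencies:

```bash
sudo apt-get install libhdf5-serial-dev hdf5-tools
```

Then install the GPU build of TensorFlow:

```bash
pip3 install --extra-index-url https://developer.download.nvidia.com/compute/redist/jp/v42 tensorflow-gpu==1.13.1+nv19.3 --user
```

The `jp/v42` in the URL refers to JetPack 4.2. If your JetPack ships a different CUDA version (this post uses CUDA 10.2), pick the index and TensorFlow version that match your JetPack release, because a wheel built against another CUDA version may fail to import.

If you're not sure the installed TensorFlow works, or the network dropped during the download, test it with the following code: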

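```python
import tensorflow as tf

# Create two constants on the CPU...
with tf.device('/cpu:0'):
    a = tf.constant([1.0, 2.0, 3.0], shape=[3], name='a')
    b = tf.constant([1.0, 2.0, 3.0], shape=[3], name='b')
# ...and add them on the GPU (the Nano has a single GPU, /gpu:0)
with tf.device('/gpu:0'):
    c = a + b

# log_device_placement prints the device each op runs on;
# allow_soft_placement falls back to the CPU if the GPU is unavailable
sess = tf.Session(config=tf.ConfigProto(allow_soft_placement=True, log_device_placement=True))
sess.run(tf.global_variables_initializer())
print(sess.run(c))  # expected: [2. 4. 6.]
```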

Install some commonly used machine-learning libraries:

```bash
sudo apt-get install python3-numpy
sudo apt-get install python3-scipy
sudo apt-get install python3-pandas
sudo apt-get install python3-matplotlib
sudo apt-get install python3-sklearn
```

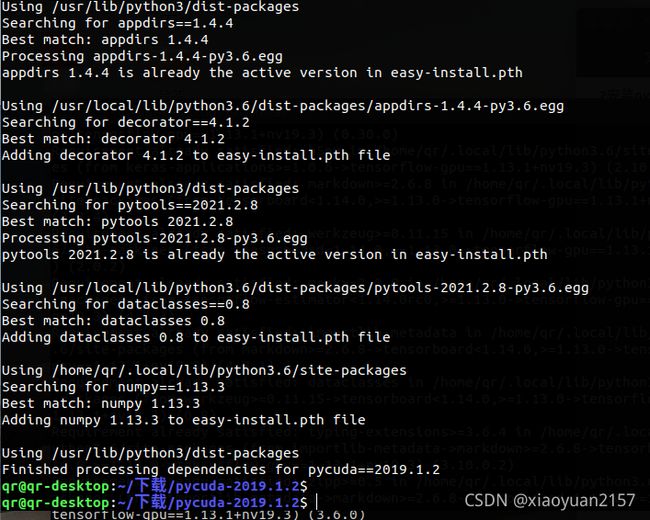
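To confirm that these apt packages are importable from python3, a quick check like the following can help. It is a sketch: the module list assumes the packages above (python3-sklearn provides the `sklearn` module), and `check_modules` is a hypothetical name.

```python
import importlib

# Module names for the apt packages installed above
MODULES = ["numpy", "scipy", "pandas", "matplotlib", "sklearn"]


def check_modules(names=MODULES):
    """Return {module: version string, or None if it cannot be imported}."""
    report = {}
    for name in names:
        try:
            module = importlib.import_module(name)
        except ImportError:
            report[name] = None
        else:
            report[name] = getattr(module, "__version__", "unknown")
    return report


if __name__ == "__main__":
    for name, version in check_modules().items():
        print("%-12s %s" % (name, version or "NOT INSTALLED"))
```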

2.5 Installing pycuda

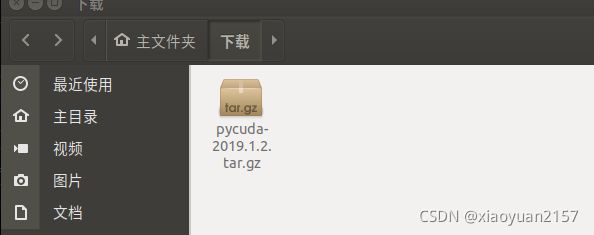

Download the pycuda 2019.1.2 source package (pycuda-2019.1.2). The pycuda source code on GitHub offers many other pycuda versions, but some of them may have compatibility problems:

https://github.com/inducer/pycuda

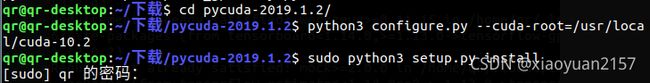

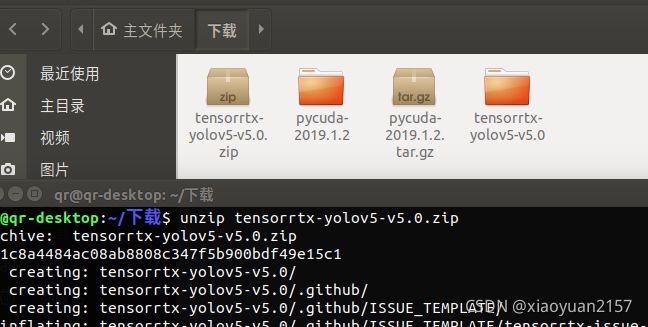

下载完成后来到下载好的路径下,终端打开,依次输入下解压安装

tar zxvf pycuda-2019.1.2.tar.gz

cd pycuda-2019.1.2/

python3 configure.py --cuda-root=/usr/local/cuda-10.2

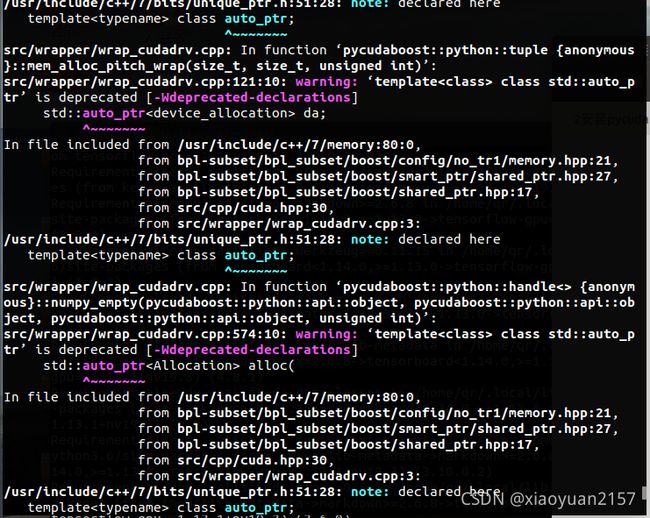

sudo python3 setup.py install安装过程中出现类似下图中的内容可以暂时忽略

稍作等待几分钟,直到出现安装完成

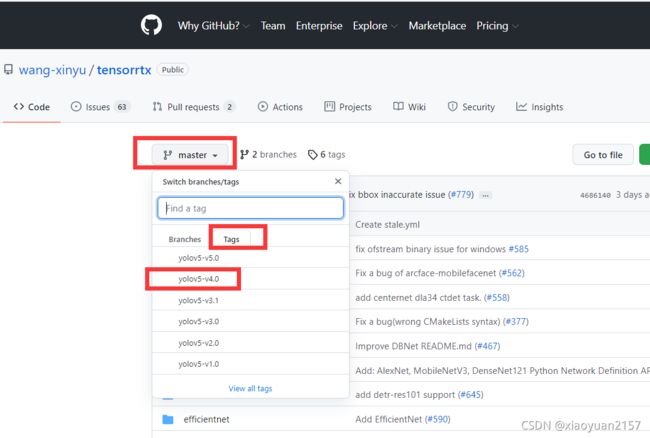
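To check that pycuda can actually compile and run a kernel (this also confirms that `nvcc` is on `PATH`, since `SourceModule` calls it), a small vector addition compared against numpy is a common smoke test. This is a sketch, not part of the original setup; `gpu_vector_add_check` is a hypothetical name, and it returns None on a machine without pycuda or a usable GPU.

```python
def gpu_vector_add_check(n=1000):
    """Add two random float32 vectors on the GPU with pycuda and compare with numpy.

    Returns True/False for the comparison, or None if pycuda/CUDA is unavailable.
    """
    try:
        import numpy as np
        import pycuda.autoinit  # noqa: F401  creates a context on GPU 0
        import pycuda.driver as cuda
        from pycuda.compiler import SourceModule
    except Exception:  # ImportError, or CUDA initialisation failure
        return None

    mod = SourceModule("""
    __global__ void add(float *out, const float *a, const float *b, int n)
    {
        int i = blockIdx.x * blockDim.x + threadIdx.x;
        if (i < n) out[i] = a[i] + b[i];
    }
    """)
    add = mod.get_function("add")

    a = np.random.rand(n).astype(np.float32)
    b = np.random.rand(n).astype(np.float32)
    out = np.empty_like(a)
    threads = 256
    blocks = (n + threads - 1) // threads
    # cuda.In/cuda.Out copy the host arrays to and from the GPU around the call
    add(cuda.Out(out), cuda.In(a), cuda.In(b), np.int32(n),
        block=(threads, 1, 1), grid=(blocks, 1))
    return bool(np.allclose(out, a + b))


if __name__ == "__main__":
    print("pycuda check:", gpu_vector_add_check())
```

On the Nano this should print `pycuda check: True`.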

3.1 Downloading the tensorrtx source code

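Glad you made it this far: you are already halfway there. If it feels like a lot of work, don't worry; once the deployment is done, the results will be worth it.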

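Go to the tensorrtx GitHub repository (wang-xinyu/tensorrtx) and download the version that matches the yolov5 version you trained with: click master --> tags in the upper left and choose the corresponding yolov5 tag.

For example, a model trained with yolov5 v4.0 must be deployed with the yolov5-v4.0 tag of tensorrtx; otherwise building the engine file will fail with errors.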

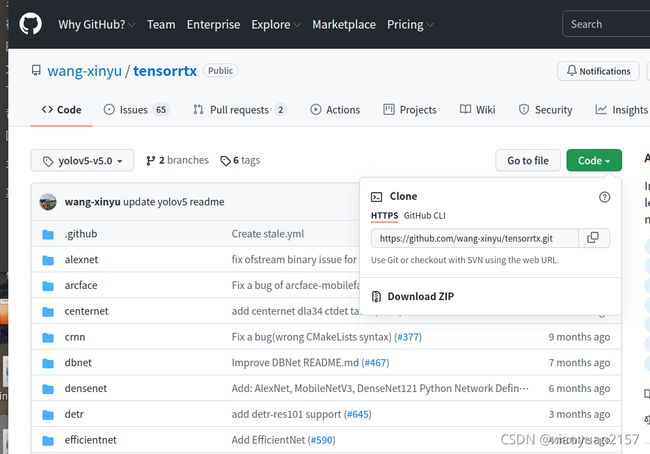

Click the green Code button on the right, then Download ZIP.

Once the download completes, go to the download directory and extract the archive with the command below. I am using v5.0 here.

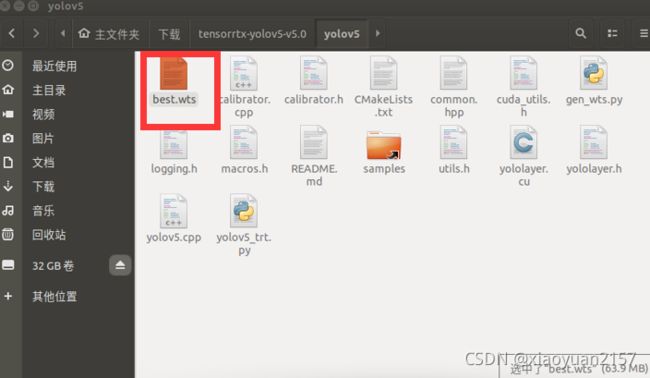

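```bash
unzip tensorrtx-yolov5-v5.0.zip
```

Put the .wts weight file generated from your trained model into the yolov5 folder of tensorrtx.

If you don't have a .wts file and just want to try out the Jetson Nano, you can download my five-class garbage-classification weights first:

Link: https://pan.baidu.com/s/1nciB7Xn1vXj9ZfBAoj39Bw  Extraction code: r74h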

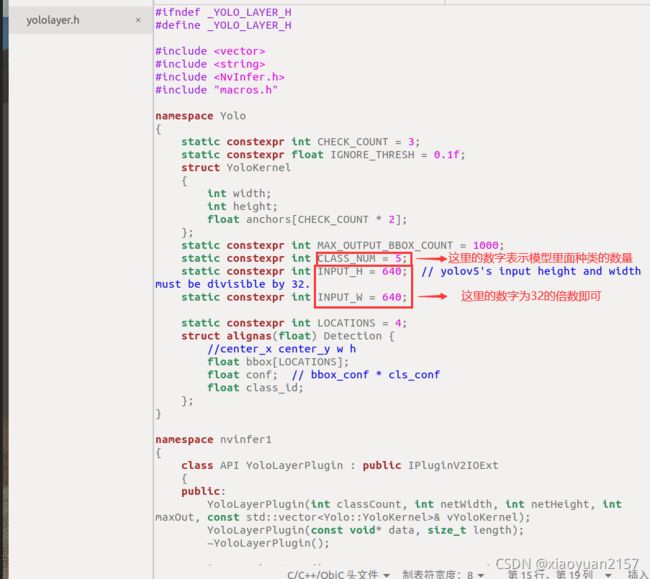

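Go to the yolov5 folder of tensorrtx, open yololayer.h, and set CLASS_NUM to the number of classes your model was trained on (5 for the garbage-classification weights above).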

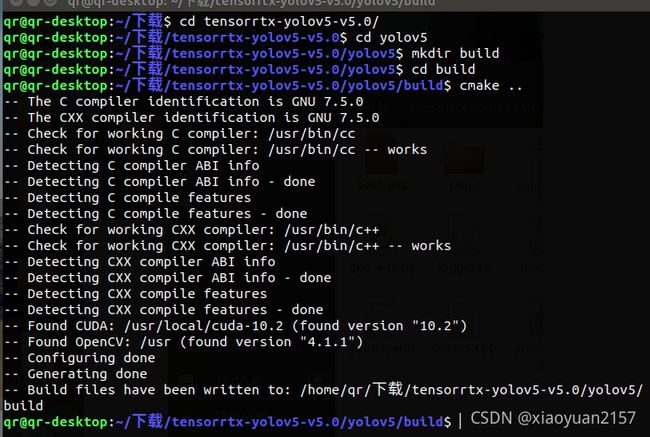
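If you rebuild for different datasets often, the CLASS_NUM edit can be scripted. The sketch below assumes yololayer.h declares the class count on a line like `static constexpr int CLASS_NUM = 80;` (as in the screenshot's version of tensorrtx); check your copy first. The helper names are hypothetical.

```python
import re

# Assumption: yololayer.h contains a line such as
#     static constexpr int CLASS_NUM = 80;
CLASS_NUM_RE = re.compile(r"(\bCLASS_NUM\s*=\s*)\d+")


def set_class_num(source, num_classes):
    """Return header text with the first CLASS_NUM value replaced."""
    new_source, count = CLASS_NUM_RE.subn(r"\g<1>%d" % num_classes, source, count=1)
    if count == 0:
        raise ValueError("CLASS_NUM assignment not found")
    return new_source


def patch_header(path="yololayer.h", num_classes=5):
    """Rewrite CLASS_NUM in place (run from the tensorrtx yolov5 folder)."""
    with open(path) as f:
        text = f.read()
    with open(path, "w") as f:
        f.write(set_class_num(text, num_classes))
```

Because the header is compiled into the plugin library, rerun `make` after changing it.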

Create a build folder, enter it, and compile:

```bash
mkdir build
cd build
cmake ..
make -j6
```

Then generate the engine file:

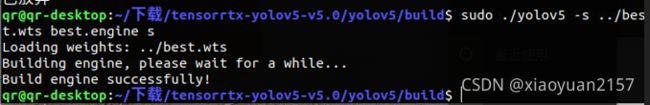

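```bash
sudo ./yolov5 -s ../best.wts best.engine s
```

The general form of this engine-building command is:

```text
sudo ./yolov5 -s [.wts file path] [.engine file name] [s/m/l/x/s6/m6/l6/x6 or c/c6 gd gw]
```

The last argument selects the model size you trained (s, m, l, x, or the P6 variants s6/m6/l6/x6); c/c6 instead take a custom depth multiple (gd) and width multiple (gw).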

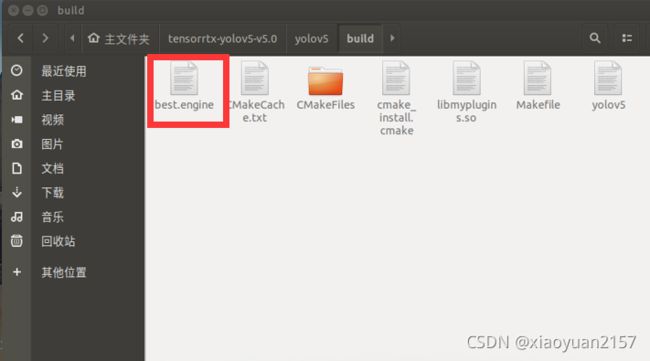

After a short wait, "Build engine successfully!" means the build is complete, and a new best.engine file appears in the build folder.

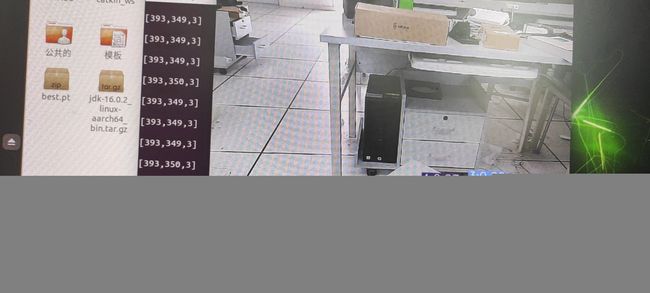
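The argument rules above can be encoded in a small helper so a typo in the size argument is caught before the long build starts. This is a sketch under the assumption that the binary accepts exactly the forms listed above; the function names are hypothetical, and `build_engine` does not use sudo, so run it from a shell with enough permissions for the build folder.

```python
import os
import subprocess

PRESET_SIZES = {"s", "m", "l", "x", "s6", "m6", "l6", "x6"}
CUSTOM_SIZES = {"c", "c6"}


def engine_build_args(wts, engine, size="s", gd=None, gw=None, exe="./yolov5"):
    """Build the argv list for `yolov5 -s <wts> <engine> <size> [gd gw]`."""
    if size in PRESET_SIZES:
        extra = []
    elif size in CUSTOM_SIZES:
        if gd is None or gw is None:
            raise ValueError("custom sizes c/c6 need gd and gw")
        extra = [str(gd), str(gw)]
    else:
        raise ValueError("unknown model size: %r" % size)
    return [exe, "-s", wts, engine, size] + extra


def build_engine(wts, engine, size="s", build_dir="build", **kwargs):
    """Run the tensorrtx binary inside build_dir; True if the engine file exists afterwards."""
    args = engine_build_args(wts, engine, size, **kwargs)
    subprocess.run(args, cwd=build_dir, check=True)
    return os.path.isfile(os.path.join(build_dir, engine))
```

For the command used in this post, `build_engine("../best.wts", "best.engine", "s")` runs `./yolov5 -s ../best.wts best.engine s` inside build/.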

3.2 Testing the model

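Congratulations, the model is now deployed! Let's test it with my script, adapted from the official yolov5_trt.py. Run it from the tensorrtx yolov5 folder, since the plugin library and engine paths are relative to build/.

Pretty great, right?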

"""

# Yolov5 基于pytorch,修改起来更加方便快捷;

# yolov5自带anchor生成器,自动为你的数据集生成最优化的anchor;

# yolov5的整体AP比yolov4更高。

"""

import ctypes

import os

import random

import sys

import threading

import time

# 安装串口函数库 sudo pip3 install pyserial

import serial as ser # 导入串口库

import cv2

import numpy as np # 构造ndarray对象

import pycuda.autoinit

import pycuda.driver as cuda

import tensorrt as trt

from time import sleep

# from jetcam.csi_camera import CSICamera

# import torch

# import torchvision#在nano上安装这两个库是有些麻烦的特别是torchvision。

INPUT_W = 640

INPUT_H = 640

CONF_THRESH = 0.8 # 概率阈值

IOU_THRESHOLD = 0.1

# 定义画框函数

def plot_one_box(x, img, color=None, label=None, line_thickness=None):

'''

description: Plots one bounding box on image img,

this function comes from YoLov5 project.

param:

x: a box likes [x1,y1,x2,y2]

img: a opencv image object

label: str

line_thickness: int

return:

no return

'''

# img, result_boxes, result_scores, result_classid = yolov5_wrapper.infer(img)

# img = draw_boxes(img, result_boxes, result_scores, result_classid)

tl = (

line_thickness or round(0.002 * (img.shape[0] + img.shape[1]) / 2) + 1

) # line/font thickness

color = color or [random.randint(0, 255) for _ in range(3)]

c1, c2 = (int(x[0]), int(x[1])), (int(x[2]), int(x[3]))

cv2.rectangle(img, c1, c2, color, thickness=tl, lineType=cv2.LINE_AA)

# print("left:(" + str(c1[0]) + "," + str(c1[1]) +")","right:(" + str(c2[0]) + "," + str(c2[1])+ ")")

a = int(c1[0])

b = int(c2[0])

c = int(c1[1])

d = int(c2[1])

x1 = (b + a) / 2

x = int(x1)

y1 = (d + c) / 2

y = int(y1)

r = label[2:6] #rate

sleep(0.0009)

c =str(label[0]) #class

# print(c)

# if(c == 0):

# r0 = label[2:6]

# elif(c == 1):

# r1 = label[2:6]

# elif(c == 2):

# r2 = label[2:6]

# elif(c == 3):

# r3 = label[2:6]

# elif(c == 4):

# r4 = label[2:6]

# # output_str = ('[' + str(x) + ',' + str(y) + ',' +str(c) + ']'+'\r\n')

# if(c == 3 or r3>r0 or r3>r2 or r3>r1 or r3>r4):

# print('[' + str(x) + ',' + str(y) + ',' +str(3) + ']'+'\r\n')

# else:

# print(r)

# print(x,y)

#se.write(str(label))

#print(label[0])

#print(r)#label rate

#se.write((int(x) + ',' + int(y) + ',' +int(label[0]) + '\r\n').encode())

# output_str = ('[' + str(x) + ',' + str(y) + ',' +str(label[0]) + ']'+'\r\n')

# print(output_str)

# print(r)

# se.write(output_str.encode())

# sleep(0.0009)

# se.write((x + y + '\r\n').encode())

if label:

tf = max(tl - 1, 1) # font thickness

t_size = cv2.getTextSize(label, 0, fontScale=tl / 3, thickness=tf)[0]

c2 = c1[0] + t_size[0], c1[1] - t_size[1] - 3

cv2.rectangle(img, c1, c2, color, -1, cv2.LINE_AA) # filled

cv2.putText(

img,

label,

(c1[0], c1[1] - 2),

0,

tl / 3,

[225, 255, 255],

thickness=tf,

lineType=cv2.LINE_AA,

)

return x, y

# 画框函数

def draw_boxes(image_raw, result_boxes, result_scores, result_classid):

max_scores = -1

max_index = -1

max_x,max_y = -1,-1

for i in range(len(result_boxes)):

box = result_boxes[i]

x, y = plot_one_box(

box,

image_raw,

label="{}:{:.2f}".format(

categories[int(result_classid[i])], result_scores[i]

)

)

# print(result_classid[i])

# se.write((str(x) + ',' + str(y) + ',' + str(result_classid[i]) + '\r\n').encode())

# global max_score

if result_boxes.all() > max_scores:

max_scores = result_scores[i]

max_index = i

max_x, max_y = x, y

if max_scores != -1:

c = int(result_classid[max_index])

output_str = ('[' + str(x) + ',' + str(y) + ',' +str(c) + ']'+'\r\n')

print(output_str)

se.write(output_str.encode())

sleep(0.0009)

return image_raw

# yolov5模型转到TensorRT中推理

# 定义yolov5转trt的类 start

class YoLov5TRT(object):

"""

description: A YOLOv5 class that warps TensorRT ops, preprocess and postprocess ops.

"""

def __init__(self, engine_file_path):

# Create a Context on this device,

self.ctx = cuda.Device(0).make_context()

stream = cuda.Stream()

TRT_LOGGER = trt.Logger(trt.Logger.INFO)

runtime = trt.Runtime(TRT_LOGGER)

# Deserialize the engine from file

with open(engine_file_path, "rb") as f:

engine = runtime.deserialize_cuda_engine(f.read())

context = engine.create_execution_context()

host_inputs = []

cuda_inputs = []

host_outputs = []

cuda_outputs = []

bindings = []

for binding in engine:

size = trt.volume(engine.get_binding_shape(binding)) * engine.max_batch_size

dtype = trt.nptype(engine.get_binding_dtype(binding))

# Allocate host and device buffers

host_mem = cuda.pagelocked_empty(size, dtype)

cuda_mem = cuda.mem_alloc(host_mem.nbytes)

# Append the device buffer to device bindings.

bindings.append(int(cuda_mem))

# Append to the appropriate list.

if engine.binding_is_input(binding):

host_inputs.append(host_mem)

cuda_inputs.append(cuda_mem)

else:

host_outputs.append(host_mem)

cuda_outputs.append(cuda_mem)

# Store

self.stream = stream

self.context = context

self.engine = engine

self.host_inputs = host_inputs

self.cuda_inputs = cuda_inputs

self.host_outputs = host_outputs

self.cuda_outputs = cuda_outputs

self.bindings = bindings

# 释放引擎,释放GPU显存,释放CUDA流

def __del__(self):

print("delete object to release memory")

def infer(self, input_image_path):

threading.Thread.__init__(self)

# Make self the active context, pushing it on top of the context stack.

self.ctx.push()

# Restore

stream = self.stream

context = self.context

engine = self.engine

host_inputs = self.host_inputs

cuda_inputs = self.cuda_inputs

host_outputs = self.host_outputs

cuda_outputs = self.cuda_outputs

bindings = self.bindings

# Do image preprocess

input_image, image_raw, origin_h, origin_w = self.preprocess_image(

input_image_path

)

# Copy input image to host buffer

np.copyto(host_inputs[0], input_image.ravel())

start = time.time()

# Transfer input data to the GPU.

cuda.memcpy_htod_async(cuda_inputs[0], host_inputs[0], stream)

# Run inference.

context.execute_async(bindings=bindings, stream_handle=stream.handle)

# Transfer predictions back from the GPU.

cuda.memcpy_dtoh_async(host_outputs[0], cuda_outputs[0], stream)

# Synchronize the stream

stream.synchronize()

end = time.time()

# Remove any context from the top of the context stack, deactivating it.

self.ctx.pop()

# Here we use the first row of output in that batch_size = 1

output = host_outputs[0]

# Do postprocess

result_boxes, result_scores, result_classid = self.post_process(

output, origin_h, origin_w

)

# Draw rectangles and labels on the original image

# for i in range(len(result_boxes)):

# box = result_boxes[i]

# plot_one_box(

# box,

# image_raw,

# label="{}:{:.2f}".format(

# categories[int(result_classid[i])], result_scores[i]

# ),

# )

# parent, filename = os.path.split(input_image_path)

# save_name = os.path.join(parent, "output_" + filename)

## Save image

# cv2.imwrite(save_name, image_raw)

# print('{:.2f}ms, saving {}'.format((end - start) * 1000, save_name))

return image_raw, result_boxes, result_scores, result_classid

def destroy(self):

# Remove any context from the top of the context stack, deactivating it.

self.ctx.pop()

def preprocess_image(self, image_raw):

"""

description: Read an image from image path, convert it to RGB,

resize and pad it to target size, normalize to [0,1],

transform to NCHW format.

param:

input_image_path: str, image path

return:

image: the processed image

image_raw: the original image

h: original height

w: original width

"""

h, w, c = image_raw.shape

image = cv2.cvtColor(image_raw, cv2.COLOR_BGR2RGB)

# Calculate widht and height and paddings

r_w = INPUT_W / w

r_h = INPUT_H / h

if r_h > r_w:

tw = INPUT_W

th = int(r_w * h)

tx1 = tx2 = 0

ty1 = int((INPUT_H - th) / 2)

ty2 = INPUT_H - th - ty1

else:

tw = int(r_h * w)

th = INPUT_H

tx1 = int((INPUT_W - tw) / 2)

tx2 = INPUT_W - tw - tx1

ty1 = ty2 = 0

# Resize the image with long side while maintaining ratio

image = cv2.resize(image, (tw, th))

# Pad the short side with (128,128,128)

image = cv2.copyMakeBorder(

image, ty1, ty2, tx1, tx2, cv2.BORDER_CONSTANT, (128, 128, 128)

)

image = image.astype(np.float32)

# Normalize to [0,1]

image /= 255.0

# HWC to CHW format:

image = np.transpose(image, [2, 0, 1])

# CHW to NCHW format

image = np.expand_dims(image, axis=0)

# Convert the image to row-major order, also known as "C order":

image = np.ascontiguousarray(image)

return image, image_raw, h, w

def xywh2xyxy(self, origin_h, origin_w, x):

"""

description: Convert nx4 boxes from [x, y, w, h] to [x1, y1, x2, y2] where xy1=top-left, xy2=bottom-right

param:

origin_h: height of original image

origin_w: width of original image

x: A boxes tensor, each row is a box [center_x, center_y, w, h]

return:

y: A boxes tensor, each row is a box [x1, y1, x2, y2]

"""

y = np.zeros_like(x)

# y = torch.zeros_like(x) if isinstance(x, torch.Tensor) else np.zeros_like(x)

r_w = INPUT_W / origin_w

r_h = INPUT_H / origin_h

if r_h > r_w:

y[:, 0] = x[:, 0] - x[:, 2] / 2

y[:, 2] = x[:, 0] + x[:, 2] / 2

y[:, 1] = x[:, 1] - x[:, 3] / 2 - (INPUT_H - r_w * origin_h) / 2

y[:, 3] = x[:, 1] + x[:, 3] / 2 - (INPUT_H - r_w * origin_h) / 2

y /= r_w

else:

y[:, 0] = x[:, 0] - x[:, 2] / 2 - (INPUT_W - r_h * origin_w) / 2

y[:, 2] = x[:, 0] + x[:, 2] / 2 - (INPUT_W - r_h * origin_w) / 2

y[:, 1] = x[:, 1] - x[:, 3] / 2

y[:, 3] = x[:, 1] + x[:, 3] / 2

y /= r_h

return y

# 往YoLov5TRT这个类中加入一个方法,此处是用numpy的方式实现nms

def nms(self, boxes, scores, iou_threshold=IOU_THRESHOLD): # 非极大值抑制,是目标检测框架中的后处理模块

# 空间距离结合并交比(IOU)完成聚类划分

x1 = boxes[:, 0]

y1 = boxes[:, 1]

x2 = boxes[:, 2]

y2 = boxes[:, 3]

areas = (y2 - y1 + 1) * (x2 - x1 + 1)

scores = scores

keep = []

index = scores.argsort()[::-1]

while index.size > 0:

i = index[0] # every time the first is the biggst, and add it directly

keep.append(i)

x11 = np.maximum(x1[i], x1[index[1:]]) # calculate the points of overlap

y11 = np.maximum(y1[i], y1[index[1:]])

x22 = np.minimum(x2[i], x2[index[1:]])

y22 = np.minimum(y2[i], y2[index[1:]])

w = np.maximum(0, x22 - x11 + 1) # the weights of overlap

h = np.maximum(0, y22 - y11 + 1) # the height of overlap

overlaps = w * h

ious = overlaps / (areas[i] + areas[index[1:]] - overlaps)

idx = np.where(ious <= iou_threshold)[0]

index = index[idx + 1] # because index start from 1

# print(overlaps)

# print(x1)

# sleep(1)

return keep

# 把nms的结果赋值给indices变量,改写post_process函数

def post_process(self, output, origin_h, origin_w):

"""

description: postprocess the prediction

param:

output: A tensor likes [num_boxes,cx,cy,w,h,conf,cls_id, cx,cy,w,h,conf,cls_id, ...]

origin_h: height of original image

origin_w: width of original image

return:

result_boxes: finally boxes, a boxes tensor, each row is a box [x1, y1, x2, y2]

result_scores: finally scores, a tensor, each element is the score correspoing to box

result_classid: finally classid, a tensor, each element is the classid correspoing to box

"""

# Get the num of boxes detected

num = int(output[0])

# Reshape to a two dimentional ndarray

pred = np.reshape(output[1:], (-1, 6))[:num, :]

# to a torch Tensor

# pred = torch.Tensor(pred).cuda()#去掉这行,用torchvision库中的nms方法来完成非极大值抑制。

# Get the boxes

boxes = pred[:, :4]

# Get the scores

scores = pred[:, 4]

# Get the classid

classid = pred[:, 5]

# Choose those boxes that score > CONF_THRESH

si = scores > CONF_THRESH

boxes = boxes[si, :]

scores = scores[si]

classid = classid[si]

# Trandform bbox from [center_x, center_y, w, h] to [x1, y1, x2, y2]

boxes = self.xywh2xyxy(origin_h, origin_w, boxes)

# Do nms

# 去掉cpu方法,因为ndarray没有这个方法

# indices = torchvision.ops.nms(boxes, scores, iou_threshold=IOU_THRESHOLD).cpu()

# result_boxes = boxes[indices, :].cpu()

# result_scores = scores[indices].cpu()

# result_classid = classid[indices].cpu()

indices = self.nms(boxes, scores, IOU_THRESHOLD)

result_boxes = boxes[indices, :]

result_scores = scores[indices]

result_classid = classid[indices]

# print(result_boxes)

# print(result_classid)

return result_boxes, result_scores, result_classid

class myThread(threading.Thread):

def __init__(self, func, args):

threading.Thread.__init__(self)

self.func = func

self.args = args

def run(self):

self.func(*self.args)

# 摄像头检测

def detect_camera(camera, yolov5_wrapper):

# def detect_camera(x,camera, yolov5_wrapper):

count = 0

# c1, c2 = (int(x[0]), int(x[1])), (int(x[2]), int(x[3]))

# cv2.rectangle(img, c1, c2, color, thickness=tl, lineType=cv2.LINE_AA)

# print("left:(" + str(c1[0]) + "," + str(c1[1]) +")","right:(" + str(c2[0]) + "," + str(c2[1])+ ")")

# x = str(c1[0])

# y = str(c1[1])

# print(x)

# 开始循环检测

while True:

# img = camera.read()#CSI摄像头

ret, img = camera.read() # usb摄像头用这个

img, result_boxes, result_scores, result_classid = yolov5_wrapper.infer(img)

img = draw_boxes(img, result_boxes, result_scores, result_classid)

count = count + 1

cv2.imshow("result", img) # 显示结果

if cv2.waitKey(1) == ord('q'):

break

# 定义摄像头函数

def main_camera():

camera = cv2.VideoCapture(0) # usb摄像头用这个

# camera = CSICamera(capture_device=0, width=640, height=480)

# load custom plugins

camera.set(3, 640)

camera.set(4, 480)

PLUGIN_LIBRARY = "build/libmyplugins.so"

ctypes.CDLL(PLUGIN_LIBRARY)

engine_file_path = "build/yolov5s.engine"

# YoLov5TRT instance

yolov5_wrapper = YoLov5TRT(engine_file_path)

print("start detection!")

detect_camera(camera, yolov5_wrapper)

# camera.release() # 使用cv方法打开摄像头才需要这句

cv2.destroyAllWindows()

print("\nfinish!")

if __name__ == "__main__":

# load custom plugins 修改成你build出来的引擎的相对路径

PLUGIN_LIBRARY = "build/libmyplugins.so"

ctypes.CDLL(PLUGIN_LIBRARY)

engine_file_path = "build/yolov5s.engine"

se = ser.Serial('/dev/ttyTHS1', 115200, timeout=0.5) # 设置使用的引脚、波特率和超时时间 8接R,10接T

# load coco labels

# categories = ['battery', 'orange', 'bottle', 'paper_cup', 'spitball'] # 垃圾种类

categories = ['0', '1', '2', '3', '4'] # 垃圾种类

# a YoLov5TRT instance

# yolov5_wrapper = YoLov5TRT(engine_file_path)

# input_image_paths = ["samples/zidane.jpg", "samples/bus.jpg"]

# for input_image_path in input_image_paths:

# # create a new thread to do inference

# thread1 = myThread(yolov5_wrapper.infer, [input_image_path])

# thread1.start()

# thread1.join()

#writen by lyj

## destroy the instance

# yolov5_wrapper.destroy()

main_camera()

#context.pop()
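The part of the script most worth double-checking is the letterbox arithmetic: preprocess_image() scales the frame by the smaller of INPUT_W/w and INPUT_H/h and pads the other side, and xywh2xyxy() undoes exactly that. The standalone numpy sketch below reproduces the same math so you can test it on a PC without TensorRT; the helper names `letterbox_padding` and `to_original_xyxy` are hypothetical.

```python
import numpy as np

INPUT_W, INPUT_H = 640, 640  # network input size, as in the script


def letterbox_padding(w, h, input_w=INPUT_W, input_h=INPUT_H):
    """Return (scale, pad_x, pad_y): the resize ratio and the left/top padding
    a w x h frame receives when letterboxed into input_w x input_h."""
    r_w, r_h = input_w / w, input_h / h
    if r_h > r_w:  # width limits the scale: pad top and bottom
        return r_w, 0.0, (input_h - r_w * h) / 2
    return r_h, (input_w - r_h * w) / 2, 0.0  # height limits: pad left and right


def to_original_xyxy(boxes_xywh, w, h, input_w=INPUT_W, input_h=INPUT_H):
    """Map [cx, cy, bw, bh] boxes in network coordinates to [x1, y1, x2, y2]
    in the original frame (same arithmetic as YoLov5TRT.xywh2xyxy)."""
    x = np.asarray(boxes_xywh, dtype=np.float32)
    y = np.zeros_like(x)
    r, pad_x, pad_y = letterbox_padding(w, h, input_w, input_h)
    y[:, 0] = x[:, 0] - x[:, 2] / 2 - pad_x
    y[:, 2] = x[:, 0] + x[:, 2] / 2 - pad_x
    y[:, 1] = x[:, 1] - x[:, 3] / 2 - pad_y
    y[:, 3] = x[:, 1] + x[:, 3] / 2 - pad_y
    return y / r
```

For the 640x480 frames the script requests, the scale is 1.0 and the frame is padded by 80 pixels at the top and bottom, so a network box centred at (320, 320) with size 100x50 maps back to (270, 215)-(370, 265). For a 1280x720 frame the scale is 0.5 with 140 pixels of vertical padding, and the same box maps to (540, 310)-(740, 410).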

References

"Installing pycuda on the Jetson Nano!!!", 帅的发光发亮's blog, CSDN

"Jetson Nano Configuration and Use (5): CUDA Testing and TensorFlow GPU Installation", u013617229's blog, CSDN