[PyTorch Study Notes] Part 5: Training a Classifier

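Contents

- 1. Data

- 2. Training an Image Classifier

  - 2.1 Loading and Normalizing CIFAR10

  - 2.2 Viewing Training Images

- 3. Defining the Network, Loss Function, and Optimizer; Training and Saving the Model

- 4. Testing the Model

- 5. Training on the GPU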

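1. Data

Generally, when you have to deal with image, text, audio, or video data, you can use standard Python packages that load the data into a NumPy array. You can then convert this array into a `torch.*Tensor`.

- For images, packages such as Pillow and OpenCV are useful

- For audio, use packages such as SciPy and librosa

- For text, either raw Python- or Cython-based loading, or NLTK and SpaCy, are useful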
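Here is a minimal sketch of the NumPy-to-tensor hand-off. It assumes an image that has already been decoded into a `(H, W, C)` uint8 array. The array below is random stand-in data, not a real image. `torch.from_numpy` shares memory with the array. `permute` reorders the axes into the `(C, H, W)` layout that PyTorch convolution layers expect.

```python
import numpy as np
import torch

# Stand-in for an image loaded by Pillow/OpenCV: height 32, width 32, 3 channels, uint8
hwc = np.random.randint(0, 256, size=(32, 32, 3), dtype=np.uint8)

# from_numpy wraps the same memory (no copy is made)
t = torch.from_numpy(hwc)

# Reorder to (C, H, W) and scale to float values in [0, 1]
chw = t.permute(2, 0, 1).float() / 255.0

print(tuple(t.shape), tuple(chw.shape))  # (32, 32, 3) (3, 32, 32)
```

OpenCV's `cv2.imread` returns channels in BGR order. Arrays from OpenCV may therefore need a channel flip before they are used with a model trained on RGB images.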

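For vision specifically, PyTorch provides a package called `torchvision`. It includes data loaders for common datasets such as ImageNet, CIFAR10, and MNIST, along with data transformers for images (see `torchvision.datasets` and `torch.utils.data.DataLoader`).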

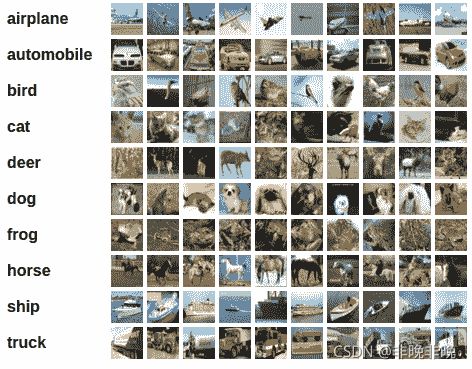

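In this tutorial, we use the CIFAR10 dataset. It has the classes 'airplane', 'automobile', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', and 'truck'. The images in CIFAR-10 are of size 3x32x32, i.e. 3-channel color images of 32x32 pixels.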

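2. Training an Image Classifier

We will do the following steps in order:

- Load and normalize the CIFAR10 training and test datasets using torchvision

- Define a convolutional neural network

- Define a loss function

- Train the network on the training data

- Test the network on the test data

Each step is described below.

2.1 Loading and Normalizing CIFAR10

TorchVision datasets return PILImage images. `transforms.ToTensor()` converts them into tensors with values in [0, 1]. `transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))` then maps each channel to the normalized range [-1, 1].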
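`Normalize(mean, std)` computes `output[c] = (input[c] - mean[c]) / std[c]` for each channel `c`. With mean = std = 0.5, it sends 0 to -1, 0.5 to 0, and 1 to 1. The sketch below reproduces that arithmetic with plain tensor operations and does not call torchvision. It also checks that `x / 2 + 0.5`, the un-normalization used later in `imshow`, inverts the transform.

```python
import torch

mean, std = 0.5, 0.5

# Values in [0, 1], as produced by transforms.ToTensor()
x = torch.tensor([0.0, 0.25, 0.5, 0.75, 1.0])

# The per-channel formula Normalize applies: (x - mean) / std
normalized = (x - mean) / std   # maps [0, 1] onto [-1, 1]

# The inverse used by imshow(): img / 2 + 0.5
restored = normalized / 2 + 0.5

print(torch.allclose(restored, x))  # True
```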

```python
import torch
import torchvision
import torchvision.transforms as transforms

# ToTensor(): PIL image -> float tensor in [0, 1]; Normalize(): [0, 1] -> [-1, 1] per channel
transform = transforms.Compose(
    [transforms.ToTensor(),
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

# Download the training set (if not already present) and apply the transform
trainset = torchvision.datasets.CIFAR10(root='./data', train=True,
                                        download=True, transform=transform)

# Wrap it in a DataLoader: shuffled mini-batches of 4, loaded by 2 worker processes
# (on Windows, code using num_workers > 0 must run under `if __name__ == '__main__':`)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
                                          shuffle=True, num_workers=2)

testset = torchvision.datasets.CIFAR10(root='./data', train=False,
                                       download=True, transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=4,
                                         shuffle=False, num_workers=2)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')
```

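Output:

```
Downloading https://www.cs.toronto.edu/~kriz/cifar-10-python.tar.gz to ./data/cifar-10-python.tar.gz
170499072it [22:13, 127813.17it/s]
Extracting ./data/cifar-10-python.tar.gz to ./data
Files already downloaded and verified
```

This creates a `data` folder in the current directory. It contains the downloaded archive and the extracted `cifar-10-batches-py` data. The last line comes from the test-set call: the archive is already present, so it is not downloaded again.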
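With `batch_size=4`, one pass over the 50,000 CIFAR-10 training images takes 50000 / 4 = 12,500 mini-batches. The 10,000 test images give 2,500 batches. The small sketch below only does that arithmetic in plain Python. It explains why the training log in section 3, which prints when `i % 2000 == 1999`, shows six entries per epoch, ending at 12000.

```python
import math

train_size, test_size, batch_size = 50_000, 10_000, 4

# A DataLoader yields ceil(N / batch_size) batches when drop_last=False (the default)
train_batches = math.ceil(train_size / batch_size)
test_batches = math.ceil(test_size / batch_size)

# Mini-batch counts at which the training loop prints (i % 2000 == 1999)
log_points = [i + 1 for i in range(train_batches) if i % 2000 == 1999]

print(train_batches, test_batches)  # 12500 2500
print(log_points)                   # [2000, 4000, 6000, 8000, 10000, 12000]
```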

2.2 Viewing Training Images

```python
import matplotlib.pyplot as plt
import numpy as np

# function to show an image
def imshow(img):
    img = img / 2 + 0.5     # unnormalize: [-1, 1] -> [0, 1]
    npimg = img.numpy()
    plt.imshow(np.transpose(npimg, (1, 2, 0)))  # (C, H, W) -> (H, W, C)
    plt.show()

# get some random training images
dataiter = iter(trainloader)
images, labels = next(dataiter)  # use next(); dataiter.next() fails in recent PyTorch versions

# show images
imshow(torchvision.utils.make_grid(images))

# print labels
print(' '.join('%5s' % classes[labels[j]] for j in range(4)))
```

Output:

```
cat frog deer truck
```
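`make_grid` returns a tensor laid out as (channels, height, width). `matplotlib.pyplot.imshow` expects (height, width, channels) for RGB data, which is why `imshow` calls `np.transpose(npimg, (1, 2, 0))`. Here is a NumPy-only sketch of that step. It uses a random non-square array as a stand-in for a real grid.

```python
import numpy as np

# Stand-in for img.numpy() on a normalized grid: shape (C, H, W), values in [-1, 1)
rng = np.random.default_rng(0)
chw = rng.uniform(-1.0, 1.0, size=(3, 32, 48)).astype(np.float32)

# Undo Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)): back to [0, 1)
unnorm = chw / 2 + 0.5

# Move the channel axis last: (C, H, W) -> (H, W, C), the layout plt.imshow expects
hwc = np.transpose(unnorm, (1, 2, 0))

print(chw.shape, '->', hwc.shape)  # (3, 32, 48) -> (32, 48, 3)
```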

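3. Defining the Network, Loss Function, and Optimizer; Training and Saving the Model

The script below performs these steps in order:

- Load and normalize CIFAR10

- Define the neural network (the custom network from the previous part)

- Define the loss function and optimizer

- Train the network

- Save the trained model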
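`nn.CrossEntropyLoss` takes two inputs: the raw, unnormalized scores (logits) that `fc3` outputs, and integer class indices. Internally it combines `log_softmax` with the negative log-likelihood loss, which is why `Net` ends without a softmax layer. Below is a small check of that equivalence. The logits and labels are made-up tensors for illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)

logits = torch.randn(4, 10)           # batch of 4, 10 classes, like Net's output
labels = torch.tensor([3, 8, 8, 0])   # class indices, like CIFAR-10 labels

criterion = nn.CrossEntropyLoss()     # default reduction='mean'
loss = criterion(logits, labels)

# Same value by hand: log_softmax followed by negative log-likelihood
manual = F.nll_loss(F.log_softmax(logits, dim=1), labels)

# Or fully explicit: average of -log p(correct class)
manual2 = -F.log_softmax(logits, dim=1)[torch.arange(4), labels].mean()

print(torch.allclose(loss, manual), torch.allclose(loss, manual2))  # True True
```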

```python
# 1. Load and normalize CIFAR10
import torch
import torchvision
import torchvision.transforms as transforms

transform = transforms.Compose(
    [transforms.ToTensor(),
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

trainset = torchvision.datasets.CIFAR10(root='./data', train=True,
                                        download=True, transform=transform)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
                                          shuffle=True, num_workers=2)

testset = torchvision.datasets.CIFAR10(root='./data', train=False,
                                       download=True, transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=4,
                                         shuffle=False, num_workers=2)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

# 2. Define the neural network
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)        # 3 input channels, 6 output channels, 5x5 kernel
        self.pool = nn.MaxPool2d(2, 2)         # 2x2 max pooling with stride 2
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.fc1 = nn.Linear(16 * 5 * 5, 120)  # 16 feature maps of 5x5 after the second pool
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)           # one output per class

    def forward(self, x):
        x = self.pool(F.relu(self.conv1(x)))
        x = self.pool(F.relu(self.conv2(x)))
        x = x.view(-1, 16 * 5 * 5)             # flatten to (batch, 400)
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)                        # raw scores (logits)
        return x


net = Net()

# 3. Define the loss function and optimizer
import torch.optim as optim

criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(net.parameters(), lr=0.001, momentum=0.9)

# 4. Train the network
for epoch in range(2):  # loop over the dataset multiple times

    running_loss = 0.0
    for i, data in enumerate(trainloader, 0):
        # get the inputs; data is a list of [inputs, labels]
        inputs, labels = data

        # zero the parameter gradients
        optimizer.zero_grad()

        # forward + backward + optimize
        outputs = net(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()

        # print statistics
        running_loss += loss.item()
        if i % 2000 == 1999:    # print every 2000 mini-batches
            print('[%d, %5d] loss: %.3f' %
                  (epoch + 1, i + 1, running_loss / 2000))
            running_loss = 0.0

print('Finished Training')

# 5. Save the trained model (only the learned parameters, via state_dict)
PATH = './cifar_net.pth'
torch.save(net.state_dict(), PATH)
```

Output:

```
[1, 2000] loss: 2.234
[1, 4000] loss: 1.920
[1, 6000] loss: 1.699
[1, 8000] loss: 1.623
[1, 10000] loss: 1.535
[1, 12000] loss: 1.496
[2, 2000] loss: 1.408
[2, 4000] loss: 1.376
[2, 6000] loss: 1.342
[2, 8000] loss: 1.340
[2, 10000] loss: 1.281
[2, 12000] loss: 1.285
Finished Training
```

The trained model is saved as `cifar_net.pth` in the current directory.
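The `16 * 5 * 5` input size of `fc1` follows from the spatial sizes. Each 5x5 convolution without padding shrinks a side by 4, and each 2x2 max-pool halves it, so a side goes 32 → 28 → 14 → 10 → 5. The sketch below re-creates the same convolution and pooling layers as `Net` so that it runs on its own. It prints the shape after each stage for a dummy batch of four 3x32x32 images.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

# Same layer configuration as Net (recreated so this snippet is self-contained)
conv1 = nn.Conv2d(3, 6, 5)
pool = nn.MaxPool2d(2, 2)
conv2 = nn.Conv2d(6, 16, 5)

stages = [
    ("conv1 + relu", lambda t: F.relu(conv1(t))),  # 32 - 5 + 1 = 28
    ("pool", pool),                                # 28 / 2 = 14
    ("conv2 + relu", lambda t: F.relu(conv2(t))),  # 14 - 5 + 1 = 10
    ("pool", pool),                                # 10 / 2 = 5
]

x = torch.randn(4, 3, 32, 32)  # dummy batch of four 3x32x32 images
shapes = []
for name, layer in stages:
    x = layer(x)
    shapes.append(tuple(x.shape))
    print(f"{name:>12}: {tuple(x.shape)}")

flat = torch.flatten(x, 1)  # keep the batch dimension, flatten channels x height x width
print("flattened:", tuple(flat.shape))  # 16 * 5 * 5 = 400 features per image
```

`torch.flatten(x, 1)` gives the same result as `x.view(-1, 16 * 5 * 5)` here. It has the advantage of not hard-coding the feature count.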

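4. Testing the Model

We have trained the network for 2 passes over the training dataset, but we need to check whether it has learned anything at all. We do this by predicting the class label that the network outputs and checking it against the ground truth. Every correct prediction counts toward the accuracy.

The steps are:

- Load and normalize CIFAR10

- Display images from the test set to get familiar with them

- Load the saved model

- Output the energies for the 10 classes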
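Only the `state_dict` (a dictionary of parameter tensors) was saved. The model class must therefore be instantiated again before `load_state_dict` can restore the weights, which is why the test script below repeats the `Net` definition. Below is a minimal round-trip sketch. It assumes a tiny stand-in `nn.Linear` model, and it uses an in-memory `io.BytesIO` buffer in place of `./cifar_net.pth`.

```python
import io

import torch
import torch.nn as nn

torch.manual_seed(0)
trained = nn.Linear(4, 2)          # stand-in for the trained Net

buffer = io.BytesIO()              # in-memory substitute for PATH
torch.save(trained.state_dict(), buffer)
buffer.seek(0)

restored = nn.Linear(4, 2)         # same architecture, fresh random weights
restored.load_state_dict(torch.load(buffer))

x = torch.randn(3, 4)
print(torch.allclose(trained(x), restored(x)))  # True
```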

```python
# 1. Load and normalize CIFAR10
import torch
import torchvision
import torchvision.transforms as transforms

transform = transforms.Compose(
    [transforms.ToTensor(),
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

trainset = torchvision.datasets.CIFAR10(root='./data', train=True,
                                        download=True, transform=transform)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
                                          shuffle=True, num_workers=2)

testset = torchvision.datasets.CIFAR10(root='./data', train=False,
                                       download=True, transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=4,
                                         shuffle=False, num_workers=2)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

# 2. Display images from the test set to get familiar with them
import matplotlib.pyplot as plt
import numpy as np

# function to show an image
def imshow(img):
    img = img / 2 + 0.5     # unnormalize
    npimg = img.numpy()
    plt.imshow(np.transpose(npimg, (1, 2, 0)))
    plt.show()

dataiter = iter(testloader)
images, labels = next(dataiter)  # use next(); dataiter.next() fails in recent PyTorch versions

# show images
imshow(torchvision.utils.make_grid(images))
print('GroundTruth: ', ' '.join('%5s' % classes[labels[j]] for j in range(4)))

# 3. Load the model (the class must be defined again to rebuild the network)
import torch.nn as nn
import torch.nn.functional as F


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.fc1 = nn.Linear(16 * 5 * 5, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)

    def forward(self, x):
        x = self.pool(F.relu(self.conv1(x)))
        x = self.pool(F.relu(self.conv2(x)))
        x = x.view(-1, 16 * 5 * 5)
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x


net = Net()
PATH = './cifar_net.pth'
net.load_state_dict(torch.load(PATH))

# 4. Output the energies for the 10 classes
outputs = net(images)
print(outputs)

# The class with the highest energy is the prediction
_, predicted = torch.max(outputs, 1)
print('Predicted: ', ' '.join('%5s' % classes[predicted[j]] for j in range(4)))
```

Output:

```
GroundTruth: cat ship ship plane
tensor([[-1.1036, -1.1703,  0.1451,  2.0333, -0.7547,  1.0753,  2.0552, -1.2556,
         -0.7143, -0.8021],
        [ 4.6933,  6.5281, -0.7193, -3.1148, -1.8851, -3.8340, -3.7057, -4.3794,
          6.2864,  3.4431],
        [ 2.9412,  3.4974, -0.8135, -1.6855, -0.9687, -2.5153, -3.1499, -2.1516,
          3.8812,  2.0316],
        [ 3.4499,  2.1135, -0.1122, -1.5846,  0.2921, -2.5501, -2.5868, -0.8204,
          2.3701,  1.0973]], grad_fn=<AddmmBackward>)
Predicted: frog car ship plane
```
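The "energies" are unnormalized scores (logits). The predicted class is the index of the largest score in each row, which is what `torch.max(outputs, 1)` returns. Applying that to the four rows printed above reproduces the `Predicted` line. `softmax` turns the same scores into probabilities if those are needed.

```python
import torch

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

# The four rows of logits printed above
outputs = torch.tensor([
    [-1.1036, -1.1703, 0.1451, 2.0333, -0.7547, 1.0753, 2.0552, -1.2556, -0.7143, -0.8021],
    [4.6933, 6.5281, -0.7193, -3.1148, -1.8851, -3.8340, -3.7057, -4.3794, 6.2864, 3.4431],
    [2.9412, 3.4974, -0.8135, -1.6855, -0.9687, -2.5153, -3.1499, -2.1516, 3.8812, 2.0316],
    [3.4499, 2.1135, -0.1122, -1.5846, 0.2921, -2.5501, -2.5868, -0.8204, 2.3701, 1.0973],
])

values, predicted = torch.max(outputs, 1)   # max score and its index for each row
print('Predicted:', ' '.join(classes[j] for j in predicted.tolist()))
# Predicted: frog car ship plane

probs = torch.softmax(outputs, dim=1)       # each row now sums to 1
```

The first row is a near miss. The highest score goes to frog (2.0552), just ahead of cat (2.0333), which is the ground-truth label.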

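Let's look at how the network performs on the whole test set of 10,000 images.

```python
correct = 0
total = 0
# Gradients are not needed for evaluation; no_grad() saves memory and time
with torch.no_grad():
    for data in testloader:
        images, labels = data
        outputs = net(images)
        # The class with the highest energy is the prediction
        _, predicted = torch.max(outputs, 1)
        total += labels.size(0)
        correct += (predicted == labels).sum().item()

print('Accuracy of the network on the 10000 test images: %d %%' % (
    100 * correct / total))
```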

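Output:

```
Accuracy of the network on the 10000 test images: 53 %
```

53% is well above the 10% expected from random guessing among 10 classes, so the network has clearly learned something.

Let's see which classes performed well and which did not: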

```python
class_correct = list(0. for i in range(10))
class_total = list(0. for i in range(10))
with torch.no_grad():
    for data in testloader:
        images, labels = data
        outputs = net(images)
        _, predicted = torch.max(outputs, 1)
        c = (predicted == labels)  # boolean tensor: True where the prediction is correct
        for i in range(labels.size(0)):  # actual batch size instead of a hard-coded 4
            label = labels[i]
            class_correct[label] += c[i].item()
            class_total[label] += 1

for i in range(10):
    print('Accuracy of %5s : %2d %%' % (
        classes[i], 100 * class_correct[i] / class_total[i]))
```

Output:

```
Accuracy of plane : 61 %
Accuracy of car : 83 %
Accuracy of bird : 17 %
Accuracy of cat : 21 %
Accuracy of deer : 50 %
Accuracy of dog : 47 %
Accuracy of frog : 67 %
Accuracy of horse : 78 %
Accuracy of ship : 56 %
Accuracy of truck : 49 %
```

Car has the highest accuracy (83%), followed by horse (78%). Bird (17%) and cat (21%) are the weakest classes.
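The per-class loop above updates the counters one sample at a time in Python. As an alternative sketch, not the tutorial's method, `torch.bincount` can count totals and hits for every class at once. The labels and predictions below are toy values for illustration, not results from the trained model.

```python
import torch

num_classes = 10

# Toy ground truth and predictions (illustration only)
labels = torch.tensor([3, 8, 8, 0, 6, 6, 1, 6])
predicted = torch.tensor([6, 1, 8, 0, 6, 3, 1, 6])

correct = predicted == labels

# Per-class totals and correct counts; minlength keeps all 10 bins
class_total = torch.bincount(labels, minlength=num_classes)
class_correct = torch.bincount(labels[correct], minlength=num_classes)

for i in range(num_classes):
    if class_total[i] > 0:  # skip classes absent from this toy batch
        acc = 100.0 * class_correct[i].item() / class_total[i].item()
        print(f'class {i}: {acc:.0f}% ({class_correct[i].item()}/{class_total[i].item()})')
```

Inside the real evaluation loop, the two `bincount` results would be added to running totals batch by batch (for example `class_total += torch.bincount(labels, minlength=10)`).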

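5. Training on the GPU

Just as you transfer a tensor onto the GPU, you transfer the neural network onto the GPU.

First, if CUDA is available, define the device as the first visible CUDA device:

```python
device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Assuming that we are on a CUDA machine, this should print a CUDA device:
print(device)
```

`net.to(device)` then recursively goes over all modules and converts their parameters and buffers to CUDA tensors:

```python
net.to(device)
```

Remember that you also have to send the inputs and targets to the GPU at every step. Unlike `net.to(device)`, which moves the module in place, `tensor.to(device)` returns a new tensor, so the result must be assigned:

```python
inputs, labels = data[0].to(device), data[1].to(device)  # reference only: replaces `inputs, labels = data` inside the training loop
```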
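Putting these pieces together, a device-aware training step might look like the minimal sketch below. It assumes a tiny stand-in model and one random batch, not the CIFAR-10 `Net` and `trainloader`. It falls back to the CPU when CUDA is unavailable, so it also runs without a GPU.

```python
import torch
import torch.nn as nn
import torch.optim as optim

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# Tiny stand-in model; .to(device) moves its parameters and buffers
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 32 * 32, 10)).to(device)
criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.001, momentum=0.9)

# One random batch standing in for a DataLoader item: [images, labels]
data = [torch.randn(4, 3, 32, 32), torch.randint(0, 10, (4,))]

# Inputs and targets must live on the same device as the model
inputs, labels = data[0].to(device), data[1].to(device)

optimizer.zero_grad()
outputs = model(inputs)
loss = criterion(outputs, labels)
loss.backward()
optimizer.step()

print(device, loss.item())
```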