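Study Notes: Named Entity Recognition with Bi-LSTM + CRF (PyTorch Implementation)

Preface:

These notes walk through the official PyTorch Bi-LSTM + CRF example code and the ideas behind it, as I understand them. I am new to NLP, so corrections from more experienced readers are welcome.

Original tutorial: https://pytorch.org/tutorials/beginner/nlp/advanced_tutorial.html#bi-lstm-conditional-random-field-discussion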

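Let's set the theory aside for now and look at how the code works.

I. The main function (data preparation, training, checking the results)

1. Prepare the data

Words (input): every word that appears in the training sentences goes into a dictionary whose keys are the words and whose values are integer indices.

Tags (output): the tags go into a dictionary in the same way. These are the B/I/O entity tags plus a start and a stop marker; there are so few of them that the dictionary is simply written out by hand.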

import torch
import torch.optim as optim

if __name__ == '__main__':
    START_TAG = "<START>"
    STOP_TAG = "<STOP>"
    EMBEDDING_DIM = 5
    HIDDEN_DIM = 4

    # Prepare the data: each sentence paired with the tag of every word
    training_data = [(
        "the wall street journal reported today that apple corporation made money".split(),
        "B I I I O O O B I O O".split()
    ), (
        "georgia tech is a university in georgia".split(),
        "B I O O O O B".split()
    )]

    # Build the vocabulary; word_to_ix maps each word to an integer index
    word_to_ix = {}
    for sentence, tags in training_data:
        for word in sentence:
            if word not in word_to_ix:
                word_to_ix[word] = len(word_to_ix)

    # Map the tags to integer indices
    tag_to_ix = {"B": 0, "I": 1, "O": 2, START_TAG: 3, STOP_TAG: 4}

    # Create the model and the optimizer
    model = BiLSTM_CRF(len(word_to_ix), tag_to_ix, EMBEDDING_DIM, HIDDEN_DIM)
    optimizer = optim.SGD(model.parameters(), lr=0.01, weight_decay=1e-4)

    # Check predictions before training
    with torch.no_grad():
        precheck_sent = prepare_sequence(training_data[0][0], word_to_ix)
        precheck_tags = torch.tensor([tag_to_ix[t] for t in training_data[0][1]], dtype=torch.long)
        print(model(precheck_sent))

    # Make sure prepare_sequence from earlier in the LSTM section is loaded
    # Training
    for epoch in range(300):  # again, normally you would NOT do 300 epochs, it is toy data
        for sentence, tags in training_data:
            # Step 1. Remember that Pytorch accumulates gradients.
            # We need to clear them out before each instance
            model.zero_grad()

            # Step 2. Get our inputs ready for the network, that is,
            # turn them into Tensors of word indices.
            sentence_in = prepare_sequence(sentence, word_to_ix)
            targets = torch.tensor([tag_to_ix[t] for t in tags], dtype=torch.long)

            # Step 3. Run our forward pass.
            loss = model.neg_log_likelihood(sentence_in, targets)

            # Step 4. Compute the loss, gradients, and update the parameters by
            # calling optimizer.step()
            loss.backward()
            optimizer.step()

    # Check predictions after training
    with torch.no_grad():
        precheck_sent = prepare_sequence(training_data[0][0], word_to_ix)
        print(model(precheck_sent))
    # We got it!

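2. Train on the data

We will come back to the training procedure later. For now, treat the network as a black box: a sentence goes in, and the tag of each word comes out.

3. Compare the predictions before and after training

Before training the output is (tensor(2.6907), [1, 2, 2, 2, 2, 2, 2, 2, 2, 2, 1]). Under our tag definitions, the predicted tags differ from the gold tags [0, 1, 1, 1, 2, 2, 2, 0, 1, 2, 2].

After training the output is (tensor(20.4906), [0, 1, 1, 1, 2, 2, 2, 0, 1, 2, 2]), which matches the gold tags exactly.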
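The index lists above are easier to read once they are mapped back to tag names. The following is only a small sketch: `ix_to_tag` and `decode_tags` are hypothetical helper names that do not appear in the tutorial code, and the start/stop markers are assumed to be the strings "<START>" and "<STOP>".

```python
# Tag dictionary as defined in the notes; the two marker strings are an assumption.
tag_to_ix = {"B": 0, "I": 1, "O": 2, "<START>": 3, "<STOP>": 4}

# Hypothetical reverse lookup: index -> tag name.
ix_to_tag = {ix: tag for tag, ix in tag_to_ix.items()}


def decode_tags(tag_ids):
    """Convert a list of tag indices into the corresponding tag strings."""
    return [ix_to_tag[i] for i in tag_ids]


before_training = [1, 2, 2, 2, 2, 2, 2, 2, 2, 2, 1]
after_training = [0, 1, 1, 1, 2, 2, 2, 0, 1, 2, 2]

print(decode_tags(before_training))  # starts with "I", which is not a valid BIO start
print(decode_tags(after_training))   # B I I I O O O B I O O
```

Read this way, the untrained model opens the sentence with an I tag, while the trained model reproduces the gold sequence.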

with torch.no_grad():
    precheck_sent = prepare_sequence(training_data[0][0], word_to_ix)
    precheck_tags = torch.tensor([tag_to_ix[t] for t in training_data[0][1]], dtype=torch.long)
    print(model(precheck_sent))

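This code uses a prepare_sequence method, which simply looks up every word of the sentence in the vocabulary and returns the resulting sequence of indices.

For example, the 11 words of the first sentence, "the wall street journal reported today that apple corporation made money", were added to the vocabulary in order, so looking them up gives [0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10], which is returned as the tensor tensor([ 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10]).

Likewise, the second sentence maps to [11, 12, 13, 14, 15, 16, 11]; "georgia" appears twice and therefore gets the same index, 11, both times.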

def prepare_sequence(seq, to_ix):
    idxs = [to_ix[w] for w in seq]
    return torch.tensor(idxs, dtype=torch.long)
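To check the index sequences described above, the vocabulary construction and prepare_sequence can be run on their own. This is a minimal, self-contained sketch; the variable names `sentences`, `first`, and `second` are mine, not the tutorial's.

```python
import torch


def prepare_sequence(seq, to_ix):
    # Look up each word in the vocabulary and return the indices as a LongTensor.
    idxs = [to_ix[w] for w in seq]
    return torch.tensor(idxs, dtype=torch.long)


sentences = [
    "the wall street journal reported today that apple corporation made money".split(),
    "georgia tech is a university in georgia".split(),
]

# Assign each new word the next free index, in order of first appearance.
word_to_ix = {}
for sentence in sentences:
    for word in sentence:
        if word not in word_to_ix:
            word_to_ix[word] = len(word_to_ix)

first = prepare_sequence(sentences[0], word_to_ix)
second = prepare_sequence(sentences[1], word_to_ix)
print(first)   # indices 0 through 10
print(second)  # "georgia" maps to 11 at both ends
```

Note that a word missing from the vocabulary would raise a KeyError in the dictionary lookup, so real data would need some handling for unknown words.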

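If you do not need to dig into how the network works, you can already write your own named entity recognition code at this point: just prepare your dataset in the same format. Below, we go on to look at how the network is built.

II. With the data ready, we need to build the neural network.

1. An overview of the architecture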

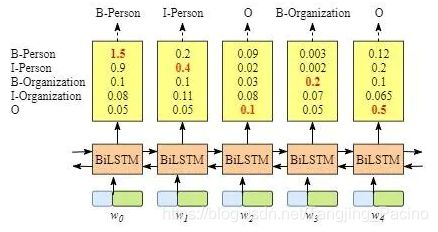

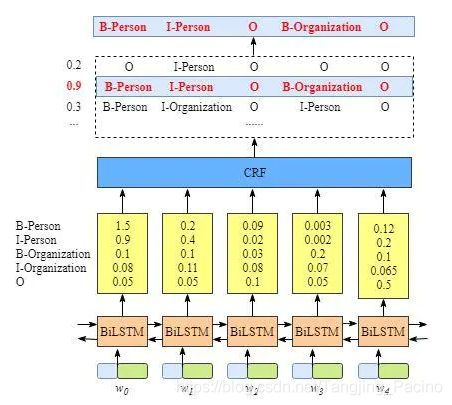

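The figure above shows sequence labeling with a Bi-LSTM. w0, w1, ... are the tokens of the sentence. The BiLSTM outputs, for every token, a score for each tag, and the tag with the highest score is taken as the prediction for that token.

The drawback of this approach is that the highest-scoring tags can form a sequence that does not match the truth, because the Bi-LSTM only models the relationship between the text and each tag, not the relationship between neighboring tags. In part-of-speech tagging, for example, a noun is often followed by a verb, while verb + verb is much less likely; in the BIO scheme used here, an I tag should not directly follow an O.

So we feed the Bi-LSTM output into a CRF, which computes the highest-scoring tag sequence as a whole.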
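To make the difference concrete, here is a small sketch with hand-picked, purely hypothetical scores (they do not come from the model). It compares picking the best tag per token with picking the best whole sequence once a transition penalty is added. The penalty on O -> I is set by hand here, whereas in the real model the transition scores are learned; the brute-force search is only for illustration.

```python
from itertools import product

TAGS = ["B", "I", "O"]

# Hypothetical emission scores: one row per token, one column per tag (B, I, O).
emissions = [
    [1.0, 0.2, 1.2],
    [0.1, 1.0, 0.5],
    [0.0, 0.3, 1.0],
]

# transitions[to][from], the same orientation as in the tutorial code.
transitions = [[0.0] * len(TAGS) for _ in TAGS]
transitions[1][2] = -5.0  # hand-set penalty for O -> I


def greedy_decode(emissions):
    """Pick the highest-scoring tag for each token independently."""
    return [max(range(len(row)), key=row.__getitem__) for row in emissions]


def sequence_score(tag_ids):
    """Emission scores plus the transition score of every adjacent tag pair."""
    score = sum(emissions[t][tag] for t, tag in enumerate(tag_ids))
    score += sum(transitions[cur][prev] for prev, cur in zip(tag_ids, tag_ids[1:]))
    return score


def best_sequence():
    """Brute force: try every tag sequence and keep the best-scoring one."""
    return max(product(range(len(TAGS)), repeat=len(emissions)), key=sequence_score)


greedy = [TAGS[i] for i in greedy_decode(emissions)]
joint = [TAGS[i] for i in best_sequence()]
print(greedy)  # ['O', 'I', 'O'], which contains the invalid step O -> I
print(joint)   # ['B', 'I', 'O']
```

The per-token choice scores 3.2 on emissions alone but drops to -1.8 once the penalty applies, so the sequence-level search prefers B I O with a score of 3.0.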

2. The network code (only the full listing is given here; it is analyzed step by step afterwards)

import torch
import torch.nn as nn


class BiLSTM_CRF(nn.Module):

    def __init__(self, vocab_size, tag_to_ix, embedding_dim, hidden_dim):
        super(BiLSTM_CRF, self).__init__()
        self.embedding_dim = embedding_dim
        self.hidden_dim = hidden_dim
        self.vocab_size = vocab_size
        self.tag_to_ix = tag_to_ix
        self.tagset_size = len(tag_to_ix)

        self.word_embeds = nn.Embedding(vocab_size, embedding_dim)
        self.lstm = nn.LSTM(embedding_dim, hidden_dim // 2,
                            num_layers=1, bidirectional=True)

        # Maps the output of the LSTM into tag space.
        self.hidden2tag = nn.Linear(hidden_dim, self.tagset_size)

        # Matrix of transition parameters. Entry i,j is the score of
        # transitioning *to* i *from* j.
        self.transitions = nn.Parameter(
            torch.randn(self.tagset_size, self.tagset_size))

        # These two statements enforce the constraint that we never transfer
        # to the start tag and we never transfer from the stop tag
        self.transitions.data[tag_to_ix[START_TAG], :] = -10000
        self.transitions.data[:, tag_to_ix[STOP_TAG]] = -10000

        self.hidden = self.init_hidden()

    def init_hidden(self):
        return (torch.randn(2, 1, self.hidden_dim // 2),
                torch.randn(2, 1, self.hidden_dim // 2))

    def _forward_alg(self, feats):
        # Do the forward algorithm to compute the partition function
        init_alphas = torch.full((1, self.tagset_size), -10000.)
        # START_TAG has all of the score.
        init_alphas[0][self.tag_to_ix[START_TAG]] = 0.

        # Wrap in a variable so that we will get automatic backprop
        forward_var = init_alphas

        # Iterate through the sentence
        for feat in feats:
            alphas_t = []  # The forward tensors at this timestep
            for next_tag in range(self.tagset_size):
                # broadcast the emission score: it is the same regardless of
                # the previous tag
                emit_score = feat[next_tag].view(
                    1, -1).expand(1, self.tagset_size)
                # the ith entry of trans_score is the score of transitioning to
                # next_tag from i
                trans_score = self.transitions[next_tag].view(1, -1)
                # The ith entry of next_tag_var is the value for the
                # edge (i -> next_tag) before we do log-sum-exp
                next_tag_var = forward_var + trans_score + emit_score
                # The forward variable for this tag is log-sum-exp of all the
                # scores.
                alphas_t.append(log_sum_exp(next_tag_var).view(1))
            forward_var = torch.cat(alphas_t).view(1, -1)
        terminal_var = forward_var + self.transitions[self.tag_to_ix[STOP_TAG]]
        alpha = log_sum_exp(terminal_var)
        return alpha

    def _get_lstm_features(self, sentence):
        self.hidden = self.init_hidden()
        embeds = self.word_embeds(sentence).view(len(sentence), 1, -1)
        lstm_out, self.hidden = self.lstm(embeds, self.hidden)
        lstm_out = lstm_out.view(len(sentence), self.hidden_dim)
        lstm_feats = self.hidden2tag(lstm_out)
        return lstm_feats

    def _score_sentence(self, feats, tags):
        # Gives the score of a provided tag sequence
        score = torch.zeros(1)
        tags = torch.cat([torch.tensor([self.tag_to_ix[START_TAG]], dtype=torch.long), tags])
        for i, feat in enumerate(feats):
            score = score + \
                self.transitions[tags[i + 1], tags[i]] + feat[tags[i + 1]]
        score = score + self.transitions[self.tag_to_ix[STOP_TAG], tags[-1]]
        return score

    def _viterbi_decode(self, feats):
        backpointers = []

        # Initialize the viterbi variables in log space
        init_vvars = torch.full((1, self.tagset_size), -10000.)
        init_vvars[0][self.tag_to_ix[START_TAG]] = 0

        # forward_var at step i holds the viterbi variables for step i-1
        forward_var = init_vvars
        for feat in feats:
            bptrs_t = []  # holds the backpointers for this step
            viterbivars_t = []  # holds the viterbi variables for this step

            for next_tag in range(self.tagset_size):
                # next_tag_var[i] holds the viterbi variable for tag i at the
                # previous step, plus the score of transitioning
                # from tag i to next_tag.
                # We don't include the emission scores here because the max
                # does not depend on them (we add them in below)
                next_tag_var = forward_var + self.transitions[next_tag]
                best_tag_id = argmax(next_tag_var)
                bptrs_t.append(best_tag_id)
                viterbivars_t.append(next_tag_var[0][best_tag_id].view(1))
            # Now add in the emission scores, and assign forward_var to the set
            # of viterbi variables we just computed
            forward_var = (torch.cat(viterbivars_t) + feat).view(1, -1)
            backpointers.append(bptrs_t)

        # Transition to STOP_TAG
        terminal_var = forward_var + self.transitions[self.tag_to_ix[STOP_TAG]]
        best_tag_id = argmax(terminal_var)
        path_score = terminal_var[0][best_tag_id]

        # Follow the back pointers to decode the best path.
        best_path = [best_tag_id]
        for bptrs_t in reversed(backpointers):
            best_tag_id = bptrs_t[best_tag_id]
            best_path.append(best_tag_id)
        # Pop off the start tag (we dont want to return that to the caller)
        start = best_path.pop()
        assert start == self.tag_to_ix[START_TAG]  # Sanity check
        best_path.reverse()
        return path_score, best_path

    def neg_log_likelihood(self, sentence, tags):
        feats = self._get_lstm_features(sentence)
        forward_score = self._forward_alg(feats)
        gold_score = self._score_sentence(feats, tags)
        return forward_score - gold_score

    def forward(self, sentence):  # dont confuse this with _forward_alg above.
        # Get the emission scores from the BiLSTM
        lstm_feats = self._get_lstm_features(sentence)

        # Find the best path, given the features.
        score, tag_seq = self._viterbi_decode(lstm_feats)
        return score, tag_seq

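The code is fairly long, but the only method that actually builds the network is __init__. Let's go through the methods one by one.

First, back to the training steps from earlier.

Basic steps for training a neural network:

1. Create the network, here the one we defined ourselves (the code above)

2. Define the optimizer; SGD is used here

3. Start training; here the two sentences are trained on for 300 epochs

Basic steps of each training iteration:

(1) Produce the predictions (this is usually written in the training loop; here it lives inside the model definition)

(2) Compute the loss between the predictions and the ground truth

(3) Zero the gradients, backpropagate, and update the parameters (the usual routine)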
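The listing calls two helper functions, argmax and log_sum_exp, that are not defined in the code shown so far. The sketch below is consistent with how they are called (both receive a tensor of shape (1, tagset_size)); as far as I know it follows the helpers in the official tutorial, but treat the exact bodies as an assumption.

```python
import math

import torch


def argmax(vec):
    # Return the index of the largest entry of a (1, N) tensor as a Python int.
    _, idx = torch.max(vec, 1)
    return idx.item()


def log_sum_exp(vec):
    # Numerically stable log(sum(exp(vec))) for a (1, N) tensor:
    # subtract the maximum before exponentiating, then add it back.
    max_score = vec[0, argmax(vec)]
    max_score_broadcast = max_score.view(1, -1).expand(1, vec.size()[1])
    return max_score + torch.log(torch.sum(torch.exp(vec - max_score_broadcast)))


scores = torch.tensor([[0.0, 0.0]])
print(argmax(torch.tensor([[0.1, 2.0, -1.0]])))  # 1
print(log_sum_exp(scores).item(), math.log(2))   # both are log(2)
```

Subtracting the maximum matters because exp() of a large score overflows in floating point, while the shifted version stays finite.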

model = BiLSTM_CRF(len(word_to_ix), tag_to_ix, EMBEDDING_DIM, HIDDEN_DIM)
optimizer = optim.SGD(model.parameters(), lr=0.01, weight_decay=1e-4)

for epoch in range(300):  # again, normally you would NOT do 300 epochs, it is toy data
    for sentence, tags in training_data:
        # Step 1. Remember that Pytorch accumulates gradients.
        # We need to clear them out before each instance
        # (zero the gradients)
        model.zero_grad()

        # Step 2. Get our inputs ready for the network, that is,
        # turn them into Tensors of word indices.
        # (convert the sentence and its tags into index tensors)
        sentence_in = prepare_sequence(sentence, word_to_ix)
        targets = torch.tensor([tag_to_ix[t] for t in tags], dtype=torch.long)

        # Step 3. Run our forward pass.
        # (pass the input sentence and the target tags to the model to get the loss)
        loss = model.neg_log_likelihood(sentence_in, targets)

        # Step 4. Compute the loss, gradients, and update the parameters by
        # calling optimizer.step()
        loss.backward()
        optimizer.step()

Of all the methods above, the entry point into the network during training is loss = model.neg_log_likelihood(sentence_in, targets).

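def neg_log_likelihood(self, sentence, tags):
    # `sentence` arrives as vocabulary indices: tensor([ 0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10])
    # In this project the training sentence is
    # "the wall street journal reported today that apple corporation made money".
    # The features output by the LSTM give, for every word of the sentence, a score
    # for each tag. For example, the first number of the first row is the score of
    # the word "the" for tag 0 (i.e. "B"). These features are the input to the CRF,
    # that is, the emission matrix.
    # tensor([[-0.1079, -0.0006, 0.2340, -0.0838, 0.1052],
    #         [-0.0326, -0.1672, 0.0877, -0.0408, 0.1419],
    #         [-0.2220, -0.1824, 0.1869, -0.0737, 0.0579],
    #         [-0.0658, -0.1156, 0.0281, -0.0952, -0.0408],
    #         [-0.1824, 0.1028, -0.1790, 0.1596, -0.0385],
    #         [-0.0917, 0.0357, 0.0443, -0.0058, 0.1017],
    #         [-0.2441, -0.0490, 0.0046, 0.0394, 0.1387],
    #         [-0.2499, -0.0145, -0.0439, -0.0399, 0.2532],
    #         [-0.0973, -0.0133, 0.0963, 0.0926, 0.0216],
    #         [0.1057, 0.1108, -0.0436, 0.0596, 0.0328],
    #         [-0.0921, -0.0858, 0.1399, -0.1722, -0.0161]],
    #        grad_fn=<AddmmBackward>)
    feats = self._get_lstm_features(sentence)

    # The emission matrix from the Bi-LSTM is passed to the forward algorithm, which
    # returns the log of the sum of exp(score) over all possible tag sequences
    # (the log partition function of the CRF). Note that this is a log-sum-exp over
    # all paths, not the maximum score; the maximum is found by _viterbi_decode.
    forward_score = self._forward_alg(feats)

    # Score of the gold (target) tag sequence
    gold_score = self._score_sentence(feats, tags)

    # Subtract the two scores and return the difference as the loss
    return forward_score - gold_score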
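The quantity returned by neg_log_likelihood is log Z minus the score of the gold sequence, where Z sums exp(score) over every possible tag sequence. The sketch below checks this on random stand-in scores (not real model outputs): a vectorized version of the forward recursion is compared against brute-force enumeration of all paths. The names `path_score`, `forward_alg`, and the index constants are mine, and the recursion uses torch.logsumexp rather than the tutorial's per-tag loop.

```python
import itertools

import torch

torch.manual_seed(0)
N_TAGS, START, STOP = 5, 3, 4   # three real tags (0, 1, 2) plus START and STOP
SEQ_LEN = 4

feats = torch.randn(SEQ_LEN, N_TAGS)        # stand-in for the BiLSTM emission scores
transitions = torch.randn(N_TAGS, N_TAGS)   # transitions[to, from]
transitions[START, :] = -10000.0            # never transition to START
transitions[:, STOP] = -10000.0             # never transition from STOP


def path_score(tags):
    """Score of one tag sequence: transitions plus emissions, from START to STOP."""
    prev, score = START, torch.tensor(0.0)
    for feat, tag in zip(feats, tags):
        score = score + transitions[tag, prev] + feat[tag]
        prev = tag
    return score + transitions[STOP, prev]


def forward_alg():
    """log-sum-exp of the scores of all paths, computed by dynamic programming."""
    alpha = torch.full((N_TAGS,), -10000.0)
    alpha[START] = 0.0
    for feat in feats:
        # new_alpha[to] = logsumexp over `from` of (alpha[from] + transitions[to, from]) + feat[to]
        alpha = torch.logsumexp(alpha.unsqueeze(0) + transitions, dim=1) + feat
    return torch.logsumexp(alpha + transitions[STOP], dim=0)


all_paths = itertools.product(range(N_TAGS), repeat=SEQ_LEN)
brute_force = torch.logsumexp(torch.stack([path_score(p) for p in all_paths]), dim=0)

log_z = forward_alg()
gold = (0, 1, 1, 2)                          # an arbitrary "gold" sequence for the demo
loss = log_z - path_score(gold)
print(log_z.item(), brute_force.item(), loss.item())
```

Because Z includes the gold path among many others, the loss is positive, and it shrinks as training pushes the gold sequence's score up relative to all the alternatives.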

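Next comes the construction of the network.

__init__ defines the embedding layer, the bidirectional LSTM, the linear layer that maps the LSTM output into tag space, and the CRF transition matrix, and initializes them: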

def __init__(self, vocab_size, tag_to_ix, embedding_dim, hidden_dim):
    super(BiLSTM_CRF, self).__init__()
    self.embedding_dim = embedding_dim
    self.hidden_dim = hidden_dim
    self.vocab_size = vocab_size
    self.tag_to_ix = tag_to_ix
    self.tagset_size = len(tag_to_ix)

    self.word_embeds = nn.Embedding(vocab_size, embedding_dim)
    self.lstm = nn.LSTM(embedding_dim, hidden_dim // 2,
                        num_layers=1, bidirectional=True)

    # Maps the output of the LSTM into tag space.
    self.hidden2tag = nn.Linear(hidden_dim, self.tagset_size)

    # Matrix of transition parameters. Entry i,j is the score of
    # transitioning *to* i *from* j.
    self.transitions = nn.Parameter(
        torch.randn(self.tagset_size, self.tagset_size))

    # These two statements enforce the constraint that we never transfer
    # to the start tag and we never transfer from the stop tag
    self.transitions.data[tag_to_ix[START_TAG], :] = -10000
    self.transitions.data[:, tag_to_ix[STOP_TAG]] = -10000

    self.hidden = self.init_hidden()

def init_hidden(self):
    return (torch.randn(2, 1, self.hidden_dim // 2),
            torch.randn(2, 1, self.hidden_dim // 2))

def forward(self, sentence):  # dont confuse this with _forward_alg above.
    # Get the emission scores from the BiLSTM
    lstm_feats = self._get_lstm_features(sentence)

    # Find the best path, given the features.
    score, tag_seq = self._viterbi_decode(lstm_feats)
    return score, tag_seq
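It can help to trace the tensor shapes through the layers created in the constructor. The sketch below rebuilds just those layers outside the class, using the dimensions from the main function (EMBEDDING_DIM = 5, HIDDEN_DIM = 4) and assuming a vocabulary of 17 words and 5 tags as in the toy data; the module-level variable names are mine.

```python
import torch
import torch.nn as nn

EMBEDDING_DIM, HIDDEN_DIM = 5, 4
VOCAB_SIZE, TAGSET_SIZE = 17, 5   # assumed sizes, matching the toy data in these notes

word_embeds = nn.Embedding(VOCAB_SIZE, EMBEDDING_DIM)
# Each direction gets HIDDEN_DIM // 2 units, so the concatenated output has HIDDEN_DIM.
lstm = nn.LSTM(EMBEDDING_DIM, HIDDEN_DIM // 2, num_layers=1, bidirectional=True)
hidden2tag = nn.Linear(HIDDEN_DIM, TAGSET_SIZE)

# Second training sentence as word indices ("georgia tech is a university in georgia").
sentence = torch.tensor([11, 12, 13, 14, 15, 16, 11], dtype=torch.long)

# (seq_len, batch=1, embedding_dim): nn.LSTM expects sequence-first input by default.
embeds = word_embeds(sentence).view(len(sentence), 1, -1)

# Initial (h0, c0): (num_layers * num_directions, batch, hidden_size) = (2, 1, 2).
hidden = (torch.randn(2, 1, HIDDEN_DIM // 2), torch.randn(2, 1, HIDDEN_DIM // 2))

lstm_out, _ = lstm(embeds, hidden)                               # (seq_len, 1, HIDDEN_DIM)
feats = hidden2tag(lstm_out.view(len(sentence), HIDDEN_DIM))     # (seq_len, TAGSET_SIZE)

print(embeds.shape, lstm_out.shape, feats.shape)
```

This is why the LSTM is created with hidden_dim // 2 while the linear layer takes hidden_dim: the forward and backward hidden states are concatenated before being mapped to one score per tag.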

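The forward method calls _viterbi_decode. This method finds the highest-scoring tag sequence for the sentence and returns both its score (the tensor value in the printed results) and the corresponding path of tags.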

def _viterbi_decode(self, feats):
    backpointers = []

    # Initialize the viterbi variables in log space
    init_vvars = torch.full((1, self.tagset_size), -10000.)
    init_vvars[0][self.tag_to_ix[START_TAG]] = 0

    # forward_var at step i holds the viterbi variables for step i-1
    forward_var = init_vvars
    for feat in feats:
        bptrs_t = []  # holds the backpointers for this step
        viterbivars_t = []  # holds the viterbi variables for this step

        for next_tag in range(self.tagset_size):
            # next_tag_var[i] holds the viterbi variable for tag i at the
            # previous step, plus the score of transitioning
            # from tag i to next_tag.
            # We don't include the emission scores here because the max
            # does not depend on them (we add them in below)
            next_tag_var = forward_var + self.transitions[next_tag]
            best_tag_id = argmax(next_tag_var)
            bptrs_t.append(best_tag_id)
            viterbivars_t.append(next_tag_var[0][best_tag_id].view(1))
        # Now add in the emission scores, and assign forward_var to the set
        # of viterbi variables we just computed
        forward_var = (torch.cat(viterbivars_t) + feat).view(1, -1)
        backpointers.append(bptrs_t)

    # Transition to STOP_TAG
    terminal_var = forward_var + self.transitions[self.tag_to_ix[STOP_TAG]]
    best_tag_id = argmax(terminal_var)
    path_score = terminal_var[0][best_tag_id]

    # Follow the back pointers to decode the best path.
    best_path = [best_tag_id]
    for bptrs_t in reversed(backpointers):
        best_tag_id = bptrs_t[best_tag_id]
        best_path.append(best_tag_id)
    # Pop off the start tag (we dont want to return that to the caller)
    start = best_path.pop()
    assert start == self.tag_to_ix[START_TAG]  # Sanity check
    best_path.reverse()
    return path_score, best_path
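The decoding logic can be checked in isolation. The sketch below is a compact, vectorized variant of the same idea (not the tutorial's exact loop), run on random stand-in scores and compared with a brute-force search over every tag sequence. The function names and index constants are mine.

```python
import itertools

import torch

torch.manual_seed(0)
N_TAGS, START, STOP, SEQ_LEN = 5, 3, 4, 4

feats = torch.randn(SEQ_LEN, N_TAGS)        # stand-in emission scores
transitions = torch.randn(N_TAGS, N_TAGS)   # transitions[to, from]
transitions[START, :] = -10000.0
transitions[:, STOP] = -10000.0


def viterbi(feats, transitions):
    """Return (best score, best tag path) using max instead of log-sum-exp."""
    score = torch.full((N_TAGS,), -10000.0)
    score[START] = 0.0
    backpointers = []
    for feat in feats:
        # candidates[to, from] = best score ending in `from` + transition from -> to
        candidates = score.unsqueeze(0) + transitions
        best_prev_score, best_prev = candidates.max(dim=1)
        backpointers.append(best_prev)
        score = best_prev_score + feat       # emissions are added after the max
    terminal = score + transitions[STOP]
    best_last = int(terminal.argmax())

    # Walk the backpointers from the last tag to the first.
    path = [best_last]
    for bptrs in reversed(backpointers):
        path.append(int(bptrs[path[-1]]))
    start = path.pop()                       # the earliest backpointer is START
    assert start == START
    path.reverse()
    return terminal[best_last], path


def path_score(tags):
    """Score of one complete tag sequence, from START to STOP."""
    prev, total = START, 0.0
    for feat, tag in zip(feats, tags):
        total += float(transitions[tag, prev] + feat[tag])
        prev = tag
    return total + float(transitions[STOP, prev])


best_score, best_path = viterbi(feats, transitions)
brute_path = max(itertools.product(range(N_TAGS), repeat=SEQ_LEN), key=path_score)
print(best_path, list(brute_path), best_score.item())
```

Brute force examines 5^4 = 625 sequences here and grows exponentially with sentence length, while the Viterbi recursion needs only one pass over the sentence with a tag-by-tag comparison at each step.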

Time for a break. The formulas and the details of each method are left for a later write-up.