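Introduction to Web Crawlers

- I. Getting to Know Web Crawlers
  - (1) Definition of a web crawler
  - (2) How a web crawler works
  - (3) Where crawlers are used
  - (4) The crawling workflow
  - (5) Types of crawlers
    - 1. General-purpose web crawler
    - 2. Incremental crawler
    - 3. Vertical crawler
    - 4. Deep Web crawler
- II. Crawling practice-problem data from the Nanyang Institute of Technology ACM site http://www.51mxd.cn/
  - (1) Create a .py file
  - (2) Crawl results
  - (3) Code analysis
- III. Crawling the publication date and title of every notice from recent years on the Chongqing Jiaotong University news site (http://news.cqjtu.edu.cn/xxtz.htm)
  - (1) Locate the information to crawl
  - (2) Code
  - (3) Results
- IV. Summary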

I. Getting to Know Web Crawlers

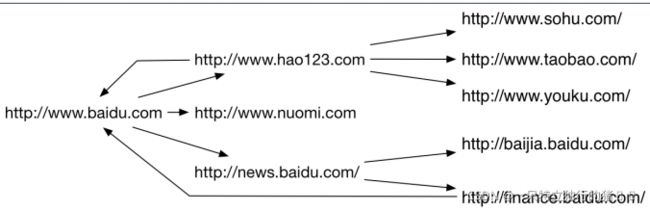

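(1) Definition of a web crawler

- A web crawler (Web Crawler), also called a web spider (Web Spider), is a program that automatically browses web pages and collects the information it needs.

- If the Web is viewed as a graph, each node is a web page and each edge is a hyperlink.

- A web crawler extracts the content of interest from this graph.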

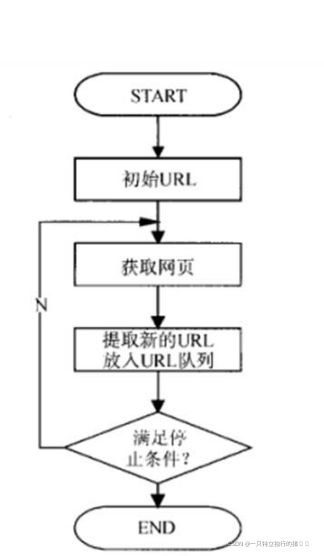

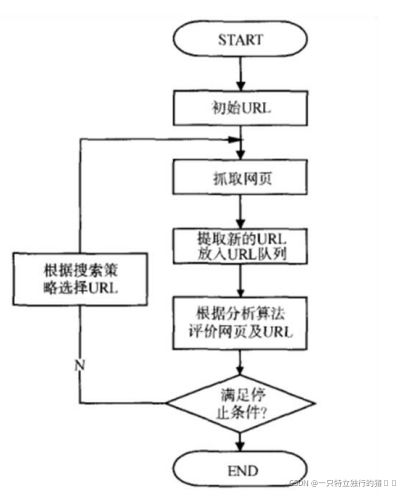

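(2) How a web crawler works

- The crawler starts from the URLs of the initial (seed) pages and collects the URLs found on those pages;

- while fetching pages, it keeps extracting new URLs from the current page and adds them to a queue;

- it continues until a stop condition set by the system is met.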
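The loop described above can be sketched as a breadth-first traversal. This is a minimal illustration, not a production crawler: `FAKE_WEB`, `crawl`, and `max_pages` are hypothetical names, and the "web" is an in-memory dictionary so the sketch runs without network access.

```python
from collections import deque

# Hypothetical in-memory "web": each URL maps to the URLs linked from that page.
# A real crawler would download the page and extract these links from its HTML.
FAKE_WEB = {
    "http://example.com/": ["http://example.com/a", "http://example.com/b"],
    "http://example.com/a": ["http://example.com/b", "http://example.com/c"],
    "http://example.com/b": ["http://example.com/"],
    "http://example.com/c": [],
}


def crawl(seed_urls, max_pages=10):
    """Breadth-first crawl starting from seed_urls; stop after max_pages pages."""
    queue = deque(seed_urls)   # URLs waiting to be visited
    seen = set(seed_urls)      # URLs already queued, so none is visited twice
    visited = []               # pages in the order they were fetched

    while queue and len(visited) < max_pages:   # stop condition
        url = queue.popleft()
        visited.append(url)
        for link in FAKE_WEB.get(url, []):      # "extract" links from the page
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return visited


if __name__ == "__main__":
    for page in crawl(["http://example.com/"]):
        print(page)
```

The `seen` set is what keeps the crawler from looping forever when pages link back to each other, and `max_pages` plays the role of the stop condition.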

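(3) Where crawlers are used

- As the page collector of a search engine, crawling the entire Internet (for example Google and Baidu);

- as a vertical search engine, crawling information on a specific topic (for example video sites and job sites);

- as a front-end testing tool, used to assess the robustness of a website's front-end code.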

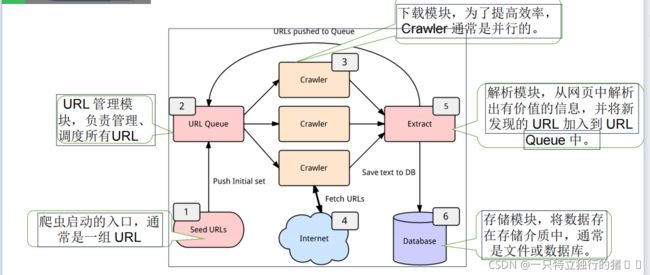

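(4) The crawling workflow

- URL management module: issue the request. An HTTP library sends a request to the target site, which is equivalent to opening a browser and typing in the URL.

- Download module: receive the response. If the requested content exists on the server, the server returns it, typically as HTML, binary files (video, audio), documents, or JSON strings.

- Parsing module: parse the content. For a user this means looking for the needed information; for a Python crawler it means extracting the target information with regular expressions or other libraries.

- Storage module: save the data. The parsed data can be saved locally in many forms, such as text, audio, or video.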
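The four modules can be sketched as four small functions. This is only an illustration under stated assumptions: `SAMPLE_HTML`, `download`, `parse`, `store`, and `run` are hypothetical names, and the download step is stubbed with a canned page so the example runs offline.

```python
import csv
import io
import re

# Hypothetical canned response, standing in for what the download step returns.
SAMPLE_HTML = """
<html><body>
  <h2>First post</h2>
  <h2>Second post</h2>
</body></html>
"""


def download(url):
    """Download step (stubbed): a real crawler would issue an HTTP request here."""
    return SAMPLE_HTML


def parse(html):
    """Parsing step: pull the text of every <h2> element with a regular expression."""
    return [title.strip() for title in re.findall(r"<h2>(.*?)</h2>", html, re.S)]


def store(titles, file):
    """Storage step: write one title per row in CSV format."""
    writer = csv.writer(file)
    writer.writerow(["title"])
    writer.writerows([t] for t in titles)


def run(url, file):
    """URL management reduced to a single URL, followed by the other three steps."""
    html = download(url)
    titles = parse(html)
    store(titles, file)
    return titles


if __name__ == "__main__":
    buffer = io.StringIO()
    run("http://example.com/", buffer)
    print(buffer.getvalue())
```

Keeping the steps separate makes it easy to swap one of them, for instance replacing the regular expression with an HTML parsing library, without touching the rest.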

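(5) Types of crawlers

1. General-purpose web crawler

- A general-purpose web crawler, also called a scalable web crawler (Scalable Web Crawler), extends its crawl from a set of seed URLs to the whole Web. It mainly collects data for portal-site search engines and large Web service providers.

- It starts from one or more preset seed URLs and obtains the list of URLs on those initial pages. As the crawl proceeds, it repeatedly takes a URL from the URL queue, then visits and downloads that page.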

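2. Incremental crawler

- An incremental web crawler (Incremental Web Crawler) updates already-downloaded pages incrementally and crawls only pages that are new or have changed, which to some extent ensures that the crawled pages are as fresh as possible.

- An incremental crawler has two goals: keeping the pages stored in the local collection up to date, and improving the quality of the pages in that collection.

- General commercial search engines such as Google and Baidu are, in essence, incremental crawlers.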
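One simple way to decide which pages are "new or changed" is to compare a fingerprint of each page with the one recorded on the previous crawl. The sketch below assumes that approach; `fingerprint` and `pages_to_update` are hypothetical names, and the page contents are made-up strings.

```python
import hashlib


def fingerprint(content):
    """Return a SHA-256 digest of the page content."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


def pages_to_update(fetched, stored_fingerprints):
    """Return the URLs whose content is new or differs from the stored fingerprint.

    fetched: dict mapping URL -> page content from the current crawl.
    stored_fingerprints: dict mapping URL -> fingerprint from the previous crawl;
    it is updated in place for every new or changed page.
    """
    changed = []
    for url, content in fetched.items():
        digest = fingerprint(content)
        if stored_fingerprints.get(url) != digest:
            stored_fingerprints[url] = digest
            changed.append(url)
    return changed


if __name__ == "__main__":
    store = {}
    first = {"/a": "hello", "/b": "world"}
    print(pages_to_update(first, store))    # both pages are new

    second = {"/a": "hello", "/b": "world!", "/c": "new page"}
    print(pages_to_update(second, store))   # only /b (changed) and /c (new)
```

This version still downloads every page before comparing. A crawler that wants to avoid the download itself can rely on HTTP conditional requests (`If-Modified-Since` or `ETag` headers) where the server supports them.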

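3. Vertical crawler

- A vertical crawler, also called a focused web crawler (Focused Crawler) or topical web crawler (Topical Crawler), selectively crawls pages related to a predefined topic, such as email addresses, e-books, or product prices.

- The key to its crawl strategy is evaluating the importance of page content and links. Different methods compute different importance values, so the order in which links are visited differs as well.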

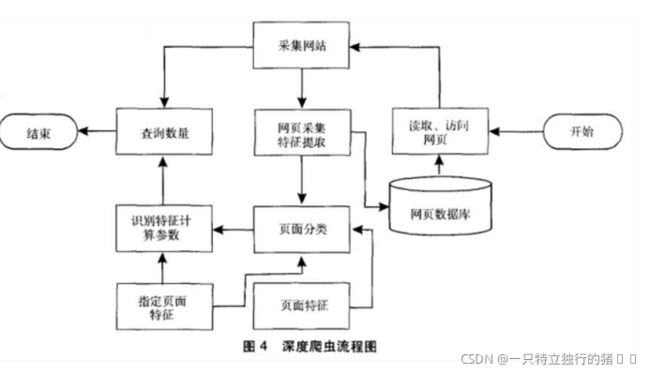
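The idea that a vertical crawler visits links in order of importance can be sketched with a priority queue. The scoring rule here (counting topic keywords in the anchor text) is a deliberately naive assumption, and `TOPIC_KEYWORDS`, `relevance`, and `order_links` are hypothetical names.

```python
import heapq

# Hypothetical topic keywords; a real focused crawler would use a richer relevance model.
TOPIC_KEYWORDS = {"price", "discount", "sale"}


def relevance(anchor_text):
    """Score a link by how many topic keywords appear in its anchor text."""
    words = set(anchor_text.lower().split())
    return len(words & TOPIC_KEYWORDS)


def order_links(links):
    """Return URLs ordered from most to least relevant.

    links: list of (url, anchor_text) pairs.
    heapq is a min-heap, so the score is negated; the insertion index breaks ties
    so that equally relevant links keep their original order.
    """
    heap = []
    for index, (url, text) in enumerate(links):
        heapq.heappush(heap, (-relevance(text), index, url))
    ordered = []
    while heap:
        _, _, url = heapq.heappop(heap)
        ordered.append(url)
    return ordered


if __name__ == "__main__":
    links = [
        ("/about", "About us"),
        ("/deals", "Big sale discount price"),
        ("/phones", "Phone price list"),
    ]
    print(order_links(links))
```

Swapping in a different `relevance` function changes the visiting order, which is exactly the effect described above.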

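4. Deep Web crawler

- The Deep Web consists of Web pages whose content mostly cannot be reached through static links. They are hidden behind search forms and are returned only after a user submits some keywords.

- The most important part of a Deep Web crawl is filling in forms, which comes in two kinds:

• 1) form filling based on domain knowledge

• 2) form filling based on analysis of the page structure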
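For the first kind, form filling based on domain knowledge, a very rough sketch is to take a list of keywords for the domain and build one query per keyword. Everything specific here is an assumption for illustration: the endpoint `SEARCH_URL`, the field name `q`, and the keyword list are hypothetical, and the form is assumed to submit with GET.

```python
from urllib.parse import urlencode

# Hypothetical search form endpoint and domain vocabulary.
SEARCH_URL = "http://example.com/search"
DOMAIN_KEYWORDS = ["python", "web crawler"]


def build_form_queries(base_url, keywords, field="q"):
    """Return one GET URL per keyword, as if the keyword were typed into the form."""
    return [f"{base_url}?{urlencode({field: kw})}" for kw in keywords]


if __name__ == "__main__":
    for url in build_form_queries(SEARCH_URL, DOMAIN_KEYWORDS):
        print(url)
```

Each generated URL would then be fetched like any other page. Forms that submit with POST or require several fields need more work than this sketch shows.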

II. Crawling practice-problem data from the Nanyang Institute of Technology ACM site http://www.51mxd.cn/

(1) Create a .py file

import requests# 导入网页请求库

from bs4 import BeautifulSoup# 导入网页解析库

import csv

from tqdm import tqdm

# 模拟浏览器访问

Headers = 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3741.400 QQBrowser/10.5.3863.400'

# 表头

csvHeaders = ['题号', '难度', '标题', '通过率', '通过数/总提交数']

# 题目数据

subjects = []

# 爬取题目

print('题目信息爬取中:\n')

for pages in tqdm(range(1, 11 + 1)):

# 传入URL

r = requests.get(f'http://www.51mxd.cn/problemset.php-page={pages}.htm', Headers)

r.raise_for_status()

r.encoding = 'utf-8'

# 解析URL

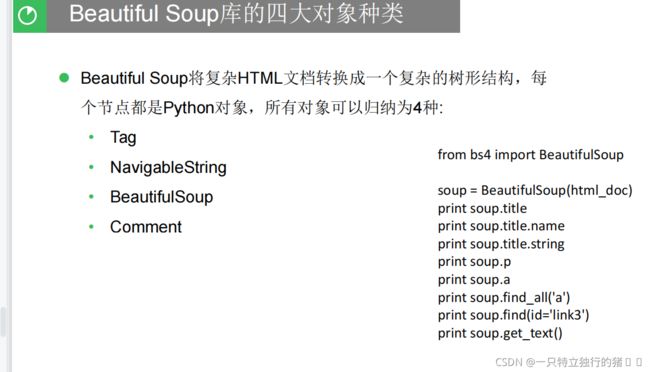

soup = BeautifulSoup(r.text, 'html5lib')

#查找爬取与td相关所有内容

td = soup.find_all('td')

subject = []

for t in td:

if t.string is not None:

subject.append(t.string)

if len(subject) == 5:

subjects.append(subject)

subject = []

# 存放题目

with open('NYOJ_Subjects.csv', 'w', newline='') as file:

fileWriter = csv.writer(file)

fileWriter.writerow(csvHeaders)

fileWriter.writerows(subjects)

print('\n题目信息爬取完成!!!')

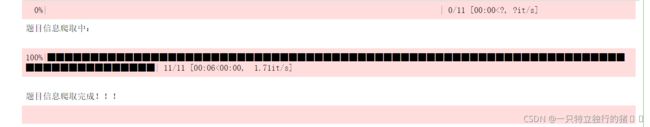

(2) Crawl results

Running the script produces the file NYOJ_Subjects.csv; open it to see the crawled data.

(3) Code analysis

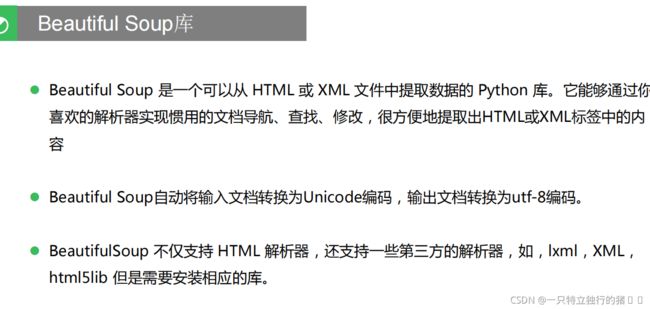

2. The script uses the requests HTTP library and the Beautiful Soup HTML parsing library

    import requests                # HTTP request library
    from bs4 import BeautifulSoup  # HTML parsing library

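3. Define the request headers used to imitate a browser, the header row written to the CSV file, and the list that holds the problem data

    # Send a browser-like User-Agent
    Headers = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/70.0.3538.25 Safari/537.36 Core/1.70.3741.400 QQBrowser/10.5.3863.400'
    }

    # CSV header row
    csvHeaders = ['Problem ID', 'Difficulty', 'Title', 'Pass rate', 'Accepted/Submitted']

    # Problem data
    subjects = []  # list that accumulates every crawled row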

4. Crawl the fields named in csvHeaders page by page, showing progress in a progress bar

    # Crawl the problems
    print('Crawling problem data:\n')
    for pages in tqdm(range(1, 11 + 1)):  # crawl one page at a time
        # Request the page; headers must be passed by keyword
        r = requests.get(f'http://www.51mxd.cn/problemset.php-page={pages}.htm', headers=Headers)
        r.raise_for_status()
        r.encoding = 'utf-8'  # decode the response body as UTF-8

        # Parse the HTML
        soup = BeautifulSoup(r.text, 'html5lib')

        # Find every <td> cell, which holds the fields named in csvHeaders
        td = soup.find_all('td')
        subject = []  # cells collected so far for the current row
        for t in td:
            if t.string is not None:
                subject.append(t.string)
                if len(subject) == 5:  # a full row of 5 fields has been collected
                    subjects.append(subject)  # add the row to subjects
                    subject = []  # reset for the next row
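The grouping step in isolation may be easier to follow. The sketch below reproduces the same logic on a plain list; `group_cells` is a hypothetical helper and the cell values are made up, not taken from the site.

```python
def group_cells(cells, size=5):
    """Group a flat sequence of cell texts into rows of `size`, skipping None cells."""
    rows, row = [], []
    for text in cells:
        if text is None:      # mirrors `t.string is not None` in the script
            continue
        row.append(text)
        if len(row) == size:
            rows.append(row)
            row = []
    return rows               # an incomplete trailing row is dropped


if __name__ == "__main__":
    # Hypothetical cell texts in document order, with one empty cell in between.
    cells = ["1", "1", "A+B Problem", "50%", "10/20", None,
             "2", "2", "Fibonacci", "40%", "4/10"]
    for row in group_cells(cells):
        print(row)
```

This approach depends on every data row contributing exactly five cells with plain text. In Beautiful Soup, `.string` is `None` for a tag with more than one child, so a cell with mixed content would be skipped and shift the grouping; selecting `tr` rows first and reading the cells of each row would be a more robust alternative.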

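5. Write the crawled data to the file NYOJ_Subjects.csv

    # Save the problems
    with open('NYOJ_Subjects.csv', 'w', newline='') as file:
        fileWriter = csv.writer(file)
        fileWriter.writerow(csvHeaders)  # header row first
        fileWriter.writerows(subjects)   # then one row per problem

    print('\nProblem data crawled successfully!')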
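Because the crawled titles contain Chinese text, the file encoding matters when the CSV is opened elsewhere. The sketch below is one possible variant, not the script's own method: it passes an explicit encoding instead of relying on the platform default. `save_rows`, `load_rows`, and the `demo.csv` path are hypothetical.

```python
import csv
import os
import tempfile


def save_rows(path, header, rows, encoding="utf-8-sig"):
    """Write header and rows to a CSV file.

    newline='' lets the csv module control line endings itself;
    'utf-8-sig' prepends a byte order mark, which helps some spreadsheet
    programs detect UTF-8 when the data contains non-ASCII text.
    """
    with open(path, "w", newline="", encoding=encoding) as file:
        writer = csv.writer(file)
        writer.writerow(header)
        writer.writerows(rows)


def load_rows(path, encoding="utf-8-sig"):
    """Read the CSV file back as a list of rows."""
    with open(path, newline="", encoding=encoding) as file:
        return list(csv.reader(file))


if __name__ == "__main__":
    path = os.path.join(tempfile.mkdtemp(), "demo.csv")   # hypothetical output file
    save_rows(path, ["id", "title"], [["1", "A+B 问题"]])
    print(load_rows(path))
```

Without an `encoding` argument, `open` uses the platform's default encoding, so the same script can produce differently encoded files on different machines.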

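III. Crawling the publication date and title of every notice from recent years on the Chongqing Jiaotong University news site (http://news.cqjtu.edu.cn/xxtz.htm)

(1) Locate the information to crawl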

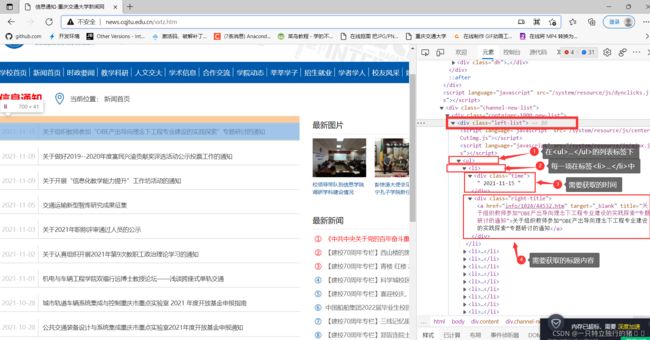

1. Open http://news.cqjtu.edu.cn/xxtz.htm

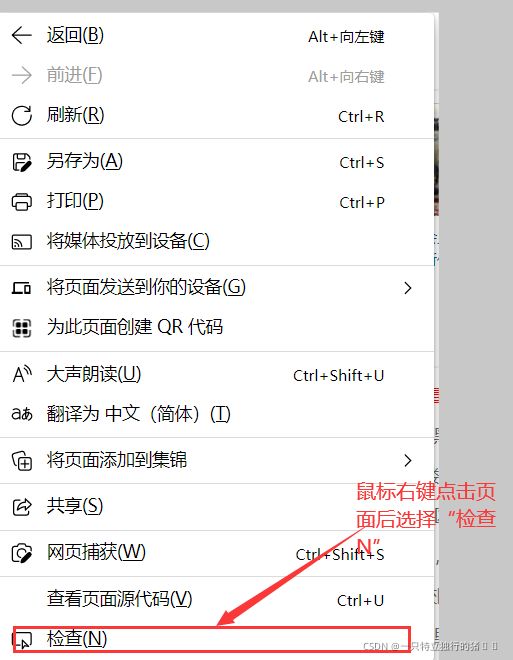

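2. Right-click a blank area of the page and choose Inspect (N)

3. The elements that hold the time and title to be crawled can now be seen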
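To make the structure concrete, the sketch below extracts time and title pairs from a small HTML fragment. The fragment is hypothetical: its tag and class names (`time`, `right-title`, and a link with `target="_blank"`) are inferred from the selectors the crawler script uses, not copied from the live page. It also uses the standard library's `html.parser` rather than Beautiful Soup so that it runs without extra installs.

```python
from html.parser import HTMLParser

# Hypothetical fragment shaped like one list of notices.
SAMPLE = """
<ul>
  <li><div class="time">2021-11-16</div>
      <div class="right-title"><a target="_blank" href="#">Notice A</a></div></li>
  <li><div class="time">2021-11-15</div>
      <div class="right-title"><a target="_blank" href="#">Notice B</a></div></li>
</ul>
"""


class NoticeParser(HTMLParser):
    """Collect [time, title] pairs from div.time and the link inside div.right-title."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._field = None           # which field the next text belongs to
        self._in_title_div = False   # True while inside <div class="right-title">

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "div" and attrs.get("class") == "time":
            self._field = "time"
        elif tag == "div" and attrs.get("class") == "right-title":
            self._in_title_div = True
        elif tag == "a" and self._in_title_div:
            self._field = "title"

    def handle_endtag(self, tag):
        if tag == "div":
            self._in_title_div = False
        self._field = None

    def handle_data(self, data):
        text = data.strip()
        if not text or self._field is None:
            return
        if self._field == "time":
            self.rows.append([text])       # start a new row with the time
        elif self.rows:
            self.rows[-1].append(text)     # add the title to the current row


if __name__ == "__main__":
    parser = NoticeParser()
    parser.feed(SAMPLE)
    print(parser.rows)
```

If the live page nests these elements differently, the class names in `handle_starttag` are the only part that should need adjusting.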

(2) Code

    # -*- coding: utf-8 -*-
    """
    Created on Wed Nov 17 14:39:03 2021

    @author: 86199
    """
    import csv
    import urllib.error    # URLError raised for failed requests
    import urllib.request  # build the request and fetch the page

    from bs4 import BeautifulSoup
    from tqdm import tqdm

    # All notices
    subjects = []

    # Browser-like request headers
    Headers = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/95.0.4638.69 Safari/537.36 Edg/95.0.1020.53"
    }

    # CSV header row
    csvHeaders = ['Time', 'Title']

    print('Crawling notices:\n')
    for pages in tqdm(range(1, 65 + 1)):
        # Send the request
        request = urllib.request.Request(f'http://news.cqjtu.edu.cn/xxtz/{pages}.htm', headers=Headers)
        html = ""
        # Read the page content if the request succeeds
        try:
            response = urllib.request.urlopen(request)
            html = response.read().decode("utf-8")
        except urllib.error.URLError as e:
            if hasattr(e, "code"):
                print(e.code)
            if hasattr(e, "reason"):
                print(e.reason)

        # Parse the page
        soup = BeautifulSoup(html, 'html5lib')
        # One notice: [time, title]
        subject = []
        # Find all <li> elements
        li = soup.find_all('li')
        for item in li:
            # find_all returns a list (never None), so test for non-empty results
            time_divs = item.find_all('div', class_="time")
            title_divs = item.find_all('div', class_="right-title")
            if time_divs and title_divs:
                # Time
                for time_div in time_divs:
                    subject.append(time_div.string)
                # Title
                for title in title_divs:
                    for t in title.find_all('a', target="_blank"):
                        subject.append(t.string)
            if subject:
                print(subject)
                subjects.append(subject)
                subject = []

    # Save the data
    with open('test.csv', 'w', newline='', encoding='utf-8') as file:
        fileWriter = csv.writer(file)
        fileWriter.writerow(csvHeaders)
        fileWriter.writerows(subjects)

    print('\nCrawl complete!')

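(3) Results

IV. Summary

Writing a web crawler requires some background in Web technologies: you first work out where on the page the target information is stored, then set the matching conditions and crawl. In Python, crawling with existing libraries is fairly simple and convenient.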
