【玩转PointPillars】点云特征提取结构PFN(PillarFeatureNet)

PointPillars整体网络结构有三个部分构成,他们分别是PFN(Pillar Feature Net),Backbone(2D CNN)和Detection Head(SSD)。其中PFN是PointPillars中最重要也是最具创新性的部分。

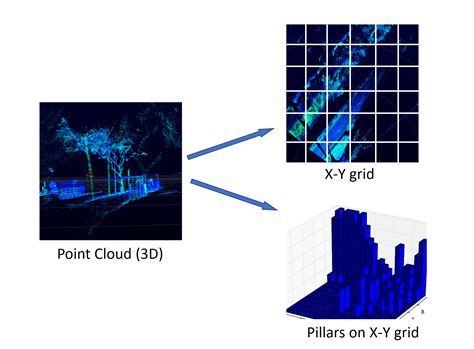

在正式讨论PFN之前我们可以幻想一下自己变成一个小蜜蜂,飞翔在3D点云空间中,俯瞰整个场景中的点云。它们在各个区域的分布或相对密集,或稀疏,或者几乎就不可见。在PFN中会将我们俯瞰到的X-Y平面划分成固定尺寸的网格,每一个网格单元在Z轴方向延伸就形成了一个个的柱子,也就是所谓的Pillar。因为点云在空间中分布的不均匀,不同Pillar中的点云数量也就不一样。而且由于点云分布的高度稀疏性,有很大一部分Pillar里面是没有点云的。下图可以比较直观地表

示这一点。基于以上论述,对于PointPillars而言,我们应该有以下几点认识:(1).同一帧点云数据中非空Pillar内的点云数量是非固定的;(2).不同帧点云数据中非空Pillar的数量也是动态变化的。PointPillars对于非空Pillar会设定一个采样阈值(默认是100)。非空Pillar中的点云数量超过阈值则会采样到该阈值,而点云数量小于该阈值则使用0进行填充。我们知道原始的点云数据有4个维度 (x,y,z,r),(x,y,z)代表点在点云3D坐标系中的位置, r代表反射强度,论文中将其扩展为9个维度 (x,y,z,r,x_c,y_c,z_c,x_p,y_p),带 c下标的是Pillar中的点相对于Pillar内点云算术中心的偏移量,带 p下标的是点相对于Pillar网格中心(x,y中心坐标)的偏移量。于是就形成了维度为(D,P,N)的张量,其中D=9, N为每个 Pillar的采样点数, P为非空的 Pillar数目。

# file:pytorch/models/pointpillars.py

def forward(self, pillar_x, pillar_y, pillar_z, pillar_i,

num_voxels, #num_points

x_sub_shaped, y_sub_shaped,

mask):

# Find distance of x, y, and z from cluster center

# pillar_xyz = torch.cat((pillar_x, pillar_y, pillar_z), 3)

pillar_xyz = torch.cat((pillar_x, pillar_y, pillar_z), 1)

#算pillar算术均值

points_mean = pillar_xyz.sum(dim=3, keepdim=True) / num_voxels.view(1, 1, -1, 1)

f_cluster = pillar_xyz - points_mean

# Find distance of x, y, and z from pillar center

#f_center = torch.zeros_like(features[:, :, :2])

#f_center[:, :, 0] = features[:, :, 0] - (coors[:, 3].float().unsqueeze(1) * self.vx + self.x_offset)

#f_center[:, :, 1] = features[:, :, 1] - (coors[:, 2].float().unsqueeze(1) * self.vy + self.y_offset)

f_center_offset_0 = pillar_x - x_sub_shaped

f_center_offset_1 = pillar_y - y_sub_shaped

f_center_concat = torch.cat((f_center_offset_0, f_center_offset_1), 1)

pillar_xyzi = torch.cat((pillar_x, pillar_y, pillar_z, pillar_i), 1)

features_list = [pillar_xyzi, f_cluster, f_center_concat]

features = torch.cat(features_list, dim=1)

masked_features = features * mask

pillar_feature = self.pfn_layers[0](masked_features)

return pillar_feature将非空Pillar内的每个点的维度扩展到9维,然后就是学习点云特征。具体说来就是用一个简化的 PointNet从 D(D等于9)维中学出 C个channel来得到一个(C,P,N)的张量。然后在N这个维度上做max operation,得到 (C,P)的张量.最后得到 (C,H,W) 的伪图像。max operation这个只是论文中的说法,代码实现中并不是max pooling,而是带孔卷积。只要你控制好kernel_size和dilation的大小,带孔卷及操作完等效于max pooling。

class PFNLayer(nn.Module):

def __init__(self,

in_channels,

out_channels,

use_norm=True,

last_layer=False):

"""

Pillar Feature Net Layer.

The Pillar Feature Net could be composed of a series of these layers, but the PointPillars paper results only

used a single PFNLayer. This layer performs a similar role as second.pytorch.voxelnet.VFELayer.

:param in_channels: . Number of input channels.

:param out_channels: . Number of output channels.

:param use_norm: . Whether to include BatchNorm.

:param last_layer: . If last_layer, there is no concatenation of features.

"""

#对于PointPillars而言,它只用到了一层PFNLayer

super().__init__()

self.name = 'PFNLayer'

self.last_vfe = last_layer

if not self.last_vfe:

out_channels = out_channels // 2

self.units = out_channels

self.in_channels = in_channels

# if use_norm:

# BatchNorm1d = change_default_args(eps=1e-3, momentum=0.01)(nn.BatchNorm1d)

# Linear = change_default_args(bias=False)(nn.Linear)

# else:

# BatchNorm1d = Empty

# Linear = change_default_args(bias=True)(nn.Linear)

self.linear= nn.Linear(self.in_channels, self.units, bias = False)

self.norm = nn.BatchNorm2d(self.units, eps=1e-3, momentum=0.01)

# kernel=>1x1x9x64

self.conv1 = nn.Conv2d(in_channels=self.in_channels, out_channels=self.units, kernel_size=1, stride=1)

self.conv2 = nn.Conv2d(in_channels=100, out_channels=1, kernel_size=1, stride=1)

self.t_conv = nn.ConvTranspose2d(100, 1, (1,8), stride=(1,7))

#带孔卷积

self.conv3 = nn.Conv2d(64, 64, kernel_size=(1, 34), stride=(1, 1), dilation=(1,3))

def forward(self, input):

x = self.conv1(input) #kernel=>1x1x9x64

x = self.norm(x) #=>shape[1,64,N,100]

x = F.relu(x) #

x = self.conv3(x) #=>shape[1,64,N,1]

return x