A Detailed Look at the Back Propagation Neural Network (BPNN)

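Contents

- I. Basic Concepts
  - (1) Mathematical Model of a Single Neuron
  - (2) Definition of a Multi-Layer BPNN
- II. Forward Computation
- III. Training
  - (1) Cost Function
  - (2) Gradient Derivation
  - (3) Iterative Training
- IV. Normalization
- V. Application Example
  - (1) Data Preparation
  - (2) Defining the Network
  - (3) Training and Fitting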

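I. Basic Concepts

A neural network is, in essence, a regression model built from training samples. Unlike a classical regression model, its functional form is not compact: it is a nesting of many functions, so it cannot give an intuitive description of the relationship between the data and tends to feel like a black box. Its value lies in the fact that, in theory, it can fit arbitrary nonlinear functions with multiple inputs and multiple outputs, which makes it applicable in many areas such as regression and clustering. Among neural networks, the back propagation neural network is the classic one; this article presents its basic principles and how to use it.

(1) Mathematical Model of a Single Neuron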

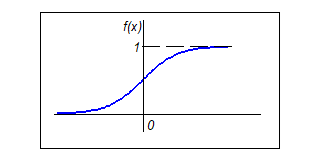

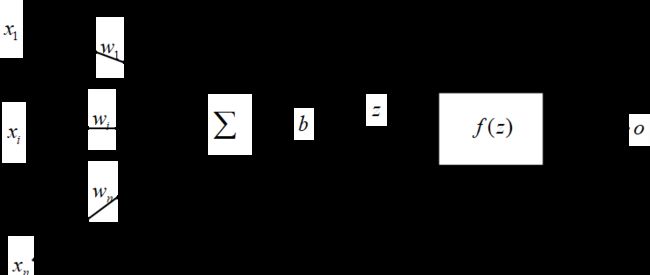

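Abstracting from the structure of a biological neuron gives the mathematical model of a single neuron, shown in Figure 1:

Several external inputs ($x_1 \sim x_n$) are weighted individually and summed, shifted by an offset ($b$), and fed into a nonlinear activation function ($f$), which finally yields the neuron's output ($o$). In mathematical form:

$$o = f \left( \sum_{i=1}^n w_i x_i + b \right) = f(\boldsymbol w^T \boldsymbol x + b)$$

The external inputs of every neuron first pass through a linear combination, that is, they form a linear function that then becomes the argument of the neuron's activation function. If the activation were also linear, the result would be a linear function of a linear function, which is still linear, so a network of any number of such neurons would reduce to a single linear function. The activation function must therefore be nonlinear; only then can layers of connected neurons, through weighting and nesting, build arbitrary nonlinear functions and handle complex problems. Three types of activation function are commonly used:

As the graphs of these activation functions show, the response only varies noticeably near 0. This is why an offset ($b$) is introduced into the input of the activation function: it amounts to shifting the response function to a suitable position.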
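The single-neuron formula, the argument that stacked linear neurons collapse into one linear function, and the shifting effect of the offset can all be checked numerically. The following is a minimal sketch in plain Python; the helper names `sigmoid` and `neuron` and all numeric values are illustrative assumptions, not part of the original article.

```python
import math

def sigmoid(z):
    """Logistic activation, one common nonlinear choice."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(x, w, b, f=sigmoid):
    """Single neuron: o = f(w^T x + b)."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return f(z)

def identity(z):
    """A linear 'activation', used only to show why it is not enough."""
    return z

# Two stacked linear neurons collapse into one linear function:
# w2 * (w1 * x + b1) + b2 == (w2 * w1) * x + (w2 * b1 + b2)
w1, b1, w2, b2 = 2.0, 1.0, -3.0, 0.5
x = 0.7
stacked = neuron([neuron([x], [w1], b1, identity)], [w2], b2, identity)
collapsed = (w2 * w1) * x + (w2 * b1 + b2)

# The offset shifts the response curve: with b = -4 the steep part of the
# sigmoid is centred at x = 4 instead of x = 0.
centred_at_zero = neuron([0.0], [1.0], 0.0)
centred_at_four = neuron([4.0], [1.0], -4.0)
```

Both `centred_at_zero` and `centred_at_four` sit at the midpoint of the sigmoid, which is the sense in which the offset translates the response function.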

(2) Definition of a Multi-Layer BPNN

When many neurons are cross-connected in several layers, they form a neural network. The outputs of one layer become the inputs of the next. The last layer, whose neurons directly give the output of the whole network, is called the output layer; the layers before it are called hidden layers. A network with more than one hidden layer is called a deep learning network. This article uses a deep learning network with two hidden layers (three layers of neurons in total) as its example. Its structure is shown in Figure 2, and its computation and training carry over to networks with more layers.

The input of this network is an $n$-dimensional column vector $\boldsymbol x = \left[ \begin{matrix} x_1 & x_2 & \cdots & x_n \end{matrix} \right]^T$, and its output is an $m$-dimensional column vector $\boldsymbol y = \left[ \begin{matrix} y_1 & y_2 & \cdots & y_m \end{matrix} \right]^T$. From left to right, the input weight matrix, offset vector, activation input vector and activation output vector of each layer are:

$$\boldsymbol w^{(1)} = \left[ \begin{matrix} w_{11}^{(1)} & w_{21}^{(1)} & \cdots & w_{n1}^{(1)} \\ w_{12}^{(1)} & w_{22}^{(1)} & \cdots & w_{n2}^{(1)} \\ \vdots & \vdots & \ddots & \vdots \\ w_{1k}^{(1)} & w_{2k}^{(1)} & \cdots & w_{nk}^{(1)} \end{matrix} \right] \quad \boldsymbol b^{(1)} = \left[ \begin{matrix} b_1^{(1)} \\ b_2^{(1)} \\ \vdots \\ b_k^{(1)} \end{matrix} \right] \quad \boldsymbol z^{(1)} = \left[ \begin{matrix} z_1^{(1)} \\ z_2^{(1)} \\ \vdots \\ z_k^{(1)} \end{matrix} \right] \quad \boldsymbol o^{(1)} = \left[ \begin{matrix} o_1^{(1)} \\ o_2^{(1)} \\ \vdots \\ o_k^{(1)} \end{matrix} \right]$$

$$\boldsymbol w^{(2)} = \left[ \begin{matrix} w_{11}^{(2)} & w_{21}^{(2)} & \cdots & w_{k1}^{(2)} \\ w_{12}^{(2)} & w_{22}^{(2)} & \cdots & w_{k2}^{(2)} \\ \vdots & \vdots & \ddots & \vdots \\ w_{1l}^{(2)} & w_{2l}^{(2)} & \cdots & w_{kl}^{(2)} \end{matrix} \right] \quad \boldsymbol b^{(2)} = \left[ \begin{matrix} b_1^{(2)} \\ b_2^{(2)} \\ \vdots \\ b_l^{(2)} \end{matrix} \right] \quad \boldsymbol z^{(2)} = \left[ \begin{matrix} z_1^{(2)} \\ z_2^{(2)} \\ \vdots \\ z_l^{(2)} \end{matrix} \right] \quad \boldsymbol o^{(2)} = \left[ \begin{matrix} o_1^{(2)} \\ o_2^{(2)} \\ \vdots \\ o_l^{(2)} \end{matrix} \right]$$

$$\boldsymbol w^{(3)} = \left[ \begin{matrix} w_{11}^{(3)} & w_{21}^{(3)} & \cdots & w_{l1}^{(3)} \\ w_{12}^{(3)} & w_{22}^{(3)} & \cdots & w_{l2}^{(3)} \\ \vdots & \vdots & \ddots & \vdots \\ w_{1m}^{(3)} & w_{2m}^{(3)} & \cdots & w_{lm}^{(3)} \end{matrix} \right] \quad \boldsymbol b^{(3)} = \left[ \begin{matrix} b_1^{(3)} \\ b_2^{(3)} \\ \vdots \\ b_m^{(3)} \end{matrix} \right] \quad \boldsymbol z^{(3)} = \left[ \begin{matrix} z_1^{(3)} \\ z_2^{(3)} \\ \vdots \\ z_m^{(3)} \end{matrix} \right] \quad \boldsymbol o^{(3)} = \left[ \begin{matrix} o_1^{(3)} \\ o_2^{(3)} \\ \vdots \\ o_m^{(3)} \end{matrix} \right]$$

Here $w_{jk}^{(i)}$ is the weight with which the $j$-th input source of layer $i$ acts on the $k$-th neuron of that layer; $b_j^{(i)}$ is the input offset of the $j$-th neuron of layer $i$; $z_j^{(i)}$ is the activation input of the $j$-th neuron of layer $i$; and $o_j^{(i)}$ is the activation output of the $j$-th neuron of layer $i$. The outputs of the last layer form the output vector of the whole network, $\boldsymbol o^{(3)} = \hat{\boldsymbol y} = \left[ \begin{matrix} \hat y_1 & \hat y_2 & \cdots & \hat y_m \end{matrix} \right]^T$. The input sources of the first layer are the input vector of the whole network, $\boldsymbol x = \left[ \begin{matrix} x_1 & x_2 & \cdots & x_n \end{matrix} \right]^T$.

II. Forward Computation

With the definitions above, the computation performed by the network can be written as:

$$\boldsymbol z^{(1)} = \boldsymbol w^{(1)} \otimes \boldsymbol x + \boldsymbol b^{(1)}, \quad \boldsymbol o^{(1)} = f(\boldsymbol z^{(1)})$$

$$\boldsymbol z^{(2)} = \boldsymbol w^{(2)} \otimes \boldsymbol o^{(1)} + \boldsymbol b^{(2)}, \quad \boldsymbol o^{(2)} = f(\boldsymbol z^{(2)})$$

$$\boldsymbol z^{(3)} = \boldsymbol w^{(3)} \otimes \boldsymbol o^{(2)} + \boldsymbol b^{(3)}, \quad \boldsymbol o^{(3)} = f(\boldsymbol z^{(3)})$$

When the operator "$\otimes$" is defined as the inner product, this is an ordinary BP network. When it is defined as the Euclidean distance between the vector on the right and each row vector of the matrix on the left, the network becomes a radial basis function (RBF) network, a kind of supervised clustering network. If the activation function of the neurons is then a Gaussian, the neurons whose weight vectors lie closer to the input vector are activated more strongly.
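The three forward equations can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions: the function names (`layer_forward`, `forward`, `random_params`, `rbf_layer_input`), the layer sizes and the random seed are all hypothetical, and Sigmoid is assumed as the activation. Each weight matrix is stored as a list of rows, one row per neuron, matching the layout of $\boldsymbol w^{(i)}$ above.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def layer_forward(w, b, inputs):
    """z = w (inner product with) inputs + b, then o = f(z)."""
    z = [sum(wij * xj for wij, xj in zip(row, inputs)) + bi
         for row, bi in zip(w, b)]
    o = [sigmoid(zi) for zi in z]
    return z, o

def forward(weights, biases, x):
    """Feed x through every layer; return all z and o vectors."""
    zs, outs, current = [], [], x
    for w, b in zip(weights, biases):
        z, current = layer_forward(w, b, current)
        zs.append(z)
        outs.append(current)
    return zs, outs

def random_params(sizes, rng):
    """Standard-normal weights and offsets for consecutive layer sizes."""
    weights = [[[rng.gauss(0, 1) for _ in range(n_in)] for _ in range(n_out)]
               for n_in, n_out in zip(sizes[:-1], sizes[1:])]
    biases = [[rng.gauss(0, 1) for _ in range(n_out)] for n_out in sizes[1:]]
    return weights, biases

def rbf_layer_input(w, inputs):
    """RBF reading of the operator: distance from inputs to each row of w."""
    return [math.sqrt(sum((wij - xj) ** 2 for wij, xj in zip(row, inputs)))
            for row in w]

rng = random.Random(0)
sizes = [3, 4, 5, 2]  # n = 3 inputs, k = 4, l = 5, m = 2 outputs (arbitrary)
weights, biases = random_params(sizes, rng)
zs, outs = forward(weights, biases, [0.1, -0.2, 0.3])
y_hat = outs[-1]      # o^(3), the network output
```

Keeping every `z` and `o` is deliberate: the training step needs these intermediate values to compute the gradient.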

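III. Training

So far, the computation performed by a neural network is not complicated, and in practice running a trained network consumes few computing resources. What does consume resources is training, whose principle is also more involved: in every round the network has to find out where it falls short and improve, until it produces the expected output for every input as closely as possible. This chapter explains how to quantify the gap between the actual output and the expected result, and how to iteratively adjust the weights and offsets of every neuron so that the network eventually outputs the expected result.

(1) Cost Function

Here the cost function is defined as the mean training error over the samples of a training set; it quantifies how poorly the network performs in each training round. Consider a training set of $t$ samples whose input vectors are $\{ \boldsymbol x_1, \boldsymbol x_2, \cdots , \boldsymbol x_t \}$, each an $n$-dimensional vector, and whose corresponding output vectors are $\{ \boldsymbol y_1, \boldsymbol y_2, \cdots , \boldsymbol y_t \}$, each an $m$-dimensional vector. The cost function is then:

$$J(\boldsymbol w^{(1)} , \boldsymbol w^{(2)} , \boldsymbol w^{(3)} , \boldsymbol b^{(1)} , \boldsymbol b^{(2)} , \boldsymbol b^{(3)}) = {1 \over t} \sum_{i=1}^t L_i$$

where $L_i$ is the training error of the $i$-th training sample. To simplify later calculations, it is defined as half the squared Euclidean distance between the sample's output vector and the predicted vector:

$$L_i (\boldsymbol w^{(1)} , \boldsymbol w^{(2)} , \boldsymbol w^{(3)} , \boldsymbol b^{(1)} , \boldsymbol b^{(2)} , \boldsymbol b^{(3)}) = {1 \over 2} \| \boldsymbol y_i - \hat{\boldsymbol y}_i \|^2 = {1 \over 2} \sum_{j=1}^m (y_{ij} - \hat y_{ij})^2$$

The cost function is thus a multivariate function of all the weights and offsets of every layer, and the goal of training is to find the weight matrices and offset vectors that minimize it. This is where gradient descent comes in. The gradient is the vector of the partial derivatives of a multivariate function with respect to each of its variables. Each partial derivative reflects how strongly that variable drives the growth of the function value, so the gradient points in the direction in which the function grows fastest in the multidimensional space, and the opposite direction is the one in which it falls fastest. Moving the variables in that opposite direction is what is called gradient descent. Depending on which training samples are used to compute the gradient of the cost function in each iteration, there are three kinds of gradient descent: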

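- Batch Gradient Descent (BGD). Each training iteration uses the full training set to compute the gradient of the cost function.

- Stochastic Gradient Descent (SGD). Each training iteration uses only one random sample from the training set to compute the gradient of the cost function.

- Mini-Batch Gradient Descent (MBGD). Each training iteration uses a random subset of the training set to compute the gradient of the cost function. This scheme is a compromise between the two above and usually works best.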
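The cost function and the three sampling schemes listed above can be sketched as follows. The function names and the seed are illustrative assumptions. Note one simplification: instead of drawing a fresh random sample or subset at every iteration, the sketch shuffles the indices once and cuts them into consecutive batches, which is a common way to organize the three schemes.

```python
import random

def sample_loss(y, y_hat):
    """L_i = 0.5 * ||y - y_hat||^2 for a single sample."""
    return 0.5 * sum((a - b) ** 2 for a, b in zip(y, y_hat))

def cost(ys, y_hats):
    """J = mean of the per-sample losses."""
    return sum(sample_loss(y, yh) for y, yh in zip(ys, y_hats)) / len(ys)

def make_batches(num_samples, batch_size, rng):
    """Shuffle the sample indices and cut them into batches.

    batch_size == num_samples -> BGD (one batch holding every sample)
    batch_size == 1           -> SGD (one sample per update)
    anything in between       -> MBGD
    """
    indices = list(range(num_samples))
    rng.shuffle(indices)
    return [indices[i:i + batch_size]
            for i in range(0, num_samples, batch_size)]

rng = random.Random(42)
bgd = make_batches(10, 10, rng)   # 1 batch of 10
sgd = make_batches(10, 1, rng)    # 10 batches of 1
mbgd = make_batches(10, 4, rng)   # batches of 4, 4 and 2
```

The last mini-batch is smaller when the batch size does not divide the number of samples, so the mean in the cost should be taken over the actual batch length.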

(2) Gradient Derivation

As seen above, the key to training a neural network is to obtain the gradient of its cost function first; only then can the network parameters be optimized step by step along the descent direction. The derivative of a sum equals the sum of the derivatives, that is

$${d \over dx}[f_1(x)+f_2(x)] = {d \over dx}f_1(x) + {d \over dx}f_2(x)$$

Since a gradient is a vector of partial derivatives, the same property applies to it, so

$$\nabla J = {1 \over t} \sum_{i=1}^t \nabla L_i$$

For any single training sample, the gradient of its error function is:

$$\nabla L = \left\{ \begin{matrix} {\partial L \over \partial \boldsymbol w^{(1)}} & {\partial L \over \partial \boldsymbol w^{(2)}} & {\partial L \over \partial \boldsymbol w^{(3)}} & {\partial L \over \partial \boldsymbol b^{(1)}} & {\partial L \over \partial \boldsymbol b^{(2)}} & {\partial L \over \partial \boldsymbol b^{(3)}} \end{matrix} \right\}$$

Braces are used here because each element is itself a matrix or a vector. Consider first the partial derivatives with respect to the weight matrices. Taking the first layer as an example, the forward computation shows that $w_{1q}^{(1)} \sim w_{nq}^{(1)}$ are arguments of $z_q^{(1)}$ only, and $z_q^{(1)}$ is in turn an argument of $L$. For a composite function $f[g(x)]$, the derivative is

$${df \over dx} = {df \over dg} {dg \over dx}$$

The same rule applies to partial derivatives, so

$${\partial L \over \partial w_{pq}^{(1)}} = {\partial L \over \partial z_q^{(1)}} {\partial z_q^{(1)} \over \partial w_{pq}^{(1)}}$$

Applying this to every entry gives

$${\partial L \over \partial \boldsymbol w^{(1)}} = \left[ \begin{matrix} {\partial L \over \partial w_{11}^{(1)}} & {\partial L \over \partial w_{21}^{(1)}} & \cdots & {\partial L \over \partial w_{n1}^{(1)}} \\ {\partial L \over \partial w_{12}^{(1)}} & {\partial L \over \partial w_{22}^{(1)}} & \cdots & {\partial L \over \partial w_{n2}^{(1)}} \\ \vdots & \vdots & \ddots & \vdots \\ {\partial L \over \partial w_{1k}^{(1)}} & {\partial L \over \partial w_{2k}^{(1)}} & \cdots & {\partial L \over \partial w_{nk}^{(1)}} \end{matrix} \right] = \left[ \begin{matrix} {\partial L \over \partial z_1^{(1)}} {\partial z_1^{(1)} \over \partial w_{11}^{(1)}} & {\partial L \over \partial z_1^{(1)}} {\partial z_1^{(1)} \over \partial w_{21}^{(1)}} & \cdots & {\partial L \over \partial z_1^{(1)}} {\partial z_1^{(1)} \over \partial w_{n1}^{(1)}} \\ {\partial L \over \partial z_2^{(1)}} {\partial z_2^{(1)} \over \partial w_{12}^{(1)}} & {\partial L \over \partial z_2^{(1)}} {\partial z_2^{(1)} \over \partial w_{22}^{(1)}} & \cdots & {\partial L \over \partial z_2^{(1)}} {\partial z_2^{(1)} \over \partial w_{n2}^{(1)}} \\ \vdots & \vdots & \ddots & \vdots \\ {\partial L \over \partial z_k^{(1)}} {\partial z_k^{(1)} \over \partial w_{1k}^{(1)}} & {\partial L \over \partial z_k^{(1)}} {\partial z_k^{(1)} \over \partial w_{2k}^{(1)}} & \cdots & {\partial L \over \partial z_k^{(1)}} {\partial z_k^{(1)} \over \partial w_{nk}^{(1)}} \end{matrix} \right]$$

From the forward computation,

$${\partial z_1^{(1)} \over \partial w_{p1}^{(1)}} = {\partial z_2^{(1)} \over \partial w_{p2}^{(1)}} = \cdots = {\partial z_k^{(1)} \over \partial w_{pk}^{(1)}} = x_p$$

so

$${\partial L \over \partial \boldsymbol w^{(1)}} = \left[ \begin{matrix} {\partial L \over \partial z_1^{(1)}} \\ {\partial L \over \partial z_2^{(1)}} \\ \vdots \\ {\partial L \over \partial z_k^{(1)}} \end{matrix} \right] \left[ \begin{matrix} {\partial z_q^{(1)} \over \partial w_{1q}^{(1)}} & {\partial z_q^{(1)} \over \partial w_{2q}^{(1)}} & \cdots & {\partial z_q^{(1)} \over \partial w_{nq}^{(1)}} \end{matrix} \right] = \left[ \begin{matrix} {\partial L \over \partial z_1^{(1)}} \\ {\partial L \over \partial z_2^{(1)}} \\ \vdots \\ {\partial L \over \partial z_k^{(1)}} \end{matrix} \right] \left[ \begin{matrix} x_1 & x_2 & \cdots & x_n \end{matrix} \right] = \boldsymbol \delta^{(1)} \boldsymbol x^T$$

Similarly,

$${\partial L \over \partial \boldsymbol w^{(2)}} = \boldsymbol \delta^{(2)} (\boldsymbol o^{(1)})^T$$

$${\partial L \over \partial \boldsymbol w^{(3)}} = \boldsymbol \delta^{(3)} (\boldsymbol o^{(2)})^T$$

Next consider the partial derivatives with respect to the offset vectors. Taking the first layer as an example, the forward computation shows that $b_q^{(1)}$ is an argument of $z_q^{(1)}$ only, so

$${\partial L \over \partial b_q^{(1)}} = {\partial L \over \partial z_q^{(1)}} {\partial z_q^{(1)} \over \partial b_q^{(1)}} = {\partial L \over \partial z_q^{(1)}}$$

because ${\partial z_q^{(1)} \over \partial b_q^{(1)}} = 1$. Applying this to every entry gives

$${\partial L \over \partial \boldsymbol b^{(1)}} = \left[ \begin{matrix} {\partial L \over \partial b_1^{(1)}} \\ {\partial L \over \partial b_2^{(1)}} \\ \vdots \\ {\partial L \over \partial b_k^{(1)}} \end{matrix} \right] = \left[ \begin{matrix} {\partial L \over \partial z_1^{(1)}} \\ {\partial L \over \partial z_2^{(1)}} \\ \vdots \\ {\partial L \over \partial z_k^{(1)}} \end{matrix} \right] = \boldsymbol \delta^{(1)}$$

Similarly,

$${\partial L \over \partial \boldsymbol b^{(2)}} = \boldsymbol \delta^{(2)}$$

$${\partial L \over \partial \boldsymbol b^{(3)}} = \boldsymbol \delta^{(3)}$$

In summary, the gradient of the error function for any single training sample is:

$$\nabla L = \left\{ \begin{matrix} \boldsymbol \delta^{(1)} \boldsymbol x^T & \boldsymbol \delta^{(2)} (\boldsymbol o^{(1)})^T & \boldsymbol \delta^{(3)} (\boldsymbol o^{(2)})^T & \boldsymbol \delta^{(1)} & \boldsymbol \delta^{(2)} & \boldsymbol \delta^{(3)} \end{matrix} \right\}$$

The key to computing the gradient is therefore $\boldsymbol \delta^{(i)}$, called the sensitivity of layer $i$, which reflects how strongly the neurons of layer $i$ influence the total output error of the network. Take the first layer as an example again: $z_1^{(1)}$ is the input of a single activation function, that is, an argument of $o_1^{(1)}$ only, while $o_1^{(1)}$ is an argument of the activation inputs $\boldsymbol z^{(2)}$ of all neurons in the next layer. For a multivariate composite function, the partial derivative is computed as:

$${\partial \over \partial x_1}f[g_1(x_1,x_2),g_2(x_1,x_2)] = {\partial f \over \partial g_1}{\partial g_1 \over \partial x_1} + {\partial f \over \partial g_2}{\partial g_2 \over \partial x_1}$$

so

$${\partial L \over \partial z_1^{(1)}} = {\partial L \over \partial o_1^{(1)}} {\partial o_1^{(1)} \over \partial z_1^{(1)}} = {\partial L \over \partial o_1^{(1)}} f'(z_1^{(1)}) = f'(z_1^{(1)}) \left[ {\partial L \over \partial z_1^{(2)}} {\partial z_1^{(2)} \over \partial o_1^{(1)}} + {\partial L \over \partial z_2^{(2)}} {\partial z_2^{(2)} \over \partial o_1^{(1)}} + \cdots + {\partial L \over \partial z_l^{(2)}} {\partial z_l^{(2)} \over \partial o_1^{(1)}} \right]$$

Applying this to every neuron of the layer gives

$$\boldsymbol \delta^{(1)} = {\partial L \over \partial \boldsymbol z^{(1)}} = \left[ \begin{matrix} {\partial L \over \partial z_1^{(1)}} \\ {\partial L \over \partial z_2^{(1)}} \\ \vdots \\ {\partial L \over \partial z_k^{(1)}} \end{matrix} \right] = \left[ \begin{matrix} f'(z_1^{(1)}) & & & \\ & f'(z_2^{(1)}) & & \\ & & \ddots & \\ & & & f'(z_k^{(1)}) \end{matrix} \right] \left[ \begin{matrix} {\partial z_1^{(2)} \over \partial o_1^{(1)}} & {\partial z_2^{(2)} \over \partial o_1^{(1)}} & \cdots & {\partial z_l^{(2)} \over \partial o_1^{(1)}} \\ {\partial z_1^{(2)} \over \partial o_2^{(1)}} & {\partial z_2^{(2)} \over \partial o_2^{(1)}} & \cdots & {\partial z_l^{(2)} \over \partial o_2^{(1)}} \\ \vdots & \vdots & \ddots & \vdots \\ {\partial z_1^{(2)} \over \partial o_k^{(1)}} & {\partial z_2^{(2)} \over \partial o_k^{(1)}} & \cdots & {\partial z_l^{(2)} \over \partial o_k^{(1)}} \end{matrix} \right] \left[ \begin{matrix} {\partial L \over \partial z_1^{(2)}} \\ {\partial L \over \partial z_2^{(2)}} \\ \vdots \\ {\partial L \over \partial z_l^{(2)}} \end{matrix} \right]$$

that is,

$$\boldsymbol \delta^{(1)} = {\partial \boldsymbol o^{(1)} \over \partial \boldsymbol z^{(1)}} {\partial \boldsymbol z^{(2)} \over \partial \boldsymbol o^{(1)}} {\partial L \over \partial \boldsymbol z^{(2)}} = diag[f'(\boldsymbol z^{(1)})] (\boldsymbol w^{(2)})^T \boldsymbol \delta^{(2)}$$

Similarly,

$$\boldsymbol \delta^{(2)} = {\partial \boldsymbol o^{(2)} \over \partial \boldsymbol z^{(2)}} {\partial \boldsymbol z^{(3)} \over \partial \boldsymbol o^{(2)}} {\partial L \over \partial \boldsymbol z^{(3)}} = diag[f'(\boldsymbol z^{(2)})] (\boldsymbol w^{(3)})^T \boldsymbol \delta^{(3)}$$

The sensitivity of each layer is thus an argument (an input) of the sensitivity of the layer before it. This is how the error propagates backward from the output layer toward the input layer, which is why this kind of network is called a back propagation network. Thanks to this property, however many layers there are, once the sensitivity of the final output layer is known, the sensitivities of all other layers follow by simple iteration. In the three-layer BPNN used in this article, the sensitivity of the output layer is:

$$\boldsymbol \delta^{(3)} = {\partial L \over \partial \boldsymbol z^{(3)}} = \left[ \begin{matrix} {\partial L \over \partial z_1^{(3)}} \\ {\partial L \over \partial z_2^{(3)}} \\ \vdots \\ {\partial L \over \partial z_m^{(3)}} \end{matrix} \right]$$

Here $z_1^{(3)}$ is an argument of $o_1^{(3)}$ (that is, $\hat y_1$) only, and $\hat y_1$ is in turn an argument of the error function $L$ only. Since $L = {1 \over 2} \sum_{j=1}^m (y_j - \hat y_j)^2$, we have ${\partial L \over \partial \hat y_1} = \hat y_1 - y_1$, so

$${\partial L \over \partial z_1^{(3)}} = {\partial L \over \partial o_1^{(3)}} {\partial o_1^{(3)} \over \partial z_1^{(3)}} = {\partial L \over \partial \hat y_1} f'(z_1^{(3)}) = (\hat y_1 - y_1) f'(z_1^{(3)})$$

Applying this to every output neuron gives

$$\boldsymbol \delta^{(3)} = {\partial L \over \partial \boldsymbol z^{(3)}} = \left[ \begin{matrix} {\partial L \over \partial z_1^{(3)}} \\ {\partial L \over \partial z_2^{(3)}} \\ \vdots \\ {\partial L \over \partial z_m^{(3)}} \end{matrix} \right] = \left[ \begin{matrix} f'(z_1^{(3)}) & & & \\ & f'(z_2^{(3)}) & & \\ & & \ddots & \\ & & & f'(z_m^{(3)}) \end{matrix} \right] \left[ \begin{matrix} \hat y_1 - y_1 \\ \hat y_2 - y_2 \\ \vdots \\ \hat y_m - y_m \end{matrix} \right] = diag[f'(\boldsymbol z^{(3)})] (\hat{\boldsymbol y} - \boldsymbol y)$$

With this, the gradient of the error function can be expanded further as

$$\nabla L = \left\{ \begin{matrix} {\partial L \over \partial \boldsymbol w^{(1)}} \\ {\partial L \over \partial \boldsymbol w^{(2)}} \\ {\partial L \over \partial \boldsymbol w^{(3)}} \\ {\partial L \over \partial \boldsymbol b^{(1)}} \\ {\partial L \over \partial \boldsymbol b^{(2)}} \\ {\partial L \over \partial \boldsymbol b^{(3)}} \end{matrix} \right\} = \left\{ \begin{matrix} \boldsymbol \delta^{(1)} \boldsymbol x^T \\ \boldsymbol \delta^{(2)} (\boldsymbol o^{(1)})^T \\ \boldsymbol \delta^{(3)} (\boldsymbol o^{(2)})^T \\ \boldsymbol \delta^{(1)} \\ \boldsymbol \delta^{(2)} \\ \boldsymbol \delta^{(3)} \end{matrix} \right\} = \left\{ \begin{matrix} diag[f'(\boldsymbol z^{(1)})] (\boldsymbol w^{(2)})^T diag[f'(\boldsymbol z^{(2)})] (\boldsymbol w^{(3)})^T diag[f'(\boldsymbol z^{(3)})] (\hat{\boldsymbol y} - \boldsymbol y) \boldsymbol x^T \\ diag[f'(\boldsymbol z^{(2)})] (\boldsymbol w^{(3)})^T diag[f'(\boldsymbol z^{(3)})] (\hat{\boldsymbol y} - \boldsymbol y) (\boldsymbol o^{(1)})^T \\ diag[f'(\boldsymbol z^{(3)})] (\hat{\boldsymbol y} - \boldsymbol y) (\boldsymbol o^{(2)})^T \\ diag[f'(\boldsymbol z^{(1)})] (\boldsymbol w^{(2)})^T diag[f'(\boldsymbol z^{(2)})] (\boldsymbol w^{(3)})^T diag[f'(\boldsymbol z^{(3)})] (\hat{\boldsymbol y} - \boldsymbol y) \\ diag[f'(\boldsymbol z^{(2)})] (\boldsymbol w^{(3)})^T diag[f'(\boldsymbol z^{(3)})] (\hat{\boldsymbol y} - \boldsymbol y) \\ diag[f'(\boldsymbol z^{(3)})] (\hat{\boldsymbol y} - \boldsymbol y) \end{matrix} \right\}$$

Suppose the activation function is the Sigmoid function, $f(x) = {1 \over 1+e^{-x}}$. Then

$$f'(x) = (1+e^{-x})^{-2} e^{-x} = f(x)[1-f(x)]$$

and hence

$$f'(\boldsymbol z^{(i)}) = diag[f(\boldsymbol z^{(i)})][\boldsymbol 1-f(\boldsymbol z^{(i)})] = diag(\boldsymbol o^{(i)})(\boldsymbol 1-\boldsymbol o^{(i)})$$

where $\boldsymbol 1$ is the all-ones vector. At this point the error-function gradient ($\nabla L$) of every sample in the training set can be computed; substituting these into the cost-function gradient formula above (the arithmetic mean) gives the gradient of the cost function.
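The recursion derived above can be verified numerically. The sketch below computes the sensitivities of a three-layer Sigmoid network from back to front and compares one analytic weight derivative with a central finite difference. It uses $\partial L / \partial \hat{\boldsymbol y} = \hat{\boldsymbol y} - \boldsymbol y$, which follows from $L = {1 \over 2} \| \boldsymbol y - \hat{\boldsymbol y} \|^2$. All names (`forward`, `loss`, `backprop`), the layer sizes, the seed and the sample values are illustrative assumptions.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def forward(weights, biases, x):
    """Return the outputs o^(1), o^(2), o^(3) for input x."""
    outs, current = [], x
    for w, b in zip(weights, biases):
        current = [sigmoid(sum(wij * cj for wij, cj in zip(row, current)) + bi)
                   for row, bi in zip(w, b)]
        outs.append(current)
    return outs

def loss(weights, biases, x, y):
    y_hat = forward(weights, biases, x)[-1]
    return 0.5 * sum((a - b) ** 2 for a, b in zip(y, y_hat))

def backprop(weights, biases, x, y):
    """Per-sample gradients: dW[i] = delta_i * input_i^T, dB[i] = delta_i."""
    outs = forward(weights, biases, x)
    # Output layer: delta = f'(z) * (y_hat - y), with f'(z) = o * (1 - o)
    deltas = [[o * (1 - o) * (o - t) for o, t in zip(outs[-1], y)]]
    # Earlier layers, back to front: delta_i = f'(z_i) * (W_{i+1}^T delta_{i+1})
    for i in range(len(weights) - 2, -1, -1):
        w_next, d_next = weights[i + 1], deltas[0]
        delta = [o * (1 - o) * sum(w_next[r][c] * d_next[r]
                                   for r in range(len(w_next)))
                 for c, o in enumerate(outs[i])]
        deltas.insert(0, delta)
    layer_inputs = [x] + outs[:-1]
    dW = [[[d * a for a in inp] for d in delta]
          for delta, inp in zip(deltas, layer_inputs)]
    return dW, deltas

rng = random.Random(1)
sizes = [2, 3, 3, 1]
weights = [[[rng.gauss(0, 1) for _ in range(n_in)] for _ in range(n_out)]
           for n_in, n_out in zip(sizes[:-1], sizes[1:])]
biases = [[rng.gauss(0, 1) for _ in range(n_out)] for n_out in sizes[1:]]
x, y = [0.5, -1.0], [0.3]

dW, dB = backprop(weights, biases, x, y)

# Central finite difference for one first-layer weight
eps = 1e-6
weights[0][1][0] += eps
loss_plus = loss(weights, biases, x, y)
weights[0][1][0] -= 2 * eps
loss_minus = loss(weights, biases, x, y)
weights[0][1][0] += eps  # restore the original value
numeric = (loss_plus - loss_minus) / (2 * eps)
analytic = dW[0][1][0]
```

If the sign of the output-layer term were flipped to $\boldsymbol y - \hat{\boldsymbol y}$, `analytic` and `numeric` would differ in sign, which makes this kind of check a cheap safeguard when implementing the formulas by hand.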

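(3) Iterative Training

Because the gradient points in the direction in which the function value grows fastest, finding the point where the cost function is smallest requires moving against the gradient: all weights and offsets are adjusted by a small amount in the gradient-descent direction, and the size of this adjustment is called the iteration step size. The gradient is then recomputed at the new point and another adjustment is made, and so on until the gradient is small enough.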
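The loop described above can be sketched on a toy cost function whose gradient is known in closed form. This is only an illustration of the update rule $p \leftarrow p - \text{step} \cdot \nabla J(p)$ and of the stopping criterion; the function name `gradient_descent`, the toy cost, the step size and the tolerance are all assumptions chosen for the example.

```python
def gradient_descent(grad, start, step, tol=1e-8, max_iter=10000):
    """Repeat p <- p - step * grad(p) until the gradient norm is below tol."""
    p = list(start)
    for _ in range(max_iter):
        g = grad(p)
        if sum(gi * gi for gi in g) ** 0.5 < tol:
            break  # gradient is small enough: stop iterating
        p = [pi - step * gi for pi, gi in zip(p, g)]
    return p

def grad_J(p):
    """Gradient of the toy cost J(p) = (p0 - 3)^2 + 2 * (p1 + 1)^2."""
    return [2 * (p[0] - 3), 4 * (p[1] + 1)]

minimum = gradient_descent(grad_J, start=[0.0, 0.0], step=0.1)
```

In a network, `p` would stand for all weights and offsets together and `grad` for the averaged sample gradients. A step size that is too large makes the iteration overshoot and diverge, while one that is too small makes it slow.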

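IV. Normalization

Normalization is a linear transformation. Every component of the vectors is divided by a common scale shared by those vectors (typically the norm, the largest coordinate, or the distance between the largest and smallest coordinates), optionally followed by a shift that centres the data. In other words, a linear transformation puts all quantities into the same unit (or makes them dimensionless, for example a proportion) so that they can be compared with each other. It is like judging a student's two exam results: one should compare the rankings rather than the raw scores.

As the graphs of the response functions show, every output of the network can only lie in $[0,1]$ or $[-1,1]$, so the outputs must be normalized before the network can be applied to a real problem. The outputs of the training samples can be normalized as follows:

$$\boldsymbol y^{\circ} = {\boldsymbol y - \min(\boldsymbol y) \over \max(\boldsymbol y) - \min(\boldsymbol y)}$$

where $\boldsymbol y$ is the output vector of a training sample and $\boldsymbol y^{\circ}$ is the normalized output vector of that sample. The minimum and maximum are taken per component over the training samples, and the division is component-wise. Once the range of the quantity to be estimated is known, say with upper and lower limit vectors $\boldsymbol m$ and $\boldsymbol n$ (bold symbols, not to be confused with the dimensions $m$ and $n$ used earlier), the result estimated by the network must be denormalized:

$$\hat{\boldsymbol y} = \hat{\boldsymbol y}^{\circ}(\boldsymbol m - \boldsymbol n) + \boldsymbol n$$

where $\hat{\boldsymbol y}^{\circ}$ is the estimate vector output by the network, which is already normalized.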
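The two formulas above form a round trip, which the following minimal sketch illustrates. The function names and the sample values are illustrative assumptions; the minimum and maximum are taken per output component over all samples.

```python
def fit_min_max(samples):
    """Component-wise minimum and maximum over a list of output vectors."""
    columns = list(zip(*samples))
    return [min(c) for c in columns], [max(c) for c in columns]

def normalize(y, lower, upper):
    """y_norm = (y - min) / (max - min), component by component.

    Assumes max > min for every component; a constant component would
    divide by zero and needs separate handling.
    """
    return [(v - lo) / (hi - lo) for v, lo, hi in zip(y, lower, upper)]

def denormalize(y_norm, lower, upper):
    """y = y_norm * (max - min) + min, the inverse mapping."""
    return [v * (hi - lo) + lo for v, lo, hi in zip(y_norm, lower, upper)]

samples = [[10.0, -1.0], [20.0, 0.0], [40.0, 3.0]]
lower, upper = fit_min_max(samples)
normalized = [normalize(y, lower, upper) for y in samples]
restored = [denormalize(y, lower, upper) for y in normalized]
```

Every normalized component lies in $[0, 1]$, which matches the output range of a Sigmoid output layer, and `restored` reproduces the original samples up to rounding.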

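V. Application Example

In this example a BPNN is trained on a series of points given in the plane Cartesian coordinate system so that it fits a curve through them. The implementation is in Python.

(1) Data Preparation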

    import numpy as np
    import matplotlib.pyplot as plt

    # Define the target function
    def f(x):
        return np.sin(x) + 0.5*x

    # Use the function above to create a series of discrete points and plot them
    x = np.linspace(-2*np.pi, 2*np.pi, 50)
    y = f(x)
    plt.figure(2)
    plt.plot(x, y, 'b.', label='points')
    plt.legend(loc=2)

(2) Defining the Network

Following the principles described above, a BPNN class can be defined as follows:

    import numpy as np
    import numpy.matlib as matlib
    import matplotlib.pyplot as plt
    import numpy.random as random

    class BPANN:
        '''
        1. Defines a neural network whose layers contain neures=[k1, k2, ...]
           neurons, where k1 is the number of input neurons.
        2. The argument W is a list of the connection weight matrices between
           adjacent neuron layers; the argument B is a list of the offset
           (bias) vectors of each neuron layer. Both default to random values.
        3. The activation function of every neuron is the Sigmoid function.
        '''
        def __init__(self, neures, W=None, B=None):
            self.neures = neures
            self.W = []  # The weight matrices of each neuron layer
            self.B = []  # The bias vectors of each neuron layer
            if W is not None and B is not None:
                # Use the supplied parameters: W[i] must have shape
                # (neures[i+1], neures[i]) and B[i] shape (neures[i+1], 1)
                self.W = [np.matrix(w) for w in W]
                self.B = [np.matrix(b) for b in B]
            else:
                # Construct random weight and bias matrices for each layer
                for i in range(1, len(neures)):
                    # Random floats following the standard normal distribution
                    self.W.append(matlib.randn(neures[i], neures[i-1]))
                    self.B.append(matlib.randn(neures[i], 1))

        # Activation function (Sigmoid function) of each neuron
        def actFun(self, x):  # The input x is treated as a vector
            return 1/(1+np.exp(-x))

        # Use the ANN to get the output
        def work(self, X, lowerLimit=0, upperLimit=1):
            # Check the type of the input parameters
            if not all(isinstance(x, (float, int)) for x in X):
                raise Exception("the type of parameters must be float or int list")
            else:
                self.Z = []  # The input vectors of each neuron layer
                self.O = []  # The output vectors of each neuron layer
                # The input vector of the first neuron layer
                self.Z.append(self.W[0]*np.matrix(X).T + self.B[0])
                # The output vector of the first neuron layer
                self.O.append(self.actFun(self.Z[-1]))
                for (w, b) in zip(self.W[1:], self.B[1:]):
                    self.Z.append(w*self.O[-1] + b)
                    self.O.append(self.actFun(self.Z[-1]))
                # Denormalize and convert the output to a list
                return list((self.O[-1]*(upperLimit-lowerLimit)+lowerLimit).A1)

        # Train the ANN
        def train(self, sampleX, sampleY, step, iterations=100, size=0):
            '''
            sampleX: inputs of all distinct training samples, a list with one
                     entry (itself a list of numbers) per sample.
            sampleY: outputs of all distinct training samples, a list of the
                     same length as sampleX.
            step: step size of each training iteration, a number in (0, 10).
            iterations: training multiplier; multiplied by the number of sample
                        subsets it gives the number of training iterations.
                        A positive integer, 100 by default.
            size: size of the sample subset used in each iteration, an integer
                  from 1 up to the size of the full sample set (if it is larger,
                  or 0, the full sample set is used).
            '''
            # The index list of the samples in the full set
            self.uniSetIndices = list(range(0, len(sampleX)))
            random.shuffle(self.uniSetIndices)  # Shuffle the sample order
            # The list of the index lists of the samples in each subset
            self.subsetsIndices = []
            if size > 0 and size <= len(sampleX):
                i = 0
                while i < len(sampleX):
                    self.subsetsIndices.append(self.uniSetIndices[i: i+size])
                    i = i + size
            else:
                self.subsetsIndices = [self.uniSetIndices]
            # Normalize sampleY
            SYM = np.matrix(sampleY)
            # Use each column's max to construct a matrix of the same shape
            maxData = matlib.ones((SYM.shape[0], 1)) * np.amax(SYM, axis=0)
            # Use each column's min to construct a matrix of the same shape
            minData = matlib.ones((SYM.shape[0], 1)) * np.amin(SYM, axis=0)
            # Normal = (original - min)/(max - min)
            sampleY = np.nan_to_num(np.divide((SYM - minData),
                                              (maxData - minData)), nan=1).tolist()
            # The list of the loss expectation of each subset in each iteration
            J = []
            # The number of neuron layers, including the input layer
            layerNum = len(self.neures)
            while iterations > 0:
                # Process each subset
                for sI in self.subsetsIndices:
                    # The partial derivative matrices of each layer's weights
                    self.dW = []
                    # The partial derivative vectors of each layer's biases
                    self.dB = []
                    # Initialize all weight and bias gradients with zeros
                    for i in range(1, layerNum):
                        self.dW.append(matlib.zeros((self.neures[i],
                                                     self.neures[i-1])))
                        self.dB.append(matlib.zeros((self.neures[i], 1)))
                    # Process each sample in the subset
                    L = 0  # The loss of all samples in the subset
                    subSize = len(sI)  # The size of the subset
                    for i in sI:
                        # The sensitivity vectors of each neuron layer
                        Delta = []
                        # Get the current state of the ANN
                        Y = np.matrix(self.work(sampleX[i])).T
                        # Accumulate the loss, half the sum of squared errors
                        L = L + 0.5*np.sum(np.square(Y-np.matrix(sampleY[i]).T))
                        # Get the sensitivity vector of the last neuron layer
                        Delta.append(np.diag(np.multiply(self.O[-1],
                            (1-self.O[-1])).A1) * (Y-np.matrix(sampleY[i]).T))
                        for j in range(layerNum-2, 0, -1):
                            # Delta_(j-1) = diag[f'(Z_(j-1))]*W_j.T*Delta_j
                            # (0-based indices into self.Z, self.W and self.O)
                            Delta.append(np.diag(np.multiply(self.O[j-1],
                                (1-self.O[j-1])).A1) * self.W[j].T*Delta[-1])
                        Delta.reverse()
                        # Sum gradients at each sample in the subset
                        self.dW[0] = self.dW[0] + Delta[0