Feature extraction in the YOLOv3 object detection model

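The image classification chapter explained how a convolutional neural network extracts image features: stacking several convolution and pooling layers yields feature maps with progressively richer semantic content. Detection uses a convolutional network in the same way, extracting features layer by layer, and the final output feature maps encode information such as object position and class.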

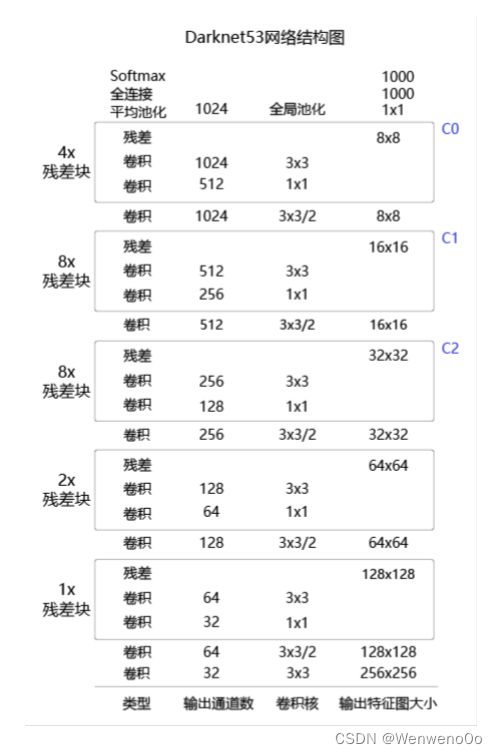

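YOLOv3 uses Darknet53 as its backbone network. The structure of Darknet53 is shown in the figure; the network achieves strong results on the ImageNet image classification task. For detection, the average pooling, fully connected layer, and Softmax that follow C0 in the figure are removed, and the part of the network from the input through C0 is kept as the base network of the detection model, also called the backbone. YOLOv3 then adds detection-specific modules on top of this backbone.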

The code below implements the Darknet53 backbone.

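Terminology: stride of a feature map

Feature extraction usually involves convolutions or pooling with a stride greater than 1, so feature maps become smaller the deeper they sit in the network. The stride of a feature map is the input image size divided by the feature map size. For example, if C0 is 20×20 and the input image is 640×640, the stride of C0 is $\frac{640}{20}=32$. Likewise, the stride of C1 is 16 and the stride of C2 is 8.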
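
The definition above can be checked with a few lines of Python. This is only a minimal sketch: the helper name `feature_stride` is made up for illustration, and the 40×40 and 80×80 sizes for C1 and C2 are derived from the strides 16 and 8 stated above for a 640×640 input.

```python
def feature_stride(input_size: int, feature_size: int) -> int:
    """Stride of a feature map: input size divided by feature-map size."""
    if input_size % feature_size != 0:
        raise ValueError("input size must be a multiple of the feature-map size")
    return input_size // feature_size


# Feature-map sizes for a 640x640 input (C1 and C2 derived from their strides).
for name, size in [("C0", 20), ("C1", 40), ("C2", 80)]:
    print(name, "stride:", feature_stride(640, size))
```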

## PaddlePaddle implementation

import paddle
import paddle.nn.functional as F
import numpy as np

class ConvBNLayer(paddle.nn.Layer):
    """Convolution followed by batch normalization and an optional leaky ReLU."""

    def __init__(self, ch_in, ch_out,
                 kernel_size=3, stride=1, groups=1,
                 padding=0, act="leaky"):
        super(ConvBNLayer, self).__init__()

        # The convolution has no bias; batch normalization supplies the shift.
        self.conv = paddle.nn.Conv2D(
            in_channels=ch_in,
            out_channels=ch_out,
            kernel_size=kernel_size,
            stride=stride,
            padding=padding,
            groups=groups,
            weight_attr=paddle.ParamAttr(
                initializer=paddle.nn.initializer.Normal(0., 0.02)),
            bias_attr=False)

        # The batch-norm scale and shift are excluded from L2 weight decay.
        self.batch_norm = paddle.nn.BatchNorm2D(
            num_features=ch_out,
            weight_attr=paddle.ParamAttr(
                initializer=paddle.nn.initializer.Normal(0., 0.02),
                regularizer=paddle.regularizer.L2Decay(0.)),
            bias_attr=paddle.ParamAttr(
                initializer=paddle.nn.initializer.Constant(0.0),
                regularizer=paddle.regularizer.L2Decay(0.)))
        self.act = act

    def forward(self, inputs):
        out = self.conv(inputs)
        out = self.batch_norm(out)
        if self.act == 'leaky':
            out = F.leaky_relu(x=out, negative_slope=0.1)
        return out

class DownSample(paddle.nn.Layer):
    # Downsampling: halves the spatial size using a convolution with stride=2
    def __init__(self,
                 ch_in,
                 ch_out,
                 kernel_size=3,
                 stride=2,
                 padding=1):
        super(DownSample, self).__init__()

        self.conv_bn_layer = ConvBNLayer(
            ch_in=ch_in,
            ch_out=ch_out,
            kernel_size=kernel_size,
            stride=stride,
            padding=padding)
        self.ch_out = ch_out

    def forward(self, inputs):
        out = self.conv_bn_layer(inputs)
        return out

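class BasicBlock(paddle.nn.Layer):
    """
    Basic residual block: the input x passes through two convolution layers,
    and the output of the second convolution is added to x.
    """

    def __init__(self, ch_in, ch_out):
        super(BasicBlock, self).__init__()

        # 1x1 convolution: ch_in -> ch_out channels
        self.conv1 = ConvBNLayer(
            ch_in=ch_in,
            ch_out=ch_out,
            kernel_size=1,
            stride=1,
            padding=0
        )
        # 3x3 convolution: ch_out -> ch_out * 2 channels.
        # The residual add in forward() requires ch_in == ch_out * 2.
        self.conv2 = ConvBNLayer(
            ch_in=ch_out,
            ch_out=ch_out * 2,
            kernel_size=3,
            stride=1,
            padding=1
        )

    def forward(self, inputs):
        conv1 = self.conv1(inputs)
        conv2 = self.conv2(conv1)
        out = paddle.add(x=inputs, y=conv2)
        return out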

class LayerWarp(paddle.nn.Layer):
    """
    Stacks several residual blocks to form one stage of the Darknet53 network.
    """

    def __init__(self, ch_in, ch_out, count, is_test=True):
        super(LayerWarp, self).__init__()

        self.basicblock0 = BasicBlock(ch_in, ch_out)
        self.res_out_list = []
        for i in range(1, count):
            # add_sublayer registers the block as a sub-layer of this layer
            res_out = self.add_sublayer(
                "basic_block_%d" % (i),
                BasicBlock(ch_out * 2, ch_out))
            self.res_out_list.append(res_out)

    def forward(self, inputs):
        y = self.basicblock0(inputs)
        for basic_block_i in self.res_out_list:
            y = basic_block_i(y)
        return y

# Number of residual blocks in each DarkNet stage, taken from the DarkNet architecture diagram
DarkNet_cfg = {53: ([1, 2, 8, 8, 4])}

class DarkNet53_conv_body(paddle.nn.Layer):
    def __init__(self):
        super(DarkNet53_conv_body, self).__init__()
        self.stages = DarkNet_cfg[53]
        self.stages = self.stages[0:5]

        # First convolution layer
        self.conv0 = ConvBNLayer(
            ch_in=3,
            ch_out=32,
            kernel_size=3,
            stride=1,
            padding=1)

        # Downsampling, implemented with a stride=2 convolution
        self.downsample0 = DownSample(
            ch_in=32,
            ch_out=32 * 2)

        # Build each stage
        self.darknet53_conv_block_list = []
        self.downsample_list = []
        for i, stage in enumerate(self.stages):
            conv_block = self.add_sublayer(
                "stage_%d" % (i),
                LayerWarp(32 * (2 ** (i + 1)),
                          32 * (2 ** i),
                          stage))
            self.darknet53_conv_block_list.append(conv_block)

        # A DownSample between consecutive stages halves the spatial size
        for i in range(len(self.stages) - 1):
            downsample = self.add_sublayer(
                "stage_%d_downsample" % i,
                DownSample(ch_in=32 * (2 ** (i + 1)),
                           ch_out=32 * (2 ** (i + 2))))
            self.downsample_list.append(downsample)

    def forward(self, inputs):
        out = self.conv0(inputs)
        # print("conv1:", out.numpy())
        out = self.downsample0(out)
        # print("dy:", out.numpy())
        blocks = []
        # Apply each stage to the input in turn
        for i, conv_block_i in enumerate(self.darknet53_conv_block_list):
            out = conv_block_i(out)
            blocks.append(out)
            if i < len(self.stages) - 1:
                out = self.downsample_list[i](out)
        return blocks[-1:-4:-1]  # return C0, C1, C2
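
To sanity-check what the backbone above returns, the shapes can be traced without running any framework. The sketch below is plain Python and rests on two assumptions: it uses the standard convolution output-size formula `(size + 2*padding - kernel) // stride + 1`, and the helper names `conv_out_size` and `trace_darknet53` are made up for illustration. It mirrors the layer layout in the listing: a stride-1 stem, one stride-2 downsampling convolution before each stage, and residual blocks that leave the shape unchanged.

```python
def conv_out_size(size: int, kernel: int, stride: int, padding: int) -> int:
    """Output length of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_darknet53(input_size: int = 640, stages=(1, 2, 8, 8, 4)):
    """Return (channels, spatial size, stride) for the output of each stage."""
    # conv0: 3x3, stride 1, padding 1, 3 -> 32 channels (size unchanged)
    size = conv_out_size(input_size, kernel=3, stride=1, padding=1)
    channels = 32
    outputs = []
    for _ in stages:
        # A 3x3 stride-2 convolution precedes every stage and doubles the channels.
        size = conv_out_size(size, kernel=3, stride=2, padding=1)
        channels *= 2
        # Residual blocks use stride-1 convolutions, so the shape does not change.
        outputs.append((channels, size, input_size // size))
    return outputs


outputs = trace_darknet53(640)
c0, c1, c2 = outputs[-1:-4:-1]  # same slice as the backbone's return value
print("C0 (channels, size, stride):", c0)
print("C1 (channels, size, stride):", c1)
print("C2 (channels, size, stride):", c2)
```

For a 640×640 input this gives strides of 32, 16, and 8 for C0, C1, and C2, matching the terminology note earlier in the section.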

## PyTorch implementation

# Note: this rebinds F, so keep this code in a separate module from the PaddlePaddle version.
import torch.nn as nn
import torch.nn.functional as F


class BN_Conv2d_Leaky(nn.Module):
    """
    Convolution, then batch normalization, then leaky ReLU.
    """

    def __init__(self, in_channels, out_channels, kernel_size, stride, padding,
                 dilation=1, groups=1, bias=False):
        super(BN_Conv2d_Leaky, self).__init__()
        self.seq = nn.Sequential(
            nn.Conv2d(in_channels, out_channels, kernel_size=kernel_size, stride=stride,
                      padding=padding, dilation=dilation, groups=groups, bias=bias),
            nn.BatchNorm2d(out_channels)
        )

    def forward(self, x):
        # Uses PyTorch's default negative_slope of 0.01
        # (the PaddlePaddle version uses 0.1).
        return F.leaky_relu(self.seq(x))

class Dark_block(nn.Module):
    """Residual block for Darknet: 1x1 convolution, 3x3 convolution, then a skip connection."""

    def __init__(self, channels, is_se=False, inner_channels=None):
        super(Dark_block, self).__init__()
        self.is_se = is_se
        if inner_channels is None:
            inner_channels = channels // 2
        self.conv1 = BN_Conv2d_Leaky(channels, inner_channels, 1, 1, 0)
        self.conv2 = nn.Conv2d(inner_channels, channels, 3, 1, 1)
        self.bn = nn.BatchNorm2d(channels)
        if self.is_se:
            # SE is not defined in this section; it must be provided
            # elsewhere before is_se=True can be used.
            self.se = SE(channels, 16)

    def forward(self, x):
        out = self.conv1(x)
        out = self.conv2(out)
        out = self.bn(out)
        if self.is_se:
            coefficient = self.se(out)
            out *= coefficient
        out += x  # residual connection
        return F.leaky_relu(out)

class DarkNet(nn.Module):
    """Darknet classifier: convolutional stages, global average pooling, and a linear layer."""

    def __init__(self, layers, num_classes, is_se=False):
        super(DarkNet, self).__init__()
        self.is_se = is_se
        filters = [64, 128, 256, 512, 1024]

        # The original code called an undefined BN_Conv2d here;
        # BN_Conv2d_Leaky is the block this section defines.
        self.conv1 = BN_Conv2d_Leaky(3, 32, 3, 1, 1)
        self.redu1 = BN_Conv2d_Leaky(32, 64, 3, 2, 1)
        self.conv2 = self.__make_layers(filters[0], layers[0])
        self.redu2 = BN_Conv2d_Leaky(filters[0], filters[1], 3, 2, 1)
        self.conv3 = self.__make_layers(filters[1], layers[1])
        self.redu3 = BN_Conv2d_Leaky(filters[1], filters[2], 3, 2, 1)
        self.conv4 = self.__make_layers(filters[2], layers[2])
        self.redu4 = BN_Conv2d_Leaky(filters[2], filters[3], 3, 2, 1)
        self.conv5 = self.__make_layers(filters[3], layers[3])
        self.redu5 = BN_Conv2d_Leaky(filters[3], filters[4], 3, 2, 1)
        self.conv6 = self.__make_layers(filters[4], layers[4])
        self.global_pool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(filters[4], num_classes)

    def __make_layers(self, num_filter, num_layers):
        layers = []
        for _ in range(num_layers):
            layers.append(Dark_block(num_filter, self.is_se))
        return nn.Sequential(*layers)

    def forward(self, x):
        out = self.conv1(x)
        out = self.redu1(out)
        out = self.conv2(out)
        out = self.redu2(out)
        out = self.conv3(out)
        out = self.redu3(out)
        out = self.conv4(out)
        out = self.redu4(out)
        out = self.conv5(out)
        out = self.redu5(out)
        out = self.conv6(out)
        out = self.global_pool(out)
        out = out.view(out.size(0), -1)  # flatten to (batch, channels)
        out = self.fc(out)
        # Softmax over the class dimension; dim is given explicitly
        # because the implicit form is deprecated.
        return F.softmax(out, dim=1)

def darknet_53(num_classes=1000):
    return DarkNet([1, 2, 8, 8, 4], num_classes)
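
As a quick check on the configuration `[1, 2, 8, 8, 4]`, the weighted layers of the classifier above can be counted in plain Python. This is only an illustrative sketch and the function name `count_weighted_layers` is made up. It counts one stem convolution, one stride-2 convolution before each stage, two convolutions per residual block, and the final fully connected layer, which is one way to arrive at the "53" in the network's name.

```python
def count_weighted_layers(stages=(1, 2, 8, 8, 4)) -> int:
    """Count convolution and fully connected layers in the classifier."""
    stem = 1                        # first 3x3 convolution
    downsamples = len(stages)       # one stride-2 convolution before each stage
    residual = 2 * sum(stages)      # each residual block has a 1x1 and a 3x3 convolution
    fully_connected = 1             # final classification layer
    return stem + downsamples + residual + fully_connected


print("weighted layers:", count_weighted_layers())
```

When the network serves as a detection backbone, the fully connected layer is dropped, which leaves the 52 convolution layers.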