How to switch YOLOv5 to EIoU / alpha IoU

Contents

- How to switch YOLOv5 to EIoU / alpha IoU

  - 1.Switching to EIoU

  - 2.Switching to alpha IoU

  - 3.IoU

  - 4.GIoU

  - 4.DIoU

  - 5.CIoU

  - 6.How the IoU losses work

1.Switching to EIoU

Step 1: In utils/metrics.py, replace bbox_iou() with the following code.

def bbox_iou(box1, box2, x1y1x2y2=True, GIoU=False, DIoU=False, CIoU=False, EIoU=False, eps=1e-7):
    # Returns the IoU of box1 to box2. box1 is 4, box2 is nx4
    # Relies on the `math` and `torch` imports already present in utils/metrics.py
    box2 = box2.T

    # Get the coordinates of bounding boxes
    if x1y1x2y2:  # x1, y1, x2, y2 = box1
        b1_x1, b1_y1, b1_x2, b1_y2 = box1[0], box1[1], box1[2], box1[3]
        b2_x1, b2_y1, b2_x2, b2_y2 = box2[0], box2[1], box2[2], box2[3]
    else:  # transform from xywh to xyxy
        b1_x1, b1_x2 = box1[0] - box1[2] / 2, box1[0] + box1[2] / 2
        b1_y1, b1_y2 = box1[1] - box1[3] / 2, box1[1] + box1[3] / 2
        b2_x1, b2_x2 = box2[0] - box2[2] / 2, box2[0] + box2[2] / 2
        b2_y1, b2_y2 = box2[1] - box2[3] / 2, box2[1] + box2[3] / 2

    # Intersection area
    inter = (torch.min(b1_x2, b2_x2) - torch.max(b1_x1, b2_x1)).clamp(0) * \
            (torch.min(b1_y2, b2_y2) - torch.max(b1_y1, b2_y1)).clamp(0)

    # Union Area
    w1, h1 = b1_x2 - b1_x1, b1_y2 - b1_y1 + eps
    w2, h2 = b2_x2 - b2_x1, b2_y2 - b2_y1 + eps
    union = w1 * h1 + w2 * h2 - inter + eps

    iou = inter / union
    if GIoU or DIoU or CIoU or EIoU:
        cw = torch.max(b1_x2, b2_x2) - torch.min(b1_x1, b2_x1)  # convex (smallest enclosing box) width
        ch = torch.max(b1_y2, b2_y2) - torch.min(b1_y1, b2_y1)  # convex height
        if CIoU or DIoU or EIoU:  # Distance or Complete IoU https://arxiv.org/abs/1911.08287v1
            c2 = cw ** 2 + ch ** 2 + eps  # convex diagonal squared
            rho2 = ((b2_x1 + b2_x2 - b1_x1 - b1_x2) ** 2 +
                    (b2_y1 + b2_y2 - b1_y1 - b1_y2) ** 2) / 4  # center distance squared
            if DIoU:
                return iou - rho2 / c2  # DIoU
            elif CIoU:  # https://github.com/Zzh-tju/DIoU-SSD-pytorch/blob/master/utils/box/box_utils.py#L47
                v = (4 / math.pi ** 2) * torch.pow(torch.atan(w2 / h2) - torch.atan(w1 / h1), 2)
                with torch.no_grad():
                    alpha = v / (v - iou + (1 + eps))
                return iou - (rho2 / c2 + v * alpha)  # CIoU
            elif EIoU:
                rho_w2 = ((b2_x2 - b2_x1) - (b1_x2 - b1_x1)) ** 2  # squared width difference
                rho_h2 = ((b2_y2 - b2_y1) - (b1_y2 - b1_y1)) ** 2  # squared height difference
                cw2 = cw ** 2 + eps
                ch2 = ch ** 2 + eps
                return iou - (rho2 / c2 + rho_w2 / cw2 + rho_h2 / ch2)  # EIoU
        else:  # GIoU https://arxiv.org/pdf/1902.09630.pdf
            c_area = cw * ch + eps  # convex area
            return iou - (c_area - union) / c_area  # GIoU
    else:
        return iou  # IoU

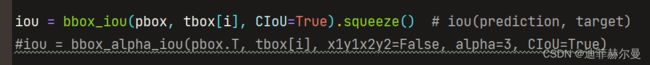

Step 2: In utils/loss.py, find the __call__() method of the ComputeLoss class and replace the line that computes iou in the regression loss with the following:

iou = bbox_iou(pbox.T, tbox[i], x1y1x2y2=False, CIoU=False, EIoU=True)  # iou(prediction, target)
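Before training with the new flag, it can help to check the EIoU arithmetic on a few hand-picked boxes. The following is a minimal scalar sketch in plain Python that mirrors the EIoU branch above for a single pair of corner-format boxes. The helper name `eiou_xyxy` and the sample boxes are illustrative assumptions, not part of YOLOv5.

```python
def eiou_xyxy(b1, b2, eps=1e-7):
    """Scalar EIoU of two boxes given as (x1, y1, x2, y2).

    Hypothetical helper for illustration; it follows the same terms as the
    EIoU branch of bbox_iou() above, without tensors.
    """
    w1, h1 = b1[2] - b1[0], b1[3] - b1[1]
    w2, h2 = b2[2] - b2[0], b2[3] - b2[1]

    # Intersection and union
    inter_w = max(0.0, min(b1[2], b2[2]) - max(b1[0], b2[0]))
    inter_h = max(0.0, min(b1[3], b2[3]) - max(b1[1], b2[1]))
    inter = inter_w * inter_h
    union = w1 * h1 + w2 * h2 - inter + eps
    iou = inter / union

    # Smallest enclosing box
    cw = max(b1[2], b2[2]) - min(b1[0], b2[0])
    ch = max(b1[3], b2[3]) - min(b1[1], b2[1])
    c2 = cw ** 2 + ch ** 2 + eps

    # Squared center distance, squared width difference, squared height difference
    rho2 = ((b2[0] + b2[2] - b1[0] - b1[2]) ** 2 +
            (b2[1] + b2[3] - b1[1] - b1[3]) ** 2) / 4
    rho_w2 = (w2 - w1) ** 2
    rho_h2 = (h2 - h1) ** 2

    return iou - (rho2 / c2 + rho_w2 / (cw ** 2 + eps) + rho_h2 / (ch ** 2 + eps))


target = (0.0, 0.0, 4.0, 4.0)
same = eiou_xyxy(target, target)                   # identical boxes
shifted = eiou_xyxy((1.0, 0.0, 5.0, 4.0), target)  # same size, moved right by 1
narrow = eiou_xyxy((1.0, 0.0, 3.0, 4.0), target)   # same center, half the width

print(f"same={same:.4f} shifted={shifted:.4f} narrow={narrow:.4f}")
```

The shifted box is penalized only through the center-distance term, while the narrow box is penalized only through the width term, which is the part EIoU adds on top of DIoU.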

2.Switching to alpha IoU

Step 1: In utils/metrics.py, replace bbox_iou() with the function below, then rename bbox_alpha_iou() to bbox_iou() so that the existing callers pick it up.

def bbox_alpha_iou(box1, box2, x1y1x2y2=False, GIoU=False, DIoU=False, CIoU=False, alpha=3, eps=1e-7):
    # Returns the IoU of box1 to box2, raised to the power alpha. box1 is 4, box2 is nx4
    # Relies on the `math` and `torch` imports already present in utils/metrics.py
    box2 = box2.T

    # Get the coordinates of bounding boxes
    if x1y1x2y2:  # x1, y1, x2, y2 = box1
        b1_x1, b1_y1, b1_x2, b1_y2 = box1[0], box1[1], box1[2], box1[3]
        b2_x1, b2_y1, b2_x2, b2_y2 = box2[0], box2[1], box2[2], box2[3]
    else:  # transform from xywh to xyxy
        b1_x1, b1_x2 = box1[0] - box1[2] / 2, box1[0] + box1[2] / 2
        b1_y1, b1_y2 = box1[1] - box1[3] / 2, box1[1] + box1[3] / 2
        b2_x1, b2_x2 = box2[0] - box2[2] / 2, box2[0] + box2[2] / 2
        b2_y1, b2_y2 = box2[1] - box2[3] / 2, box2[1] + box2[3] / 2

    # Intersection area
    inter = (torch.min(b1_x2, b2_x2) - torch.max(b1_x1, b2_x1)).clamp(0) * \
            (torch.min(b1_y2, b2_y2) - torch.max(b1_y1, b2_y1)).clamp(0)

    # Union Area
    w1, h1 = b1_x2 - b1_x1, b1_y2 - b1_y1 + eps
    w2, h2 = b2_x2 - b2_x1, b2_y2 - b2_y1 + eps
    union = w1 * h1 + w2 * h2 - inter + eps

    # change iou into pow(iou+eps)
    # iou = inter / union
    iou = torch.pow(inter / union + eps, alpha)
    # beta = 2 * alpha
    if GIoU or DIoU or CIoU:
        cw = torch.max(b1_x2, b2_x2) - torch.min(b1_x1, b2_x1)  # convex (smallest enclosing box) width
        ch = torch.max(b1_y2, b2_y2) - torch.min(b1_y1, b2_y1)  # convex height
        if CIoU or DIoU:  # Distance or Complete IoU https://arxiv.org/abs/1911.08287v1
            c2 = (cw ** 2 + ch ** 2) ** alpha + eps  # convex diagonal
            rho_x = torch.abs(b2_x1 + b2_x2 - b1_x1 - b1_x2)
            rho_y = torch.abs(b2_y1 + b2_y2 - b1_y1 - b1_y2)
            rho2 = ((rho_x ** 2 + rho_y ** 2) / 4) ** alpha  # center distance
            if DIoU:
                return iou - rho2 / c2  # DIoU
            elif CIoU:  # https://github.com/Zzh-tju/DIoU-SSD-pytorch/blob/master/utils/box/box_utils.py#L47
                v = (4 / math.pi ** 2) * torch.pow(torch.atan(w2 / h2) - torch.atan(w1 / h1), 2)
                with torch.no_grad():
                    alpha_ciou = v / ((1 + eps) - inter / union + v)
                # return iou - (rho2 / c2 + v * alpha_ciou)  # CIoU
                return iou - (rho2 / c2 + torch.pow(v * alpha_ciou + eps, alpha))  # CIoU
        else:  # GIoU https://arxiv.org/pdf/1902.09630.pdf
            # c_area = cw * ch + eps  # convex area
            # return iou - (c_area - union) / c_area  # GIoU
            c_area = torch.max(cw * ch + eps, union)  # convex area
            return iou - torch.pow((c_area - union) / c_area + eps, alpha)  # GIoU
    else:
        return iou  # torch.log(iou+eps) or iou

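Step 2: In utils/loss.py, find the __call__() method of the ComputeLoss class and change the line that computes iou in the regression loss.

The IoU variants described below (GIoU, DIoU, CIoU) are implemented together in the source function, so you enable one by setting the corresponding argument to True.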
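To get a feel for what the exponent in the alpha IoU function does, the sketch below compares the plain loss 1 - IoU with the powered loss 1 - IoU^alpha for a few IoU values. It assumes the regression loss keeps the form `1.0 - iou`, as in the stock ComputeLoss; the helper name `alpha_iou_loss` is hypothetical and the numbers are only illustrative.

```python
def alpha_iou_loss(iou, alpha=3):
    """Basic alpha IoU loss for a scalar IoU value: 1 - IoU**alpha.

    Hypothetical helper; alpha=3 matches the default of bbox_alpha_iou() above.
    """
    return 1.0 - iou ** alpha


for iou in (0.3, 0.5, 0.9):
    plain = 1.0 - iou
    powered = alpha_iou_loss(iou)
    print(f"IoU={iou:.1f}  1-IoU={plain:.3f}  1-IoU^3={powered:.3f}")
```

With alpha = 1 the powered loss reduces to the plain 1 - IoU loss. With alpha = 3 the loss stays close to 1 for poorly overlapping boxes and only drops quickly once the IoU becomes high.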

3.IoU

import torch

def Iou_loss(preds, bbox, eps=1e-6, reduction='mean'):
    '''
    preds:[[x1,y1,x2,y2], [x1,y1,x2,y2],,,]
    bbox:[[x1,y1,x2,y2], [x1,y1,x2,y2],,,]
    reduction:"mean"or"sum"
    return: loss
    '''
    # Corners of the intersection rectangle
    x1 = torch.max(preds[:, 0], bbox[:, 0])
    y1 = torch.max(preds[:, 1], bbox[:, 1])
    x2 = torch.min(preds[:, 2], bbox[:, 2])
    y2 = torch.min(preds[:, 3], bbox[:, 3])

    # Widths and heights use the inclusive-pixel (+1) convention
    w = (x2 - x1 + 1.0).clamp(0.)
    h = (y2 - y1 + 1.0).clamp(0.)

    inters = w * h
    print("inters:\n", inters)

    uni = (preds[:, 2] - preds[:, 0] + 1.0) * (preds[:, 3] - preds[:, 1] + 1.0) + (bbox[:, 2] - bbox[:, 0] + 1.0) * (
        bbox[:, 3] - bbox[:, 1] + 1.0) - inters
    print("uni:\n", uni)

    # Clamp so that log() never sees 0
    ious = (inters / uni).clamp(min=eps)
    loss = -ious.log()

    if reduction == 'mean':
        loss = torch.mean(loss)
    elif reduction == 'sum':
        loss = torch.sum(loss)
    else:
        raise NotImplementedError
    print("last_loss:\n", loss)
    return loss
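As a worked example of the arithmetic in Iou_loss, the sketch below recomputes the loss for one pair of boxes in plain Python, using the same inclusive-pixel (+1) convention and the same -log(IoU) form. The helper name `iou_plus_one` and the two boxes are assumptions chosen so the numbers are easy to follow by hand.

```python
import math


def iou_plus_one(a, b):
    """Scalar IoU of two (x1, y1, x2, y2) boxes with the +1 pixel convention.

    Hypothetical helper that mirrors the tensor arithmetic of Iou_loss above.
    """
    w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]) + 1.0)
    h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]) + 1.0)
    inter = w * h
    area_a = (a[2] - a[0] + 1.0) * (a[3] - a[1] + 1.0)
    area_b = (b[2] - b[0] + 1.0) * (b[3] - b[1] + 1.0)
    return inter / (area_a + area_b - inter)


pred = (0.0, 0.0, 9.0, 9.0)    # 10 x 10 pixels
gt = (5.0, 5.0, 14.0, 14.0)    # 10 x 10 pixels, overlapping in a 5 x 5 corner

iou = iou_plus_one(pred, gt)   # 25 / (100 + 100 - 25)
loss = -math.log(iou)
print(f"iou={iou:.4f} loss={loss:.4f}")
```

Here the intersection is 25, the union is 175, so the IoU is 1/7 and the loss is ln 7.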

4.GIoU

import torch

def Giou_loss(preds, bbox, eps=1e-7, reduction='mean'):
    '''
    https://github.com/sfzhang15/ATSS/blob/master/atss_core/modeling/rpn/atss/loss.py#L36
    :param preds:[[x1,y1,x2,y2], [x1,y1,x2,y2],,,]
    :param bbox:[[x1,y1,x2,y2], [x1,y1,x2,y2],,,]
    :return: loss
    '''
    # Corners of the intersection rectangle
    ix1 = torch.max(preds[:, 0], bbox[:, 0])
    iy1 = torch.max(preds[:, 1], bbox[:, 1])
    ix2 = torch.min(preds[:, 2], bbox[:, 2])
    iy2 = torch.min(preds[:, 3], bbox[:, 3])

    iw = (ix2 - ix1 + 1.0).clamp(0.)
    ih = (iy2 - iy1 + 1.0).clamp(0.)

    # overlap
    inters = iw * ih
    print("inters:\n", inters)

    # union
    uni = (preds[:, 2] - preds[:, 0] + 1.0) * (preds[:, 3] - preds[:, 1] + 1.0) + (bbox[:, 2] - bbox[:, 0] + 1.0) * (
        bbox[:, 3] - bbox[:, 1] + 1.0) - inters + eps
    print("uni:\n", uni)

    # ious
    ious = inters / uni
    print("Iou:\n", ious)

    # Corners of the smallest enclosing box
    ex1 = torch.min(preds[:, 0], bbox[:, 0])
    ey1 = torch.min(preds[:, 1], bbox[:, 1])
    ex2 = torch.max(preds[:, 2], bbox[:, 2])
    ey2 = torch.max(preds[:, 3], bbox[:, 3])
    ew = (ex2 - ex1 + 1.0).clamp(min=0.)
    eh = (ey2 - ey1 + 1.0).clamp(min=0.)

    # enclosing area
    enclose = ew * eh + eps
    print("enclose:\n", enclose)

    giou = ious - (enclose - uni) / enclose
    loss = 1 - giou

    if reduction == 'mean':
        loss = torch.mean(loss)
    elif reduction == 'sum':
        loss = torch.sum(loss)
    else:
        raise NotImplementedError
    print("last_loss:\n", loss)
    return loss

4.DIoU

import torch

def Diou_loss(preds, bbox, eps=1e-7, reduction='mean'):
    '''
    preds:[[x1,y1,x2,y2], [x1,y1,x2,y2],,,]
    bbox:[[x1,y1,x2,y2], [x1,y1,x2,y2],,,]
    eps: eps to avoid divide 0
    reduction: mean or sum
    return: diou-loss
    '''
    ix1 = torch.max(preds[:, 0], bbox[:, 0])
    iy1 = torch.max(preds[:, 1], bbox[:, 1])
    ix2 = torch.min(preds[:, 2], bbox[:, 2])
    iy2 = torch.min(preds[:, 3], bbox[:, 3])

    iw = (ix2 - ix1 + 1.0).clamp(min=0.)
    ih = (iy2 - iy1 + 1.0).clamp(min=0.)

    # overlaps
    inters = iw * ih

    # union
    uni = (preds[:, 2] - preds[:, 0] + 1.0) * (preds[:, 3] - preds[:, 1] + 1.0) + (bbox[:, 2] - bbox[:, 0] + 1.0) * (
        bbox[:, 3] - bbox[:, 1] + 1.0) - inters

    # iou
    iou = inters / (uni + eps)
    print("iou:\n", iou)

    # inter_diag: squared distance between the two box centers
    cxpreds = (preds[:, 2] + preds[:, 0]) / 2
    cypreds = (preds[:, 3] + preds[:, 1]) / 2

    cxbbox = (bbox[:, 2] + bbox[:, 0]) / 2
    cybbox = (bbox[:, 3] + bbox[:, 1]) / 2

    inter_diag = (cxbbox - cxpreds) ** 2 + (cybbox - cypreds) ** 2
    print("inter_diag:\n", inter_diag)

    # outer_diag: squared diagonal of the smallest enclosing box
    ox1 = torch.min(preds[:, 0], bbox[:, 0])
    oy1 = torch.min(preds[:, 1], bbox[:, 1])
    ox2 = torch.max(preds[:, 2], bbox[:, 2])
    oy2 = torch.max(preds[:, 3], bbox[:, 3])

    outer_diag = (ox1 - ox2) ** 2 + (oy1 - oy2) ** 2
    print("outer_diag:\n", outer_diag)

    # eps guards against a zero diagonal when both boxes collapse to the same point
    diou = iou - inter_diag / (outer_diag + eps)
    diou = torch.clamp(diou, min=-1.0, max=1.0)

    diou_loss = 1 - diou
    print("last_loss:\n", diou_loss)

    if reduction == 'mean':
        loss = torch.mean(diou_loss)
    elif reduction == 'sum':
        loss = torch.sum(diou_loss)
    else:
        raise NotImplementedError
    return loss

5.CIoU

import math

import torch

def Ciou_loss(preds, bbox, eps=1e-7, reduction='mean'):
    '''
    https://github.com/Zzh-tju/DIoU-SSD-pytorch/blob/master/utils/loss/multibox_loss.py
    :param preds:[[x1,y1,x2,y2], [x1,y1,x2,y2],,,]
    :param bbox:[[x1,y1,x2,y2], [x1,y1,x2,y2],,,]
    :param eps: eps to avoid divide 0
    :param reduction: mean or sum
    :return: ciou-loss
    '''
    ix1 = torch.max(preds[:, 0], bbox[:, 0])
    iy1 = torch.max(preds[:, 1], bbox[:, 1])
    ix2 = torch.min(preds[:, 2], bbox[:, 2])
    iy2 = torch.min(preds[:, 3], bbox[:, 3])

    iw = (ix2 - ix1 + 1.0).clamp(min=0.)
    ih = (iy2 - iy1 + 1.0).clamp(min=0.)

    # overlaps
    inters = iw * ih

    # union
    uni = (preds[:, 2] - preds[:, 0] + 1.0) * (preds[:, 3] - preds[:, 1] + 1.0) + (bbox[:, 2] - bbox[:, 0] + 1.0) * (
        bbox[:, 3] - bbox[:, 1] + 1.0) - inters

    # iou
    iou = inters / (uni + eps)
    print("iou:\n", iou)

    # inter_diag: squared distance between the two box centers
    cxpreds = (preds[:, 2] + preds[:, 0]) / 2
    cypreds = (preds[:, 3] + preds[:, 1]) / 2

    cxbbox = (bbox[:, 2] + bbox[:, 0]) / 2
    cybbox = (bbox[:, 3] + bbox[:, 1]) / 2

    inter_diag = (cxbbox - cxpreds) ** 2 + (cybbox - cypreds) ** 2

    # outer_diag: squared diagonal of the smallest enclosing box
    ox1 = torch.min(preds[:, 0], bbox[:, 0])
    oy1 = torch.min(preds[:, 1], bbox[:, 1])
    ox2 = torch.max(preds[:, 2], bbox[:, 2])
    oy2 = torch.max(preds[:, 3], bbox[:, 3])

    outer_diag = (ox1 - ox2) ** 2 + (oy1 - oy2) ** 2

    # eps guards against a zero diagonal when both boxes collapse to the same point
    diou = iou - inter_diag / (outer_diag + eps)
    print("diou:\n", diou)

    # calculate v, alpha
    wbbox = bbox[:, 2] - bbox[:, 0] + 1.0
    hbbox = bbox[:, 3] - bbox[:, 1] + 1.0
    wpreds = preds[:, 2] - preds[:, 0] + 1.0
    hpreds = preds[:, 3] - preds[:, 1] + 1.0
    v = torch.pow((torch.atan(wbbox / hbbox) - torch.atan(wpreds / hpreds)), 2) * (4 / (math.pi ** 2))
    # eps avoids 0/0 (NaN) when the boxes match exactly, i.e. iou == 1 and v == 0
    alpha = v / (1 - iou + v + eps)
    ciou = diou - alpha * v
    ciou = torch.clamp(ciou, min=-1.0, max=1.0)

    ciou_loss = 1 - ciou
    if reduction == 'mean':
        loss = torch.mean(ciou_loss)
    elif reduction == 'sum':
        loss = torch.sum(ciou_loss)
    else:
        raise NotImplementedError
    print("last_loss:\n", loss)
    return loss

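6.How the IoU losses work

The explanation of these IoU variants is quoted from the notes of Jiang Dabai (江大白) on Zhihu.

The loss function of an object detection task generally consists of two parts: a classification loss and a bounding box regression loss.

Bounding box regression losses have developed over recent years as follows: Smooth L1 Loss -> IoU Loss (2016) -> GIoU Loss (2019) -> DIoU Loss (2020) -> CIoU Loss (2020).

We start from the most commonly used one, IOU_Loss, and compare and break them down one by one.

a.IOU_Loss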

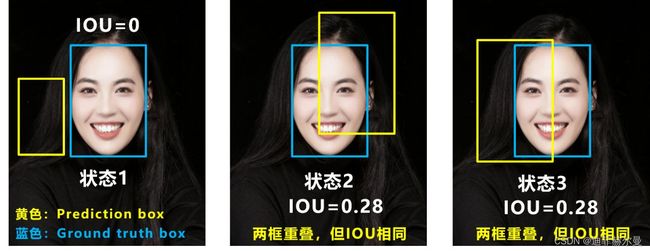

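As you can see, the IoU loss is very simple: it is essentially intersection over union. It does, however, have two problems.

Problem 1 (state 1): when the predicted box and the target box do not intersect, IoU = 0, which says nothing about how far apart the two boxes are. The loss then provides no usable gradient, so IOU_Loss cannot optimize the case where the two boxes do not overlap.

Problem 2 (states 2 and 3): when two predicted boxes have the same size and the same IoU, IOU_Loss cannot distinguish the different ways in which they intersect the target.

GIOU_Loss was therefore proposed in 2019 as an improvement.

b.GIOU_Loss

As the GIOU_Loss figure on the right shows, it adds a measure of the scale of the intersection, based on the smallest box that encloses both boxes, which eases the awkwardness of plain IOU_Loss.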
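The first IoU problem and the GIoU remedy can be checked numerically. The sketch below is a plain-Python illustration with a hypothetical helper `iou_and_giou` and made-up boxes: two predictions that both miss the target get the same IoU of 0, while GIoU still ranks the nearer one higher.

```python
def iou_and_giou(a, b):
    """Return (IoU, GIoU) for two boxes given as (x1, y1, x2, y2).

    Hypothetical scalar helper for illustration only.
    """
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    iou = inter / union
    # Area of the smallest box that encloses both a and b
    enclose = (max(a[2], b[2]) - min(a[0], b[0])) * (max(a[3], b[3]) - min(a[1], b[1]))
    return iou, iou - (enclose - union) / enclose


target = (0.0, 0.0, 2.0, 2.0)
near = (3.0, 0.0, 5.0, 2.0)    # gap of 1 to the right of the target
far = (8.0, 0.0, 10.0, 2.0)    # gap of 6 to the right of the target

iou_near, giou_near = iou_and_giou(near, target)
iou_far, giou_far = iou_and_giou(far, target)
print("IoU :", iou_near, iou_far)
print("GIoU:", giou_near, giou_far)
```

Because GIoU keeps changing as the gap shrinks, a loss of the form 1 - GIoU still gives the optimizer a direction to move in when the boxes do not overlap.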

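But why only "eases"?

Because one shortcoming remains:

Problem: states 1, 2 and 3 all show a predicted box lying inside the target box, with the predicted box the same size in each. The difference set between the predicted box and the target box is then identical, so the GIoU values of the three states are identical too. GIoU degenerates into IoU here and cannot distinguish the relative positions.

To address this, DIOU_Loss was proposed at AAAI 2020.

c.DIOU_Loss

A good bounding box regression loss should take three important geometric factors into account: overlap area, center point distance and aspect ratio.

To address the problems of IoU and GIoU, the authors considered two questions:

First: how can the normalized distance between the predicted box and the target box be minimized?

Second: how can the regression be made more accurate when the predicted box and the target box overlap?

For the first question, they proposed DIOU_Loss (Distance_IOU_Loss).

DIOU_Loss takes both overlap area and center point distance into account. When the target box encloses the predicted box, it directly measures the distance between the two boxes, so DIOU_Loss converges faster.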
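The GIoU shortcoming described above, and the way the center-distance term resolves it, can be reproduced with a few numbers. The sketch below is a plain-Python illustration; the helper `iou_giou_diou` and the three sample predictions are assumptions. Each prediction is a 2 x 2 box inside a 10 x 10 target, at a different position.

```python
def iou_giou_diou(pred, gt):
    """Return (IoU, GIoU, DIoU) for two boxes given as (x1, y1, x2, y2).

    Hypothetical scalar helper for illustration only.
    """
    inter_w = max(0.0, min(pred[2], gt[2]) - max(pred[0], gt[0]))
    inter_h = max(0.0, min(pred[3], gt[3]) - max(pred[1], gt[1]))
    inter = inter_w * inter_h
    union = ((pred[2] - pred[0]) * (pred[3] - pred[1]) +
             (gt[2] - gt[0]) * (gt[3] - gt[1]) - inter)
    iou = inter / union

    # Smallest enclosing box: width, height
    cw = max(pred[2], gt[2]) - min(pred[0], gt[0])
    ch = max(pred[3], gt[3]) - min(pred[1], gt[1])
    giou = iou - (cw * ch - union) / (cw * ch)

    # Squared center distance over squared enclosing diagonal
    rho2 = ((pred[0] + pred[2] - gt[0] - gt[2]) ** 2 +
            (pred[1] + pred[3] - gt[1] - gt[3]) ** 2) / 4
    diou = iou - rho2 / (cw ** 2 + ch ** 2)
    return iou, giou, diou


target = (0.0, 0.0, 10.0, 10.0)
preds = {
    "center": (4.0, 4.0, 6.0, 6.0),
    "edge": (8.0, 4.0, 10.0, 6.0),
    "corner": (0.0, 0.0, 2.0, 2.0),
}
results = {name: iou_giou_diou(box, target) for name, box in preds.items()}
for name, (iou, giou, diou) in results.items():
    print(f"{name:6s} IoU={iou:.2f} GIoU={giou:.2f} DIoU={diou:.2f}")
```

All three predictions share the same IoU and GIoU, while DIoU is highest for the centered box and lowest for the one in the corner.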

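However, as noted above in the description of a good regression loss, it does not consider the aspect ratio.

Take the three cases above: the target box encloses the predicted box, so DIOU_Loss should be able to help.

But the center point of the predicted box is in the same position in all three cases, so by the DIOU_Loss formula the three values are identical.

To address this, CIOU_Loss was proposed. It has to be said that science keeps advancing by solving problems!

d.CIOU_Loss

CIOU_Loss keeps the same terms as DIOU_Loss and adds one more factor on top, which takes the aspect ratios of both the predicted box and the target box into account.

Here v is a parameter that measures the consistency of the aspect ratios; it can be defined as:

$$v = \frac{4}{\pi^2}\left(\arctan\frac{w^{gt}}{h^{gt}} - \arctan\frac{w}{h}\right)^2$$

where w and h are the width and height of the predicted box, and w^{gt} and h^{gt} are those of the target box.

In this way CIOU_Loss covers all three important geometric factors that a bounding box regression loss should consider: overlap area, center point distance and aspect ratio.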
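The DIoU tie and the effect of the aspect-ratio term can also be checked with numbers. The sketch below is a plain-Python illustration; the helper `diou_and_ciou`, the small `eps` guard and the sample boxes are assumptions. Both predictions have the same area and the same center as the target and differ only in shape.

```python
import math


def diou_and_ciou(pred, gt, eps=1e-7):
    """Return (DIoU, CIoU) for two boxes given as (x1, y1, x2, y2).

    Hypothetical scalar helper for illustration only.
    """
    pw, ph = pred[2] - pred[0], pred[3] - pred[1]
    gw, gh = gt[2] - gt[0], gt[3] - gt[1]

    inter_w = max(0.0, min(pred[2], gt[2]) - max(pred[0], gt[0]))
    inter_h = max(0.0, min(pred[3], gt[3]) - max(pred[1], gt[1]))
    inter = inter_w * inter_h
    iou = inter / (pw * ph + gw * gh - inter)

    # Squared center distance over squared diagonal of the enclosing box
    cw = max(pred[2], gt[2]) - min(pred[0], gt[0])
    ch = max(pred[3], gt[3]) - min(pred[1], gt[1])
    rho2 = ((pred[0] + pred[2] - gt[0] - gt[2]) ** 2 +
            (pred[1] + pred[3] - gt[1] - gt[3]) ** 2) / 4
    diou = iou - rho2 / (cw ** 2 + ch ** 2)

    # Aspect-ratio consistency term v and its trade-off weight alpha
    v = (4 / math.pi ** 2) * (math.atan(gw / gh) - math.atan(pw / ph)) ** 2
    alpha = v / (1 - iou + v + eps)  # eps avoids 0/0 for identical boxes
    return diou, diou - alpha * v


target = (0.0, 0.0, 8.0, 8.0)
square = (2.0, 2.0, 6.0, 6.0)   # 4 x 4, same aspect ratio as the target
wide = (0.0, 3.0, 8.0, 5.0)     # 8 x 2, same area and center, different shape

diou_square, ciou_square = diou_and_ciou(square, target)
diou_wide, ciou_wide = diou_and_ciou(wide, target)
print(f"square: DIoU={diou_square:.4f} CIoU={ciou_square:.4f}")
print(f"wide  : DIoU={diou_wide:.4f} CIoU={ciou_wide:.4f}")
```

DIoU cannot separate the two predictions, while CIoU scores the square box higher because its aspect ratio matches the target.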

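Now a combined look at how the loss functions differ:

IOU_Loss: mainly considers the overlap area of the predicted box and the target box.

GIOU_Loss: builds on IoU and solves the problem of bounding boxes that do not overlap.

DIOU_Loss: builds on IoU and GIoU and adds the distance between the box center points.

CIOU_Loss: builds on DIoU and adds the aspect-ratio (width-to-height) information of the boxes.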
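For reference, the four losses compared above can be written side by side in their standard forms. The notation is an assumption introduced here: A and B are the predicted and target boxes, C is the smallest box enclosing both, ρ is the distance between the two box centers b and b^{gt}, c is the diagonal length of C, and v is the aspect-ratio consistency term of CIOU_Loss.

```latex
\begin{aligned}
\mathrm{IoU} &= \frac{|A \cap B|}{|A \cup B|}, &
L_{\mathrm{IoU}} &= 1 - \mathrm{IoU} \\
L_{\mathrm{GIoU}} &= 1 - \mathrm{IoU} + \frac{|C \setminus (A \cup B)|}{|C|} \\
L_{\mathrm{DIoU}} &= 1 - \mathrm{IoU} + \frac{\rho^2(b, b^{gt})}{c^2} \\
L_{\mathrm{CIoU}} &= 1 - \mathrm{IoU} + \frac{\rho^2(b, b^{gt})}{c^2} + \alpha v, &
\alpha &= \frac{v}{(1 - \mathrm{IoU}) + v}
\end{aligned}
```

Each line adds exactly one penalty term to the one before it, which matches the progression in the list above. Note that the Iou_loss function in section 3 uses the logarithmic form -ln(IoU) rather than 1 - IoU.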
