Combining OpenCV+Mediapipe Hand Gesture Capture with the Unity Engine

- 前言

- Demo演示

- 认识Mediapipe

- 项目环境

- 手势动作捕捉部分

-

- 实时动作捕捉

- 核心代码

-

- 完整代码

-

- Hands.py

- py代码效果

- Unity 部分

-

- 建模

- Unity代码

-

- UDP.cs

-

- UDP.cs接收效果图

- Line.cs

- Hands.cs

-

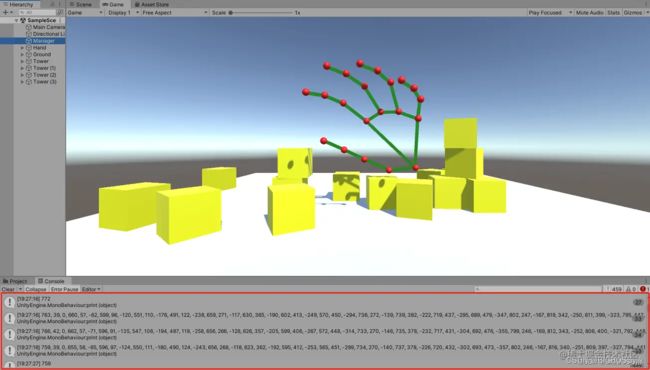

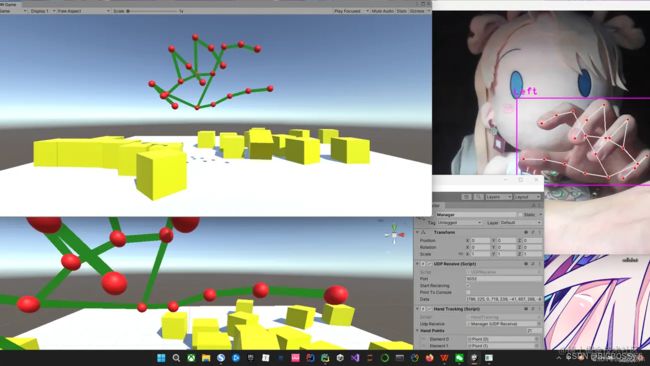

- 最终实现效果

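Preface

This article shows how to use Python with OpenCV image capture and the powerful Mediapipe library to detect and recognize hand gestures, then sync the recognition results to Unity in real time so that a hand model in Unity follows the captured hand.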

Demo

Demo: https://hackathon2022.juejin.cn/#/works/detail?unique=WJoYomLPg0JOYs8GazDVrw

The techniques used in this article will be tidied up and open-sourced; follow these for updates:

GitHub: https://github.com/BIGBOSS-dedsec

Project download: https://github.com/BIGBOSS-dedsec/OpenCV-Unity-To-Build-3DHands

CSDN: https://blog.csdn.net/weixin_50679163?type=edu

The project in this article was also entered in the Juejin (稀土掘金) 2022 Programming Challenge, game track.

Entry page: https://hackathon2022.juejin.cn/#/works/detail?unique=WJoYomLPg0JOYs8GazDVrw

认识Mediapipe

项目的实现,核心是强大的Mediapipe ,它是google的一个开源项目:

| 功能 | 详细 |

|---|---|

| 人脸检测 FaceMesh | 从图像/视频中重建出人脸的3D Mesh |

| 人像分离 | 从图像/视频中把人分离出来 |

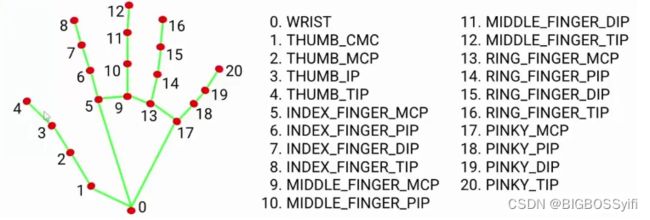

| 手势跟踪 | 21个关键点的3D坐标 |

| 人体3D识别 | 33个关键点的3D坐标 |

| 物体颜色识别 | 可以把头发检测出来,并图上颜色 |

Mediapipe Dev

以上是Mediapipe的几个常用功能 ,这几个功能我们会在后续一一讲解实现

Installing Mediapipe for Python

    pip install mediapipe==0.8.9.1

It can also be installed with setup.py from the source repository:

https://github.com/google/mediapipe

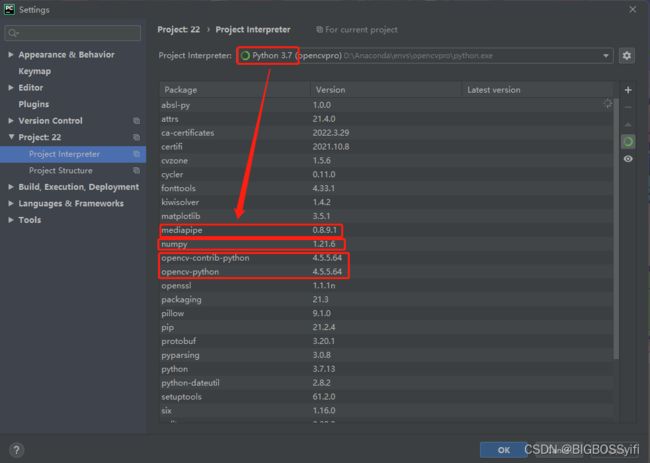

Project environment

Python 3.7

Mediapipe 0.8.9.1

Numpy 1.21.6

OpenCV-Python 4.5.5.64

OpenCV-contrib-Python 4.5.5.64

Also tested to work with Python 3.8-3.9.

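Hand gesture capture

Real-time motion capture

Real-time motion capture in Unity relies on local communication over a UDP socket: the Python script sends the landmark data and Unity receives it.

For the data-file approach, see the explanation and usage in the companion article "Combining OpenCV+Mediapipe Human Motion Capture with the Unity Engine" (OpenCV+Mediapipe人物动作捕捉与Unity引擎的结合).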

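Core code

Camera capture:

    import cv2

    # Camera index: 0 = built-in (laptop) camera, 1 = first USB camera, 2 = second USB camera
    cap = cv2.VideoCapture(0)

    while True:
        success, img = cap.read()
        if not success:  # stop if no frame could be read
            break
        imgRGB = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)  # Mediapipe expects RGB; OpenCV captures BGR
        cv2.imshow("HandsImage", img)  # show the frame in a window
        cv2.waitKey(1)  # wait 1 ms so the window can refresh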

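Frame rate calculation:

    import time
    import cv2

    cap = cv2.VideoCapture(0)
    pTime = 0  # timestamp of the previous frame

    while True:
        success, img = cap.read()
        if not success:
            break
        cTime = time.time()  # timestamp of the current frame
        fps = 1 / (cTime - pTime)
        pTime = cTime
        # Draw the FPS at (10, 70): font scale 3, magenta in BGR, thickness 3
        cv2.putText(img, str(int(fps)), (10, 70), cv2.FONT_HERSHEY_PLAIN, 3,
                    (255, 0, 255), 3)
        cv2.imshow("HandsImage", img)
        cv2.waitKey(1)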
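The frame rate formula above is simply the reciprocal of the time between two frames. As a minimal sketch, the helper below wraps that calculation and guards two edge cases: the first frame (no previous timestamp) and two frames that share a timestamp, which would otherwise divide by zero. `FpsCounter` and `tick` are illustrative names, not part of the original project.

```python
import time


class FpsCounter:
    """Computes instantaneous FPS from the time between successive frames."""

    def __init__(self):
        self.prev = None  # timestamp of the previous frame, None before the first

    def tick(self, now=None):
        """Register a frame and return the FPS since the previous one (0.0 if unknown)."""
        now = time.time() if now is None else now
        fps = 0.0
        if self.prev is not None and now > self.prev:
            fps = 1.0 / (now - self.prev)
        self.prev = now
        return fps


if __name__ == "__main__":
    counter = FpsCounter()
    counter.tick(10.0)         # first frame: no interval yet
    print(counter.tick(10.5))  # 0.5 s between frames
```

Inside the capture loop, `str(int(counter.tick()))` could replace the manual `pTime`/`cTime` bookkeeping when drawing the overlay.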

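Socket communication:

Define the localhost IP address and port:

    import socket

    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)  # UDP socket
    serverAddressPort = ("127.0.0.1", 5052)  # IP address and port that Unity listens on

    # Send the data as text (data is the landmark list built in the capture loop)
    sock.sendto(str.encode(str(data)), serverAddressPort)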
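To check the UDP path without starting Unity, the sketch below sends a list in the same text format and reads it back on a temporary local socket. It is only an illustration: `send_landmarks` and `loopback_roundtrip` are hypothetical helper names, and the receiver binds to an OS-chosen port rather than 5052 so it cannot collide with a running Unity instance.

```python
import ast
import socket


def send_landmarks(sock, data, address):
    """Send a flat list of numbers as the UTF-8 text of its Python repr."""
    sock.sendto(str(data).encode("utf-8"), address)


def loopback_roundtrip(data):
    """Send `data` to a temporary local receiver and return what arrives."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    receiver.bind(("127.0.0.1", 0))  # port 0: let the OS pick a free port
    receiver.settimeout(2.0)         # fail instead of blocking forever
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        send_landmarks(sender, data, receiver.getsockname())
        payload, _ = receiver.recvfrom(65535)
    finally:
        sender.close()
        receiver.close()
    # The payload is text such as "[640, 360, 0]"; parse it back into a list
    return ast.literal_eval(payload.decode("utf-8"))


if __name__ == "__main__":
    print(loopback_roundtrip([640, 360, 0]))
```

UDP is connectionless, so the sender does not fail when nothing is listening; a round trip like this is one way to confirm that packets actually arrive.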

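Hand gesture capture:

    if hands:
        # Hand 1 (the first detected hand)
        hand = hands[0]
        lmList = hand["lmList"]  # list of 21 landmarks
        for lm in lmList:
            # Flip y (h is the frame height): OpenCV's y axis points down, Unity's points up
            data.extend([lm[0], h - lm[1], lm[2]])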
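The loop above turns the landmark list into one flat list of `x, y, z` values with the y axis flipped. A minimal standalone sketch of that step, using a hypothetical function name `flatten_landmarks` and made-up sample coordinates, makes the transformation easy to test in isolation:

```python
def flatten_landmarks(lm_list, frame_height):
    """Flatten [[x, y, z], ...] into [x0, y0', z0, x1, ...] with y measured from the bottom."""
    data = []
    for x, y, z in lm_list:
        # Image coordinates grow downward; subtracting from the height flips the axis
        data.extend([x, frame_height - y, z])
    return data


if __name__ == "__main__":
    sample = [[100, 200, 0], [300, 700, -20]]  # two made-up landmarks
    print(flatten_landmarks(sample, 720))
```

For a full hand, the result has 21 × 3 = 63 values, which is what the Unity side expects to parse.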

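Full code

Hands.py

    from cvzone.HandTrackingModule import HandDetector  # requires: pip install cvzone
    import cv2
    import socket

    # Camera setup
    cap = cv2.VideoCapture(0)
    cap.set(3, 1280)  # frame width (cv2.CAP_PROP_FRAME_WIDTH)
    cap.set(4, 720)   # frame height (cv2.CAP_PROP_FRAME_HEIGHT)
    success, img = cap.read()
    h, w, _ = img.shape

    # Hand detector
    detector = HandDetector(detectionCon=0.8, maxHands=2)

    # UDP socket used to send the landmarks to Unity
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    serverAddressPort = ("127.0.0.1", 5052)

    while True:
        success, img = cap.read()
        hands, img = detector.findHands(img)
        data = []

        if hands:
            # Hand 1 (the first detected hand)
            hand = hands[0]
            lmList = hand["lmList"]  # list of 21 landmarks
            for lm in lmList:
                # Flip y so it points up, as in Unity
                data.extend([lm[0], h - lm[1], lm[2]])
            # Send only when a hand was detected
            sock.sendto(str.encode(str(data)), serverAddressPort)

        cv2.imshow("Image", img)
        cv2.waitKey(1)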

Python output

Unity 部分

建模

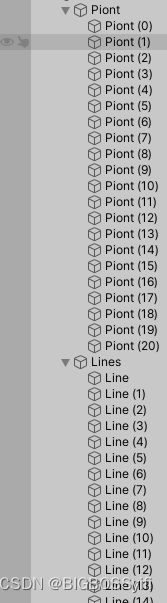

在Unity中,我们需要搭建一个人物的模型,这里需要一个21个Sphere作为手势的特征点和21个Cube作为中间的支架

具体文件目录如下:

Line的编号对应手势模型特征点
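To make the relationship between connectors and landmarks concrete, the sketch below lists the 21 landmark pairs of MediaPipe's standard hand skeleton (0 is the wrist; 4, 8, 12, 16 and 20 are the fingertips), which matches the count of 21 connectors. It assumes the project's Lines follow that standard topology; the article does not spell out its own mapping in text, and `HAND_BONES` and `line_endpoints` are illustrative names.

```python
# Landmark index pairs joined by each connector, following MediaPipe's hand topology
HAND_BONES = [
    (0, 1), (1, 2), (2, 3), (3, 4),          # thumb
    (0, 5), (5, 6), (6, 7), (7, 8),          # index finger
    (5, 9), (9, 10), (10, 11), (11, 12),     # middle finger
    (9, 13), (13, 14), (14, 15), (15, 16),   # ring finger
    (13, 17), (0, 17),                       # palm edge
    (17, 18), (18, 19), (19, 20),            # pinky
]


def line_endpoints(line_index):
    """Return the (origin, destination) landmark indices for a connector."""
    return HAND_BONES[line_index]


if __name__ == "__main__":
    for i, (a, b) in enumerate(HAND_BONES):
        print(f"Line {i}: landmark {a} -> landmark {b}")
```

In the Unity scene, each pair would correspond to the two Spheres that one connector is attached to.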

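Unity code

UDP.cs

This script receives the data sent over the socket. Unity generally expects a MonoBehaviour's file name to match its class name, so the script file may need to be named after the class (UDPReceive here; likewise LineCode and HandTracking for the scripts that follow).

    using UnityEngine;
    using System;
    using System.Text;
    using System.Net;
    using System.Net.Sockets;
    using System.Threading;

    public class UDPReceive : MonoBehaviour
    {
        Thread receiveThread;
        UdpClient client;
        public int port = 5052;               // must match the port used in Hands.py
        public bool startRecieving = true;    // set to false to stop the receive loop
        public bool printToConsole = false;   // log every packet when enabled
        public string data;                   // latest packet received, as text

        public void Start()
        {
            // Receive on a background thread so the main thread is never blocked
            receiveThread = new Thread(
                new ThreadStart(ReceiveData));
            receiveThread.IsBackground = true;
            receiveThread.Start();
        }

        private void ReceiveData()
        {
            client = new UdpClient(port);
            while (startRecieving)
            {
                try
                {
                    // Accept packets from any address and port
                    IPEndPoint anyIP = new IPEndPoint(IPAddress.Any, 0);
                    byte[] dataByte = client.Receive(ref anyIP);  // blocks until a packet arrives
                    data = Encoding.UTF8.GetString(dataByte);

                    if (printToConsole) { print(data); }
                }
                catch (Exception err)
                {
                    print(err.ToString());
                }
            }
        }
    }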

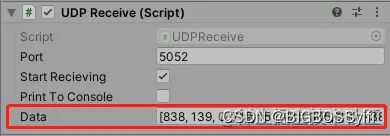

UDP.cs receive result

Line.cs

This script is attached to each Line. It keeps the Line connected to its two landmarks.

    using System.Collections;
    using System.Collections.Generic;
    using UnityEngine;

    public class LineCode : MonoBehaviour
    {
        LineRenderer lineRenderer;

        public Transform origin;       // landmark at the start of the line
        public Transform destination;  // landmark at the end of the line

        void Start()
        {
            lineRenderer = GetComponent<LineRenderer>();
            lineRenderer.startWidth = 0.1f;
            lineRenderer.endWidth = 0.1f;
        }

        // Connect the two points every frame
        void Update()
        {
            lineRenderer.SetPosition(0, origin.position);
            lineRenderer.SetPosition(1, destination.position);
        }
    }

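Hands.cs

This script reads the hand data received by UDP.cs, then loops over it and assigns each coordinate triple to its Sphere, so the landmarks move with the hand captured by the camera.

    using System.Collections;
    using System.Collections.Generic;
    using UnityEngine;

    public class HandTracking : MonoBehaviour
    {
        public UDPReceive udpReceive;
        public GameObject[] handPoints;  // the 21 Spheres, in landmark order

        void Start()
        {
        }

        void Update()
        {
            string data = udpReceive.data;
            // Nothing received yet: skip this frame
            if (data == null || data.Length < 2) return;

            // Strip the leading '[' and trailing ']' of the Python list
            data = data.Remove(0, 1);
            data = data.Remove(data.Length - 1, 1);
            print(data);

            string[] points = data.Split(',');
            // Skip incomplete packets (21 landmarks x 3 coordinates expected)
            if (points.Length < 21 * 3) return;
            print(points[0]);

            for (int i = 0; i < 21; i++)
            {
                // Scale pixel values by 1/100; x is mirrored via (7 - x)
                float x = 7 - float.Parse(points[i * 3]) / 100;
                float y = float.Parse(points[i * 3 + 1]) / 100;
                float z = float.Parse(points[i * 3 + 2]) / 100;

                handPoints[i].transform.localPosition = new Vector3(x, y, z);
            }
        }
    }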
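The parsing done in Hands.cs can be mirrored in Python to inspect a payload without running Unity. The sketch below is only an illustration: `parse_hand_payload` is a hypothetical name, the constants 7 and 100 are copied from Hands.cs, and it assumes the payload is the bracketed, comma-separated text that Hands.py sends.

```python
def parse_hand_payload(payload, num_points=21):
    """Convert text like "[x0, y0, z0, x1, ...]" into a list of (x, y, z) positions."""
    body = payload.strip()[1:-1]  # drop the surrounding '[' and ']'
    if not body:
        return []
    values = [float(v) for v in body.split(",")]
    if len(values) < num_points * 3:
        return []  # incomplete packet
    positions = []
    for i in range(num_points):
        x = 7 - values[i * 3] / 100  # mirrored and scaled, as in Hands.cs
        y = values[i * 3 + 1] / 100
        z = values[i * 3 + 2] / 100
        positions.append((x, y, z))
    return positions


if __name__ == "__main__":
    payload = str([100, 200, 50] * 21)  # made-up payload with 21 identical landmarks
    print(parse_hand_payload(payload)[0])
```

With the 1280×720 capture used in Hands.py, dividing by 100 keeps x within roughly 0 to 12.8 and y within 0 to 7.2 scene units before the mirror offset is applied.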

Final result

Good luck, have fun, and happy coding!!!