K8S Study Notes 0522

Resource limits in K8S

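If a container is started without its own resource limits (memory, CPU) but the namespace defines a LimitRange, the container inherits the default limits from that LimitRange.

If the namespace has no LimitRange either, the container can consume as much of the host's available resources as it likes, until nothing is left and the host's OOM killer is triggered.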

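CPU is limited in units of cores. The value can be a whole number of cores, a fractional number of cores, or millicores (m/milli):

- 2 = 2 cores = 200%

- 0.5 = 500m = 50%

- 1.2 = 1200m = 120%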
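The equivalences between cores and millicores can be checked with a few lines of Python. This is only an illustrative sketch; `cpu_to_millicores` is a hypothetical helper name, not part of any Kubernetes client library.

```python
from decimal import Decimal


def cpu_to_millicores(quantity: str) -> int:
    """Convert a CPU quantity such as "2", "0.5" or "500m" to millicores."""
    quantity = quantity.strip()
    if quantity.endswith("m"):
        # Already expressed in millicores, e.g. "500m".
        return int(quantity[:-1])
    # Whole or fractional cores: 1 core = 1000 millicores.
    return int(Decimal(quantity) * 1000)


for q in ("2", "0.5", "1.2", "500m"):
    print(q, "->", cpu_to_millicores(q), "millicores")
```

Decimal is used instead of float so that fractional values such as 1.2 convert without rounding surprises.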

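Memory is limited in units of bytes. Supported suffixes are E, P, T, G, M, k (powers of 1000) and Ei, Pi, Ti, Gi, Mi, Ki (powers of 1024). For example, 1536Mi = 1.5Gi.

requests: the resources a node must at least have available for the kubernetes scheduler to place the pod on it.

limits: the upper bound on the resources the pod may use once it is running.

Note: requests should not be set much lower than limits. For example, if the memory request is 100Mi, the memory limit is 512Mi, and the node has only 300Mi of memory, the pod can still be scheduled there, but as its usage grows past 300Mi it will be OOM-killed.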
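The memory suffixes can be compared by converting them to bytes. The sketch below is an illustration under simplifying assumptions (only plain numbers with the suffixes listed above are handled); `memory_to_bytes` is a hypothetical helper name.

```python
import re
from decimal import Decimal

# Decimal suffixes are powers of 1000, binary suffixes are powers of 1024.
SUFFIXES = {
    "": 1,
    "k": 1000, "M": 1000**2, "G": 1000**3, "T": 1000**4, "P": 1000**5, "E": 1000**6,
    "Ki": 1024, "Mi": 1024**2, "Gi": 1024**3, "Ti": 1024**4, "Pi": 1024**5, "Ei": 1024**6,
}


def memory_to_bytes(quantity: str) -> int:
    """Convert a memory quantity such as "1536Mi" or "1.5Gi" to bytes."""
    match = re.fullmatch(r"(\d+(?:\.\d+)?)([A-Za-z]*)", quantity.strip())
    if match is None or match.group(2) not in SUFFIXES:
        raise ValueError(f"unsupported quantity: {quantity!r}")
    return int(Decimal(match.group(1)) * SUFFIXES[match.group(2)])


print(memory_to_bytes("1536Mi") == memory_to_bytes("1.5Gi"))
print(memory_to_bytes("200Mi"), memory_to_bytes("200M"))
```

The second line of output shows why Mi and M should not be mixed up: 200Mi is about 5% larger than 200M.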

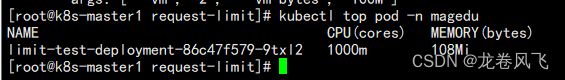

Example: memory/CPU limits

Creating the pod

apiVersion: apps/v1
kind: Deployment
metadata:
  name: limit-test-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: limit-test-pod
  template:
    metadata:
      labels:
        app: limit-test-pod
    spec:
      containers:
      - name: limit-test-container
        image: k8s-harbor.com/public/docker-stress-ng:v1 # stress-test image
        resources:
          limits:
            memory: "200Mi"
          requests:
            memory: "100Mi"
        args: ["--vm", "2", "--vm-bytes", "100M"] # 2 vm workers, each allocating 100M of memory

Adding a CPU limit

apiVersion: apps/v1
kind: Deployment
metadata:
  name: limit-test-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: limit-test-pod
  template:
    metadata:
      labels:
        app: limit-test-pod
    spec:
      containers:
      - name: limit-test-container
        image: k8s-harbor.com/public/docker-stress-ng:v1
        resources:
          limits:
            cpu: "1"
            memory: "200Mi"
          requests:
            cpu: "0.5"
            memory: "100Mi"
        args: ["--vm", "2", "--vm-bytes", "100M"]

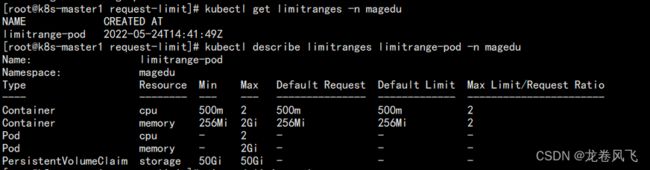

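Pod resource limits

A LimitRange constrains the resources used by an individual Pod or container: https://kubernetes.io/zh/docs/concepts/policy/limit-range/

1. Enforce minimum and maximum compute resources for each Pod or container in a namespace.

2. Enforce the ratio between request and limit for the compute resources of each Pod or container in a namespace.

3. Enforce minimum and maximum storage for each PersistentVolumeClaim in a namespace.

4. Set default compute resource requests and limits for containers in a namespace and inject them into containers automatically at runtime.

[root@k8s-master1 request-limit]# cat pod-limit.yaml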

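apiVersion: v1
kind: LimitRange
metadata:
  name: limitrange-pod
  namespace: magedu
spec:
  limits:
  - type: Container # type of object being limited
    max:
      cpu: "2" # maximum CPU for a single container
      memory: "2Gi" # maximum memory for a single container
    min:
      cpu: "500m" # minimum CPU for a single container
      memory: "256Mi" # minimum memory for a single container
    default:
      cpu: "500m" # default CPU limit for a single container
      memory: "256Mi" # default memory limit for a single container
    defaultRequest:
      cpu: "500m" # default CPU request for a single container
      memory: "256Mi" # default memory request for a single container
    maxLimitRequestRatio:
      cpu: "2" # the CPU limit/request ratio may be at most 2
      memory: "2" # the memory limit/request ratio may be at most 2
  - type: Pod
    max:
      cpu: "2" # maximum CPU for a single Pod
      memory: "2Gi" # maximum memory for a single Pod
  - type: PersistentVolumeClaim
    max:
      storage: 50Gi # maximum storage for a PVC
    min:
      storage: 50Gi # minimum storage for a PVC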

[root@k8s-master1 request-limit]# kubectl get limitranges -n magedu
NAME             CREATED AT
limitrange-pod   2022-05-24T14:41:49Z

[root@k8s-master1 request-limit]# kubectl describe limitranges limitrange-pod -n magedu
Name:       limitrange-pod
Namespace:  magedu
Type                   Resource  Min    Max   Default Request  Default Limit  Max Limit/Request Ratio
----                   --------  ---    ---   ---------------  -------------  -----------------------
Container              cpu       500m   2     500m             500m           2
Container              memory    256Mi  2Gi   256Mi            256Mi          2
Pod                    cpu       -      2     -                -              -
Pod                    memory    -      2Gi   -                -              -
PersistentVolumeClaim  storage   50Gi   50Gi  -                -              -
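To make the Container row of this table concrete, the following sketch checks one container's CPU request and limit against the min, max and ratio shown above. It is a simplified illustration of the kind of validation a LimitRange performs, not the admission controller's actual code; `check_container_cpu` and `CPU_RANGE` are hypothetical names, and all values are in millicores.

```python
# Container-level CPU bounds copied from limitrange-pod, in millicores.
CPU_RANGE = {"min": 500, "max": 2000, "max_ratio": 2}


def check_container_cpu(request_m: int, limit_m: int, rng=CPU_RANGE) -> list:
    """Return the list of LimitRange rules this request/limit pair would break."""
    problems = []
    if request_m < rng["min"]:
        problems.append("request below min")
    if limit_m > rng["max"]:
        problems.append("limit above max")
    if limit_m / request_m > rng["max_ratio"]:
        problems.append("limit/request ratio above max")
    return problems


print(check_container_cpu(500, 1000))   # within all bounds
print(check_container_cpu(500, 1500))   # ratio 3 exceeds 2
print(check_container_cpu(250, 500))    # request under the 500m minimum
```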

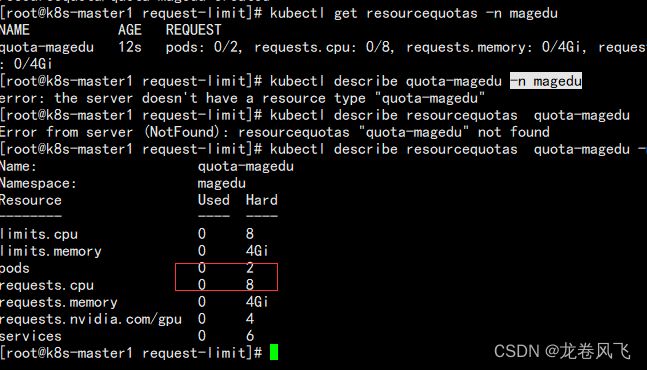

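Namespace resource limits

kubernetes can limit CPU and memory for an entire namespace with a ResourceQuota:

https://kubernetes.io/zh/docs/concepts/policy/resource-quotas/

Limit the total number of objects of a given type (such as Pod or Service) that can be created.

Limit the total compute resources (CPU, memory) and storage resources (PersistentVolumeClaims) that objects of a given type can consume.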

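apiVersion: v1
kind: ResourceQuota
metadata:
  name: quota-magedu
  namespace: magedu
spec:
  hard:
    requests.cpu: "8" # usually equal to the hosts' total CPU
    limits.cpu: "8" # usually equal to the hosts' total CPU
    requests.memory: 4Gi # usually equal to the hosts' total memory
    limits.memory: 4Gi # usually equal to the hosts' total memory
    requests.nvidia.com/gpu: 4
    pods: "20" # number of pods; pods that already exist are not affected
    services: "6" # number of services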

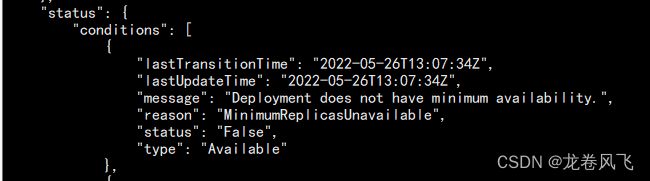

[root@k8s-master1 request-limit]# kubectl get deployments -n magedu

NAME READY UP-TO-DATE AVAILABLE AGE

magedu-nginx-deployment 2/5 2 2 2m17s

kubectl get deployments magedu-nginx-deployment -n magedu -o json

Affinity and anti-affinity in K8S

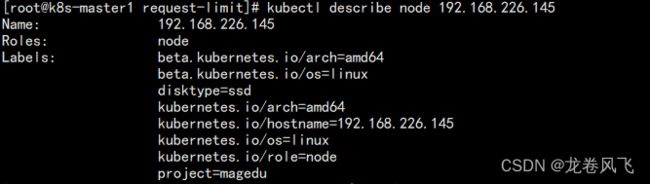

nodeSelector

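Overview

nodeSelector uses a label selector on node labels to schedule pods onto specific target nodes.

https://kubernetes.io/zh/docs/concepts/scheduling-eviction/assign-pod-node/

It can be used to steer scheduling by workload type, for example placing pods with heavy disk I/O on SSD nodes and memory-hungry pods on nodes with more memory.

It can also be used to separate the pods of different projects: label the nodes per project and schedule accordingly.

Viewing the default labels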

[root@k8s-master1 request-limit]# kubectl describe node 192.168.226.145

Name: 192.168.226.145

Roles: node

Labels: beta.kubernetes.io/arch=amd64

beta.kubernetes.io/os=linux

kubernetes.io/arch=amd64

kubernetes.io/hostname=192.168.226.145

kubernetes.io/os=linux

kubernetes.io/role=node

Adding labels to a node

[root@k8s-master1 request-limit]# kubectl label node 192.168.226.145 project=magedu

node/192.168.226.145 labeled

[root@k8s-master1 request-limit]# kubectl label node 192.168.226.145 disktype=ssd

node/192.168.226.145 labeled

Removing a label from a node

[root@k8s-master1 request-limit]# kubectl label node 192.168.226.145 disktype-

node/192.168.226.145 unlabeled

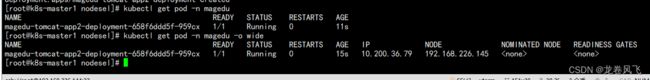

Scheduling pods by node label

[root@k8s-master1 nodesel]# cat case1-nodeSelector.yaml
kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
        env:
        - name: "password"
          value: "123456"
        - name: "age"
          value: "18"
        resources:
          limits:
            cpu: 1
            memory: "512Mi"
          requests:
            cpu: 500m
            memory: "512Mi"
      nodeSelector: # applies to the whole pod
        project: magedu # project label
        disktype: ssd # disk type label

Scheduling pods by node name

[root@k8s-master1 nodesel]# cat case2-nodename.yaml
kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      nodeName: 192.168.226.146
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
        env:
        - name: "password"
          value: "123456"
        - name: "age"
          value: "18"
        resources:
          limits:
            cpu: 1
            memory: "512Mi"
          requests:
            cpu: 500m
            memory: "512Mi"

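Node affinity and anti-affinity

Affinity was introduced after Kubernetes 1.2. Like nodeSelector, it lets users specify constraints on which nodes a Pod can be scheduled to. Two forms are currently supported:

- requiredDuringSchedulingIgnoredDuringExecution (no match means scheduling fails): the Pod's matching conditions must be satisfied; if they are not, the Pod is not scheduled.

- preferredDuringSchedulingIgnoredDuringExecution (no match, but scheduling still succeeds): the scheduler prefers nodes that satisfy the conditions; if none does, the Pod is scheduled onto a node that does not.

IgnoredDuringExecution: if a node's labels change while the Pod is running so that the affinity rule is no longer satisfied, the Pod keeps running on that node.

Affinity and anti-affinity also serve to control where pods are scheduled, but they are more powerful than nodeSelector:

1. Label selectors are not limited to and; they also support In, NotIn, Exists, DoesNotExist, Gt and Lt.

2. Both soft and hard matching are available. With soft matching, if the scheduler cannot find a matching node, the pod is still scheduled onto some other node.

3. Affinity rules can also be defined between pods, for example which pods may or may not be scheduled onto the same node.

In: the label's value is in the given list (on a match the pod is scheduled to that node: node affinity).

NotIn: the label's value is not in the given list (the pod is not scheduled to that node: anti-affinity).

Gt: the label's value is greater than the given value (written as a string, interpreted as an integer).

Lt: the label's value is less than the given value (written as a string, interpreted as an integer).

Exists: the specified label exists.

DoesNotExist: the specified label does not exist.

Hard affinity, case 1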

If nodeSelectorTerms contains several matchExpressions entries (several terms), a node only has to satisfy one of them to be eligible. The terms are ORed: as long as any one term under nodeSelectorTerms matches, the pod can be scheduled to that node.

affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
      - matchExpressions: # term 1; the values listed for a key are alternatives
        - key: disktype
          operator: In
          values:
          - ssd # one matching value is enough
          - xxx
      - matchExpressions: # term 2; terms are ORed, and within a term one matching value per key is enough
        - key: project
          operator: In
          values:
          - mmm # even if neither value of term 2 matches, the pod can still be scheduled via term 1
          - nnn

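Hard affinity, case 2

If a single matchExpressions list inside one nodeSelectorTerms entry specifies several keys, all of those expressions must be satisfied before the pod is scheduled to the node. The expressions are ANDed: when matchExpressions has several entries, every one of them has to match.

affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
      - matchExpressions: # hard affinity expression 1
        - key: disktype
          operator: In
          values:
          - ssd
          - xxx # for one key, a single matching value is enough
        - key: project # expressions 1 and 2 must both match, otherwise the pod is not scheduled
          operator: In
          values:
          - magedu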
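The OR/AND behaviour of the two hard-affinity cases can be reproduced with a small evaluator. This is a simplified sketch for illustration only (Gt and Lt are left out, and it is not the scheduler's code); `expr_matches` and `node_matches` are hypothetical names.

```python
def expr_matches(labels: dict, expr: dict) -> bool:
    """Evaluate one matchExpressions entry against a node's labels."""
    key, op = expr["key"], expr["operator"]
    if op == "In":
        return labels.get(key) in expr["values"]
    if op == "NotIn":
        return labels.get(key) not in expr["values"]
    if op == "Exists":
        return key in labels
    if op == "DoesNotExist":
        return key not in labels
    raise ValueError(f"operator not covered by this sketch: {op}")


def node_matches(labels: dict, node_selector_terms: list) -> bool:
    """Terms are ORed; the expressions inside one term are ANDed."""
    return any(
        all(expr_matches(labels, e) for e in term["matchExpressions"])
        for term in node_selector_terms
    )


case1 = [  # two terms: disktype OR project
    {"matchExpressions": [{"key": "disktype", "operator": "In", "values": ["ssd", "xxx"]}]},
    {"matchExpressions": [{"key": "project", "operator": "In", "values": ["mmm", "nnn"]}]},
]
case2 = [  # one term: disktype AND project
    {"matchExpressions": [
        {"key": "disktype", "operator": "In", "values": ["ssd", "xxx"]},
        {"key": "project", "operator": "In", "values": ["magedu"]},
    ]},
]

node = {"disktype": "ssd"}
print(node_matches(node, case1))  # term 1 alone is enough
print(node_matches(node, case2))  # project label is missing
```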

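Soft affinity, case 1

With soft affinity the pod is scheduled even if nothing matches.

affinity:
  nodeAffinity:
    preferredDuringSchedulingIgnoredDuringExecution:
    - weight: 80 # weight (1-100); a node matching this preference gets 80 added to its score
      preference:
        matchExpressions:
        - key: project
          operator: In
          values:
          - mageduxx
    - weight: 60 # weight; the weights of all matching preferences are summed per node
      preference:
        matchExpressions:
        - key: disktype
          operator: In
          values:
          - ssdxx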
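How the weights influence the choice of node can be sketched as follows: each node's score is the sum of the weights of the preferences it matches, and a node that matches nothing simply scores 0 but remains schedulable. This is an illustrative simplification (only the In operator, and only this one scoring input); `preference_score` is a hypothetical name, and the label values magedu/ssd are used here instead of the deliberately non-matching mageduxx/ssdxx.

```python
def preference_score(labels: dict, preferences: list) -> int:
    """Sum the weights of all preferences whose expressions match the node labels."""
    total = 0
    for pref in preferences:
        exprs = pref["preference"]["matchExpressions"]
        # Only the In operator is handled in this sketch.
        if all(labels.get(e["key"]) in e["values"] for e in exprs):
            total += pref["weight"]
    return total


preferences = [
    {"weight": 80, "preference": {"matchExpressions": [
        {"key": "project", "operator": "In", "values": ["magedu"]}]}},
    {"weight": 60, "preference": {"matchExpressions": [
        {"key": "disktype", "operator": "In", "values": ["ssd"]}]}},
]

nodes = {
    "node-a": {"project": "magedu", "disktype": "ssd"},
    "node-b": {"disktype": "ssd"},
    "node-c": {},
}
for name, labels in nodes.items():
    print(name, preference_score(labels, preferences))
```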

Case 3: combining soft and hard affinity

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution: # hard affinity
            nodeSelectorTerms:
            - matchExpressions: # hard expression 1
              - key: "kubernetes.io/role"
                operator: NotIn
                values:
                - "master" # hard requirement: kubernetes.io/role must not be master, so the pod is never scheduled onto a master node (node anti-affinity)
          preferredDuringSchedulingIgnoredDuringExecution: # soft affinity
          - weight: 80
            preference:
              matchExpressions:
              - key: project
                operator: In
                values:
                - magedu
          - weight: 60
            preference:
              matchExpressions:
              - key: disktype
                operator: In
                values:
                - ssd

Node anti-affinity

[root@k8s-master1 nodesel]# cat case3-3.1-nodeantiaffinity.yaml
kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions: # expression 1
              - key: disktype
                operator: NotIn # the target node must not carry the label disktype=ssd
                values:
                - ssd # never scheduled onto nodes labeled disktype=ssd; only onto nodes whose disktype has another value or is absent

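Pod-level affinity and anti-affinity

Pod affinity and anti-affinity constrain which nodes a newly created Pod can be scheduled to based on the labels of Pods already running on those nodes. Note that matching is done against the labels of the running pods, not against node labels.

The rules take the form: if node A already runs one or more Pods that satisfy the rule of new Pod B, then with affinity Pod B is scheduled onto node A, and with anti-affinity it is not.

A rule is a LabelSelector with an optional list of associated namespaces. Pod affinity and anti-affinity need a namespace list because Pods are namespaced, whereas nodes do not belong to any namespace (which is why node affinity needs none). A label selector that acts on Pod labels therefore has to state which namespaces it applies to.

Conceptually, a node sits in a topology domain, such as a single node in the k8s cluster, a rack, a cloud provider availability zone or a cloud provider region. topologyKey defines whether affinity or anti-affinity is applied at node granularity or, say, at availability-zone granularity, so that the K8S scheduler can identify and select the correct target topology domain.

Writing pod affinity rules

- The valid operators for Pod affinity and anti-affinity are In, NotIn, Exists and DoesNotExist.

- For Pod affinity, topologyKey must not be empty in either requiredDuringSchedulingIgnoredDuringExecution or preferredDuringSchedulingIgnoredDuringExecution (Empty topologyKey is not allowed.).

- For Pod anti-affinity, topologyKey likewise must not be empty in either requiredDuringSchedulingIgnoredDuringExecution or preferredDuringSchedulingIgnoredDuringExecution (Empty topologyKey is not allowed.).

- For requiredDuringSchedulingIgnoredDuringExecution Pod anti-affinity, the LimitPodHardAntiAffinityTopology admission controller was introduced to ensure that topologyKey can only be kubernetes.io/hostname. To use topologyKey with other custom topologies, modify or disable that admission controller. Apart from these cases, topologyKey can be any valid label key.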

Example: pod soft affinity

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: python-nginx-deployment-label
  name: python-nginx-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: python-nginx-selector
  template:
    metadata:
      labels:
        app: python-nginx-selector
        project: python
    spec:
      containers:
      - name: python-nginx-container
        image: nginx:1.20.2-alpine
        #command: ["/apps/tomcat/bin/run_tomcat.sh"]
        #imagePullPolicy: IfNotPresent
        imagePullPolicy: Always
        ports:
        - containerPort: 80
          protocol: TCP
          name: http
        - containerPort: 443
          protocol: TCP
          name: https
        env:
        - name: "password"
          value: "123456"
        - name: "age"
          value: "18"
#        resources:
#          limits:
#            cpu: 2
#            memory: 2Gi
#          requests:
#            cpu: 500m
#            memory: 1Gi
---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: python-nginx-service-label
  name: python-nginx-service
  namespace: magedu
spec:
  type: NodePort
  ports:
  - name: http
    port: 80
    protocol: TCP
    targetPort: 80
    nodePort: 30014
  - name: https
    port: 443
    protocol: TCP
    targetPort: 443
    nodePort: 30453
  selector:
    app: python-nginx-selector
    project: python # one or more selector labels; a target pod must carry all of them

[root@k8s-master1 nodesel]# cat case4-4.2-podaffinity-preferredDuring.yaml

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        podAffinity:
          #requiredDuringSchedulingIgnoredDuringExecution:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: project
                  operator: In
                  values:
                  - python
              topologyKey: kubernetes.io/hostname
              namespaces:
              - magedu

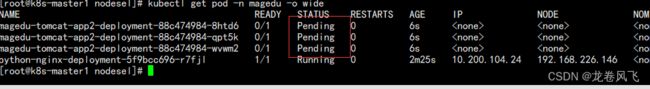

Example: pod hard affinity

[root@k8s-master1 nodesel]# cat case4-4.3-podaffinity-requiredDuring.yaml
kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 3
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        podAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: project
                operator: In
                values:
                - python1
            topologyKey: "kubernetes.io/hostname"
            namespaces:
            - magedu

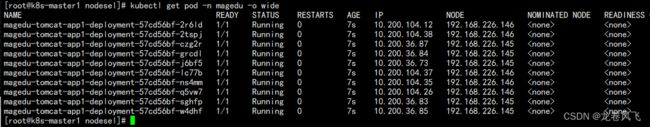

Pod hard anti-affinity

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
          - labelSelector:
              matchExpressions:
              - key: project
                operator: In
                values:
                - python
            topologyKey: "kubernetes.io/hostname"
            namespaces:
            - magedu

Pod soft anti-affinity

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app2-deployment-label
  name: magedu-tomcat-app2-deployment
  namespace: magedu
spec:
  replicas: 1
  selector:
    matchLabels:
      app: magedu-tomcat-app2-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app2-selector
    spec:
      containers:
      - name: magedu-tomcat-app2-container
        image: tomcat:7.0.94-alpine
        imagePullPolicy: IfNotPresent
        #imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: project
                  operator: In
                  values:
                  - python
              topologyKey: kubernetes.io/hostname
              namespaces:
              - magedu

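Taints and tolerations in K8S

Taints let a node repel Pods during scheduling, the opposite of affinity: a tainted node and pods repel each other.

Tolerations let a Pod tolerate a node's taints, so that new pods can be scheduled onto the node even though it is tainted.

Taints

The three taint effects

NoSchedule: pods are not scheduled onto a node with this taint (hard constraint).

kubectl taint node 192.168.226.146 key1=value1:NoSchedule #set the taint

[root@k8s-master1 nodesel]# kubectl describe node 192.168.226.146 #view the taint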

Name: 192.168.226.146

Roles: node

Labels: beta.kubernetes.io/arch=amd64

beta.kubernetes.io/os=linux

kubernetes.io/arch=amd64

kubernetes.io/hostname=192.168.226.146

kubernetes.io/os=linux

kubernetes.io/role=node

Annotations: node.alpha.kubernetes.io/ttl: 0

volumes.kubernetes.io/controller-managed-attach-detach: true

CreationTimestamp: Sun, 17 Apr 2022 19:27:24 +0800

Taints: key1=value1:NoSchedule

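kubectl taint node 192.168.226.146 key1:NoSchedule- #remove the taint

PreferNoSchedule: k8s tries to avoid scheduling Pods onto a node with this taint.

NoExecute: k8s does not schedule Pods onto a node with this taint, and also evicts the Pods already running on that node.

kubectl taint nodes 192.168.226.146 key1=value1:NoExecute

Tolerations

A toleration defined on a Pod allows it to be scheduled onto a node that has a matching taint.

Taint matching based on operator:

If operator is Exists, the toleration needs no value; it matches on the taint's key and effect alone.

If operator is Equal, a value must be specified, and it must equal the value of the taint with the same key.

Example: a pod with a toleration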

[root@k8s-master1 nodesel]# cat case5.1-taint-tolerations.yaml

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app1-deployment-label
  name: magedu-tomcat-app1-deployment
  namespace: magedu
spec:
  replicas: 10
  selector:
    matchLabels:
      app: magedu-tomcat-app1-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app1-selector
    spec:
      containers:
      - name: magedu-tomcat-app1-container
        #image: harbor.magedu.local/magedu/tomcat-app1:v7
        image: tomcat:7.0.93-alpine
        #command: ["/apps/tomcat/bin/run_tomcat.sh"]
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
#        env:
#        - name: "password"
#          value: "123456"
#        - name: "age"
#          value: "18"
#        resources:
#          limits:
#            cpu: 1
#            memory: "512Mi"
#          requests:
#            cpu: 500m
#            memory: "512Mi"
      tolerations:
      - key: "key1"
        operator: "Equal"
        value: "value1"
        effect: "NoSchedule"
---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: magedu-tomcat-app1-service-label
  name: magedu-tomcat-app1-service
  namespace: magedu
spec:
  type: NodePort
  ports:
  - name: http
    port: 80
    protocol: TCP
    targetPort: 8080
    #nodePort: 40003
  selector:
    app: magedu-tomcat-app1-selector

[root@k8s-master1 nodesel]# kubectl taint node 192.168.226.146 key1=value1:NoSchedule

node/192.168.226.146 tainted

[root@k8s-master1 nodesel]# kubectl taint node 192.168.226.145 key1=value1:NoSchedule

node/192.168.226.145 tainted
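The Equal/Exists matching between a toleration and the taint just applied can be sketched in a few lines. This is an illustrative simplification, not the scheduler's implementation; `tolerates` is a hypothetical name, and taints and tolerations are represented as plain dicts.

```python
def tolerates(toleration: dict, taint: dict) -> bool:
    """Return True if the toleration matches the taint."""
    # An empty effect on the toleration matches every effect.
    if toleration.get("effect") and toleration["effect"] != taint["effect"]:
        return False
    operator = toleration.get("operator", "Equal")  # Equal is the default
    if operator == "Exists":
        # No value is compared; an empty key matches every taint key.
        return not toleration.get("key") or toleration["key"] == taint["key"]
    # Equal: both key and value must be identical to the taint's.
    return (toleration.get("key") == taint["key"]
            and toleration.get("value") == taint.get("value"))


taint = {"key": "key1", "value": "value1", "effect": "NoSchedule"}

print(tolerates({"key": "key1", "operator": "Equal",
                 "value": "value1", "effect": "NoSchedule"}, taint))
print(tolerates({"key": "key1", "operator": "Exists",
                 "effect": "NoSchedule"}, taint))
print(tolerates({"key": "key1", "operator": "Equal",
                 "value": "other", "effect": "NoSchedule"}, taint))
```

The first toleration is the one used in case5.1-taint-tolerations.yaml, which is why its pods can land on the two tainted nodes.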

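Eviction

Node-pressure eviction is the process by which each kubelet proactively terminates Pods to reclaim memory, disk space and other resources on its node. The kubelet monitors the node's CPU, memory, disk space and filesystem inodes; when one or more of these resources reach a given consumption level, the kubelet forcibly evicts one or more Pods from the node, so that the node does not run out of allocatable resources and hit OOM.

A taint whose effect is NoExecute affects pods that are already running on the node:

If a pod does not tolerate a taint whose effect is NoExecute, the pod is evicted immediately.

If a pod tolerates a taint whose effect is NoExecute and its toleration does not specify tolerationSeconds, the pod keeps running on the node indefinitely.

If a pod tolerates a taint whose effect is NoExecute but its toleration specifies tolerationSeconds, that value is how long the pod may keep running on the node before it is evicted.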

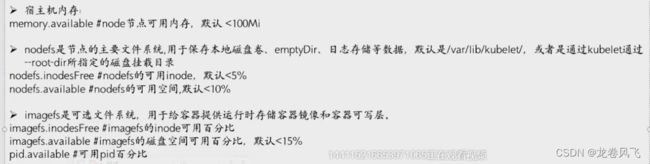

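Hard eviction thresholds in the kubelet configuration

/var/lib/kubelet/config.yaml

evictionHard:
  imagefs.available: 15%
  memory.available: 300Mi
  nodefs.available: 10%
  nodefs.inodesFree: 5%

Eviction (node eviction) forcibly evicts pods automatically when a node runs short of resources, to keep the node itself running normally.

Kubernetes evicts Pods based on their QoS (quality of service) class. There are currently three QoS classes:

- Guaranteed: limits and requests are equal; highest class, evicted last.

- Burstable: limits and requests differ; middle class, evicted in between.

- BestEffort: no limits at all, i.e. resources is empty; lowest class, evicted first.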
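The three classes can be derived from a pod's resources section roughly as follows. This is a simplified sketch for illustration: it compares quantities as plain strings (so "1" and "1000m" would count as different) and `qos_class` is a hypothetical name, not a Kubernetes API.

```python
def qos_class(containers: list) -> str:
    """Classify a pod from its containers' requests and limits."""
    # BestEffort: no container sets any request or limit.
    if all(not c.get("requests") and not c.get("limits") for c in containers):
        return "BestEffort"

    def is_guaranteed(container: dict) -> bool:
        limits = container.get("limits") or {}
        # A request that is omitted defaults to the corresponding limit.
        requests = {**limits, **(container.get("requests") or {})}
        return all(
            res in limits and requests[res] == limits[res]
            for res in ("cpu", "memory")
        )

    # Guaranteed: every container has equal cpu and memory requests and limits.
    if all(is_guaranteed(c) for c in containers):
        return "Guaranteed"
    return "Burstable"


print(qos_class([{"limits": {"cpu": "100m", "memory": "100Mi"},
                  "requests": {"cpu": "100m", "memory": "100Mi"}}]))
print(qos_class([{"limits": {"cpu": "1", "memory": "512Mi"},
                  "requests": {"cpu": "500m", "memory": "512Mi"}}]))
print(qos_class([{}]))
```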

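Eviction conditions

- eviction-signal: the signal the kubelet observes on the node to decide whether to evict, for example the value of memory.available read through cgroupfs.

- operator: the comparison operator used to test whether the observed amount meets the condition and triggers eviction.

- quantity: the threshold amount, i.e. the resource value the decision is based on, such as memory.available: 300Mi or nodefs.available: 10%.

For example: nodefs.available<10%

This expression means the eviction signal fires when the node's available disk space drops below 10%.

Soft eviction

Soft eviction does not evict pods immediately. A grace period can be configured; only if the condition still holds when the grace period expires does the kubelet kill pods and carry out the eviction.

Soft eviction settings:

- eviction-soft: the soft eviction threshold, for example memory.available<1.5Gi; if the condition lasts longer than the configured grace period, pod eviction can be triggered.

- eviction-soft-grace-period: the soft eviction grace period, for example memory.available=1m30s; it defines how long the soft eviction condition must hold before Pod eviction is triggered.

- eviction-max-pod-grace-period: the pod termination grace period, i.e. the maximum allowed grace period (in seconds) to use when terminating Pods because a soft eviction condition has been met.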
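The difference between a hard and a soft threshold comes down to whether the condition has to persist for a grace period. The sketch below is an illustration only, not kubelet code; `should_evict` is a hypothetical name, all amounts are in Mi, and a grace period of 0 stands for a hard threshold.

```python
def should_evict(available_mi: float, threshold_mi: float,
                 held_seconds: float, grace_seconds: float = 0) -> bool:
    """signal < quantity, and the condition has held for at least the grace period."""
    below_threshold = available_mi < threshold_mi
    return below_threshold and held_seconds >= grace_seconds


# Hard threshold memory.available<300Mi: eviction as soon as the signal fires.
print(should_evict(250, 300, held_seconds=0))

# Soft threshold memory.available<1.5Gi (1536Mi) with a 1m30s grace period.
print(should_evict(1000, 1536, held_seconds=30, grace_seconds=90))
print(should_evict(1000, 1536, held_seconds=90, grace_seconds=90))
```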

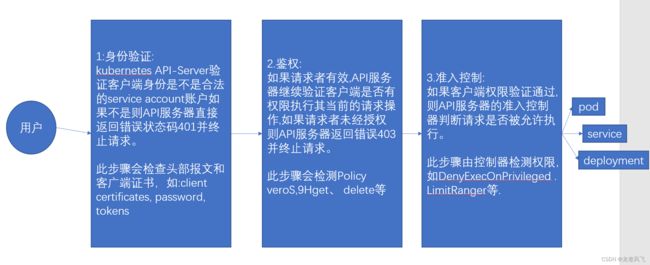

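Admission control in K8S

/root/.kube/config: the credentials (certificate) file

Authorization flow

Authorization in K8S is mainly Node authorization and RBAC (this comes up in the exam).

Node (node authorization): authorizes API requests made by kubelets.

It grants each node's kubelet permission to read services, endpoints, secrets, configmaps and similar objects, and to update pod and node status on the API server.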

[root@k8s-master1 ~]# vim /etc/systemd/system/kube-apiserver.service

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/GoogleCloudPlatform/kubernetes

After=network.target

[Service]

ExecStart=/usr/local/bin/kube-apiserver \

--allow-privileged=true \

--anonymous-auth=false \

--api-audiences=api,istio-ca \

--authorization-mode=Node,RBAC \ #the default authorization modes

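Webhook: an HTTP callback, i.e. an HTTP call made when certain events occur, for example to an image scanner.

ABAC (Attribute-based access control): attribute-based access control, used before 1.6; attributes are bound directly to accounts.

{"apiVersion": "abac.authorization.kubernetes.io/v1beta1", "kind": "Policy", "spec": {"user": "user1", "namespace": "*", "resource": "*", "apiGroup": "*"}}

#user user1 has all permissions on all resources of all API groups in all namespaces ("readonly": true is not set).

--authorization-mode=....RBAC,ABAC --authorization-policy-file=mypolicy.json #flags that enable ABAC

RBAC (Role-Based Access Control): role-based access control. Permissions are first associated with a role, and the role is then bound to a user (Binding), who thereby inherits the role's permissions. Roles come in two kinds, ClusterRole and Role: a ClusterRole grants access to resources across the whole cluster, while a Role applies to resources (such as deployments) in one specified namespace.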

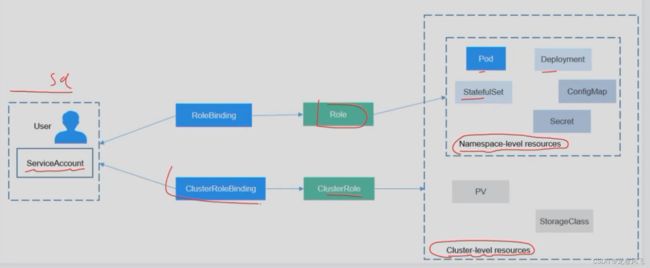

RBAC

RBAC简介

- RBAC API声明了四种Kubernetes对象: Role、ClusterRole、RoleBinding和ClusterRoleBinding.Role:定义一组规则,用于访问命名空间中的Kubernetes资源。

- RoleBinding:定义用户和角色(Role)的绑定关系。

- ClusterRole:定义了一组访问集群中Kubernetes资源(包括所有命名空间)的规则。

- ClusterRoleBinding:定义了用户和集群角色(ClusterRole)的绑定关系。

1、首先创建账号

2、创建role或者cluster role

3、绑定账号创建账号

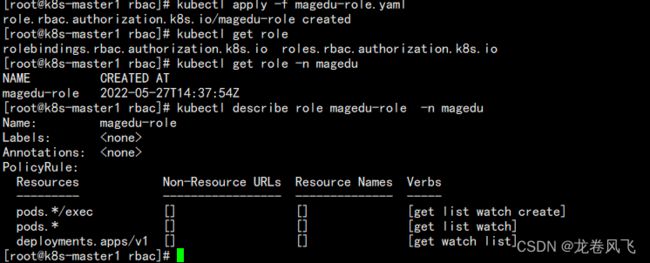

kubectl create sa magedu -n magedu创建role规则

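kind: Role # the kind is Role; roles are either Role or ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  namespace: magedu # namespace the role lives in
  name: magedu-role # name of the role
rules: # authorization rules
- apiGroups: ["*"] # API groups of the resources; "*" means all groups and "" the core group; kubectl api-resources shows the group of each resource
  resources: ["pods/exec"] # target resource: the subresource used to run commands in a pod
  #verbs: ["*"]
  ##RO-Role
  verbs: ["get", "list", "watch", "create"] # permissions granted by this role
- apiGroups: ["*"]
  resources: ["pods"]
  #verbs: ["*"]
  ##RO-Role
  verbs: ["get", "list", "watch"]
- apiGroups: ["apps/v1"] # note: the API group of deployments is "apps"; "apps/v1" is a group/version and does not match it
  resources: ["deployments"]
  #verbs: ["get", "list", "watch", "create", "update", "patch", "delete"]
  ##RO-Role
  verbs: ["get", "watch", "list"]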

[root@k8s-master1 rbac]# kubectl get role -n magedu
NAME          CREATED AT
magedu-role   2022-05-27T14:37:54Z

[root@k8s-master1 rbac]# kubectl describe role magedu-role -n magedu
Name:         magedu-role
Labels:
Annotations:
PolicyRule:
  Resources            Non-Resource URLs  Resource Names  Verbs
  ---------            -----------------  --------------  -----
  pods.*/exec          []                 []              [get list watch create]
  pods.*               []                 []              [get list watch]
  deployments.apps/v1  []                 []              [get watch list]
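How such rules are evaluated can be sketched as a simple match on API group, resource and verb, with "*" as a wildcard. This is a simplified illustration, not the API server's authorizer; `rule_allows` and `role_allows` are hypothetical names, and the rules below mirror magedu-role as shown in the output above.

```python
def rule_allows(rule: dict, api_group: str, resource: str, verb: str) -> bool:
    """A rule allows a request when group, resource and verb all match."""
    def hit(allowed: list, wanted: str) -> bool:
        return "*" in allowed or wanted in allowed

    return (hit(rule["apiGroups"], api_group)
            and hit(rule["resources"], resource)
            and hit(rule["verbs"], verb))


def role_allows(rules: list, api_group: str, resource: str, verb: str) -> bool:
    """RBAC is purely additive: any matching rule grants access."""
    return any(rule_allows(r, api_group, resource, verb) for r in rules)


magedu_role = [
    {"apiGroups": ["*"], "resources": ["pods/exec"],
     "verbs": ["get", "list", "watch", "create"]},
    {"apiGroups": ["*"], "resources": ["pods"],
     "verbs": ["get", "list", "watch"]},
    {"apiGroups": ["apps/v1"], "resources": ["deployments"],
     "verbs": ["get", "watch", "list"]},
]

print(role_allows(magedu_role, "", "pods", "list"))           # core group, read access
print(role_allows(magedu_role, "", "pods", "delete"))         # verb not granted
print(role_allows(magedu_role, "apps", "deployments", "get")) # group "apps" != "apps/v1"
```

Under this model the third rule never matches a Deployment request, because the group sent with the request is "apps"; writing apiGroups: ["apps"] would be needed for it to take effect.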

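Binding the role to the user

kind: RoleBinding # binds a role to a user; the kind is RoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: role-bind-magedu # name of the role binding
  namespace: magedu # namespace the role binding lives in
subjects: # subjects, given as a list
- kind: ServiceAccount
  name: magedu-user # the account being bound; one account can be bound to several roles
  namespace: magedu
roleRef: # the role side: whether the binding refers to a Role or a ClusterRole
  kind: Role
  name: magedu-role # must match the role's name
  apiGroup: rbac.authorization.k8s.io # API group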

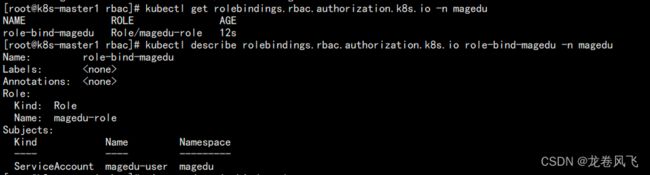

[root@k8s-master1 rbac]# kubectl get rolebindings.rbac.authorization.k8s.io -n magedu
NAME               ROLE               AGE
role-bind-magedu   Role/magedu-role   12s

[root@k8s-master1 rbac]# kubectl describe rolebindings.rbac.authorization.k8s.io role-bind-magedu -n magedu
Name:         role-bind-magedu
Labels:
Annotations:
Role:
  Kind:  Role
  Name:  magedu-role
Subjects:
  Kind            Name         Namespace
  ----            ----         ---------
  ServiceAccount  magedu-user  magedu

Getting the token

[root@k8s-master1 rbac]# kubectl get secret -n magedu

[root@k8s-master1 rbac]# kubectl describe secret magedu-user-token-zs8bk -n magedu

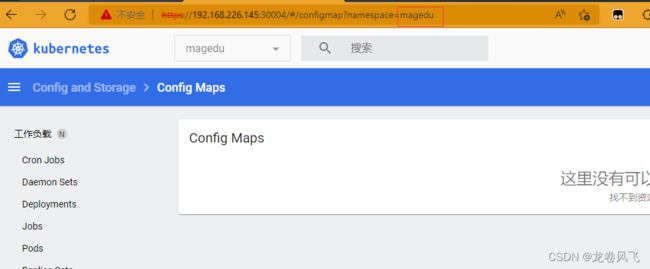

token登录dashboard

基于kube-config文件登录

用于开发登录环境进行执行kubectl命令等

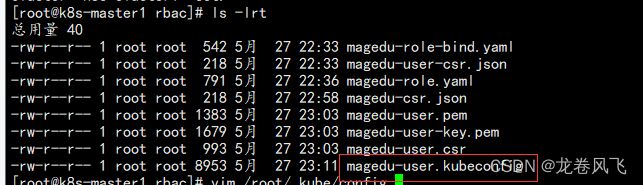

1.1、创建car文件

[root@k8s-master1 rbac]# cat magedu-csr.json

{
  "CN": "China",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "ST": "BeiJing",
      "L": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}

2.2 Sign the certificate

ln -sv /etc/kubeasz/bin/cfssl* /usr/bin/

cfssl gencert -ca=/etc/kubernetes/ssl/ca.pem -ca-key=/etc/kubernetes/ssl/ca-key.pem -config=/etc/kubeasz/clusters/k8s-cluster1/ssl/ca-config.json -profile=kubernetes magedu-csr.json | cfssljson -bare magedu-user

#use the same CA as k8s, the k8s ca-config.json, and the kubernetes profile

2.3 Generate the kubeconfig file for the regular user

kubectl config set-cluster k8s-cluster1 --certificate-authority=/etc/kubernetes/ssl/ca.pem --embed-certs=true --server=https://192.168.226.201:6443 --kubeconfig=magedu-user.kubeconfig

#the cluster name can be taken from /root/.kube/config; the server address can be the VIP address

2.4 Set the client authentication parameters

cp *.pem /etc/kubernetes/ssl/ #for tidiness, copy the generated certificates to the k8s path

[root@k8s-master1 rbac]# kubectl config set-credentials magedu-user \

> --client-certificate=/etc/kubernetes/ssl/magedu-user.pem \

> --client-key=/etc/kubernetes/ssl/magedu-user-key.pem \

> --embed-certs=true \

> --kubeconfig=magedu-user.kubeconfig

2.5 Set the context parameters (contexts distinguish clusters when there are several)

kubectl config set-context k8s-cluster1 \
--cluster=k8s-cluster1 \
--user=magedu-user \
--namespace=magedu \
--kubeconfig=magedu-user.kubeconfig

2.6 Set the default context

# kubectl config use-context k8s-cluster1 --kubeconfig=magedu-user.kubeconfig

2.7 Write the token into the user's kube-config file

[root@k8s-master1 rbac]# kubectl get secrets -n magedu

[root@k8s-master1 rbac]# kubectl describe secrets magedu-user-token-zs8bk -n magedu

K8S中的CICD

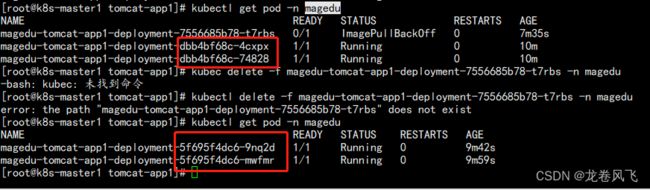

常见的更新方式有更新yaml,kubectl set images和API调用。

K8S中更新方式

1、更新yaml

修改yaml中镜像版本

2、命令方式更新

kubectl set image deployment magedu-tomcat-app1-deployment magedu-tomcat-app1-container=k8s-harbor.com/public/tomcatapp1:v2 -n magedu

# deployment名称,pod名称,镜像名称,namespace[root@k8s-master1 cicd]# kubectl rollout history deployment/magedu-tomcat-app1-deployment -n magedu

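deployment.apps/magedu-tomcat-app1-deployment
REVISION  CHANGE-CAUSE
2
3

#view the revision history

[root@k8s-master1 cicd]# kubectl rollout undo deployment/magedu-tomcat-app1-deployment -n magedu
deployment.apps/magedu-tomcat-app1-deployment rolled back

#roll back to the previous revision

kubectl rollout undo deployment/magedu-tomcat-app1-deployment --to-revision=3 -n magedu

#roll back to a specific revision

Pausing and resuming an update

kubectl rollout pause deployment/magedu-tomcat-app1-deployment -n magedu

kubectl rollout resume deployment/magedu-tomcat-app1-deployment -n magedu

Recreate updates and rolling updates in K8S

Recreate update

The existing pods are deleted first and then rebuilt from the new image version. The service is unavailable while the Pods are being rebuilt. The advantage is that only one version is online at any time, so there are no multi-version issues; the drawback is that the service cannot be reached between the deletion of the old pods and the successful creation of the new ones, so this strategy is rarely used.

Detailed flow: the old-version Pods are deleted first, then the deployment controller creates a new ReplicaSet and creates new Pods from the new image. The old ReplicaSet is kept for rollback.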

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app1-deployment-label
  name: magedu-tomcat-app1-deployment
  namespace: magedu
spec:
  replicas: 2
  strategy:
    type: Recreate # recreate update
  selector:
    matchLabels:
      app: magedu-tomcat-app1-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app1-selector
    spec:
      containers:
      - name: magedu-tomcat-app1-container
        image: k8s-harbor.com/public/tomcatapp1:v2
        #command: ["/apps/tomcat/bin/run_tomcat.sh"]
        #imagePullPolicy: IfNotPresent
        imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
        env:
        - name: "password"
          value: "123456"
        - name: "age"
          value: "18"
        resources:
          limits:
            cpu: 1
            memory: "512Mi"
          requests:
            cpu: 500m
            memory: "512Mi"
        startupProbe:
          httpGet:
            path: /myapp/index.html
            port: 8080
          initialDelaySeconds: 5 # delay before the first probe: 5s
          failureThreshold: 3 # consecutive failures before the probe counts as failed
          periodSeconds: 3 # probe interval
        readinessProbe:
          httpGet:
            #path: /monitor/monitor.html
            path: /myapp/index.html
            port: 8080
          initialDelaySeconds: 5
          periodSeconds: 3
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 3
        livenessProbe:
          httpGet:
            #path: /monitor/monitor.html
            path: /myapp/index.html
            port: 8080
          initialDelaySeconds: 5
          periodSeconds: 3
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 3
#        volumeMounts:
#        - name: magedu-images
#          mountPath: /usr/local/nginx/html/webapp/images
#          readOnly: false
#        - name: magedu-static
#          mountPath: /usr/local/nginx/html/webapp/static
#          readOnly: false
#      volumes:
#      - name: magedu-images
#        nfs:
#          server: 172.31.7.109
#          path: /data/k8sdata/magedu/images
#      - name: magedu-static
#        nfs:
#          server: 172.31.7.109
#          path: /data/k8sdata/magedu/static
#      nodeSelector:
#        project: magedu
#        app: tomcat
---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: magedu-tomcat-app1-service-label
  name: magedu-tomcat-app1-service
  namespace: magedu
spec:
  type: NodePort
  ports:
  - name: http
    port: 80
    protocol: TCP
    targetPort: 8080
    nodePort: 30092
  selector:
    app: magedu-tomcat-app1-selector

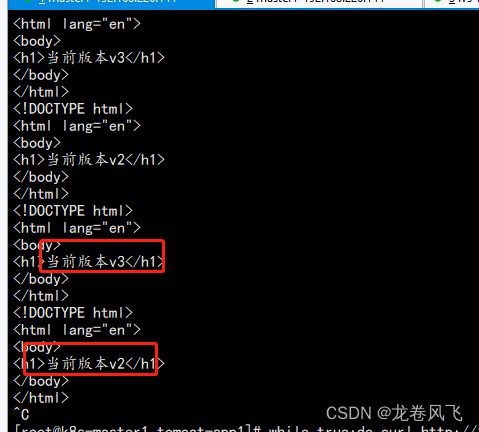

Rolling update

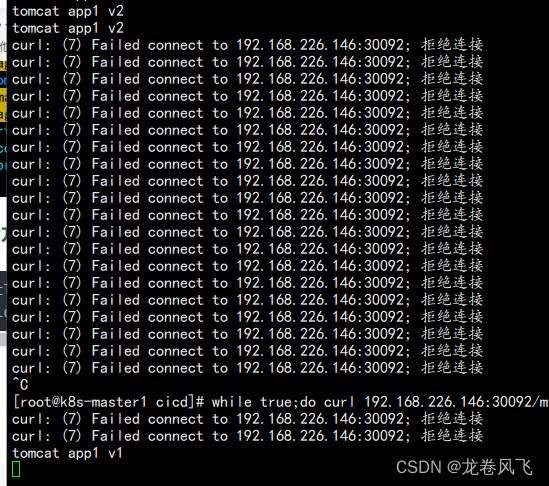

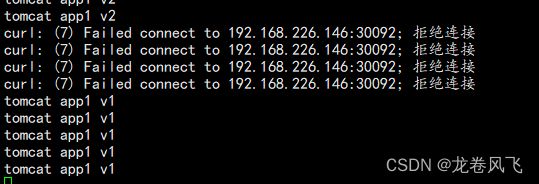

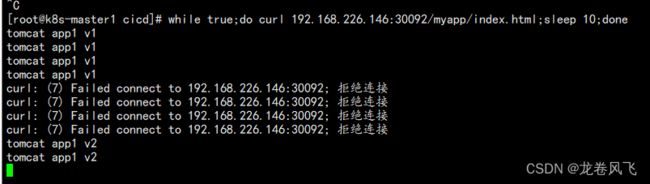

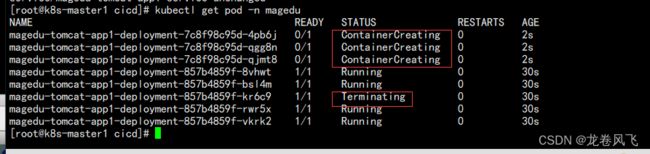

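Rolling update is the default update strategy. A new ReplicaSet is created and a batch of new-version pods is started from the new image, then a batch of old-version Pods is deleted, then another batch of new Pods is created and another batch of old Pods deleted, until all old-version Pods are gone. The advantage of a rolling update is that the service stays available during the upgrade; the drawback is that the new and old versions coexist for a short time.

Upgrade flow: after the update is triggered, k8s creates a ReplicaSet for the new version. While some of the Pods under the old ReplicaSet are deleted, new Pods are created under the new ReplicaSet, until all old-version Pods have been deleted. The old ReplicaSet is kept for rollback.

To keep the service available during a rolling update, the number of unavailable pods in the controller (a pod is unavailable while it pulls its image, is being created and is being probed) must stay within a bound, so a certain number of pods has to be kept serving clients. This is controlled with the following parameters:

kubectl explain deployment.spec.strategy

deployment.spec.strategy.rollingUpdate.maxSurge #the number or percentage of pods by which the total may exceed the desired pod count during the upgrade. A percentage is rounded up to a whole pod. The default is 25%. If it is set to 10% and there are currently 100 pods, at most 110 pods exist during the upgrade, i.e. an extra 10% temporarily exceeds the replica count given by replicas.

deployment.spec.strategy.rollingUpdate.maxUnavailable #the maximum number of pods that may be unavailable during the upgrade, as an integer or a percentage of the current pods. A percentage is rounded down to a whole pod. The default is 25%: with 100 pods, at most 25 pods (25%) may be unavailable during the upgrade, so 75 pods (75%) remain available.

Note: the two values cannot both be 0. If maxUnavailable is 0 and maxSurge is also 0, the pods cannot be rolled at all.

Suppose there are exactly 10 replicas and maxSurge and maxUnavailable are both the default 25%.

1. During the deployment there can be at most 10 + (10 × 25%) = 10 + 3 (rounded up) = 13 pods. Total pods during the upgrade = current replicas + allowed surge (maxSurge).

2. maxUnavailable is also 25%, which means two things:

2.1: At most maxUnavailable pods are unavailable during the update: 10 × 25% = 2 (rounded down). maxUnavailable = current replicas × 25% = 2 (rounded down).

2.2: The pods available during the update are 10 - 2 = 8. Available replicas = current replicas - maxUnavailable.

3. With 10 pods, up to 13 pods can exist during a RollingUpdate, of which 8 are available and 5 unavailable, until the rollout completes and the count returns to 10.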
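The arithmetic above can be reproduced with a short calculation. This is an illustrative sketch of the rounding rules described in these notes, not the Deployment controller's code; `resolve` and `rolling_update_bounds` are hypothetical names.

```python
def resolve(value, replicas: int, round_up: bool) -> int:
    """Turn an integer or a percentage string such as "25%" into a pod count."""
    if isinstance(value, str) and value.endswith("%"):
        percent = int(value[:-1])
        if round_up:
            # Ceiling division using integers only (maxSurge rounds up).
            return -(-replicas * percent // 100)
        # Floor division (maxUnavailable rounds down).
        return replicas * percent // 100
    return int(value)


def rolling_update_bounds(replicas: int, max_surge="25%", max_unavailable="25%") -> dict:
    surge = resolve(max_surge, replicas, round_up=True)
    unavailable = resolve(max_unavailable, replicas, round_up=False)
    if surge == 0 and unavailable == 0:
        raise ValueError("maxSurge and maxUnavailable cannot both be 0")
    return {
        "max_total_pods": replicas + surge,
        "min_available_pods": replicas - unavailable,
    }


print(rolling_update_bounds(10))                     # the 10-replica example
print(rolling_update_bounds(5))                      # replicas: 5 as in the yaml
print(rolling_update_bounds(100, max_surge="10%"))   # the 10% maxSurge example
```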

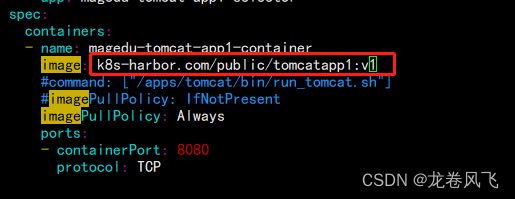

[root@k8s-master1 cicd]# cat 2.tomcat-app1-RollingUpdate.yaml

kind: Deployment
#apiVersion: extensions/v1beta1
apiVersion: apps/v1
metadata:
  labels:
    app: magedu-tomcat-app1-deployment-label
  name: magedu-tomcat-app1-deployment
  namespace: magedu
spec:
  replicas: 5
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 25%
  selector:
    matchLabels:
      app: magedu-tomcat-app1-selector
  template:
    metadata:
      labels:
        app: magedu-tomcat-app1-selector
    spec:
      containers:
      - name: magedu-tomcat-app1-container
        image: k8s-harbor.com/public/tomcatapp1:v2
        #command: ["/apps/tomcat/bin/run_tomcat.sh"]
        #imagePullPolicy: IfNotPresent
        imagePullPolicy: Always
        ports:
        - containerPort: 8080
          protocol: TCP
          name: http
        env:
        - name: "password"
          value: "123456"
        - name: "age"
          value: "18"
        resources:
          limits:
            cpu: 100m
            memory: "100Mi"
          requests:
            cpu: 100m
            memory: "100Mi"
        startupProbe:
          httpGet:
            path: /myapp/index.html
            port: 8080
          initialDelaySeconds: 15 # delay before the first probe (15s)
          failureThreshold: 3 # consecutive failures needed to go from success to failure
          periodSeconds: 3 # interval between probes
        readinessProbe:
          httpGet:
            #path: /monitor/monitor.html
            path: /myapp/index.html
            port: 8080
          initialDelaySeconds: 15
          periodSeconds: 3
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 3
        livenessProbe:
          httpGet:
            #path: /monitor/monitor.html
            path: /myapp/index.html
            port: 8080
          initialDelaySeconds: 15
          periodSeconds: 3
          timeoutSeconds: 5
          successThreshold: 1
          failureThreshold: 3
#        volumeMounts:
#        - name: magedu-images
#          mountPath: /usr/local/nginx/html/webapp/images
#          readOnly: false
#        - name: magedu-static
#          mountPath: /usr/local/nginx/html/webapp/static
#          readOnly: false
#      volumes:
#      - name: magedu-images
#        nfs:
#          server: 172.31.7.109
#          path: /data/k8sdata/magedu/images
#      - name: magedu-static
#        nfs:
#          server: 172.31.7.109
#          path: /data/k8sdata/magedu/static
#      nodeSelector:
#        project: magedu
#        app: tomcat
---
kind: Service
apiVersion: v1
metadata:
  labels:
    app: magedu-tomcat-app1-service-label
  name: magedu-tomcat-app1-service
  namespace: magedu
spec:
  type: NodePort
  ports:
  - name: http
    port: 80
    protocol: TCP
    targetPort: 8080
    nodePort: 30092
  selector:
    app: magedu-tomcat-app1-selector
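One way to read the startupProbe settings in the manifest above: the kubelet waits initialDelaySeconds, then probes every periodSeconds, and gives up after failureThreshold consecutive failures. The readiness and liveness probes only begin once the startup probe has succeeded. The sketch below estimates that startup budget as a rough upper bound. It ignores timeoutSeconds, and the helper name `startup_budget` is hypothetical.

```shell
# startup_budget INITIAL_DELAY PERIOD FAILURE_THRESHOLD
# Hypothetical helper: rough upper bound, in seconds, on how long the
# container has to start before the startupProbe gives up.
startup_budget() {
  echo $(( $1 + $2 * $3 ))
}

# Values from the startupProbe in the manifest: 15s delay, 3s period, 3 failures.
startup_budget 15 3 3
```

For a slow-starting Tomcat application, raising failureThreshold widens this budget without delaying the first probe.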

Access to the service is not affected during the upgrade.
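One way to check this claim is to keep requesting the NodePort while the rollout runs and count the responses that are not HTTP 200. The sketch below assumes `curl` is installed; the helper name `count_failures` and the `NODE_IP` placeholder are hypothetical, while the port and path come from the manifest.

```shell
# count_failures URL REQUESTS [INTERVAL_SECONDS]
# Sends REQUESTS requests to URL and prints how many did not return HTTP 200.
count_failures() {
  local url=$1 requests=$2 interval=${3:-1}
  local failures=0 i=0 code
  while [ "$i" -lt "$requests" ]; do
    # -s silent, -o /dev/null discard the body, -m 2 two-second timeout,
    # -w '%{http_code}' print only the status code
    code=$(curl -s -o /dev/null -m 2 -w '%{http_code}' "$url")
    [ "$code" = "200" ] || failures=$(( failures + 1 ))
    i=$(( i + 1 ))
    sleep "$interval"
  done
  echo "$failures"
}

# Example (NODE_IP is a placeholder for the address of any cluster node):
# count_failures "http://${NODE_IP}:30092/myapp/index.html" 60 1
```

A result of 0 while `kubectl apply` is rolling out the new image would support the statement above.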

Example: continuous deployment

GitLab deployment

Install GitLab

# Download the GitLab package

[root@lvs-master ~]# wget https://mirrors.tuna.tsinghua.edu.cn/gitlab-ce/yum/el7/gitlab-ce-10.0.0-ce.0.el7.x86_64.rpm

# Install dependencies

yum -y install policycoreutils openssh-server openssh-clients postfix

yum install -y curl policycoreutils-python openssh-server
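These notes download the GitLab RPM but do not show the command that installs it, and /etc/gitlab/gitlab.rb only exists once the package is installed. The sketch below shows that step, assuming the RPM sits in the current directory. `run` and `install_gitlab_rpm` are hypothetical helper names, and setting DRY_RUN=1 only prints the command instead of executing it.

```shell
# run CMD...: execute the command, or only print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then echo "+ $*"; else "$@"; fi
}

# install_gitlab_rpm RPM_FILE: install a locally downloaded GitLab package.
install_gitlab_rpm() {
  run rpm -ivh "$1"
}

# Example:
# install_gitlab_rpm gitlab-ce-10.0.0-ce.0.el7.x86_64.rpm
```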

# Enable postfix at boot and start it; GitLab uses postfix to send mail

systemctl enable postfix && systemctl start postfix

Modify the configuration and start

vim /etc/gitlab/gitlab.rb

external_url 'http://192.168.226.151:9090'

gitlab_rails['smtp_enable'] = true

gitlab_rails['smtp_address'] = "smtp.qq.com"

gitlab_rails['smtp_port'] = 465

gitlab_rails['smtp_user_name'] = "[email protected]"

gitlab_rails['smtp_password'] = "ipjyqvimnedhbdac"

gitlab_rails['smtp_domain'] = "qq.com"

gitlab_rails['smtp_authentication'] = :login

gitlab_rails['smtp_enable_starttls_auto'] = true

gitlab_rails['smtp_tls'] = true

gitlab_rails['gitlab_email_from'] = "[email protected]"

user["git_user_email"] = "[email protected]"

Configure GitLab (it starts automatically once configured; the default account is root)

gitlab-ctl reconfigure

Verify the mail function

gitlab-rails console

Notify.test_email('[email protected]', 'test', 'hello').deliver_now # recipient, subject, body

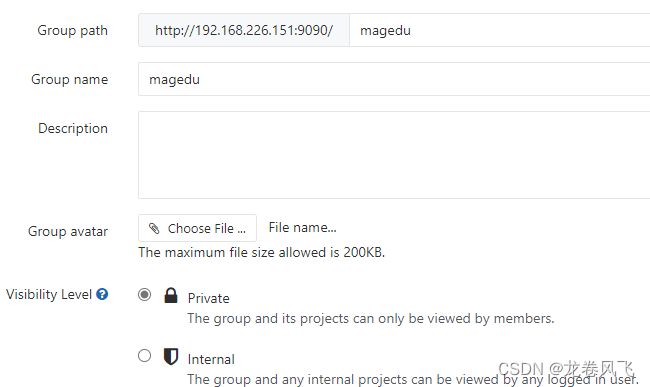

Create a group

Create a project

Verify upload and download

[root@lvs-master ~]# git clone http://192.168.226.151:9090/magedu/app1.git

正克隆到 'app1'...

Username for 'http://192.168.226.151:9090': root

Password for 'http://[email protected]:9090':

remote: Counting objects: 3, done.

remote: Compressing objects: 100% (2/2), done.

remote: Total 3 (delta 0), reused 0 (delta 0)

Unpacking objects: 100% (3/3), done.

git add index.html

git config --global user.name "root"

git config --global user.email "[email protected]"

git commit -m "添加到远程"

git push origin master

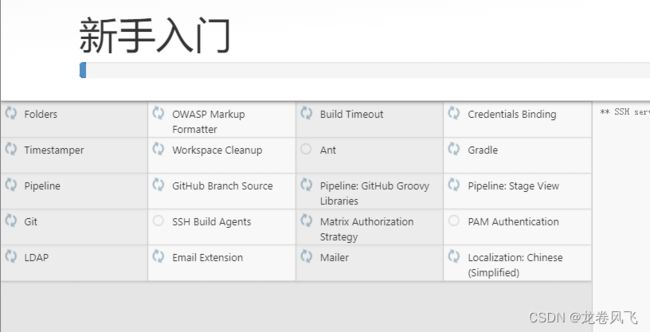

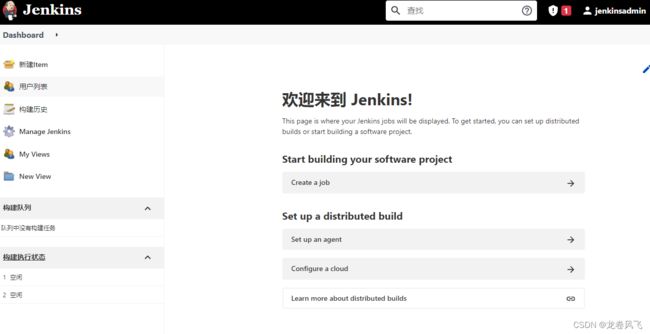

Install Jenkins

Install dependencies

yum list java*

yum install -y java-11-openjdk-devel.x86_64

yum install -y daemonize.x86_64

Install the Jenkins package

wget https://mirrors.tuna.tsinghua.edu.cn/jenkins/redhat/jenkins-2.323-1.1.noarch.rpm

rpm -i jenkins-2.323-1.1.noarch.rpm

Modify the configuration

vim /etc/sysconfig/jenkins

JENKINS_USER="root"

JENKINS_PORT="8888"

JENKINS_ARGS="-Dhudson.security.csrf.GlobalCrumbIssuerConfiguration.DISABLE_CSRF_PROTECTION=true"
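The same settings can be applied without opening an editor. This is a minimal sketch, assuming GNU sed (as on CentOS) and values that contain no `|` or `&` characters; the helper name `set_sysconfig` is hypothetical.

```shell
# set_sysconfig KEY VALUE FILE
# Replaces an existing KEY=... line in FILE, or appends one if it is missing.
set_sysconfig() {
  local key=$1 value=$2 file=$3
  if grep -q "^${key}=" "$file"; then
    sed -i "s|^${key}=.*|${key}=\"${value}\"|" "$file"
  else
    echo "${key}=\"${value}\"" >> "$file"
  fi
}

# Example:
# set_sysconfig JENKINS_USER root /etc/sysconfig/jenkins
# set_sysconfig JENKINS_PORT 8888 /etc/sysconfig/jenkins
# systemctl restart jenkins
```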

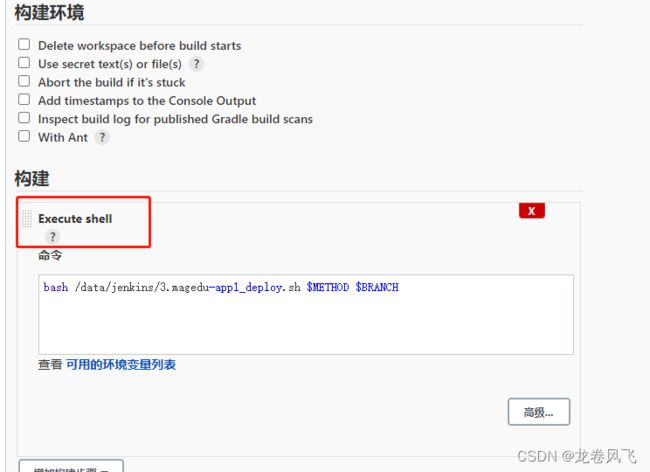

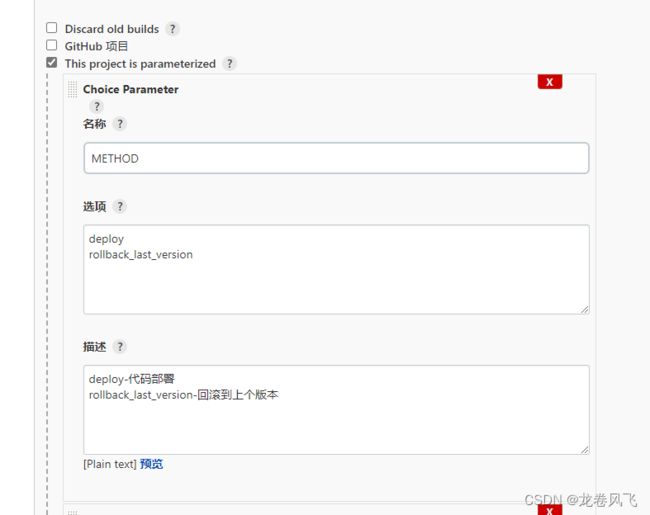

systemctl status jenkins

Automatically update the YAML file with a script

Configuration after creating the project

#!/bin/bash

#Author: ZhangShiJie

#Date: 2018-10-24

#Version: v1

# Record the script start time

starttime=`date +'%Y-%m-%d %H:%M:%S'`

# Variables

SHELL_DIR="/data/scripts"

SHELL_NAME="$0"

K8S_CONTROLLER1="192.168.226.144"

K8S_CONTROLLER2="192.168.226.144"

DATE=`date +%Y-%m-%d_%H_%M_%S`

METHOD=$1

Branch=$2

if test -z "$Branch";then

Branch=develop

fi

function Code_Clone(){

Git_URL="[email protected]:magedu/app1.git"

DIR_NAME=`echo ${Git_URL} |awk -F "/" '{print $2}' | awk -F "." '{print $1}'`

DATA_DIR="/data/gitdata/magedu"

Git_Dir="${DATA_DIR}/${DIR_NAME}"

cd ${DATA_DIR} && echo "即将清空上一版本代码并获取当前分支最新代码" && sleep 1 && rm -rf ${DIR_NAME}

echo "即将开始从分支${Branch} 获取代码" && sleep 1

git clone -b ${Branch} ${Git_URL}

echo "分支${Branch} 克隆完成,即将进行代码编译!" && sleep 1

#cd ${Git_Dir} && mvn clean package

#echo "代码编译完成,即将开始将IP地址等信息替换为测试环境"

#####################################################

sleep 1

cd ${Git_Dir}

tar czf ${DIR_NAME}.tar.gz ./*

}

# Copy the packaged archive to the k8s control server

function Copy_File(){

echo "压缩文件打包完成,即将拷贝到k8s 控制端服务器${K8S_CONTROLLER1}" && sleep 1

scp ${Git_Dir}/${DIR_NAME}.tar.gz root@${K8S_CONTROLLER1}:/root/dockerfile/web/tomcat/app1/tomcat-app1

echo "压缩文件拷贝完成,服务器${K8S_CONTROLLER1}即将开始制作Docker 镜像!" && sleep 1

}

# Run the build script on the control server to build and push the image

function Make_Image(){

echo "开始制作Docker镜像并上传到Harbor服务器" && sleep 1

ssh root@${K8S_CONTROLLER1} "cd /root/dockerfile/web/tomcat/app1/tomcat-app1 && bash build-command.sh ${DATE}"

echo "Docker镜像制作完成并已经上传到harbor服务器" && sleep 1

}

# Update the image tag in the k8s YAML file on the control server, so that the tag in the YAML file matches the one running in k8s

function Update_k8s_yaml(){

echo "即将更新k8s yaml文件中镜像版本" && sleep 1

ssh root@${K8S_CONTROLLER1} "cd /root/k8sdata/yaml/tomcat-app1 && sed -i 's/image: k8s-harbor.com.*/image: k8s-harbor.com\/project\/tomcat-app1:${DATE}/g' tomcat-app1.yaml"

echo "k8s yaml文件镜像版本更新完成,即将开始更新容器中镜像版本" && sleep 1

}

# Update the container image in k8s from the control server. There are two ways: set the image tag directly, or apply the modified YAML file

function Update_k8s_container(){

# Method 1

#ssh root@${K8S_CONTROLLER1} "kubectl set image deployment/magedu-tomcat-app1-deployment magedu-tomcat-app1-container=harbor.magedu.net/magedu/tomcat-app1:${DATE} -n magedu"

# Method 2 (method 1 is the recommended one)

ssh root@${K8S_CONTROLLER1} "cd /root/k8sdata/yaml/tomcat-app1 && kubectl apply -f tomcat-app1.yaml --record"

echo "k8s 镜像更新完成" && sleep 1

echo "当前业务镜像版本: k8s-harbor.com/project/tomcat-app1:${DATE}"

# Compute the total script run time; drop the next four lines if it is not needed

endtime=`date +'%Y-%m-%d %H:%M:%S'`

start_seconds=$(date --date="$starttime" +%s);

end_seconds=$(date --date="$endtime" +%s);

echo "本次业务镜像更新总计耗时:"$((end_seconds-start_seconds))"s"

}

# Roll back to the previous version using the k8s built-in revision history

function rollback_last_version(){

echo "即将回滚至上一个版本"

ssh root@${K8S_CONTROLLER1} "kubectl rollout undo deployment/magedu-tomcat-app1-deployment -n magedu"

sleep 1

echo "已执行回滚至上一个版本"

}

# Usage help

usage(){

echo "部署使用方法为 ${SHELL_DIR}/${SHELL_NAME} deploy "

echo "回滚到上一版本使用方法为 ${SHELL_DIR}/${SHELL_NAME} rollback_last_version"

}

# Main function

main(){

case ${METHOD} in

deploy)

Code_Clone;

Copy_File;

Make_Image;

Update_k8s_yaml;

Update_k8s_container;

;;

rollback_last_version)

rollback_last_version;

;;

*)

usage;

esac;

}

main $1 $2
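The tag rewrite done in Update_k8s_yaml can be tried on a throwaway file before pointing it at a real manifest. This is a minimal sketch, assuming GNU sed; the helper name `update_image_tag` is hypothetical. It uses `|` as the sed delimiter so the slashes in the image path need no escaping.

```shell
# update_image_tag FILE TAG
# Rewrites every "image: k8s-harbor.com..." line in FILE to the new TAG,
# mirroring the sed call in Update_k8s_yaml.
update_image_tag() {
  local file=$1 tag=$2
  sed -i "s|image: k8s-harbor.com.*|image: k8s-harbor.com/project/tomcat-app1:${tag}|g" "$file"
}

# Try it on a temporary file instead of the real manifest.
demo=$(mktemp)
printf '        image: k8s-harbor.com/public/tomcatapp1:v2\n' > "$demo"
update_image_tag "$demo" "$(date +%Y-%m-%d_%H_%M_%S)"
cat "$demo"
rm -f "$demo"
```

Because the pattern matches every line that starts with `image: k8s-harbor.com`, a manifest with several containers from that registry would have all of them rewritten to the same image.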

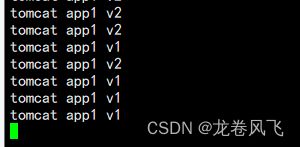

Start the build

Started by user jenkinsadmin

Running as SYSTEM

Building in workspace /var/lib/jenkins/workspace/magedu-app1-deploy

[magedu-app1-deploy] $ /bin/sh -xe /tmp/jenkins16112575484443251394.sh

+ bash /data/jenkins/3.magedu-app1_deploy.sh deploy master

即将清空上一版本代码并获取当前分支最新代码

即将开始从分支master 获取代码

正克隆到 'app1'...

分支master 克隆完成,即将进行代码编译!

压缩文件打包完成,即将拷贝到k8s 控制端服务器192.168.226.144

压缩文件拷贝完成,服务器192.168.226.144即将开始制作Docker 镜像!

开始制作Docker镜像并上传到Harbor服务器

Sending build context to Docker daemon 24.13MB

Step 1/10 : FROM k8s-harbor.com/public/tomcat-base:v8.5.43

---> 7ea3377316b8

Step 2/10 : ADD catalina.sh /apps/tomcat/bin/catalina.sh

---> Using cache

---> 3b25fe5aacbf

Step 3/10 : ADD server.xml /apps/tomcat/conf/server.xml

---> Using cache

---> 84690133c4fc

Step 4/10 : ADD app1.tar.gz /data/tomcat/webapps/myapp/

---> 230d20337a93

Step 5/10 : ADD run_tomcat.sh /apps/tomcat/bin/run_tomcat.sh

---> e1d8ebe600d9

Step 6/10 : RUN chown -R tomcat.tomcat /data/ /apps/

---> Running in 9e0f278f8bf6

Removing intermediate container 9e0f278f8bf6

---> 8a44670e297d

Step 7/10 : RUN chmod +x /apps/tomcat/bin/*.sh

---> Running in a61e72f275a9

Removing intermediate container a61e72f275a9

---> 4c66e3649ef3

Step 8/10 : RUN chown -R tomcat.tomcat /etc/hosts

---> Running in 56e0a987ba70

Removing intermediate container 56e0a987ba70

---> 78fd5eadb5c6

Step 9/10 : EXPOSE 8080 8443

---> Running in e25d88e13dcf

Removing intermediate container e25d88e13dcf

---> 5210bcb3ceaf

Step 10/10 : CMD ["/apps/tomcat/bin/run_tomcat.sh"]

---> Running in c60e7ec81b0e

Removing intermediate container c60e7ec81b0e

---> 60ebcb6495c9

Successfully built 60ebcb6495c9

Successfully tagged k8s-harbor.com/project/tomcat-app1:2022-06-01_21_45_38

The push refers to repository [k8s-harbor.com/project/tomcat-app1]

97339653270b: Preparing

e9cea446fe6d: Preparing

929ff8717426: Preparing

7985d8fd4402: Preparing

9734eefba5cf: Preparing

6c4c08cf6d73: Preparing

f62f634dbf27: Preparing

5b015a98e271: Preparing

4f907dbb0d0c: Preparing

889d184cab84: Preparing

bad6015e778c: Preparing

75ebb1de7e4b: Preparing

f42438a30a0d: Preparing

3322aa74f34d: Preparing

fb82b029bea0: Preparing

889d184cab84: Waiting

bad6015e778c: Waiting

6c4c08cf6d73: Waiting

75ebb1de7e4b: Waiting

f42438a30a0d: Waiting

3322aa74f34d: Waiting

fb82b029bea0: Waiting

f62f634dbf27: Waiting

4f907dbb0d0c: Waiting

5b015a98e271: Waiting

9734eefba5cf: Layer already exists

6c4c08cf6d73: Layer already exists

f62f634dbf27: Layer already exists

97339653270b: Pushed

5b015a98e271: Layer already exists

929ff8717426: Pushed

7985d8fd4402: Pushed

4f907dbb0d0c: Layer already exists

bad6015e778c: Layer already exists

75ebb1de7e4b: Layer already exists

889d184cab84: Layer already exists

f42438a30a0d: Layer already exists

3322aa74f34d: Layer already exists

fb82b029bea0: Layer already exists

e9cea446fe6d: Pushed

2022-06-01_21_45_38: digest: sha256:fa2347db889d8a90946461c11edfba44c06b497306184e98ad43568e50e51b43 size: 3461

Docker镜像制作完成并已经上传到harbor服务器

即将更新k8s yaml文件中镜像版本

k8s yaml文件镜像版本更新完成,即将开始更新容器中镜像版本

Flag --record has been deprecated, --record will be removed in the future

deployment.apps/magedu-tomcat-app1-deployment configured

service/magedu-tomcat-app1-service configured

k8s 镜像更新完成

当前业务镜像版本: k8s-harbor.com/project/tomcat-app1:2022-06-01_21_45_38

本次业务镜像更新总计耗时:73s

Finished: SUCCESS

The version has been updated.
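The update can also be confirmed from the command line by comparing the image recorded in the Deployment with the tag printed by the build. The sketch below assumes `kubectl` is configured for the cluster; the helper names `deployed_image` and `verify_tag` are hypothetical.

```shell
# deployed_image NAMESPACE DEPLOYMENT
# Prints the image of the first container in the Deployment's pod template.
deployed_image() {
  kubectl get deployment "$2" -n "$1" \
    -o jsonpath='{.spec.template.spec.containers[0].image}'
}

# verify_tag NAMESPACE DEPLOYMENT EXPECTED_TAG
# Succeeds only if the deployed image ends with ":EXPECTED_TAG".
verify_tag() {
  local image
  image=$(deployed_image "$1" "$2") || return 1
  case "$image" in
    *":$3") echo "OK: $image" ;;
    *)      echo "MISMATCH: $image"; return 1 ;;
  esac
}

# Example:
# kubectl rollout status deployment/magedu-tomcat-app1-deployment -n magedu
# verify_tag magedu magedu-tomcat-app1-deployment 2022-06-01_21_45_38
```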