Improving the YOLOv5 Series: 3. Modifying the Swin Transformer Structure

YOLOv5 module improvements that also apply to YOLOv7, YOLOv5, YOLOv4, Scaled_YOLOv4, YOLOv3, YOLOR, and the rest of the YOLO family.


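This post covers the Swin Transformer modification.

YOLOv5 is used for the demonstration; the modules can be dropped into YOLOv7, YOLOv5, YOLOv4, Scaled_YOLOv4, YOLOv3, YOLOR, and other YOLO-family algorithms.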

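Contents

- Method 1

- YOLOv5 YAML configuration file

- Adding or removing modules

- common.py configuration

- yolo.py configuration

- Training the yolov5_swin_transfomrer model

- Further modifying the YAML file above

- Theory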

Method 1

YOLOv5 YAML configuration file

First, add the following yolov5_swin_transfomrer.yaml file.

Code

# YOLOv5 by Ultralytics, GPL-3.0 license

# Parameters
nc: 80  # number of classes
depth_multiple: 0.33  # model depth multiple
width_multiple: 0.50  # layer channel multiple
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# YOLOv5 - SwinV2.0 backbone (shape comments are for a 1x3x256x256 input)
backbone:
  # [from, number, module, args]
  [[-1, 1, PatchEmbed, [4,3,64]],  # 0-P2/4
   [-1, 1, SwinTransformer_Layer, [64,2,2]],  # 1-P3/8 [1, 128, 32, 32]
   [-1, 1, SwinTransformer_Layer, [128,2,4]],  # 2-P4/16 [1, 256, 16, 16]
   [-1, 1, SwinTransformer_Layer, [256,6,8,True]],  # 3
   [-1, 1, LayerNorm, [256]],  # 4
   [-1, 1, SwinTransformer_Layer, [256,6,8,True]],  # 5
   [-1, 1, LayerNorm, [256]],  # 6
   [-1, 1, SwinTransformer_Layer, [256,6,8]],  # 7-P5/32 [1, 512, 8, 8]
   [-1, 1, SwinTransformer_Layer, [512,2,16,True]],  # 8 True: last_layer, no PatchMerging
  ]

# YOLOv5 v6.0 head
head:
  [
   [-1, 1, nn.Conv2d, [512,256,1]],  # 9 [1, 256, 8, 8]
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 10 [1, 256, 16, 16]
   [[-1, 2], 1, Concat, [1]],  # 11 cat backbone P4
   [-1, 1, SwinTransformer_Layer, [512,2,8,True,4]],  # 12 [1, 512, 16, 16]
   [-1, 1, nn.Conv2d, [512,128,1]],  # 13 [1, 128, 16, 16]
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],  # 14
   [[-1, 1], 1, Concat, [1]],  # 15 cat backbone P3
   [-1, 1, SwinTransformer_Layer, [256,2,2,True,8]],  # 16 (P3/8-small) [1, 256, 32, 32]
   [-1, 1, nn.Conv2d, [256,128,1,2]],  # 17
   [[-1, 13], 1, Concat, [1]],  # 18 cat head P4
   [-1, 1, SwinTransformer_Layer, [256,2,2,True,8]],  # 19 (P4/16-medium) [1, 256, 16, 16]
   [-1, 1, nn.Conv2d, [256,256,1,2]],  # 20
   [[-1, 9], 1, Concat, [1]],  # 21 cat head P5
   [-1, 1, SwinTransformer_Layer, [512,2,2,True,8]],  # 22 (P5/32-large)
   [[16,19, 22], 1, Detect, [nc, anchors,[256,256,512]]],  # 23 Detect(P3, P4, P5)
  ]
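Each row in the backbone and head lists follows the [from, number, module, args] layout, and rows are numbered consecutively from 0 across both lists. As a quick sanity check of the Concat and Detect sources, the minimal sketch below resolves relative `from` values (negative numbers) into absolute layer indices. The helper name `resolve_from` is hypothetical and is not part of YOLOv5.

```python
def resolve_from(layer_index, from_field):
    """Turn a YAML `from` field into a list of absolute layer indices.

    Negative values are relative to the current layer (-1 is the previous
    layer); non-negative values are already absolute indices.
    """
    sources = from_field if isinstance(from_field, list) else [from_field]
    return [layer_index + f if f < 0 else f for f in sources]


# The Concat and Detect rows of the head, reduced to (index, from, module name).
head_rows = [
    (11, [-1, 2], "Concat"),
    (15, [-1, 1], "Concat"),
    (18, [-1, 13], "Concat"),
    (21, [-1, 9], "Concat"),
    (23, [16, 19, 22], "Detect"),
]

for index, from_field, name in head_rows:
    print(index, name, "<-", resolve_from(index, from_field))
```

For example, row 11 reads from layers 10 and 2, that is, the upsampled head feature and the second backbone stage.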

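Adding or removing modules

To modify the YAML configuration file, insert the module at the position (layer index) where you want it.

Start from a .yaml model configuration that already runs successfully. After you add or remove layers, the indices of all subsequent layers change, so every row that refers to those layers must be updated to keep the channel counts and layer indices consistent.

The next article covers this in detail, using yolov5s.yaml as an example of adding a module.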
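The renumbering described above can be sketched as follows. This is only an illustration under simple assumptions: rows are plain Python lists in the [from, number, module, args] layout, the function name `insert_layer` is hypothetical, and only absolute (non-negative) `from` references are shifted. Relative references such as -1 are left untouched, so a reference like -2 that reaches across the insertion point still has to be checked by hand, as do channel counts.

```python
def insert_layer(rows, position, new_row):
    """Insert new_row at `position` and shift absolute `from` references.

    rows: list of [from, number, module, args] rows (backbone + head, in order).
    Absolute references at or after `position` move up by one; negative
    (relative) references are returned unchanged.
    """
    def shift(f):
        return f + 1 if f >= position else f

    updated = []
    for row in rows:
        frm = row[0]
        if isinstance(frm, list):
            frm = [shift(f) for f in frm]
        else:
            frm = shift(frm)
        updated.append([frm] + list(row[1:]))
    updated.insert(position, new_row)
    return updated


# Toy model: module names are placeholders, not real YOLOv5 modules.
rows = [
    [-1, 1, "A", []],             # 0
    [-1, 1, "B", []],             # 1
    [[-1, 0], 1, "Concat", [1]],  # 2
    [[2, 1], 1, "Detect", []],    # 3
]
new_rows = insert_layer(rows, 1, [-1, 1, "X", []])
for i, row in enumerate(new_rows):
    print(i, row)
```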

common.py configuration

Add the following modules to ./models/common.py; they can be copied in directly.

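# Swin_Transformer
# Imports used by the modules below (skip any that common.py already provides)
import numpy as np
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.utils.checkpoint as checkpoint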

def drop_path_f(x, drop_prob: float = 0., training: bool = False):
    """Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).

    This is the same as the DropConnect impl I created for EfficientNet, etc networks, however,
    the original name is misleading as 'Drop Connect' is a different form of dropout in a separate paper...
    See discussion: https://github.com/tensorflow/tpu/issues/494#issuecomment-532968956 ... I've opted for
    changing the layer and argument names to 'drop path' rather than mix DropConnect as a layer name and use
    'survival rate' as the argument.
    """
    if drop_prob == 0. or not training:
        return x
    keep_prob = 1 - drop_prob
    shape = (x.shape[0],) + (1,) * (x.ndim - 1)  # work with diff dim tensors, not just 2D ConvNets
    random_tensor = keep_prob + torch.rand(shape, dtype=x.dtype, device=x.device)
    random_tensor.floor_()  # binarize
    output = x.div(keep_prob) * random_tensor
    return output

class DropPath(nn.Module):
    """Drop paths (Stochastic Depth) per sample (when applied in main path of residual blocks).
    """

    def __init__(self, drop_prob=None):
        super(DropPath, self).__init__()
        self.drop_prob = drop_prob

    def forward(self, x):
        return drop_path_f(x, self.drop_prob, self.training)

def window_partition(x, window_size: int):
    """
    Partition the feature map into non-overlapping windows of size window_size.

    Args:
        x: (B, H, W, C)
        window_size (int): window size (M)

    Returns:
        windows: (num_windows*B, window_size, window_size, C)
    """
    B, H, W, C = x.shape
    x = x.view(B, H // window_size, window_size, W // window_size, window_size, C)
    # permute: [B, H//Mh, Mh, W//Mw, Mw, C] -> [B, H//Mh, W//Mw, Mh, Mw, C]
    # view: [B, H//Mh, W//Mw, Mh, Mw, C] -> [B*num_windows, Mh, Mw, C]
    windows = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(-1, window_size, window_size, C)
    return windows

def window_reverse(windows, window_size: int, H: int, W: int):
    """
    Merge the windows back into a single feature map.

    Args:
        windows: (num_windows*B, window_size, window_size, C)
        window_size (int): Window size (M)
        H (int): Height of image
        W (int): Width of image

    Returns:
        x: (B, H, W, C)
    """
    B = int(windows.shape[0] / (H * W / window_size / window_size))
    # view: [B*num_windows, Mh, Mw, C] -> [B, H//Mh, W//Mw, Mh, Mw, C]
    x = windows.view(B, H // window_size, W // window_size, window_size, window_size, -1)
    # permute: [B, H//Mh, W//Mw, Mh, Mw, C] -> [B, H//Mh, Mh, W//Mw, Mw, C]
    # view: [B, H//Mh, Mh, W//Mw, Mw, C] -> [B, H, W, C]
    x = x.permute(0, 1, 3, 2, 4, 5).contiguous().view(B, H, W, -1)
    return x

class Mlp(nn.Module):
    """ MLP as used in Vision Transformer, MLP-Mixer and related networks
    """

    def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
        super().__init__()
        out_features = out_features or in_features
        hidden_features = hidden_features or in_features
        self.fc1 = nn.Linear(in_features, hidden_features)
        self.act = act_layer()
        self.drop1 = nn.Dropout(drop)
        self.fc2 = nn.Linear(hidden_features, out_features)
        self.drop2 = nn.Dropout(drop)

    def forward(self, x):
        x = self.fc1(x)
        x = self.act(x)
        x = self.drop1(x)
        x = self.fc2(x)
        x = self.drop2(x)
        return x

class LayerNorm(nn.Module):
    """ LayerNorm over the channel dimension of a (B, C, H, W) feature map
    """

    def __init__(self, dim):
        super().__init__()
        self.norm = nn.LayerNorm(dim)

    def forward(self, x):
        # [B, C, H, W] -> [B, W, H, C]; nn.LayerNorm normalizes the last (channel) dim
        x = x.permute(0, 3, 2, 1).contiguous()
        x = self.norm(x)
        # the same permutation restores [B, C, H, W]
        return x.permute(0, 3, 2, 1).contiguous()

class PatchEmbed(nn.Module):
    """ Image to Patch Embedding

    Args:
        patch_size (int): Patch token size. Default: 4.
        in_chans (int): Number of input image channels. Default: 3.
        embed_dim (int): Number of linear projection output channels. Default: 96.
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
    """

    def __init__(self, patch_size=4, in_chans=3, embed_dim=96, norm_layer=nn.LayerNorm):
        super().__init__()
        patch_size = (patch_size, patch_size)
        self.patch_size = patch_size
        self.in_chans = in_chans
        self.embed_dim = embed_dim
        # linear embedding implemented as a strided conv
        self.tokens = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size, stride=patch_size)
        # LayerNorm
        if norm_layer is not None:
            self.norm = norm_layer(embed_dim)
        else:
            self.norm = None
        self.pos_drop = nn.Dropout(p=0.)

    def forward(self, x):
        """Forward function."""
        # pad on the right and/or bottom so that H and W are divisible by the patch size
        _, _, H, W = x.size()
        if W % self.patch_size[1] != 0:
            x = F.pad(x, (0, self.patch_size[1] - W % self.patch_size[1]))
        if H % self.patch_size[0] != 0:
            x = F.pad(x, (0, 0, 0, self.patch_size[0] - H % self.patch_size[0]))

        # Wh * Ww tokens; spatial size shrinks by the patch size
        x = self.tokens(x)  # B C Wh Ww
        _, _, H, W = x.shape
        # flatten: [B, C, H, W] -> [B, C, HW]
        # transpose: [B, C, HW] -> [B, HW, C]
        if self.norm is not None:
            Wh, Ww = x.size(2), x.size(3)
            x = x.flatten(2).transpose(1, 2)
            x = self.norm(x)
            x = x.transpose(1, 2).view(-1, self.embed_dim, Wh, Ww)
        x = self.pos_drop(x)
        return x

class PatchMerging(nn.Module):
    """ Patch Merging Layer

    Args:
        dim (int): Number of input channels.
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
    """

    def __init__(self, dim, norm_layer=nn.LayerNorm):
        super().__init__()
        self.dim = dim
        self.reduction = nn.Linear(4 * dim, 2 * dim, bias=False)
        self.norm = norm_layer(4 * dim)

    def forward(self, x, H, W):
        """ Forward function.

        Args:
            x: Input feature, tensor size (B, C, H, W).
            H, W: Spatial resolution of the input feature (re-read from x.shape below).

        Returns:
            Tensor of size (B, H/2*W/2, 2*C).
        """
        B, C, H, W = x.shape
        # [B, C, H, W] -> [B, H, W, C]; a plain view() here would reinterpret memory
        # and mix channels with pixels, so permute is used instead
        x = x.permute(0, 2, 3, 1).contiguous()

        # pad so that H and W are even
        pad_input = (H % 2 == 1) or (W % 2 == 1)
        if pad_input:
            x = F.pad(x, (0, 0, 0, W % 2, 0, H % 2))

        x0 = x[:, 0::2, 0::2, :]  # B H/2 W/2 C, top-left
        x1 = x[:, 1::2, 0::2, :]  # B H/2 W/2 C, bottom-left
        x2 = x[:, 0::2, 1::2, :]  # B H/2 W/2 C, top-right
        x3 = x[:, 1::2, 1::2, :]  # B H/2 W/2 C, bottom-right
        x = torch.cat([x0, x1, x2, x3], -1)  # B H/2 W/2 4*C
        x = x.view(B, -1, 4 * C)  # B H/2*W/2 4*C

        x = self.norm(x)
        x = self.reduction(x)  # B H/2*W/2 2*C
        return x

class Global_WindowAttention(nn.Module):
    r""" MOA - multi-head self attention (W-MSA) module with relative position bias.

    Note: this class is not referenced by the YAML above. It uses Rearrange, which
    must be imported with `from einops.layers.torch import Rearrange` (einops package).

    Args:
        dim (int): Number of input channels.
        window_size (tuple[int]): The height and width of the window.
        input_resolution (tuple[int]): The height and width of the input feature map.
        num_heads (int): Number of attention heads.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        qk_scale (float | None, optional): Override default qk scale of head_dim ** -0.5 if set
        attn_drop (float, optional): Dropout ratio of attention weight. Default: 0.0
        proj_drop (float, optional): Dropout ratio of output. Default: 0.0
    """

    def __init__(self, dim, window_size, input_resolution, num_heads, qkv_bias=True, qk_scale=None, attn_drop=0.,
                 proj_drop=0.):
        super().__init__()
        self.dim = dim
        self.window_size = window_size  # Wh, Ww
        self.query_size = self.window_size[0]
        self.key_size = self.window_size[0] + 2
        h, w = input_resolution
        self.seq_len = h // self.query_size
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = qk_scale or head_dim ** -0.5
        self.reduction = 32
        self.pre_conv = nn.Conv2d(dim, int(dim // self.reduction), 1)

        # define a parameter table of relative position bias
        self.relative_position_bias_weight = nn.Parameter(
            torch.zeros((2 * self.seq_len - 1) * (2 * self.seq_len - 1), num_heads))  # 2*Wh-1 * 2*Ww-1, nH

        # get pair-wise relative position index for each token inside the window
        coords_h = torch.arange(self.seq_len)
        coords_w = torch.arange(self.seq_len)
        coords = torch.stack(torch.meshgrid([coords_h, coords_w]))  # 2, Wh, Ww
        coords_flatten = torch.flatten(coords, 1)  # 2, Wh*Ww
        relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]  # 2, Wh*Ww, Wh*Ww
        relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # Wh*Ww, Wh*Ww, 2
        relative_coords[:, :, 0] += self.seq_len - 1  # shift to start from 0
        relative_coords[:, :, 1] += self.seq_len - 1
        relative_coords[:, :, 0] *= 2 * self.seq_len - 1
        relative_position_index = relative_coords.sum(-1)  # Wh*Ww, Wh*Ww
        self.register_buffer("relative_position_index", relative_position_index)

        self.queryembedding = Rearrange('b c (h p1) (w p2) -> b (p1 p2 c) h w', p1=self.query_size, p2=self.query_size)
        self.keyembedding = nn.Unfold(kernel_size=(self.key_size, self.key_size), stride=14, padding=1)
        self.query_dim = int(dim // self.reduction) * self.query_size * self.query_size
        self.key_dim = int(dim // self.reduction) * self.key_size * self.key_size
        self.q = nn.Linear(self.query_dim, self.dim, bias=qkv_bias)
        self.kv = nn.Linear(self.key_dim, 2 * self.dim, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)
        # trunc_normal_(self.relative_position_bias_weight, std=.02)
        self.softmax = nn.Softmax(dim=-1)

    def forward(self, x, H, W):
        """
        Args:
            x: input features with shape of (B, N, C)
            H, W: spatial size used to reshape x into (-1, C, H, W)
        """
        B, _, C = x.shape
        x = x.reshape(-1, C, H, W)
        x = self.pre_conv(x)
        query = self.queryembedding(x).view(B, -1, self.query_dim)
        query = self.q(query)
        B, N, C = query.size()
        q = query.reshape(B, N, self.num_heads, C // self.num_heads).permute(0, 2, 1, 3)
        key = self.keyembedding(x).view(B, -1, self.key_dim)
        kv = self.kv(key).reshape(B, N, 2, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
        k = kv[0]
        v = kv[1]

        q = q * self.scale
        attn = (q @ k.transpose(-2, -1))

        relative_position_bias = self.relative_position_bias_weight[self.relative_position_index.view(-1)].view(
            self.seq_len * self.seq_len, self.seq_len * self.seq_len, -1)  # Wh*Ww,Wh*Ww,nH
        relative_position_bias = relative_position_bias.permute(2, 0, 1).contiguous()  # nH, Wh*Ww, Wh*Ww
        attn = attn + relative_position_bias.unsqueeze(0)

        attn = self.softmax(attn)
        attn = self.attn_drop(attn)

        x = (attn @ v).transpose(1, 2).reshape(B, N, C)
        x = self.proj(x)
        x = self.proj_drop(x)
        return x

class WindowAttention(nn.Module):
    """ Window based multi-head self attention (W-MSA) module with relative position bias.
    It supports both of shifted and non-shifted window.

    Args:
        dim (int): Number of input channels.
        window_size (tuple[int]): The height and width of the window.
        num_heads (int): Number of attention heads.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        attn_drop (float, optional): Dropout ratio of attention weight. Default: 0.0
        proj_drop (float, optional): Dropout ratio of output. Default: 0.0
        meta_network_hidden_features (int, optional): Hidden width of the meta network
            that produces the relative position bias. Default: 256
    """

    def __init__(self, dim, window_size, num_heads, qkv_bias=True, attn_drop=0., proj_drop=0.,
                 meta_network_hidden_features=256):
        super().__init__()
        self.dim = dim
        self.window_size = window_size  # [Mh, Mw]
        self.num_heads = num_heads
        head_dim = dim // num_heads
        # self.scale = head_dim ** -0.5

        # define a parameter table of relative position bias
        self.relative_position_bias_weight = nn.Parameter(
            torch.zeros((2 * window_size[0] - 1) * (2 * window_size[1] - 1), num_heads))  # [2*Mh-1 * 2*Mw-1, nH]

        # get pair-wise relative position index for each token inside the window
        # (index-table variant, kept for reference; replaced by the meta network below)
        # coords_h = torch.arange(self.window_size[0])
        # coords_w = torch.arange(self.window_size[1])
        # coords = torch.stack(torch.meshgrid([coords_h, coords_w], indexing="ij"))  # [2, Mh, Mw]
        # coords_flatten = torch.flatten(coords, 1)  # [2, Mh*Mw]
        # # [2, Mh*Mw, 1] - [2, 1, Mh*Mw]
        # relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]  # [2, Mh*Mw, Mh*Mw]
        # relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # [Mh*Mw, Mh*Mw, 2]
        # relative_coords[:, :, 0] += self.window_size[0] - 1  # shift to start from 0
        # relative_coords[:, :, 1] += self.window_size[1] - 1
        # relative_coords[:, :, 0] *= 2 * self.window_size[1] - 1
        # relative_position_index = relative_coords.sum(-1)  # [Mh*Mw, Mh*Mw]
        # self.register_buffer("relative_position_index", relative_position_index)

        # Init meta network for positional encodings
        self.meta_network: nn.Module = nn.Sequential(
            nn.Linear(in_features=2, out_features=meta_network_hidden_features, bias=True),
            nn.ReLU(inplace=True),
            nn.Linear(in_features=meta_network_hidden_features, out_features=num_heads, bias=True))

        self.qkv = nn.Linear(dim, dim * 3, bias=qkv_bias)
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)

        nn.init.trunc_normal_(self.relative_position_bias_weight, std=.02)
        self.softmax = nn.Softmax(dim=-1)

        # Init tau
        self.register_parameter("tau", nn.Parameter(torch.zeros(1, num_heads, 1, 1)))

        # Init pair-wise relative positions (log-spaced)
        indexes = torch.arange(self.window_size[0], device=self.tau.device)
        coordinates = torch.stack(torch.meshgrid([indexes, indexes]), dim=0)
        coordinates = torch.flatten(coordinates, start_dim=1)
        relative_coordinates = coordinates[:, :, None] - coordinates[:, None, :]
        relative_coordinates = relative_coordinates.permute(1, 2, 0).reshape(-1, 2).float()
        relative_coordinates_log = torch.sign(relative_coordinates) \
            * torch.log(1. + relative_coordinates.abs())
        self.register_buffer("relative_coordinates_log", relative_coordinates_log)

    def get_relative_positional_encodings(self):
        """
        Method computes the relative positional encodings
        :return: Relative positional encodings [1, number of heads, window size ** 2, window size ** 2]
        """
        relative_position_bias = self.meta_network(self.relative_coordinates_log)
        relative_position_bias = relative_position_bias.permute(1, 0)
        relative_position_bias = relative_position_bias.reshape(self.num_heads,
                                                                self.window_size[0] * self.window_size[1],
                                                                self.window_size[0] * self.window_size[1])
        return relative_position_bias.unsqueeze(0)

    def forward(self, x, mask=None):
        """
        Args:
            x: input features with shape of (num_windows*B, Mh*Mw, C)
            mask: (0/-inf) mask with shape of (num_windows, Wh*Ww, Wh*Ww) or None
        """
        # [batch_size*num_windows, Mh*Mw, total_embed_dim]
        B_, N, C = x.shape
        # qkv(): -> [batch_size*num_windows, Mh*Mw, 3 * total_embed_dim]
        # reshape: -> [batch_size*num_windows, Mh*Mw, 3, num_heads, embed_dim_per_head]
        # permute: -> [3, batch_size*num_windows, num_heads, Mh*Mw, embed_dim_per_head]
        qkv = self.qkv(x).reshape(B_, N, 3, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
        # [batch_size*num_windows, num_heads, Mh*Mw, embed_dim_per_head]
        q, k, v = qkv.unbind(0)  # make torchscript happy (cannot use tensor as tuple)

        # Scaled cosine attention, cosine(q, k) / tau, in place of the scaled dot product.
        # The author's reading (possibly imprecise): bounding the value range helps keep
        # training stable, since accumulated residual blocks make deep layers hard to train.
        # -> [batch_size*num_windows, num_heads, Mh*Mw, Mh*Mw]
        attn = torch.einsum("bhqd, bhkd -> bhqk", q, k) \
            / torch.maximum(torch.norm(q, dim=-1, keepdim=True)
                            * torch.norm(k, dim=-1, keepdim=True).transpose(-2, -1),
                            torch.tensor(1e-06, device=q.device, dtype=q.dtype))
        attn /= self.tau.clamp(min=0.01)

        # relative position bias from the meta network: [1, nH, Mh*Mw, Mh*Mw]
        attn = attn + self.get_relative_positional_encodings()

        if mask is not None:
            # mask: [nW, Mh*Mw, Mh*Mw]
            nW = mask.shape[0]  # num_windows
            # attn.view: [batch_size, num_windows, num_heads, Mh*Mw, Mh*Mw]
            # mask.unsqueeze: [1, nW, 1, Mh*Mw, Mh*Mw]
            attn = attn.view(B_ // nW, nW, self.num_heads, N, N) + mask.unsqueeze(1).unsqueeze(0)
            attn = attn.view(-1, self.num_heads, N, N)
            attn = self.softmax(attn)
        else:
            attn = self.softmax(attn)

        attn = self.attn_drop(attn)

        # einsum: -> [batch_size*num_windows, num_heads, Mh*Mw, embed_dim_per_head]
        # transpose: -> [batch_size*num_windows, Mh*Mw, num_heads, embed_dim_per_head]
        # reshape: -> [batch_size*num_windows, Mh*Mw, total_embed_dim]
        # The transpose/reshape is required so the caller's view(-1, Mh, Mw, C) does not
        # mix heads with tokens.
        x = torch.einsum("bhal, bhlv -> bhav", attn, v).transpose(1, 2).reshape(B_, N, C)
        # x = self.proj(x)
        # x = self.proj_drop(x)
        return x

class SwinTransformerBlock(nn.Module):
    r""" Swin Transformer Block.

    Args:
        dim (int): Number of input channels.
        num_heads (int): Number of attention heads.
        window_size (int): Window size.
        shift_size (int): Shift size for SW-MSA.
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float, optional): Stochastic depth rate. Default: 0.0
        act_layer (nn.Module, optional): Activation layer. Default: nn.GELU
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
    """

    def __init__(self, dim, num_heads, window_size=7, shift_size=0,
                 mlp_ratio=4., qkv_bias=True, drop=0., attn_drop=0., drop_path=0.,
                 act_layer=nn.GELU, norm_layer=nn.LayerNorm, Global=False):
        super().__init__()
        self.dim = dim
        self.num_heads = num_heads
        self.window_size = window_size
        self.shift_size = shift_size
        self.mlp_ratio = mlp_ratio
        assert 0 <= self.shift_size < self.window_size, "shift_size must in 0-window_size"
        # patches_resolution = [img_size[0] // patch_size[0], img_size[1] // patch_size[1]]

        self.norm1 = norm_layer(dim)
        self.attn = WindowAttention(
            dim, window_size=(self.window_size, self.window_size), num_heads=num_heads, qkv_bias=qkv_bias,
            attn_drop=attn_drop, proj_drop=drop)
        # if Global else Global_WindowAttention(
        #     dim, window_size=(self.window_size, self.window_size),input_resolution=() num_heads=num_heads, qkv_bias=qkv_bias,
        #     attn_drop=attn_drop, proj_drop=drop)

        self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
        self.norm2 = norm_layer(dim)
        mlp_hidden_dim = int(dim * mlp_ratio)
        self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, out_features=dim, act_layer=act_layer,
                       drop=drop)

    def forward(self, x, attn_mask):
        # [B, C, H, W] -> [B, H, W, C]. Keeping H before W matches the mask built in
        # SwinTransformer_Layer.create_mask, which matters for non-square inputs.
        x = x.permute(0, 2, 3, 1).contiguous()
        B, H, W, C = x.shape
        shortcut = x

        # pad feature maps to multiples of window size
        pad_l = pad_t = 0
        pad_r = (self.window_size - W % self.window_size) % self.window_size
        pad_b = (self.window_size - H % self.window_size) % self.window_size
        x = F.pad(x, (0, 0, pad_l, pad_r, pad_t, pad_b))
        _, Hp, Wp, _ = x.shape

        # cyclic shift
        if self.shift_size > 0:
            shifted_x = torch.roll(x, shifts=(-self.shift_size, -self.shift_size), dims=(1, 2))
        else:
            shifted_x = x
            attn_mask = None

        # partition windows
        x_windows = window_partition(shifted_x, self.window_size)  # [nW*B, Mh, Mw, C]
        x_windows = x_windows.view(-1, self.window_size * self.window_size, C)  # [nW*B, Mh*Mw, C]

        # W-MSA/SW-MSA
        attn_windows = self.attn(x_windows, mask=attn_mask)  # [nW*B, Mh*Mw, C]

        # merge windows
        attn_windows = attn_windows.view(-1, self.window_size, self.window_size, C)  # [nW*B, Mh, Mw, C]
        shifted_x = window_reverse(attn_windows, self.window_size, Hp, Wp)  # [B, H', W', C]

        # reverse cyclic shift
        if self.shift_size > 0:
            x = torch.roll(shifted_x, shifts=(self.shift_size, self.shift_size), dims=(1, 2))
        else:
            x = shifted_x

        if pad_r > 0 or pad_b > 0:
            # remove the padding added above
            x = x[:, :H, :W, :].contiguous()

        x = self.norm1(x)  # post-norm 1
        x = shortcut + self.drop_path(x)

        # FFN
        x = x + self.drop_path(self.norm2(self.mlp(x)))  # post-norm 2
        x = x.permute(0, 3, 1, 2).contiguous()  # [B, H, W, C] -> [B, C, H, W]
        return x

class SwinTransformer_Layer(nn.Module):
    """
    A basic Swin Transformer layer for one stage.

    Args:
        dim (int): Number of input channels.
        depth (int): Number of blocks.
        num_heads (int): Number of attention heads.
        last_layer (bool): If True, skip the PatchMerging downsample at the end of the layer. Default: False
        window_size (int): Local window size: 7 or 8
        mlp_ratio (float): Ratio of mlp hidden dim to embedding dim.
        qkv_bias (bool, optional): If True, add a learnable bias to query, key, value. Default: True
        drop (float, optional): Dropout rate. Default: 0.0
        attn_drop (float, optional): Attention dropout rate. Default: 0.0
        drop_path (float | tuple[float], optional): Stochastic depth rate. Default: 0.0
        norm_layer (nn.Module, optional): Normalization layer. Default: nn.LayerNorm
        downsample (nn.Module | None, optional): Downsample layer at the end of the layer. Default: PatchMerging
        use_checkpoint (bool): Whether to use checkpointing to save memory. Default: False.
    """

    def __init__(self, dim, depth, num_heads, last_layer=False, window_size=7,
                 mlp_ratio=4., qkv_bias=True, drop=0., attn_drop=0.,
                 drop_path=0., norm_layer=nn.LayerNorm, downsample=PatchMerging, use_checkpoint=False):
        super().__init__()
        self.dim = dim
        self.depth = depth
        self.last_layer = last_layer
        self.window_size = window_size
        self.use_checkpoint = use_checkpoint
        self.shift_size = window_size // 2

        # build blocks: even-indexed blocks use W-MSA, odd-indexed blocks use SW-MSA
        self.blocks = nn.ModuleList([
            SwinTransformerBlock(
                dim=dim,
                num_heads=num_heads,
                window_size=window_size,
                shift_size=0 if (i % 2 == 0) else self.shift_size,
                mlp_ratio=mlp_ratio,
                qkv_bias=qkv_bias,
                drop=drop,
                attn_drop=attn_drop,
                drop_path=drop_path[i] if isinstance(drop_path, list) else drop_path,
                norm_layer=norm_layer,
                Global=False)
            for i in range(depth)])

        # patch merging layer
        if self.last_layer is False:
            self.downsample = downsample(dim=dim, norm_layer=norm_layer)
        else:
            # the last layer has no PatchMerging
            # self.norm = norm_layer(self.num_features)
            # self.avgpool = nn.AdaptiveAvgPool1d(1)
            self.downsample = None
        self.avgpool = nn.AdaptiveAvgPool1d(1)

    def create_mask(self, x, H, W):
        # calculate attention mask for SW-MSA
        # make sure Hp and Wp are multiples of window_size
        Hp = int(np.ceil(H / self.window_size)) * self.window_size
        Wp = int(np.ceil(W / self.window_size)) * self.window_size
        # same dimension order as the feature map, so window_partition can be reused
        img_mask = torch.zeros((1, Hp, Wp, 1), device=x.device)  # [1, Hp, Wp, 1]
        h_slices = (slice(0, -self.window_size),
                    slice(-self.window_size, -self.shift_size),
                    slice(-self.shift_size, None))
        w_slices = (slice(0, -self.window_size),
                    slice(-self.window_size, -self.shift_size),
                    slice(-self.shift_size, None))
        cnt = 0
        for h in h_slices:
            for w in w_slices:
                img_mask[:, h, w, :] = cnt
                cnt += 1

        mask_windows = window_partition(img_mask, self.window_size)  # [nW, Mh, Mw, 1]
        mask_windows = mask_windows.view(-1, self.window_size * self.window_size)  # [nW, Mh*Mw]
        attn_mask = mask_windows.unsqueeze(1) - mask_windows.unsqueeze(2)  # [nW, 1, Mh*Mw] - [nW, Mh*Mw, 1]
        # [nW, Mh*Mw, Mh*Mw]
        attn_mask = attn_mask.masked_fill(attn_mask != 0, float(-100.0)).masked_fill(attn_mask == 0, float(0.0))
        return attn_mask

    def forward(self, x):
        B, C, H, W = x.size()
        attn_mask = self.create_mask(x, H, W)  # [nW, Mh*Mw, Mh*Mw]
        for blk in self.blocks:
            blk.H, blk.W = H, W
            if not torch.jit.is_scripting() and self.use_checkpoint:
                x = checkpoint.checkpoint(blk, x, attn_mask)
            else:
                x = blk(x, attn_mask)

        if self.downsample is not None:
            x = self.downsample(x, H, W)  # [B, H/2*W/2, 2*C]
            H, W = (H + 1) // 2, (W + 1) // 2
            # tokens -> feature map: [B, H/2*W/2, 2*C] -> [B, 2*C, H/2, W/2]
            # (a plain view(B, -1, H, W) would mix channels with pixels)
            x = x.transpose(1, 2).reshape(B, -1, H, W)
        # without PatchMerging, x is already [B, C, H, W]
        return x
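The padding step in SwinTransformerBlock.forward and the size computation in create_mask both round the feature map up to a multiple of the window size. The minimal sketch below reproduces only that arithmetic in plain Python; the function name `padded_window_grid` is hypothetical and is not part of the listing above.

```python
import math


def padded_window_grid(H, W, window_size):
    """Return (Hp, Wp, num_windows) after padding H and W up to multiples of window_size."""
    # Same expressions as pad_b / pad_r in SwinTransformerBlock.forward.
    pad_b = (window_size - H % window_size) % window_size
    pad_r = (window_size - W % window_size) % window_size
    Hp, Wp = H + pad_b, W + pad_r
    # The ceil-based form used in create_mask gives the same padded size.
    assert Hp == math.ceil(H / window_size) * window_size
    assert Wp == math.ceil(W / window_size) * window_size
    return Hp, Wp, (Hp // window_size) * (Wp // window_size)


print(padded_window_grid(80, 80, 7))  # (84, 84, 144)
print(padded_window_grid(32, 32, 8))  # (32, 32, 16)
```

An 80x80 map with the default window size of 7 is padded to 84x84 and split into 144 windows, while a 32x32 map with window size 8 needs no padding.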

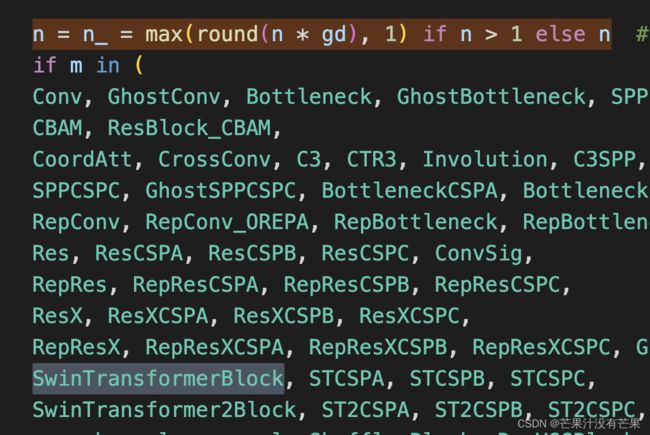

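yolo.py configuration

Next, find the parse_model function in ./models/yolo.py and add the class name to it.

In models/yolo.py, search for n = n_ = max(round(n * gd), 1) if n > 1 else n

Below that line, as shown in the figure, you only need to add SwinTransformerBlock.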
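The line searched for above is where parse_model scales each row's `number` field by depth_multiple (named gd there). As a minimal standalone sketch of that single expression, not of parse_model as a whole (the function name `scaled_repeats` is hypothetical):

```python
def scaled_repeats(n, gd):
    """Mirror of `n = n_ = max(round(n * gd), 1) if n > 1 else n`."""
    return max(round(n * gd), 1) if n > 1 else n


gd = 0.33  # depth_multiple from the YAML above
for n in (1, 3, 6, 9):
    print(n, "->", scaled_repeats(n, gd))
```

Every row in the YAML above uses a `number` of 1, so depth_multiple leaves those rows unchanged; the number of Swin blocks per stage is set through the `depth` entry in args instead.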

Training the yolov5_swin_transfomrer model

python train.py --cfg yolov5_swin_transfomrer.yaml

Further modifying the YAML file above

The SwinTransformer modules configured in yolov5_swin_transfomrer.yaml can be customized further for different datasets; the principle stays the same.
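When changing the SwinTransformer_Layer arguments, one constraint that follows from the code above is that `dim` must be divisible by `num_heads`, because WindowAttention reshapes qkv using C // num_heads. A minimal sketch of a pre-flight check, assuming the args order of the constructor ([dim, depth, num_heads, last_layer, window_size]); the helper name `check_swin_args` is hypothetical:

```python
def check_swin_args(args, default_window_size=7):
    """Return a list of problems found in one SwinTransformer_Layer args list.

    args follows the constructor order:
    [dim, depth, num_heads, last_layer (optional), window_size (optional)].
    """
    dim, depth, num_heads = args[0], args[1], args[2]
    window_size = args[4] if len(args) > 4 else default_window_size
    problems = []
    if dim % num_heads != 0:
        # qkv is reshaped with C // num_heads, so dim must divide evenly.
        problems.append(f"dim={dim} is not divisible by num_heads={num_heads}")
    if depth < 1:
        problems.append(f"depth={depth} must be at least 1")
    if window_size < 1:
        problems.append(f"window_size={window_size} must be at least 1")
    return problems


# The SwinTransformer_Layer args used in the YAML above.
swin_rows = [
    [64, 2, 2], [128, 2, 4], [256, 6, 8, True], [256, 6, 8],
    [512, 2, 16, True], [512, 2, 8, True, 4], [256, 2, 2, True, 8],
    [512, 2, 2, True, 8],
]
for row in swin_rows:
    print(row, check_swin_args(row) or "ok")
```

The check covers only argument consistency; channel counts between consecutive rows still need to be verified against the layers they read from.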

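Theory

For the theory, see: "The Successor to Swin Transformer: The Revolution of Local Vision Transformers" (Swin Transformer的继任者:Local Vision Transformer的革命).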
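As a compact summary of what the WindowAttention code above computes (read directly off that code rather than taken from the referenced article), the attention logit between query token $i$ and key token $j$ in one head can be written as below. Here $\tau$ is the learnable per-head `tau`, $G$ stands for the two-layer `meta_network`, and $(\Delta x, \Delta y)$ is the relative offset between the two tokens inside the window; the symbol names are chosen for this sketch.

```latex
\mathrm{logit}_{ij}
  = \frac{q_i \cdot k_j}{\max\bigl(\lVert q_i \rVert \, \lVert k_j \rVert,\; 10^{-6}\bigr)}
    \cdot \frac{1}{\max(\tau,\; 0.01)}
  + B_{ij},
\qquad
B_{ij} = G\bigl(\widehat{\Delta x}_{ij},\; \widehat{\Delta y}_{ij}\bigr),
\qquad
\widehat{\Delta x} = \operatorname{sign}(\Delta x)\,\log\bigl(1 + \lvert \Delta x \rvert\bigr)
```

The shifted-window mask, when present, is added to these logits before the softmax over $j$.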