机器学习Sklearn总结2——分类算法

目录

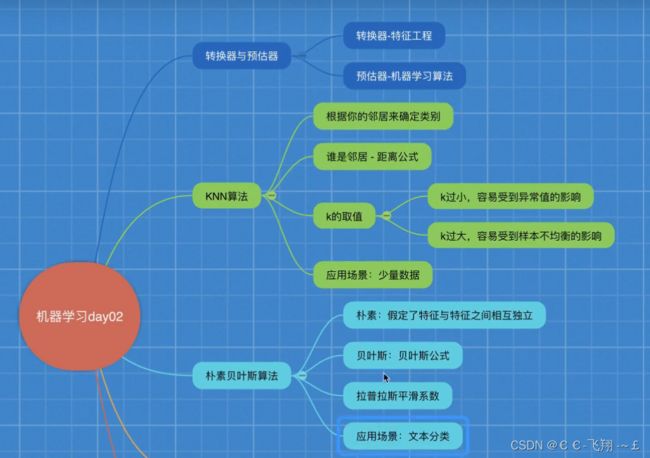

一、转换器与估计器

二、分类算法

K-近邻算法

案例代码:

模型选择与调优

案例代码:

朴素贝叶斯算法:

朴素贝叶斯算法总结

案例代码:

决策树总结:

案例代码:

使用随机森林来实现:

随机森林总结

总结

本次案例的代码集:

一、转换器与估计器

二、分类算法

K-近邻算法

KNN算法总结:

优点:

简单、易于理解、易于实现、无需训练

缺点:

1)必须指定K值,K值选定不当则分类精度不能保证。

2)懒惰算法,对测试样本分类时的计算量大,内存开销大

使用场景:

小数据场景,几千~几万条样本,具体使用看业务场景。

案例代码:

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

def knn_iris():

"""

用KNN算法对iris数据进行分类

:return:

"""

# 1)获取数据

iris = load_iris()

# 2)划分数据集

x_train, x_test, y_train, y_test = train_test_split(iris.data, iris.target, random_state=6

)

# 3) 特征工程:标准化

transfer = StandardScaler()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 4) KNN算法预估器

estimator = KNeighborsClassifier(n_neighbors=3)

estimator.fit(x_train, y_train)

# 5) 模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2: 计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

return None

if __name__ == '__main__':

# 代码1:用KNN算法对iris数据进行分类

knn_iris()

模型选择与调优

案例代码

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

from sklearn.model_selection import GridSearchCV

def knn_iris_gscv():

"""

用KNN算法对iris数据进行分类,添加网格搜索和交叉验证

:return:

"""

# 1)获取数据

iris = load_iris()

# 2)划分数据集

x_train, x_test, y_train, y_test = train_test_split(iris.data, iris.target, random_state=6

)

# 3) 特征工程:标准化

transfer = StandardScaler()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 4) KNN算法预估器

estimator = KNeighborsClassifier()

# 加入网格搜索和交叉验证

# 参数准备

param_dict = {"n_neighbors": [1, 3, 5, 7, 9, 11]}

estimator = GridSearchCV(estimator, param_grid=param_dict, cv=10)

estimator.fit(x_train, y_train)

# 5) 模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2: 计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

# 最佳参数结果:best_param_

print("最佳参数:\n", estimator.best_params_)

# 最佳结果:best_score_

print("最佳结果:\n", estimator.best_score_)

# 最佳估计器:best_estimator_

print("最佳估计器:\n", estimator.best_estimator_)

# 交叉验证结果: cv_results_

print("交叉验证结果:\n", estimator.cv_results_)

return None

if __name__ == '__main__':

# 代码2: 用KNN算法对iris数据进行分类,添加网格搜索和交叉验证

knn_iris_gscv()facebook数据挖掘案例:

案例代码:

import pandas as pd

from sklearn.model_selection import train_test_split, GridSearchCV

from sklearn.neighbors import KNeighborsClassifier

from sklearn.preprocessing import StandardScaler

def predict_data():

"""

数据预处理

:return:

"""

# 1)读取数据

data = pd.read_csv("./train.csv")

# 2)基本数据处理

# 缩小范围

data = data.query("x<2.5 & x>2 & y<1.5 & y>1.0")

# 处理时间特征

time_value = pd.to_datatime(data["time"], unit="s")

date = pd.DatetimeIndex(time_value)

data.loc[:, "day"] = date.day

data.loc[:, "weekday"] = date.weekday

data["hour"] = data.hour

# 3)过滤签到次数少的地点

data.groupby("place_id").count()

place_count = data.groupby("place_id").count()["row_id"]

data_final = data[data['place_id'].isin(place_count[place_count > 3].index.vlaues)]

# 筛选特征值和目标值

x = data_final[["x", "y", "accuracy", "day", "weekday", "hour"]]

y = data_final["place_id"]

# 数据集划分

# 机器学习

x_train, x_test, y_train, y_test = train_test_split(x, y)

# 3) 特征工程:标准化

transfer = StandardScaler()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 4) KNN算法预估器

estimator = KNeighborsClassifier()

# 加入网格搜索和交叉验证

# 参数准备

param_dict = {"n_neighbors": [1, 3, 5, 7, 9, 11]}

estimator = GridSearchCV(estimator, param_grid=param_dict, cv=3)

estimator.fit(x_train, y_train)

# 5) 模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2: 计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

# 最佳参数结果:best_param_

print("最佳参数:\n", estimator.best_params_)

# 最佳结果:best_score_

print("最佳结果:\n", estimator.best_score_)

# 最佳估计器:best_estimator_

print("最佳估计器:\n", estimator.best_estimator_)

# 交叉验证结果: cv_results_

print("交叉验证结果:\n", estimator.cv_results_)

return None

if __name__ == '__main__':

predict_data()

朴素贝叶斯算法:

案例代码

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

from sklearn.model_selection import GridSearchCV

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.naive_bayes import MultinomialNB

def nb_news():

"""

用朴素贝叶斯算法对新闻进行分类

:return:

"""

# 1)获取数据

news = fetch_20newsgroups(subset="all")

# 2)划分数据集

x_train, x_test, y_train, y_test = train_test_split(news.data, news.target)

# 3)特征工程文本特征抽取-tfidf

transfer = TfidfVectorizer()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 4)朴素贝叶斯算法预估器流程

estimator = MultinomialNB()

estimator.fit(x_train, y_train)

# 5)模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2:计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

return None

if __name__ == '__main__':

# 代码3:用朴素贝叶斯算法对新闻进行分类

nb_news()朴素贝叶斯算法总结

优点:

对缺失数据不太敏感,算法比较简单,常用于文本分类。

分类准确度高,速度快。

缺点:

由于使用样本独立的假设,所以如果特征之间关联,预测效果不明显。

决策树

案例代码:

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

from sklearn.model_selection import GridSearchCV

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.naive_bayes import MultinomialNB

from sklearn.tree import DecisionTreeClassifier, export_graphviz

def decision_iris():

"""

用决策树对iris数据进行分类

:return:

"""

# 1)获取数据集

iris = load_iris()

# 2)划分数据集

x_train, x_test, y_train, y_test = train_test_split(iris.data, iris.target, random_state=22)

# 3)决策树预估器

estimator = DecisionTreeClassifier(criterion="entropy")

estimator.fit(x_train, y_train)

# 4)模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2: 计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

# 可视化决策树

export_graphviz(estimator, out_file="iris_tree.dot", feature_names=iris.feature_names)

return None

if __name__ == '__main__':

# 代码4:用决策树对iris数据进行分类

decision_iris()

决策树支持可视化:

.dot文件转换为可视化图像的网页:Graphviz Online

决策树总结:

优点:

可视化——解释性强

缺点:

容易产生过拟合,这时候使用随机森林效果会好些

决策树的实验项目——titanic数据的案例

案例代码:

import pandas as pd

from sklearn.feature_extraction import DictVectorizer

from sklearn.model_selection import train_test_split

from sklearn.tree import DecisionTreeClassifier, export_graphviz

def decision_titanic():

# 1、获取数据

titanic = pd.read_csv("./titanic.csv")

print(titanic)

# 筛选特征值和目标值

x = titanic[["pclass", "age", "sex"]]

y = titanic["survived"]

# 2、数据处理

# 1)缺失值处理

x['age'].fillna(x["age"].mean(), inplace=True)

# 2)转换成字典

x = x.to_dict(orient="records")

# 3、数据集划分

x_train, x_test, y_train, y_test = train_test_split(x, y, random_state=22)

transfer = DictVectorizer()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 3)决策树预估器

estimator = DecisionTreeClassifier(criterion="entropy", max_depth=8)

estimator.fit(x_train, y_train)

# 4)模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2:计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

# 可视化决策树

export_graphviz(estimator, out_file="titanic_tree.dot", feature_names=transfer.get_feature_names())

if __name__ == '__main__':

decision_titanic()

使用随机森林来实现:

import pandas as pd

from sklearn.feature_extraction import DictVectorizer

from sklearn.model_selection import train_test_split

from sklearn.tree import DecisionTreeClassifier, export_graphviz

from sklearn.ensemble import RandomForestClassifier

from sklearn.model_selection import GridSearchCV

def decision_titanic():

# 1、获取数据

titanic = pd.read_csv("./titanic.csv")

print(titanic)

# 筛选特征值和目标值

x = titanic[["pclass", "age", "sex"]]

y = titanic["survived"]

# 2、数据处理

# 1)缺失值处理

x['age'].fillna(x["age"].mean(), inplace=True)

# 2)转换成字典

x = x.to_dict(orient="records")

# 3、数据集划分

x_train, x_test, y_train, y_test = train_test_split(x, y, random_state=22)

transfer = DictVectorizer()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 3)随机森林预估器

estimator = RandomForestClassifier()

# 加入网格搜索与交叉验证

# 参数准备

param_dict = {"n_estimators": [120, 200, 300, 500, 800, 1200],

"max_depth": [5, 8, 15, 25, 30]}

estimator = GridSearchCV(estimator, param_grid=param_dict, cv=3)

estimator.fit(x_train, y_train)

# 4)模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2:计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

# 可视化决策树

export_graphviz(estimator, out_file="titanic_tree.dot", feature_names=transfer.get_feature_names())

if __name__ == '__main__':

decision_titanic()

随机森林总结

优点:

能够有效的运行在大数据集上

处理具有高维特征的输入样本,而且不需要降维。

总结

本次案例的代码集:

from sklearn.datasets import load_iris

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

from sklearn.model_selection import GridSearchCV

from sklearn.datasets import fetch_20newsgroups

from sklearn.feature_extraction.text import TfidfVectorizer

from sklearn.naive_bayes import MultinomialNB

from sklearn.tree import DecisionTreeClassifier, export_graphviz

def knn_iris():

"""

用KNN算法对iris数据进行分类

:return:

"""

# 1)获取数据

iris = load_iris()

# 2)划分数据集

x_train, x_test, y_train, y_test = train_test_split(iris.data, iris.target, random_state=6

)

# 3) 特征工程:标准化

transfer = StandardScaler()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 4) KNN算法预估器

estimator = KNeighborsClassifier(n_neighbors=3)

estimator.fit(x_train, y_train)

# 5) 模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2: 计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

return None

def knn_iris_gscv():

"""

用KNN算法对iris数据进行分类,添加网格搜索和交叉验证

:return:

"""

# 1)获取数据

iris = load_iris()

# 2)划分数据集

x_train, x_test, y_train, y_test = train_test_split(iris.data, iris.target, random_state=6

)

# 3) 特征工程:标准化

transfer = StandardScaler()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 4) KNN算法预估器

estimator = KNeighborsClassifier()

# 加入网格搜索和交叉验证

# 参数准备

param_dict = {"n_neighbors": [1, 3, 5, 7, 9, 11]}

estimator = GridSearchCV(estimator, param_grid=param_dict, cv=10)

estimator.fit(x_train, y_train)

# 5) 模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2: 计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

# 最佳参数结果:best_param_

print("最佳参数:\n", estimator.best_params_)

# 最佳结果:best_score_

print("最佳结果:\n", estimator.best_score_)

# 最佳估计器:best_estimator_

print("最佳估计器:\n", estimator.best_estimator_)

# 交叉验证结果: cv_results_

print("交叉验证结果:\n", estimator.cv_results_)

return None

def nb_news():

"""

用朴素贝叶斯算法对新闻进行分类

:return:

"""

# 1)获取数据

news = fetch_20newsgroups(subset="all")

# 2)划分数据集

x_train, x_test, y_train, y_test = train_test_split(news.data, news.target)

# 3)特征工程文本特征抽取-tfidf

transfer = TfidfVectorizer()

x_train = transfer.fit_transform(x_train)

x_test = transfer.transform(x_test)

# 4)朴素贝叶斯算法预估器流程

estimator = MultinomialNB()

estimator.fit(x_train, y_train)

# 5)模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2:计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

return None

def decision_iris():

"""

用决策树对iris数据进行分类

:return:

"""

# 1)获取数据集

iris = load_iris()

# 2)划分数据集

x_train, x_test, y_train, y_test = train_test_split(iris.data, iris.target, random_state=22)

# 3)决策树预估器

estimator = DecisionTreeClassifier(criterion="entropy")

estimator.fit(x_train, y_train)

# 4)模型评估

# 方法1:直接比对真实值和预测值

y_predict = estimator.predict(x_test)

print("y_predict:\n", y_predict)

print("直接比对真实值和预测值:\n", y_test == y_predict)

# 方法2: 计算准确率

score = estimator.score(x_test, y_test)

print("准确率为:\n", score)

# 可视化决策树

export_graphviz(estimator, out_file="iris_tree.dot", feature_names=iris.feature_names)

return None

if __name__ == '__main__':

# 代码1:用KNN算法对iris数据进行分类

# knn_iris()

# 代码2: 用KNN算法对iris数据进行分类,添加网格搜索和交叉验证

# knn_iris_gscv()

# 代码3:用朴素贝叶斯算法对新闻进行分类

# nb_news()

# 代码4:用决策树对iris数据进行分类

decision_iris()