k8s Cluster Setup + KubeSphere (2021) PS: pitfall-free, just copy and paste


k8s cluster setup steps (2021)

------- Tools: Vagrant + VirtualBox + Xshell

Host: Win10, VMs: CentOS 7

- Create the VMs with a Vagrantfile

1. Create a folder named k8s, open a command prompt in that directory, and run

vagrant init

2. Open the Vagrantfile generated in the current directory and replace its contents with the following

Vagrant.configure("2") do |config|
  (1..3).each do |i|
    config.vm.define "k8s-node#{i}" do |node|
      # Box used by the VM
      node.vm.box = "centos/7"
      # Hostname of the VM
      node.vm.hostname = "k8s-node#{i}"
      # IP of the VM on the private network
      node.vm.network "private_network", ip: "192.168.56.#{99+i}", netmask: "255.255.255.0"
      # Shared folder between host and VM
      # node.vm.synced_folder "~/Documents/vagrant/share", "/home/vagrant/share"
      # VirtualBox-specific settings
      node.vm.provider "virtualbox" do |v|
        # Name of the VM
        v.name = "k8s-node#{i}"
        # Memory size in MB
        v.memory = 4096
        # Number of CPUs
        v.cpus = 4
      end
    end
  end
end

3. Then run

vagrant up

Wait for the VMs to be created, as shown below

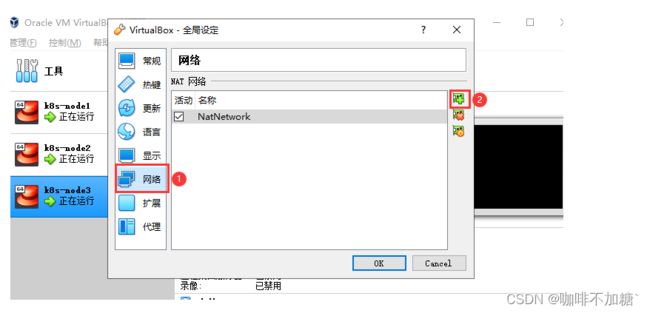
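The Vagrantfile gives node i the hostname k8s-node{i} and the private IP 192.168.56.(99+i). Below is a minimal bash sketch that prints the resulting name/IP plan, which is handy when setting up the Xshell sessions; the function name `print_node_plan` is hypothetical and only mirrors the `(1..3)` loop and the `99+i` arithmetic in the file.

```bash
#!/usr/bin/env bash
# Hypothetical helper: mirrors the (1..3) loop and the "192.168.56.#{99+i}"
# expression from the Vagrantfile, printing one "hostname ip" pair per node.
print_node_plan() {
  local count="${1:-3}"   # number of nodes; 3 as in the Vagrantfile
  local i
  for ((i = 1; i <= count; i++)); do
    printf 'k8s-node%d 192.168.56.%d\n' "$i" "$((99 + i))"
  done
}

print_node_plan
```

With the default of three nodes this yields 192.168.56.100 to 192.168.56.102; if you change the loop range in the Vagrantfile, pass the new node count as the first argument.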

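- Configure the VMs to use a NAT network

1. Shut down all VMs and open VirtualBox's global settings

2. Create a new NAT network

3. Apply it to each VM in turn, attaching each VM's network adapter to the new NAT network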
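If you prefer the command line to the VirtualBox GUI, the same NAT network setup can be sketched with `VBoxManage`. This is a dry-run sketch under stated assumptions. The network name `k8s-nat` and the range `10.0.2.0/24` are hypothetical, chosen to match the 10.0.2.x addresses used later in /etc/hosts. The adapter index `NIC` (default 1) is also an assumption, because the original screenshots are not available; note that Vagrant itself expects adapter 1 to be a plain NAT adapter for `vagrant ssh`. The script only prints the commands so you can review them first.

```bash
#!/usr/bin/env bash
# Dry run: print (do not execute) the VBoxManage commands that create a NAT
# network and attach each VM's adapter to it.
# Assumptions, not from the original post: network name, CIDR, adapter index.
NET_NAME="${NET_NAME:-k8s-nat}"
NET_CIDR="${NET_CIDR:-10.0.2.0/24}"
NIC="${NIC:-1}"

print_natnet_commands() {
  # Create the NAT network with DHCP so every VM gets an address in NET_CIDR
  echo "VBoxManage natnetwork add --netname $NET_NAME --network $NET_CIDR --enable --dhcp on"
  local vm
  # VM names match v.name in the Vagrantfile
  for vm in k8s-node1 k8s-node2 k8s-node3; do
    echo "VBoxManage modifyvm $vm --nic$NIC natnetwork --nat-network$NIC $NET_NAME"
  done
}

print_natnet_commands
```

After checking the output, and with all three VMs powered off, you could run `print_natnet_commands | sh` to apply it.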

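*Connect to the three VMs with Xshell. Every step below must be run on all three VMs. If Xshell cannot connect with the public key, see the solution in the author's Linux pitfalls post @_@

Once connected, right-click a blank area and send input to all sessions so you can operate the three VMs at once. The three VMs may not stay in sync, however, so after every step confirm that it succeeded on all three.

I * Disable the firewall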

systemctl stop firewalld

systemctl disable firewalld

II * Disable SELinux

sed -i 's/enforcing/disabled/' /etc/selinux/config

setenforce 0
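To confirm that step II took effect, a small bash check can read the `SELINUX=` line from the config file. This is a minimal sketch. The function name `selinux_mode` is hypothetical. The file path is a parameter that defaults to /etc/selinux/config, so you can try it on a copy first. Note that the config file only takes effect after a reboot; `setenforce 0` switches the running system to permissive mode, and `getenforce` should then print `Permissive`.

```bash
#!/usr/bin/env bash
# Print the SELinux mode configured for the next boot (e.g. "disabled").
# Hypothetical helper; pass another file to check a copy instead.
selinux_mode() {
  local conf="${1:-/etc/selinux/config}"
  # Only the uncommented SELINUX= line counts; SELINUXTYPE= is a different key
  sed -n 's/^SELINUX=//p' "$conf"
}

# Demo on a temporary file shaped like the CentOS 7 default, after the sed from step II
tmp="$(mktemp)"
printf '# SELINUX= can take one of these three values:\n#     enforcing - SELinux security policy is enforced.\nSELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$tmp"
sed -i 's/enforcing/disabled/' "$tmp"
selinux_mode "$tmp"   # prints: disabled
rm -f "$tmp"
```

On the VMs themselves, `selinux_mode` with no argument should print `disabled`.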

III * Disable the swap partition

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab

echo "vm.swappiness = 0" >> /etc/sysctl.conf

swapoff -a && swapon -a

sysctl -p

- Verify: the Swap row must be all 0

free -g
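By default, kubelet refuses to start while swap is on, which is why step III insists on 0. The bash sketch below is a minimal, hypothetical check. It counts active swap devices from /proc/swaps, whose first line is a header. It also counts any fstab swap entries that the `sed` above failed to comment out. Both paths are parameters so the logic can be tried on sample files.

```bash
#!/usr/bin/env bash
# Hypothetical pre-flight check for step III.
# Returns 0 when no swap is active and no uncommented swap line remains in fstab.
swap_is_off() {
  local swaps="${1:-/proc/swaps}" fstab="${2:-/etc/fstab}"
  local active entries
  # /proc/swaps has one header line; every further line is an active swap device
  active=$(( $(wc -l < "$swaps") - 1 ))
  # Uncommented fstab lines whose filesystem type (3rd field) is "swap"
  entries=$(awk '$1 !~ /^#/ && $3 == "swap"' "$fstab" | wc -l)
  echo "active swap devices: $active, fstab swap entries: $entries"
  [ "$active" -eq 0 ] && [ "$entries" -eq 0 ]
}

# Demo on sample files: a CentOS-style fstab before and after the sed from step III
tmpdir="$(mktemp -d)"
printf 'Filename\t\t\t\tType\t\tSize\tUsed\tPriority\n' > "$tmpdir/swaps"
printf '/dev/mapper/centos-root /    xfs  defaults 0 0\n/dev/mapper/centos-swap swap swap defaults 0 0\n' > "$tmpdir/fstab"
swap_is_off "$tmpdir/swaps" "$tmpdir/fstab" || echo "swap still configured"
sed -ri 's/.*swap.*/#&/' "$tmpdir/fstab"
swap_is_off "$tmpdir/swaps" "$tmpdir/fstab" && echo "swap is off"
rm -rf "$tmpdir"
```

On a VM, calling `swap_is_off` with no arguments checks the real /proc/swaps and /etc/fstab.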

IV * Map hostnames to IP addresses:

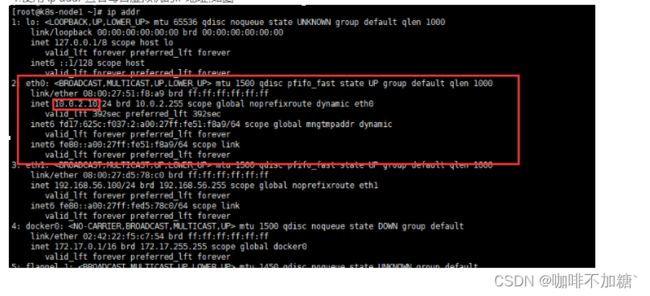

1. Use ip addr to check the IP address of each VM, as shown in the figure

2. Edit the hosts file with the following command

vi /etc/hosts

3. Add the address of every VM from the figure above to the file

10.0.2.10 k8s-node1

10.0.2.15 k8s-node2

10.0.2.11 k8s-node3

PS: Use the IP addresses of your own VMs.
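Editing /etc/hosts by hand on three machines can easily leave the files out of sync. Below is a minimal bash sketch that appends each IP/name pair only when the hostname is not already present, so it is safe to rerun on every node. The function name `add_host_entry` is hypothetical. The addresses are this step's example ones and must be replaced with your own. The optional third argument is the hosts file path, so the script can be tried on a copy first.

```bash
#!/usr/bin/env bash
# Hypothetical helper: append "IP hostname" to a hosts file unless that
# hostname already appears as a whole word on some line.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # -w: whole-word match, so k8s-node1 does not match k8s-node10; -F: literal text
  if grep -qwF -- "$name" "$file"; then
    echo "skip: $name already present in $file"
  else
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# Demo on a temporary file; the second pass shows that reruns add nothing.
hosts="$(mktemp)"
for pass in 1 2; do
  add_host_entry 10.0.2.10 k8s-node1 "$hosts"
  add_host_entry 10.0.2.15 k8s-node2 "$hosts"
  add_host_entry 10.0.2.11 k8s-node3 "$hosts"
done
cat "$hosts"
rm -f "$hosts"
```

To apply it for real, run it as root and omit the third argument.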

V * Pass bridged IPv4 traffic to the iptables chains:

1. Run the following command

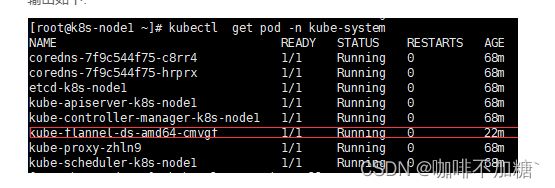

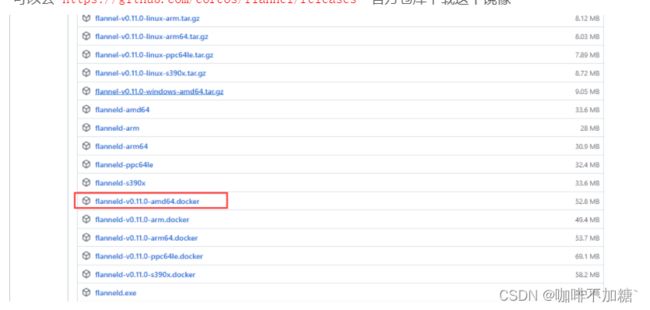

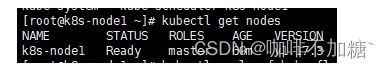

cat > /etc/sysctl.d/k8s.conf < net.bridge.bridge-nf-call-ip6tables = 1 2.应用以上规则 六 * 安装dokcer 1.更新工具 2.下载新的CentOS-Base.repo由于文件相同可能会替换或者覆盖,所以最好把源文件做一下备份: mv /etc/yum.repos.d/CentOS-Base.repo /etc/yum.repos.d/CentOS-Base.repo.backup 3.备份好了之后,执行以下命令: wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo 4.再执行生成缓存命令: yum makecache 5.docker下载镜像加速 yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo 6.下载docker,版本必须小于19.03 yum -y install docker-ce-19.03.0 docker-ce-cli-19.03.0 containerd.io systemctl enable docker 七 * 安装三件套 3.设置开机启动 1.在Master节点上,创建并执行master_images.sh images=( for imageName in i m a g e s [ @ ] ; d o d o c k e r p u l l r e g i s t r y . c n − h a n g z h o u . a l i y u n c s . c o m / g o o g l e c o n t a i n e r s / {images[@]} ; do docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/ images[@];dodockerpullregistry.cn−hangzhou.aliyuncs.com/googlecontainers/imageName done 2.将 master_images.sh 设置为可执行 4.初始化kubeadm 6.执行成功会显示以下内容 Your Kubernetes control-plane has initialized successfully! To start using your cluster, you need to run the following as a regular user: mkdir -p $HOME/.kube You should now deploy a pod network to the cluster. Then you can join any number of worker nodes by running the following on each as root: kubeadm join 10.0.2.15:6443 --token sg47f3.4asffoi6ijb8ljhq 7.然后依次执行红色部分命令 mkdir -p $HOME/.kube 绿色部分为子节点加入命令保存起来,后面会用 1.创建kube-flannel.yml文件 vi kube-flannel.yml apiVersion: policy/v1beta1 runAsUser: allowPrivilegeEscalation: false allowedCapabilities: [‘NET_ADMIN’] hostPID: false seLinux: kind: ClusterRole kind: ClusterRoleBinding apiVersion: apps/v1 apiVersion: apps/v1 apiVersion: apps/v1 apiVersion: apps/v1 apiVersion: apps/v1 2.执行安装命令 kubectl apply -f kube-flannel.yml 3.查看pod状态 kubectl get pod -n kube-system 输出如下: 巨坑!!! 
:如果红色框中的状态不是running, 可以去 https://github.com/coreos/flannel/releases 官方仓库下载这个镜像 然后运行下面命令 docker load < flanneld-v0.11.0-amd64.docker 等待一两分钟,问题解决,此操作需要在所有节点上执行,所有节点flanneld都必须运行 4.查看主节点运行状态 kubectl get nodes 状态为ready,表示成功,此时主节点配置完成 *最后步骤 1.子节点只需运行上文中绿色部分子节点加入命令即可,当然是你自己的. kubeadm join ********* --token sg47f3.4asffoi6ijb8ljhq kubectl get nodes 3.此时其他节点为notReady,我们可以打开监控页面,监控其他节点进度,执行: watch kubectl get pod -n kube-system -o wide 然后等待所有节点部署完成,都是runnig状态 4.进入master节点再次查看所有节点状态: kubectl get nodes ** 整合图形化界面 KubeSphere ** *安装包管理工具helm 使用命令下载helm压缩包 wget https://get.helm.sh/helm-v2.16.2-linux-amd64.tar.gz 然后解压 tar -zxvf helm-v2.16.2-linux-amd64.tar.gz 设置文件执行路径,将解压后的helm移动到 /usr/local/bin/目录 mv linux-amd64/helm /usr/local/bin/helm 3.创建权限 apiVersion: rbac.authorization.k8s.io/v1 *初始化helm 1.helm init --service-account=tiller --tiller-image=sapcc/tiller:v2.16.2 --history-max 300 执行以下命令 touch /root/.helm/repository/repositories.yaml 如果下载tiller失败,则需要手动去docker下载 kubectl edit deployment tiller-deploy -n kube-system 2.添加常用仓库 helm repo add stable https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts helm repo add aliyun https://apphub.aliyuncs.com/ helm repo add bitnami https://charts.bitnami.com/bitnami/

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system

yum install -y yum-utils

yum install -y wget

7.设置docker开机启动并启动docker

service docker start

1.键入以下命令

cat < /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

2.安装

yum install -y kubelet-1.17.3 kubeadm-1.17.3 kubectl-1.17.3

如果报错需要ssh认证,那么使用下面这条命令

yum install -y --nogpgcheck kubelet kubeadm kubectl

systemctl enable kubelet && systemctl start kubelet

vi master_images.sh

内容如下

#!/bin/bash

kube-apiserver:v1.17.3

kube-proxy:v1.17.3

kube-controller-manager:v1.17.3

kube-scheduler:v1.17.3

coredns:1.6.5

etcd:3.4.3-0

pause:3.1

)docker tag registry.cn-hangzhou.aliyuncs.com/google_containers/ i m a g e N a m e k 8 s . g c r . i o / imageName k8s.gcr.io/ imageNamek8s.gcr.io/imageName

chmod -R 777 master_images.sh

3.执行文件下载镜像

sh master_images.sh

执行以下命令:

kubeadm init

–apiserver-advertise-address=10.0.2.15

–image-repository registry.cn-hangzhou.aliyuncs.com/google_containers

–kubernetes-version v1.17.3

–service-cidr=10.96.0.0/16

–pod-network-cidr=10.244.0.0/16

PS: apiserver-advertise-address 为你当前master节点的IP

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown ( i d − u ) : (id -u): (id−u):(id -g) $HOME/.kube/config

Run “kubectl apply -f [podnetwork].yaml” with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

–discovery-token-ca-cert-hash sha256:81fccdd29970cbc1b7dc7f171ac0234d53825bdf9b05428fc9e6767436991bfb

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown ( i d − u ) : (id -u): (id−u):(id -g) $HOME/.kube/config

内容如下:

kind: PodSecurityPolicy

metadata:

name: psp.flannel.unprivileged

annotations:

seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default

seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default

apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default

apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default

spec:

privileged: false

volumes:

allowedHostPaths:

readOnlyRootFilesystem: falseUsers and groups

rule: RunAsAny

supplementalGroups:

rule: RunAsAny

fsGroup:

rule: RunAsAnyPrivilege Escalation

defaultAllowPrivilegeEscalation: falseCapabilities

defaultAddCapabilities: []

requiredDropCapabilities: []Host namespaces

hostIPC: false

hostNetwork: true

hostPorts:

max: 65535SELinux

SELinux is unused in CaaSP

rule: ‘RunAsAny’

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: flannel

rules:

resources: [‘podsecuritypolicies’]

verbs: [‘use’]

resourceNames: [‘psp.flannel.unprivileged’]

resources:

verbs:

resources:

verbs:

resources:

verbs:

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: flannel

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: flannel

subjects:

name: flannel

namespace: kube-system

apiVersion: v1

kind: ServiceAccount

metadata:

name: flannel

namespace: kube-systemkind: ConfigMap

apiVersion: v1

metadata:

name: kube-flannel-cfg

namespace: kube-system

labels:

tier: node

app: flannel

data:

cni-conf.json: |

{

“name”: “cbr0”,

“cniVersion”: “0.3.1”,

“plugins”: [

{

“type”: “flannel”,

“delegate”: {

“hairpinMode”: true,

“isDefaultGateway”: true

}

},

{

“type”: “portmap”,

“capabilities”: {

“portMappings”: true

}

}

]

}

net-conf.json: |

{

“Network”: “10.244.0.0/16”,

“Backend”: {

“Type”: “vxlan”

}

}

kind: DaemonSet

metadata:

name: kube-flannel-ds-amd64

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

operator: In

values:

operator: In

values:

hostNetwork: true

tolerations:

effect: NoSchedule

serviceAccountName: flannel

initContainers:

image: quay.io/coreos/flannel:v0.11.0-amd64

command:

args:

volumeMounts:

mountPath: /etc/cni/net.d

mountPath: /etc/kube-flannel/

containers:

image: quay.io/coreos/flannel:v0.11.0-amd64

command:

args:

resources:

requests:

cpu: “100m”

memory: “50Mi”

limits:

cpu: “100m”

memory: “50Mi”

securityContext:

privileged: false

capabilities:

add: [“NET_ADMIN”]

env:

valueFrom:

fieldRef:

fieldPath: metadata.name

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

mountPath: /run/flannel

mountPath: /etc/kube-flannel/

volumes:

hostPath:

path: /run/flannel

hostPath:

path: /etc/cni/net.d

configMap:

name: kube-flannel-cfg

kind: DaemonSet

metadata:

name: kube-flannel-ds-arm64

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

operator: In

values:

operator: In

values:

hostNetwork: true

tolerations:

effect: NoSchedule

serviceAccountName: flannel

initContainers:

image: quay.io/coreos/flannel:v0.11.0-arm64

command:

args:

volumeMounts:

mountPath: /etc/cni/net.d

mountPath: /etc/kube-flannel/

containers:

image: quay.io/coreos/flannel:v0.11.0-arm64

command:

args:

resources:

requests:

cpu: “100m”

memory: “50Mi”

limits:

cpu: “100m”

memory: “50Mi”

securityContext:

privileged: false

capabilities:

add: [“NET_ADMIN”]

env:

valueFrom:

fieldRef:

fieldPath: metadata.name

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

mountPath: /run/flannel

mountPath: /etc/kube-flannel/

volumes:

hostPath:

path: /run/flannel

hostPath:

path: /etc/cni/net.d

configMap:

name: kube-flannel-cfg

kind: DaemonSet

metadata:

name: kube-flannel-ds-arm

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

operator: In

values:

operator: In

values:

hostNetwork: true

tolerations:

effect: NoSchedule

serviceAccountName: flannel

initContainers:

image: quay.io/coreos/flannel:v0.11.0-arm

command:

args:

volumeMounts:

mountPath: /etc/cni/net.d

mountPath: /etc/kube-flannel/

containers:

image: quay.io/coreos/flannel:v0.11.0-arm

command:

args:

resources:

requests:

cpu: “100m”

memory: “50Mi”

limits:

cpu: “100m”

memory: “50Mi”

securityContext:

privileged: false

capabilities:

add: [“NET_ADMIN”]

env:

valueFrom:

fieldRef:

fieldPath: metadata.name

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

mountPath: /run/flannel

mountPath: /etc/kube-flannel/

volumes:

hostPath:

path: /run/flannel

hostPath:

path: /etc/cni/net.d

configMap:

name: kube-flannel-cfg

kind: DaemonSet

metadata:

name: kube-flannel-ds-ppc64le

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

operator: In

values:

operator: In

values:

hostNetwork: true

tolerations:

effect: NoSchedule

serviceAccountName: flannel

initContainers:

image: quay.io/coreos/flannel:v0.11.0-ppc64le

command:

args:

volumeMounts:

mountPath: /etc/cni/net.d

mountPath: /etc/kube-flannel/

containers:

image: quay.io/coreos/flannel:v0.11.0-ppc64le

command:

args:

resources:

requests:

cpu: “100m”

memory: “50Mi”

limits:

cpu: “100m”

memory: “50Mi”

securityContext:

privileged: false

capabilities:

add: [“NET_ADMIN”]

env:

valueFrom:

fieldRef:

fieldPath: metadata.name

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

mountPath: /run/flannel

mountPath: /etc/kube-flannel/

volumes:

hostPath:

path: /run/flannel

hostPath:

path: /etc/cni/net.d

configMap:

name: kube-flannel-cfg

kind: DaemonSet

metadata:

name: kube-flannel-ds-s390x

namespace: kube-system

labels:

tier: node

app: flannel

spec:

selector:

matchLabels:

app: flannel

template:

metadata:

labels:

tier: node

app: flannel

spec:

affinity:

nodeAffinity:

requiredDuringSchedulingIgnoredDuringExecution:

nodeSelectorTerms:

operator: In

values:

operator: In

values:

hostNetwork: true

tolerations:

effect: NoSchedule

serviceAccountName: flannel

initContainers:

image: quay.io/coreos/flannel:v0.11.0-s390x

command:

args:

volumeMounts:

mountPath: /etc/cni/net.d

mountPath: /etc/kube-flannel/

containers:

image: quay.io/coreos/flannel:v0.11.0-s390x

command:

args:

resources:

requests:

cpu: “100m”

memory: “50Mi”

limits:

cpu: “100m”

memory: “50Mi”

securityContext:

privileged: false

capabilities:

add: [“NET_ADMIN”]

env:

valueFrom:

fieldRef:

fieldPath: metadata.name

valueFrom:

fieldRef:

fieldPath: metadata.namespace

volumeMounts:

mountPath: /run/flannel

mountPath: /etc/kube-flannel/

volumes:

hostPath:

path: /run/flannel

hostPath:

path: /etc/cni/net.d

configMap:

name: kube-flannel-cfg

--discovery-token-ca-cert-hash sha256:81fccdd29970cbc1b7dc7f171ac0234d53825bdf9b05428fc9e6767436991bfb

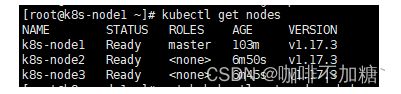

2.在master查看节点状态

输出如下创建helm-rbac.yaml,写入如下内容

vi helm-rbac.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

name: tiller

namespace: kube-system

kind: ClusterRoleBinding

metadata:

name: tiller

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

subjects:

name: kubernetes-dashboard

namespace: kube-system

并编辑资源文件,使用本地镜像

helm repo add azure https://mirror.azure.cn/kubernetes/charts/
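The four `helm repo add` calls above can be kept in one list and followed by `helm repo update`, which refreshes the local chart index. This is a minimal bash sketch that assumes Helm v2 is on the PATH as installed above. The `HELM` variable and the `add_repos` function are hypothetical conveniences; setting `HELM=echo` turns the function into a dry run that only prints the commands.

```bash
#!/usr/bin/env bash
# Hypothetical wrapper around the repository list from this section.
# HELM defaults to the real binary; set HELM=echo for a dry run.
HELM="${HELM:-helm}"

# name=url pairs, copied from the commands above
REPOS=(
  "stable=https://kubernetes.oss-cn-hangzhou.aliyuncs.com/charts"
  "aliyun=https://apphub.aliyuncs.com/"
  "bitnami=https://charts.bitnami.com/bitnami/"
  "azure=https://mirror.azure.cn/kubernetes/charts/"
)

add_repos() {
  local entry
  for entry in "${REPOS[@]}"; do
    # Split "name=url" at the first "=": name before it, URL after it
    "$HELM" repo add "${entry%%=*}" "${entry#*=}" || return 1
  done
  # Refresh the local chart index once all repositories are registered
  "$HELM" repo update
}
```

Run `HELM=echo add_repos` to preview the commands, then `add_repos` to register the repositories, and `helm repo list` to confirm them.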