深度学习之目标检测(Swin Transformer for Object Detection)

目录

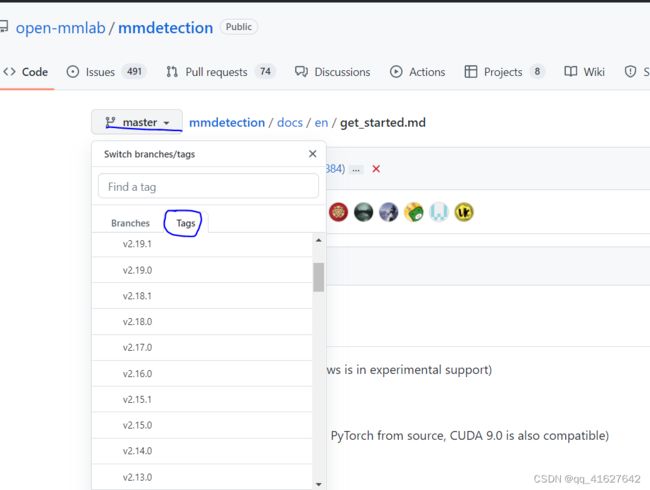

1、MMdetection系列版本编辑

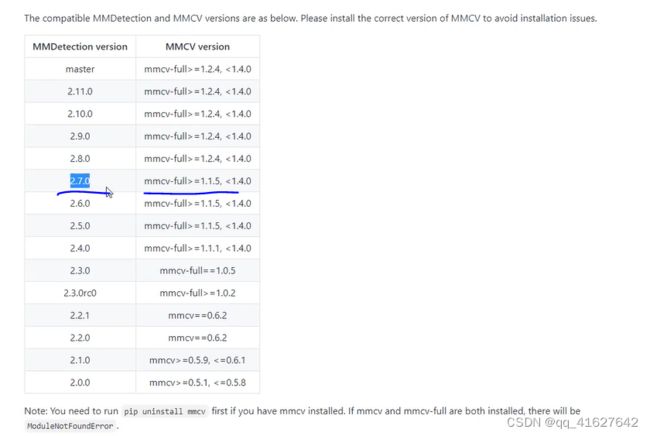

2、 MMDetection和MMCV兼容版本

3、Installation(Linux系统环境安装)

3.1 搭建基本环境

3.2 安装mmcv-full

3.3 安装其他必要的Python包

3.4 安装 MMDetection

3.5、apex安装

2、 Windows 的mmcv-full安装

windows的本地编译安装

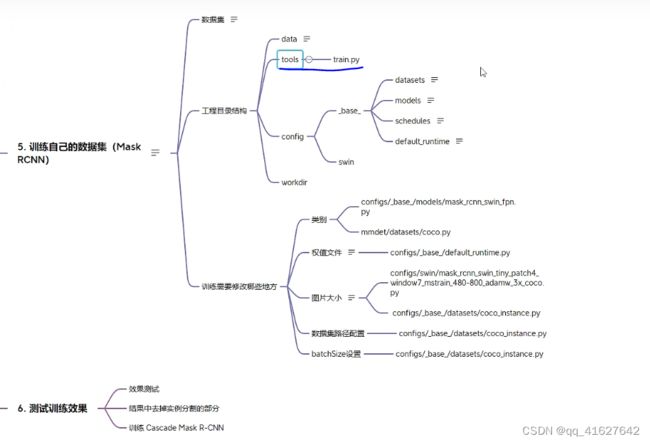

4、Swin Transform 训练自己的数据集

4.1 准备coco数据集

4.1.1 案例1 txt转coco数据集

1、visdrone数据

1. 将annotations中的txt标签转化为voc需要的xml文件

1. VOC Annotations文件夹

2.xml2json

4.2 配置修改工程

1、设置类别数(configs/base/models/mask_rcnn_swin_fpn.py):

2、修改配置信息(间隔和加载预训练模型configs/base/default_runtime.py)

3、修改训练尺寸大小、max_epochs按需修改(configs/swin/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py)

4、配置数据集路径、img_scale、samples_per_gpu、workers_per_gpu和增加数据增强(configs/base/datasets/coco_detection.py)

configs/base/datasets/coco_instance.py 文件的最上面指定了数据集的路径,因此在项目下新建 data/coco目录,下面四个子目录 annotations和test2017,train2017,val2017。路径/configs/base/datasets/coco_detection.py,第2行的data_root数据集根目录路径,第8行的img_scale可以根据需要修改,下面train、test、val数据集的具体路径ann_file根据自己数据集修改

5、修改分类数组:mmdet/datasets/coco.py

6、可以修改学习率(mmdetection/configs/_base_/schedules/schedule_20e.py )

这里是调整学习率的schedule的位置,可以设置warmup schedule和衰减策略。 1x, 2x分别对应12epochs和24epochs,20e对应20epochs,这里注意配置都是默认8块gpu的训练,如果用一块gpu训练,需要在lr/8

7、载入修改好的配置文件

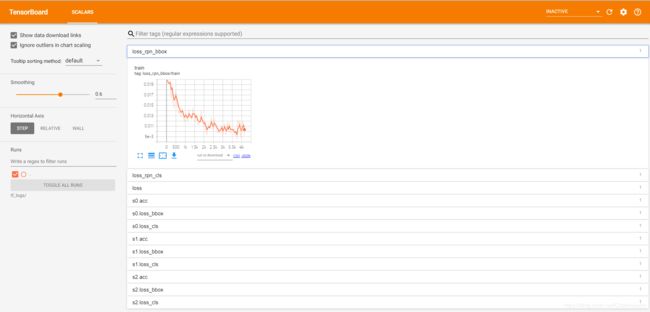

8、使用Tensorboard进行可视化,查看训练

9、训练过程中进行模型验证

4.3 开始训练执行图下命令

编辑

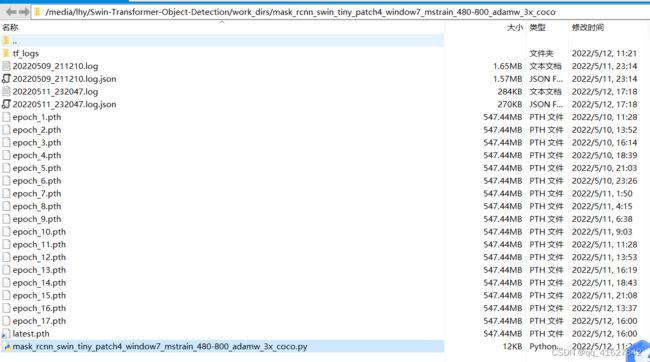

上面的.log、.log.json文件就是训练的日志文件,每训练完一个epoch后目录下还会有对应的以epoch_x.pth的模型文件,最新训练的模型文件命名为latest.pth。

4.4、禁用mask

训练后的模型进行推理测试、评估

将mmdetection产生的coco(json)格式的测试结果转化成VisDrone19官网所需要的txt格式文件(还包括无标签测试图片产生mmdet所需要的test.json方法)

4、遇到的问题及解决办法

5、测试训练好的模型

目录

1、MMdetection系列版本编辑

2、 MMDetection和MMCV兼容版本

3、Installation(Linux系统环境安装)

3.1 搭建基本环境

3.2 安装mmcv-full

3.3 安装其他必要的Python包

3.4 安装 MMDetection

3.5、apex安装

2、 Windows 的mmcv-full安装

windows的本地编译安装

4、Swin Transform 训练自己的数据集

4.1 准备coco数据集

4.1.1 案例1 txt转coco数据集

1、visdrone数据

1. 将annotations中的txt标签转化为voc需要的xml文件

1. VOC Annotations文件夹

2.xml2json

4.2 配置修改工程

1、设置类别数(configs/base/models/mask_rcnn_swin_fpn.py):

2、修改配置信息(间隔和加载预训练模型configs/base/default_runtime.py)

3、修改训练尺寸大小、max_epochs按需修改(configs/swin/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py)

4、配置数据集路径、img_scale、samples_per_gpu、workers_per_gpu和增加数据增强(configs/base/datasets/coco_detection.py)

configs/base/datasets/coco_instance.py 文件的最上面指定了数据集的路径,因此在项目下新建 data/coco目录,下面四个子目录 annotations和test2017,train2017,val2017。路径/configs/base/datasets/coco_detection.py,第2行的data_root数据集根目录路径,第8行的img_scale可以根据需要修改,下面train、test、val数据集的具体路径ann_file根据自己数据集修改

5、修改分类数组:mmdet/datasets/coco.py

6、可以修改学习率(mmdetection/configs/_base_/schedules/schedule_20e.py )

这里是调整学习率的schedule的位置,可以设置warmup schedule和衰减策略。 1x, 2x分别对应12epochs和24epochs,20e对应20epochs,这里注意配置都是默认8块gpu的训练,如果用一块gpu训练,需要在lr/8

7、载入修改好的配置文件

8、使用Tensorboard进行可视化,查看训练

9、训练过程中进行模型验证

4.3 开始训练执行图下命令

4.4、禁用mask

4、遇到的问题及解决办法

5、测试训练好的模型

目录

1、MMdetection系列版本

2、 MMDetection和MMCV兼容版本

3、Installation(Linux系统环境安装)

3.1 搭建基本环境

3.2 安装mmcv-full

1.3 安装其他必要的Python包

1.3 安装 MMDetection

2、 Windows 的mmcv-full安装

windows的本地编译安装

4、Swin Transform 训练自己的数据集

4.1 准备coco数据集

4.2 配置修改工程

1、设置类别数(configs/base/models/mask_rcnn_swin_fpn.py):

2、修改配置信息(间隔和加载预训练模型configs/base/default_runtime.py)

3、修改训练尺寸大小、max_epochs按需修改(configs/swin/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py)

4、配置数据集路径、img_scale、samples_per_gpu、workers_per_gpu和增加数据增强(configs/base/datasets/coco_detection.py)

configs/base/datasets/coco_instance.py 文件的最上面指定了数据集的路径,因此在项目下新建 data/coco目录,下面四个子目录 annotations和test2017,train2017,val2017。路径/configs/base/datasets/coco_detection.py,第2行的data_root数据集根目录路径,第8行的img_scale可以根据需要修改,下面train、test、val数据集的具体路径ann_file根据自己数据集修改

5、修改分类数组:mmdet/datasets/coco.py

6、可以修改学习率(mmdetection/configs/_base_/schedules/schedule_20e.py )

这里是调整学习率的schedule的位置,可以设置warmup schedule和衰减策略。 1x, 2x分别对应12epochs和24epochs,20e对应20epochs,这里注意配置都是默认8块gpu的训练,如果用一块gpu训练,需要在lr/8

7、载入修改好的配置文件

8、使用Tensorboard进行可视化

4.3 开始训练执行图下命令

4.4、禁用mask

4、遇到的问题及解决办法

5、测试训练好的模型

1、MMdetection系列版本

2、 MMDetection和MMCV兼容版本

| MMDetection version | MMCV version |

|---|---|

| master | mmcv-full>=1.3.17, <1.6.0 |

| 2.24.1 | mmcv-full>=1.3.17, <1.6.0 |

| 2.24.0 | mmcv-full>=1.3.17, <1.6.0 |

| 2.23.0 | mmcv-full>=1.3.17, <1.5.0 |

| 2.22.0 | mmcv-full>=1.3.17, <1.5.0 |

| 2.21.0 | mmcv-full>=1.3.17, <1.5.0 |

| 2.20.0 | mmcv-full>=1.3.17, <1.5.0 |

| 2.19.1 | mmcv-full>=1.3.17, <1.5.0 |

| 2.19.0 | mmcv-full>=1.3.17, <1.5.0 |

| 2.18.0 | mmcv-full>=1.3.17, <1.4.0 |

| 2.17.0 | mmcv-full>=1.3.14, <1.4.0 |

| 2.16.0 | mmcv-full>=1.3.8, <1.4.0 |

| 2.15.1 | mmcv-full>=1.3.8, <1.4.0 |

| 2.15.0 | mmcv-full>=1.3.8, <1.4.0 |

| 2.14.0 | mmcv-full>=1.3.8, <1.4.0 |

| 2.13.0 | mmcv-full>=1.3.3, <1.4.0 |

| 2.12.0 | mmcv-full>=1.3.3, <1.4.0 |

| 2.11.0 | mmcv-full>=1.2.4, <1.4.0 |

| 2.10.0 | mmcv-full>=1.2.4, <1.4.0 |

| 2.9.0 | mmcv-full>=1.2.4, <1.4.0 |

| 2.8.0 | mmcv-full>=1.2.4, <1.4.0 |

| 2.7.0 | mmcv-full>=1.1.5, <1.4.0 |

| 2.6.0 | mmcv-full>=1.1.5, <1.4.0 |

| 2.5.0 | mmcv-full>=1.1.5, <1.4.0 |

| 2.4.0 | mmcv-full>=1.1.1, <1.4.0 |

| 2.3.0 | mmcv-full==1.0.5 |

| 2.3.0rc0 | mmcv-full>=1.0.2 |

| 2.2.1 | mmcv==0.6.2 |

| 2.2.0 | mmcv==0.6.2 |

| 2.1.0 | mmcv>=0.5.9, <=0.6.1 |

| 2.0.0 | mmcv>=0.5.1, <=0.5.8 |

3、Installation(Linux系统环境安装)

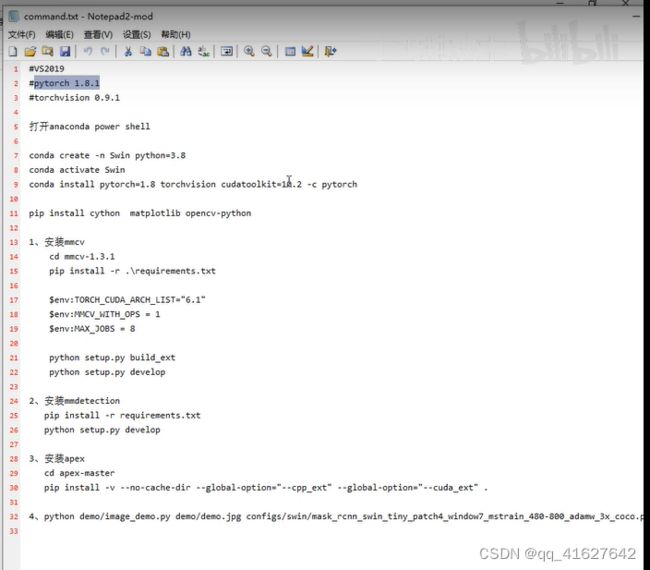

Windows10系统下swin-transformer目标检测环境搭建

3.1 搭建基本环境

cuda与pytorch版本

conda create -n mmdetection python=3.7 -y #创建环境

conda activate mmdetection #激活环境

conda install pytorch torchvision torchaudio cudatoolkit=10.2 -c pytorch #安装 PyTorch and torchvision

#或者这样安装

pip3 install torch==1.8.2+cu102 torchvision==0.9.2+cu102 torchaudio===0.8.2 -f https://download.pytorch.org/whl/lts/1.8/torch_lts.html -i http://mirrors.aliyun.com/pypi/simple/ --trusted-host mirrors.aliyun.com

验证是否安装成功

>>> import torchvision

>>> import torch

>>> import.__version__

File "", line 1

import.__version__

^

SyntaxError: invalid syntax

>>> torch.__version__

'1.8.2+cu102'

3.2 安装mmcv-full

| CUDA | torch 1.11 | torch 1.10 | torch 1.9 | torch 1.8 | torch 1.7 | torch 1.6 | torch 1.5 |

|---|---|---|---|---|---|---|---|

| 11.5 | install |

||||||

| 11.3 | install |

install |

|||||

| 11.1 | install |

install |

install |

||||

| 11.0 | install |

||||||

| 10.2 | install |

install |

install |

install |

install |

install |

install |

| 10.1 | install |

install |

install |

install |

|||

| 9.2 | install |

install |

install |

||||

| cpu | install |

install |

install |

install |

install |

install |

install |

#Install mmcv-full. 安装mmcv-full

pip install mmcv-full -f https://download.openmmlab.com/mmcv/dist/{cu_version}/{torch_version}/index.html

Please replace {cu_version} and {torch_version} in the url to your desired one. For example, to install the latest mmcv-full with CUDA 11.0 and PyTorch 1.7.0, use the following command:

pip install mmcv-full -f https://download.openmmlab.com/mmcv/dist/cu110/torch1.7.0/index.html

pip install mmcv-full==1.3.9 -f https://download.openmmlab.com/mmcv/dist/cu111/torch1.9.0/index.html #明确mmcv-full的版本号

pip install mmcv-full -f https://download.openmmlab.com/mmcv/dist/cu102/torch1.8.0/index.html

pip install mmcv-full==1.3.17 -f https://download.openmmlab.com/mmcv/dist/cu102/torch1.8.0/index.html -i http://mirrors.aliyun.com/pypi/simple/ --trusted-host mirrors.aliyun.com

验证是否安装成功

import mmcv

如果出现

>>> import mmcv

No CUDA runtime is found, using CUDA_HOME='/usr/local/cuda-10.2'

我们去看看驱动:

nvidia-smi

如果返回NVIDIA驱动失效简单解决方案:NVIDIA-SMI has failed because it couldn‘t communicate with the NVIDIA driver.

这种情况是由于重启服务器,linux内核升级导致的,由于linux内核升级,之前的Nvidia驱动就不匹配连接了,但是此时Nvidia驱动还在,可以通过命令 nvcc -V 找到答案。

解决方法:

查看已安装驱动的版本信息

ls /usr/src | grep nvidia

(mmdetection) lhy@thales-Super-Server:~$ ls /usr/src | grep nvidia

nvidia-440.33.01

进行下列操作

sudo apt-get install dkms

sudo dkms install -m nvidia -v 440.33.01

然后进行验证:

(mmdetection) lhy@thales-Super-Server:~$ nvidia-smi

Fri May 6 00:56:02 2022

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 440.33.01 Driver Version: 440.33.01 CUDA Version: 10.2 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

|===============================+======================+======================|

| 0 TITAN RTX Off | 00000000:02:00.0 Off | N/A |

| 0% 47C P0 54W / 280W | 0MiB / 24220MiB | 0% Default |

+-------------------------------+----------------------+----------------------+

| 1 TITAN RTX Off | 00000000:03:00.0 Off | N/A |

| 0% 47C P0 65W / 280W | 0MiB / 24220MiB | 0% Default |

+-------------------------------+----------------------+----------------------+

| 2 TITAN RTX Off | 00000000:82:00.0 Off | N/A |

| 0% 48C P0 63W / 280W | 0MiB / 24220MiB | 1% Default |

+-------------------------------+----------------------+----------------------+

| 3 TITAN RTX Off | 00000000:83:00.0 Off | N/A |

| 0% 46C P0 42W / 280W | 0MiB / 24220MiB | 0% Default |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: GPU Memory |

| GPU PID Type Process name Usage |

|=============================================================================|

| No running processes found |

+-----------------------------------------------------------------------------+

(mmdetection) lhy@thales-Super-Server:~$ python

Python 3.7.13 (default, Mar 29 2022, 02:18:16)

[GCC 7.5.0] :: Anaconda, Inc. on linux

Type "help", "copyright", "credits" or "license" for more information.

>>> import mmcv

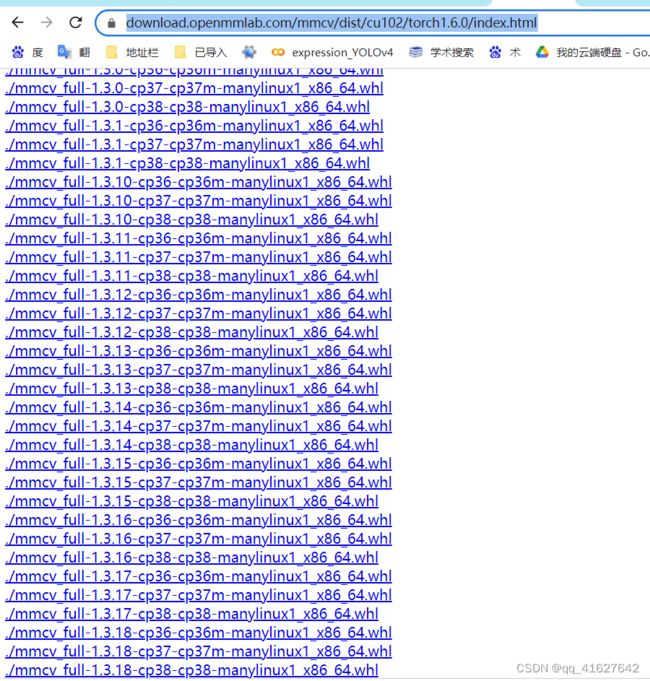

https://download.openmmlab.com/mmcv/dist/cu102/torch1.6.0/index.html

根据这个 网址可以查看torch1.6.0支持的mmcv-full的版本

注意:上面提供的预构建包不包括所有版本的mmcv-full,您可以单击相应的链接来查看支持的版本。例如,您可以单击cu102-torch1.8.0,可以看到cu102-torch1.8.0只提供1.3.0及以上版本的mmcv-full。此外,从v1.3.17开始,我们不再提供使用PyTorch 1.3和1.4编译的完整的mmcv预构建包。你可以在这里找到用PyTorch 1.3和1.4编译的以前版本。在我们的Cl中,兼容性仍然得到保证,但我们将在明年放弃对PyTorch 1.3和1.4的支持。

3.3 安装其他必要的Python包

pip install cython matplotlib opencv-python timm -i [http://mirrors.aliyun.com/pypi/simple/]3.4 安装 MMDetection

# These must be installed before building mmdetection

pip install -r requirements.txt -i http://mirrors.aliyun.com/pypi/simple/ --trusted-host mirrors.aliyun.com

pip install cython matplotlib opencv-python

cython

numpy

matplotlib

You can simply install mmdetection with the following command:

你可以使用下面的命令简单地安装mmdetection:

pip install mmdet

或者克隆存储库然后安装:

git clone https://github.com/open-mmlab/mmdetection.git

cd mmdetection

pip install -r requirements/build.txt

pip install -v -e . # or "python setup.py develop"

安装完成

Using /home/lhy/anaconda3/envs/mmdetection/lib/python3.7/site-packages

Finished processing dependencies for mmdet==2.24.1

a.当指定-e或develop时,MMDetection被安装在dev模式下,对代码所做的任何本地修改都将生效,无需重新安装

b.如果你想使用opencv-python-headless而不是opencv-python,你可以在安装MMCV之前安装它。

安装额外依赖Instaboost, Panoptic Segmentation, LVIS数据集,或Albumentations。

# for instaboost

pip install instaboostfast

# for panoptic segmentation

pip install git+https://github.com/cocodataset/panopticapi.git

# for LVIS dataset

pip install git+https://github.com/lvis-dataset/lvis-api.git

# for albumentations

pip install -r requirements/albu.txt

d.如果你想使用albumentations,我们建议使用pip install -r requirements/ albumentations或pip install -U albumentations——nobinary qudida, albumentations。如果您简单地使用pip install albumentations>=0.3.2,它将同时安装opencv-python-headless(即使您已经安装了opencv-python)。我们建议在安装albumentation的产品后检查环境,以确保opencv-python和opencv-python-headless没有被同时安装,因为如果同时安装可能会导致意想不到的问题。请参阅官方文件了解更多细节。3.5、apex安装

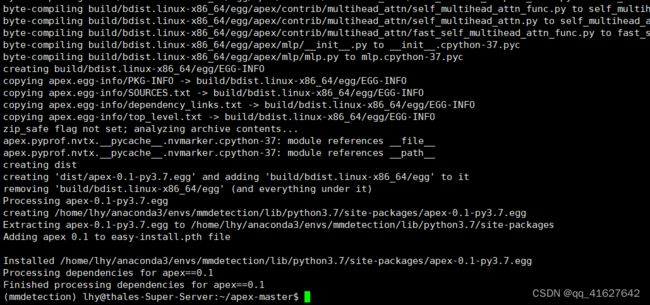

git clone https://github.com/NVIDIA/apex

进入 apex 文件夹

执行:python setup.py install

git clone https://github.com/NVIDIA/apex

cd apex

python3 setup.py install

pip list 能看见 apex (0.1版本,只有这一个版本)

注:安装的apex会在训练模型时候有一个警告内容如下:(但实际没啥影响)

fused_weight_gradient_mlp_cuda module not found. gradient accumulation fusion with weight gradient computation disabled.

2、 Windows 的mmcv-full安装

1、打开Anaconda Powershell prompt命令窗口

conda create -n swim python=3.8 -y # These must be installed before building mmdetection

pip install cython matplotlib opencv-python

cython

numpy

matplotlib通过查询可知按照官方的方式只能安装2.7.0版本的mmdetection

windows的本地编译安装

目标检测学习笔记——mmdet的mmcv安装

- 准备 MMCV 源代码

+![]() https://github.com/open-mmlab/mmcv/graphs/contributors

https://github.com/open-mmlab/mmcv/graphs/contributors

git clone https://github.com/open-mmlab/mmcv.git

git checkout v1.2.0 # based on target version

cd mmcv- 安装所需 Python 依赖包

- 置 MSVC 编译器

- 训练自己的数据集

4、Swin Transform 训练自己的数据集

Swin-Transformer

Swin-Transformer-Object-Detection

Swin Transformer Object Detection 目标检测-2——训练自己的数据集

Swin-transformer纯目标检测训练自己的数据集

4.1 准备coco数据集

4.1.1 案例1 txt转coco数据集

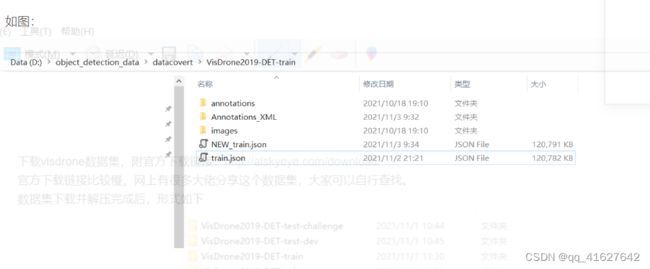

将visdrone数据集转化为coco格式并在mmdetection上训练,附上转好的json文件

1、visdrone数据

配备摄像头的无人机(或通用无人机)已被快速部署到广泛的应用领域,包括农业、航空摄影、快速交付和监视。因此,从这些平台上收集的视觉数据的自动理解要求越来越高,这使得计算机视觉与无人机的关系越来越密切。我们很高兴为各种重要的计算机视觉任务展示一个大型基准,并仔细注释了地面真相,命名为VisDrone,使视觉与无人机相遇。VisDrone2019数据集由天津大学机器学习和数据挖掘实验室AISKYEYE团队收集。基准数据集包括288个视频片段,由261908帧和10209幅静态图像组成,由各种无人机摄像头捕获,覆盖范围广泛,包括位置(来自中国相隔数千公里的14个不同城市)、环境(城市和农村)、物体(行人、车辆、自行车、等)和密度(稀疏和拥挤的场景)。请注意,数据集是在不同的场景、不同的天气和光照条件下使用不同的无人机平台(即不同型号的无人机)收集的。这些框架用超过260万个经常感兴趣的目标框手工标注,比如行人、汽车、自行车和三轮车。一些重要的属性,包括场景可见性,对象类和遮挡,也提供了更好的数据利用。

isdrone是一个无人机的目标检测数据集,在很多目标检测的论文中都能看到它的身影。

标签从0到11分别为’ignored regions’,‘pedestrian’,‘people’,‘bicycle’,‘car’,‘van’,

‘truck’,‘tricycle’,‘awning-tricycle’,‘bus’,‘motor’,‘others’

下载完成后我们可以在Anootations看到txt文件,其中相关的数字解释如下:

, , , , , , ,

Name Description

-------------------------------------------------------------------------------------------------------------------------------

The x coordinate of the top-left corner of the predicted bounding box

The y coordinate of the top-left corner of the predicted object bounding box

The width in pixels of the predicted object bounding box

The height in pixels of the predicted object bounding box

The score in the DETECTION file indicates the confidence of the predicted bounding box enclosing

an object instance.

The score in GROUNDTRUTH file is set to 1 or 0. 1 indicates the bounding box is considered in evaluation,

while 0 indicates the bounding box will be ignored.

The object category indicates the type of annotated object, (i.e., ignored regions(0), pedestrian(1),

people(2), bicycle(3), car(4), van(5), truck(6), tricycle(7), awning-tricycle(8), bus(9), motor(10),

others(11))

The score in the DETECTION result file should be set to the constant -1.

The score in the GROUNDTRUTH file indicates the degree of object parts appears outside a frame

(i.e., no truncation = 0 (truncation ratio 0%), and partial truncation = 1 (truncation ratio 1% ~ 50%)).

The score in the DETECTION file should be set to the constant -1.

The score in the GROUNDTRUTH file indicates the fraction of objects being occluded (i.e., no occlusion = 0

(occlusion ratio 0%), partial occlusion = 1 (occlusion ratio 1% ~ 50%), and heavy occlusion = 2

(occlusion ratio 50% ~ 100%)).

其中:两种有用的注释:truncation截断率,occlusion遮挡率。

被遮挡的对象比例来定义遮挡率。

截断率用于指示对象部分出现在框架外部的程度。

如果目标的截断率大于50%,则会在评估过程中将其跳过。

现在先要用mmdetection自己训练一下这个数据集,需要把他转化为coco数据集格式

分两步走:

1. 将annotations中的txt标签转化为voc需要的xml文件

1. VOC Annotations文件夹

该文件下存放的是xml格式的标签文件,每个xml文件都对应于JPEGImages文件夹的一张图片, 其中对xml的解析如下:

VOC2007

2007_000392.jpg //文件名

//图像来源(不重要)

The VOC2007 Database

PASCAL VOC2007

flickr

//图像尺寸(长宽以及通道数)

500

332

3

1 //是否用于分割(在图像物体识别中01无所谓)

下面是visDrone2019的txt注释文件转换为voc xml的代码,visDrone2019_txt2xml_voc.py

需要改的地方有注释,就是几个路径改一下即可

'''

Author: 刘鸿燕 [email protected]

Date: 2022-05-09 10:17:40

LastEditors: 刘鸿燕 [email protected]

LastEditTime: 2022-05-09 11:17:20

FilePath: \VisDrone2019\data_process\visDrone2019_txt2xml.py

Description: 这是默认设置,请设置`customMade`, 打开koroFileHeader查看配置 进行设置: https://github.com/OBKoro1/koro1FileHeader/wiki/%E9%85%8D%E7%BD%AE

'''

import os

import datetime

from PIL import Image

from pathlib import Path

FILE = Path(__file__).resolve()

# print("FILE",FILE)

ROOT = FILE.parent.parents[0] # root directory

print("ROOT",ROOT)

def check_dir(path):

if os.path.isdir(path):

print("{}文件路径存在!".format(path))

pass

else:

os.makedirs(path)

print("{}文件路径创建成功!".format(path))

#把下面的root_dir路径改成你自己的路径即可

root_dir = ROOT / 'VisDrone2019-DET-train'

annotations_dir = root_dir / "annotations/"

image_dir = root_dir / "images/"

xml_dir = root_dir / "Annotations_XML/" #在工作目录下创建Annotations_XML文件夹保存xml文件

check_dir(xml_dir)

# print("annotation_dir",annotations_dir)

# print("image_dir",image_dir)

# print("xml_dir",xml_dir)

# root_dir = r"D:\object_detection_data\datacovert\VisDrone2019-DET-val/"

# annotations_dir = root_dir+"annotations/"

# image_dir = root_dir + "images/"

# xml_dir = root_dir+"Annotations_XML/" #在工作目录下创建Annotations_XML文件夹保存xml文件

# 下面的类别也换成你自己数据类别,也可适用于其他的数据集转换

class_name = ['ignored regions','pedestrian','people','bicycle','car','van',

'truck','tricycle','awning-tricycle','bus','motor','others']

for filename in os.listdir(annotations_dir):

fin = open(annotations_dir/ filename, 'r')

image_name = filename.split('.')[0]

image_path=Path(image_dir).joinpath(image_name+".jpg")# 若图像数据是“png”转换成“.png”即可

img = Image.open(image_path) # 若图像数据是“png”转换成“.png”即可

xml_name = Path(xml_dir).joinpath(image_name+'.xml')

with open(xml_name, 'w') as fout:

#写入的xml基本信息

fout.write(''+'\n')

fout.write('\t'+'VOC2007 '+'\n')

fout.write('\t'+''+image_name+'.jpg'+' '+'\n')

fout.write('\t'+''+'\n')

fout.write('\t\t'+''+'VisDrone2019-DET'+' '+'\n')

fout.write('\t\t'+''+'VisDrone2019-DET'+' '+'\n')

fout.write('\t\t'+''+'flickr'+' '+'\n')

fout.write('\t\t'+''+'Unspecified'+' '+'\n')

fout.write('\t'+' '+'\n')

fout.write('\t'+''+'\n')

fout.write('\t\t'+''+'LJ'+' '+'\n')

fout.write('\t\t'+''+'LJ'+' '+'\n')

fout.write('\t'+' '+'\n')

fout.write('\t'+''+'\n')

fout.write('\t\t'+''+str(img.size[0])+' '+'\n')

fout.write('\t\t'+''+str(img.size[1])+' '+'\n')

fout.write('\t\t'+''+'3'+' '+'\n')

fout.write('\t'+' '+'\n')

fout.write('\t'+''+'0'+' '+'\n')

for line in fin.readlines():

line = line.split(',')

fout.write('\t'+''+'\n')

fin.close()

fout.write(' ')2.xml2json

#!/usr/bin/python

# xml是voc的格式

# json是coco的格式

import sys, os, json, glob

import xml.etree.ElementTree as ET

INITIAL_BBOXIds = 1

# PREDEF_CLASSE = {}

PREDEF_CLASSE = { 'pedestrian': 1, 'people': 2,

'bicycle': 3, 'car': 4, 'van': 5, 'truck': 6, 'tricycle': 7,

'awning-tricycle': 8, 'bus': 9, 'motor': 10}

#我这里只想检测这十个类, 0和11没有加入转化。

# function

def get(root, name):

return root.findall(name)

def get_and_check(root, name, length):

vars = root.findall(name)

if len(vars) == 0:

raise NotImplementedError('Can not find %s in %s.'%(name, root.tag))

if length > 0 and len(vars) != length:

raise NotImplementedError('The size of %s is supposed to be %d, but is %d.'%(name, length, len(vars)))

if length == 1:

vars = vars[0]

return vars

def convert(xml_paths, out_json):

json_dict = {'images': [], 'type': 'instances',

'categories': [], 'annotations': []}

categories = PREDEF_CLASSE

bbox_id = INITIAL_BBOXIds

for image_id, xml_f in enumerate(xml_paths):

# 进度输出

sys.stdout.write('\r>> Converting image %d/%d' % (

image_id + 1, len(xml_paths)))

sys.stdout.flush()

tree = ET.parse(xml_f)

root = tree.getroot()

filename = get_and_check(root, 'filename', 1).text

size = get_and_check(root, 'size', 1)

width = int(get_and_check(size, 'width', 1).text)

height = int(get_and_check(size, 'height', 1).text)

image = {'file_name': filename, 'height': height,

'width': width, 'id': image_id + 1}

json_dict['images'].append(image)

## Cruuently we do not support segmentation

#segmented = get_and_check(root, 'segmented', 1).text

#assert segmented == '0'

for obj in get(root, 'object'):

category = get_and_check(obj, 'name', 1).text

if category not in categories:

new_id = max(categories.values()) + 1

categories[category] = new_id

category_id = categories[category]

bbox = get_and_check(obj, 'bndbox', 1)

xmin = int(get_and_check(bbox, 'xmin', 1).text) - 1

ymin = int(get_and_check(bbox, 'ymin', 1).text) - 1

xmax = int(get_and_check(bbox, 'xmax', 1).text)

ymax = int(get_and_check(bbox, 'ymax', 1).text)

if xmax <= xmin or ymax <= ymin:

continue

o_width = abs(xmax - xmin)

o_height = abs(ymax - ymin)

ann = {'area': o_width * o_height, 'iscrowd': 0, 'image_id': image_id + 1,

'bbox': [xmin, ymin, o_width, o_height], 'category_id': category_id,

'id': bbox_id, 'ignore': 0, 'segmentation': []}

json_dict['annotations'].append(ann)

bbox_id = bbox_id + 1

for cate, cid in categories.items():

cat = {'supercategory': 'none', 'id': cid, 'name': cate}

json_dict['categories'].append(cat)

# json_file = open(out_json, 'w')

# json_str = json.dumps(json_dict)

# json_file.write(json_str)

# json_file.close() # 快

json.dump(json_dict, open(out_json, 'w'), indent=4) # indent=4 更加美观显示 慢

if __name__ == '__main__':

xml_path = r'D:\object_detection_data\datacovert\VisDrone2019-DET-val/Annotations_XML/' #改一下读取xml文件位置

xml_file = glob.glob(os.path.join(xml_path, '*.xml'))

convert(xml_file, r'D:\object_detection_data\datacovert\VisDrone2019-DET-val/NEW_val.json') #这里是生成的json保存位置,改一下

4.2 配置修改工程

1、设置类别数(configs/base/models/mask_rcnn_swin_fpn.py):

修改 configs/base/models/mask_rcnn_swin_fpn.py 中 num_classes 为自己数据集的类别(有两处需要修改)。两处大概在第54行和73行,修改为自己数据集的类别数量,示例如下。

# model settings

model = dict(

type='MaskRCNN',

pretrained=None,

backbone=dict(

type='SwinTransformer',

embed_dim=96,

depths=[2, 2, 6, 2],

num_heads=[3, 6, 12, 24],

window_size=7,

mlp_ratio=4.,

qkv_bias=True,

qk_scale=None,

drop_rate=0.,

attn_drop_rate=0.,

drop_path_rate=0.2,

ape=False,

patch_norm=True,

out_indices=(0, 1, 2, 3),

use_checkpoint=False),

neck=dict(

type='FPN',

in_channels=[96, 192, 384, 768],

out_channels=256,

num_outs=5),

rpn_head=dict(

type='RPNHead',

in_channels=256,

feat_channels=256,

anchor_generator=dict(

type='AnchorGenerator',

scales=[8],

ratios=[0.5, 1.0, 2.0],

strides=[4, 8, 16, 32, 64]),

bbox_coder=dict(

type='DeltaXYWHBBoxCoder',

target_means=[.0, .0, .0, .0],

target_stds=[1.0, 1.0, 1.0, 1.0]),

loss_cls=dict(

type='CrossEntropyLoss', use_sigmoid=True, loss_weight=1.0),

loss_bbox=dict(type='L1Loss', loss_weight=1.0)),

roi_head=dict(

type='StandardRoIHead',

bbox_roi_extractor=dict(

type='SingleRoIExtractor',

roi_layer=dict(type='RoIAlign', output_size=7, sampling_ratio=0),

out_channels=256,

featmap_strides=[4, 8, 16, 32]),

bbox_head=dict(

type='Shared2FCBBoxHead',

in_channels=256,

fc_out_channels=1024,

roi_feat_size=7,

num_classes=80, #修改为自己的类别,注意这里不需要加BG类(+1)

bbox_coder=dict(

type='DeltaXYWHBBoxCoder',

target_means=[0., 0., 0., 0.],

target_stds=[0.1, 0.1, 0.2, 0.2]),

reg_class_agnostic=False,

loss_cls=dict(

type='CrossEntropyLoss', use_sigmoid=False, loss_weight=1.0),

loss_bbox=dict(type='L1Loss', loss_weight=1.0)),

mask_roi_extractor=dict(

type='SingleRoIExtractor',

roi_layer=dict(type='RoIAlign', output_size=14, sampling_ratio=0),

out_channels=256,

featmap_strides=[4, 8, 16, 32]),

mask_head=dict(

type='FCNMaskHead',

num_convs=4,

in_channels=256,

conv_out_channels=256,

num_classes=80, #修改为自己的类别,注意这里不需要加BG类(+1)

loss_mask=dict(

type='CrossEntropyLoss', use_mask=True, loss_weight=1.0))),

# model training and testing settings

train_cfg=dict(

rpn=dict(

assigner=dict(

type='MaxIoUAssigner',

pos_iou_thr=0.7,

neg_iou_thr=0.3,

min_pos_iou=0.3,

match_low_quality=True,

ignore_iof_thr=-1),

sampler=dict(

type='RandomSampler',

num=256,

pos_fraction=0.5,

neg_pos_ub=-1,

add_gt_as_proposals=False),

allowed_border=-1,

pos_weight=-1,

debug=False),

rpn_proposal=dict(

nms_pre=2000,

max_per_img=1000,

nms=dict(type='nms', iou_threshold=0.7),

min_bbox_size=0),

rcnn=dict(

assigner=dict(

type='MaxIoUAssigner',

pos_iou_thr=0.5,

neg_iou_thr=0.5,

min_pos_iou=0.5,

match_low_quality=True,

ignore_iof_thr=-1),

sampler=dict(

type='RandomSampler',

num=512,

pos_fraction=0.25,

neg_pos_ub=-1,

add_gt_as_proposals=True),

mask_size=28,

pos_weight=-1,

debug=False)),

test_cfg=dict(

rpn=dict(

nms_pre=1000,

max_per_img=1000,

nms=dict(type='nms', iou_threshold=0.7),

min_bbox_size=0),

rcnn=dict(

score_thr=0.05,

nms=dict(type='nms', iou_threshold=0.5),

max_per_img=100,

mask_thr_binary=0.5)))2、修改配置信息(间隔和加载预训练模型configs/base/default_runtime.py)

修改 configs/base/default_runtime.py 中的 interval,loadfrom

interval:dict(interval=1) # 表示多少个 epoch 验证一次,然后保存一次权重信息,

第1行interval=1表示每1个epoch保存一次权重信息,表示多少个 epoch 验证一次,然后保存一次权重信息,

第4行interval=50表示每50次打印一次日志信息

loadfrom:表示加载哪一个训练好的权重,可以直接写绝对路径如: load_from = r"E:\workspace\Python\Pytorch\Swin-Transformer-Object-Detection\mask_rcnn_swin_tiny_patch4_window7.pth"

3、修改训练尺寸大小、max_epochs按需修改(configs/swin/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py)

如果显存够的话可以不改(基本都运行不起来),文件位置为:configs/swin/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py

修改所有的 img_scale 为 :img_scale = [(224, 224)] 或者 img_scale = [(256, 256)] 或者 480,512等。

同时 configs/base/datasets/coco_instance.py 或者configs/base/datasets/coco_detection.py中的 img_scale 也要改成 img_scale = [(224, 224)] 或者其他值

第3行’…/base/datasets/coco_instance.py’修改为’…/base/datasets/coco_detection.py’

第69行的max_epochs按需修改

_base_ = [

'../_base_/models/mask_rcnn_swin_fpn.py',

'../_base_/datasets/coco_instance.py', #做目标检测,修改为coco_detection.py

'../_base_/schedules/schedule_1x.py', '../_base_/default_runtime.py'

]

model = dict(

backbone=dict(

embed_dim=96,

depths=[2, 2, 6, 2],

num_heads=[3, 6, 12, 24],

window_size=7,

ape=False,

drop_path_rate=0.2,

patch_norm=True,

use_checkpoint=False

),

neck=dict(in_channels=[96, 192, 384, 768]))

img_norm_cfg = dict(

mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)

# augmentation strategy originates from DETR / Sparse RCNN

train_pipeline = [

dict(type='LoadImageFromFile'),

dict(type='LoadAnnotations', with_bbox=True, with_mask=True),

dict(type='RandomFlip', flip_ratio=0.5),

dict(type='AutoAugment',

policies=[

[

dict(type='Resize',

#这里可以根据自己的硬件设置进行修改

img_scale=[(480, 1333), (512, 1333), (544, 1333), (576, 1333),

(608, 1333), (640, 1333), (672, 1333), (704, 1333),

(736, 1333), (768, 1333), (800, 1333)],

multiscale_mode='value',

keep_ratio=True)

],

[

dict(type='Resize',

img_scale=[(400, 1333), (500, 1333), (600, 1333)],

multiscale_mode='value',

keep_ratio=True),

dict(type='RandomCrop',

crop_type='absolute_range',

crop_size=(384, 600),

allow_negative_crop=True),

dict(type='Resize',

#这里可以根据自己的硬件设置进行修改

img_scale=[(480, 1333), (512, 1333), (544, 1333),

(576, 1333), (608, 1333), (640, 1333),

(672, 1333), (704, 1333), (736, 1333),

(768, 1333), (800, 1333)],

multiscale_mode='value',

override=True,

keep_ratio=True)

]

]),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(type='DefaultFormatBundle'),

dict(type='Collect', keys=['img', 'gt_bboxes', 'gt_labels', 'gt_masks']),

]

data = dict(train=dict(pipeline=train_pipeline))

optimizer = dict(_delete_=True, type='AdamW', lr=0.0001, betas=(0.9, 0.999), weight_decay=0.05,

paramwise_cfg=dict(custom_keys={'absolute_pos_embed': dict(decay_mult=0.),

'relative_position_bias_table': dict(decay_mult=0.),

'norm': dict(decay_mult=0.)}))

lr_config = dict(step=[27, 33])

runner = dict(type='EpochBasedRunnerAmp', max_epochs=36) #训练的epoch可以根据需要修改

# do not use mmdet version fp16

fp16 = None

optimizer_config = dict(

type="DistOptimizerHook",

update_interval=1,

grad_clip=None,

coalesce=True,

bucket_size_mb=-1,

use_fp16=True,

)

4、配置数据集路径、img_scale、samples_per_gpu、workers_per_gpu和增加数据增强(configs/base/datasets/coco_detection.py)

configs/base/datasets/coco_instance.py 文件的最上面指定了数据集的路径,因此在项目下新建 data/coco目录,下面四个子目录 annotations和test2017,train2017,val2017。路径/configs/base/datasets/coco_detection.py,第2行的data_root数据集根目录路径,第8行的img_scale可以根据需要修改,下面train、test、val数据集的具体路径ann_file根据自己数据集修改

第31行的samples_per_gpu表示batch size大小,太大会内存溢出

第32行的workers_per_gpu表示每个GPU对应线程数,2、4、6、8按需修改

修改 batch size 和 线程数:根据自己的显存和CPU来设置

dataset_type = 'CocoDataset'

data_root = 'data/coco/' #数据的根目录

img_norm_cfg = dict(

mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)

train_pipeline = [

dict(type='LoadImageFromFile'),

dict(type='LoadAnnotations', with_bbox=True, with_mask=True),

dict(type='Resize', img_scale=(1333, 800), keep_ratio=True), #img_scale修改

dict(type='RandomFlip', flip_ratio=0.5),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(type='DefaultFormatBundle'),

dict(type='Collect', keys=['img', 'gt_bboxes', 'gt_labels', 'gt_masks']),

]

test_pipeline = [

dict(type='LoadImageFromFile'),

dict(

type='MultiScaleFlipAug',

img_scale=(1333, 800), #img_scale修改

flip=False,

transforms=[

dict(type='Resize', keep_ratio=True),

dict(type='RandomFlip'),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(type='ImageToTensor', keys=['img']),

dict(type='Collect', keys=['img']),

])

]

data = dict(

samples_per_gpu=2, #batch size大小

workers_per_gpu=2, #每个GPU对应线程数

train=dict(

type=dataset_type,

ann_file=data_root + 'annotations/instances_train2017.json',

img_prefix=data_root + 'train2017/',

pipeline=train_pipeline),

val=dict(

type=dataset_type,

ann_file=data_root + 'annotations/instances_val2017.json',

img_prefix=data_root + 'val2017/',

pipeline=test_pipeline),

test=dict(

type=dataset_type,

ann_file=data_root + 'annotations/instances_val2017.json',

img_prefix=data_root + 'val2017/',

pipeline=test_pipeline))

evaluation = dict(metric=['bbox', 'segm'])

configs/_base_/datasets/coco_detection.py 在train pipeline修改Data Augmentation在train

dataset_type = 'CocoDataset'

data_root = 'data/coco/'

img_norm_cfg = dict(

mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)

# 在这里加albumentation的aug

albu_train_transforms = [

dict(

type='ShiftScaleRotate',

shift_limit=0.0625,

scale_limit=0.0,

rotate_limit=0,

interpolation=1,

p=0.5),

dict(

type='RandomBrightnessContrast',

brightness_limit=[0.1, 0.3],

contrast_limit=[0.1, 0.3],

p=0.2),

dict(

type='OneOf',

transforms=[

dict(

type='RGBShift',

r_shift_limit=10,

g_shift_limit=10,

b_shift_limit=10,

p=1.0),

dict(

type='HueSaturationValue',

hue_shift_limit=20,

sat_shift_limit=30,

val_shift_limit=20,

p=1.0)

],

p=0.1),

dict(type='JpegCompression', quality_lower=85, quality_upper=95, p=0.2),

dict(type='ChannelShuffle', p=0.1),

dict(

type='OneOf',

transforms=[

dict(type='Blur', blur_limit=3, p=1.0),

dict(type='MedianBlur', blur_limit=3, p=1.0)

],

p=0.1),

]

train_pipeline = [

dict(type='LoadImageFromFile'),

dict(type='LoadAnnotations', with_bbox=True, with_mask=True),

#据说这里改img_scale即可多尺度训练,但是实际运行报错。

dict(type='Resize', img_scale=(1333, 800), keep_ratio=True),

dict(type='Pad', size_divisor=32),

dict(

type='Albu',

transforms=albu_train_transforms,

bbox_params=dict(

type='BboxParams',

format='pascal_voc',

label_fields=['gt_labels'],

min_visibility=0.0,

filter_lost_elements=True),

keymap={

'img': 'image',

'gt_masks': 'masks',

'gt_bboxes': 'bboxes'

},

]

# 测试的pipeline

test_pipeline = [

dict(type='LoadImageFromFile'),

dict(

type='MultiScaleFlipAug',

# 多尺度测试 TTA在这里修改,注意有些模型不支持多尺度TTA,比如cascade_mask_rcnn,若不支持会提示

# Unimplemented Error

img_scale=(1333, 800),

flip=False,

transforms=[

dict(type='Resize', keep_ratio=True),

dict(type='RandomFlip'),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(type='ImageToTensor', keys=['img']),

dict(type='Collect', keys=['img']),

])

]

# 包含batch_size, workers和路径。

# 路径如果按照上面的设置好就不需要更改

data = dict(

samples_per_gpu=2,

workers_per_gpu=2,

train=dict(

type=dataset_type,

ann_file=data_root + 'annotations/instances_train2017.json',

img_prefix=data_root + 'train2017/',

pipeline=train_pipeline),

val=dict(

type=dataset_type,

ann_file=data_root + 'annotations/instances_val2017.json',

img_prefix=data_root + 'val2017/',

pipeline=test_pipeline),

test=dict(

type=dataset_type,

ann_file=data_root + 'annotations/instances_val2017.json',

img_prefix=data_root + 'val2017/',

pipeline=test_pipeline))

evaluation = dict(interval=1, metric='bbox')-

目录

1、MMdetection系列版本

2、 MMDetection和MMCV兼容版本

3、Installation(Linux系统环境安装)

3.1 搭建基本环境

3.2 安装mmcv-full

1.3 安装其他必要的Python包

1.3 安装 MMDetection

2、 Windows 的mmcv-full安装

windows的本地编译安装

4、Swin Transform 训练自己的数据集

4.1 准备coco数据集

4.2 配置修改工程

4.3 开始训练执行图下命令

4.4、禁用mask

4、遇到的问题及解决办法

5、测试训练好的模型

5、修改分类数组:mmdet/datasets/coco.py

-

one:路径/mmdet/datasets/coco.py的第23行CLASSES two:路径/mmdet/core/evaluation/class_names.py的第67行coco_classes 修改为自己数据集的类别 CLASSES中填写自己的分类:CLASSES = ('person', 'bicycle', 'car') one: @DATASETS.register_module() class CocoDataset(CustomDataset): CLASSES = ('person', 'bicycle', 'car', 'motorcycle', 'airplane', 'bus', 'train', 'truck', 'boat', 'traffic light', 'fire hydrant', 'stop sign', 'parking meter', 'bench', 'bird', 'cat', 'dog', 'horse', 'sheep', 'cow', 'elephant', 'bear', 'zebra', 'giraffe', 'backpack', 'umbrella', 'handbag', 'tie', 'suitcase', 'frisbee', 'skis', 'snowboard', 'sports ball', 'kite', 'baseball bat', 'baseball glove', 'skateboard', 'surfboard', 'tennis racket', 'bottle', 'wine glass', 'cup', 'fork', 'knife', 'spoon', 'bowl', 'banana', 'apple', 'sandwich', 'orange', 'broccoli', 'carrot', 'hot dog', 'pizza', 'donut', 'cake', 'chair', 'couch', 'potted plant', 'bed', 'dining table', 'toilet', 'tv', 'laptop', 'mouse', 'remote', 'keyboard', 'cell phone', 'microwave', 'oven', 'toaster', 'sink', 'refrigerator', 'book', 'clock', 'vase', 'scissors', 'teddy bear', 'hair drier', 'toothbrush')#修改为自己的类别数 #two def coco_classes(): return [ 'person', 'bicycle', 'car', 'motorcycle', 'airplane', 'bus', 'train', 'truck', 'boat', 'traffic_light', 'fire_hydrant', 'stop_sign', 'parking_meter', 'bench', 'bird', 'cat', 'dog', 'horse', 'sheep', 'cow', 'elephant', 'bear', 'zebra', 'giraffe', 'backpack', 'umbrella', 'handbag', 'tie', 'suitcase', 'frisbee', 'skis', 'snowboard', 'sports_ball', 'kite', 'baseball_bat', 'baseball_glove', 'skateboard', 'surfboard', 'tennis_racket', 'bottle', 'wine_glass', 'cup', 'fork', 'knife', 'spoon', 'bowl', 'banana', 'apple', 'sandwich', 'orange', 'broccoli', 'carrot', 'hot_dog', 'pizza', 'donut', 'cake', 'chair', 'couch', 'potted_plant', 'bed', 'dining_table', 'toilet', 'tv', 'laptop', 'mouse', 'remote', 'keyboard', 'cell_phone', 'microwave', 'oven', 'toaster', 'sink', 'refrigerator', 'book', 'clock', 'vase', 'scissors', 'teddy_bear', 'hair_drier', 'toothbrush' ] #修改为自己的数据集名称 6、可以修改学习率(mmdetection/configs/_base_/schedules/schedule_20e.py )

-

这里是调整学习率的schedule的位置,可以设置warmup schedule和衰减策略。 1x, 2x分别对应12epochs和24epochs,20e对应20epochs,这里注意配置都是默认8块gpu的训练,如果用一块gpu训练,需要在lr/8

# optimizer optimizer = dict(type='SGD', lr=0.02/8, momentum=0.9, weight_decay=0.0001) optimizer_config = dict(grad_clip=None) # learning policy lr_config = dict( policy='step', warmup='linear', warmup_iters=500, warmup_ratio=0.001, step=[16, 19]) total_epochs = 20目录

1、MMdetection系列版本

2、 MMDetection和MMCV兼容版本

3、Installation(Linux系统环境安装)

3.1 搭建基本环境

3.2 安装mmcv-full

1.3 安装其他必要的Python包

1.3 安装 MMDetection

2、 Windows 的mmcv-full安装

windows的本地编译安装

4、Swin Transform 训练自己的数据集

4.1 准备coco数据集

4.2 配置修改工程

1、设置类别数(configs/base/models/mask_rcnn_swin_fpn.py):

2、修改配置信息(间隔和加载预训练模型configs/base/default_runtime.py)

3、修改训练尺寸大小、max_epochs按需修改(configs/swin/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py)

4、配置数据集路径、img_scale、samples_per_gpu、workers_per_gpu和增加数据增强(configs/base/datasets/coco_detection.py)

configs/base/datasets/coco_instance.py 文件的最上面指定了数据集的路径,因此在项目下新建 data/coco目录,下面四个子目录 annotations和test2017,train2017,val2017。路径/configs/base/datasets/coco_detection.py,第2行的data_root数据集根目录路径,第8行的img_scale可以根据需要修改,下面train、test、val数据集的具体路径ann_file根据自己数据集修改

5、修改分类数组:mmdet/datasets/coco.py

6、可以修改学习率(mmdetection/configs/_base_/schedules/schedule_20e.py )

这里是调整学习率的schedule的位置,可以设置warmup schedule和衰减策略。 1x, 2x分别对应12epochs和24epochs,20e对应20epochs,这里注意配置都是默认8块gpu的训练,如果用一块gpu训练,需要在lr/8

4.3 开始训练执行图下命令

4.4、禁用mask

4、遇到的问题及解决办法

5、测试训练好的模型

7、载入修改好的配置文件

-

from mmcv import Config import albumentations as albu cfg = Config.fromfile('./configs/dcn/cascade_rcnn_r101_fpn_dconv_c3-c5_20e_coco.py')可以使用以下的命令检查几个重要参数: cfg.data.train cfg.total_epochs cfg.data.samples_per_gpu cfg.resume_from cfg.load_from cfg.data ...改变config中某些参数 from mmdet.apis import set_random_seed # Modify dataset type and path # cfg.dataset_type = 'Xray' # cfg.data_root = 'Xray' cfg.data.samples_per_gpu = 4 cfg.data.workers_per_gpu = 4 # cfg.data.test.type = 'Xray' cfg.data.test.data_root = '../mmdetection_torch_1.5' # cfg.data.test.img_prefix = '../mmdetection_torch_1.5' # cfg.data.train.type = 'Xray' cfg.data.train.data_root = '../mmdetection_torch_1.5' # cfg.data.train.ann_file = 'instances_train2014.json' # # cfg.data.train.classes = classes # cfg.data.train.img_prefix = '../mmdetection_torch_1.5' # cfg.data.val.type = 'Xray' cfg.data.val.data_root = '../mmdetection_torch_1.5' # cfg.data.val.ann_file = 'instances_val2014.json' # # cfg.data.train.classes = classes # cfg.data.val.img_prefix = '../mmdetection_torch_1.5' # modify neck classes number # cfg.model.neck.num_outs # modify num classes of the model in box head # for i in range(len(cfg.model.roi_head.bbox_head)): # cfg.model.roi_head.bbox_head[i].num_classes = 10 # cfg.data.train.pipeline[2].img_scale = (1333,800) cfg.load_from = '../mmdetection_torch_1.5/coco_exps/latest.pth' # cfg.resume_from = './coco_exps_v3/latest.pth' # Set up working dir to save files and logs. cfg.work_dir = './coco_exps_v4' # The original learning rate (LR) is set for 8-GPU training. # We divide it by 8 since we only use one GPU. cfg.optimizer.lr = 0.02 / 8 # cfg.lr_config.warmup = None # cfg.lr_config = dict( # policy='step', # warmup='linear', # warmup_iters=500, # warmup_ratio=0.001, # # [7] yields higher performance than [6] # step=[7]) # cfg.lr_config = dict( # policy='step', # warmup='linear', # warmup_iters=500, # warmup_ratio=0.001, # step=[36,39]) cfg.log_config.interval = 10 # # Change the evaluation metric since we use customized dataset. # cfg.evaluation.metric = 'mAP' # # We can set the evaluation interval to reduce the evaluation times # cfg.evaluation.interval = 12 # # We can set the checkpoint saving interval to reduce the storage cost # cfg.checkpoint_config.interval = 12 # # Set seed thus the results are more reproducible cfg.seed = 0 set_random_seed(0, deterministic=False) cfg.gpu_ids = range(1) # cfg.total_epochs = 40 # # We can initialize the logger for training and have a look # # at the final config used for training print(f'Config:\n{cfg.pretty_text}')给定一个在COCO数据集上训练Faster R-CNN的配置,我们需要修改一些值来使用它在KITTI数据集上训练Faster R-CNN。 from mmdet.apis import set_random_seed # Modify dataset type and path cfg.dataset_type = 'KittiTinyDataset' cfg.data_root = 'kitti_tiny/' cfg.data.test.type = 'KittiTinyDataset' cfg.data.test.data_root = 'kitti_tiny/' cfg.data.test.ann_file = 'train.txt' cfg.data.test.img_prefix = 'training/image_2' cfg.data.train.type = 'KittiTinyDataset' cfg.data.train.data_root = 'kitti_tiny/' cfg.data.train.ann_file = 'train.txt' cfg.data.train.img_prefix = 'training/image_2' cfg.data.val.type = 'KittiTinyDataset' cfg.data.val.data_root = 'kitti_tiny/' cfg.data.val.ann_file = 'val.txt' cfg.data.val.img_prefix = 'training/image_2' # modify num classes of the model in box head cfg.model.roi_head.bbox_head.num_classes = 3 # We can still use the pre-trained Mask RCNN model though we do not need to # use the mask branch cfg.load_from = 'checkpoints/mask_rcnn_r50_caffe_fpn_mstrain-poly_3x_coco_bbox_mAP-0.408__segm_mAP-0.37_20200504_163245-42aa3d00.pth' # Set up working dir to save files and logs. cfg.work_dir = './tutorial_exps' # The original learning rate (LR) is set for 8-GPU training. # We divide it by 8 since we only use one GPU. cfg.optimizer.lr = 0.02 / 8 cfg.lr_config.warmup = None cfg.log_config.interval = 10 # Change the evaluation metric since we use customized dataset. cfg.evaluation.metric = 'mAP' # We can set the evaluation interval to reduce the evaluation times cfg.evaluation.interval = 12 # We can set the checkpoint saving interval to reduce the storage cost cfg.checkpoint_config.interval = 12 # Set seed thus the results are more reproducible cfg.seed = 0 set_random_seed(0, deterministic=False) cfg.gpu_ids = range(1) # We can initialize the logger for training and have a look # at the final config used for training print(f'Config:\n{cfg.pretty_text}')训练一个新的探测器 最后,初始化数据集和检测器,然后训练一个新的检测器!from mmdet.datasets import build_dataset from mmdet.models import build_detector from mmdet.apis import train_detector # Build dataset datasets = [build_dataset(cfg.data.train)] # Build the detector model = build_detector( cfg.model, train_cfg=cfg.get('train_cfg'), test_cfg=cfg.get('test_cfg')) # Add an attribute for visualization convenience model.CLASSES = datasets[0].CLASSES # Create work_dir mmcv.mkdir_or_exist(osp.abspath(cfg.work_dir)) train_detector(model, datasets, cfg, distributed=False, validate=True) -

8、使用Tensorboard进行可视化,查看训练

- 参考博客1

-

如果有在default_runtime中解除注释tensorboard,键入下面的命令可以开启实时更新的tensorboard可视化模块

-

-

在config文件修改如下 log_config = dict( interval=50, hooks=[ dict(type='TextLoggerHook'), dict(type='TensorboardLoggerHook') #生成Tensorboard 日志 ]) 设置之后,会在work_dir目录下生成一个tf_logs目录,使用Tensorboard打开日志 cd /path/to/tf_logs tensorboard --logdir . --host 服务器IP地址 --port 6006 tensorboard 默认端口号是6006,在浏览器中输入http://:6006即可打开tensorboard界面 -

# Load the TensorBoard notebook extension %load_ext tensorboard # logdir需要填入你的work_dir/+tf_logs %tensorboard --logdir=coco_exps_v4/tf_logs9、训练过程中进行模型验证

在config文件中设置val数据集和评估指标

-

# coco支持的metric有['bbox', 'segm', 'proposal', 'proposal_fast'] # voc数据集支持的 metric 有['mAP', 'recall'] evaluation = dict(interval=1, metric=['mAP']) # len(metric) == 1, metric 只能设置一个指标 ———————————————— -

4.3 开始训练执行图下命令

-

python tools/train.py configs\swin\mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py 实际命令根据自己使用的修改,可以看到已经可以训练了,但是这样还是训练的带mask的,还不是真正意义上的目标检测模型。

-

上面的.log、.log.json文件就是训练的日志文件,每训练完一个epoch后目录下还会有对应的以epoch_x.pth的模型文件,最新训练的模型文件命名为latest.pth。

- 上面的文件内容大同小异,有当前时间、epoch次数,迭代次数(配置文件中默认设置50个batch输出一次log信息),学习率、损失函数loss、准确率等信息,可以根据上面的训练信息进行模型的评估与测试,另外可以通过读取.log.json文件进行可视化展示,方便调试。

-

4.4、禁用mask

-

1.路径./configs/base/models/mask_rcnn_swin_fpn.py中第75行use_mask=True 修改为use_mask=False 还需要删除mask_roi_extractor和mask_head两个变量,大概在第63行和68行,这里删除之后注意末尾的逗号和小括号的格式匹配问题 2.路径/configs/swin/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py中: 第26行dict(type=‘LoadAnnotations’, with_bbox=True, with_mask=True)修改为dict(type=‘LoadAnnotations’, with_bbox=True, with_mask=False) 第60行删掉’gt_masks’ 训练时使用下面命令训练: bash tools/dist_train.sh 'configs/swin/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py' 1 --cfg-options model.pretrained='checkpoints/swin_tiny_patch4_window7_224.pth' 其中1为GPU数量,按需修改,预训练模型model.pretrained可选训练后的模型进行推理测试、评估

-

python3 tools/test.py ./config/retinanet/retinanet_r50_fpn_1x_coco.py ./work_dirs/retinanet_r50_fpn_1x_coco/epoch_12.pth --out ./result/result.pkl --eval bbox #保存测试结果和测试结果图片,并且对测试结果进行评估 python tools/test.py work_dirs/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py work_dirs/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco/latest.pth --out inference_dirs/mask_rcnn_swin_tiny_coco_result.pkl --eval bbox --show-dir ./inference_dirs/ #生成其他格式的结果文件 python tools/test.py work_dirs/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py work_dirs/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco/latest.pth --format-only --options "txtfile_prefix=./inference_dirs/mask_rcnn_swin_tiny_coco_result" --eval bbox --show-dir ./inference_dirs/ ./tools/dist_test.sh \ configs/cityscapes/mask_rcnn_r50_fpn_1x_cityscapes.py \ checkpoints/mask_rcnn_r50_fpn_1x_cityscapes_20200227-afe51d5a.pth \ 8 \ --format-only \ --options "txtfile_prefix=./mask_rcnn_cityscapes_test_results"将mmdetection产生的coco(json)格式的测试结果转化成VisDrone19官网所需要的txt格式文件(还包括无标签测试图片产生mmdet所需要的test.json方法)

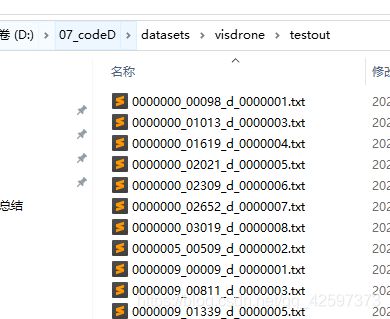

- 最近VisDrone19开放测试服务器了,自己尝试在它的test的图片上给出标签然后提交到官网上去。

使用了mmdetection工具,这个工具出来的结果是coco(json)格式的。

我们需要把它转化成官网需要的visdrone的txt格式才可以提交到服务器上,查看自己的score。

这个转化脚本目前找不到,所以只能自己写了。import json import os import argparse # wangzhifneg write in 2021-6-30 def coco2vistxt(json_path, out_folder): labels = json.load(open(json_path, 'r', encoding='utf-8')) for i in range(len(labels)): # print(len(labels)) 158000 # print(labels[i]['image_id']) # print(labels[i]['bbox']) file_name = labels[i]['image_id'] + '.txt' with open(os.path.join(args.o, file_name), 'a+', encoding='utf-8') as f: l = labels[i]['bbox'] s = [round(i) for i in l] line =str(s)[1:-1].replace(' ','')+ ',' + str(labels[i]['score'])[:6] + ',' + str(labels[i]['category_id']) + ',' + str('-1') + ',' + str('-1') f.write(line+'\n') if __name__ == '__main__': parser = argparse.ArgumentParser(description='coco test json to visdrone txt file.') parser.add_argument('-j', help='JSON file', default='D:/07_codeD/datasets/visdrone/cascadevis10test.bbox.json') parser.add_argument('-o', help='path to output folder', default='D:/07_codeD/datasets/visdrone/testout') args = parser.parse_args() coco2vistxt(json_path=args.j,out_folder=args.o)简单说一下:

cascadevis10test.bbox.json这个是我们mmdetection产生的json文件,里面有我们对测试图片产生的coco格式的结果。

testout10cascade是传出txt存放的目录。

具体如下图: -

PS:

mmdetection需要把测试集(完全是图片,没有标签)转化成一个带有图片信息的json文件才可以进行coco格式的test。

这部分代码官方没有,要自己写,我找了好久才找到可以参考的,自己改动了下。具体代码如下。 -

import os import PIL.Image as Image import json root = 'D:/07_codeD/datasets/visdrone/VisDrone2019-DET-test-challenge' test_json = os.path.join(root, 'images') # test image root out_file = os.path.join(root, 'test.json') # test json output path 生成json的位置 data = {} # 这部分如果不同数据集可以替换 data['categories'] = [{"id": 1, "name": "Pedestrain", "supercategory": "none"}, {"id": 2, "name": "People", "supercategory": "none"}, {"id": 3, "name": "Bicycle", "supercategory": "none"}, {"id": 4, "name": "Car", "supercategory": "none"}, {"id": 5, "name": "Van", "supercategory": "none"}, {"id": 6, "name": "Truck", "supercategory": "none"}, {"id": 7, "name": "Tricycle", "supercategory": "none"}, {"id": 8, "name": "Awning-tricycle", "supercategory": "none"}, {"id": 9, "name": "Bus", "supercategory": "none"}, {"id": 10, "name": "Motor", "supercategory": "none"}] # 数据集的类别 images = [] for name in os.listdir(test_json): file_path = os.path.join(test_json, name) file = Image.open(file_path) tmp = dict() tmp['id'] = name[:-4] # idx += 1 tmp['width'] = file.size[0] tmp['height'] = file.size[1] tmp['file_name'] = name images.append(tmp) data['images'] = images with open(out_file, 'w') as f: json.dump(data, f) # with open(out_file, 'r') as f: # test = json.load(f) # for i in test['categories']: # print(i['id']) print('finish')这里注意VisDrone是12个类,其中1和11我们是不需要的,大家建立自己的coco(json)和voc(xml)标签的时候就要去除。

我们上面这个代码可以将我们的test(完全是图片),生成相应的test.json(这里面没有标签,只有图片信息),然后将test.json写入到我们的mmdetection的config中的test部分就好了。

(这部分代码mmdetection官方居然没有。。。资料也好少,之前搞得我恼火得很,这里给后面想做这个数据集的给点参考)

-

4、遇到的问题及解决办法

1.AssertionError: Incompatible version of pycocotools is installed. Run pip uninstall pycocotools first. Then run pip install mmpycocotools to install open-mmlab forked pycocotools.

解决办法已经给出了,命令行中:

pip uninstall pycocotoolspip install mmpycocotools2.KeyError: "CascadeRCNN: 'backbone.layers.0.blocks.0.attn.relative_position_bias_table'"

预训练模型加载错误,应该使用imagenet预训练的模型,而不是在coco上微调的模型,这个错误我也很无奈啊,跟我预想的使用coco模型预训练不一样,官方github也有人提出相同问题,解决办法就是不加载预训练模型从头训练,或者在https://github.com/microsoft/Swin-Transformer上下载分类的模型。

3.import pycocotools._mask as _mask

File "pycocotools/_mask.pyx", line 1, in init pycocotools._mask

ValueError: numpy.ndarray size changed, may indicate binary incompatibility. Expected 88 from C header, got 80 from PyObject

numpy版本问题,使用pip install --upgrade numpy升级numpy版本

-

5、测试训练好的模型

-

添加一个自己的图片在demo目录下,执行:

python demo/image_demo.py demo/000019.jpg configs\swin\mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco.py work_dirs/mask_rcnn_swin_tiny_patch4_window7_mstrain_480-800_adamw_3x_coco/latest.pthlatest.pth 就是自己训练好的最新的权重文件,默认会放在workdir下。

-

不输出实例分割图

-

demo/image_demo.py 做如下修改: # test a single image result = inference_detector(model, args.img) new_result = result[0] # show the results show_result_pyplot(model, args.img, new_result, score_thr=args.score_thr)三、训练 cascade_mask_rcnn_swin

与之前训练mask_rcnn_swin_一样,但是如果是单卡多修改如下部分

configs/swin/cascade_mask_rcnn_swin_small_patch4_window7_mstrain_480-800_giou_4conv1f_adamw_3x_coco.py 文件中,所有的 SyncBN 改为 BN。