Deep Learning 07: Handling Multi-Dimensional Feature Input

Contents

1. Classification problems

2. Multi-dimensional feature input

2.1 Logistic regression model with high-dimensional input

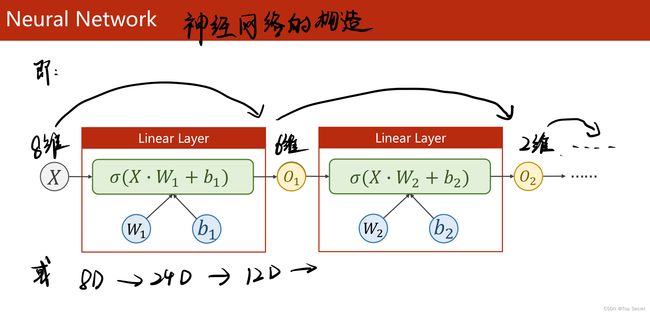

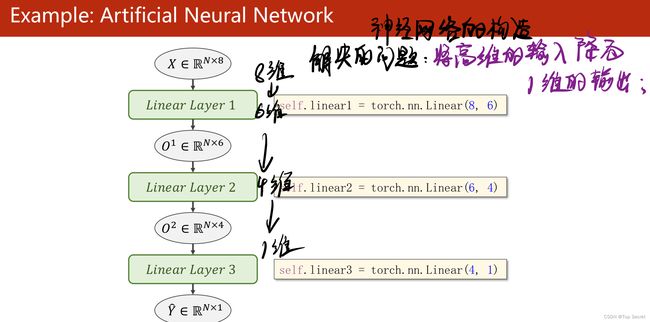

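2.2 How neural network layers are built

3. Example: classifying the diabetes dataset with a logistic regression model

3.1 Preparing the dataset (loading the data)

3.2 Building the model

3.3 Computing the loss and choosing an optimizer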

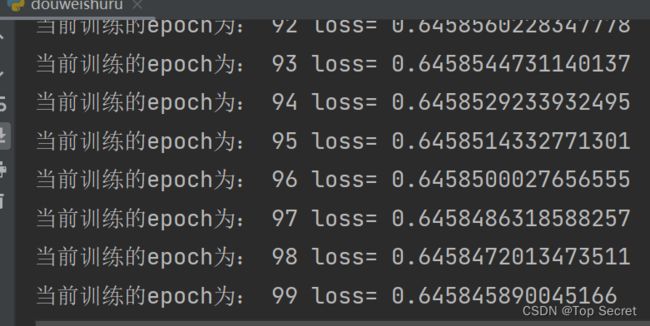

3.4 Training

3.5 Overview of activation functions

4. Code

4.1 Code from the video lecture

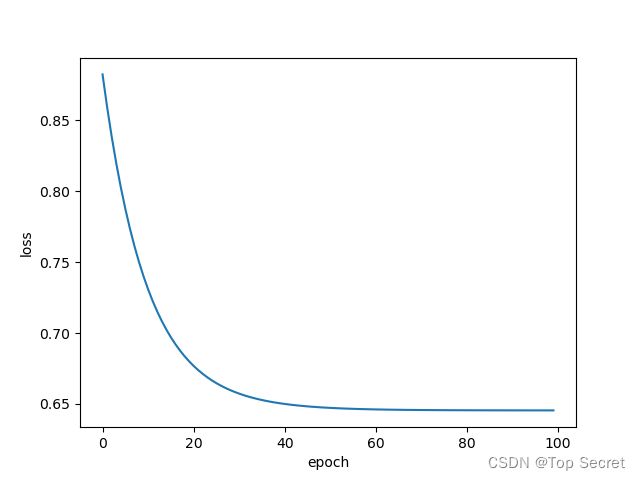

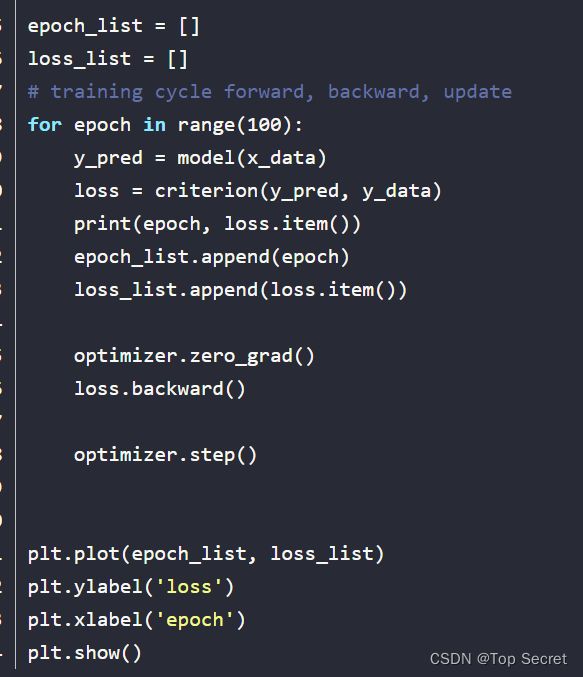

4.2 Plotting the training results

4.3 Inspecting the parameters of specific layers

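Teacher Liu's lecture video: https://www.bilibili.com/video/BV1Y7411d7Ys?p=7

Other reference notes: PyTorch 深度学习实践 第7讲 (PyTorch Deep Learning Practice, Lecture 7), 错错莫's blog on CSDN

PyTorch学习(六)--处理多维特征的输入 (PyTorch Learning (6): Handling Multi-Dimensional Feature Input), 陈同学爱吃方便面's blog on CSDN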

1. Classification problems

2. Multi-dimensional feature input

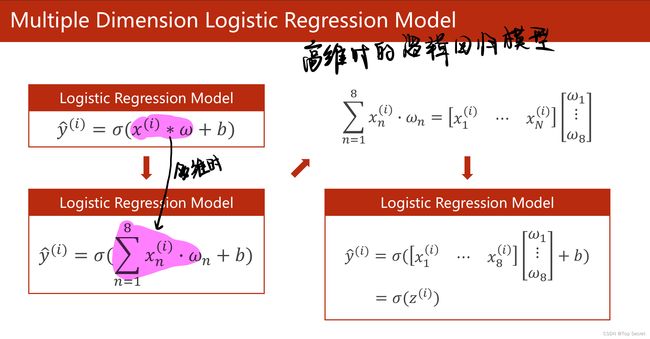

2.1 Logistic regression model with high-dimensional input

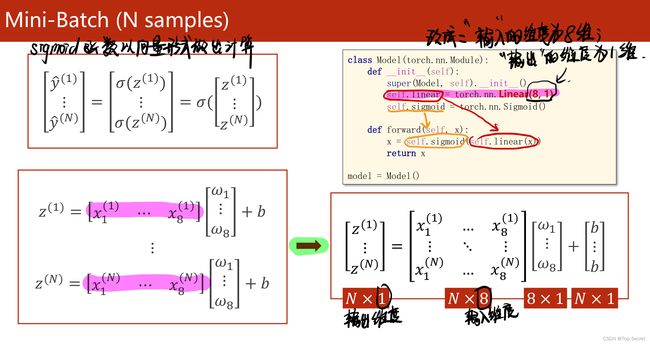

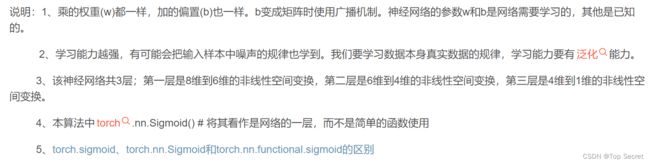

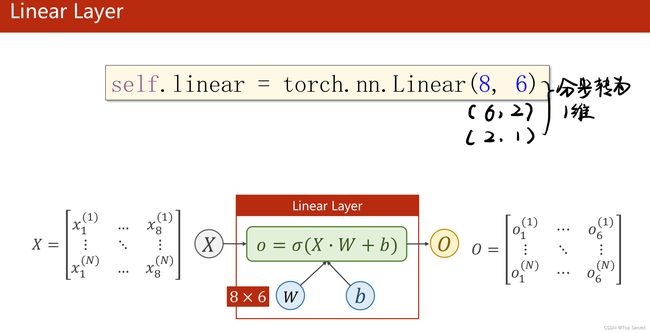
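To make the vectorized form concrete: for one sample with 8 features the model computes ŷ = σ(Σₙ xₙ·wₙ + b) for n = 1..8, and stacking N samples as the rows of a matrix X turns all N of these sums into one matrix product followed by an element-wise sigmoid. The sketch below is a minimal check of that equivalence, assuming randomly generated inputs (not the diabetes data) and using `torch.nn.Linear(8, 1)` to hold the weights w and bias b.

```python
import torch

torch.manual_seed(0)

N, D = 5, 8                     # 5 made-up samples, 8 features each
X = torch.randn(N, D)           # each row is one sample x^(i)

linear = torch.nn.Linear(D, 1)  # weight shape (1, 8), bias shape (1,)
y_hat_batch = torch.sigmoid(linear(X))  # vectorized: sigma(X @ w^T + b), shape (N, 1)

# The same computation written out per sample: sigma(sum_n x_n * w_n + b)
w = linear.weight.detach()[0]   # shape (8,)
b = linear.bias.detach()[0]     # 0-d tensor
y_hat_loop = torch.stack(
    [torch.sigmoid((X[i] * w).sum() + b) for i in range(N)]
).unsqueeze(1)                  # shape (N, 1)

print(y_hat_batch.shape)  # torch.Size([5, 1])
print(torch.allclose(y_hat_batch.detach(), y_hat_loop, atol=1e-6))  # True
```

The batched form is what the model in section 4 relies on: `x_data` holds all samples at once, so a single call to the model computes every ŷ⁽ⁱ⁾ together.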

2.2 How neural network layers are built

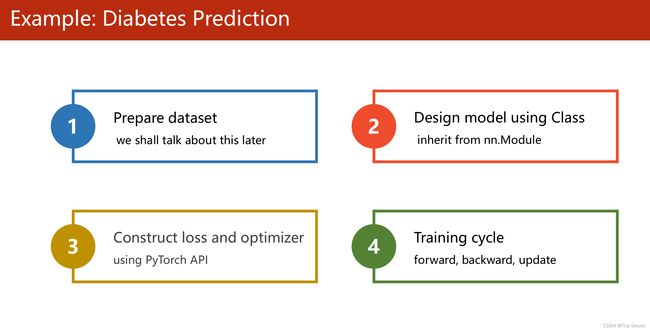
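Each `torch.nn.Linear(in, out)` maps a batch of shape (N, in) to (N, out), so stacking layers with a non-linearity in between lets the feature dimension shrink step by step from 8 to 1. A short sketch tracing the shapes through the 8 → 6 → 4 → 1 stack used later in this post, assuming 10 random input samples:

```python
import torch

torch.manual_seed(0)

# Layer sizes taken from the model in this post: 8 -> 6 -> 4 -> 1
linear1 = torch.nn.Linear(8, 6)
linear2 = torch.nn.Linear(6, 4)
linear3 = torch.nn.Linear(4, 1)

x = torch.randn(10, 8)              # 10 made-up samples with 8 features
h1 = torch.sigmoid(linear1(x))      # (10, 8) -> (10, 6)
h2 = torch.sigmoid(linear2(h1))     # (10, 6) -> (10, 4)
y_hat = torch.sigmoid(linear3(h2))  # (10, 4) -> (10, 1)

for name, t in [("x", x), ("h1", h1), ("h2", h2), ("y_hat", y_hat)]:
    print(name, tuple(t.shape))

# nn.Linear(in, out) stores its weight as (out, in): one row per output unit
print(tuple(linear1.weight.shape))  # (6, 8)
```

Without the sigmoid between layers, the three linear maps would collapse into a single linear map from 8 features to 1, so the non-linearity is what makes the extra layers useful.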

3. Example: classifying the diabetes dataset with a logistic regression model

3.1 Preparing the dataset (loading the data)

Dataset download link (Baidu Netdisk): https://pan.baidu.com/s/1UKLJpSkZ3dsxh-FcTPJaoQ

Extraction code: 2022

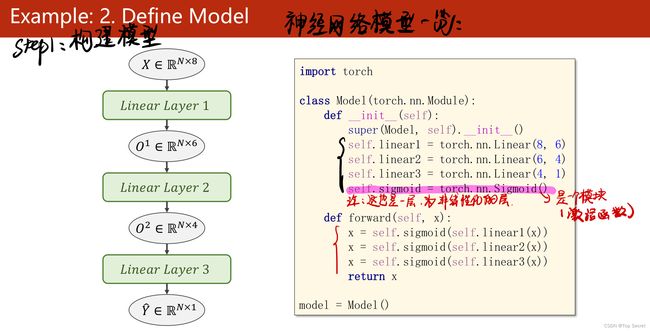
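The slicing in the loading code is easy to get wrong: `xy[:, [-1]]` (a list index) keeps the label as a 2-D column of shape (N, 1), while `xy[:, -1]` (a plain integer index) gives a 1-D array of shape (N,). The column form matters because the model outputs shape (N, 1) and `BCELoss` expects the target to have the same shape as the prediction. The sketch below uses a small made-up array in place of the downloaded file so it runs on its own.

```python
import numpy as np
import torch

# Made-up stand-in for the diabetes data: 3 rows, 8 feature columns + 1 label column.
# With the real file this array would come from np.loadtxt(..., delimiter=',', dtype=np.float32).
xy = np.arange(27, dtype=np.float32).reshape(3, 9)

x_data = torch.from_numpy(xy[:, :-1])  # all rows, every column except the last
y_col = torch.from_numpy(xy[:, [-1]])  # list index [-1]: 2-D, shape (3, 1)
y_flat = torch.from_numpy(xy[:, -1])   # integer index -1: 1-D, shape (3,)

print(x_data.shape)  # torch.Size([3, 8])
print(y_col.shape)   # torch.Size([3, 1])
print(y_flat.shape)  # torch.Size([3])
```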

3.2 Building the model

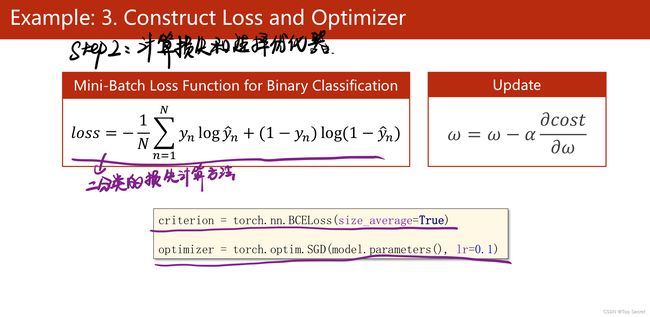
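The model here is three stacked `Linear` layers with a sigmoid after each. As an alternative to the class-based `Model` in section 4 (not the lecture's code), the same architecture can be sketched with `torch.nn.Sequential`; counting its parameters is a quick sanity check on the layer sizes.

```python
import torch

# Same 8 -> 6 -> 4 -> 1 architecture with sigmoid activations, written with
# nn.Sequential instead of a custom nn.Module subclass
model = torch.nn.Sequential(
    torch.nn.Linear(8, 6), torch.nn.Sigmoid(),
    torch.nn.Linear(6, 4), torch.nn.Sigmoid(),
    torch.nn.Linear(4, 1), torch.nn.Sigmoid(),
)

# Parameter count: (8*6 + 6) + (6*4 + 4) + (4*1 + 1) = 54 + 28 + 5 = 87
n_params = sum(p.numel() for p in model.parameters())
print(n_params)  # 87

out = model(torch.randn(2, 8))  # 2 random samples
print(out.shape)  # torch.Size([2, 1])
```

The subclass form in the lecture is more flexible (arbitrary logic in `forward`), while `Sequential` is shorter when the layers simply run one after another.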

3.3 Computing the loss and choosing an optimizer

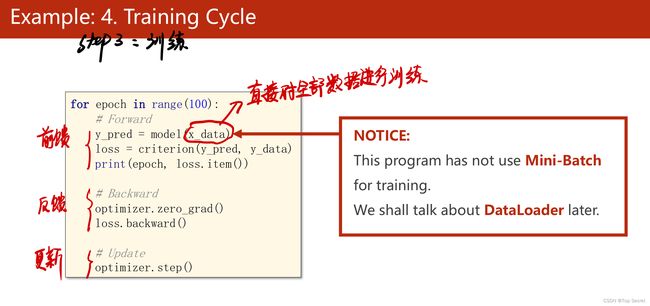
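`BCELoss` computes binary cross-entropy, -[y·log ŷ + (1 - y)·log(1 - ŷ)], averaged over the samples when `reduction='mean'` (the current replacement for the deprecated `size_average=True`). A small check, with made-up predictions and labels, that the built-in loss matches the formula written out by hand and that `reduction='sum'` is just N times the mean:

```python
import torch

# Made-up sigmoid outputs and 0/1 labels, both shaped (N, 1) as in the post
y_pred = torch.tensor([[0.9], [0.2], [0.6]])
y_true = torch.tensor([[1.0], [0.0], [1.0]])

loss = torch.nn.BCELoss(reduction='mean')(y_pred, y_true)
loss_sum = torch.nn.BCELoss(reduction='sum')(y_pred, y_true)

# Binary cross-entropy written out by hand, averaged over the 3 samples
manual = -(y_true * torch.log(y_pred) + (1 - y_true) * torch.log(1 - y_pred)).mean()

print(loss.item(), manual.item())
print(torch.allclose(loss, manual))        # True
print(torch.allclose(loss_sum, 3 * loss))  # True
```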

3.4 Training

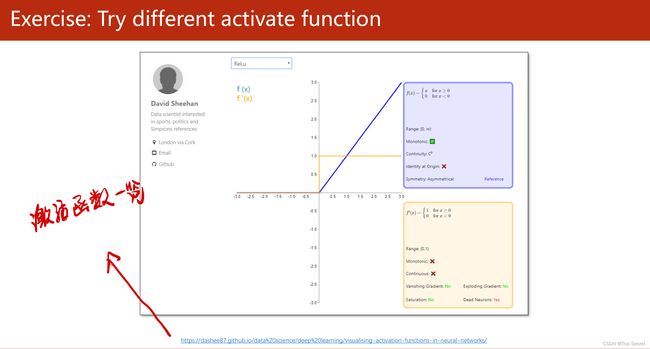
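Each epoch runs the same three steps: forward (compute ŷ and the loss), backward (`loss.backward()` fills in the gradients), and update (`optimizer.step()`). Below is a minimal sketch of that cycle on synthetic data; the labeling rule, sample count and seed are assumptions, not from the lecture. At the end, a 0.5 threshold on the sigmoid output turns probabilities into class predictions.

```python
import torch

torch.manual_seed(0)

# Made-up data: 100 samples, 8 features; label is 1 when the feature sum is positive
x = torch.randn(100, 8)
y = (x.sum(dim=1, keepdim=True) > 0).float()  # shape (100, 1)

model = torch.nn.Sequential(
    torch.nn.Linear(8, 6), torch.nn.Sigmoid(),
    torch.nn.Linear(6, 4), torch.nn.Sigmoid(),
    torch.nn.Linear(4, 1), torch.nn.Sigmoid(),
)
criterion = torch.nn.BCELoss(reduction='mean')
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

losses = []
for epoch in range(100):
    y_pred = model(x)          # forward: the whole dataset in one batch
    loss = criterion(y_pred, y)
    losses.append(loss.item())

    optimizer.zero_grad()      # clear gradients left over from the previous step
    loss.backward()            # backward: compute gradients
    optimizer.step()           # update: w <- w - lr * grad

# Convert probabilities to 0/1 predictions with a 0.5 threshold
with torch.no_grad():
    acc = ((model(x) > 0.5).float() == y).float().mean().item()

print(f"first loss {losses[0]:.4f}, last loss {losses[-1]:.4f}, accuracy {acc:.2f}")
```

With three sigmoid layers and lr=0.1 the loss falls slowly, so 100 epochs mainly shows the trend; more epochs or a larger learning rate would be needed for a good fit.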

3.5 Overview of activation functions

http://rasbt.github.io/mlxtend/user_guide/general_concepts/activation-functions/#activation-functions-for-artificial-neural-networks


torch.nn — PyTorch 1.12 documentation
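The linked pages compare common activation functions. Below is a quick sketch of three of them in PyTorch, plus a hypothetical variant of this post's model (`ReluModel`, not from the lecture) that uses ReLU in the hidden layers. The variant keeps the sigmoid on the output layer because `BCELoss` expects predictions in [0, 1], and ReLU does not guarantee that.

```python
import torch

z = torch.tensor([-2.0, 0.0, 2.0])

print(torch.sigmoid(z))  # values in (0, 1); sigmoid(0) = 0.5
print(torch.tanh(z))     # values in (-1, 1); tanh(0) = 0
print(torch.relu(z))     # max(0, z) element-wise

class ReluModel(torch.nn.Module):
    """Hypothetical variant: ReLU in the hidden layers, sigmoid on the output."""

    def __init__(self):
        super().__init__()
        self.linear1 = torch.nn.Linear(8, 6)
        self.linear2 = torch.nn.Linear(6, 4)
        self.linear3 = torch.nn.Linear(4, 1)
        self.relu = torch.nn.ReLU()
        self.sigmoid = torch.nn.Sigmoid()

    def forward(self, x):
        x = self.relu(self.linear1(x))
        x = self.relu(self.linear2(x))
        return self.sigmoid(self.linear3(x))  # keep outputs in (0, 1) for BCELoss

torch.manual_seed(0)
out = ReluModel()(torch.randn(4, 8))
print(out.shape)  # torch.Size([4, 1])
```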

4. Code

4.1 Code from the video lecture

```python
import torch
import numpy as np

# Load the data
xy = np.loadtxt('diabetes.csv.gz', delimiter=',', dtype=np.float32)
x_data = torch.from_numpy(xy[:, :-1])   # ":-1": drop the last column of each row (keep the 8 features)
y_data = torch.from_numpy(xy[:, [-1]])  # [-1]: keep only the last column (the label), shape (N, 1)

# Step 1: define the model and instantiate it
class Model(torch.nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        self.linear1 = torch.nn.Linear(8, 6)  # first layer: 8 input features -> 6
        self.linear2 = torch.nn.Linear(6, 4)
        self.linear3 = torch.nn.Linear(4, 1)
        self.sigmoid = torch.nn.Sigmoid()     # non-linearity

    # Forward computation
    def forward(self, x):
        x = self.sigmoid(self.linear1(x))
        x = self.sigmoid(self.linear2(x))
        x = self.sigmoid(self.linear3(x))
        return x

model = Model()  # instantiate

# Step 2: compute the loss and choose an optimizer
# BCELoss: binary cross-entropy loss for two-class problems.
# size_average=True is deprecated; reduction='mean' is the current equivalent.
criterion = torch.nn.BCELoss(reduction='mean')
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)  # choose the optimizer

for epoch in range(100):
    # Forward pass
    y_pred = model(x_data)
    loss = criterion(y_pred, y_data)
    print("epoch:", epoch, "loss =", loss.item())

    # Backward pass
    optimizer.zero_grad()  # reset gradients to zero
    loss.backward()

    # Update
    optimizer.step()
```

4.2 Plotting the training results

```python
import numpy as np
import torch
import matplotlib.pyplot as plt

# prepare dataset
xy = np.loadtxt('diabetes.csv', delimiter=',', dtype=np.float32)
x_data = torch.from_numpy(xy[:, :-1])   # first ':' selects all rows; ':-1' selects every column except the last
y_data = torch.from_numpy(xy[:, [-1]])  # indexing with the list [-1] keeps a 2-D matrix of shape (N, 1)

# design model using class
class Model(torch.nn.Module):
    def __init__(self):
        super(Model, self).__init__()
        self.linear1 = torch.nn.Linear(8, 6)  # each input sample x has 8 features
        self.linear2 = torch.nn.Linear(6, 4)
        self.linear3 = torch.nn.Linear(4, 1)
        self.sigmoid = torch.nn.Sigmoid()     # treated as a layer of the network, not just a plain function call

    def forward(self, x):
        x = self.sigmoid(self.linear1(x))
        x = self.sigmoid(self.linear2(x))
        x = self.sigmoid(self.linear3(x))  # y hat
        return x

model = Model()

# construct loss and optimizer
# criterion = torch.nn.BCELoss(size_average = True)  # deprecated form
criterion = torch.nn.BCELoss(reduction='mean')
optimizer = torch.optim.SGD(model.parameters(), lr=0.1)

epoch_list = []
loss_list = []

# training cycle: forward, backward, update
for epoch in range(100):
    y_pred = model(x_data)
    loss = criterion(y_pred, y_data)
    print(epoch, loss.item())
    epoch_list.append(epoch)
    loss_list.append(loss.item())

    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

plt.plot(epoch_list, loss_list)
plt.ylabel('loss')
plt.xlabel('epoch')
plt.show()
```

4.3 Inspecting the parameters of specific layers

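```python
# Inspect parameters of the trained `model` from the code above
# Parameters of the first layer:
layer1_weight = model.linear1.weight.data
layer1_bias = model.linear1.bias.data
print("layer1_weight", layer1_weight)
print("layer1_weight.shape", layer1_weight.shape)  # torch.Size([6, 8])
print("layer1_bias", layer1_bias)
print("layer1_bias.shape", layer1_bias.shape)      # torch.Size([6])
```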
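Instead of reading each layer by hand, `named_parameters()` lists every learnable tensor in the model together with its name. A minimal sketch, redefining the same 8 → 6 → 4 → 1 model so the snippet runs on its own; note that `nn.Linear` stores its weight as (out_features, in_features), which is why `linear1.weight` has shape (6, 8).

```python
import torch

class Model(torch.nn.Module):
    # Same architecture as in 4.1 / 4.2, repeated so this snippet is self-contained
    def __init__(self):
        super().__init__()
        self.linear1 = torch.nn.Linear(8, 6)
        self.linear2 = torch.nn.Linear(6, 4)
        self.linear3 = torch.nn.Linear(4, 1)
        self.sigmoid = torch.nn.Sigmoid()  # has no learnable parameters

    def forward(self, x):
        x = self.sigmoid(self.linear1(x))
        x = self.sigmoid(self.linear2(x))
        return self.sigmoid(self.linear3(x))

model = Model()

# Every learnable tensor, in registration order, with its shape
shapes = {name: tuple(p.shape) for name, p in model.named_parameters()}
for name, shape in shapes.items():
    print(name, shape)
# linear1.weight (6, 8)
# linear1.bias (6,)
# linear2.weight (4, 6)
# linear2.bias (4,)
# linear3.weight (1, 4)
# linear3.bias (1,)
```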