Hadoop(MapReduce)

1. MapReduce Overview

1.1 Definition

1.2 Advantages and Disadvantages

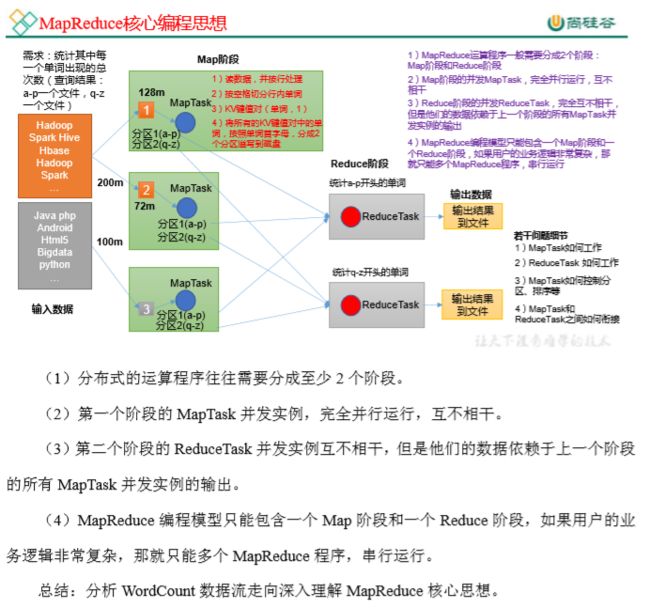

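1.3 MapReduce Core Idea

1.4 MapReduce Processes

1.5 Official WordCount Source Code

Decompiling the official example with a decompiler shows that the WordCount case consists of a Map class, a Reduce class, and a driver class, and that the data types it uses are Hadoop's own serializable wrapper types.

1.6 Common Data Serialization Types

1.7 MapReduce Programming Conventions

1.8 WordCount Hands-On Example

(3) Environment setup

a. Create a Maven project named MapReduceDemo.

b. Add the following dependencies to pom.xml: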

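    <dependencies>
        <dependency>
            <groupId>org.apache.hadoop</groupId>
            <artifactId>hadoop-client</artifactId>
            <version>3.1.3</version>
        </dependency>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.12</version>
        </dependency>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-log4j12</artifactId>
            <version>1.7.30</version>
        </dependency>
    </dependencies>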

c. In the project's src/main/resources directory, create a file named "log4j.properties" with the following content:

    log4j.rootLogger=INFO, stdout
    log4j.appender.stdout=org.apache.log4j.ConsoleAppender
    log4j.appender.stdout.layout=org.apache.log4j.PatternLayout
    log4j.appender.stdout.layout.ConversionPattern=%d %p [%c] - %m%n
    log4j.appender.logfile=org.apache.log4j.FileAppender
    log4j.appender.logfile.File=target/spring.log
    log4j.appender.logfile.layout=org.apache.log4j.PatternLayout
    log4j.appender.logfile.layout.ConversionPattern=%d %p [%c] - %m%n

d. Create the package com.xxxx.mapreduce.wordcount.

e. Write the Mapper class

    package com.xxxx.mapreduce.wordcount;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    import java.io.IOException;

    /**
     * KEYIN    key type of the map-phase input: LongWritable
     * VALUEIN  value type of the map-phase input: Text
     * KEYOUT   key type of the map-phase output: Text
     * VALUEOUT value type of the map-phase output: IntWritable
     */
    public class WordCountMapper extends Mapper<LongWritable, Text, Text, IntWritable> {

        private Text outK = new Text();
        private IntWritable outV = new IntWritable(1);

        @Override
        protected void map(LongWritable key, Text value, Mapper<LongWritable, Text, Text, IntWritable>.Context context) throws IOException, InterruptedException {
            // 1. Get one line, e.g. "atguigu atguigu"
            String line = value.toString();

            // 2. Split it into words: "atguigu", "atguigu"
            String[] words = line.split(" ");

            // 3. Write out each word in turn
            for (String word : words) {
                // Set the output key
                outK.set(word);
                // Emit (word, 1)
                context.write(outK, outV);
            }
        }
    }

f. Write the Reducer class

    package com.xxxx.mapreduce.wordcount;

    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    import java.io.IOException;

    /**
     * KEYIN    key type of the reduce-phase input: Text
     * VALUEIN  value type of the reduce-phase input: IntWritable
     * KEYOUT   key type of the reduce-phase output: Text
     * VALUEOUT value type of the reduce-phase output: IntWritable
     */
    public class WordCountReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

        private IntWritable outV = new IntWritable();

        @Override
        protected void reduce(Text key, Iterable<IntWritable> values, Reducer<Text, IntWritable, Text, IntWritable>.Context context) throws IOException, InterruptedException {
            int sum = 0;

            // 1. Sum the values for this key, e.g. atguigu (1,1)
            for (IntWritable value : values) {
                sum += value.get();
            }
            outV.set(sum);

            // 2. Write out (word, total)
            context.write(key, outV);
        }
    }

g. Write the Driver class

    package com.xxxx.mapreduce.wordcount;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    import java.io.IOException;

    /**
     * Driver class for the WordCount job.
     *
     * @author : li.linnan
     * @create : 2022/9/28
     */
    public class WordCountDriver {

        public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {
            // 1. Get the configuration and the Job object
            Configuration conf = new Configuration();
            Job job = Job.getInstance(conf);

            // 2. Associate the jar that contains this Driver
            job.setJarByClass(WordCountDriver.class);

            // 3. Associate the Mapper and Reducer classes
            job.setMapperClass(WordCountMapper.class);
            job.setReducerClass(WordCountReducer.class);

            // 4. Set the key/value types of the Mapper output
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(IntWritable.class);

            // 5. Set the key/value types of the final output
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);

            // 6. Set the input and output paths
            FileInputFormat.setInputPaths(job, new Path("D:\\input\\hello.txt"));
            FileOutputFormat.setOutputPath(job, new Path("D:\\input\\result"));

            // 7. Submit the job and wait for it to finish
            boolean result = job.waitForCompletion(true);
            System.exit(result ? 0 : 1);
        }
    }

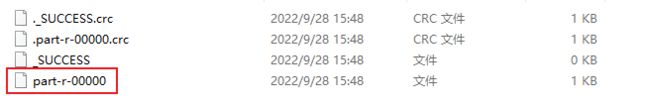

Output file contents:

atguigu 2

banzhang 1

cls 3

hadoop 1

jiao 1

ss 2

xue 1
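To make the data flow behind counts like these easier to follow, here is a minimal JDK-only simulation of what the Mapper and Reducer above do. It is a sketch, not Hadoop code: `WordCountSimulation`, its method names, and the input lines are all hypothetical (the original hello.txt is not reproduced here), and a `TreeMap` stands in for the framework's grouping and sorting of keys.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// JDK-only simulation of the WordCount data flow; no Hadoop classes are used.
class WordCountSimulation {

    // Map step: emit a (word, 1) pair for every space-separated token in a line.
    static List<Map.Entry<String, Integer>> map(String line) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String word : line.split(" ")) {
            pairs.add(new AbstractMap.SimpleEntry<>(word, 1));
        }
        return pairs;
    }

    // Reduce step: sum all values collected for one key.
    static int reduce(List<Integer> values) {
        int sum = 0;
        for (int v : values) {
            sum += v;
        }
        return sum;
    }

    // Driver: map every line, group values by key in sorted order, then reduce.
    static Map<String, Integer> run(List<String> lines) {
        Map<String, List<Integer>> grouped = new TreeMap<>();
        for (String line : lines) {
            for (Map.Entry<String, Integer> pair : map(line)) {
                grouped.computeIfAbsent(pair.getKey(), k -> new ArrayList<>()).add(pair.getValue());
            }
        }
        Map<String, Integer> counts = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> entry : grouped.entrySet()) {
            counts.put(entry.getKey(), reduce(entry.getValue()));
        }
        return counts;
    }

    public static void main(String[] args) {
        // Hypothetical input lines, not the original hello.txt.
        List<String> lines = Arrays.asList("atguigu atguigu", "ss ss", "hadoop");
        run(lines).forEach((word, count) -> System.out.println(word + "\t" + count));
    }
}
```

In the real job the grouping step is performed by the framework between the map and reduce phases, which is why the Reducer receives an `Iterable` of values per key.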

1.8.2 Submitting to the Cluster for Testing

Testing on the cluster

(1) To build the jar with Maven, add the following packaging plugin configuration:

    <build>
        <plugins>
            <plugin>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.6.1</version>
                <configuration>
                    <source>1.8</source>
                    <target>1.8</target>
                </configuration>
            </plugin>
            <plugin>
                <artifactId>maven-assembly-plugin</artifactId>
                <configuration>
                    <descriptorRefs>
                        <descriptorRef>jar-with-dependencies</descriptorRef>
                    </descriptorRefs>
                </configuration>
                <executions>
                    <execution>
                        <id>make-assembly</id>
                        <phase>package</phase>
                        <goals>
                            <goal>single</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>

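(2) Change the input and output paths so they are read from the command-line arguments:

    // 6. Set the input and output paths
    FileInputFormat.setInputPaths(job, new Path(args[0]));
    FileOutputFormat.setOutputPath(job, new Path(args[1]));

(3) Package the program into a jar.

(4) Rename the jar without dependencies to wc.jar and copy it to the /opt/module/hadoop-3.1.3 directory on the Hadoop cluster (you can simply drag and drop the jar file over).

(5) Run the command: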

[lln@hadoop102 hadoop-3.1.3]$ hadoop jar wc.jar com.xxxx.mapreduce.wordcount.WordCountDriver /input /output
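Once the paths come from `args[0]` and `args[1]`, a missing argument only shows up as an `ArrayIndexOutOfBoundsException` at run time. The following JDK-only sketch shows one way a driver could check its arguments first. `DriverArgs` and its fields are hypothetical names, and it assumes the driver class name is consumed by `hadoop jar` as in the command above, so that `/input` and `/output` arrive as the first two arguments.

```java
// JDK-only sketch of command-line validation for a driver; all names are hypothetical.
class DriverArgs {
    final String inputPath;
    final String outputPath;

    private DriverArgs(String inputPath, String outputPath) {
        this.inputPath = inputPath;
        this.outputPath = outputPath;
    }

    // Expects exactly two arguments: <input path> <output path>.
    static DriverArgs parse(String[] args) {
        if (args == null || args.length != 2) {
            throw new IllegalArgumentException(
                    "Usage: hadoop jar wc.jar <driver class> <input path> <output path>");
        }
        return new DriverArgs(args[0], args[1]);
    }

    public static void main(String[] args) {
        // Same two paths as in the command above.
        DriverArgs parsed = parse(new String[] {"/input", "/output"});
        System.out.println(parsed.inputPath + " -> " + parsed.outputPath);
    }
}
```

A driver could call `DriverArgs.parse(args)` before step 6 and pass the two fields to `new Path(...)`. Note also that the output directory must not already exist, otherwise the job fails when the output specification is checked.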

2. Hadoop Serialization

2.1 Overview

2.2 Implementing the Serialization Interface (Writable) in a Custom Bean

2.3 Serialization Hands-On Example

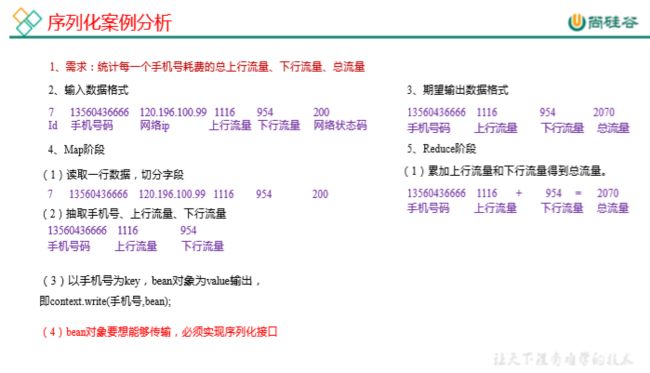

(1) Requirements

(2) Requirements analysis

(3) Write the MapReduce program

a. Write the bean object for the traffic statistics

    package com.xxxx.mapreduce.writable;

    import org.apache.hadoop.io.Writable;

    import java.io.DataInput;
    import java.io.DataOutput;
    import java.io.IOException;

    /**
     * 1. Define a class that implements the Writable interface
     * 2. Override the serialization and deserialization methods
     * 3. Provide a no-argument constructor
     * 4. Override toString
     *
     * @author : li.linnan
     * @create : 2022/9/29
     */
    public class FlowBean implements Writable {

        private long upFlow;   // upstream traffic
        private long downFlow; // downstream traffic
        private long sumFlow;  // total traffic

        // No-argument constructor
        public FlowBean() {
        }

        public long getUpFlow() {
            return upFlow;
        }

        public void setUpFlow(long upFlow) {
            this.upFlow = upFlow;
        }

        public long getDownFlow() {
            return downFlow;
        }

        public void setDownFlow(long downFlow) {
            this.downFlow = downFlow;
        }

        public long getSumFlow() {
            return sumFlow;
        }

        public void setSumFlow(long sumFlow) {
            this.sumFlow = sumFlow;
        }

        // Convenience overload: total = upstream + downstream
        public void setSumFlow() {
            this.sumFlow = this.upFlow + this.downFlow;
        }

        // Serialization
        @Override
        public void write(DataOutput dataOutput) throws IOException {
            dataOutput.writeLong(upFlow);
            dataOutput.writeLong(downFlow);
            dataOutput.writeLong(sumFlow);
        }

        // Deserialization: read the fields in the same order they were written
        @Override
        public void readFields(DataInput dataInput) throws IOException {
            this.upFlow = dataInput.readLong();
            this.downFlow = dataInput.readLong();
            this.sumFlow = dataInput.readLong();
        }

        @Override
        public String toString() {
            return upFlow + "\t" + downFlow + "\t" + sumFlow;
        }
    }
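The `write` and `readFields` methods of FlowBean only depend on `java.io.DataOutput` and `java.io.DataInput`, so the round trip can be tried without a cluster. The sketch below uses a hypothetical stand-in class, `FlowRecord`, with the same three fields and the same method bodies; it deliberately does not implement Hadoop's `Writable` so that it runs with the JDK alone.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// JDK-only stand-in for FlowBean (hypothetical name); it mirrors the bean's
// write/readFields bodies but does not implement Hadoop's Writable.
class FlowRecord {
    long upFlow;
    long downFlow;
    long sumFlow;

    static FlowRecord of(long upFlow, long downFlow) {
        FlowRecord record = new FlowRecord();
        record.upFlow = upFlow;
        record.downFlow = downFlow;
        record.sumFlow = upFlow + downFlow;
        return record;
    }

    void write(DataOutput out) throws IOException {
        out.writeLong(upFlow);
        out.writeLong(downFlow);
        out.writeLong(sumFlow);
    }

    // Fields are read in exactly the order in which write() emitted them.
    void readFields(DataInput in) throws IOException {
        upFlow = in.readLong();
        downFlow = in.readLong();
        sumFlow = in.readLong();
    }

    // Serialize to a byte array, then deserialize into a fresh object.
    static FlowRecord roundTrip(FlowRecord original) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            original.write(new DataOutputStream(bytes));
            FlowRecord copy = new FlowRecord();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        FlowRecord copy = roundTrip(of(2481L, 24681L));
        System.out.println(copy.upFlow + "\t" + copy.downFlow + "\t" + copy.sumFlow);
    }
}
```

The fresh object is created through a no-argument constructor before `readFields` fills it in, which is the same pattern that makes the empty constructor on FlowBean necessary.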

b. Write the Mapper class

    package com.xxxx.mapreduce.writable;

    import org.apache.hadoop.io.LongWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Mapper;

    import java.io.IOException;

    /**
     * Mapper that extracts the phone number and the upstream/downstream traffic from each record.
     *
     * @author : li.linnan
     * @create : 2022/9/29
     */
    public class FlowMapper extends Mapper<LongWritable, Text, Text, FlowBean> {

        private Text outK = new Text();
        private FlowBean outV = new FlowBean();

        @Override
        protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
            // 1. Get one line, e.g.
            //    1 13736230513 192.196.100.1 www.atguigu.com 2481 24681 200
            //    2 13846544121 192.196.100.2 264 0 200
            String line = value.toString();

            // 2. Split on tabs
            //    1,13736230513,192.196.100.1,www.atguigu.com,2481,24681,200
            //    2,13846544121,192.196.100.2,264,0,200
            String[] split = line.split("\t");

            // 3. Pick out the fields we need
            //    phone number: 13736230513
            //    upstream and downstream traffic: 2481 24681
            //    (indexed from the end because the domain column is not always present)
            String phone = split[1];
            String up = split[split.length - 3];
            String down = split[split.length - 2];

            // 4. Populate outK and outV
            outK.set(phone);
            outV.setUpFlow(Long.parseLong(up));
            outV.setDownFlow(Long.parseLong(down));
            outV.setSumFlow();

            // 5. Write out outK and outV
            context.write(outK, outV);
        }
    }
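The Mapper reads the traffic columns as `split.length - 3` and `split.length - 2` rather than at fixed positions because some records carry a domain column and some do not. The JDK-only sketch below isolates that extraction so both record shapes can be tried side by side. `FlowLineParser` is a hypothetical helper name, and the two sample lines are records from phone.txt written with explicit tab separators.

```java
// JDK-only sketch of the field extraction used in FlowMapper (hypothetical helper).
class FlowLineParser {

    // Returns {phone, upFlow, downFlow} from one tab-separated record.
    static String[] parse(String line) {
        String[] fields = line.split("\t");
        String phone = fields[1];
        // Count from the end: the last field is the status code,
        // preceded by downstream and then upstream traffic.
        String up = fields[fields.length - 3];
        String down = fields[fields.length - 2];
        return new String[] {phone, up, down};
    }

    public static void main(String[] args) {
        String withDomain = "1\t13736230513\t192.196.100.1\twww.atguigu.com\t2481\t24681\t200";
        String withoutDomain = "2\t13846544121\t192.196.100.2\t264\t0\t200";
        System.out.println(String.join(",", parse(withDomain)));    // 13736230513,2481,24681
        System.out.println(String.join(",", parse(withoutDomain))); // 13846544121,264,0
    }
}
```

With fixed indices such as `fields[4]` and `fields[5]`, the second record would yield the wrong columns, since it has six fields instead of seven.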

c. Write the Reducer class

    package com.xxxx.mapreduce.writable;

    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Reducer;

    import java.io.IOException;

    /**
     * Reducer that totals the upstream and downstream traffic for each phone number.
     *
     * @author : li.linnan
     * @create : 2022/9/29
     */
    public class FlowReducer extends Reducer<Text, FlowBean, Text, FlowBean> {

        private FlowBean outV = new FlowBean();

        @Override
        protected void reduce(Text key, Iterable<FlowBean> values, Context context) throws IOException, InterruptedException {
            long totalUp = 0;
            long totalDown = 0;

            // 1. Iterate over the values, accumulating upstream and downstream traffic separately
            for (FlowBean flowBean : values) {
                totalUp += flowBean.getUpFlow();
                totalDown += flowBean.getDownFlow();
            }

            // 2. Populate outV
            outV.setUpFlow(totalUp);
            outV.setDownFlow(totalDown);
            outV.setSumFlow();

            // 3. Write out the key and outV
            context.write(key, outV);
        }
    }

d. Write the Driver class

    package com.xxxx.mapreduce.writable;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

    import java.io.IOException;

    /**
     * Driver class for the traffic statistics job.
     *
     * @author : li.linnan
     * @create : 2022/9/29
     */
    public class FlowDriver {

        public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {
            // 1. Get the job
            Configuration conf = new Configuration();
            Job job = Job.getInstance(conf);

            // 2. Set the jar
            job.setJarByClass(FlowDriver.class);

            // 3. Associate the Mapper and Reducer
            job.setMapperClass(FlowMapper.class);
            job.setReducerClass(FlowReducer.class);

            // 4. Set the key and value types of the Mapper output
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(FlowBean.class);

            // 5. Set the key and value types of the final output
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(FlowBean.class);

            // 6. Set the input and output paths
            FileInputFormat.setInputPaths(job, new Path("D:\\input\\phone.txt"));
            FileOutputFormat.setOutputPath(job, new Path("D:\\input\\result"));

            // 7. Submit the job
            boolean b = job.waitForCompletion(true);
            System.exit(b ? 0 : 1);
        }
    }

Contents of phone.txt (the Mapper splits on tabs, so the columns in the actual file must be tab-separated):

1 13736230513 192.196.100.1 www.atguigu.com 2481 24681 200

2 13846544121 192.196.100.2 264 0 200

3 13956435636 192.196.100.3 132 1512 200

4 13966251146 192.168.100.1 240 0 404

5 18271575951 192.168.100.2 www.atguigu.com 1527 2106 200

6 84188413 192.168.100.3 www.atguigu.com 4116 1432 200

7 13590439668 192.168.100.4 1116 954 200

8 15910133277 192.168.100.5 www.hao123.com 3156 2936 200

9 13729199489 192.168.100.6 240 0 200

10 13630577991 192.168.100.7 www.shouhu.com 6960 690 200

11 15043685818 192.168.100.8 www.baidu.com 3659 3538 200

12 15959002129 192.168.100.9 www.atguigu.com 1938 180 500

13 13560439638 192.168.100.10 918 4938 200

14 13470253144 192.168.100.11 180 180 200

15 13682846555 192.168.100.12 www.qq.com 1938 2910 200

16 13992314666 192.168.100.13 www.gaga.com 3008 3720 200

17 13509468723 192.168.100.14 www.qinghua.com 7335 110349 404

18 18390173782 192.168.100.15 www.sogou.com 9531 2412 200

19 13975057813 192.168.100.16 www.baidu.com 11058 48243 200

20 13768778790 192.168.100.17 120 120 200

21 13568436656 192.168.100.18 www.alibaba.com 2481 24681 200

22 13568436656 192.168.100.19 1116 954 200

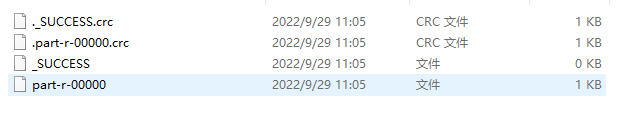

Run results:

13470253144 180 180 360

13509468723 7335 110349 117684

13560439638 918 4938 5856

13568436656 3597 25635 29232

13590439668 1116 954 2070

13630577991 6960 690 7650

13682846555 1938 2910 4848

13729199489 240 0 240

13736230513 2481 24681 27162

13768778790 120 120 240

13846544121 264 0 264

13956435636 132 1512 1644

13966251146 240 0 240

13975057813 11058 48243 59301

13992314666 3008 3720 6728

15043685818 3659 3538 7197

15910133277 3156 2936 6092

15959002129 1938 180 2118

18271575951 1527 2106 3633

18390173782 9531 2412 11943

84188413 4116 1432 5548

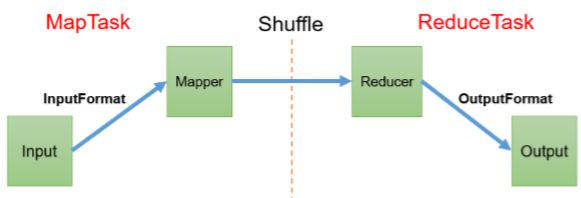

3. MapReduce Framework Internals

3.1 InputFormat Data Input

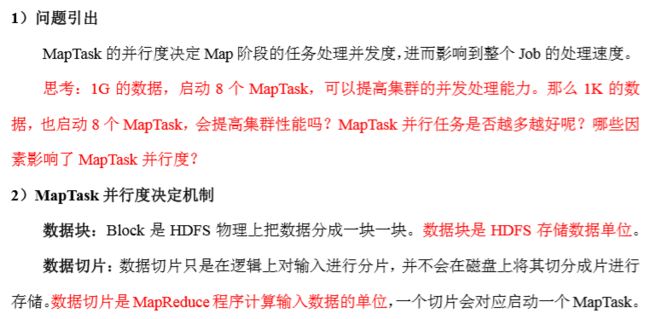

3.1.1 Input Splits and How MapTask Parallelism Is Determined

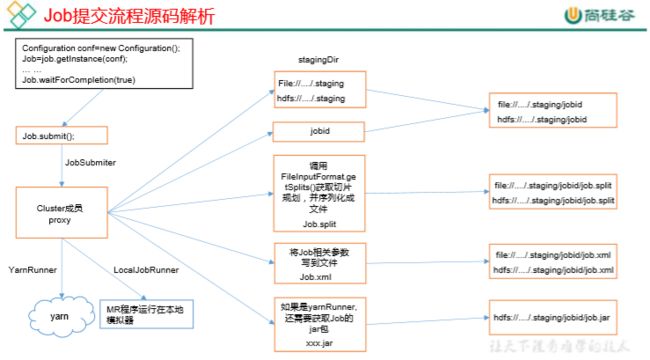

3.1.2 Job Submission Source Code and Split Source Code in Detail

(1) Job submission source code walkthrough

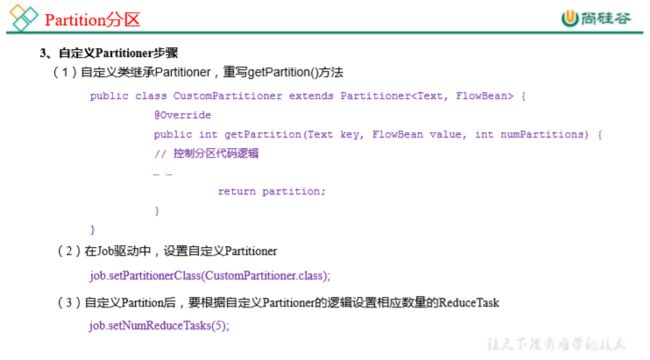

3.2

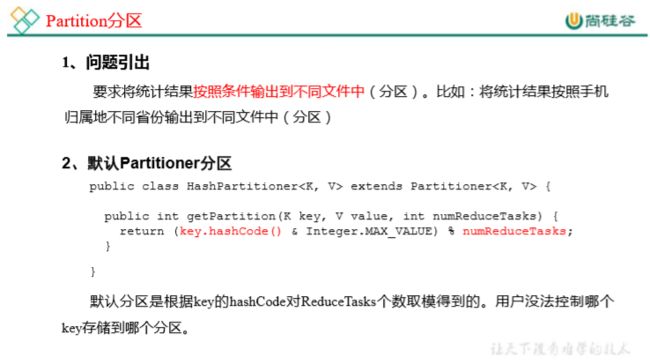

3.3 Shuffle Mechanism

3.3.1 Shuffle Mechanism

The data processing that takes place after the map method and before the reduce method is called Shuffle.
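As a rough illustration of what happens in that stage, the JDK-only sketch below assigns each map output pair to a reduce partition and keeps the keys of each partition sorted with their values grouped. It is a simplification under stated assumptions: `ShuffleSketch` and its methods are hypothetical names, the partition formula mirrors Hadoop's default `HashPartitioner` but is applied to `String.hashCode()` rather than `Text.hashCode()` (so the partition numbers will not match a real job), and spilling, merging, and network transfer are left out entirely.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.TreeMap;

// JDK-only sketch of the partition, sort, and group steps of Shuffle (hypothetical names).
class ShuffleSketch {

    // Same shape as the default hash partitioning: a non-negative hash modulo the reducer count.
    static int partitionFor(String key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    // Returns one sorted map per reduce partition; each key maps to all of its values.
    static List<TreeMap<String, List<Integer>>> shuffle(String[] keys, int[] values, int numReduceTasks) {
        List<TreeMap<String, List<Integer>>> partitions = new ArrayList<>();
        for (int i = 0; i < numReduceTasks; i++) {
            partitions.add(new TreeMap<>());
        }
        for (int i = 0; i < keys.length; i++) {
            partitions.get(partitionFor(keys[i], numReduceTasks))
                    .computeIfAbsent(keys[i], k -> new ArrayList<>())
                    .add(values[i]);
        }
        return partitions;
    }

    public static void main(String[] args) {
        // Hypothetical map output: (word, 1) pairs in arrival order.
        String[] keys = {"ss", "atguigu", "ss", "hadoop", "atguigu"};
        int[] values = {1, 1, 1, 1, 1};
        List<TreeMap<String, List<Integer>>> partitions = shuffle(keys, values, 2);
        for (int p = 0; p < partitions.size(); p++) {
            System.out.println("partition " + p + ": " + partitions.get(p));
        }
    }
}
```

Each partition in the result corresponds to the input of one reduce task: keys arrive in sorted order, and every key is paired with the full list of values emitted for it.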