All the code for 刘二中's Bilibili course on deep learning with PyTorch: RNN (Basics)

1. RNNCell design

In `__init__`:

```python
cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)
```

In `forward`:

```python
hidden = cell(input, hidden)
```

Note: tensor dimensions are one of the trickiest parts of working with RNNs.

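Shapes in the RNNCell design: input is (batchSize, inputSize) and hidden is (batchSize, hiddenSize). The output is also (batchSize, hiddenSize), because the output is the new hidden state.

Dataset shape: data.shape = (seqLen, batchSize, inputSize). You can read this as seqLen inputs, one per time step.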

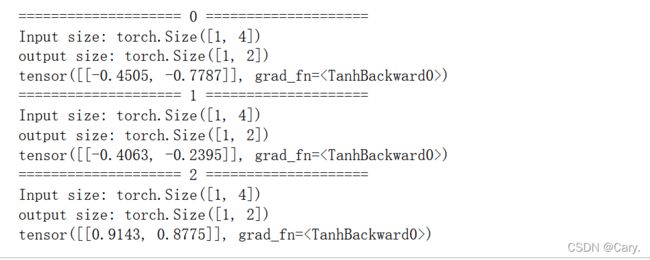
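The PyTorch documentation describes `RNNCell` (with the default `nonlinearity='tanh'`) as computing h' = tanh(W_ih x + b_ih + W_hh h + b_hh). The sketch below recomputes one step by hand from the cell's `weight_ih`, `weight_hh`, `bias_ih` and `bias_hh` parameters and compares the result with `cell(x, h)`. The sizes match the toy example, and `manual_rnncell_step` is a hypothetical helper written only for illustration.

```python
import torch

torch.manual_seed(0)
batch_size, input_size, hidden_size = 1, 4, 2
cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)

def manual_rnncell_step(cell, x, h):
    """Hypothetical helper: one tanh RNNCell step written out by hand.

    x: (batch, input_size), h: (batch, hidden_size) -> (batch, hidden_size)
    """
    return torch.tanh(x @ cell.weight_ih.T + cell.bias_ih
                      + h @ cell.weight_hh.T + cell.bias_hh)

x = torch.randn(batch_size, input_size)   # one time step of input
h = torch.zeros(batch_size, hidden_size)  # h0
with torch.no_grad():
    expected = cell(x, h)
    manual = manual_rnncell_step(cell, x, h)

print(expected.shape)                               # torch.Size([1, 2])
print(torch.allclose(expected, manual, atol=1e-6))  # True
```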

```python
import torch

batch_size = 1
seq_len = 3
input_size = 4
hidden_size = 2

cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)

dataset = torch.randn(seq_len, batch_size, input_size)  # (seqLen, batch, input_size)
hidden = torch.zeros(batch_size, hidden_size)            # h0

# Iterating over dim 0 yields one (batch, input_size) input per time step
for idx, input in enumerate(dataset):
    print('=' * 20, idx, '=' * 20)
    print('Input size:', input.shape)
    hidden = cell(input, hidden)
    print('output size:', hidden.shape)
    print(hidden)
```

Output:

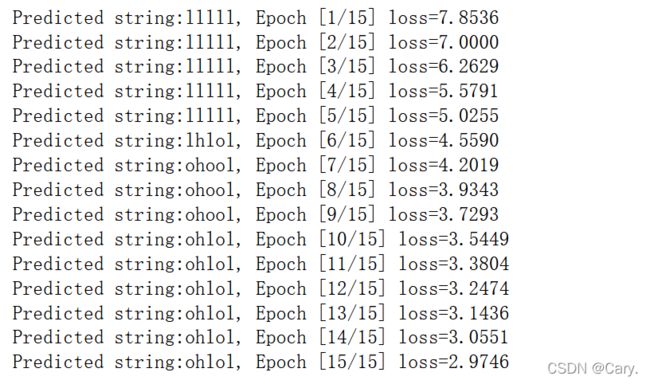

Mapping "hello" -> "ohlol" with an RNN
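You can derive the index lists from `idx2char` instead of writing them by hand. The sketch below assumes the same 4-character vocabulary. `char2idx` and `encode` are hypothetical names. Indexing rows of `torch.eye(4)` gives the same one-hot vectors as the hand-written `one_hot_lookup` table.

```python
import torch

idx2char = ['e', 'h', 'l', 'o']
char2idx = {c: i for i, c in enumerate(idx2char)}  # hypothetical reverse lookup

def encode(word):
    """Hypothetical helper: map a string to a list of class indices."""
    return [char2idx[c] for c in word]

x_data = encode('hello')  # [1, 0, 2, 2, 3]
y_data = encode('ohlol')  # [3, 1, 2, 3, 2]

# Row i of the 4x4 identity matrix is the one-hot vector for index i
x_one_hot = torch.eye(len(idx2char))[x_data]  # shape (5, 4)
print(x_data, y_data)
print(x_one_hot)
```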

```python
##### RNNCell implementation: hello -> ohlol
import torch

batch_size = 1
input_size = 4
hidden_size = 4
# hidden_size is the size of the hidden state. Here it equals the number of output
# classes, so each hidden vector is read as scores over the 4 characters;
# class 0, i.e. one-hot (1,0,0,0), stands for the character 'e'
idx2char = ['e', 'h', 'l', 'o']
x_data = [1, 0, 2, 2, 3]  # "hello"
y_data = [3, 1, 2, 3, 2]  # "ohlol"
one_hot_lookup = [[1, 0, 0, 0],
                  [0, 1, 0, 0],
                  [0, 0, 1, 0],
                  [0, 0, 0, 1]]
x_one_hot = [one_hot_lookup[x] for x in x_data]
# Why is y_data not one-hot encoded? (it stays a LongTensor; see P9)
inputs = torch.Tensor(x_one_hot).view(-1, batch_size, input_size)  # (seqLen, batch, input_size)
labels = torch.LongTensor(y_data).view(-1, 1)                      # (seqLen, 1)

class Model(torch.nn.Module):
    def __init__(self, input_size, hidden_size, batch_size):
        super(Model, self).__init__()
        self.batch_size = batch_size
        self.input_size = input_size
        self.hidden_size = hidden_size
        self.rnncell = torch.nn.RNNCell(input_size=self.input_size, hidden_size=self.hidden_size)

    def forward(self, input, hidden):
        hidden = self.rnncell(input, hidden)
        return hidden

    def init_hidden(self):
        return torch.zeros(self.batch_size, self.hidden_size)  # h0

net = Model(input_size, hidden_size, batch_size)
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(net.parameters(), lr=0.1)

for epoch in range(15):
    loss = 0
    optimizer.zero_grad()
    hidden = net.init_hidden()
    print('Predicted string:', end='')
    for input, label in zip(inputs, labels):
        hidden = net(input, hidden)        # h_t = cell(x_t, h_{t-1})
        loss += criterion(hidden, label)   # accumulate the loss over all time steps
        _, idx = hidden.max(dim=1)
        print(idx2char[idx.item()], end='')
    loss.backward()
    optimizer.step()
    print(', Epoch [%d/15] loss=%.4f' % (epoch + 1, loss.item()))
```
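In this RNNCell version, the loss is built by adding one `CrossEntropyLoss` term per time step. `CrossEntropyLoss` averages over the batch by default (`reduction='mean'`). As a result, that sum equals seqLen times the loss computed once on all steps stacked into a (seqLen, 4) matrix, which is the form the `torch.nn.RNN` version uses. Here is a quick check with random scores. The tensors are made-up stand-ins, not the model's actual outputs.

```python
import torch

torch.manual_seed(0)
seq_len, num_classes = 5, 4
criterion = torch.nn.CrossEntropyLoss()  # default reduction='mean'

scores = torch.randn(seq_len, 1, num_classes)       # one (1, 4) score row per step, batch_size = 1
labels = torch.tensor([3, 1, 2, 3, 2]).view(-1, 1)  # (seqLen, 1), as in the RNNCell code

# RNNCell style: add up one loss term per time step
step_sum = sum(criterion(s, l) for s, l in zip(scores, labels))

# RNN style: a single call on all steps flattened to (seqLen, num_classes)
stacked = criterion(scores.view(-1, num_classes), labels.view(-1))

print(torch.allclose(step_sum, seq_len * stacked))  # True
```

So the two versions differ only by a constant factor in the loss value.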

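The comment in the code raises a question: why doesn't y_data need one-hot encoding once it is converted to a LongTensor?

After rewatching P9, I understand it a bit better. CrossEntropyLoss already includes the softmax step:

CrossEntropyLoss = LogSoftmax + NLLLoss

NLLLoss takes each target as a class index and simply picks out the log-probability at that index. That is why the labels do not need to be one-hot encoded.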
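You can check this decomposition numerically. Picking the log-probability at the target index gives the same result as taking the dot product with a one-hot target vector. Here is a minimal check with random scores (made-up values):

```python
import torch
import torch.nn.functional as F

torch.manual_seed(0)
logits = torch.randn(5, 4)              # (N, C) scores, e.g. 5 time steps x 4 characters
target = torch.tensor([3, 1, 2, 3, 2])  # class indices (int64), no one-hot needed

ce = F.cross_entropy(logits, target)
log_probs = F.log_softmax(logits, dim=1)
nll = F.nll_loss(log_probs, target)

# The same loss written with an explicit one-hot target
one_hot = F.one_hot(target, num_classes=4).float()
manual = -(one_hot * log_probs).sum(dim=1).mean()

print(torch.allclose(ce, nll), torch.allclose(ce, manual))  # True True
```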

For a detailed explanation, see the Zhihu article 一文详解PyTorch中的交叉熵 (a detailed walkthrough of cross-entropy in PyTorch): https://zhuanlan.zhihu.com/p/369699003

2. RNN design

In `__init__`:

```python
# num_layers: number of RNN layers stacked on top of each other
cell = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=num_layers)
```

In `forward`:

```python
out, hidden = cell(inputs, hidden)
```

Here `inputs` holds the whole sequence x1, x2, x3, ..., and the `hidden` passed in is h0. Of the outputs, `out` holds h1, h2, h3, ..., hN (from the top layer), and `hidden` is hN (the final hidden state of every layer).
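In other words, a single-layer `torch.nn.RNN` runs the RNNCell loop from part 1 over the whole sequence for you. The sketch below copies the layer-0 parameters of an `nn.RNN` (`weight_ih_l0`, `weight_hh_l0`, `bias_ih_l0`, `bias_hh_l0`) into an `nn.RNNCell`. It then loops the cell by hand and compares the two results. The sizes come from the toy example.

```python
import torch

torch.manual_seed(0)
seq_len, batch_size, input_size, hidden_size = 3, 1, 4, 2

rnn = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=1)
cell = torch.nn.RNNCell(input_size=input_size, hidden_size=hidden_size)

# Give the cell exactly the same parameters as layer 0 of the RNN
with torch.no_grad():
    cell.weight_ih.copy_(rnn.weight_ih_l0)
    cell.weight_hh.copy_(rnn.weight_hh_l0)
    cell.bias_ih.copy_(rnn.bias_ih_l0)
    cell.bias_hh.copy_(rnn.bias_hh_l0)

inputs = torch.randn(seq_len, batch_size, input_size)  # x1, x2, x3
h0 = torch.zeros(1, batch_size, hidden_size)

with torch.no_grad():
    out, hN = rnn(inputs, h0)        # out: h1..h3, hN: last hidden state

    h = h0[0]                        # (batch, hidden_size) for the cell
    steps = []
    for x in inputs:                 # x: (batch, input_size)
        h = cell(x, h)
        steps.append(h)
    out_manual = torch.stack(steps)  # (seqLen, batch, hidden_size)

print(torch.allclose(out, out_manual, atol=1e-6))  # True
print(torch.allclose(hN[0], h, atol=1e-6))         # True
```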

The shapes work the same way.

Inputs:

input: (seqLen, batch, input_size)

hidden (h0): (numLayers, batch, hidden_size)

Outputs:

output: (seqLen, batch, hidden_size)

hidden (hN): (numLayers, batch, hidden_size)
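To see these shapes with more than one layer, here is a sketch with `num_layers=2` and `batch_size=3` (arbitrary values chosen for illustration). `out` holds only the top layer's hidden state at every time step. `hidden` holds the last time step of every layer. The last row of `out` therefore matches the top layer of `hidden`.

```python
import torch

torch.manual_seed(0)
seq_len, batch_size, input_size, hidden_size, num_layers = 5, 3, 4, 2, 2

rnn = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=num_layers)
inputs = torch.randn(seq_len, batch_size, input_size)
h0 = torch.zeros(num_layers, batch_size, hidden_size)

out, hidden = rnn(inputs, h0)
print(out.shape)     # torch.Size([5, 3, 2])  (seqLen, batch, hidden_size)
print(hidden.shape)  # torch.Size([2, 3, 2])  (numLayers, batch, hidden_size)

# The last time step of the top layer appears in both outputs
print(torch.allclose(out[-1], hidden[-1]))  # True
```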

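If you want batch size to be the first dimension:

```python
cell = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=num_layers, batch_first=True)
```

With batch_first=True, input and output become (batch, seqLen, ...). hidden keeps its (numLayers, batch, hidden_size) shape.

A small example: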

```python
import torch

batch_size = 1
seq_len = 3
input_size = 4
hidden_size = 2
num_layers = 1

cell = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size, num_layers=num_layers)

inputs = torch.randn(seq_len, batch_size, input_size)      # (seqLen, batch, input_size)
hidden = torch.zeros(num_layers, batch_size, hidden_size)  # h0: (numLayers, batch, hidden_size)

out, hidden = cell(inputs, hidden)

print('Input size:', inputs.shape)
print('Output:', out)
print('Hidden size:', hidden.shape)
print('Hidden:', hidden)
```

Output:

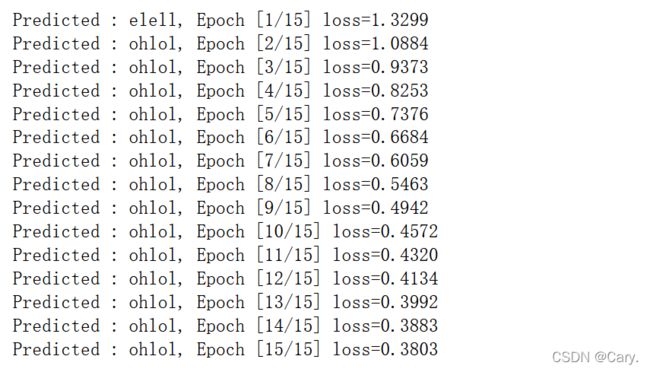
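You can check the `batch_first=True` option with a variant of this small example. This sketch keeps the sizes above but uses `batch_size = 2`, so the two leading dimensions can be told apart. Only `inputs` and `out` switch to batch-first. `h0` and the returned `hidden` keep the (numLayers, batch, hidden_size) layout.

```python
import torch

seq_len, batch_size, input_size, hidden_size, num_layers = 3, 2, 4, 2, 1

cell = torch.nn.RNN(input_size=input_size, hidden_size=hidden_size,
                    num_layers=num_layers, batch_first=True)
inputs = torch.randn(batch_size, seq_len, input_size)      # batch first
hidden = torch.zeros(num_layers, batch_size, hidden_size)  # layout unchanged

out, hidden = cell(inputs, hidden)
print(out.shape)     # torch.Size([2, 3, 2])  (batch, seqLen, hidden_size)
print(hidden.shape)  # torch.Size([1, 2, 2])  (numLayers, batch, hidden_size)
```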

Mapping "hello" -> "ohlol" with an RNN

```python
#### RNN implementation
import torch

batch_size = 1
input_size = 4
hidden_size = 4
num_layers = 1
seq_len = 5
# hidden_size is the size of the hidden state. Here it equals the number of output
# classes, so each hidden vector is read as scores over the 4 characters;
# class 0, i.e. one-hot (1,0,0,0), stands for the character 'e'
idx2char = ['e', 'h', 'l', 'o']
x_data = [1, 0, 2, 2, 3]  # "hello"
y_data = [3, 1, 2, 3, 2]  # "ohlol"
one_hot_lookup = [[1, 0, 0, 0],
                  [0, 1, 0, 0],
                  [0, 0, 1, 0],
                  [0, 0, 0, 1]]
x_one_hot = [one_hot_lookup[x] for x in x_data]
inputs = torch.Tensor(x_one_hot).view(seq_len, batch_size, input_size)  # (seqLen, batch, input_size)
labels = torch.LongTensor(y_data)                                       # (seqLen * batch,)

class Model(torch.nn.Module):
    def __init__(self, input_size, hidden_size, batch_size, num_layers=1):
        super(Model, self).__init__()
        self.num_layers = num_layers
        self.batch_size = batch_size
        self.input_size = input_size
        self.hidden_size = hidden_size
        self.rnn = torch.nn.RNN(input_size=self.input_size, hidden_size=self.hidden_size,
                                num_layers=num_layers)

    def forward(self, input):
        hidden = torch.zeros(self.num_layers, self.batch_size, self.hidden_size)  # build h0
        out, _ = self.rnn(input, hidden)
        # out is (seqLen, batchSize, hidden_size); reshape it to (seqLen*batchSize, hidden_size),
        # the 2-D (N, C) matrix that CrossEntropyLoss expects
        return out.view(-1, self.hidden_size)

net = Model(input_size, hidden_size, batch_size, num_layers)
criterion = torch.nn.CrossEntropyLoss()
optimizer = torch.optim.Adam(net.parameters(), lr=0.1)

for epoch in range(15):
    optimizer.zero_grad()
    outputs = net(inputs)
    loss = criterion(outputs, labels)
    loss.backward()
    optimizer.step()

    _, idx = outputs.max(dim=1)
    idx = idx.data.numpy()
    print('Predicted: ', ''.join([idx2char[x] for x in idx]), end='')
    print(', Epoch [%d/15] loss=%.4f' % (epoch + 1, loss.item()))
```

Some of the details are explained in the code comments~
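One more detail on `out.view(-1, self.hidden_size)`. With the default (seqLen, batch, hidden_size) layout, the flattened rows are ordered time-major: row t*batchSize + b is time step t of sequence b. The labels must be flattened the same way. With batch_size = 1, as in the code above, this is simply time order. Here is a sketch with batch_size = 2 and random values (illustrative stand-ins only):

```python
import torch

seq_len, batch_size, hidden_size = 5, 2, 4
out = torch.randn(seq_len, batch_size, hidden_size)  # stand-in for the RNN output
labels = torch.randint(0, 4, (seq_len, batch_size))  # one label per (t, b)

flat_out = out.view(-1, hidden_size)  # (seqLen * batchSize, hidden_size)
flat_labels = labels.view(-1)         # (seqLen * batchSize,)

t, b = 3, 1
row = t * batch_size + b
print(torch.equal(flat_out[row], out[t, b]))           # True
print(flat_labels[row].item() == labels[t, b].item())  # True

# The (N, C) scores / (N,) targets pair that CrossEntropyLoss expects
loss = torch.nn.CrossEntropyLoss()(flat_out, flat_labels)
```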