Installing the Nvidia Tesla T4 driver, CUDA, and cuDNN on CentOS 7, and fixing "The third-party dynamic library (libcudnn.so) that Paddle depends on is not configured correctly"

For a detailed installation walkthrough, see:

https://www.cnblogs.com/liuyim/p/15039193.html

Installing the Nvidia Tesla T4 driver, CUDA, and cuDNN on CentOS 7

The GPU is an Nvidia Tesla T4.

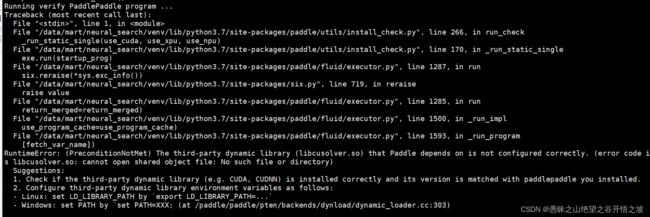

The error

The third-party dynamic library (libcudnn.so) that Paddle depends on is not configured correctly. (error code is /usr/local/cuda/lib64/libcudnn.so: cannot open shared object file: No such file or directory)

Suggestions:

- Check if the third-party dynamic library (e.g. CUDA, CUDNN) is installed correctly and its version is matched with paddlepaddle you installed.

- Configure third-party dynamic library environment variables as follows:

- Linux: set LD_LIBRARY_PATH by `export LD_LIBRARY_PATH=...`

- Windows: set PATH by `set PATH=XXX;
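The error says the dynamic loader could not open libcudnn.so at the expected location. The following is a minimal sketch, not part of Paddle, that looks for the file in the directories on LD_LIBRARY_PATH and in /usr/local/cuda/lib64 (the path named in the error message). The helper name find_shared_library is hypothetical.

```python
import os


def find_shared_library(name, search_dirs):
    """Return the full paths under search_dirs where a file called `name` exists."""
    hits = []
    for directory in search_dirs:
        candidate = os.path.join(directory, name)
        # os.path.exists follows symlinks, so a dangling libcudnn.so link counts as missing.
        if os.path.exists(candidate):
            hits.append(candidate)
    return hits


# Directories from LD_LIBRARY_PATH, plus the path named in the error message.
dirs = [d for d in os.environ.get("LD_LIBRARY_PATH", "").split(":") if d]
dirs.append("/usr/local/cuda/lib64")

print(find_shared_library("libcudnn.so", dirs) or "libcudnn.so not found")
```

An empty result suggests that either cuDNN was never copied into the CUDA directory or the search path does not point at it.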

Installing PyTorch for GPU

Even with only the GPU driver installed, PyTorch runs on the GPU successfully.

https://www.zhihu.com/question/378419173

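To avoid conflicts, a PyTorch installation ships with its own CUDA runtime.

Besides pytorch and torchvision, the install command on the PyTorch website also includes a cudatoolkit package that supplies the CUDA runtime libraries. PyTorch's binaries bundle the cuDNN they use, and the CUDA driver library comes with the GPU driver, so neither CUDA nor cuDNN needs to be installed separately.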
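A quick way to see which CUDA runtime a PyTorch build carries is to ask PyTorch itself. This is a minimal sketch that assumes nothing about the machine: the function name torch_cuda_summary is hypothetical, and it returns None when PyTorch is not installed.

```python
def torch_cuda_summary():
    """Return CUDA details reported by PyTorch, or None if PyTorch is not installed."""
    try:
        import torch
    except ImportError:
        return None
    return {
        "torch": torch.__version__,
        "bundled_cuda": torch.version.cuda,          # None for CPU-only builds
        "gpu_available": torch.cuda.is_available(),  # needs a working GPU driver
    }


print(torch_cuda_summary())
```

If bundled_cuda is set and gpu_available is True on a machine without a system-wide CUDA toolkit, the bundled runtime is what PyTorch is using.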

Installing Paddle for GPU

Paddle-specific command

CUDA Toolkit 10.1/10.2 with cuDNN v7.6+ (multi-GPU support additionally requires NCCL 2.7 or later)

python -m pip install paddlepaddle-gpu==0.0.0.post101 -f https://www.paddlepaddle.org.cn/whl/linux/gpu/develop.html

Verifying the Paddle CUDA installation

Run python to enter the Python interpreter, then type

import paddle.fluid

then type

paddle.fluid.install_check.run_check()

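If the message "Your Paddle Fluid is installed successfully!" appears, paddle-gpu has been installed successfully.

If it fails, verify that the driver, CUDA, and cuDNN were each installed correctly.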
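The same check can be wrapped in a small script. This is a sketch under two assumptions: Paddle 2.x releases expose the self-test as paddle.utils.run_check(), and older releases use the paddle.fluid path shown above. The wrapper name run_paddle_install_check is hypothetical.

```python
def run_paddle_install_check():
    """Run Paddle's self-test; return False if Paddle cannot be imported."""
    try:
        import paddle
    except ImportError:
        return False
    utils = getattr(paddle, "utils", None)
    if utils is not None and hasattr(utils, "run_check"):
        utils.run_check()  # entry point in Paddle 2.x
    else:
        import paddle.fluid  # older releases, as in the interpreter session above
        paddle.fluid.install_check.run_check()
    return True


print(run_paddle_install_check())
```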

I. Preparation

Install the gcc build environment and the kernel-related packages

Add the Alibaba Cloud (Aliyun) package repositories

curl -o /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

Install the base environment

yum -y install apr autoconf automake bash bash-completion bind-utils bzip2 bzip2-devel chrony cmake coreutils curl curl-devel dbus dbus-libs dhcp-common dos2unix e2fsprogs e2fsprogs-devel file file-libs freetype freetype-devel gcc gcc-c++ gdb glib2 glib2-devel glibc glibc-devel gmp gmp-devel gnupg iotop kernel kernel-devel kernel-doc kernel-firmware kernel-headers krb5-devel libaio-devel libcurl libcurl-devel libevent libevent-devel libffi-devel libidn libidn-devel libjpeg libjpeg-devel libmcrypt libmcrypt-devel libpng libpng-devel libxml2 libxml2-devel libxslt libxslt-devel libzip libzip-devel lrzsz lsof make microcode_ctl mysql mysql-devel ncurses ncurses-devel net-snmp net-snmp-libs net-snmp-utils net-tools nfs-utils nss nss-sysinit nss-tools openldap-clients openldap-devel openssh openssh-clients openssh-server openssl openssl-devel patch policycoreutils polkit procps readline-devel rpm rpm-build rpm-libs rsync sos sshpass strace sysstat tar tmux tree unzip uuid uuid-devel vim wget yum-utils zip zlib* jq

Time synchronization

systemctl start chronyd && systemctl enable chronyd

Reboot

reboot

Full system upgrade

yum update -y

Reboot again

reboot

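Check

Note: before installing the kernel packages, check that the running kernel version matches the version of the kernel-devel/kernel-doc/kernel-headers packages you are about to install. The two must match exactly, otherwise the later build step will fail.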

[root@localhost opt]# uname -a

Linux localhost 3.10.0-1160.31.1.el7.x86_64 #1 SMP Thu Jun 10 13:32:12 UTC 2021 x86_64 x86_64 x86_64 GNU/Linux

[root@localhost opt]# yum list | grep kernel-

kernel-devel.x86_64 3.10.0-1160.31.1.el7 @updates

kernel-doc.noarch 3.10.0-1160.31.1.el7 @updates

kernel-headers.x86_64 3.10.0-1160.31.1.el7 @updates

kernel-tools.x86_64 3.10.0-1160.31.1.el7 @updates

kernel-tools-libs.x86_64 3.10.0-1160.31.1.el7 @updates

kernel-abi-whitelists.noarch 3.10.0-1160.31.1.el7 updates

kernel-debug.x86_64 3.10.0-1160.31.1.el7 updates

kernel-debug-devel.x86_64 3.10.0-1160.31.1.el7 updates

kernel-tools-libs-devel.x86_64 3.10.0-1160.31.1.el7 updates

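There are two ways to resolve a version mismatch:

Method 1: upgrade the kernel (look up the procedure for your system); the new kernel does not have to be set as the default boot kernel.

Method 2: install the kernel-devel/kernel-doc/kernel-headers packages that match the running kernel, for example: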

yum install "kernel-devel-uname-r == $(uname -r)"
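The version comparison described above can be sketched in a few lines. The helper name devel_matches_kernel is hypothetical, and the sketch assumes that `uname -r` equals the package version followed by a dot and the architecture, as in the sample output above.

```python
def devel_matches_kernel(uname_r, package_name, package_version):
    """True if e.g. kernel-devel.x86_64 3.10.0-1160.31.1.el7 matches `uname -r`."""
    arch = package_name.rsplit(".", 1)[-1]  # "x86_64" from "kernel-devel.x86_64"
    return uname_r == f"{package_version}.{arch}"


running = "3.10.0-1160.31.1.el7.x86_64"  # value of `uname -r` in the output above
print(devel_matches_kernel(running, "kernel-devel.x86_64", "3.10.0-1160.31.1.el7"))
```

With the values from the listing above the two versions agree, so the driver build has matching kernel sources to compile against.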

II. Downloading the Nvidia driver

Check whether it is already installed

nvidia-smi

Download

https://www.nvidia.cn/Download/index.aspx?lang=cn

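Find the latest driver version that supports your GPU and download it; the downloaded file has a .run suffix. Then upload it to any location on the server.

Preparation

Disable the nouveau driver that the system installs by default

# Edit the configuration

echo -e "blacklist nouveau\noptions nouveau modeset=0" > /etc/modprobe.d/blacklist.conf

# Back up the original initramfs image

cp /boot/initramfs-$(uname -r).img /boot/initramfs-$(uname -r).img.bak

# Rebuild the image

sudo dracut --force

# Reboot

reboot

# Check whether nouveau is loaded; empty output means it was disabled successfully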

lsmod | grep nouveau
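The same check can be scripted. The sketch below matches the module name only against the first column of lsmod output, so a line that merely lists nouveau as a dependency does not count. The function name module_loaded is hypothetical and the sample text is illustrative, not taken from this machine.

```python
def module_loaded(lsmod_output, module):
    """Return True if `module` appears in the first column of lsmod output."""
    for line in lsmod_output.splitlines()[1:]:  # skip the header row
        fields = line.split()
        if fields and fields[0] == module:
            return True
    return False


# Illustrative lsmod output (sizes are made up for the example).
sample = """Module                  Size  Used by
nouveau              1869689  0
ttm                    96673  1 nouveau
"""

print(module_loaded(sample, "nouveau"))
print(module_loaded(sample, "nvidia"))
```

On a real system the text could come from subprocess.run(["lsmod"], capture_output=True, text=True).stdout.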

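Install the DKMS module

DKMS stands for Dynamic Kernel Module Support. It maintains out-of-tree kernel drivers for us and automatically rebuilds their modules whenever the kernel version changes.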

yum -y install dkms

Installation

Run the following command to install, replacing the file name with your own.

sudo sh NVIDIA-Linux-x86_64-418.226.00.run -no-x-check -no-nouveau-check -no-opengl-files

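# -no-x-check: skip the check for a running X server during installation

# -no-nouveau-check: skip the check for the nouveau driver during installation

# -no-opengl-files: install only the driver files, not the OpenGL files

Follow the installer prompts, answering yes / OK throughout.

After installation, run the following command; the output looks like this: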

[root@localhost opt]# nvidia-smi

Wed Jul 7 11:11:33 2021

+-----------------------------------------------------------------------------+

| NVIDIA-SMI 410.129 Driver Version: 410.129 CUDA Version: 10.0 |

|-------------------------------+----------------------+----------------------+

| GPU Name Persistence-M| Bus-Id Disp.A | Volatile Uncorr. ECC |

| Fan Temp Perf Pwr:Usage/Cap| Memory-Usage | GPU-Util Compute M. |

|===============================+======================+======================|

| 0 Tesla T4 Off | 00000000:41:00.0 Off | 0 |

| N/A 94C P0 36W / 70W | 0MiB / 15079MiB | 0% Default |

+-------------------------------+----------------------+----------------------+

+-----------------------------------------------------------------------------+

| Processes: GPU Memory |

| GPU PID Type Process name Usage |

|=============================================================================|

| No running processes found |

+-----------------------------------------------------------------------------+
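The header line of this output carries the two version numbers that matter later. The "CUDA Version" shown there is the highest CUDA version the installed driver supports, not the version of any installed toolkit. A minimal sketch for pulling the fields out of the header (the name parse_smi_header is hypothetical):

```python
import re

HEADER = re.compile(
    r"NVIDIA-SMI\s+(?P<smi>[\d.]+)\s+Driver Version:\s+(?P<driver>[\d.]+)"
    r"\s+CUDA Version:\s+(?P<cuda>[\d.]+)"
)


def parse_smi_header(text):
    """Return the nvidia-smi, driver, and CUDA versions from the header, or None."""
    match = HEADER.search(text)
    return match.groupdict() if match else None


# Header line copied from the output above.
line = "| NVIDIA-SMI 410.129 Driver Version: 410.129 CUDA Version: 10.0 |"
print(parse_smi_header(line))
```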

III. Downloading Nvidia CUDA

Check whether it is already installed

nvcc -V

1. Pre-installation checks

Confirm that an NVIDIA GPU is present

[root@localhost opt]# lspci | grep -i nvidia

41:00.0 3D controller: NVIDIA Corporation TU104GL [Tesla T4] (rev a1)

2. Confirm that gcc is installed; install it if it is missing

[root@localhost opt]# gcc --version

gcc (GCC) 4.8.5 20150623 (Red Hat 4.8.5-44)

Copyright © 2015 Free Software Foundation, Inc.

This is free software; see the source for copying conditions.  There is NO

warranty; not even for MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE.

# yum -y install gcc gcc-c++

3. Disable Nouveau

No output means Nouveau is already disabled.

If it is not disabled, follow the steps for disabling Nouveau earlier in this document.

[root@localhost opt]# lsmod | grep nouveau

[root@localhost opt]#

4. Set the default boot target

The CUDA driver cannot be installed while the Nouveau driver is loaded or the graphical interface is active.

[root@localhost opt]# systemctl set-default multi-user.target

Removed symlink /etc/systemd/system/default.target.

Created symlink from /etc/systemd/system/default.target to /usr/lib/systemd/system/multi-user.target.

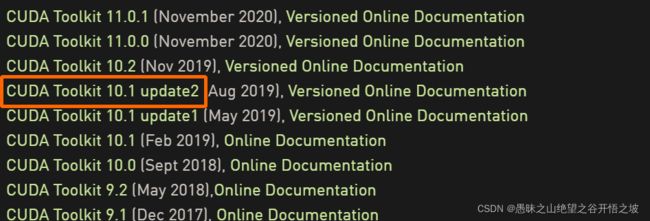

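Installation

The installation environment here is offline, so the CUDA installer must be downloaded first. Installer download page: https://developer.nvidia.com/cuda-toolkit-archive

Pick the CUDA version you need; the installer type I chose here is runfile (local).

Alternatively, select the options on the page and download online: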

https://developer.nvidia.com/cuda-10.1-download-archive-update2?target_os=Linux&target_arch=x86_64&target_distro=CentOS&target_version=7&target_type=runfilelocal

wget https://developer.download.nvidia.com/compute/cuda/10.1/Prod/local_installers/cuda_10.1.243_418.87.00_linux.run

sudo sh cuda_10.1.243_418.87.00_linux.run

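Next

The installer screen appears; type accept.

On the second screen, select Install directly.

Save the installer's final output somewhere for now; it is needed later: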

===========

= Summary =

===========

Driver: Installed

Toolkit: Installed in /usr/local/cuda-10.1/

Samples: Installed in /root/, but missing recommended libraries

Please make sure that

- PATH includes /usr/local/cuda-10.1/bin

- LD_LIBRARY_PATH includes /usr/local/cuda-10.1/lib64, or, add /usr/local/cuda-10.1/lib64 to /etc/ld.so.conf and run ldconfig as root

To uninstall the CUDA Toolkit, run cuda-uninstaller in /usr/local/cuda-10.1/bin

To uninstall the NVIDIA Driver, run nvidia-uninstall

Please see CUDA_Installation_Guide_Linux.pdf in /usr/local/cuda-10.1/doc/pdf for detailed information on setting up CUDA.

Logfile is /var/log/cuda-installer.log

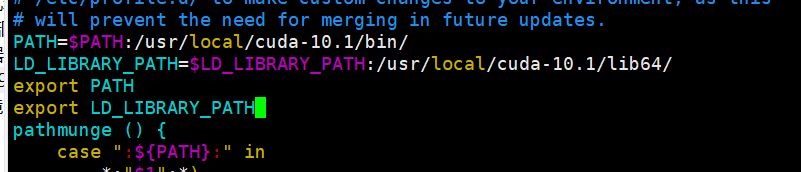

Add CUDA to the environment variables

# Adjust the paths to match the output of your own CUDA installer

[root@localhost cuda-10.1]# vim /etc/profile

Add the following four lines

PATH=$PATH:/usr/local/cuda-10.1/bin/

LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/usr/local/cuda-10.1/lib64/

export PATH

export LD_LIBRARY_PATH

[root@localhost cuda-10.1]# source /etc/profile
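One subtlety of the `VAR=$VAR:/dir` pattern: if the variable was empty, the result starts with a colon, and the dynamic loader treats that empty entry as the current directory. Sourcing the file again also appends the same directory twice. The sketch below shows the intended result of the append, without empty or duplicate entries; the helper name append_search_path is hypothetical.

```python
def append_search_path(current, new_dir):
    """Append new_dir to a colon-separated path list, skipping empties and duplicates."""
    entries = [p for p in current.split(":") if p]
    if new_dir not in entries:
        entries.append(new_dir)
    return ":".join(entries)


print(append_search_path("", "/usr/local/cuda-10.1/lib64"))
print(append_search_path("/usr/lib64", "/usr/local/cuda-10.1/lib64"))
```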

Verification

[root@localhost cuda-10.1]# nvcc -V

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2019 NVIDIA Corporation

Built on Sun_Jul_28_19:07:16_PDT_2019

Cuda compilation tools, release 10.1, V10.1.243

[root@localhost NVIDIA_CUDA-10.1_Samples]# cd /root/NVIDIA_CUDA-10.1_Samples

[root@localhost NVIDIA_CUDA-10.1_Samples]# make

[root@localhost NVIDIA_CUDA-10.1_Samples]# cd 1_Utilities/deviceQuery

[root@localhost deviceQuery]# ./deviceQuery

./deviceQuery Starting...

CUDA Device Query (Runtime API) version (CUDART static linking)

Detected 1 CUDA Capable device(s)

Device 0: "Tesla T4"

CUDA Driver Version / Runtime Version 10.1 / 10.1

CUDA Capability Major/Minor version number: 7.5

Total amount of global memory: 15080 MBytes (15812263936 bytes)

(40) Multiprocessors, ( 64) CUDA Cores/MP: 2560 CUDA Cores

GPU Max Clock rate: 1590 MHz (1.59 GHz)

Memory Clock rate: 5001 Mhz

Memory Bus Width: 256-bit

L2 Cache Size: 4194304 bytes

Maximum Texture Dimension Size (x,y,z) 1D=(131072), 2D=(131072, 65536), 3D=(16384, 16384, 16384)

Maximum Layered 1D Texture Size, (num) layers 1D=(32768), 2048 layers

Maximum Layered 2D Texture Size, (num) layers 2D=(32768, 32768), 2048 layers

Total amount of constant memory: 65536 bytes

Total amount of shared memory per block: 49152 bytes

Total number of registers available per block: 65536

Warp size: 32

Maximum number of threads per multiprocessor: 1024

Maximum number of threads per block: 1024

Max dimension size of a thread block (x,y,z): (1024, 1024, 64)

Max dimension size of a grid size (x,y,z): (2147483647, 65535, 65535)

Maximum memory pitch: 2147483647 bytes

Texture alignment: 512 bytes

Concurrent copy and kernel execution: Yes with 3 copy engine(s)

Run time limit on kernels: No

Integrated GPU sharing Host Memory: No

Support host page-locked memory mapping: Yes

Alignment requirement for Surfaces: Yes

Device has ECC support: Enabled

Device supports Unified Addressing (UVA): Yes

Device supports Compute Preemption: Yes

Supports Cooperative Kernel Launch: Yes

Supports MultiDevice Co-op Kernel Launch: Yes

Device PCI Domain ID / Bus ID / location ID: 0 / 65 / 0

Compute Mode:

< Default (multiple host threads can use ::cudaSetDevice() with device simultaneously) >

deviceQuery, CUDA Driver = CUDART, CUDA Driver Version = 10.1, CUDA Runtime Version = 10.1, NumDevs = 1

Result = PASS

The line to look for is Result = PASS, which means the test passed.
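For a scripted check, the two facts worth extracting from the deviceQuery output are the PASS line and the driver/runtime version pair. A minimal sketch follows; the function names are hypothetical and the sample holds only the relevant lines from the output above.

```python
import re


def device_query_passed(output):
    """True if the output contains a line reading exactly 'Result = PASS'."""
    return re.search(r"^Result = PASS\s*$", output, re.MULTILINE) is not None


def driver_runtime_versions(output):
    """Return (driver_version, runtime_version) as strings, or None if absent."""
    match = re.search(
        r"CUDA Driver Version / Runtime Version\s+([\d.]+)\s*/\s*([\d.]+)", output
    )
    return match.groups() if match else None


# Relevant lines copied from the deviceQuery output above.
sample = """CUDA Driver Version / Runtime Version 10.1 / 10.1
Result = PASS
"""

print(device_query_passed(sample), driver_runtime_versions(sample))
```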

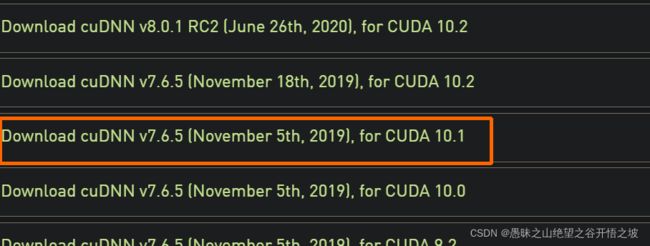

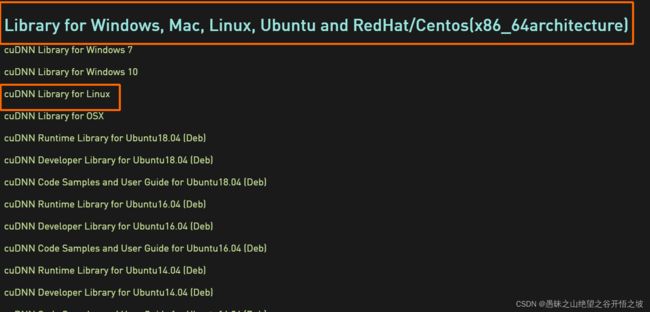

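Downloading Nvidia cuDNN

Download the matching version of cuDNN (an account must be registered before downloading):

Note: choose the cuDNN build that corresponds to your CUDA version.

Installation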

https://developer.nvidia.com/after_signup/complete_profile

https://developer.nvidia.com/rdp/cudnn-archive

Upload and extract

[root@localhost opt]# cd /opt/

[root@localhost opt]# tar xzvf cudnn-10.1-linux-x64-v7.6.5.32.tgz

cuda/include/cudnn.h

cuda/NVIDIA_SLA_cuDNN_Support.txt

cuda/lib64/libcudnn.so

cuda/lib64/libcudnn.so.7

cuda/lib64/libcudnn.so.7.6.5

cuda/lib64/libcudnn_static.a

[root@localhost opt]# cp cuda/include/cudnn.h /usr/local/cuda/include

[root@localhost opt]# cp cuda/lib64/libcudnn* /usr/local/cuda/lib64

[root@localhost opt]# chmod a+r /usr/local/cuda/include/cudnn.h /usr/local/cuda/lib64/libcudnn*
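After copying, the installed cuDNN version can be read back from the header. The sketch below assumes the cuDNN 7 layout used here, where the version macros are defined in cudnn.h (cuDNN 8 and later move them to cudnn_version.h). The function name is hypothetical and the sample is an abbreviated stand-in for the real header.

```python
import re


def cudnn_version_from_header(header_text):
    """Return 'major.minor.patch' from the CUDNN_* macros, or None if any is missing."""
    parts = []
    for macro in ("CUDNN_MAJOR", "CUDNN_MINOR", "CUDNN_PATCHLEVEL"):
        match = re.search(rf"^#define\s+{macro}\s+(\d+)", header_text, re.MULTILINE)
        if match is None:
            return None
        parts.append(match.group(1))
    return ".".join(parts)


# Abbreviated sample matching the v7.6.5 archive extracted above.
sample = """#define CUDNN_MAJOR 7
#define CUDNN_MINOR 6
#define CUDNN_PATCHLEVEL 5
"""

print(cudnn_version_from_header(sample))
```

On the server, the text would come from reading /usr/local/cuda/include/cudnn.h.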

IV. Commands for verifying Paddle's CUDA setup

https://www.paddlepaddle.org.cn/documentation/docs/zh/api/paddle/version/cudnn_cn.html#cudnn

Check the versions reported by the installed software

Enter the Python environment

import paddle

print(paddle.version.cuda())

import paddle

print(paddle.version.cudnn())

Check the versions on the machine itself

Exit the Python environment

nvcc -V

Enter the Python environment

import paddle

print(paddle.device.get_device())

import paddle

print(paddle.device.get_cudnn_version())
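The four calls above can be gathered into one report, which makes it easier to compare what Paddle was built against with what it finds at runtime. This is a sketch using only the calls already shown; the function name paddle_gpu_report is hypothetical, and it returns None when Paddle is not installed.

```python
def paddle_gpu_report():
    """Collect Paddle's build-time and runtime CUDA/cuDNN info, or None without Paddle."""
    try:
        import paddle
    except ImportError:
        return None
    return {
        "built_with_cuda": paddle.version.cuda(),
        "built_with_cudnn": paddle.version.cudnn(),
        "current_device": paddle.device.get_device(),
        "runtime_cudnn": paddle.device.get_cudnn_version(),
    }


print(paddle_gpu_report())
```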

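Locate the cuDNN files

find / -name "cudnn*"

If errors persist after the driver, CUDA, and cuDNN are installed, configure the following path

Temporary (current shell only)

export LD_LIBRARY_PATH='/usr/local/cuda-10.1/lib64/'

Permanent (written to a file)

Adjust the paths to match the output of your own CUDA installer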

[root@localhost cuda-10.1]# tail -5 /etc/profile

vim /etc/profile

Add the following four lines:

PATH=$PATH:/usr/local/cuda-10.1/bin/

LD_LIBRARY_PATH=$LD_LIBRARY_PATH:/usr/local/cuda-10.1/lib64/

export PATH

export LD_LIBRARY_PATH

[root@localhost cuda-10.1]# source /etc/profile
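Because Paddle's error comes from failing to open the shared object, trying to load the libraries directly is a quick way to confirm that the path change took effect. A minimal sketch using ctypes follows; the helper name can_load is hypothetical. Start Python from a shell where LD_LIBRARY_PATH is already set, since the loader reads it at process start.

```python
import ctypes


def can_load(library):
    """Try to load `library`; return (True, None) or (False, error message)."""
    try:
        ctypes.CDLL(library)
    except OSError as exc:
        return False, str(exc)
    return True, None


for name in ("libcudart.so", "libcudnn.so"):
    print(name, can_load(name))
```

A "cannot open shared object file" message here is the same failure Paddle reports, so the fix belongs in the library path rather than in Paddle.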

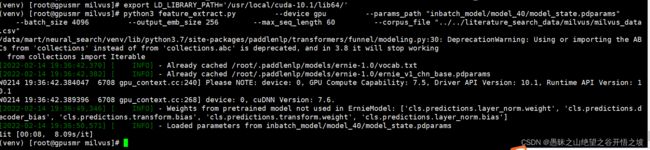

V. Finally, it runs successfully

VI. Reference material

1. Check whether a GPU is installed:

lspci | grep -i nvidia

2. Check the kernel version

uname -r

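3. Common Linux commands

1. Show the GPU model: lspci | grep -i vga (a Tesla T4 is listed as a 3D controller, so lspci | grep -i nvidia finds it more reliably)

2. Show the CUDA version: cat /usr/local/cuda/version.txt

3. Show the cuDNN version: cat /usr/local/cuda/include/cudnn.h | grep CUDNN_MAJOR -A 2

4. Show the Linux kernel version: cat /proc/version

5. Show the OS release: cat /etc/centos-release

6. Show CPU information: cat /proc/cpuinfo

7. vim /etc/profile: edit system-wide environment variables

vim ~/.bash_profile: edit per-user environment variables

8. gedit ~/.bashrc: edit the user's environment variables

9. w: show the users currently logged in

10. su username: switch to another user

11. Change a regular user's password as root: passwd username

12. Switch to root: su ; switch to a regular user: su username

13. useradd: create a user; userdel: delete a user

14. ls ~ | wc -w: count the entries in ~ (names containing spaces are counted more than once)

15. history: show the last 1000 commands

16. stat filename: show detailed information about a file

17. source .bashrc: reload environment variables; .bashrc can be replaced by any other file that defines environment variables.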

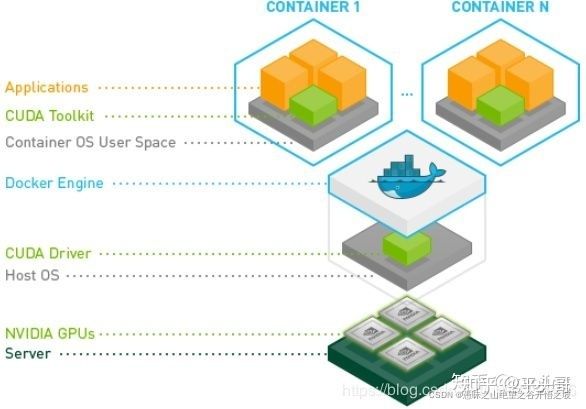

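CUDA driver + CUDA version + cuDNN

The figure shows that nvidia-docker shares the host's CUDA driver.

One benefit is that pairing CUDA versions with TensorFlow versions inside containers is no longer constrained by the CUDA version installed on the host.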